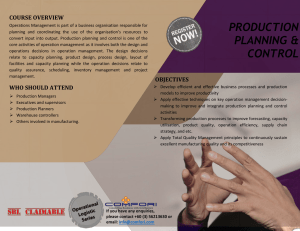

Production and Operations

Management

Fifth Edition

About the Author

S.N. Chary was formerly a Professor at the Indian Institute of Management Bangalore, where he taught for over a quarter of a century. A prolific management analyst and thinker, he has written several books, papers and articles. He has been the Director of the Kirloskar Institute of Advanced Management Studies, Karnataka, and is a well-known management and educational consultant. He is working on social change through people empowerment.

Production and Operations

Management

Fifth Edition

S N Chary

Former Professor

Indian Institute of Management

Bangalore

Tata McGraw Hill Education Private Limited

NEW DELHI

New Delhi New York St Louis San Francisco Auckland Bogotá Caracas Kuala Lumpur

Lisbon London Madrid Mexico City Milan Montreal San Juan

Santiago Singapore Sydney Tokyo Toronto

Published by Tata McGraw Hill Education Private Limited,

7 West Patel Nagar, New Delhi 110 008

Production and Operations Management, 5/e

Copyright © 2012, by Tata McGraw Hill Education Private Limited. No part of this publication can be

reproduced or distributed in any form or by any means, electronic, mechanical, photocopying, recording, or

otherwise or stored in a database or retrieval system without the prior written permission of the publishers.

The program listings (if any) may be entered, stored and executed in a computer system, but they may not be

reproduced for publication.

This edition can be exported from India only by the publishers,

Tata McGraw Hill Education Private Limited.

ISBN 13: 978-1-25-900510-7

ISBN 10: 1-25-900510-0

Vice President and Managing Director: Ajay Shukla

Head—Higher Education Publishing and Marketing: Vibha Mahajan

Publishing Manager—B&E/HSSL:

Deputy Manager (Sponsoring):

Development Editor: Anirudh Sharan

Senior Production Manager: Manohar Lal

Senior Production Executive: Atul Gupta

Marketing Manager—Higher Education:Vijay Sarathi

Assistant Product Manager:

Graphic Designer (Cover Design):

General Manager—Production:

Manager—Production:

Information contained in this work has been obtained by Tata McGraw Hill, from sources believed to be reliable.

However, neither Tata McGraw-Hill nor its authors guarantee the accuracy or completeness of any information

published herein, and neither Tata McGraw-Hill nor its authors shall be responsible for any errors, omissions, or

damages arising out of use of this information. This work is published with the understanding that Tata McGraw-Hill

and its authors are supplying information but are not attempting to render engineering or other professional services.

If such services are required, the assistance of an appropriate professional should be sought.

Typeset at Script Makers, A1-B, DDA Market, Paschim Vihar, New Delhi 110 063 and printed at Sai Printo Park,

A-102/4, Okhla Industrial Area, Phase-II, New Delhi-110020

Cover Printer: Sai Printo Park

This book is dedicated to Gurudev

Preface

This latest edition incorporates the changes desired by the readers over the past editions, in view

of the changing global management culture. This book is primarily addressed to students

studying production and operations management for the first time, such as those pursuing an MBA,

PGDM, MMS or PGDBA programme. The book would, however, be equally useful for a practising executive.

Production and operations management is a part of the course curricula of a large number of

professional engineering and other technical courses. The students of these courses would find

this book very useful as a text.

The treatment of the topics and the language in this book have been kept simple so that students

can easily grasp the concepts. This book is valuable as a primer for undergraduate students such as the

BBA or BBM students. This edition has additional solved problems at the end of several relevant

chapters to enhance the understanding of the chapter and the various shades of the topic. Each

solved problem highlights a significant aspect of the basic concept described in the chapter.

A chapter on ‘Lean Operations’ has been added since the philosophy underlying ‘lean’ is going

to be the essence of operations and other disciplines of management. ‘Lean’ is not just about cutting

out the flab in the organisation but about the tenet of ‘service’ to the customer and to the society.

That is the direction of the future.

This book has a universal appeal. It contains globally important topics and has numerous examples drawn from India and abroad. Hence, it can certainly be handy for readers across all the

continents.

While writing this book I have referred to several papers, articles and books. I take this opportunity to thank the authors and publishers of all the publications I have referred to and some of

whom I have quoted in this edition. Likewise, I have provided the examples and case studies from

many organisations. I thank all these organisations.

My family, Geetha, Sathya, Kartik and Manjula, have always been a great source of encouragement. Many thanks to them.

Above all, I thank my Gurudev and the Creator of all to whom this book is dedicated.

S.N. Chary

Preface to the First Edition

In the course of my teaching in the postgraduate programme, I have acutely felt the need for a

textbook on production and operations management which does justice to the stipulated curriculum for the basic course in this field. The curricula of the MBA programme offered by the Indian

universities are similar in many ways and a similar need would be felt there too. There are very

few books on production and operations management by Indian authors. The dependence has been,

to a large extent, on books by foreign authors written primarily for their curricular requirements

and student audience, and based on their work environment.

There are five major reasons why a book tailored to meet the Indian situation is required. First,

the basic courses in this discipline in India are more detailed and involve more analysis than those

in most American universities. Available foreign books are generally of two kinds: (a) elementary,

and (b) very detailed on a special topic. Neither of them is suitable for our teaching purposes.

Second, there are certain topics which are relatively more important in the Indian environment,

for instance, project management, incentive schemes, job evaluation, logistics management and the regulatory framework. One may find these topics either underplayed or glossed over in the various

available foreign books as these pertain to a different economic environment.

Third, there are special Indian topics such as Tandon Committee recommendations or location

of facilities. These obviously have to be covered in a book meant for an Indian target audience.

Fourth, there are Indian concerns, such as management of sectors and services, problem of inflation, concern about closing the gap in technology and matching it with the available resources, etc.

These have to be reflected upon in the discussions of various topics which may appear universal.

Finally, the book should also appeal to those practising executives who wish to undertake a

refresher course in this discipline. The treatment of each topic should, therefore, be practical and

simple, yet comprehensive. The book should have sufficient material to offer on individual topics,

such as materials management or quality management.

I have made an attempt to fulfil these needs through this book. Readers from other developing countries may also find it useful for the possible reason that we share common concerns and

similar objectives. However, the book may have a universal appeal; it may also find interested

readership in the developed countries. Perhaps, its comprehensiveness and novel interpretation

of the topics could be the attractive features for foreign readers.

This book is my maiden venture. In the course of writing it, I have received much encouragement from my colleagues Prof. Prasanna Chandra, B K Chandrasekhar, Ronald W Haughton,

T S Nagabhushana, S Subba Rao, V K Tewari, G K Valecha and Vinod Vyasulu. I express my sincere

gratitude to them. My other colleagues, V Vilasini, Anna Robinson and S Murali have helped me

by way of secretarial assistance. My thanks are also due to them. In the course of writing this book

I have referred to many publications. Wherever possible, I have taken permission and given credits

for the sources and I thank them all very much. If some of the sources have been left out inadvertently, I seek their pardon. Any mistakes/errors in the book are, however, fully my responsibility.

I have drawn inspiration from the encouragement of the former Chairman of IIM Bangalore,

Mr V Krishnamurthy, now Chairman of Steel Authority of India. My heartfelt thanks to him for

writing the Foreword to this book.

Above all, I thank my Gurudev and Creator of all to whom this book is dedicated.

S N Chary

Contents

Preface

Preface to the First Edition

Section I

Role of Production and Operations Management in a Changing Business World

1. Production and Operations Management Function

2. Operations Strategy

3. Services

Section II

Useful Basic Tools

4. Relevant Cost Concepts

5. Linear Programming

6. Capital Budgeting

7. Queueing Theory

8. Forecasting

Section III

Imperatives of Quality and Productivity

9. Quality Management–I

10. Quality Management–II

11. New Quality Concepts and Initiatives, Total Quality Management and Six Sigma

12. Product Design

13. Maintenance Management–I

14. Maintenance Management–II

15. Work Study

16. Job Evaluation

17. Incentive Schemes

18. Job Redesign

19. Productivity

Section IV

Supply Chain Management

20. Purchasing

21. Inventory Models and Safety Stocks

22. ABC and Other Classification of Materials

23. Materials Requirement Planning

24. Other Aspects of Materials Management

25. Physical Distribution Management

26. Materials Management: An Integrated View

27. Supply Chain Management

28. Outsourcing

Section V

Spatial Decisions in Production and Operations Management

29. Plant Layout

30. Cellular Manufacturing

31. Location of Facilities

Section VI

Timing Decisions

32. Production Planning and Control

33. Aggregate Planning

34. Scheduling

35. Project Management–I

36. Project Management–II

37. Just-In-Time Production

38. Lean Operations

Section VII

Present Concerns and Future Directions

39. Environmental Considerations in Production and Operations Management

40. Where is Production and Operations Management Headed?

Bibliography

Index

Role of Production and Operations Management in a Changing Business World

Chapter 1: Production and Operations Management Function

Chapter 2: Operations Strategy

Chapter 3: Services

The last century, particularly the latter half of it, has seen an upsurge in industrial

activity. Industries are producing a variety of products. Consumerism has been

on a steep rise. People, all over the world, have been demanding more and better

products and devices. The developments in technology, particularly in the areas of

telecommunications, Internet, mass media and transport have made it possible for

products and ideas to travel across the globe with minimal effort. A Chinese girl

sitting in Shanghai can see on the television what girls of her age wear in distant

USA or Europe. A housewife from Delhi, while visiting her son in San Jose in the

Silicon Valley, is able to check out the interiors and furniture available in that part

of the world. A middle class newly married couple from Bangalore thinks of Malaysia

or Mauritius as a honeymoon destination. The world has shrunk. Despite the political

boundaries, nations are getting closer in trade and commerce. Products are aplenty. A

variety of devices are available. Communications are quicker. Consumers are the kings.

It is obvious that a lot is being demanded of the production and operations

management function. The responsibility of that function is to see that the required

products and devices are of a good quality, with an acceptable price and delivered at

a time and place the customer desires. Quality, productivity (and hence price) and

delivery have become the basic minimums that a firm must offer in order to stay

in competition. There is competition amongst firms even at the level of these basic offerings. In

order to have differentiation and a unique selling proposition (USP), a firm and its production or

operations function have to make continuous efforts. The USP that exists today may be copied by

another firm tomorrow, thus nullifying the competitive advantage. New products or variants of

products and devices have to be continuously designed, produced and delivered—all profitably.

Production/operations function has to very actively support the marketing function. Engineering

has to listen to customer needs. Materials and Logistics people have to react swiftly to the needs of

the manufacturing system. The human resources (HR) function has to ensure that people within

the firm not only have the needed skills but also the essential attitudes. The production/operations

function has to interact with the HR function on this count. Each function needs to be attuned

to the other function within the firm. The modern business world has necessitated a high level of

integration within organisations. It is not that integration was unnecessary earlier and is needed only

now, nor that the quality of products was not a concern earlier and has become one only now. The basic roles

and requirements remain, but the intensity of application has increased. The role of production and

operations—which is central to the industrial organisation—has become much more intense.

In the competition to seek differentiation, manufacturing firms are finding ways of attaching

‘service’ components to the physical product. Services are no longer ‘frills’ that some firms

offered earlier. Services have become intertwined with the output of the production process.

It is the combination that the customer buys today. No manufacturing company is purely into

manufacturing. It is also, in part, a service-providing company. Therefore, the special characteristics

of services are getting incorporated into the design of the production system. For one, there is a

lot more interaction and interfacing of the production function with the customers today than there

was earlier. The customer, so to speak, has come closer to, and sometimes within, the company. This has

ramifications for the production function. It is no longer an aloof outfit.

The service sector is increasing at a tremendous rate. For instance, there are more banks in one

town now than there were in an entire district earlier. More and more banks from the developed

world are doing business in the developing countries. Money is more easily available to the

consumers. Hence, more leisure activities are being pursued. The number of hotels is, therefore,

increasing rapidly. In the places where there were only government-run hospitals, now there are

corporate hospitals and lifestyle clinics providing health care combined with comfort. There are

thus primary, secondary and tertiary hospitals. A bank also has several back-office operations.

A corporate hospital offering comprehensive ‘executive health check-ups’ needs to streamline its

operations right from the minute a ‘customer’ arrives at the reception counter to her undergoing

a series of medical tests and check-ups to her receiving a complete report and personal advice

from the physician. Besides, this series of ‘check-up’ operations has to be a great ‘experience’ for

the customer. Operations management is being called upon to deliver these varied outputs. Since

the services sector is as large as (in some economies it is larger than) the manufacturing sector,

managing operations in non-manufacturing businesses has assumed as much significance as

managing production in a manufacturing set-up.

The fundamentals of this new production-cum-service economy are the same as those of the earlier

manufacturing-dominated economy. However, the orientation has changed. For instance, in a bank

the customer is right at the place where the operations are taking place. In a hotel, she gets the

various ‘utilities’. In a way, she is the input that is being transformed. The same is true of a hospital and

an educational institution. Thus, ‘customer focus’ is built into the system. This attitude is rubbing

off on the manufacturing firms too. The criteria for performance of an operations system today

strongly emphasise customer satisfaction. Production and operations management is becoming

less about machines and materials and more about service to the customer. The focus has shifted.

All that is done with regard to the machines and materials, spatial and timing or scheduling

decisions is with the customer in view.

This has made a huge difference. It has brought relationships to the fore. Since a customer is now

an individual or individual entity, the requirements on the production and operations system tend

to be multitudinous (number), multifarious (variety) and multi-temporal (time). The tolerance for

mistakes is low for a system that is subjected to several variations in demand. This requires real

leadership on the part of the firms so as to align the firm’s interests with those of its people inside

the firm and with those of its suppliers and others outside who provide inputs into the production

and operations system. The joint family concept may have gone from the society today, but it has

found a new place in the corporate world. A ‘supply chain’ is one such manifestation. Outsourcing

is increasingly being used to gain maximum advantage from the ‘core competence’ of other firms

in the ‘family’. Such relationships could spread over several continents; distance or political boundary is not necessarily a constraint. Production and operations have become globalised. A bank in

UK or USA can send its several accounting and other operations to be performed in Mumbai by

an India-based firm—just as an auto-part producing firm in Chennai provides supplies to an auto

manufacturer in Detroit. Long distance business relationships are possible because of the progress

in the fields of telecommunication, computers and the Internet. These technologies have made the

integration (which means extensive and intensive interconnections) of various business organisations feasible. One may call the present times the world of inter-relationships.

Due to the inter-relationships, certain things have become easier. For instance, a firm could

truly depend on its business associates. Thus the market undulations, i.e., the changing needs of

the customers can be met more easily. However, it would behove the firm to be equally reliable

or be better. A relationship comes with its accompanying responsibilities. The modern day production and operations management is expected to provide that kind of leadership.

If ‘efficiency’ was the all important factor yesterday, and if today ‘service’ and ‘relationships’

are the buzzwords of production and operations management, what will be in store for tomorrow? The concept of a ‘product’ has changed over the past few decades to include ‘service’ in it.

Environmental and larger social concerns are increasingly occupying the decision space

of the management. The production and operations function will remain in the future, even in a

‘post-industrial’ society. Utilities—of form, state, possession and place—have to be provided and

operations cannot be wished away. What may perhaps change would be the nature of the product

and the service.

Chapter 1: Production and Operations Management Function

DEFINITION

Production and operations management concerns itself with the conversion of inputs into outputs,

using physical resources, so as to provide the desired utility/utilities—of form, place, possession or

state or a combination thereof—to the customer while meeting the other organisational objectives

of effectiveness, efficiency and adaptability. It distinguishes itself from the other functions such

as personnel, marketing, etc. by its primary concern for ‘conversion by using physical resources’.

Of course, there may be and would be a number of situations in either marketing or personnel or

other functions which can be classified or sub-classified under production and operations management. For example, (i) the physical distribution of items to the customers, (ii) the arrangement

of collection of marketing information, (iii) the actual selection and recruitment process, (iv)

the paper flow and conversion of the accounting information in an accounts office, (v) the paper

flow and conversion of data into information usable by the judge in a court of law, etc. can all be

put under the banner of production and operations management. The ‘conversion’ here is subtle,

unlike in manufacturing, where it is obvious. While in cases (i) and (ii) it is the conversion of ‘place’

and ‘possession’ characteristics of the product, in (iv) and (v) it is the conversion of the ‘state’

characteristics. And this ‘conversion’ is effected by using physical resources. This is not to deny

the use of other resources such as ‘information’ in production and operations management. The

input and/or output could also be non-physical such as ‘information’, but the conversion process

uses physical resources in addition to other non-physical resources. The management of the use

of physical resources for the conversion process is what distinguishes production and operations

management from other functional disciplines. Table 1.1 illustrates the many facets of the production and operations management function.

Table 1.1 Production and Operations Management: Some Cases

| Case | Input | Physical Resource/s Used | Output | Type of Input/Output | Type of Utility Provided to the Customers |
|---|---|---|---|---|---|
| 1. Inorganic chemicals production | Ores | Chemical plant and equipment, other chemicals, use of labour, etc. | Inorganic chemical | Physical input and physical output | Form |
| 2. Outpatient ward of a general hospital | Unhealthy patients | Doctors, nurses, other staff, equipment, other facilities | Healthier patients | Physical input and physical output | State |
| 3. Educational institution | ‘Raw’ minds | Teachers, books, teaching aids, etc. | ‘Enlightened’ minds | Physical (?) input and physical (?) output | State |
| 4. Sales office | Data from market | Personnel, office equipment and facilities, etc. | Processed ‘information’ | Non-physical input and non-physical output | State |
| 5. Petrol pump | Petrol (in possession of the petrol pump owner) | Operators, errand boys, equipment, etc. | Petrol (in possession of the car owner) | Physical input and physical output | Possession |
| 6. Taxi service | Customer (at railway station) | Driver, taxi itself, petrol | Customer (at his residence) | Physical input and physical output | Place |
| 7. Astrologer/palmist | Customer (mind full of questions) | Astrologer, Panchanga, other books, etc. | Customer (mind with fewer questions) (hopefully) | Physical input and physical output | State |
| 8. Maintenance workshop | Equipment gone ‘bad’ | Mechanics, engineers, repairs equipment, etc. | ‘Good’ equipment | Physical input and physical output | State and form |
| 9. Income tax office | ‘Information’ | Officers and other staff, office facility | Raid | Non-physical input and physical output | State (possession?) |

Often, production and operations management systems are described as providing physical goods or services. Perhaps, a sharper distinction such as the four customer utilities and the physical/non-physical nature of inputs and/or outputs would be more appropriate. When we say that the Central Government Health Service provides ‘service’ and the Indian Railways provide ‘service’, these are two entirely different classes of utilities; therefore, the criteria for reference will have to be entirely different for these two cases. To take another example, the postal service and the telephone service are different because the major inputs and major outputs are totally different, with different criteria for their efficiency and effectiveness.

Also, a clear demarcation is not always possible between operations systems that provide

‘physical goods’ and those that provide ‘service’, as an activity deemed to be providing ‘physical

goods’ may also be providing ‘service’, and vice versa. For example, one might say that ‘the food in

the Southern Railways is quite good’; however, food is not the main business of the Railways. To

take another example: A manufacturing firm can provide good ‘service’ by delivering goods on

time. Or, XYZ Refrigerator Company not only makes good refrigerators, but also provides good

‘after sales service’. The concepts of ‘physical goods production’ and ‘service provision’ are not

mutually exclusive; in fact, in most cases these are mixed, one being more predominant than the

other.

Similarly we may also say that the actual production and operations management systems are

quite complex involving multiple utilities to be provided to the customer, with a mix of physical and

non-physical inputs and outputs and perhaps with a multiplicity of customers. Today, our ‘customers’

may be not only outsiders but also our own ‘inside staff’. In spite of these variations in (i) input type,

(ii) output type, (iii) customers serviced, and (iv) type of utility provided to the customers,

production and operations management distinguishes itself in terms of ‘conversion effected by

the use of physical resources such as men, materials, and machinery.’

CRITERIA OF PERFORMANCE FOR THE PRODUCTION AND

OPERATIONS MANAGEMENT SYSTEM

Three objectives or criteria of performance of the production and operations management system

are:

(a) Customer satisfaction

(b) Effectiveness

(c) Efficiency

The case for ‘efficiency’ or ‘productive’ utilisation of resources is clear. Whether the organisation

is in the private sector or in the public sector, is a ‘manufacturing’ or a ‘service’ organisation, or

a ‘profit-making’ or a ‘non-profit’ organisation, the productive or optimal utilisation of resource

inputs is always a desired objective. However, effectiveness has more dimensions to it. It involves

an optimality in the fulfillment of multiple objectives, with a possible prioritisation within the

objectives. This is not difficult to imagine because modern production and operations management

has to serve the so-called target customers, the people working within, as also the region, country

or society at large. In order to survive, the production/operations management system has not

only to be ‘profitable’ and/or ‘efficient’ but must also satisfy many more ‘customers’. This

effectiveness has to be again viewed in terms of the short- and long-time horizons (depending

upon the operations system’s need to remain active for short or long time horizons)—because,

what may seem now like an ‘effective’ solution may not be ‘all that effective in the future’. In fact, the

effectiveness of the operations system may depend not only upon multi-objective satisfaction

but also on its flexibility or adaptability to changed situations in the future so that it continues to

fulfil the desirable objectives set while maintaining optimal efficiency.

JOBS/DECISIONS OF PRODUCTION/OPERATIONS MANAGEMENT

Typically, what are the different decisions taken in production and operations management? As

a discipline of ‘management’ which involves basically planning, implementation, monitoring,

and control, some of the jobs/decisions involved in the production and operations management

function are as mentioned in Table 1.2.

Table 1.2 Jobs of Production and Operations Management

| Long Time Horizon | Intermediate Time Horizon | Short Time Horizon |
|---|---|---|
| Product design | Product variations | Production/operations scheduling |
| Quality policy | Methods selection | Available materials allocation and handling |
| Technology to be employed | Quality implementation, inspection and control methods | Progress check and change in priorities in production/operations scheduling |
| Machinery and plant/facility selection | Machinery and plant facility loading decisions | Temporary manpower |
| Plant/facility size selection – phased addition | Forecasting | Supervision and immediate attention to problem areas in labour, materials, machines, etc. |
| Manpower training and development – phased programme | Output per unit period decision | Breakdown maintenance |
| Long-gestation period raw material supply projects – phased development | Deployment of manpower | |
| Warehousing arrangements | Shift-working decisions | |
| Insurance spares | Temporary hiring or lay-off of manpower | |
| Design of jobs | Purchasing policy | |
| Setting up work standards | Purchasing source selection, development and evaluation | |
| Effluent and waste disposal systems | Make or buy decision | |
| Process selection | Inventory policy for raw material, work-in-progress, and finished goods | |
| Site selection | Overtime decisions | |
| Safety and maintenance systems | Scheduling of manpower | |
| Supply chain and outsourcing | Transport and delivery arrangements | |
| | Preventive maintenance scheduling | |
| | Implementation of safety decisions | |
| | Industrial relations | |
| | Checking/setting up work standards and incentive rates | |

CLASSIFICATION OF DECISION AREAS

The production and operations management function can be broadly divided into the following

four areas:

1. Technology selection and management

2. Capacity management

3. Scheduling/Timing/Time allocation

4. System maintenance

Technology Selection and Management

This is primarily an aspect pertaining to long-term decisions, with some spillover into the intermediate region. Although it is not immediately connected with the day-to-day short-term decisions handled in the plant/facility, it is an important problem to be addressed, particularly in a manufacturing situation in an age of spectacular technological advances, so that an appropriate choice is made by a particular organisation to suit its objectives, organisational preparedness and its micro-economic perspectives. It is a decision that will have a significant bearing on the management of the manpower, machinery and materials capacity of the operations system and on the system maintenance areas. The same or a similar product/service can be produced with a different process/technology selection. For instance, sightseeing for tourists can be arranged by bus, monorail or cable car. To give an example from the manufacturing industry, the technology in a foundry could be manual or automated.

Capacity Management

The capacity management aspect once framed in a long-term perspective, revolves around matching of available capacity to demand or making certain capacity available to meet the demand variation. This is done on both the intermediate and short time horizons. Capacity management is very

important for achieving the organisational objectives of efficiency, customer service and overall

effectiveness. While lower than needed capacity results in non-fulfillment of some of the customer

services and other objectives of the production/operations system, a higher than necessary capacity results in lowered utilisation of the resources. There could be a ‘flexibility’ built into the capacity

availability, but this depends upon the ‘technology’ decision to some extent and also on the nature

of the production/operations system. While some operations systems can ‘flex’ significantly, some

have to use inventories of materials and/or people as the flexible joint between the rigidities of a system. The degree of flexibility required depends upon the customers’ demand fluctuations and thus the demand characteristics of the operations system. Figure 1.1 illustrates the size and variability of demand and the corresponding suitable operating system structure. While Figure 1.1 is easy to understand in a manufacturing context, it is also applicable to service operations.

As the product variety increases, the systems of production/operations change. In a system

characterised by large volume-low variety, one can have capacities of machinery and men which

are inflexible while taking advantage of the repetitive nature of activities involved in the system;

whereas in a high variety (and low volume) demand situation, the need is for a flexible operations

system even at some cost to the efficiency. It may be noted that the relationship between flexibility, capacity and the desired system type holds good for both the manufacturing and the service industries.
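The trade-off described above, where too little capacity leaves demand unfulfilled and too much lowers utilisation, can be illustrated with a minimal sketch. The function name and the demand figures below are hypothetical and chosen only for illustration. The sketch also assumes a single period with no inventories or backlog, which is a simplification of the systems discussed in the text.

```python
def capacity_outcome(capacity, demand):
    """Return (utilisation, unmet_demand) for one period.

    Assumption for illustration: no inventory or backlog is carried,
    so output in the period is the smaller of capacity and demand.
    """
    if capacity <= 0:
        raise ValueError("capacity must be positive")
    served = min(capacity, demand)
    utilisation = served / capacity   # fraction of capacity actually used
    unmet_demand = demand - served    # demand that could not be fulfilled
    return utilisation, unmet_demand


# Hypothetical demand levels against a fixed capacity of 100 units.
for demand in (80, 100, 130):
    utilisation, unmet = capacity_outcome(100, demand)
    print(f"demand={demand}: utilisation={utilisation:.0%}, unmet={unmet}")
```

With a fixed capacity, low demand shows up as idle capacity and high demand as unmet demand. A flexible system, in the sense used above, is one that can change the capacity figure itself from period to period.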

Scheduling

Scheduling is another decision area of operations management which deals with the timing of various activities: the time phasing of the filling of the demands or, rather, the time phasing of the capacities to meet the demand as it keeps fluctuating. It is evident that as the span of fluctuations in variety and volume gets wider, the scheduling problem assumes greater importance. Thus, in job-shop (i.e. tailor-made physical output or service) type operations systems, the scheduling decisions are very important, as they determine the system effectiveness (e.g. customer delivery) as well as the system efficiency (i.e. the productive use of the machinery and labour). Similarly, we can also say that the need for system effectiveness coupled with system efficiency determines the system structure and the importance of scheduling. When ‘time’ is viewed by the customers as an important attribute of the output from a production/operations system, then whatever may be the ‘needs of variety and flexibility’, the need for ‘scheduling’ is greater and the operations system choice is also accordingly influenced. Thus, when a mass production of output is envisaged, but with a ‘quick response’ characteristic to the limited mild variation, the conventional mass production assembly line needs to be modified to bring in the ‘scheduling’ element, so that the distinctive time element is not lost in the mass of output. This necessitates significant modifications in the mass-production system, and what emerges is a new hybrid system particularly and eminently suited to meet the operations system objectives.

Figure 1.1 Production and Operations Systems

The capacity management and scheduling cannot be viewed in isolation as each affects the other.

System Maintenance

The fourth area of operations management is regarding safeguards—that only desired outputs

will be produced in the ‘normal’ condition of the physical resources, and that the condition will

be maintained normal. This is an important area whereby ‘vigilance’ is maintained so that all

the good work of capacity creation, scheduling, etc. is not negated. Technology and/or process selection and management has much to contribute towards this problem. A proper selection and management procedure would give rise to few problems. Further, the checks (e.g. quality checks on physical/non-physical output) on the system performance and the corrective action (e.g. repair of equipment) would reduce the chances of the desired outputs being sullied. In a manufacturing industry these may be the physical defects. In service operations, it could be a breach of confidence of the customers, like the stealing of customers’ credit card accounts.

NEW WAYS OF LOOKING AT THE DECISION AREAS

The classification presented earlier has been the traditional classification which is quite valid even

in the present times. However, the nature of production and operations management function is

changing to an increasing service orientation and therefore it has become necessary to relook at

the classification of the decision areas.

Traditionally, the focus of the production and operations management discipline has been the product and the process producing the product; hence its earlier orientation towards technology, capacity, scheduling and system maintenance. The focus has been internal, i.e., on the operations of the company. Thus, the traditional view may be termed as having been ‘product-centric’ or self-centric (‘self’ meaning the company).

Transition from Product-centric to People-centric

However, with the changes in the customers’ requirements and with the changes occurring in the

social set-up worldwide, the production and operations discipline is placing increasing emphasis

on relationships with the people that interact with the firm such as customers, employees and

suppliers or business associates. The latter are important for the company to have its supplies of

materials and services flowing in as desired. The firm interfaces with its associates while taking

these ‘supply’ decisions.

It is essential to maintain the desired quality of the output from the firm. However, it is being increasingly realised now that the imperatives of quality and productivity are intimately related to

the human input, i.e., the employees of the company, more than anything else. Therefore, another

area of decisions is that of HR decisions. The most important of all the human interactions of a

firm is that with its customers. In addition to quality and productivity the customer is interested

in the timeliness of the delivery of the desired goods/services. Thus, another area of decisions is

that of ‘timing’. These decision areas are, thus, focused on people—on ‘others’ (i.e., other than the

company as represented by the capital). There is one more area, that of spatial decisions – decisions regarding the locations and layouts. The former is related predominantly to the ‘supply’ and

‘timing’ problems. The latter is related to the ‘timing’ and ‘productivity’ issues. Thus the production

management decisions may be classified as those pertaining to: People, Supply, Time and Space.

This view is reflected in Table 1.3.

Table 1.3 Classification of Production and Operations Management Decisions: People-centric View

| Target People | Decision Type | Affected Aspect |
|---|---|---|
| Employees | HR decisions | Quality and productivity; Safety/security |
| Business associates | Supply decisions | Supplies and capacity |
| Customers | Timing decisions | Production/operations planning and scheduling; Technology/process |
| All the above | Spatial decisions | Location of plants/facilities, location of business associates; Layouts |


Changes in the Long-term/Short-term Decisions

The recent philosophy is that the technology is subordinate to the customer as customer requirements dictate the technology generation and use. One may note that a distinction needs to be

made between scientific research and technology. Capacity of the operations system is related to

the technology employed. It is also much dependent upon the business associates who provide

capacity in terms of outsourced products, supplies and services. Scheduling, the timing decision,

is related to the customer. Technology, capacity and system maintenance are in some ways related

to it.

In consonance with this view, Table 1.2 listing the ‘jobs of production and operations management’

needs to be modified. For instance, it is not the technology which is a long-term decision, but the

company’s manpower and its suppliers. Product design is better fitted in the intermediate term as

the technology and the designs keep changing (or are required to be changed) often enough these

days. Plant selection, manpower training and setting up work standards would rather belong to

the intermediate term decisions. Outsourcing (not exactly ‘make or buy’) is a long-term decision

as it involves selecting business partners who would be partners for a long time. Such classification

based upon the long, intermediate, or short-term bases is useful in knowing the firm’s areas or

decisions that will have impacts for a long time. It also highlights the needs that have to be attended

to now (short-term decisions).

It may be interesting, at this juncture, to trace the history of production and operations management.

BRIEF HISTORY OF THE PRODUCTION AND

OPERATIONS MANAGEMENT FUNCTION

At the turn of the 20th century, the economic structure in most of the developed countries of today

was fast changing from a feudalistic economy to that of an industrial or capitalistic economy. The

nature of the industrial workers was changing and methods of exercising control over the workers,

to get the desired output, had also to be changed. This changed economic climate produced the

new techniques and concepts.

Frederick W. Taylor studied the simple output-to-time relationship for manual labour such as bricklaying. This formed the precursor of the present-day ‘time-study’. Around the same time, Frank

Gilbreth and his learned wife Lillian Gilbreth examined the motions of the limbs of the workers

(such as the hands, legs, eyes, etc.) in performing the jobs, and tried to standardise these motions

into certain categories and utilise the classification to arrive at standards for the time required to perform a given job. This was the precursor to the present-day ‘motion study’. Although Gilbreth’s classification of movements is used extensively to this day, there have been various modifications and newer classifications.

So far the focus was on controlling the work-output of the manual labourer or the machine

operator. The primary objective of production management was efficiency, specifically the efficiency of the individual operator. The aspects of collective efficiency came into being later, expressed

through the efforts of scientists such as Gantt who shifted the attention to scheduling of the

operations. (Even now, we use the Gantt Charts in operations scheduling.) The considerations of

efficiency in the use of materials followed later. It was almost 1930, before a basic inventory model

was presented by F.W. Harris.

Quality

After the progress of application of scientific principles to the manufacturing aspects, thought

progressed to control over the quality of the finished material itself. Till then, the focus was on

the quantitative aspects; later on it shifted to the quality aspects. ‘Quality’, which is an important

customer service objective, came to be recognised for scientific analysis. The analysis of productive

systems, therefore, now also included the ‘effectiveness’ criterion in addition to efficiency. In 1931,

Walter Shewhart came up with his theory regarding Control Charts for quality or what is known as

‘process control’. These charts suggested a simple graphical methodology to monitor the quality

characteristics of the output and how to control it. In 1935, H.F. Dodge and H.G. Romig came up

with the application of statistical principles to the acceptance and/or rejection of the consignments

supplied by the suppliers to exercise control over the quality. This field, which has developed over

the years, is now known as ‘acceptance sampling’.
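The idea behind a control chart can be sketched in a few lines. This is only a simplified illustration, not Shewhart’s full procedure. It assumes individual measurements and sets the limits at the baseline mean plus or minus three sample standard deviations, whereas charts used in practice typically work with subgroup means and ranges. The function names and all data values are hypothetical.

```python
from statistics import mean, stdev


def control_limits(baseline):
    """Return (lower limit, centre line, upper limit) from in-control data.

    Simplifying assumption: limits are the mean +/- 3 sample standard
    deviations of individual baseline measurements.
    """
    centre = mean(baseline)
    sigma = stdev(baseline)
    return centre - 3 * sigma, centre, centre + 3 * sigma


def out_of_control(values, lower, upper):
    """Return the positions of measurements falling outside the limits."""
    return [i for i, v in enumerate(values) if v < lower or v > upper]


# Hypothetical measurements of a quality characteristic (e.g. a dimension).
baseline = [10.1, 9.9, 10.0, 10.2, 9.8, 10.0, 10.1, 9.9]
lower, centre, upper = control_limits(baseline)

new_output = [10.0, 10.3, 11.2, 9.9]
flagged = out_of_control(new_output, lower, upper)
print(f"limits: {lower:.2f} to {upper:.2f}; flagged positions: {flagged}")
```

Points inside the limits are treated as normal process variation. A point outside them is a signal to investigate the process and take corrective action.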

Effectiveness as a Function of Internal Climate

In addition to effectiveness for the customer, the concept of effectiveness as a function of internal

climate dawned on management scientists through the Hawthorne experiments which actually

had the purpose of increasing the efficiency of the individual worker. These experiments showed

that worker efficiency went up when the intensity of illumination was gradually increased, and

even when it was gradually decreased, the worker efficiency still kept rising. This puzzle could

be explained only through the angle of human psychology; the very fact that somebody cared,

mattered much to the workers who gave increased output. Till then, it was Taylor’s theory of elementalisation of task, and thus specialisation in one task, that had found much use, notably in Henry Ford’s Assembly Line.

Advent of Operations Research Techniques

The birth of Operations Research (OR) during the World War II period saw a big boost in the

application of scientific techniques in management. During this war, the Allied forces took the

help of statisticians, scientists, engineers, etc. to analyse and answer questions such as: What is the

optimum way of mining the harbours of the areas occupied by the Japanese? What should be the

optimum size of the fleet of the supply ships, taking into account the costs of loss due to enemy

attack and the costs of employing the defence fleet? Such studies of military operations were

termed as OR. After World War II, this field was further investigated and developed by academic

institutions. Various techniques such as linear programming, mathematical programming, game

theory, queueing theory, and the like developed by people such as George Dantzig, A. Charnes,

and W.W. Cooper, have become indispensable tools for management decision-making today.


The Computer Era

After the breakthrough made by OR, another marvel came into being—the computer. Around

1955, IBM developed digital computers. This made possible the complex and repeated computations involved in various OR and other management science techniques. In effect, it helped

to spread the use of management science concepts and techniques in all fields of decision

making.

Service and Relationships Era

The advances in computing technology, associated software, electronics and communication

facilitated the manufacture of a variety of goods and their reach to the consumer. In parallel, the

demand for services such as transport, telecommunications and leisure activities also grew at a

rapid pace. Worldwide, the service economy became as important as that of physical goods. In fact,

manufacturing started emulating some of the practices and principles of services. The far-eastern countries, particularly Japan, seemed to specialise in providing manufactured products with various services/service elements accompanying them. During the last quarter of a century, service has become

an important factor in customer satisfaction. Likewise, many production management principles

have been finding their way into services in order to improve the efficiencies and effectiveness.

The very word ‘service’ signifies some kind of a ‘special bond’ or ‘human touch’. It is, therefore,

natural that production and operations management is now getting to be increasingly ‘relationship

oriented’. There is a web of relationships between the company, its customers and its business

associates and its social surroundings. The production/operations strategy, systems and procedures

have begun to transform accordingly.

LESSONS FROM HISTORY

Economic history and the history of operations management are both important in the sense

that these would suggest some lessons that can be learnt for the future. History helps us to know

the trends and to take a peek at the future. If the 21st century organisations have to manage

their operations well, they will have to try to understand the ‘what and how’ of the productivity

improvements in the past and what led to the various other developments in the field of production

and operations management. The past could tell us a thing or two about the future if not everything.

Looking at the speed with which technology, economies, production and service systems, and the

society’s values and framework seem to be changing, one may tend to think that ‘unprecedented’

things have been happening at the dawn of this millennium. However, there seems to be a common

thread running all through.

Limiting ourselves to history of production or manufacturing, we see that the effort has always

been on increasing productivity through swift and even flow of materials through the production

system. During the Taylor era, the production activities centered on an individual. Hence, methods

had to be found to standardise the work and to get the most out of the individual thus making the

manufacturing flows even and fast. Ford’s assembly line was an attempt at further improving the

speed and the evenness of the flows. Thereafter, quality improvements reduced the bottlenecks

and the variability in the processes considerably, thus further facilitating the even and rapid flow

of goods through the production system.


The attempt has always been to reduce the ‘variability’, because variability could impede the

pace and the steadiness of the flow. Earlier, variability came mostly through the variability in

the work of the workers. However, in the later decades the variability came from the market.

Customers started expecting a variety of products. Manufacturers, therefore, started grouping like

products together. The flows of materials for those products were, therefore, exposed more easily;

this helped in quickly attending to bottlenecks, if any, and in ensuring a speedy and even flow

through the manufacturing system. Cellular manufacturing or Group Technology was thus born.

Materials Requirement Planning (MRP) and Enterprise Resource Planning (ERP) were other

efforts at reducing the variability by integrating various functions within the operations system

and the enterprise as a whole, respectively.

The customer expectations from the manufacturing system increased further. They started

asking for a variety in the products that had to be supplied ‘just in time’. In order to realise this,

the manufacturing company had to eliminate the ‘unevenness’ or variations within its own plant

as also limit the variations (unevenness) in the inputs that came from outside. This required the

‘outside system’ to be controlled for evenness in flow despite the requirements of varied items in

varied quantities at varied times. This could not be done effectively unless the ‘outsider’ became

an ‘insider’. Therefore, the entire perspective of looking at suppliers and other outside associates

in business changed.

As we notice, the scope of production and operations management has been expanding and the discipline is getting more inclusive, extending from employees to business associates in supply chains, to the external physical environment and to society at large. Its concerns are widening from a focus on employees’ efficiency to improvement in management systems and technological advances, to human relationships and to social issues. Figure 1.2 depicts this progression.

Figure 1.2 The Journey of Production and Operations Management

The inroads made by the ‘service’ element have been subtle and the impacts have been profound.

It unfolded an overwhelming human and social perspective. Organisations are realising that what

is advantageous to the larger society, is advantageous to the organisation. This does not mean

that individual employee efficiencies, operations planning-and-control techniques, systems and

strategies are less relevant; in fact, they are as relevant or more so today in the larger social context.

Materials and supply management was important in yesteryears; it is even more important in the

light of environmental and other social concerns which production and operations management

discipline has to address today.

It is also extremely important to take adequate cognisance of further developments in science

and technology, the changes in value systems and in the social structures that are taking place

worldwide. Developments in science and technology give rise to certain social mores, as, for instance, mobile phones are doing today. Similarly, the changed social interactions and value

systems give rise to changed expectations from the people. The type of products and services that

need to be produced would, therefore, keep changing. Today a ‘product’ represents a certain group

of characteristics; a ‘service’ represents certain other utility and group of characteristics. These may

undergo changes, perhaps fundamental changes, in the days to come. We may see rapid shifts in

the lifestyles. So, whether it is for consumption or for the changed lifestyle, the type of products

demanded and the character of services desired may be quite different. Production and operations

management as a discipline has to respond to these requirements. That is the challenge.

QUESTIONS FOR DISCUSSION

1. How does the production and operations management function distinguish itself from

the other functions of management?

2. The historical development of production and operations management thought has been

briefly mentioned in this chapter. Can you project the future for the same?

3. Mention situations in (a) banking, (b) advertising, (c) agriculture, and (d) hoteliering

where production and operations management is involved. Describe the inputs, outputs,

processes, utilities.

4. What are the different types of production/operations systems? Where would each one of

them be applicable? Give practical examples.

5. How is production management related to operations research and quantitative

methods?

6. How has and how will the computer influence production and operations management?

Discuss.

7. Is there a difference between the terms “production management” and “operations

management”? If so, what is it?

8. What is the concept of “division of labour”? How is it applied to the assembly lines?

9. Can the above concept be applied to other “service” situations? Mention two such examples

and elaborate.

10. How do long-term, intermediate and short-term decisions vary? Discuss the differences

in terms of their characteristics. Also discuss the increasing overlaps between these

decisions.


11. Is the production and operations management function getting to be increasingly people-centric? If so, what may be the reasons?

12. Will ‘time’ be an important area of decision-making in the future? Reply in the context of

the production and operations management function. Explain your response.

13. ‘Historically, much of the developments in production and operations management

discipline have centered on reduction of variability.’ Do you agree with this statement?

Explain.

14. Looking at the economic history of nations, one may observe that those nations that

emphasised ‘time’, such as England and Japan, industrialised rapidly. Others like India

who did not much emphasise time, did not industrialise as rapidly. Do you agree with this

statement? Explain your response.

15. What may be your prognosis for the future of the production and operations management

discipline in general? Discuss.

16. In your opinion, where is India on the trajectory of the changing production and operations

management function? Discuss.

ASSIGNMENT QUESTIONS

1. Study any organisation (manufacturing or service, profit or non-profit, government or

private) of your choice regarding the impact of technology in its operations. Study the

past trends and the probable future and present a report.

2. Read books/articles on the history of Indian textile industry and its operations. What are

your significant observations?

2

Operations Strategy

STRATEGIC MANAGEMENT PROCESS

Alfred Chandler* defined strategy as, “the determination of the basic long-term goals and objectives

of an enterprise, and the adoption of courses of action and the allocation of resources necessary for

carrying out these goals”. William F. Glueck** defined strategy as, “a unified, comprehensive, and

integrated plan designed to ensure that the basic objectives of the enterprise are achieved.” These

definitions view strategy as a rational planning process.

Henry Mintzberg,*** however, believes that strategies can emerge from within an organisation

without any formal plan. According to him, it is more than what a company plans to do; it is also

what it actually does. He defines strategy as a pattern in a stream of decisions or actions. So, there

are deliberate (planned) and emergent (unplanned) strategies.

In support of Mintzberg’s viewpoint, the case of the entry of Honda motorcycles into the

American market may be mentioned. Honda Motor Company’s intended strategy was to market

the 250 cc and 305 cc motorcycles to the market segment of motorcycle enthusiasts, rather than

selling the 50 cc Honda Cubs which they thought were not suitable for the American market

where everything was big and luxurious. However, the sales of 250 cc and 305 cc mobikes did

not pick up. Incidentally, the Japanese executives of Honda used to move about on their 50 cc

Cubs, which attracted the attention of Sears Roebuck who thought they could sell the Cubs to a

broad market of Americans who were not motorcycle enthusiasts. Honda Motor Company was

initially very reluctant to sell the 50 cc (small) bikes and, that too, through a retail chain. ‘What

will happen to Honda’s image in the US?’, they thought. Expediency of the situation (failure in the sales of bigger motorcycles) made them give a nod to Sears Roebuck. The rest is history. Within the next five years, one out of every two motorcycles sold in the US was a Honda. Thus, successful strategy involves more than just planning a course of action. How an organisation deals with miscalculation, mistakes, and serendipitous events outside its field of vision is often crucial to success over time.* Managers must be able to judge the worth of emergent strategies and nurture the potentially good ones and abandon the unsuitable, although planned, ones.

* Chandler, Alfred, Strategy and Structure: Chapters in the History of the American Enterprise, MIT Press, Cambridge, Mass., USA, 1962.

** Glueck, William F., Business Policy and Strategic Management, McGraw-Hill, NY, USA, 1980.

*** Mintzberg, Henry, ‘The Design School: Reconsidering the Basic Premises of Strategic Management’, Strategic Management Journal, Vol. 11, No. 6, 1990, pp. 171–95.

Strategic planning can be of two types: ‘usual’ and ‘serendipitous’. What the Honda Company

had thought of doing earlier was the usual approach of a business strategy of deciding ‘who’ the customer is going to be, ‘what’ to provide him/her and ‘how’ to provide it. Thus, being in the

US market for 250 cc and 305 cc motorbikes for the bike-enthusiasts was a defining feature of

Honda’s usual business strategy. It was planned based on the normal modes of understanding a

market. This business strategy, in its turn, was to drive the functional strategies like the operations strategy, marketing channels and distribution and logistics strategy in the firm. However, the

actual experience in the field (in the USA) gave Honda a new, previously unthought-of perspective on the market: that of the dormant demand for a cheap transport vehicle that could be supplied through a never-before-imagined channel, the Sears Roebuck stores. This new perspective that ‘emerged’ made Honda change its business strategy radically. The new perspective now became a dominant factor in strategic planning. The company sold 50 cc Honda ‘Cubs’ instead of the high-powered motorbikes through an altogether new marketing channel with new logistics needs. This

is an ‘emergent strategy’ aspect of strategy formulation. Serendipity also has a role in deciding a

company’s business strategy in addition to planning based upon regularly available data on the

market. Figure 2.1 depicts these aspects of strategic planning.

Figure 2.1

Usual and Serendipitous Strategy Making

Another example of a ‘serendipitous strategy’ or, in other words, ‘seizing the unexpected opportunity’ is that of the 3M Company, which was a small firm during the 1920s, attempting to get over the problems with its wet-and-dry sandpaper used in automobile companies. While trying to develop a strong adhesive to solve the specific problem of the grit of the sandpaper getting detached and ruining the painting job, the R & D man at 3M happened to develop a weak adhesive instead of a strong adhesive. However, a paper coated with it could be peeled off easily without leaving any residue. This serendipitous discovery was seized by 3M to develop a ‘masking tape’ to cover the parts of the auto body that were not to be painted. It became a huge success. From this time on, 3M devoted its business to ‘sticky tape’. The rest is history.

* Richard T. Pascale, ‘Perspectives on Strategy: The Real Story Behind Honda’s Success’, California Management Review, Vol. 26, No. 3, Spring 1984, pp. 47–72.

Thus, while strategy making is mostly a planned activity, an organisation has to be alert about

any chance opportunities that may emerge. Strategies can be ‘emergent’ also.

The major components of the strategic management process are: (a) Defining the mission and

objectives of the organisation, (b) Scanning the environment (external and internal), (c) Deciding

on an organisational strategy appropriate to the strengths and weaknesses of the organisation after

taking cognisance of the emerging opportunities and possible threats to the organisation, and (d)

Implementing the chosen strategy.

WHAT IS OPERATIONS/MANUFACTURING STRATEGY?

From the earlier discussion on strategy, it is clear that operations/manufacturing strategy has to be

an integral part of the overall organisational strategy. Without defining the mission and objectives

of the organisation, checking up on the external and internal environment, performing the SWOT

analysis for the organisation and then deciding on the basis for competing and/or key factors

for success, no operations/manufacturing strategy can be formulated. The latter presupposes an

in-depth knowledge/understanding about the organisation: its past direction, its strengths and

weaknesses, the forces affecting it and what it must become.

This means, there are two-way feedbacks in any process to arrive at the strategies of the

organisation and of its functional disciplines such as the operations function. It is understandable

that a functional strategy such as the operations strategy is derived from the overall organisational

and business strategies. Operations have to be congruent with the strategic stance of the business.

However, the business strategy may be derived based on the ‘strengths’ of the operations function

in that organisation. The ‘core competence’ of the firm is a very important consideration, because

that special competence can be exploited by the firm while competing in the market. For instance,

if the quality/reliability of products/services is a special strength of a firm, then that firm’s business

strategy is mainly based on its ability to supply quality products and reliable service to the customer.

Strategy making is not a one-way street. Strategy formulation is an iterative process with

feedback between the business strategy and functional strategies such as the operations strategy.

It is true that since the customer should be the focus of a business, the customer and the

environment in which s/he is situated are of paramount importance in deciding upon a business

strategy and the functional strategies. While the core competencies and the exceptional strengths

of the functions like operations can be and should be leveraged in the market to provide improved

services/products to the customer, a firm cannot always design its strategies based upon its own

perceived strengths. Yesterday’s core competence may not be today’s competence. One has to

guard against such self-delusions. Knowing the pulse of the market, i.e. knowing the customer

and providing her/him the desired service/product through appropriate business and operations

strategies, is the prime raison d’être of the organisation. Figure 2.2 depicts these concepts.

Figure 2.2

Two-Way Process of Strategy Making

Chapter 1 contains a table listing various long-term decisions. Some of the long-term decisions

could be strategic decisions. But, strictly speaking, not all long-term decisions are strategic decisions.

Some are decisions that merely support the strategy decided upon. In earlier decades, when

competition was not as intense as it is today at a global level, it was apt to speak only in terms of

technology strategy, market strategy and resources strategy in isolation. A company could develop

and produce a technologically superior product and could expect the customers to fall head over

heels to buy the product. This was because technology diffusion was significantly slower then than

it is today. Technology leadership remained with the innovating company for a

substantial period of time. Companies could afford to stay smug in the belief that ‘our designs

are superior’, and do nothing else. Even as time passed, whether the customers needed the

‘superior’ design features in full, in part or not at all, the company would not

change its stance. It would keep banking on its ‘strategy’ of design competence. However, it is now

very much realised that all these ‘superiorities’ are for the customer. If a company sources its inputs

efficiently or even uniquely, it does so keeping in view the interests of the customer. All strategies originate from the need to satisfy the customer, without whom there is no business. Strategic

planning is not a static and compartmentalised process, but it is an on-going and integrated

exercise.

After having decided upon the mission, objectives and (as a part of it) the targeted market

segment in which the company will compete, the company has to choose between the fundamental

strategic competitive options of (a) Meaningful differentiation and (b) Cost leadership.

MEANINGFUL DIFFERENTIATION

Meaningful differentiation means being different and superior in some aspect of the business that has value to the customer.

Examples are a wider product range, a functionally superior product or superior after-sales service. The underlying fundamental objective is to serve the customer better through various means.

A company may make a product with a superior design, but, then, that is done with the customer

in mind; it is not design for design’s sake, followed by waiting for customers to make a beeline for the better-designed product. The cart should never be put before the horse. Such customer orientation

will make the objective of the ‘differentiation’ clear to the decision-makers. Differentiation is a

strategy to win customers and to retain them for a long time. The requirement, therefore,

is to retain the advantage of ‘differentiation’ by continual improvement in the specifically

chosen area of differentiation. One could even diversify into other areas of differentiation.

FLEXIBILITY

In fact, today’s market requires that while a company differentiates itself from its competitors on

one aspect, it should perform adequately on other aspects of service too. Concentrating exclusively

on one aspect at the expense or neglect of the others is not acceptable. The company, therefore, must do

an adequate or satisfactory job on various fronts, such as providing flexibility in terms of:

(i) Product design

(ii) Product range or product mix

(iii) Volumes

(iv) Quick deliveries

(v) Quick introduction of new product/design

(vi) Responding quickly to the changed needs of the customer or quickly attending to the problems of the customer.

While flexibility is one of the differentiation strategies, it is fast becoming a vital

prerequisite for providing meaningful service to the customer. This is because the competitive

conditions are changing rapidly and a company cannot hold on to the advantage of a particular

kind of differentiation for a long time unless it is flexible enough to respond quickly to the changed

needs of the customer. The future is not viewed as a static extrapolation of past trends and the

present; it is seen more as a dynamic phenomenon, although a few basic or floor-level issues might

remain the same.

Flexibility is a part of being responsive, responsible, reliable, accessible, communicative and

empathetic to the customer. These are all service quality characteristics. Flexibility involves not

only operations (manufacturing, supplies) but also the design and development, the project

execution, the marketing and the servicing functions. The manufacturing/operations part consists

of being flexible enough (in machines, processes and manpower) to process the altered

designs and the variety in small batches, with minimal wastage of time, at the desired quality and in the

shortest process time possible, and to deliver on time for the logistics function to take over the next

part of the responsibility. Flexibility in operations should provide alternative potential platforms

to compete if the business so desires or if the market so requires it. It should provide the company

with the capability to be resilient to threats such as new or improved competition, the availability

of substitute products, changes in local government or international policies, and resource crunches

that arise suddenly. An operations system should offer market flexibilities through flexibilities

in machinery, processes, technology implementation, men, systems and arrangements and time

utilisation. The flexibility should improve the capacity (rather, the capability) for self-renewal and

adaptation to the changing external and internal environment. Competing for tomorrow should

be as much on the agenda as competing for today.

COMPARISON: TRADITIONAL vs NEW APPROACHES

It is instructive to compare the traditional and new approaches when the basis for competition is the same. The comparison is given in Table 2.1.

Table 2.1

Approaches to Meaningful Differentiation through Product Availability

Basis for Competition: Product is available when needed by the customer.

Old Approach

Keep/increase buffer stocks of the finished goods and other materials.

Invest in more machinery, hire more people, get more materials and thus increase the production capacity.

The by-products are:

(i) Increased costs due to higher inventories and higher investments.

(ii) Larger inventories (W.I.P. and Finished Goods) require longer internal lead times, defeating the very purpose behind larger inventories.

(iii) Stock-outs due to overload of some work centres.

(iv) Urgent orders increase in order to meet the stock-outs.

(v) Increased complexity giving rise to further wastes and costs.

(vi) Confusion on the shop floor or in the operations system.

(vii) Failure to meet customer’s changing needs.

New Approach

Decrease response time by:

(i) Reduction of operations lead times and delivery times through continuous improvements.

(ii) No postponements or cancellations of the scheduled production, thus ensuring the supply on time.

(iii) Improvements in quality; producing right the first time, self-inspection and certification; all of this leading to less unnecessary wastage of time and an actual reduction in operations/process times.

(iv) Improved machinery maintenance, improved design of the products and processes, so that the expenditure of time due to breakdowns, rejects and reworks is avoided.

The by-products are:

(i) Improved reliability in terms of deliveries and performance quality.

(ii) Simplicity in the system.

(iii) Reduced costs.

(iv) Reduced product variability.

(v) Improved flexibilities (time, product-mix, volumes).

(vi) Enhanced company/brand image.

Source: Adapted from Greenhalgh, Garry Robert, Manufacturing Strategy: Formulation & Implementation,

Addison-Wesley, Singapore, 1990.
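
The claim in Table 2.1 that larger inventories lengthen internal lead times can be illustrated with a small calculation. The sketch below uses Little's Law (average lead time = average work-in-process / average throughput), a standard queueing result that the text does not cite and that is brought in here only as an illustrative assumption. The function name and all figures are hypothetical.

```python
def average_lead_time(wip_units: float, throughput_per_day: float) -> float:
    """Average internal lead time in days, using Little's Law.

    Assumption (not from the text): the operations system is in a steady
    state, so lead time = average WIP / average throughput.
    """
    if throughput_per_day <= 0:
        raise ValueError("throughput_per_day must be positive")
    return wip_units / throughput_per_day


# Hypothetical plant producing 100 units per day in both cases.
new_approach = average_lead_time(wip_units=200, throughput_per_day=100)
old_approach = average_lead_time(wip_units=600, throughput_per_day=100)

print(f"Lead time with low W.I.P.:  {new_approach} days")
print(f"Lead time with high W.I.P.: {old_approach} days")
```

With output capacity unchanged, tripling the work-in-process in this sketch triples the average time an order spends in the system, which is the sense in which buffer stocks can defeat their own purpose.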

COST LEADERSHIP

The other fundamental strategic competitive option that a company can choose is ‘cost leadership’

i.e. offering the product/service at the lowest price in the industry. This has to be achieved by cost

reductions. However, the difference between the modern approach and the traditional approach

to cost reduction shows the wide gulf in understanding the customer. Concern for the customer

(and, therefore, the provision of strategic flexibility), which is an essential ingredient, makes all

the difference as shown in the comparison presented in Table 2.2.

Table 2.2

Approaches to Cost Leadership as a Strategy

Basis for Competition: Cost leadership

Traditional Approach

Modern Approach

Control on costs, especially related to direct

labour.

Eliminate only the non-value adding activities.

Reduction in the budgetary allocations, particularly easily implementable ones such as on

training, human resource development, and on

vendor development.

Improve quality of design and thus reduce costs

of input materials and costs of processing and

packaging, etc.

Defer investment in machines, including the

necessary replacements.