Measurement and

Data Analysis for

Engineering and Science

Fourth Edition

Patrick F. Dunn

Michael P. Davis

Boca Raton London New York

CRC Press is an imprint of the

Taylor & Francis Group, an informa business

MATLAB® is a trademark of The MathWorks, Inc. and is used with permission. The MathWorks does not warrant the
accuracy of the text or exercises in this book. This book’s use or discussion of MATLAB® software or related products
does not constitute endorsement or sponsorship by The MathWorks of a particular pedagogical approach or particular
use of the MATLAB® software.

CRC Press

Taylor & Francis Group

6000 Broken Sound Parkway NW, Suite 300

Boca Raton, FL 33487-2742

© 2018 by Taylor & Francis Group, LLC

CRC Press is an imprint of Taylor & Francis Group, an Informa business

No claim to original U.S. Government works

Printed on acid-free paper

Version Date: 20171106

International Standard Book Number-13: 978-1-1380-5086-0 (Hardback)

This book contains information obtained from authentic and highly regarded sources. Reasonable efforts have been

made to publish reliable data and information, but the author and publisher cannot assume responsibility for the validity

of all materials or the consequences of their use. The authors and publishers have attempted to trace the copyright

holders of all material reproduced in this publication and apologize to copyright holders if permission to publish in this

form has not been obtained. If any copyright material has not been acknowledged please write and let us know so we may

rectify in any future reprint.

Except as permitted under U.S. Copyright Law, no part of this book may be reprinted, reproduced, transmitted, or utilized

in any form by any electronic, mechanical, or other means, now known or hereafter invented, including photocopying,

microfilming, and recording, or in any information storage or retrieval system, without written permission from the

publishers.

For permission to photocopy or use material electronically from this work, please access www.copyright.com (http://www.copyright.com/)
or contact the Copyright Clearance Center, Inc. (CCC), 222 Rosewood Drive, Danvers, MA 01923,

978-750-8400. CCC is a not-for-profit organization that provides licenses and registration for a variety of users. For

organizations that have been granted a photocopy license by the CCC, a separate system of payment has been arranged.

Trademark Notice: Product or corporate names may be trademarks or registered trademarks, and are used only for

identification and explanation without intent to infringe.

Visit the Taylor & Francis Web site at

http://www.taylorandfrancis.com

and the CRC Press Web site at

http://www.crcpress.com

Contents

Preface
Authors

1 Introduction to Experimentation
    1.1 A Famous Experiment
    1.2 Phases of an Experiment
    1.3 Example Experiments
    Bibliography

I Planning an Experiment

2 Planning an Experiment - Overview

3 Experiments
    3.1 Role of Experiments
    3.2 The Experiment
    3.3 Classification of Experiments
    3.4 Plan for Successful Experimentation
    3.5 Problems
    Bibliography

II Identifying Components

4 Identifying Components - Overview

5 Fundamental Electronics
    5.1 Concepts and Definitions
        5.1.1 Charge
        5.1.2 Current
        5.1.3 Electric Force
        5.1.4 Electric Field
        5.1.5 Electric Potential
        5.1.6 Resistance and Resistivity
        5.1.7 Power
        5.1.8 Capacitance
        5.1.9 Inductance
    5.2 Circuit Elements
        5.2.1 Resistor
        5.2.2 Capacitor
        5.2.3 Inductor
        5.2.4 Transistor
        5.2.5 Voltage Source
        5.2.6 Current Source
    5.3 RLC Combinations
    5.4 Elementary DC Circuit Analysis
        5.4.1 Voltage Divider
        5.4.2 Electric Motor with Battery
        5.4.3 Wheatstone Bridge
    5.5 Elementary AC Circuit Analysis
    5.6 Equivalent Circuits*
    5.7 Meters*
    5.8 Impedance Matching and Loading Error*
    5.9 Electrical Noise*
    5.10 Problems
    Bibliography

6 Measurement Systems: Sensors and Transducers
    6.1 The Measurement System
    6.2 Sensor Domains
    6.3 Sensor Characteristics
    6.4 Physical Principles of Sensors
    6.5 Electric
        6.5.1 Resistive
        6.5.2 Capacitive
        6.5.3 Inductive
    6.6 Piezoelectric
    6.7 Fluid Mechanic
    6.8 Optic
    6.9 Photoelastic
    6.10 Thermoelectric
    6.11 Electrochemical
    6.12 Sensor Scaling*
    6.13 Problems
    Bibliography

7 Measurement Systems: Other Components
    7.1 Signal Conditioning, Processing, and Recording
    7.2 Amplifiers
    7.3 Filters
    7.4 Analog-to-Digital Converters
    7.5 Smart Measurement Systems
        7.5.1 Sensors and Microcontroller Platforms
        7.5.2 Arduino Microcontrollers
        7.5.3 Wireless Transmission of Data
        7.5.4 Using the MATLAB Programming Environment
        7.5.5 Examples of Arduino Programming using Simulink
    7.6 Other Example Measurement Systems
    7.7 Problems
    Bibliography

III Assessing Performance

8 Assessing Performance - Overview

9 Measurement Systems: Calibration and Response
    9.1 Static Response Characterization by Calibration
    9.2 Dynamic Response Characterization
    9.3 Zero-Order System Dynamic Response
    9.4 First-Order System Dynamic Response
        9.4.1 Response to Step-Input Forcing
        9.4.2 Response to Sinusoidal-Input Forcing
    9.5 Second-Order System Dynamic Response
        9.5.1 Response to Step-Input Forcing
        9.5.2 Response to Sinusoidal-Input Forcing
    9.6 Combined-System Dynamic Response
    9.7 Problems
    Bibliography

10 Measurement Systems: Design-Stage Uncertainty
    10.1 Design-Stage Uncertainty Analysis
    10.2 Design-Stage Uncertainty Estimate of a Measurand
    10.3 Design-Stage Uncertainty Estimate of a Result
    10.4 Problems
    Bibliography

IV Setting Sampling Conditions

11 Setting Sampling Conditions - Overview

12 Signal Characteristics
    12.1 Signal Classification
    12.2 Signal Variables
    12.3 Signal Statistical Parameters
    12.4 Problems
    Bibliography

13 The Fourier Transform
    13.1 Fourier Series of a Periodic Signal
    13.2 Complex Numbers and Waves
    13.3 Exponential Fourier Series
    13.4 Spectral Representations
    13.5 Continuous Fourier Transform
    13.6 Continuous Fourier Transform Properties*
    13.7 Discrete Fourier Transform
    13.8 Fast Fourier Transform
    13.9 Problems
    Bibliography

14 Digital Signal Analysis
    14.1 Digital Sampling
    14.2 Digital Sampling Errors
        14.2.1 Aliasing
        14.2.2 Amplitude Ambiguity
    14.3 Windowing*
    14.4 Determining a Sample Period
    14.5 Problems
    Bibliography

V Analyzing Data

15 Analyzing Results - Overview

16 Probability
    16.1 Basic Probability Concepts
        16.1.1 Union and Intersection of Sets
        16.1.2 Conditional Probability
        16.1.3 Sample versus Population
        16.1.4 Plotting Statistical Information
    16.2 Probability Density Function
    16.3 Various Probability Density Functions
        16.3.1 Binomial Distribution
        16.3.2 Poisson Distribution
    16.4 Central Moments
    16.5 Probability Distribution Function
    16.6 Problems
    Bibliography

17 Statistics
    17.1 Normal Distribution
    17.2 Normalized Variables
    17.3 Student’s t Distribution
    17.4 Rejection of Data
        17.4.1 Single-Variable Outlier Determination
        17.4.2 Paired-Variable Outlier Determination
    17.5 Standard Deviation of the Means
    17.6 Chi-Square Distribution
        17.6.1 Estimating the True Variance
        17.6.2 Establishing a Rejection Criterion
        17.6.3 Comparing Observed and Expected Distributions
    17.7 Pooling Samples*
    17.8 Hypothesis Testing*
        17.8.1 One-Sample t-Test
        17.8.2 Two-Sample t-Test
    17.9 Design of Experiments*
    17.10 Factorial Design*
    17.11 Problems
    Bibliography

18 Uncertainty Analysis
    18.1 Modeling and Experimental Uncertainties
    18.2 Probabilistic Basis of Uncertainty
    18.3 Identifying Sources of Error
    18.4 Systematic and Random Errors
    18.5 Quantifying Systematic and Random Errors
    18.6 Measurement Uncertainty Analysis
    18.7 Uncertainty Analysis of a Multiple-Measurement Result
    18.8 Uncertainty Analysis for Other Measurement Situations
    18.9 Uncertainty Analysis Summary
    18.10 Finite-Difference Uncertainties*
        18.10.1 Derivative Approximation*
        18.10.2 Integral Approximation*
        18.10.3 Uncertainty Estimate Approximation*
    18.11 Uncertainty Based upon Interval Statistics*
    18.12 Problems
    Bibliography

19 Regression and Correlation
    19.1 Least-Squares Approach
    19.2 Least-Squares Regression Analysis
    19.3 Linear Analysis
    19.4 Higher-Order Analysis*
    19.5 Multi-Variable Linear Analysis*
    19.6 Determining the Appropriate Fit
    19.7 Regression Confidence Intervals
    19.8 Regression Parameters
    19.9 Linear Correlation Analysis
    19.10 Signal Correlations in Time*
        19.10.1 Autocorrelation*
        19.10.2 Cross-Correlation*
    19.11 Problems
    Bibliography

VI Reporting Results

20 Reporting Results - Overview

21 Units and Significant Figures
    21.1 English and Metric Systems
    21.2 Systems of Units
    21.3 SI Standards
    21.4 Technical English and SI Conversion Factors
        21.4.1 Length
        21.4.2 Area and Volume
        21.4.3 Density
        21.4.4 Mass and Weight
        21.4.5 Force
        21.4.6 Work and Energy
        21.4.7 Power
        21.4.8 Light Radiation
        21.4.9 Temperature
        21.4.10 Other Properties
    21.5 Prefixes
    21.6 Significant Figures
    21.7 Problems
    Bibliography

22 Technical Communication
    22.1 Guidelines for Writing
        22.1.1 Writing in General
        22.1.2 Writing Technical Memoranda
        22.1.3 Number and Unit Formats
        22.1.4 Graphical Presentation
    22.2 Technical Memo
    22.3 Technical Report
    22.4 Oral Technical Presentation
    22.5 Problems
    Bibliography

Glossary
Symbols
Review Problem Answers
Index

Preface

This text covers the fundamentals of experimentation used by both engineers and scientists.

These include the six phases of experimentation: planning an experiment, identifying its

measurement-system components, assessing the performance of the measurement system,

setting the signal sampling conditions, analyzing the experimental results, and reporting the

findings. Throughout the text, historical perspectives on various tools of experimentation

also are provided.

This is the fourth edition of Measurement and Data Analysis for Engineering and Science. The first edition was published in 2005 by McGraw-Hill and the later editions by
CRC Press/Taylor & Francis Group. Since its beginning, the text has been adopted by an

increasing number of universities and colleges within the U.S., the U.K., and other countries.

This edition has been updated, and new features have been added, as follows.

• Chapters now are organized in terms of six phases of experimentation. The chapters

relevant to each phase are preceded by an overview chapter. The chapters for a phase

are denoted in the margins by tabs with the title of that phase.

• Two example experiments are introduced in the first chapter. These experiments are

examined progressively in each of six overview chapters, in which the tools of that

phase are applied to the example experiments. Then, the tools are explained in detail

in that phase’s subsequent chapters.

• As with the previous edition, chapter sections that typically are not covered in an

introductory undergraduate course on experimentation are denoted by asterisks. The

text including all sections can be used in an upper-level undergraduate or introductory

graduate course on experimentation.

• Words highlighted in boldface within the text are also defined in the Glossary.

• Topics that have been either expanded or added to this edition include (a) the rejection of data outliers, (b) conditional probability, (c) probability distributions, (d)

hypothesis testing, and (e) MATLAB® Sidebars.

• More problems have been added to this edition, both within the text and as homework

problems. There are now more than 125 solved example problems presented in the

text and more than 410 homework problems.

• The text is complemented by an extensive text website. Previous-edition chapter sections and appendices not in this latest edition, codes, files, and much more are available

at that site.

Instructors who adopt this text for their course can receive the problem solutions manual,

a laboratory exercise solution manual, and slide presentations from Notre Dame’s course in

Measurement and Data Analysis by contacting the publisher.

Over the span of four editions, many undergraduate students, graduate students, and

faculty have contributed to this text. They are acknowledged by name in the prefaces of the

previous editions. A special acknowledgment goes to our editor, Jonathan Plant, who has

been this text’s editor for all four editions. Without his encouragement, professionalism,

and continued support, this text never would have happened.

Finally, each member of our families has always supported us along the way. We sincerely thank every one of them for always being there for us.

Patrick F. Dunn, University of Notre Dame and

Michael P. Davis, University of Southern Maine

Author Text Web Site: https://www3.nd.edu/~pdunn/www.text/measurements.html

Publisher Text Web Site: https://www.crcpress.com/Measurement-and-Data-Analysis-for-Engineering-and-Science-Fourth-Edition/Dunn/p/book/9781138050860

Written by PFD while at the University of Notre Dame, Notre Dame, Indiana, the University

of Notre Dame London Centre, London, England, Delft University of Technology, Delft, The

Netherlands, and, most recently, in beautiful Wisconsin, and, with this edition, by MPD in

scenic Maine.

For product information about MATLAB® and Simulink, contact:

The MathWorks, Inc.

3 Apple Hill Drive

Natick, MA 01760-2098 USA

Tel: 508-647-7000

Fax: 508-647-7001

E-mail: info@mathworks.com

Web: www.mathworks.com

Arduino is an open-source electronics prototyping platform based on flexible, easy-to-use hardware and software. It’s intended for artists, designers, hobbyists and anyone interested in creating interactive objects or environments. For product information see:

http://arduino.cc/

Authors

Patrick F. Dunn, Ph.D., P.E., is professor emeritus of aerospace and mechanical engineering at the University of Notre Dame, where he was a faculty member from 1985 to

2015. Prior to 1985, he was a mechanical engineer at Argonne National Laboratory from

1976 to 1985 and a postdoctoral fellow at Duke University from 1974 to 1976. He earned

his B.S., M.S., and Ph.D. degrees in engineering from Purdue University (1970, 1971, and

1974). He graduated from Abbot Pennings High School in 1966.

Professor Dunn is the author of over 160 scientific journal and refereed symposia publications, mostly involving experimentation, and a professional engineer in Indiana and Illinois.

He is a Fellow of the American Society of Mechanical Engineers and an Associate Fellow of

the American Institute of Aeronautics and Astronautics. He is the recipient of departmental,

college, and university teaching awards.

Professor Dunn’s scientific expertise is in fluid mechanics, microparticle behavior in fluid

flows, and uncertainty analysis. He is an experimentalist with over 45 years of laboratory

experience. He is the author of the textbooks Measurement and Data Analysis for Engineering and Science (first edition by McGraw-Hill, 2005; second edition by Taylor & Francis /

CRC Press, 2010; third edition by Taylor & Francis / CRC Press, 2014; this fourth edition

by Taylor & Francis / CRC Press), Fundamentals of Sensors for Engineering and Science

(first edition by Taylor & Francis / CRC Press, 2011) and Uncertainty Analysis for Forensic Science with R.M. Brach (first and second editions by Lawyers & Judges Publishing

Company, 2004 and 2009).

Michael P. Davis, Ph.D., has been a lecturer in Mechanical Engineering at the University of
Southern Maine since 2014. He has extensive industry experience in gas turbine engines,

control systems, and manufacturing, working for Pratt & Whitney from 2000 to 2007 and

Bath Iron Works from 2008 to 2011. He was a founding faculty member of the University of

Maine Orono’s innovative Brunswick Engineering Program (2011 to 2014), which introduced

an integrated engineering curriculum to the first two years of a B.S. degree in engineering.

Dr. Davis holds a B.S. degree in Aerospace Engineering from the University of Notre Dame

(1998), an M.S. degree in Mechanical Engineering from Worcester Polytechnic Institute

(2000), and a Ph.D. in Mechanical Engineering from Notre Dame (2008).

Dr. Davis’s professional and scholarly interests include engineering education, fluid mechanics and heat transfer, and experimentation and measurement. He has taught courses

in design, solid mechanics and statics, thermodynamics, differential equations and linear

algebra, manufacturing statistics, and mechanical engineering laboratory.

1

Introduction to Experimentation

CONTENTS

1.1 A Famous Experiment
1.2 Phases of an Experiment
1.3 Example Experiments

... the ultimate arbiter of truth is experiment, not the comfort one derives from a priori

beliefs, nor the beauty or elegance one ascribes to one’s theoretical models.

Lawrence M. Krauss. 2012. A Universe from Nothing. New York: Free Press.

Chance favours only the prepared mind.

Louis Pasteur, 1879.

1.1

A Famous Experiment

His idea was profound, yet simple - to determine the diameter of the earth by measuring

only one angle. His predecessors had postulated certain attributes of the earth and universe.

Three hundred years earlier, around 500 B.C., Anaximander suggested that the earth and

universe were three-dimensional. Later, Pythagoras proposed that the earth was spherical.

Aristotle followed arguing that the earth’s size was small compared to stellar distances [1].

From these suppositions, the Greek Eratosthenes reasoned that the sun’s rays were

essentially parallel when they reached the earth. He also learned from travelers from Syene that no shadow

could be seen in the Syene well at noon on the summer solstice. This city was about 750

km due south of Alexandria, where Eratosthenes lived. At that moment, the sun must have

been directly overhead of the Syene well.

Armed with this information, Eratosthenes conceived of and performed his now-famous

experiment [2]. He placed an obelisk vertically on the ground in Alexandria and observed its

shadow at noon on the summer solstice. Then, he measured the angle between the obelisk

and its shadow. It was 7° 14′ (2 % of the 360° circumference) [3].

Notably, Eratosthenes chose to measure an angle rather than the lengths of the obelisk

and its shadow. Angular measurement was sufficiently accurate in his time. In fact, his

angular measurement still compares to within 1/60th of a degree of that obtained by modern

measurements. However, Eratosthenes also needed to know the distance between Syene and

Alexandria to determine the earth’s diameter. Estimating this distance introduced the most

uncertainty into his result.

Eratosthenes reasoned that if it took a camel 50 days to travel between Alexandria

and Syene, covering a distance of approximately 100 stadia per day, then the distance

1

2

Measurement and Data Analysis for Engineering and Science

Planning

Identifying Measurement

Assessing Measurement

Setting Signal

Analyzing

Reporting

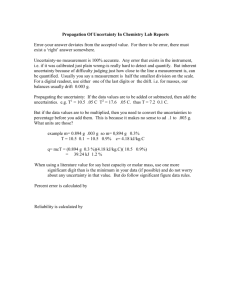

FIGURE 1.1

The phases of an experiment.

between the two cities was 5000 stadia. Using this distance and his angular measurement,

Eratosthenes calculated the earth’s circumference to be 250 000 stadia. This corresponds to

an earth radius of 7360 km (1 stadium equals 0.185 km), approximately 15 % larger

than the current value (6378 km at the equator).

Eratosthenes’ experiment contained many elements of a good experiment. He prepared

himself well before beginning his experiment. He used known facts, made plausible assumptions, and employed the most accurate measurement methods of his time. He analyzed the

data and concluded by reporting his results.
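The arithmetic behind Eratosthenes’ estimate can be retraced in a few lines. The following is a minimal sketch, not a reconstruction of his actual procedure. It uses the travel time, daily distance, and angle quoted above. The stadium length of 0.185 km and the use of 6378 km as the earth’s equatorial radius are assumptions made here for illustration, and the function and variable names are hypothetical.

```python
import math

# Values quoted in the text.
TRAVEL_DAYS = 50                  # camel journey, Alexandria to Syene
STADIA_PER_DAY = 100              # approximate daily distance
SHADOW_ANGLE_DEG = 7 + 14 / 60    # 7 degrees 14 minutes

# Assumptions made for this illustration (not taken from the text).
KM_PER_STADIUM = 0.185            # assumed stadium length
REFERENCE_RADIUS_KM = 6378.0      # modern equatorial radius


def estimate_earth_size(travel_days, stadia_per_day, angle_deg):
    """Return (distance, circumference, radius), all in stadia."""
    distance = travel_days * stadia_per_day
    # With parallel sun rays, the measured angle equals the angle that the
    # two cities subtend at the earth's center, so the distance between
    # them is angle/360 of the full circumference.
    circumference = distance * 360.0 / angle_deg
    radius = circumference / (2.0 * math.pi)
    return distance, circumference, radius


distance, circumference, radius = estimate_earth_size(
    TRAVEL_DAYS, STADIA_PER_DAY, SHADOW_ANGLE_DEG
)
radius_km = radius * KM_PER_STADIUM
percent_high = 100.0 * (radius_km - REFERENCE_RADIUS_KM) / REFERENCE_RADIUS_KM

print(f"Distance between cities: {distance} stadia")
print(f"Circumference: {circumference:.0f} stadia")
print(f"Radius: {radius_km:.0f} km ({percent_high:.1f} % above reference)")
```

Because the angle is not rounded to exactly 2 % of a circle, the computed circumference comes out slightly below the rounded figure of 250 000 stadia. Changing `KM_PER_STADIUM` shows how strongly the final result depends on the distance estimate, which is the point made above about the largest source of uncertainty.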

1.2

Phases of an Experiment

Today, more than two millennia after Eratosthenes’ endeavor, experiments have become much

more involved. Computers, sensors, electronics, signal conditioning, signal processing, signal

analysis, probability, statistics, uncertainty analysis, and reporting are now parts of almost

all experiments. These parts of an experiment can be divided arbitrarily into six phases,


as shown in Figure 1.1. In each phase, many practical questions can be answered. Some of

these are as follows.

1. Planning an Experiment:

• What defines an experiment?

• How are experiments classified?

• What experimental approach should be taken?

• What steps can assure a successful experiment?

2. Identifying Measurement System Components:

• What are the main elements of a measurement system?

• What basic electronics are used in most measurement systems?

• How can the physical variables be sensed?

• What electronic signal conditioning is required?

• What measurement components are needed?

3. Assessing Measurement System Performance:

• How are input physical stimulus and measurement system output related?

• How are measurement system components calibrated?

• What characterizes the static and dynamic responses of the components?

• What uncertainties are there for a chosen measurement system?

4. Setting Signal Sampling Conditions:

• How are signals classified?

• How does classification affect measurement conditions?

• How much data should be acquired?

• What sampling rate is required?

• How do sampling conditions affect the results?

5. Analyzing Experimental Results:

• What analytical techniques are used to examine experimental results?

• What statistics are required?

• What statistical confidence is associated with the data?

• How are the data correlated?

• How do they compare with theory and/or other experiments?

• What are the overall uncertainties in the results?

• When is it appropriate to reject data?

• How is an experimental hypothesis accepted or rejected?

6. Reporting Experimental Results:

• What are the proper number, unit, and uncertainty expressions?

• What are the appropriate formats for the written document?

• What elements are needed to communicate the results effectively?

In this text, each phase of experimentation is introduced by its own overview chapter.

This overview is followed by the chapters that present that phase’s topics in more depth.

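TABLE 1.1

Tools for each experimental phase. Tools that are italicized are applied to the example experiments in their respective overview chapters.

| Tool | Planning | Identifying | Assessing | Setting | Analyzing | Reporting |
|---|---|---|---|---|---|---|
| Objective | x | | | | | |
| Classification | x | | | | | |
| Variables | x | | | | | |
| Control Volume Analysis | x | | | | | |
| Measurement System | | x | | | | |
| Sensors and Transducers | | x | | | | |
| Signal Conditioning | | x | | | | |
| Signal Processing | | x | | | | |
| Design-Stage Uncertainty | | x | | | | |
| Component Calibration | | | x | | | |
| Static Response | | | x | | | |
| Dynamic Response | | | x | | | |
| Signal Characterization | | | | x | | |
| Sampling Rate | | | | x | | |
| Sampling Period | | | | x | | |
| Fourier Analysis | | | | x | | |
| Sampling Statistics | | | | | x | |
| Probability Distribution | | | | | x | |
| Data Rejection | | | | | x | |
| Pooling Samples | | | | | x | |
| Hypothesis Testing | | | | | x | |
| Design of Experiments | | | | | x | |
| Measurement Uncertainty | | | | | x | |
| Data Regression | | | | | x | |
| Data Correlation | | | | | x | |
| Units | | | | | | x |
| Significant Figures | | | | | | x |
| Technical Reporting | | | | | | x |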

1.3

Example Experiments

The tools developed in this text for each phase of experimentation are listed in Table 1.1.

These tools will be illustrated in the overview chapters by applying them to two example

experiments, one related to biomedical engineering and the other to environmental engineering.

The tools that are applied specifically to the two example experiments are italicized in

Table 1.1. Only the results of using the tools are presented in the overview chapters. It is

left to the reader to explore the details of each tool in the chapters related to that phase.

Descriptions of the two experiments follow.

Fitness Tracking - A Biomedical Engineering Experiment:

The power expenditure and heart rate of a cyclist during exercise are measured to better

understand their relationship with certain environmental factors. A series of high-intensity-interval workout protocols was performed by a recreational cyclist during which power,

heart rate, and cadence were measured. The workouts consisted of a strenuous (high-power)

effort for a given time followed by an equal-length recovery (low-power) effort. Interval

lengths studied included one-, three-, and 20-minute efforts. The relationship between heart

rate and power was studied for different cadences. Data were collected using a commercially

available heart rate monitor, a power meter, and a cadence sensor. These sensor outputs

were transmitted wirelessly to a GPS bike computer that recorded the data.

Microclimate Changes - An Environmental Engineering Experiment:

Variations in the weather conditions of a microclimate are studied by measuring its

pressure, temperature, and relative humidity under different sunlight irradiance and wind

conditions. Relationships between these variables and the effect of wind speed are examined.

Data acquisition is accomplished using a small sensing package that is placed into the

microclimate region by a drone. The sensing package monitors the environmental conditions

at fixed time intervals. The package’s output is transmitted wirelessly to a receiver and a

memory card located onboard the drone as the drone flies within one mile of the transmitter.

Bibliography

[1] Balchin, J. 2014. Quantum Leaps: 100 Scientists Who Changed the World. London:

Arcturus Publishing Ltd.

[2] Crease, R.P. 2004. The Prism and the Pendulum: The Ten Most Beautiful Experiments

in Science. New York: Random House.

[3] Boorstin, D.J. 1983. The Discoverers: A History of Man’s Search to Know His World

and Himself. New York: Random House.

[4] Park, R.L. 2000. Voodoo Science: The Road from Foolishness to Fraud. New York:

Oxford University Press.

[5] Gregory, A. 2001. Eureka! The Birth of Science. Duxford, Cambridge, UK: Icon Books.

[6] Harré, R. 1984. Great Scientific Experiments. New York: Oxford University Press.

[7] Boorstin, D.J. 1985. The Discoverers. New York: Vintage Books.

[8] Gale, G. 1979. The Theory of Science. New York: McGraw-Hill.


Part I

Planning an Experiment


2

Planning an Experiment - Overview

Experiments essentially pose questions and seek answers. A good experiment provides an

unambiguous answer to a well-posed question.

Henry N. Pollack. 2003. Uncertain Science ... Uncertain World. Cambridge: Cambridge

University Press.

Experiments actually have been around ever since the beginning of mankind. Our first

experiment is performed almost immediately after we are born. Being hungry, we cry and

wait for someone to come feed us. This simple action encompasses the essential elements

of an experiment. We actively participate in changing the environment and then note the

results.

Recorded experiments date back to the first millennium B.C. Since then, experiments

have played an increasing role in science. This progression parallels the development of

more and more experimental tools. Today, it has expanded to exploring the universe with

elaborate sensor packages.


Chapter 3 Overview

Experiments serve many roles in scientific understanding (3.1). Some experiments lead

to new laws and theories (inductive experiments). Others test the validity of a conjecture

or theory (fallibilistic experiments) or illustrate something (conventionalistic experiments).

All three approaches are part of the scientific method. This method ascertains the validity

of an hypothesis through both experiment and theory.

Before performing an experiment, it is crucial to identify all of its variables (3.2). An

experimentalist manipulates the independent variable and records the effect on a dependent

variable. An extraneous variable cannot be controlled, but it affects the value of what is

measured to some extent. A parameter is fixed throughout the experiment.

An experiment also can be classified according to its purpose (3.3). This categorization

helps to clarify what may be needed for the experiment. Mathematical relationships between

variables are quantified by variational experiments. Validational experiments literally validate an hypothesis. Pedagogical experiments instruct and explorational experiments help

to develop new ideas.

Finally, there is a process to follow that helps to assure a successful experiment (3.4).

This process starts with developing an experimental objective and approach. It ends with

reporting the results along with their associated measurement uncertainties.


Application of Tools to Example Experiments

The essentials in planning an experiment are presented in Chapter 3. The tools for

planning an experiment (Table 1.1), specifically those related to stating the experimental

objective, classifying the experiment, and identifying the experimental variables, are applied

to the two example experiments in the following.


Fitness Tracking

• Objective: To explore the relationship between the power produced and the heart rate

response of a cyclist at different pedal cadences.

• Classification: This experiment resulted from the observation by a cyclist that high-torque, low-cadence efforts were considerably more fatiguing than low-torque, high-cadence ones at an identical power output. Because many extraneous variables are not

measured, and only one test subject is used, the goal of the experiment is to

identify possible relations among power, heart rate, and cadence. Therefore, this experiment

is classified as explorational.

• Variables: A total of five variables are recorded. The independent variables are power

output and pedal cadence. Heart rate is the only dependent variable. Extraneous

variables are the environmental temperature measured during the exercise session

and a self-assessment rating by the cyclist on a 1-to-5 scale of fatigue.

Microclimate Changes

• Objective: To quantify how wind speed and solar irradiance may affect pressure, temperature, and relative humidity in a microclimate environment.

• Classification: Although data are acquired under uncontrolled conditions, the data are

examined later in terms of different wind speeds and irradiances. Thus, wind speed

and irradiance are varied implicitly. Such an experiment is classified as variational.

• Variables: Five variables are measured. The independent variables are wind speed and

irradiance. Pressure, temperature, and relative humidity are dependent variables.
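The planning information listed above for the two example experiments can be kept in a small record so that variable roles are stated explicitly before any data are acquired. The following is only an illustrative sketch. The `ExperimentPlan` class, its field names, and the `role_of` helper are hypothetical and are not part of the text; the variable names and roles are those listed above.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class ExperimentPlan:
    """Hypothetical record of an experiment's planning information."""
    name: str
    classification: str
    # Maps a role ("independent", "dependent", "extraneous") to variable names.
    variables: Dict[str, List[str]] = field(default_factory=dict)

    def count(self) -> int:
        """Total number of variables across all roles."""
        return sum(len(names) for names in self.variables.values())

    def role_of(self, variable: str) -> str:
        """Return the role assigned to a variable, or raise if it is unlisted."""
        for role, names in self.variables.items():
            if variable in names:
                return role
        raise KeyError(f"{variable!r} is not listed in plan {self.name!r}")


fitness = ExperimentPlan(
    name="Fitness Tracking",
    classification="explorational",
    variables={
        "independent": ["power output", "pedal cadence"],
        "dependent": ["heart rate"],
        "extraneous": ["environmental temperature", "fatigue self-assessment"],
    },
)

microclimate = ExperimentPlan(
    name="Microclimate Changes",
    classification="variational",
    variables={
        "independent": ["wind speed", "irradiance"],
        "dependent": ["pressure", "temperature", "relative humidity"],
    },
)

for plan in (fitness, microclimate):
    print(f"{plan.name} ({plan.classification}): {plan.count()} variables")
```

Writing the roles down in this way makes it easy to check that every recorded quantity has been assigned exactly one role.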

3

Experiments

CONTENTS

3.1 Role of Experiments

3.2 The Experiment

3.3 Classification of Experiments

3.4 Plan for Successful Experimentation

3.5 Problems

...there is a diminishing return from increased theoretical complexity and ... in many

practical situations the problem is not sufficiently well defined to merit an elaborate

approach. If basic scientific understanding is to be improved, detailed experiments will be

required ...


Graham B. Wallis. 1980. International Journal of Multiphase Flow 6:97.

Measure what can be measured and make measurable what cannot be measured.

Galileo Galilei, c.1600.

3.1

Role of Experiments

Park [1] remarks that “science is the systematic enterprise of gathering knowledge about

the world and organizing and condensing that knowledge into testable laws and theories.”

Experiments play a pivotal role in this process. The general purpose of any experiment is

to gain a better understanding about the process under investigation and, ultimately, to

advance science.

The Greeks were the earliest civilization that attempted to gain a better understanding

of their world through observation and reasoning. Previous civilizations functioned within

their environment by observing its behavior and then adapting to it. It was the Greeks

who first went beyond the stage of simple observation and attempted to arrive at the

underlying physical causes of what they observed [3]. Two opposing schools emerged, both

of which still exist but in somewhat different forms. Plato (428-347 B.C.) advanced that the

highest degree of reality was that which men think by reasoning. He believed that better

understanding followed from rational thought alone. This is called rationalism. On the

contrary, Aristotle (384-322 B.C.) believed that the highest degree of reality was that which

man perceives with his senses. He argued that better understanding came through careful

observation. This is known as empiricism. Empiricism maintains that knowledge originates

from, and is limited to, concepts developed from sensory experience. Today it is recognized

that both approaches play important roles in advancing scientific understanding.


[Schematic: "The Real World" and "Our Concept of The Real World," connected by Experiment and Theory.]

FIGURE 3.1

The interplay between experiment and theory.

There are several different roles that experiments play in the process of scientific understanding. Harré [4], who discusses some of the landmark experiments in science, describes

three of the most important roles: inductivism, fallibilism, and conventionalism. Inductivism is the process whereby the laws and theories of nature are arrived at based upon

the facts gained from the experiments. In other words, a greater theoretical understanding

of nature is reached through induction. Taking the fallibilistic approach, experiments are

performed to test the validity of a conjecture. The conjecture is rejected if the experiments

show it to be false. The role of experiments in the conventionalistic approach is illustrative.

These experiments do not induce laws or disprove hypotheses but rather show us a more useful or illuminating description of nature. Testings fall into the category of conventionalistic

experiments.

All three of these approaches are elements of the scientific method. Credit for its formalization often is given to Francis Bacon (1561-1626). However, the seeds of experimental

science were sown earlier by Roger Bacon (c. 1220-1292), who was not related to Francis.

Roger is considered “the most celebrated scientist of the Middle Ages.” [5] He attempted

to incorporate experimental science into the university curriculum but was prohibited by

Pope Clement IV, leading him to write his findings in secrecy. Francis argued that our

understanding of nature could be increased through a disciplined and orderly approach in

answering scientific questions. This approach involved experiments, done in a systematic

and rigorous manner, with the goal of arriving at a broader theoretical understanding.

Using the approach of Francis Bacon’s time, first the results of positive experiments

and observations are gathered and considered. A preliminary hypothesis is formed. All rival

hypotheses are tested for possible validity. Hopefully, only one correct hypothesis remains.

This approach is the basis of the scientific method, a method that tests a scientific

hypothesis through experiment and theory. Today, this method is used mainly either to

validate a particular hypothesis or to determine the range of validity of a hypothesis. In

the end, it is the constant interplay between experiment and theory that leads to advancing

our understanding, as illustrated schematically in Figure 3.1. The concept of the real world

is developed from the data acquired through experiment and the theories constructed to

explain the observations. Often new experimental results improve theory and new theories

guide and suggest new experiments. The Ptolemaic (geocentric) model of the universe was

challenged by the observations of Jupiter’s moons made by Galileo in 1610. This supported

the Copernican (heliocentric) model of the solar system, which Copernicus had proposed

about 60 years earlier. In 1911, Einstein predicted that gravity shifts the frequency of light,

a result later incorporated into his general theory of relativity. Later,

in 1959, this was verified by the Pound-Rebka gravitational red-shift experiment. Through

the interplay between experiment and theory, a more refined and realistic concept of the

world is developed. Anthony Lewis summarizes it well, “The whole ethos of science is that

any explanation for the myriad mysteries in our universe is a theory, subject to challenge

and experiment. That is the scientific method.”

Gale [6], in his treatise on science, advances that there are two goals of science: explanation and understanding, and prediction and control. Science is not absolute; it evolves. Its

modern basis is the experimental method of proof. Explanation and understanding encompass statements that make causal connections. One example statement is that an increase

in the temperature of a perfect gas under constant volume causes an increase in its pressure. This usually leads to an algorithm or law that relates the variables involved in the

process under investigation. Prediction and control establish correlations between variables.

For the previous example, these would result in a correlation between pressure and temperature. Science is a process-driven field in which one true hypothesis remains after other

false hypotheses are disproved.

3.2

The Experiment

An experiment is an act in which one physically intervenes with a process under investigation and records the results. This is shown schematically in Figure 3.2. Examine this

definition more closely. In an experiment one physically changes, in an active manner,

the process being studied and then records the results of the change. Thus, computational

simulations are not experiments. Likewise, observation of a process alone is not an experiment. An astronomer charting the heavens does not alter the paths of planetary bodies.

He does not perform an experiment. Rather, he observes. An anatomist who dissects something does not physically change a process (although he may move anatomical parts during

dissection). Again, he observes. Yet, it is through the interactive process of observation-experimentation-hypothesizing that understanding advances. All elements of this process

are essential. Traditionally, theory explains existing results and predicts new results; experiments validate existing theory and gather results for refining theory.

[Schematic: a physical, active change is applied to a process, and the results are recorded.]

FIGURE 3.2

The experiment.

When conducting an experiment, it is imperative to identify all of the variables involved.

Variables are the physical quantities that are involved in the process under investigation

that can undergo change during the experiment and thereby affect the process. They are

classified as independent, dependent, and extraneous. An experimentalist manipulates

the independent variable(s) and records the effect on the dependent variable(s). An extraneous variable cannot be controlled, but it affects the value of what is measured to some extent.

A controlled experiment is one in which all of the variables involved in the process are

identified and can be controlled. In reality, almost all experiments have extraneous variables

and, therefore, strictly are not controlled. This inability to precisely control every variable

is the primary source of experimental uncertainty, which is considered in Chapter 18. The

measured variables are called measurands.

A variable that is either actively or passively fixed throughout the experiment is called

a parameter. Sometimes, a parameter can be a specific function of variables. For example,

the Reynolds number, which is a nondimensional number used frequently in fluid mechanics,