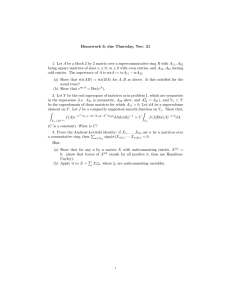

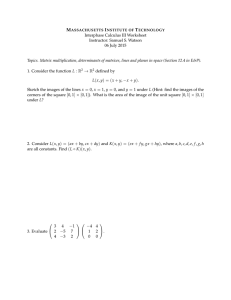

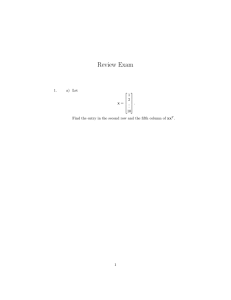

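Linear Algebra Worksheet

University of California, Berkeley

Scott Alan Carson

Econometrics is much easier if we use linear algebra. You do not have to be deeply versed in linear algebra to use it effectively in introductory econometrics. Below are some of the operations we will use throughout the semester.

1. Matrix dimensions

Given a system of equations

$$
\begin{aligned}
y_1 &= \beta_0 + \beta_1 x_1 + \beta_2 x_2 + \dots + \beta_k x_k + \varepsilon_1 \\
y_2 &= \beta_0 + \beta_1 x_1 + \beta_2 x_2 + \dots + \beta_k x_k + \varepsilon_2 \\
&\;\;\vdots \\
y_n &= \beta_0 + \beta_1 x_1 + \beta_2 x_2 + \dots + \beta_k x_k + \varepsilon_n
\end{aligned}
$$

The system can be represented in matrix and vector form as

$$
\begin{bmatrix} y_1 \\ y_2 \\ y_3 \\ \vdots \\ y_n \end{bmatrix}
=
\begin{bmatrix}
1 & x_{11} & x_{12} & \cdots & x_{1k} \\
1 & x_{21} & x_{22} & \cdots & x_{2k} \\
1 & x_{31} & x_{32} & \cdots & x_{3k} \\
\vdots & \vdots & \vdots & & \vdots \\
1 & x_{n1} & x_{n2} & \cdots & x_{nk}
\end{bmatrix}
\begin{bmatrix} \beta_0 \\ \beta_1 \\ \beta_2 \\ \vdots \\ \beta_k \end{bmatrix}
+
\begin{bmatrix} \varepsilon_1 \\ \varepsilon_2 \\ \varepsilon_3 \\ \vdots \\ \varepsilon_n \end{bmatrix}
$$

The left-hand side is an $n \times 1$ vector. The matrix on the right-hand side has $n$ rows; its $x$ entries form an $n \times k$ block, and the leading column of ones (for the intercept) brings it to $k+1$ columns. In matrix notation, each element's first subscript identifies its row and the second identifies its column. From here on, we will identify each matrix component only by its column subscript:

$$
\begin{bmatrix}
x_0 & x_1 & x_2 & \cdots & x_k \\
x_0 & x_1 & x_2 & \cdots & x_k \\
x_0 & x_1 & x_2 & \cdots & x_k \\
\vdots & \vdots & \vdots & & \vdots \\
x_0 & x_1 & x_2 & \cdots & x_k
\end{bmatrix}
$$

2. Matrix addition and subtraction

Given two matrices with the same dimensions, their elements can be added. Let

$$
A = \begin{bmatrix}
a_{11} & a_{12} & \cdots & a_{1m} \\
a_{21} & a_{22} & \cdots & a_{2m} \\
\vdots & \vdots & & \vdots \\
a_{n1} & a_{n2} & \cdots & a_{nm}
\end{bmatrix}
\quad \text{and} \quad
B = \begin{bmatrix}
b_{11} & b_{12} & \cdots & b_{1m} \\
b_{21} & b_{22} & \cdots & b_{2m} \\
\vdots & \vdots & & \vdots \\
b_{n1} & b_{n2} & \cdots & b_{nm}
\end{bmatrix}
$$

Then

$$
A + B = \begin{bmatrix}
a_{11} + b_{11} & a_{12} + b_{12} & \cdots & a_{1m} + b_{1m} \\
a_{21} + b_{21} & a_{22} + b_{22} & \cdots & a_{2m} + b_{2m} \\
\vdots & \vdots & & \vdots \\
a_{n1} + b_{n1} & a_{n2} + b_{n2} & \cdots & a_{nm} + b_{nm}
\end{bmatrix}
$$

Example

$$
A = \begin{bmatrix} 3 & 6 & 2 \\ 5 & 1 & 3 \\ 1 & 1 & 1 \end{bmatrix}
\quad \text{and} \quad
B = \begin{bmatrix} 1 & 2 & 0 \\ 0 & 1 & 2 \\ 1 & 0 & 1 \end{bmatrix}
\quad \Rightarrow \quad
A + B = \begin{bmatrix} 4 & 8 & 2 \\ 5 & 2 & 5 \\ 2 & 1 & 2 \end{bmatrix}
$$

Matrix subtraction is just taking the difference between corresponding elements.

3. Matrix multiplication

If two matrices are conformable, they can be multiplied. Conformability means the number of columns in the first matrix is equal to the number of rows in the second matrix. The dimension of the product matrix is the number of rows in the first matrix by the number of columns in the second matrix. If A is $2 \times 4$ and B is $2 \times 2$, the matrices are not conformable.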
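If A is $2 \times 4$ and B is $4 \times 2$, the matrices are conformable, and the dimension of the product is $2 \times 2$. Given the matrices

$$
A = \begin{bmatrix}
a_{11} & a_{12} & \cdots & a_{1m} \\
a_{21} & a_{22} & \cdots & a_{2m} \\
\vdots & \vdots & & \vdots \\
a_{n1} & a_{n2} & \cdots & a_{nm}
\end{bmatrix}
\quad \text{and} \quad
B = \begin{bmatrix}
b_{11} & b_{12} & \cdots & b_{1m} \\
b_{21} & b_{22} & \cdots & b_{2m} \\
\vdots & \vdots & & \vdots \\
b_{n1} & b_{n2} & \cdots & b_{nm}
\end{bmatrix}
$$

conformable matrices are multiplied with a series of inner products, one for each element of the product matrix. For example,

$$
A = \begin{bmatrix}
a_{11} & a_{12} & a_{13} & a_{14} \\
a_{21} & a_{22} & a_{23} & a_{24} \\
a_{31} & a_{32} & a_{33} & a_{34} \\
a_{41} & a_{42} & a_{43} & a_{44}
\end{bmatrix}
\quad \text{and} \quad
B = \begin{bmatrix}
b_{11} & b_{12} & b_{13} & b_{14} \\
b_{21} & b_{22} & b_{23} & b_{24} \\
b_{31} & b_{32} & b_{33} & b_{34} \\
b_{41} & b_{42} & b_{43} & b_{44}
\end{bmatrix}
$$

The product matrix is

$$
D = \begin{bmatrix}
d_{11} & d_{12} & d_{13} & d_{14} \\
d_{21} & d_{22} & d_{23} & d_{24} \\
d_{31} & d_{32} & d_{33} & d_{34} \\
d_{41} & d_{42} & d_{43} & d_{44}
\end{bmatrix}
$$

The subscript on each element of the product matrix tells us how to construct its inner product. The subscript of the $d_{11}$ element indicates that the first row of the pre-multiplying matrix A is multiplied by the first column of the post-multiplying matrix B.

$$
\begin{aligned}
d_{11} &= a_{11}b_{11} + a_{12}b_{21} + a_{13}b_{31} + a_{14}b_{41} \\
d_{12} &= a_{11}b_{12} + a_{12}b_{22} + a_{13}b_{32} + a_{14}b_{42} \\
&\;\;\vdots \\
d_{44} &= a_{41}b_{14} + a_{42}b_{24} + a_{43}b_{34} + a_{44}b_{44}
\end{aligned}
$$

Note that in every term on the right-hand side, the row subscript of the $a$ element and the column subscript of the $b$ element together match the subscript of the $d$ element on the left-hand side.

A quantitative example is

$$
A = \begin{bmatrix} 2 & 3 \\ 4 & 1 \end{bmatrix}
\quad \text{and} \quad
B = \begin{bmatrix} 1 & 3 \\ 1 & 2 \end{bmatrix}
$$

Each matrix is $2 \times 2$, so the matrices are conformable because the number of columns in the first matrix is equal to the number of rows in the second matrix. The dimension of the product matrix is $2 \times 2$ because the number of rows in the first matrix is 2 and the number of columns in the second matrix is 2. Taking the inner products gives

$$
\begin{aligned}
d_{11} &= 2 \times 1 + 3 \times 1 = 2 + 3 = 5 \\
d_{12} &= 2 \times 3 + 3 \times 2 = 6 + 6 = 12 \\
d_{21} &= 4 \times 1 + 1 \times 1 = 4 + 1 = 5 \\
d_{22} &= 4 \times 3 + 1 \times 2 = 12 + 2 = 14
\end{aligned}
$$

This means that the product matrix D is

$$
D = \begin{bmatrix} d_{11} & d_{12} \\ d_{21} & d_{22} \end{bmatrix}
= \begin{bmatrix} 5 & 12 \\ 5 & 14 \end{bmatrix}
$$

For any square $n \times n$ matrix A, the following statements are equivalent.

1. A is invertible.
2. A is non-singular.
3. det A is nonzero.
4. A is of rank n.

Determinant

So, we need to be able to calculate a determinant.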
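A determinant is a value that can be calculated from a square matrix. Its most important use in econometrics is in calculating the inverse of a matrix and, therefore, the coefficients in a linear system of equations. There are various ways to define the determinant of a square matrix. For large matrices (larger than three by three), the typical way of calculating a determinant is a Laplace expansion. We leave those larger determinants to Laplace expansion and computers, but for smaller matrices, determinants are calculated by sweeping the matrix: products along the diagonals running down to the right are added, and products along the diagonals running down to the left are subtracted. For example, given a two by two matrix,

$$
|A| = \begin{vmatrix} a_{11} & a_{12} \\ a_{21} & a_{22} \end{vmatrix}
= a_{11}a_{22} - a_{12}a_{21}
$$

Given the matrix $\begin{bmatrix} 5 & 12 \\ 5 & 14 \end{bmatrix}$, the determinant is

$$
|A| = \begin{vmatrix} 5 & 12 \\ 5 & 14 \end{vmatrix}
= 5 \times 14 - 12 \times 5 = 70 - 60 = 10
$$

For a three by three matrix, the determinant is calculated in similar fashion.

$$
|A| = \begin{vmatrix}
a_{11} & a_{12} & a_{13} \\
a_{21} & a_{22} & a_{23} \\
a_{31} & a_{32} & a_{33}
\end{vmatrix}
= a_{11}a_{22}a_{33} + a_{12}a_{23}a_{31} + a_{13}a_{21}a_{32}
- a_{12}a_{21}a_{33} - a_{11}a_{23}a_{32} - a_{13}a_{22}a_{31}
$$

For example,

$$
\begin{aligned}
|A| = \begin{vmatrix} 1 & 5 & 3 \\ 2 & 1 & 3 \\ 1 & 2 & 1 \end{vmatrix}
&= 1 \cdot 1 \cdot 1 + 5 \cdot 3 \cdot 1 + 3 \cdot 2 \cdot 2 - 5 \cdot 2 \cdot 1 - 1 \cdot 3 \cdot 2 - 3 \cdot 1 \cdot 1 \\
&= 1 + 15 + 12 - 10 - 6 - 3 = 28 - 19 = 9
\end{aligned}
$$

Inverse

To estimate the vector of regression coefficients, it is necessary to calculate a matrix inverse. There are various ways to calculate an inverse, and econometricians and applied mathematicians almost never calculate a matrix inverse by hand: there are just too many moving parts, and a computer is more accurate. Nevertheless, it is useful to understand how a matrix inverse is calculated and its role in estimating the vector of regression coefficients.

$$
A^{-1} = \frac{1}{|A|}\,\operatorname{adj}(A)
$$

This is illustrated by breaking it down into steps and with an example. The example is the same as Gujarati and Porter, p. 847. Let the matrix equal

$$
A = \begin{bmatrix} 1 & 2 & 3 \\ 5 & 7 & 4 \\ 2 & 1 & 3 \end{bmatrix}
$$

a. Calculate the determinant.

$$
|A| = 1 \cdot 7 \cdot 3 + 2 \cdot 4 \cdot 2 + 3 \cdot 5 \cdot 1 - 2 \cdot 5 \cdot 3 - 1 \cdot 4 \cdot 1 - 3 \cdot 7 \cdot 2 = 21 + 16 + 15 - 30 - 4 - 42 = -24
$$

b. Obtain the cofactor matrix.

$$
C = \begin{bmatrix}
\begin{vmatrix} 7 & 4 \\ 1 & 3 \end{vmatrix} & -\begin{vmatrix} 5 & 4 \\ 2 & 3 \end{vmatrix} & \begin{vmatrix} 5 & 7 \\ 2 & 1 \end{vmatrix} \\[2ex]
-\begin{vmatrix} 2 & 3 \\ 1 & 3 \end{vmatrix} & \begin{vmatrix} 1 & 3 \\ 2 & 3 \end{vmatrix} & -\begin{vmatrix} 1 & 2 \\ 2 & 1 \end{vmatrix} \\[2ex]
\begin{vmatrix} 2 & 3 \\ 7 & 4 \end{vmatrix} & -\begin{vmatrix} 1 & 3 \\ 5 & 4 \end{vmatrix} & \begin{vmatrix} 1 & 2 \\ 5 & 7 \end{vmatrix}
\end{bmatrix}
= \begin{bmatrix} 17 & -7 & -9 \\ -3 & -3 & 3 \\ -13 & 11 & -3 \end{bmatrix}
$$

c. Transpose the cofactor matrix.

$$
\operatorname{adj}(A) = C^{T} = \begin{bmatrix} 17 & -3 & -13 \\ -7 & -3 & 11 \\ -9 & 3 & -3 \end{bmatrix}
$$

d. Multiply the transposed cofactor matrix by the reciprocal of the determinant.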
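$$
A^{-1} = -\frac{1}{24}
\begin{bmatrix} 17 & -3 & -13 \\ -7 & -3 & 11 \\ -9 & 3 & -3 \end{bmatrix}
= \begin{bmatrix}
-\frac{17}{24} & \frac{3}{24} & \frac{13}{24} \\[1ex]
\frac{7}{24} & \frac{3}{24} & -\frac{11}{24} \\[1ex]
\frac{9}{24} & -\frac{3}{24} & \frac{3}{24}
\end{bmatrix}
$$

4. Transpose

Because of the dimension requirements in linear algebra, we generally cannot square a matrix by multiplying it by itself. Instead, we multiply it by its mirror image, the matrix transpose. Stated precisely, the element in row $i$ and column $j$ of $A^{T}$ is

$$
a^{T}_{ij} = a_{ji}
$$

In other words, rows become columns and columns become rows. For example, given the matrix

$$
A = \begin{bmatrix}
a_{11} & a_{12} & a_{13} \\
a_{21} & a_{22} & a_{23} \\
a_{31} & a_{32} & a_{33}
\end{bmatrix}
\qquad
A^{T} = \begin{bmatrix}
a_{11} & a_{21} & a_{31} \\
a_{12} & a_{22} & a_{32} \\
a_{13} & a_{23} & a_{33}
\end{bmatrix}
$$

5. Idempotent

$$
A = AA
$$

6. Orthogonal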
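The worked examples in section 3 (the $2 \times 2$ product, its determinant, and the Gujarati and Porter inverse) can be checked numerically. What follows is a minimal sketch in plain Python and is not part of the original worksheet. The function names (`matmul`, `det`, `inverse`, and the helpers) are illustrative assumptions, integer entries are assumed, and the determinant is computed by Laplace expansion along the first row rather than by the diagonal sweep used in the text.

```python
from fractions import Fraction


def matmul(a, b):
    """Multiply two conformable matrices stored as lists of rows."""
    if len(a[0]) != len(b):
        raise ValueError("not conformable: columns of a must equal rows of b")
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))]
            for i in range(len(a))]


def minor(m, i, j):
    """Return m with row i and column j removed."""
    return [row[:j] + row[j + 1:] for r, row in enumerate(m) if r != i]


def det(m):
    """Determinant by Laplace expansion along the first row."""
    if not m:  # the empty (0 x 0) matrix has determinant 1
        return 1
    return sum((-1) ** j * m[0][j] * det(minor(m, 0, j))
               for j in range(len(m)))


def transpose(m):
    """Rows become columns and columns become rows."""
    return [list(col) for col in zip(*m)]


def cofactor_matrix(m):
    """Signed minors: element (i, j) is (-1)^(i+j) times the minor's determinant."""
    n = len(m)
    return [[(-1) ** (i + j) * det(minor(m, i, j)) for j in range(n)]
            for i in range(n)]


def inverse(m):
    """Inverse as adj(m) / det(m), kept exact with Fraction (integer entries assumed)."""
    d = det(m)
    if d == 0:
        raise ValueError("matrix is singular")
    adj = transpose(cofactor_matrix(m))
    return [[Fraction(x, d) for x in row] for row in adj]


# The 2 x 2 multiplication example.
A = [[2, 3], [4, 1]]
B = [[1, 3], [1, 2]]
D = matmul(A, B)
print(D)       # [[5, 12], [5, 14]]
print(det(D))  # 10

# The 3 x 3 inverse example (Gujarati and Porter, p. 847).
M = [[1, 2, 3], [5, 7, 4], [2, 1, 3]]
print(det(M))  # -24
M_inv = inverse(M)
print(matmul(M, M_inv) == [[1, 0, 0], [0, 1, 0], [0, 0, 1]])  # True
```

Using `Fraction` keeps the entries of the inverse exact, so multiplying the matrix by its inverse returns the identity matrix with no rounding error. In applied work a numerical library would normally take the place of these hand-written functions.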