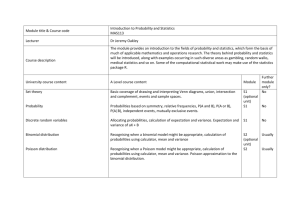

Notes for Math 450 Lecture Notes 3


Notes for Math 450

Lecture Notes 3

Renato Feres

1 Moments of Random Variables

We introduce some of the standard parameters associated to a random variable.

The two most common are the expected value and the variance. These are

special cases of moments of a probability distribution. Before defining these

quantities, it may be helpful to recall some basic concepts associated to random

variables.

1.1 Discrete and continuous random variables

A random variable X is said to be discrete if, with probability one, it can take

only a finite or countably infinite number of possible values. That is, there is a

set $\{x_1, x_2, \dots\} \subseteq \mathbb{R}$ such that

$$\sum_{k=1}^{\infty} P(X = x_k) = 1.$$

X is a continuous random variable if there exists a function $f_X : \mathbb{R} \to [0, \infty)$
(not necessarily continuous) such that, for all x,

$$P(X \le x) = \int_{-\infty}^{x} f_X(s)\,ds.$$

The probability density function, or PDF, $f_X(x)$, must satisfy:

1. $f_X(x) \ge 0$ for all $x \in \mathbb{R}$;

2. $\int_{-\infty}^{\infty} f_X(x)\,dx = 1$;

3. $P(a \le X \le b) = \int_a^b f_X(x)\,dx$ for any $a \le b$.

If $f_X(x)$ is continuous, it follows from item 3 and the fundamental theorem
of calculus that

$$f_X(x) = \lim_{h \to 0} \frac{P(x \le X \le x + h)}{h}.$$

The cumulative distribution function of X is defined (both for continuous and

discrete random variables) as:

FX (x) = P (X ≤ x), for all x.

In terms of the probability density function, $F_X(x)$ takes the form

$$F_X(x) = P(X \le x) = \int_{-\infty}^{x} f_X(z)\,dz.$$

Proposition 1.1 (PDF of a linear transformation) Let X be a continuous random variable with PDF $f_X(x)$ and cumulative distribution function $F_X(x)$,
and let $Y = aX + b$ with $a \neq 0$. Then the PDF of Y is given by

$$f_Y(y) = \frac{1}{|a|}\, f_X((y - b)/a).$$

Proof. First assume that a > 0. Since Y ≤ y if and only if X ≤ (y − b)/a, we

have

FY (y) = P (Y ≤ y) = P (X ≤ (y − b)/a) = FX ((y − b)/a).

Differentiating both sides with respect to y, we find

$$f_Y(y) = \frac{1}{a}\, f_X((y - b)/a).$$

The case a < 0 is left as an exercise.

This proposition is a special case of the following.

Proposition 1.2 (PDF of a transformation of X) Let X be a continuous

random variable with PDF fX (x) and y = g(x) a differentiable one-to-one function with inverse x = h(y). Define a new random variable Y = g(X). Then Y

is a continuous random variable with PDF fY (y) given by

$$f_Y(y) = f_X(h(y))\,|h'(y)|.$$

The higher dimensional generalization of the proposition was already mentioned

in the second set of lecture notes.
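As an illustration of how the transformation formula is used (these two worked cases are added here as a sketch and are not part of the original notes), take first $g(x) = ax + b$ with $a \neq 0$. Then $h(y) = (y - b)/a$ and $h'(y) = 1/a$, so

$$f_Y(y) = f_X(h(y))\,|h'(y)| = \frac{1}{|a|}\, f_X\!\left(\frac{y - b}{a}\right),$$

which is the linear case. As a second case, assume X is uniform on (0, 1), so that $f_X(x) = 1$ there, and let $Y = -\log X$. Then $h(y) = e^{-y}$ for $y > 0$ and

$$f_Y(y) = f_X(e^{-y})\,\left|-e^{-y}\right| = e^{-y}, \qquad y > 0,$$

so Y has the exponential density with parameter 1.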

1.2 Expectation

The most basic parameter associated to a random variable is its expected value

or mean. Fix a probability space (S, F, P ) and let X : S → R be a random

variable.

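Definition 1.1 (Expectation) The expectation or mean value of the random
variable X is defined as

$$E[X] = \begin{cases} \sum_{i=1}^{\infty} x_i P(X = x_i) & \text{if } X \text{ is discrete} \\[4pt] \int_{-\infty}^{\infty} x f_X(x)\,dx & \text{if } X \text{ is continuous.} \end{cases}$$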

Example 1.1 (A game of dice) A game consists in tossing a die and receiving a payoff X equal to $n for n pips. It is natural to define the fair price to

play one round of the game as being the expected value of X. If you could play

the game for less than E[X], you would make a sure profit by playing it long

enough, and if you pay more you are sure to lose money in the long run. The

fair price is then

$$E[X] = \sum_{i=1}^{6} \frac{i}{6} = \frac{21}{6} = \$3.50.$$

Example 1.2 (Waiting in line) Let us suppose that the waiting time to be

served at the post office at a particular location and time of day is known to

follow an exponential distribution with parameter λ = 6 (in units 1/hour).

What is the expected time of wait? We have now a continuous random variable

T with probability density function $f_T(t) = \lambda e^{-\lambda t}$ for $t \ge 0$. The expected value is easily
calculated to be:

$$E[T] = \int_0^{\infty} t \lambda e^{-\lambda t}\,dt = \frac{1}{\lambda}.$$

Therefore, the mean time of wait is one-sixth of an hour, or 10 minutes.
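For readers who want the intermediate step, the integral can be evaluated by one integration by parts (a routine computation, spelled out here for convenience):

$$\int_0^{\infty} t \lambda e^{-\lambda t}\,dt = \Big[-t e^{-\lambda t}\Big]_0^{\infty} + \int_0^{\infty} e^{-\lambda t}\,dt = 0 + \frac{1}{\lambda}.$$

With $\lambda = 6$ per hour this gives $1/6$ hour, in agreement with the 10 minutes stated above.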

It is a bit inconvenient to have to distinguish the continuous and discrete

cases every time we refer to the expected value of a random variable. For this

reason, we need a uniform notation that represents all cases. We will use the

notation for the Lebesgue integral, introduced in the appendix of the previous

set of notes. (You do not need to know about the Lebesgue integral. We are

only using the notation.) So we will often denote the expected value of a random

variable X, of any type, by

$$E[X] = \int_S X(s)\,dP(s).$$

Sometimes $dP(s)$ is written $P(ds)$. The notation should be understood as
follows. Suppose that we decompose the range of values of X into intervals
$(x_i, x_i + h]$, where h is a small step-size. Then

$$E[X] \sim \sum_i x_i P(X \in (x_i, x_i + h]).$$

For discrete random variables, the same integral represents the sum in definition

1.1.

Here are a few simple properties of expectations.

Proposition 1.3 Let X and Y be random variables on the probability space

(S, F, P ). Then:

1. If $X \ge 0$ then $E[X] \ge 0$.

2. For any real number a, $E[aX] = aE[X]$.

3. $E[X + Y] = E[X] + E[Y]$.

4. If X is constant equal to a, then $E[a] = a$.

5. $E[XY]^2 \le E[X^2]E[Y^2]$, with equality if and only if X and Y are
linearly dependent, i.e., there are constants a, b, not both zero, such that
$P(aX + bY = 0) = 1$. (This is the Cauchy-Schwarz inequality.)

6. If X and Y are independent and both $E[|X|]$ and $E[|Y|]$ are finite, then
$E[XY] = E[X]E[Y]$.

It is not difficult to obtain from the definition that if $Y = g(X)$ for some
function $g(x)$ and X is a continuous random variable with probability density
function $f_X(x)$, then

$$E[g(X)] = \int_S g(X(s))\,dP(s) = \int_{-\infty}^{\infty} g(x) f_X(x)\,dx.$$

1.3 Variance

The variance of a random variable X refines our knowledge of the probability

distribution of X by giving a broad measure of how X is dispersed around its

mean.

Definition 1.2 (Variance) Let (S, F, P) be a probability space and consider
a random variable X : S → R with expectation m = E[X]. (We assume that
m exists and is finite.) We define the variance of X as the mean square of the
difference X − m; that is,

$$\mathrm{Var}(X) = E[(X - m)^2] = \int_S (X(s) - m)^2\,dP(s).$$

The standard deviation of X is defined as $\sigma(X) = \sqrt{\mathrm{Var}(X)}$.

Example 1.3 Let D be the determinant of the matrix

$$D = \det \begin{pmatrix} X & Y \\ Z & T \end{pmatrix},$$

where X, Y, Z, T are independent random variables uniformly distributed in
[0, 1]. We wish to find E[D] and Var(D). The probability space of this problem
is $S = [0, 1]^4$, F the σ-algebra generated by 4-dimensional parallelepipeds, and
P the 4-dimensional volume obtained by integrating $dV = dx\,dy\,dz\,dt$. Thus

$$E[D] = \int_0^1\!\int_0^1\!\int_0^1\!\int_0^1 (xt - zy)\,dx\,dy\,dz\,dt = 0.$$

The variance is

$$\mathrm{Var}(D) = \int_0^1\!\int_0^1\!\int_0^1\!\int_0^1 (xt - zy)^2\,dx\,dy\,dz\,dt = \frac{7}{72}.$$
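The values E[D] = 0 and Var(D) = 7/72 can be checked by simulation. The following is a minimal sketch, not part of the original notes; the sample size n and the variable names are arbitrary choices made for this illustration.

```matlab
% Monte Carlo check of E[D] and Var(D) for D = X*T - Z*Y,
% with X, Y, Z, T independent and uniform on [0,1].
n = 1000000;                  % number of sample matrices (arbitrary choice)
A = rand(n, 4);               % columns hold X, Y, Z, T
D = A(:,1).*A(:,4) - A(:,3).*A(:,2);
meanD = mean(D);              % sample mean, should be close to 0
varD = mean((D - meanD).^2);  % sample variance, should be close to 7/72
fprintf('mean = %.4f, variance = %.4f, 7/72 = %.4f\n', meanD, varD, 7/72);
```

The sample mean and variance fluctuate from run to run, with an error that shrinks as n grows.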

Some of the general properties of variance are enumerated in the next proposition. They can be derived from the definitions by simple calculations. The

details are left as exercises.

Proposition 1.4 Let X, Y be random variables on a probability space (S, F, P ).

1. $\mathrm{Var}(X) = E[X^2] - E[X]^2$.

2. $\mathrm{Var}(aX) = a^2\,\mathrm{Var}(X)$, where a is any real constant.

3. If X and Y have finite variance and are independent, then

Var(X + Y ) = Var(X) + Var(Y ).

The variance of a sum of any number of independent random variables now
follows from the above results. The next proposition implies that the standard
deviation of the arithmetic mean of independent random variables $X_1, \dots, X_n$
decreases like $1/\sqrt{n}$.

Proposition 1.5 Let $X_1, X_2, \dots, X_n$ be independent random variables having
the same standard deviation σ. Denote their sum by

$$S_n = X_1 + \cdots + X_n.$$

Then

$$\mathrm{Var}\!\left(\frac{S_n}{n}\right) = \frac{\sigma^2}{n}.$$
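The computation behind this proposition is short. Using the scaling property of the variance (item 2 of proposition 1.4) and then additivity for independent variables (item 3),

$$\mathrm{Var}\!\left(\frac{S_n}{n}\right) = \frac{1}{n^2}\,\mathrm{Var}(S_n) = \frac{1}{n^2}\sum_{i=1}^{n} \mathrm{Var}(X_i) = \frac{n\sigma^2}{n^2} = \frac{\sigma^2}{n},$$

so the standard deviation of the arithmetic mean is $\sigma/\sqrt{n}$.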

Mean and variance are examples of moments and central moments of probability distributions. These are defined as follows.

Definition 1.3 (Moments) The moment of order k = 1, 2, 3, . . . of a random
variable X is defined as

$$E[X^k] = \int_S X(s)^k\,dP(s).$$

The central moment of order k of the random variable with mean µ is

$$E[(X - \mu)^k] = \int_S (X(s) - \mu)^k\,dP(s).$$

The meaning of the central moments, and the variance in particular, is easier

to interpret using Chebyshev’s inequality. Broadly speaking, this inequality says

that if the central moments are small, then the random variable cannot deviate

much from its mean.

Theorem 1.1 (Chebyshev inequality) Let (S, F, P) be a probability space,
X : S → R a random variable, and $\epsilon > 0$ a fixed number.

1. Let $X \ge 0$ (with probability 1) and let $k \in \mathbb{N}$. Then

$$P(X \ge \epsilon) \le E[X^k]/\epsilon^k.$$

2. Let X be a random variable with finite expectation m. Then

$$P(|X - m| \ge \epsilon) \le \mathrm{Var}(X)/\epsilon^2.$$

3. Let X be a random variable with finite expectation m and finite standard
deviation σ > 0. For any c > 0 we have

$$P(|X - m| \ge c\sigma) \le 1/c^2.$$

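Proof. The third inequality follows from the second by taking $\epsilon = c\sigma$, and the
second follows from the first by substituting $|X - m|$ for X and taking k = 2. Thus we only need
to prove the first. This is done by noting that

$$\begin{aligned}
E[X^k] &= \int_S X(s)^k\,dP(s) \\
&\ge \int_{\{s \in S : X(s) \ge \epsilon\}} X(s)^k\,dP(s) \\
&\ge \int_{\{s \in S : X(s) \ge \epsilon\}} \epsilon^k\,dP(s) \\
&= \epsilon^k P(X \ge \epsilon).
\end{aligned}$$

So we get $P(X \ge \epsilon) \le E[X^k]/\epsilon^k$ as claimed.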

Example 1.4 Chebyshev's inequality, in the form of inequality 3 in the theorem, implies that if X is a random variable with finite mean m and finite
variance $\sigma^2$, then the probability that X lies in the interval $(m - 3\sigma, m + 3\sigma)$ is
at least $1 - 1/3^2 = 8/9$.

Example 1.5 (Tosses of a fair coin) We make N = 1000 tosses of a fair coin
and denote by $S_N$ the number of heads. Notice that $S_N = X_1 + X_2 + \cdots + X_N$,
where $X_i$ is 1 if the i-th toss obtains 'head,' and 0 if 'tail.' We assume that the $X_i$
are independent and $P(X_i = 0) = P(X_i = 1) = 1/2$. Then $E[S_N] = N/2 = 500$
and $\mathrm{Var}(S_N) = N/4 = 250$. From the second inequality in theorem 1.1 we have that
$P(450 \le S_N \le 550)$ is at least $1 - 250/50^2 = 0.9$.

A better estimate of the dispersion around the mean will be provided by the

central limit theorem, discussed later.
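To see how conservative the Chebyshev bound is in the coin-tossing example, one can simulate the experiment. The sketch below is an illustration added here, not part of the original notes; the number of repetitions (trials) is an arbitrary choice.

```matlab
% Compare the Chebyshev lower bound for P(450 <= S_N <= 550)
% with the frequency observed in repeated simulated experiments.
N = 1000;                          % tosses per experiment
trials = 2000;                     % number of repeated experiments (arbitrary)
tosses = rand(N, trials) < 0.5;    % each column is one experiment; 1 means head
S = sum(tosses, 1);                % number of heads in each experiment
frac_inside = mean(S >= 450 & S <= 550);
chebyshev_bound = 1 - (N/4)/50^2;  % lower bound from theorem 1.1, part 2
fprintf('observed fraction = %.4f, Chebyshev bound = %.4f\n', ...
        frac_inside, chebyshev_bound);
```

In such runs the observed fraction is typically much closer to 1 than the guaranteed 0.9, which is why a sharper estimate is worth having.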


2 The Laws of Large Numbers

The frequentist interpretation of probability rests on the intuitive idea that if

we perform a large number of independent trials of an experiment, yielding

numerical outcomes x1 , x2 , . . . , then the averages (x1 + · · · + xn )/n converge to

some value x̄ as n grows. For example, if we toss a fair coin n times and let

xi be 0 or 1 when the coin gives, respectively, ‘head’ or ‘tail,’ then the running

averages should converge to the relative frequency of ‘tails,’ which is 0.5. We

discuss now two theorems that make this idea precise.

2.1 The weak law of large numbers

Given a sequence X1 , X2 , . . . of random variables, we write

$$S_n = X_1 + \cdots + X_n.$$

Theorem 2.1 (Weak law of large numbers) Let (S, F, P) be a probability
space and let $X_1, X_2, \dots$ be a sequence of independent random variables with
means $m_i$ and variances $\sigma_i^2$. We assume that the means are finite and there is
a constant L such that $\sigma_i^2 \le L$ for all i. Then, for every $\epsilon > 0$,

$$\lim_{n \to \infty} P\left(\left|\frac{S_n - E[S_n]}{n}\right| \ge \epsilon\right) = 0.$$

If, in particular, $E[X_i] = m$ for all i, then

$$\lim_{n \to \infty} P\left(\left|\frac{S_n}{n} - m\right| \ge \epsilon\right) = 0.$$

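Proof. The independence of the random variables implies

$$\mathrm{Var}(S_n) = \mathrm{Var}(X_1) + \cdots + \mathrm{Var}(X_n) \le nL.$$

Chebyshev's (second) inequality applied to $S_n$ then gives

$$P\left(\left|\frac{S_n - E[S_n]}{n}\right| \ge \epsilon\right) = P(|S_n - E[S_n]| \ge n\epsilon) \le \frac{\mathrm{Var}(S_n)}{(n\epsilon)^2} \le \frac{L}{n\epsilon^2}.$$

This proves the theorem.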

For example, let $X_1, X_2, \dots$ be a sequence of independent, identically distributed random variables with two outcomes: 1 with probability p and 0 with
probability 1 − p. Then the weak law of large numbers says that the arithmetic
mean $S_n/n$ converges to $p = E[X_i]$ in the sense that, for any $\epsilon > 0$, the probability that $S_n/n$ lies outside the interval $[p - \epsilon, p + \epsilon]$ goes to zero as n goes to ∞.

2.2 The strong law of large numbers

The weak law of large numbers, applied to a sequence Xi ∈ {0, 1} of coin tosses,

says that Sn /n must lie in an arbitrarily small interval around 1/2 with high

probability (arbitrarily close to 1) if n is taken big enough. A stronger statement

would be to say that, with probability one, a sequence of coin tosses yields a

sum Sn such that Sn /n actually converges to 1/2.

To explain the meaning of the stronger claim, let us be more explicit and

view the random variables as functions Xi : S → R on the same probability

space (S, F, P ). Then, for each s ∈ S we can consider the sample sequence

X1 (s), X2 (s), . . . , as well as the arithmetic averages Sn (s)/n, and ask whether

Sn (s)/n (an ordinary sequence of numbers) actually converges to 1/2. The

strong law of large numbers states that the set of s for which this holds is an

event of probability 1. This is a much more subtle result than the weak law,

and we will be content with simply stating the general theorem.

Theorem 2.2 (Strong law of large numbers) Let (S, F, P) be a probability
space and let $X_1, X_2, \dots$ be independent random variables defined on S with finite means and
variances satisfying

$$\sum_{i=1}^{\infty} \frac{\mathrm{Var}(X_i)}{i^2} < \infty.$$

Then, there is an event E ∈ F of probability 1 such that for all s ∈ E,

$$\frac{S_n(s)}{n} - \frac{E[S_n]}{n} \to 0$$

as n → ∞. In particular, if in addition all the means are equal to m then for
all s in a subset of S of probability 1,

$$\lim_{n \to \infty} \frac{S_n(s)}{n} = m.$$

3 The Central Limit Theorem

The reason why the normal distribution arises so often is the central limit theorem. We state this theorem here without proof, although experimental evidence

for its validity will be given in a number of examples.

Let (S, F, P) be a probability space and $X_1, X_2, \dots$ be independent random
variables defined on S. Assume that the $X_i$ have a common distribution with
finite expectation m and finite nonzero variance $\sigma^2$. Define the sum

$$S_n = X_1 + X_2 + \cdots + X_n.$$

Theorem 3.1 (The central limit theorem) If $X_1, X_2, \dots$ are independent, identically distributed
random variables with mean µ and finite nonzero variance $\sigma^2$, then the random variable

$$Z_n = \frac{S_n - n\mu}{\sigma\sqrt{n}}$$

converges in distribution to a standard normal random variable. In other words,

$$P(Z_n \le z) \to \Phi(z) = \frac{1}{\sqrt{2\pi}} \int_{-\infty}^{z} e^{-\frac{1}{2}u^2}\,du$$

as n → ∞.

In a typical application of the central limit theorem, one considers a random
variable X with mean µ and variance $\sigma^2$, and a sequence $X_1, X_2, \dots$ of independent
realizations of X. Let $\bar{X}_n$ be the sample mean, $\bar{X}_n = (X_1 + \cdots + X_n)/n$.
Then the limiting distribution of the random variable $Z_n = (\bar{X}_n - \mu)/(\sigma/\sqrt{n})$
is the standard normal distribution.

We saw in Lecture Notes 2 that if random variables X and Y are independent and have probability density functions $f_X$ and $f_Y$, then the sum X + Y
has probability density function equal to the convolution $f_X * f_Y$. Therefore,
one way to observe the convergence to the normal distribution claimed in the
central limit theorem is to consider the convolution powers $f^{n*} = f * \cdots * f$ of
the common probability density function f with itself n times and see that it approaches a normal distribution. (Subtracting µ and dividing by $\sigma/\sqrt{n}$ changes
the probability density function in the simple way described in proposition 1.1.)

[Figure 1 panels: f, f ∗ f, f ∗ f ∗ f, f ∗ f ∗ f ∗ f]

Figure 1: Convolution powers of the function f(x) = 1 over [−1, 1]. By the
central limit theorem, after centering and re-scaling (not done in the figure),
$f^{n*}$ approaches a normal distribution.
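The convolution powers of f(x) = 1 over [−1, 1] can be approximated numerically with the built-in conv function. This is a sketch of one possible way to do it, not necessarily how the figure was produced; the grid size m and the variable names are choices made for this illustration.

```matlab
% Numerical convolution powers of f(x) = 1 on [-1,1].
m = 200;                          % number of grid cells covering [-1,1] (arbitrary)
dx = 2/m;                         % grid spacing
f = ones(1, m);                   % f sampled at the midpoints of the m cells
f2 = conv(f, f)*dx;               % approximates f*f, supported on [-2,2]
f3 = conv(f2, f)*dx;              % approximates f*f*f, supported on [-3,3]
f4 = conv(f3, f)*dx;              % approximates f*f*f*f, supported on [-4,4]
x4 = linspace(-4, 4, numel(f4));  % approximate grid for plotting f4
peaks = [max(f) max(f2) max(f3) max(f4)];
fprintf('peak values: %.3f %.3f %.3f %.3f\n', peaks);
% plot(x4, f4) draws the bell-shaped fourth convolution power
```

Each discrete convolution is multiplied by dx so that the sum approximates the convolution integral.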

Example 3.1 (Die tossing) Consider the experiment of tossing a fair die n
times. Let $X_i$ be the number obtained in the i-th toss and $S_n = X_1 + \cdots + X_n$.
The $X_i$ are independent and have a common discrete distribution with mean µ = 3.5
and variance $\sigma^2 = 35/12$. Assuming n = 1000, by the central limit theorem $S_n$ has
approximately the normal distribution with mean $\mu(S_n) = 3500$ and standard
deviation $\sigma(S_n) = \sqrt{35 \times 1000/12}$, which is approximately 54. Therefore, if we
simulate the experiment of tossing a die 1000 times, repeat the experiment a
number of times (say 500) and plot a histogram of the result, what we obtain
should be approximated by the function

$$f(x) = \frac{1}{\sigma\sqrt{2\pi}}\, e^{-\frac{1}{2}\left(\frac{x - \mu}{\sigma}\right)^2},$$

where µ = 3500 and σ = 54.


Figure 2: Comparison between the sample distribution given by the stem plot

and the normal distribution for the experiment of tossing a die 1000 times and

counting the total number of pips.

Example 3.2 (Die tossing II) We would like to compute the probability that
after tossing a die 1000 times, one obtains more than 150 sixes. Here, we consider the random variables $X_i$, i = 1, . . . , 1000, taking values in {0, 1}, with
$P(X_i = 1) = 1/6$. ($X_i = 1$ represents the event of getting a 6 in the i-th toss.)
Writing $S_n = X_1 + \cdots + X_n$, we wish to compute the probability $P(S_{1000} > 150)$.
Each $X_i$ has mean p = 1/6 and standard deviation $\sqrt{(1 - p)p}$. By the central
limit theorem, we approximate the probability distribution of $S_n$ by a normal
distribution with mean µ = 1000p and standard deviation $\sigma = \sqrt{1000(1 - p)p}$. This is approximately µ = 166.67 and σ = 11.79. Now, the distribution of $(S_n - \mu)/\sigma$ is
approximately the standard normal, so we can write

$$\begin{aligned}
P(S_n > 150) &= P((S_n - \mu)/\sigma > (150 - \mu)/\sigma) \\
&= P((S_n - \mu)/\sigma > -1.4139) \\
&\sim \frac{1}{\sqrt{2\pi}} \int_{-1.41}^{\infty} e^{-\frac{1}{2}z^2}\,dz \\
&= 0.9215.
\end{aligned}$$

The integral above was evaluated numerically by a simple Riemann sum over
the interval [−1.41, 10] with step-size 0.01. We conclude that the probability of
obtaining more than 150 sixes in 1000 tosses is approximately 0.92.
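The normal tail probability in this example can also be computed with the complementary error function instead of a Riemann sum, using the identity $P(Z > z) = \frac{1}{2}\,\mathrm{erfc}(z/\sqrt{2})$ for a standard normal Z. The following sketch is an alternative to the method used in the notes, not the original computation; the variable names are chosen for this illustration.

```matlab
% Normal approximation to P(S_1000 > 150), where S_1000 counts sixes.
p = 1/6;                       % probability of a six in one toss
n = 1000;                      % number of tosses
mu = n*p;                      % mean of S_n
sigma = sqrt(n*p*(1-p));       % standard deviation of S_n
z = (150 - mu)/sigma;          % standardized threshold
tail = 0.5*erfc(z/sqrt(2));    % P(Z > z) for a standard normal Z
fprintf('z = %.4f, P(Z > z) = %.4f\n', z, tail);
```

The result agrees with the Riemann-sum value to about two decimal places; small differences come from rounding µ and σ and from the discretization of the integral.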

4 Some Simulation Techniques

Up until now we have mostly done simulations of random variables with a finite

number of possible values. In this section we explore a few ideas for simulating

continuous random variables.

Suppose we have a continuous random variable X with probability density
function f(x) and we wish to evaluate the expectation E[g(X)], for some function g(x). This requires evaluating the integral

$$E[g(X)] = \int_{-\infty}^{\infty} g(x) f(x)\,dx.$$

If the integral is not tractable by analytical or standard numerical methods,
one can approach it by simulating realizations $x_1, x_2, \dots, x_n$ of X and, provided
that the variance of g(X) is finite, one can apply the law of large numbers to
obtain the approximation

$$E[g(X)] \sim \frac{1}{n} \sum_{i=1}^{n} g(x_i).$$

This is the basic idea behind Monte-Carlo integration. It may happen that we
cannot simulate realizations of X, but we can simulate realizations $y_1, y_2, \dots, y_n$
of a random variable Y with probability density function h(x) which is related
to X in that h(x) = 0 only where f(x) = 0. In this case we can write

$$\begin{aligned}
E[g(X)] &= \int_{-\infty}^{\infty} g(x) f(x)\,dx \\
&= \int_{-\infty}^{\infty} \frac{g(x) f(x)}{h(x)}\, h(x)\,dx \\
&= E[g(Y) f(Y)/h(Y)] \\
&\sim \frac{1}{n} \sum_{i=1}^{n} \frac{g(y_i) f(y_i)}{h(y_i)}.
\end{aligned}$$

The above procedure is known as importance sampling. We need now ways to

simulate realizations of random variables. This is typically not an easy task,

but a few general techniques are available. We describe some below.
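As a concrete sketch of importance sampling (an illustration added here, with the densities chosen only for the example), suppose the target density is $f(x) = \frac{1}{2}\sin(x)$ on [0, π], the proposal Y is uniform on [0, π] with density h(x) = 1/π, and g(x) = x. The exact answer is E[g(X)] = π/2, which lets us check the estimate.

```matlab
% Importance sampling: estimate E[g(X)] for X with density f,
% using realizations of Y with density h.
n = 100000;                   % number of samples (arbitrary choice)
f = @(x) 0.5*sin(x);          % target density on [0,pi]
h = @(x) ones(size(x))/pi;    % density of the uniform proposal on [0,pi]
g = @(x) x;                   % function whose expectation is wanted
y = pi*rand(1, n);            % realizations of Y ~ U(0,pi)
w = f(y)./h(y);               % importance weights f(y_i)/h(y_i)
estimate = mean(g(y).*w);     % approximates E[g(X)]
fprintf('estimate = %.4f, exact value = %.4f\n', estimate, pi/2);
```

The choice of h affects the variance of the estimate: proposals that resemble g(x)f(x) in shape generally give smaller errors for the same n.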

4.1 Uniform random number generation

We have already looked at simulating uniform random numbers in Lecture Notes

1. We review the main idea here. This is usually done by number theoretic

methods, the simplest of which are the linear congruential algorithms. Such an

algorithm begins by setting an integer seed, u0 , and then generating a sequence

of new integer values by some deterministic rule of the type

$$u_{n+1} = K u_n + b \pmod{M},$$

where M and K are integers. Since only finitely many different numbers occur,

the modulus M should be chosen as large as possible. To prevent cycling with

a period less than M the multiplier K should be taken relatively prime to M .

Typically b is set to 0, in which case the pseudo-random number generator is
called a multiplicative congruential generator. A good choice of parameters is
$K = 7^5$ and $M = 2^{31} - 1$. Then x = u/M gives a pseudo-random number in
[0, 1] that simulates a uniformly distributed random variable.
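The multiplicative congruential rule with $K = 7^5 = 16807$ and $M = 2^{31} - 1$ can be written in a few lines. This is a minimal sketch for illustration only; the seed u = 1 and the number of draws are arbitrary choices, and in practice one would use the built-in rand.

```matlab
% Multiplicative congruential generator u_{n+1} = K*u_n (mod M).
K = 7^5;                 % multiplier, 16807
M = 2^31 - 1;            % modulus
u = 1;                   % seed (arbitrary choice; must not be 0)
n = 5;                   % how many numbers to generate
useq = zeros(1, n);      % integer sequence u_1, ..., u_n
x = zeros(1, n);         % corresponding numbers in [0,1]
for i = 1:n
    u = mod(K*u, M);     % K*u stays below 2^53, so it is exact in double precision
    useq(i) = u;
    x(i) = u/M;
end
disp(x)
```

With b = 0 the value 0 is never produced from a non-zero seed, since M is prime and K is not a multiple of M.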

We may return to this topic later and discuss statistical tests for evaluating

the quality of such pseudo-random numbers. For now, we will continue to take

for granted that this is a good way to simulate uniform random numbers over

[0, 1].

If we want to simulate a random point in the square [0, 1] × [0, 1], we can
naturally do it by picking a pair of independent random variables $X_1, X_2$ with
the uniform distribution on [0, 1]. (Similarly for cubes $[0, 1]^n$ in any dimension n.)

Example 4.1 (Area of a disc by Monte Carlo) As a simple illustration of
the Monte Carlo integration method, suppose we wish to find the area of a disc
of radius 1. The disc is inscribed in the square S = [−1, 1] × [−1, 1]. If we pick
a point from S at random with the uniform distribution, then the probability
that it will be from the disc is p = π/4, which is the ratio of the area of the disc
by the area of the square S. The following program simulates this experiment
(500000 random points) and estimates a = 4p = π. It gave the value a = 3.1403.

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%
rand('seed',121)                      %fix the seed (legacy syntax) for reproducibility
n=500000;                             %number of random points
X=2*rand(n,2)-1;                      %n points uniformly distributed on [-1,1]x[-1,1]
a=4*sum(X(:,1).^2 + X(:,2).^2<=1)/n   %4 times the fraction of points inside the disc
%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

Figure 3: Simulation of 1000 random points on the square $[-1, 1]^2$ with the
uniform distribution. To approximate the ratio of the area of the disc over the
area of the square we compute the fraction of points that fall on the disc.

The above example should prompt the question: How do we estimate the
error involved in, say, our calculation of π, and how do we determine the number
of random points needed for a given precision? First, consider the probability
space $S = [-1, 1]^2$ with probability measure given by

$$P(E) = \frac{1}{4} \iint_E dx\,dy,$$

and the random variable D : S → {0, 1} which is 1 for a point in the disc and 0
for a point in the complement of the disc. The expected value of D is µ = π/4
and the variance is easily calculated to be $\sigma^2 = \mu(1 - \mu) = \pi(4 - \pi)/16$. If we
draw n independent points on the square, and call the outcomes $D_1, D_2, \dots, D_n$,
then the fraction of points in the disc is given by the random variable

$$\bar{D}_n = \frac{D_1 + \cdots + D_n}{n}.$$

As we have already seen (proposition 1.5), $\bar{D}_n$ must have mean value µ and
standard deviation $\sigma/\sqrt{n}$. Fix a positive number K. One way to estimate the
error in our calculation is to ask for the probability that $|\bar{D}_n - \mu|$ is bigger than
the error $K\sigma/\sqrt{n}$. Equivalently, we ask for the probability $P(|Z_n| \ge K)$, where

$$Z_n = \frac{\bar{D}_n - \mu}{\sigma/\sqrt{n}}.$$

This probability can now be estimated using the central limit theorem. Recall that the probability distribution of $Z_n$, for big n, is very nearly a
standard normal distribution. Thus

$$P(|\bar{D}_n - \mu| \ge K\sigma/\sqrt{n}) \sim \frac{2}{\sqrt{2\pi}} \int_K^{\infty} e^{-\frac{1}{2}z^2}\,dz.$$

If we take K = 3, the right-hand side (twice the standard normal tail probability beyond 3) is approximately $2.7 \times 10^{-3}$.
Using n = 500000, we obtain

$$P(|\bar{D}_n - \mu| \ge 0.0017) \sim 2.7 \times 10^{-3}.$$

In other words, the probability that our simulated $\bar{D}_n$, for n = 500000, does
not lie in an interval around the true value µ of radius 0.0017 is approximately
$2.7 \times 10^{-3}$. This assumes, of course, that our random number generator is ideal.
Deviations from this error bound can serve as a quality test for the generator.
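The same reasoning answers the question of how many points are needed for a given precision: to make the radius $K\sigma/\sqrt{n}$ at most δ, one needs $n \ge (K\sigma/\delta)^2$. The sketch below works this out; the target radius delta = 0.001 is a hypothetical value chosen for illustration.

```matlab
% Radius of the error interval and sample size for a target precision.
mu = pi/4;                           % P(point falls in the disc)
sigma = sqrt(mu*(1 - mu));           % standard deviation of the indicator D
K = 3;                               % number of standard deviations
radius = K*sigma/sqrt(500000);       % radius for the n used in Example 4.1
delta = 0.001;                       % hypothetical target radius
n_needed = ceil((K*sigma/delta)^2);  % smallest n with K*sigma/sqrt(n) <= delta
fprintf('radius = %.4f, n needed for delta = %.3f: %d\n', radius, delta, n_needed);
```

Since the radius scales like $1/\sqrt{n}$, each additional decimal digit of precision costs a factor of 100 in the number of points.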

4.2 Transformation methods

We indicate by U ∼ U(0, 1) a random variable that has the uniform distribution
over [0, 1]. Our problem now is to simulate realizations of another random
variable X with probability density function f(x), using U ∼ U(0, 1). Let

$$F(x) = \int_{-\infty}^{x} f(s)\,ds$$

denote the cumulative distribution function of X. Notice that F : R → (0, 1).
Assume that F is invertible, and denote by G : (0, 1) → R the inverse function
$G = F^{-1}$. Now set $X = F^{-1}(U)$.

Proposition 4.1 (Inverse distribution method) Let U ∼ U(0, 1) and G :
(0, 1) → R the inverse of the cumulative distribution function F(x) with PDF f(x).
Set

$$X = G(U).$$

Then X has cumulative distribution function F(x).

Proof. Since F is increasing and $F_U(u) = u$ for u in [0, 1],

$$\begin{aligned}
P(X \le x) &= P(F^{-1}(U) \le x) \\
&= P(U \le F(x)) \\
&= F_U(F(x)) \\
&= F(x).
\end{aligned}$$

Example 4.2 The random variable X = a + (b − a)U clearly has the uniform
distribution on [a, b] if U ∼ U(0, 1). This follows from the proposition since, for x in [a, b],

$$F(x) = \frac{x - a}{b - a},$$

which has inverse function $F^{-1}(u) = a + (b - a)u$.

Example 4.3 (Exponential random variables) If U ∼ U(0, 1) and λ > 0,
then

$$X = -\frac{1}{\lambda} \log(U)$$

has an exponential distribution with parameter λ. In fact, an exponential random variable has PDF

$$f(x) = \lambda e^{-\lambda x}$$

and its cumulative distribution function is easily obtained by explicit integration:

$$F(x) = 1 - e^{-\lambda x}.$$

Therefore,

$$F^{-1}(u) = -\frac{1}{\lambda} \log(1 - u).$$

But 1 − U ∼ U(0, 1) if U ∼ U(0, 1), so we have the claim.


Figure 4: Stem plot of relative frequencies of 5000 independent realizations of
an exponential random variable with parameter 1. Superposed to it is the graph
of frequencies given by the exact density $e^{-x}$.

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

function y=exponential(lambda,n)

%Simulates n independent realizations of a

%random variable with the exponential

%distribution with parameter lambda.

y=-log(rand(1,n))/lambda;

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%


Here are the commands used to produce figure 4.

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

y=exponential(1,5000);

[n,xout]=hist(y,40);

stem(xout,n/5000)

grid

hold on

dx=xout(2)-xout(1);

fdx=exp(-xout)*dx;

plot(xout,fdx)

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

4.3 Lookup methods

This is the discrete version of the inverse transformation method. Suppose
one is interested in simulating a discrete random variable X with sample space
S = {0, 1, 2, . . . } or a subset of it. Write $p_k = P(X = k)$. Some, possibly
infinitely many, of the $p_k$ may be zero. Now define

$$q_k = P(X \le k) = \sum_{i=0}^{k} p_i.$$

Let U ∼ U(0, 1) and write

$$X = \min\{k : q_k \ge U\}.$$

Then X has the desired probability distribution. In fact, we must necessarily
have $q_{k-1} < U \le q_k$ for some k (with the convention $q_{-1} = 0$) and so

$$P(X = k) = P(U \in (q_{k-1}, q_k]) = q_k - q_{k-1} = p_k.$$
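The lookup rule translates directly into code using cumsum and find. The following is a minimal sketch; the probability vector p is a hypothetical example, and the loop is written for clarity rather than speed.

```matlab
% Lookup method: simulate a discrete random variable with P(X = k) = p_k.
p = [0.2 0.5 0.3];                % hypothetical probabilities p_0, p_1, p_2
q = cumsum(p);                    % q_k = P(X <= k)
q(end) = 1;                       % guard against rounding error in the last sum
n = 10000;                        % number of realizations (arbitrary choice)
x = zeros(1, n);
for i = 1:n
    U = rand;                     % U ~ U(0,1)
    x(i) = find(q >= U, 1) - 1;   % smallest k with q_k >= U (values start at 0)
end
freq = [mean(x == 0) mean(x == 1) mean(x == 2)];
disp(freq)                        % relative frequencies, to be compared with p
```

The subtraction of 1 is needed only because MATLAB indices start at 1 while the values of X start at 0.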

4.4 Scaling

We have already seen that if Y = aX + b for a non-zero a, then

$$f_Y(y) = \frac{1}{|a|}\, f_X\!\left(\frac{y - b}{a}\right).$$

Thus, for example, if X is exponentially distributed with parameter 1, then

Y = X/λ is exponentially distributed with parameter λ.

Similarly, if we can simulate a random variable Z with the standard normal

distribution, then X = σZ + µ will be a normal random variable with mean µ

and standard deviation σ.


4.5 The uniform rejection method

The methods of this and the next subsection are examples of the rejection

sampler method.

Suppose we want to simulate a random variable with PDF f(x) such that
f(x) is zero outside of the interval [a, b] and f(x) ≤ L for all x. Choose X ∼
U(a, b) and Y ∼ U(0, L) independently. If Y < f(X), accept X as the simulated
value we want. If the acceptance condition is not satisfied, draw a new pair (X, Y)
and repeat until it holds. The first X for which Y < f(X) is the output of the
algorithm; we continue to call it X. This procedure is referred to as the uniform rejection
method for density f(x).

Proposition 4.2 (Uniform rejection method) The random variable X produced by the uniform rejection method for density f(x) has probability density function f(x).

Proof. Let A represent the region in [a, b] × [0, L] consisting of points (x, y)
such that y < f(x). We call A the acceptance region. As above, we denote by
(X, Y) a random variable uniformly distributed on [a, b] × [0, L]. Let F(x) =
P(X ≤ x) denote the cumulative distribution function of X. We wish to show
that $F(x) = \int_a^x f(s)\,ds$. This is a consequence of the following calculation, which
uses the continuous version of the total probability formula and the key fact:
$P((X, Y) \in A \mid X = s) = f(s)/L$.

$$\begin{aligned}
F(x) &= P(X \le x) \\
&= P(X \le x \mid (X, Y) \in A) \\
&= \frac{P(\{X \le x\} \cap \{(X, Y) \in A\})}{P((X, Y) \in A)} \\
&= \frac{\frac{1}{b-a}\int_a^b P(\{X \le x\} \cap \{(X, Y) \in A\} \mid X = s)\,ds}{\frac{1}{b-a}\int_a^b P((X, Y) \in A \mid X = s)\,ds} \\
&= \frac{\int_a^x P((X, Y) \in A \mid X = s)\,ds}{\int_a^b P((X, Y) \in A \mid X = s)\,ds} \\
&= \frac{\int_a^x \frac{f(s)}{L}\,ds}{\int_a^b \frac{f(s)}{L}\,ds} \\
&= \int_a^x f(s)\,ds.
\end{aligned}$$

It is clear that the efficiency of the rejection method will depend on the
probability that a random point (X, Y) will be accepted, i.e., will fall in the
acceptance region A. This probability can be estimated as follows:

$$\begin{aligned}
P(\text{accept}) &= P((X, Y) \in A) \\
&= \frac{1}{b-a}\int_a^b P((X, Y) \in A \mid X = s)\,ds \\
&= \frac{1}{(b-a)L}\int_a^b f(s)\,ds \\
&= \frac{1}{(b-a)L}.
\end{aligned}$$

If this number is too small, the procedure will be inefficient.

Example 4.4 (Uniform rejection method) Consider the probability density function

$$f(x) = \frac{1}{2}\sin(x)$$

over the interval [0, π]. We wish to simulate a random variable X with PDF
f(x) using, first, the uniform rejection method.

The following program does that.

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

function x=samplefromsine(n)

%Simulates n independent realizations of a random

%variable with PDF (1/2)sin(x) over the interval

%[0,pi].

x=[];

for i=1:n

U=0;

Y=1/2;

while Y>=(1/2)*sin(U)

U=pi*rand;

Y=(1/2)*rand;

end

x=[x U];

end

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

The probability of acceptance is 2/π, or approximately 0.64. The following

Matlab commands can be used to obtain figure 5:

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

y=samplefromsine(5000);

[n,xout]=hist(y,40);

stem(xout,n/5000)

grid

hold on


dx=xout(2)-xout(1);

fdx=(1/2)*sin(xout)*dx;

plot(xout,fdx)

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%


Figure 5: Stem plot of the relative frequencies of 5000 independent realizations of a
simulated random variable with PDF 0.5 sin(x) over [0, π]. The exact frequencies
are superposed for comparison.

4.6 The envelope method

One limitation of the uniform rejection method is the requirement that the PDF

f (x) be 0 on the complement of a finite interval [a, b]. A more general procedure,

called the envelope method for f (x) can sometimes be used when the uniform

rejection method does not apply.

Suppose that we wish to simulate a random variable with PDF f (x) and

that we already know how to simulate a second random variable Y with PDF

g(x) having the property that f (x) ≤ ag(x) for some positive a and all x. Note

that a ≥ 1 since the total integral of both f (x) and g(x) is 1. Now consider

the following algorithm. Draw a realization of Y with the distribution density

g(y) and then draw a realization of U with the uniform distribution U (0, ag(y)).

Repeat the procedure until a pair (Y, U ) such that U < f (Y ) is obtained. Then

set X equal to the obtained value of Y . In other words, simulate a value from the

distribution g(y) and accept this value with probability f (y)/(ag(y)), otherwise

reject and try again.

The method will work more efficiently if the acceptance rate is high. The
overall acceptance probability is P(U < f(Y)). It is not difficult to calculate
this probability as we did in the case of the uniform rejection method. (Simply
apply the integral form of the total probability formula.) The result is

$$P(\text{accept}) = \frac{1}{a}.$$
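For completeness, here is the calculation written out (a sketch following the same pattern as for the uniform rejection method). Given Y = s, the variable U is uniform on [0, ag(s)], so $P(U < f(Y) \mid Y = s) = f(s)/(ag(s))$ wherever g(s) > 0. The total probability formula then gives

$$P(U < f(Y)) = \int_{-\infty}^{\infty} P(U < f(Y) \mid Y = s)\, g(s)\,ds = \int_{-\infty}^{\infty} \frac{f(s)}{a\,g(s)}\, g(s)\,ds = \frac{1}{a}\int_{-\infty}^{\infty} f(s)\,ds = \frac{1}{a}.$$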

Proposition 4.3 (The envelope method) The envelope method for f(x) described above simulates a random variable X with probability density f(x).

Proof. The argument is essentially the same as for the uniform rejection method.
Note now that $P(U \le f(Y) \mid Y = s) = f(s)/(ag(s))$. With this in mind, we have:

$$\begin{aligned}
F(x) &= P(X \le x) \\
&= P(Y \le x \mid U \le f(Y)) \\
&= \frac{P(\{Y \le x\} \cap \{U \le f(Y)\})}{P(U \le f(Y))} \\
&= \frac{\int_{-\infty}^{\infty} P(\{Y \le x\} \cap \{U \le f(Y)\} \mid Y = s)\, g(s)\,ds}{\int_{-\infty}^{\infty} P(U \le f(Y) \mid Y = s)\, g(s)\,ds} \\
&= \frac{\int_{-\infty}^{x} P(U \le f(Y) \mid Y = s)\, g(s)\,ds}{\int_{-\infty}^{\infty} P(U \le f(Y) \mid Y = s)\, g(s)\,ds} \\
&= \frac{\int_{-\infty}^{x} \frac{f(s)}{a}\,ds}{\int_{-\infty}^{\infty} \frac{f(s)}{a}\,ds} \\
&= \int_{-\infty}^{x} f(s)\,ds.
\end{aligned}$$

Example 4.5 (Envelope method) This is the same as the previous example,

but we now approach the problem via the envelope method. We wish to simulate

a random variable X with PDF (1/2) sin(x) over [0, π]. We first simulate a

random variable Y with probability density g(y), where

$$g(y) = \begin{cases} \frac{4}{\pi^2}\, y & \text{if } y \in [0, \pi/2] \\ \frac{4}{\pi^2}\, (\pi - y) & \text{if } y \in [\pi/2, \pi]. \end{cases}$$

Notice that f(x) ≤ ag(x) for $a = \pi^2/8$. Therefore, the envelope method will
have probability of acceptance $1/a = 8/\pi^2 \approx 0.81$. To simulate the random variable Y,
note that g(x) = (h ∗ h)(x), where h(x) = 2/π over [0, π/2]. Therefore, we can
take Y = V1 + V2, where the Vi are independent, identically distributed uniform
random variables over [0, π/2].
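The bound f(x) ≤ ag(x) can be checked numerically on a grid. This is a minimal sketch; the grid size and the variable names are arbitrary choices, and a grid check is of course not a proof.

```matlab
% Check f(x) <= a*g(x) on a grid over [0,pi] for the triangular envelope.
f = @(x) 0.5*sin(x);                         % target PDF
g = @(y) (2/pi) - (4/pi^2)*abs(y-pi/2);      % triangular PDF on [0,pi]
a = pi^2/8;
x = linspace(0,pi,1001);
gap = a*g(x) - f(x);                         % should be nonnegative
mingap = min(gap);                           % zero up to rounding (at the endpoints)
accept = 1/a;                                % acceptance probability 8/pi^2
```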

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

function x=samplefromsine2(n)

%Simulates n independent realizations of a random

%variable with PDF (1/2)sin(x) over the interval

%[0,pi], using the envelope method.

x=[];

for i=1:n

U=1/2;

Y=0;

while U>=(1/2)*sin(Y)

Y=(pi/2)*sum(rand(1,2));

U=(pi^2/8)*((2/pi)-(4/pi^2)*abs(Y-pi/2))*rand;

end

x=[x Y];

end

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

5

Standard Probability Distributions

We study here a number of the more commonly occurring probability distributions. They are associated with basic types of random experiments that often

serve as building blocks for more complicated probability models. Among the

most important for our later study are the normal, the exponential, and the

Poisson distributions.

5.1

The discrete uniform distribution

A random variable X is discrete uniform on the numbers 1, 2, . . . , n, written

X ∼ DU (n)

if it takes values in the set S = {1, 2, . . . , n} and each of the possible values is

equally likely to occur, that is,

$$P(X = k) = \frac{1}{n}, \quad k \in S.$$

The cumulative distribution function of X is, therefore,

$$P(X \le k) = \frac{k}{n}, \quad k \in S.$$

The expectation of a discrete uniform random variable X is easily calculated:

$$E[X] = \sum_{k=1}^{n} \frac{k}{n} = \frac{n(n+1)}{2n} = \frac{n+1}{2}.$$

The variance is similarly calculated. Its value is

$$\mathrm{Var}(X) = \frac{n^2 - 1}{12}.$$

The following is a simple way to simulate a DU(n) random variable:

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

function y=discreteuniform(n,m)

%Simulates m independent samples of a DU(n) random variable.

y=[];

for i=1:m

y=[y ceil(n*rand)];

end

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

5.2

The binomial distribution

Given a positive integer n and an integer k between 0 and n, recall that the

binomial coefficient is defined by

$$C(n, k) = \binom{n}{k} = \frac{n!}{k!\,(n-k)!}.$$

It gives the number of ways to pick k elements in a set of n elements. We often

read C(n, k) as “n choose k.”

The binomial distribution is the distribution of the number of “successful”

outcomes in a series of n independent trials, each with a probability p of “success” and 1 − p of “failure.” If the total number of successes is denoted X, we

write

X ∼ B(n, p)

to indicate that X is a binomial random variable for n independent trials and

success probability p. Thus, if Z1 , . . . , Zn are independent random variables

taking values in {0, 1}, with P(Zi = 1) = p and P(Zi = 0) = 1 − p, then

X = Z1 + · · · + Zn

is a B(n, p) random variable.

The sample space for a binomial random variable X is S = {0, 1, 2, . . . , n}.

The probability of k successes followed by n − k failures is $p^k (1-p)^{n-k}$. Indeed,

this is the probability of any sequence of n outcomes with k success trials,

independent of the order in which they occur. There are C(n, k) such sequences,

so the probability of k successes is

$$P(X = k) = \binom{n}{k} p^k (1-p)^{n-k}.$$

The expectation and variance of the binomial distribution are easily obtained:

E[X] = np, Var(X) = np(1 − p).
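These formulas can be verified directly from the probability mass function for a particular choice of parameters. The values n = 10 and p = 0.3 below are an arbitrary illustration, and the variable names are hypothetical.

```matlab
% Mean and variance of B(n,p) computed from the pmf (illustrative n, p).
n = 10; p = 0.3;
k = 0:n;
binom = arrayfun(@(j) nchoosek(n,j), k);   % binomial coefficients C(n,k)
pmf = binom .* p.^k .* (1-p).^(n-k);       % P(X=k), k=0,...,n
total = sum(pmf);                          % should equal 1
m = sum(k.*pmf);                           % compare with n*p
v = sum((k-m).^2.*pmf);                    % compare with n*p*(1-p)
```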

Example 5.1 (Urn problem) An urn contains N balls, of which K are black

and N − K are red. We draw with replacement n balls and count the number

X of black balls drawn. Let p = K/N . Then X ∼ B(n, p).

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

function y=binomial(n,p,m)

%Simulates drawing m independent samples of a

%binomial random variable B(n,p).

y=sum(rand(n,m)<p,1); %sum down each column, so that n=1 also works

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

5.3

The multinomial distribution

Consider the following random experiment. An urn contains balls of colors

c1 , c2 , . . . , cr , which can be drawn with probabilities p1 , p2 , . . . , pr . Suppose we

draw n balls with replacement and register the number Xi , for i = 1, 2, . . . , r,

that a ball of color ci was drawn. The vector X = (X1 , X2 , . . . , Xr ) is then said

to have the multinomial distribution, and we write X ∼ M (n, p1 , . . . , pr ). Note

that X1 + · · · + Xr = n. Binomial random variables correspond to the special

case r = 2. More explicitly, the multinomial distribution assigns probabilities

$$P(X_1 = k_1, \dots, X_r = k_r) = \frac{n!}{k_1! \cdots k_r!}\, p_1^{k_1} \cdots p_r^{k_r}.$$

Note that if X = (X1 , . . . , Xr ) ∼ M (n, p1 , . . . , pr ), then each Xi can be

interpreted as the number of successes in n trials, each of which has probability

pi of success and 1 − pi of failure. Therefore, Xi is a binomial random variable

B(n, pi ).

Example 5.2 Suppose that 100 independent observations are taken from a uniform distribution on [0, 1]. We partition the interval into 10 equal subintervals

(bins), and record the numbers X1 , . . . , X10 of observations that fall in each

bin. The information is then represented as a histogram, or bar graph, in which

the bar over the bin labeled by i equals Xi . Then, the histogram itself can be

viewed as a random variable with the multinomial distribution, where n = 100

and pi = 1/10 for i = 1, 2, . . . , 10.

The following program simulates one sample draw of a random variable with

the multinomial distribution.

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

function y=multinomial(n,p)

%Simulates drawing a sample vector y=[y1, ... yr]

%with the multinomial distribution M(n,p),

%where p=[p1 ... pr] is a probability vector.

r=length(p);

x=rand(n,1);

a=0;

A=zeros(n,1);

for i=1:r

A=A+i*(a<=x & x<a+p(i));

a=a+p(i);

end

y=zeros(1,r);

for j=1:r

y(j)=sum(A==j);

end

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

5.4

The geometric distribution

As in the binomial distribution, consider a sequence of trials with a success/fail

outcome and probability p of success. Let X be the number of independent

trials until the first success is encountered. In other words, X is the waiting

time until the first success. Then X is said to have the geometric distribution,

denoted

X ∼ Geom(p).

The sample space is S = {1, 2, 3, . . . }. In order to have X = k, there must be a

sequence of k − 1 failures followed by one success. Therefore,

$$P(X = k) = (1-p)^{k-1} p.$$

Another way to describe a geometric random variable X is as follows. Let

X1 , X2 , . . . be independent random variables with values in {0, 1} such that

P (Xi = 1) = p and P (Xi = 0) = 1 − p. Then

X = min{n ≥ 1 : Xn = 1} ∼ Geom(p).

In fact, as the Xi are independent, we have

$$\begin{aligned}
P(X = n) &= P(\{X_1 = 0\} \cap \{X_2 = 0\} \cap \dots \cap \{X_{n-1} = 0\} \cap \{X_n = 1\}) \\
&= P(X_1 = 0) P(X_2 = 0) \cdots P(X_{n-1} = 0) P(X_n = 1) \\
&= (1-p)^{n-1} p.
\end{aligned}$$

The expectation of a geometrically distributed random variable is calculated

as follows:

$$E[X] = \sum_{i=1}^{\infty} i P(X = i) = \sum_{i=1}^{\infty} i (1-p)^{i-1} p = \frac{p}{(1 - (1-p))^2} = \frac{1}{p}.$$

Similarly:

$$E[X^2] = \sum_{i=1}^{\infty} i^2 P(X = i) = \sum_{i=1}^{\infty} i^2 (1-p)^{i-1} p = p\, \frac{1 + (1-p)}{(1 - (1-p))^3} = \frac{2-p}{p^2},$$

from which we obtain the variance:

$$\mathrm{Var}(X) = E[X^2] - E[X]^2 = \frac{2-p}{p^2} - \frac{1}{p^2} = \frac{1-p}{p^2}.$$

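We have used above the following formulas, valid for |a| < 1:

$$\sum_{i=1}^{\infty} a^{i-1} = \frac{1}{1-a}, \qquad \sum_{i=1}^{\infty} i a^{i-1} = \frac{1}{(1-a)^2}, \qquad \sum_{i=1}^{\infty} i^2 a^{i-1} = \frac{1+a}{(1-a)^3},$$

as well as the formulas

$$\sum_{i=1}^{n} i = \frac{n(n+1)}{2}, \qquad \sum_{i=1}^{n} i^2 = \frac{1}{6}\, n(n+1)(2n+1).$$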
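The mean 1/p and variance (1 − p)/p² of the geometric distribution can be checked by truncating the series. The sketch below assumes p = 0.25 and a cutoff of 2000 terms, both arbitrary; the neglected tail is far below rounding error for this p.

```matlab
% Truncated-series check of the geometric mean and variance (illustrative p).
p = 0.25;
i = 1:2000;                    % the tail beyond 2000 terms is negligible here
pmf = (1-p).^(i-1)*p;          % P(X=i)
EX  = sum(i.*pmf);             % compare with 1/p
EX2 = sum(i.^2.*pmf);          % compare with (2-p)/p^2
V   = EX2 - EX^2;              % compare with (1-p)/p^2
```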

Example 5.3 (Waiting for a six) How long should we expect to have to wait

to get a 6 in a sequence of die tosses? Let X denote the number of tosses until

6 appears for the first time. Then the probability that X = k is

$$P(X = k) = \left(\frac{5}{6}\right)^{k-1} \frac{1}{6}.$$

In other words we have k − 1 failures, each with probability 5/6, until a success,

with probability 1/6. The expected value of X is

$$\sum_{k=1}^{\infty} k P(X = k) = \sum_{k=1}^{\infty} k \left(\frac{5}{6}\right)^{k-1} \frac{1}{6} = 6.$$

So on average we need to wait 6 tosses to get a 6.

The following program simulates one sample draw of a random variable with

the Geom(p) distribution.

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

function y=geometric(p)

%Simulates one draw of a geometric

%random variable with parameter p.

a=0;

y=0;

while a==0

y=y+1;

a=(rand<p);

end

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

5.5

The negative binomial distribution

A random variable X has the negative binomial distribution, also called the

Pascal distribution, denoted X ∼ N B(n, p), if there exists an integer n ≥ 1 and

a real number p ∈ (0, 1) such that

$$P(X = n + k) = \binom{n+k-1}{k} p^n (1-p)^k, \quad k = 0, 1, 2, \dots$$

The negative binomial distribution has the following interpretation.

Proposition 5.1 Let X1 , . . . , Xn be independent Geom(p) random variables.

Then X = X1 + · · · + Xn has the negative binomial distribution with parameters

n and p.

Therefore, to simulate a negative binomial random variable all we need is to

simulate n independent geometric random variables, then add them up. It also

follows from this proposition that

$$E[X] = \frac{n}{p}, \qquad \mathrm{Var}(X) = \frac{n(1-p)}{p^2}.$$

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

function y=negbinomial(n,p)

%Simulates one draw of a negative binomial

%random variable with parameters n and p.

y=0;

for i=1:n

a=0;

u=0;

while a==0

u=u+1;

a=(rand<p);

end

y=y+u;

end

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

5.6

The Poisson distribution

A Poisson random variable X with parameter λ, denoted X ∼ Po(λ), is a

random variable with sample space S = {0, 1, 2, . . . } such that

$$P(X = k) = \frac{\lambda^k}{k!}\, e^{-\lambda}, \quad k = 0, 1, 2, \dots$$

This is a ubiquitous distribution and we will encounter it many times in
the course. One way to think about the Poisson distribution is as the limit of a
binomial distribution B(n, p) as n → ∞, p → 0, while λ = np = E[X] remains
constant. In fact, replacing p by λ/n in the binomial distribution gives

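$$\begin{aligned}
P(X = k) &= \binom{n}{k} \left(\frac{\lambda}{n}\right)^{k} \left(1 - \frac{\lambda}{n}\right)^{n-k} \\
&= \frac{n!}{k!\,(n-k)!} \left(\frac{\lambda}{n}\right)^{k} \left(1 - \frac{\lambda}{n}\right)^{n-k} \\
&= \frac{\lambda^k}{k!}\, \frac{n!}{(n-k)!\, n^k}\, \frac{(1 - \lambda/n)^n}{(1 - \lambda/n)^k} \\
&= \frac{\lambda^k}{k!}\, \frac{n}{n}\, \frac{(n-1)}{n}\, \frac{(n-2)}{n} \cdots \frac{(n-k+1)}{n}\, \frac{(1 - \lambda/n)^n}{(1 - \lambda/n)^k} \\
&\to \frac{\lambda^k}{k!}\, e^{-\lambda}.
\end{aligned}$$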

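Notice that we have used the limit $(1 - \lambda/n)^n \to e^{-\lambda}$, and that for fixed k each of the factors (n − j)/n and $(1 - \lambda/n)^k$ tends to 1.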
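The convergence of the binomial probabilities to the Poisson probabilities can be observed numerically. The following sketch assumes λ = 3 and n = 1000 (arbitrary choices) and evaluates the binomial coefficients through gammaln to avoid overflow; the variable names are hypothetical.

```matlab
% Compare B(n,lambda/n) with Po(lambda) for k=0,...,10 (illustrative values).
lambda = 3; n = 1000; p = lambda/n;
k = 0:10;
logbin = gammaln(n+1) - gammaln(k+1) - gammaln(n-k+1) ...
         + k*log(p) + (n-k)*log(1-p);        % log of the binomial pmf
bi = exp(logbin);                            % binomial probabilities
po = exp(-lambda)*lambda.^k./factorial(k);   % Poisson probabilities
err = max(abs(bi-po));                       % largest discrepancy over k
```

Increasing n while keeping λ fixed should make err smaller.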

The expectation and variance of a Poisson random variable are easily calculated from the definition or by the limit of the corresponding quantities for the

binomial distribution. The result is:

E[X] = λ, Var(X) = λ.

One noteworthy property of Poisson random variables is that, if X ∼ Po(λ)

and Y ∼ Po(µ) are independent Poisson random variables, then Z = X + Y ∼

Po(λ + µ).

A numerical example may help clarify the meaning of the Poisson distribution. Consider the interval [0, 1] partitioned into a large number, n, of subintervals of equal length: [0, 1/n), [1/n, 2/n), . . . , [(n − 1)/n, 1]. To each subinterval

we randomly assign a value 1 with a small probability λ/n (for a fixed λ) and

0 with probability 1 − λ/n. Let X be the number of 1s. Then, for large n, the

random variable X is approximately Poisson with parameter λ. The following

Matlab script illustrates this procedure. It produces samples of a Poisson random variable with parameter λ = 3 over the interval [0, 1] and a graph that

shows the positions where an event occurs.

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

%Approximate Poisson random variable, X, with parameter lambda.

lambda=3;

n=500;

p=lambda/n;

a=(rand(1,n)<p);

x=1/n:1/n:1;

X=sum(a)

stem(x,a)

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

Figure 6: Poisson distributed events over the interval [0, 1] for λ = 3.

A sequence of times of occurrences of random events is said to be a Poisson

process with rate λ if the number of observations, Nt , in any interval of length t

is Nt ∼ Po(λt) and the numbers of events in disjoint intervals are independent of

one another. This is a simple model for discrete events occurring continuously

in time.

The following is a function script for the arrival times over [0, T ] of a Poisson

process with parameter λ.

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

function a=poisson(lambda,T)

%Input  - lambda arrival rate
%       - T time interval, [0,T]
%Output - a column vector of arrival times in interval [0,T]
a=[];
t=-log(1-rand)/lambda;      %first arrival time, Exp(lambda)
while t<=T
  a=[a; t];                 %record the arrival
  t=t-log(1-rand)/lambda;   %add an Exp(lambda) interarrival time
end

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

5.7

The hypergeometric distribution

A random variable X is said to have a hypergeometric distribution if there exist

positive integers r, n and m such that for any k = 0, 1, 2, . . . , m we have

$$P(X = k) = \frac{\binom{r}{k} \binom{n-r}{m-k}}{\binom{n}{m}}.$$

The binomial, the multinomial and the Poisson distributions arise when one

wants to count the number of successes in situations that generally correspond to

drawing from a population with replacement. The hypergeometric distribution

arises when the experiment involves drawing without replacement.

The expectation and variance of a random variable X having the hypergeometric distribution are given by

$$E[X] = mp, \qquad \mathrm{Var}(X) = mpq\, \frac{n-m}{n-1},$$

where p = r/n and q = 1 − p.

Example 5.4 (Drawing without replacement) An urn contains n balls, r

of which are black and n−r are red. We draw from the urn without replacement

m balls and denote by X the number of black balls among them. Then X has

the hypergeometric distribution with parameters r, n and m.

Example 5.5 (Capture/recapture) The capture/recapture method is sometimes used to estimate the size of a wildlife population. Suppose that 10 animals

are captured, tagged, and released. On a later occasion, 20 animals are captured, and it is found that 4 of them are tagged. How large is the population?

We assume that there are n animals in the population, of which 10 are tagged.

If the 20 animals captured later are taken in such a way that all the n-choose-20

possible groups are equally likely, then the probability that 4 of them are tagged

is

$$L(n) = \frac{\binom{10}{4} \binom{n-10}{16}}{\binom{n}{20}}.$$

The number n cannot be precisely determined from the given information, but it

can be estimated using the maximum likelihood method. The idea is to estimate

the value of n as the value that makes the observed outcome (X = 4 in this

example) most probable. In other words, we estimate the population size to

be the value n that maximizes L(n). It is left as an exercise to check that

L(n)/L(n − 1) > 1 if and only if n < 50, so L(n) stops growing for n = 50.

Therefore, the maximum is attained for n = 50. This number serves as our

estimate of the population size in the sense that it is the value that maximizes

the likelihood of the outcome X = 4.
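The maximization can also be explored numerically. The sketch below evaluates log L(n) over an assumed search range 26 ≤ n ≤ 200, using gammaln for the log binomial coefficients; the helper logC and the variable names are hypothetical. A short computation gives L(n)/L(n − 1) = (n − 10)(n − 20)/(n(n − 26)), which equals 1 at n = 50, so L(49) = L(50) and a numerical search may report either value as the maximizer.

```matlab
% Maximum likelihood estimate of the population size (search range assumed).
logC = @(N,K) gammaln(N+1) - gammaln(K+1) - gammaln(N-K+1);  % log of C(N,K)
n = 26:200;                                   % candidate population sizes
logL = logC(10,4) + logC(n-10,16) - logC(n,20);
[~,idx] = max(logL);
nhat = n(idx);                                % numerical maximizer
ratio50 = (50-10)*(50-20)/(50*(50-26));       % L(50)/L(49)
```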

5.8

The uniform distribution

A random variable X has a uniform distribution over the range [a, b], written

X ∼ U (a, b), if the PDF is given by

$$f_X(x) = \begin{cases} \frac{1}{b-a} & \text{if } a \le x \le b \\ 0 & \text{otherwise.} \end{cases}$$

If x ∈ [a, b], then

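$$F_X(x) = \int_{-\infty}^{x} f_X(y)\, dy = \int_{a}^{x} f_X(y)\, dy = \frac{x-a}{b-a}.$$

Therefore

$$F_X(x) = \begin{cases} 0 & \text{if } x < a \\ \frac{x-a}{b-a} & \text{if } a \le x \le b \\ 1 & \text{if } x > b. \end{cases}$$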

The expectation and variance are easily calculated:

$$E[X] = \frac{a+b}{2}, \qquad \mathrm{Var}(X) = \frac{(b-a)^2}{12}.$$
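A quick simulation gives a sanity check of the mean (a + b)/2 and of the variance, whose value is (b − a)²/12. The endpoints a = 2, b = 5 and the sample size are arbitrary choices for illustration.

```matlab
% Sample mean and variance of U(a,b) (illustrative endpoints).
a = 2; b = 5; n = 100000;
x = a + (b-a)*rand(1,n);     % n realizations of U(a,b)
m = mean(x);                 % compare with (a+b)/2 = 3.5
v = var(x);                  % compare with (b-a)^2/12 = 0.75
```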

5.9

The exponential distribution

A random variable X has an exponential distribution with parameter λ > 0,

written

X ∼ Exp(λ)

if it has the PDF

$$f_X(x) = \begin{cases} 0 & \text{if } x < 0 \\ \lambda e^{-\lambda x} & \text{if } x \ge 0. \end{cases}$$

The cumulative distribution function is, therefore, given by

$$F_X(x) = \begin{cases} 0 & \text{if } x < 0 \\ 1 - e^{-\lambda x} & \text{if } x \ge 0. \end{cases}$$

Expectation and variance are given by

$$E[X] = \frac{1}{\lambda}, \qquad \mathrm{Var}(X) = \frac{1}{\lambda^2}.$$
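Since the cumulative distribution function can be inverted explicitly, exponential samples are easy to generate from uniform ones: solving u = 1 − e^{−λx} for x gives x = −log(1 − u)/λ. The sketch below is one possible way to do this (λ = 2 and the sample size are arbitrary) and is not necessarily how the function exponential(lambda,n) used elsewhere in these notes is written.

```matlab
% Inverse transform sampling of Exp(lambda) (illustrative lambda).
lambda = 2; n = 100000;
u = rand(1,n);               % uniform samples on (0,1)
x = -log(1-u)/lambda;        % solves u = 1 - exp(-lambda*x)
m = mean(x);                 % compare with 1/lambda
v = var(x);                  % compare with 1/lambda^2
```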

Exponential random variables often arise as random times. We will see

them very often. The following propositions contain some of their most notable

properties. The first property can be interpreted as follows. Suppose

that something has a random life span which is exponentially distributed with

parameter λ. (For example, the atom of a radioactive element.) Then, having

survived for a time t, the probability of surviving for an additional time s is the

same probability it had initially to survive for a time s. Thus the system does

not keep any memory of the passage of time. To put it differently, if an entity

has a life span that is exponentially distributed, its death cannot be due to an

“aging mechanism” since, having survived up to time t, the chances of it surviving

an extra time s are the same as the chances that it would have survived to time

s from the very beginning. More precisely, we have the following proposition.

Proposition 5.2 (Memoryless property) If X ∼ Exp(λ), then we have

P (X > s + t|X > t) = P (X > s)

for any s, t ≥ 0.

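Proof.

$$\begin{aligned}
P(X > s + t \mid X > t) &= \frac{P(\{X > s + t\} \cap \{X > t\})}{P(X > t)} \\
&= \frac{P(X > s + t)}{P(X > t)} \\
&= \frac{1 - P(X \le s + t)}{1 - P(X \le t)} \\
&= \frac{1 - F_X(s + t)}{1 - F_X(t)} \\
&= \frac{1 - (1 - e^{-\lambda(s+t)})}{1 - (1 - e^{-\lambda t})} \\
&= e^{-\lambda s} \\
&= 1 - (1 - e^{-\lambda s}) \\
&= 1 - F_X(s) \\
&= 1 - P(X \le s) \\
&= P(X > s).
\end{aligned}$$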
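The memoryless property can also be seen in simulated data. In the sketch below the values λ = 1, s = 0.5 and t = 1 are arbitrary, and the exponential samples are produced by inverting the cumulative distribution function; the variable names are hypothetical.

```matlab
% Empirical check of P(X>s+t | X>t) = P(X>s) (illustrative lambda, s, t).
lambda = 1; s = 0.5; t = 1; n = 200000;
x = -log(1-rand(1,n))/lambda;      % Exp(lambda) samples
survivors = x(x>t);                % realizations that survived past t
pcond = mean(survivors > s+t);     % estimate of P(X>s+t | X>t)
puncond = mean(x > s);             % estimate of P(X>s)
exact = exp(-lambda*s);            % common theoretical value
```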

The next proposition states that the inter-event times for a Poisson process
with rate λ are exponentially distributed with parameter λ.

Proposition 5.3 Consider a Poisson process with rate λ. Let T be the time to

the first event (after 0). Then T ∼ Exp(λ).

Proof. Let Nt be the number of events in the interval (0, t] (for given fixed

t > 0). Then Nt ∼ Po(λt). Consider the cumulative distribution function of T :

$$\begin{aligned}
F_T(t) &= P(T \le t) \\
&= 1 - P(T > t) \\
&= 1 - P(N_t = 0) \\
&= 1 - \frac{(\lambda t)^0 e^{-\lambda t}}{0!} \\
&= 1 - e^{-\lambda t}.
\end{aligned}$$

This is the distribution function of an Exp(λ) random variable, so T ∼ Exp(λ).

So the time of the first event of a Poisson process is an exponential random

variable. Using the independence properties of the Poisson process, it should

be clear (more details later) that the time between any two such events has the

same exponential distribution. Thus the times between events of the Poisson

process are exponential.

There is another way of thinking about the Poisson process that this result

suggests. For a small time h we have

$$\frac{P(T \le h)}{h} = \frac{1 - e^{-\lambda h}}{h} = \frac{1 - (1 - \lambda h)}{h} + \frac{O(h^2)}{h} \to \lambda$$

as h → 0. So for very small h, P (T ≤ h) is approximately λh and due to the

independence property of the Poisson process, this is the probability for any time

interval of length h. The Poisson process can therefore be thought of as a process

with constant event “hazard” λ, where the “hazard” is essentially a measure of

event density on the time axis. The exponential distribution with parameter λ

can therefore also be reinterpreted as the time to an event of constant hazard

λ.

The next proposition describes the distribution of the minimum of a collection of independent exponential random variables.

Proposition 5.4 Let Xi ∼ Exp(λi ), i = 1, 2, . . . , n, be independent random

variables, and define X0 = min{X1 , X2 , . . . , Xn }. Then X0 ∼ Exp(λ0 ), where

$\lambda_0 = \lambda_1 + \lambda_2 + \dots + \lambda_n$.

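Proof. First note that for X ∼ Exp(λ) we have $P(X > x) = e^{-\lambda x}$ for x ≥ 0. Then

$$\begin{aligned}
P(X_0 > x) &= P(\min_i \{X_i\} > x) \\
&= P(\{X_1 > x\} \cap \{X_2 > x\} \cap \dots \cap \{X_n > x\}) \\
&= \prod_{i=1}^{n} P(X_i > x) \\
&= \prod_{i=1}^{n} e^{-\lambda_i x} \\
&= e^{-x(\lambda_1 + \dots + \lambda_n)} \\
&= e^{-\lambda_0 x}.
\end{aligned}$$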

Proposition 5.5 Suppose that X ∼ Exp(λ) and Y ∼ Exp(µ) are independent

random variables. Then P (X < Y ) = λ/(λ + µ).

Proof.

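$$\begin{aligned}
P(X < Y) &= \int_0^{\infty} P(X < Y \mid Y = y)\, f_Y(y)\, dy \\
&= \int_0^{\infty} P(X < y)\, f_Y(y)\, dy \\
&= \int_0^{\infty} (1 - e^{-\lambda y})\, \mu e^{-\mu y}\, dy \\
&= \frac{\lambda}{\lambda + \mu}.
\end{aligned}$$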

The next result gives the likelihood of a particular exponential random variable of an independent collection being the smallest.

Proposition 5.6 Let Xi ∼ Exp(λi ), i = 1, 2, . . . , n be independent random

variables and let J be the index of the smallest of the Xi . Then J is a discrete

random variable with probability mass function

$$P(J = i) = \frac{\lambda_i}{\lambda_0}, \quad i = 1, 2, \dots, n,$$

where λ0 = λ1 + · · · + λn .

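Proof. For each j, define the random variable $Y = \min_{k \ne j} \{X_k\}$ and set $\lambda_{-j} = \lambda_0 - \lambda_j$. By Proposition 5.4, $Y \sim \mathrm{Exp}(\lambda_{-j})$, and Y is independent of $X_j$. Then, by Proposition 5.5,

$$\begin{aligned}
P(J = j) &= P(X_j < \min_{k \ne j} \{X_k\}) \\
&= P(X_j < Y) \\
&= \frac{\lambda_j}{\lambda_j + \lambda_{-j}} \\
&= \frac{\lambda_j}{\lambda_0}.
\end{aligned}$$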
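Both the distribution of the minimum and the distribution of the index of the minimum can be checked by simulation. The rates λ = (1, 2, 3) and the sample size in this sketch are arbitrary, and the variable names are hypothetical.

```matlab
% Minimum of independent exponentials and the index attaining it.
lam = [1 2 3]; N = 100000;
X = -log(1-rand(N,3))./repmat(lam,N,1);       % column i holds Exp(lam(i)) samples
[X0,J] = min(X,[],2);                         % row-wise minimum and its index
freq = [mean(J==1) mean(J==2) mean(J==3)];    % compare with lam/sum(lam)
meanX0 = mean(X0);                            % compare with 1/sum(lam)
```

Here the frequencies should be close to (1/6, 2/6, 3/6) and the mean of the minimum close to 1/6.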

From the formula for a linear transformation of a random variable we immediately have:

Proposition 5.7 Let X ∼ Exp(λ). Then for α > 0, Y = αX has distribution

Y ∼ Exp(λ/α).

5.10

The Erlang distribution

A continuous random variable X taking values in [0, ∞) is said to have the

Erlang distribution with parameters n and λ if it has PDF

$$f(x) = \frac{\lambda (\lambda x)^{n-1}}{(n-1)!}\, e^{-\lambda x}.$$

It can be shown that if T1 , T2 , . . . , Tn are independent random variables with

a common exponential distribution with parameter λ, then

$$S_n = T_1 + T_2 + \dots + T_n$$

has the Erlang distribution with parameters n and λ. It follows from this claim

that the expectation and variance of an Erlang random variable are given by

$$E[X] = \frac{n}{\lambda}, \qquad \mathrm{Var}(X) = \frac{n}{\lambda^2}.$$

5.11

The normal distribution

The normal, or Gaussian, distribution is one of the most important distributions

in probability theory. One reason for this is that sums of random variables often

approximately follow a normal distribution.

Definition 5.1 A random variable X has a normal distribution with parameters µ and σ², written

$$X \sim N(\mu, \sigma^2),$$

if it has probability density function

$$f_X(x) = \frac{1}{\sigma \sqrt{2\pi}} \exp\left\{ -\frac{1}{2} \left( \frac{x - \mu}{\sigma} \right)^2 \right\}$$

for −∞ < x < ∞, where σ > 0.

Note that the PDF is symmetric about x = µ, so the median and mean of

the distribution will be µ. Checking that the density integrates to 1 requires

the well-known integral

$$\int_{-\infty}^{\infty} e^{-\alpha x^2}\, dx = \sqrt{\frac{\pi}{\alpha}}, \quad \alpha > 0.$$

We leave the calculation of this and the variance as an exercise. The result is

$$E[X] = \mu, \qquad \mathrm{Var}(X) = \sigma^2.$$

The random variable Z is said to have the standard normal distribution if

Z ∼ N (0, 1). Therefore, the density of Z, which is usually denoted φ(z), is given

by

$$\varphi(z) = \frac{1}{\sqrt{2\pi}} \exp\left( -\frac{1}{2} z^2 \right)$$

for −∞ < z < ∞. The cumulative distribution function of a standard normal

random variable is denoted Φ(z), and is given by

$$\Phi(z) = \int_{-\infty}^{z} \varphi(x)\, dx.$$

There is no simple analytic expression for Φ(z) in terms of elementary functions.

Consider Z ∼ N(0, 1) and let X = µ + σZ, for σ > 0. Then X ∼ N(µ, σ²).
Indeed, by Proposition 1.1 we know that $f_X(x) = (1/\sigma)\varphi((x - \mu)/\sigma)$, from which the claim follows.
Conversely, if X ∼ N(µ, σ²), then

$$Z = \frac{X - \mu}{\sigma} \sim N(0, 1).$$

It is also easily shown that the cumulative distribution function satisfies

$$F_X(x) = \Phi\left( \frac{x - \mu}{\sigma} \right),$$

and so the cumulative probabilities for any normal random variable can be

calculated using the tables for the standard normal distribution.

The sum of normal random variables is also a normal random variable. This

is shown in the following proposition.

Proposition 5.8 If $X_1 \sim N(\mu_1, \sigma_1^2)$ and $X_2 \sim N(\mu_2, \sigma_2^2)$ are independent normal random variables, then Y = X1 + X2 is also normal and

$$Y \sim N(\mu_1 + \mu_2, \sigma_1^2 + \sigma_2^2).$$

The elementary proof will be left as an exercise.

Therefore, any linear combination of independent normal random variables is

also a normal random variable. The mean and variance of the resulting random

variable can then be calculated from the proposition.

In Matlab we can sample a standard normal random variable using the

command randn, which has the same usage as rand. This is what we will

typically use when sampling from a normal distribution.

One simple way to generate a normal random variable is to use the central

limit theorem. Consider

$$Z = \sqrt{\frac{12}{n}} \sum_{i=1}^{n} (U_i - 1/2),$$

where Ui are independent random variables with the uniform distribution on

[0, 1]. Then Z has mean 0 and variance 1 and is approximately normal. This

method is not very efficient since it requires sampling from the uniform distribution many times for a single realization of the normal distribution.
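A minimal sketch of this central limit approximation follows. Each U_i − 1/2 has variance 1/12, so the sum of n such terms is scaled by sqrt(12/n) to obtain variance 1. The choices n = 12 and m = 50000 realizations are arbitrary, and the variable names are hypothetical.

```matlab
% Approximate standard normal samples from sums of uniforms (illustrative n, m).
n = 12;                                 % uniforms used per realization
m = 50000;                              % number of realizations
Z = sqrt(12/n)*sum(rand(n,m)-0.5,1);    % column sums, scaled to variance 1
mZ = mean(Z);                           % compare with 0
vZ = var(Z);                            % compare with 1
```

Note that each realization consumes n uniform samples, which is the inefficiency mentioned above.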

5.12

The Box-Muller method

A more efficient procedure for simulating normal random variables is the so-called Box-Muller method. This consists of first simulating a uniform and an
exponential random variable independently:

$$\Theta \sim U(0, 2\pi), \quad \text{and} \quad R^2 \sim \mathrm{Exp}(1/2).$$

Then

X1 = R cos(Θ),

X2 = R sin(Θ)

are two independent standard normal random variables. The following proposition is needed to justify this claim.

Proposition 5.9 Let X1 and X2 be random variables with values in R. Let R

and Θ be the radius and angle expressing the vector valued random variable X =

(X1 , X2 ) in polar coordinates. Then X1 , X2 are independent standard normal

random variables if and only if R² and Θ are independent with R² ∼ Exp(1/2)

and Θ ∼ U (0, 2π).

Proof. The PDFs of (X1, X2) and of (R, Θ) are related by the change of coordinates

$$x_1 = r \cos\theta, \quad x_2 = r \sin\theta.$$

First assume that X1 and X2 are independent standard normal random variables. By independence, the PDF of the vector random variable X is the product
of the respective PDFs:

$$f_X(x_1, x_2) = f_1(x_1) f_2(x_2) = \frac{1}{2\pi}\, e^{-\frac{1}{2}(x_1^2 + x_2^2)}.$$

Using the general change of coordinates formula, we obtain

$$\begin{aligned}
f_{(R,\Theta)}(r, \theta) &= f_{X_1, X_2}(x_1, x_2) \left| \frac{\partial(x_1, x_2)}{\partial(r, \theta)} \right| \\
&= \frac{1}{2\pi}\, e^{-r^2/2} \left| \det \begin{pmatrix} \cos\theta & -r \sin\theta \\ \sin\theta & r \cos\theta \end{pmatrix} \right| \\
&= \frac{1}{2\pi} \times r e^{-r^2/2}.
\end{aligned}$$

Therefore the PDF of (R, Θ) splits as a product of a (constant) function of θ
and a function of r. This shows that R and Θ are independent, Θ ∼ U(0, 2π)
and R has PDF $r e^{-r^2/2}$. Applying the transformation formula again, this time
for the function g(r) = r², we obtain that the PDF for R² is

$$f_{R^2}(u) = \frac{1}{2}\, e^{-\frac{1}{2} u}.$$

This shows that R² and Θ are as claimed. The converse is shown similarly.

Figure 7: Simulation of 5000 realizations of a two dimensional standard normal

random variable, using the Box-Muller method. The program is shown below.

The following program implements the Box-Muller method. It uses the

program exponential(lambda,n) to simulate an exponential random variable.

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

function y=stdnormal2d(n)

%Simulates n realizations of two independent

%standard normal random variables (X1,X2) using

%the Box-Muller method. Requires the function

%exponential(lambda,n). The output is a matrix

%of size n-by-2.

theta=2*pi*rand(1,n);

r=sqrt(exponential(0.5,n));

x1=r.*cos(theta);

x2=r.*sin(theta);

y=[x1;x2]';

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%
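For readers who do not have the helper exponential(lambda,n) at hand, the following self-contained variant is a sketch of the same idea. It assumes that R² is obtained by inverting the Exp(1/2) distribution function, that is, R² = −2 log(1 − U) with U uniform on [0, 1]; this is an assumption about the helper, not a description of it.

```matlab
% Box-Muller sketch without external helpers (R^2 obtained by inversion).
n = 100000;
theta = 2*pi*rand(1,n);              % Theta ~ U(0,2*pi)
r = sqrt(-2*log(1-rand(1,n)));       % R^2 = -2*log(1-U) is Exp(1/2)
x1 = r.*cos(theta);
x2 = r.*sin(theta);
c = corrcoef(x1,x2);                 % 2-by-2 sample correlation matrix
stats = [mean(x1) mean(x2) var(x1) var(x2) c(1,2)];
```

The entries of stats should be close to 0, 0, 1, 1 and 0, respectively.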

5.13

The $\chi^2_n$ distribution

If Z1, . . . , Zn are independent standard normal random variables, then

$$X = \sum_{i=1}^{n} Z_i^2$$

has a $\chi^2_n$ distribution.
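A chi-square random variable with n degrees of freedom is the sum of the squares of n independent standard normal variables, so it can be simulated directly with randn. The sketch below uses n = 5 and m = 100000 realizations (arbitrary choices) and compares the sample mean and variance with the known values n and 2n.

```matlab
% Simulating a chi-square variable with n degrees of freedom (illustrative n, m).
n = 5; m = 100000;
X = sum(randn(n,m).^2,1);    % each column gives one realization
mX = mean(X);                % compare with n
vX = var(X);                 % compare with 2*n
```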

5.14

The gamma distribution

The gamma function, Γ(x), is defined by the integral

$$\Gamma(x) = \int_0^{\infty} y^{x-1} e^{-y}\, dy.$$

It is not difficult to show from the definition that Γ(1) = 1 and Γ(x + 1) = xΓ(x).
If x = n is a positive integer, it follows that Γ(n + 1) = n!. Also worth noting,
$\Gamma(1/2) = \sqrt{\pi}$.

A random variable X has a gamma distribution with parameters α, β > 0,
written X ∼ Γ(α, β), if it has PDF

$$f(x) = \begin{cases} \frac{\beta^{\alpha}}{\Gamma(\alpha)}\, x^{\alpha-1} e^{-\beta x} & \text{if } x > 0 \\ 0 & \text{if } x \le 0. \end{cases}$$

Note that Γ(1, λ) = Exp(λ), so the gamma distribution is a generalization

of the exponential distribution.

It is also not difficult to show that if X ∼ Γ(α, β), then

$$E[X] = \frac{\alpha}{\beta}, \qquad \mathrm{Var}(X) = \frac{\alpha}{\beta^2}.$$

We show how to compute E[X] and leave the variance as an exercise.

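$$\begin{aligned}
E[X] &= \int_0^{\infty} x f(x)\, dx \\
&= \int_0^{\infty} x\, \frac{\beta^{\alpha}}{\Gamma(\alpha)}\, x^{\alpha-1} e^{-\beta x}\, dx \\
&= \frac{\alpha}{\beta} \int_0^{\infty} \frac{\beta^{\alpha+1}}{\alpha \Gamma(\alpha)}\, x^{\alpha} e^{-\beta x}\, dx \\
&= \frac{\alpha}{\beta} \int_0^{\infty} \frac{\beta^{\alpha+1}}{\Gamma(\alpha+1)}\, x^{\alpha} e^{-\beta x}\, dx \\
&= \frac{\alpha}{\beta}.
\end{aligned}$$

The last integral equals 1 because its integrand is the PDF of a Γ(α + 1, β) random variable.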
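The value of E[X] can be confirmed by numerical integration. This sketch assumes α = 4 and β = 2 (arbitrary), truncates the integral at x = 40 where the integrand is negligible, and evaluates Γ(α) as exp(gammaln(α)); the variable names are hypothetical.

```matlab
% Numerical check that the Gamma(al,be) PDF integrates to 1 with mean al/be.
al = 4; be = 2;                          % illustrative parameters alpha, beta
x = linspace(0,40,400001);               % integration grid, step 1e-4
f = be^al/exp(gammaln(al)) * x.^(al-1).*exp(-be*x);   % the PDF on the grid
total = trapz(x,f);                      % should be close to 1
EX = trapz(x,x.*f);                      % should be close to al/be = 2
```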

Figure 8 shows the graph of the PDF function for Γ(4, 1).

We note the following property of gamma random variables. The proof is

left as an exercise.

Figure 8: Stem plot of the relative frequencies of 5000 independent realizations of

a random variable with Γ(4, 1) distribution. Superposed to it are the frequencies

given by the density $f(x) = x^3 e^{-x}/6$.

Proposition 5.10 If X1 ∼ Γ(α1 , β) and X2 ∼ Γ(α2 , β) are two independent

random variables, and Y = X1 + X2 , then Y ∼ Γ(α1 + α2 , β).

This proposition implies that the sum of n independent exponentially distributed random variables with parameter λ is Γ(n, λ). Since the inter-event

times of a Poisson process with rate λ are exponential with parameter λ, it

follows that the time of the nth event of a Poisson process is Γ(n, λ).

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

function y=gamma(r,n)

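%Simulates n independent realizations of a Gamma(r,1)
%random variable by rejection from an exponential envelope
%with mean r. The acceptance bound used below requires r>=1.
%Note: this name shadows Matlab's built-in gamma function.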

for j=1:n

t=2;

u=3;

while (t<u)

e=-r*log(rand);

t=((e/r)^(r-1))*exp((1-r)*(e/r-1));

u=rand;

end

y(j)=e;

end

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

5.15

The beta distribution

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

function y=beta(a,b,n)

%Simulates n independent realizations of a Beta(a,b)

%random variable.

x1=gamma(a,n);

x2=gamma(b,n);

y=x1./(x1+x2);

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

6

Exercises and Computer Experiments

Exercise 6.1 Show that the variance of a random variable X satisfies

$$\mathrm{Var}(X) = E[X^2] - E[X]^2.$$

Exercise 6.2 (Chebyshev’s inequality) Use Chebyshev’s inequality to show

that if X is a random variable with finite mean m and finite variance σ², then

with probability at least 0.96 X is in (m − 5σ, m + 5σ).

Exercise 6.3 (Geometric distribution) We are drawing with replacement

from a population of size N and want to know how long we need to wait to

obtain exactly r distinct elements. If the time of wait is T , find the expected

value and variance of T . Hint: If we have just obtained n distinct elements,

n < r, the waiting time for the next distinct element is a random variable, Tn ,

with the geometric distribution. It satisfies

$$P(T_n = k) = \left( \frac{n}{N} \right)^{k-1} \frac{N - n}{N}, \quad k = 1, 2, 3, \dots$$

The total waiting time, T , is then

T = 1 + T1 + T2 + · · · + Tr−1 .

Now use the fact that E[T ] is the sum of the expectations E[Ti ].

Exercise 6.4 Show that the cumulative distribution function of a Geom(p)

random variable is $F_X(k) = P(X \le k) = 1 - (1-p)^k$.

Exercise 6.5 At each lecture a professor will call one student at random from a

class of 30 students to solve a problem on the chalkboard. Thus the probability

that you will be selected on any particular day is 1/30. On average, how many

lectures will you have to wait to be called?

Exercise 6.6 If X ∼ U(a, b), show that E[X] = (a + b)/2 and Var(X) =
(b − a)²/12.

Exercise 6.7 Show that if X ∼ Exp(λ), then E[X] = 1/λ, and Var(X) =
1/λ².

6.1

Convolution of PDFs

Exercise 6.8 Let f (x) and g(x) be two functions of a real variable x ∈ R.

Suppose that f (x) is zero for x in the complement of the interval [a, b], and

g(x) is zero in the complement of [c, d]. Show that (f ∗ g)(x) is zero in the

complement of [a + c, b + d]. Hint: show that the convolution of the indicator

functions of the first two intervals is zero in the complement of the third.

Exercise 6.9 Suppose that f (x) and g(x) are two functions of a real variable

x ∈ R which are zero outside of the intervals [a, b] and [c, d], respectively. We

wish to obtain an approximation formula for the convolution h = f ∗ g of f and

g by discretizing the convolution integral. Assume that the lengths of the two

intervals are multiples of a common small positive step size e. This means that

there are positive integers N and M such that

$$\frac{b - a}{N} = e = \frac{d - c}{M}.$$

Show that the approximation of (f ∗ g)(x) over the interval [a + c, b + d] by
Riemann sum discretization of the convolution integral is

$$h(x_j) = \sum_{i=\max\{1,\, j-N\}}^{\min\{j,\, M+1\}} f(a + (j - i)e)\, g(c + (i - 1)e)\, e,$$

for j = 1, . . . , N + M + 1.

The following script implements the approximation for the convolution integral to obtain the nth convolution power of a function f .

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

function g=convolution(f,a,b,n)
%Input  - f vector discretization of a function
%       - a and b are the left and right endpoints
%         of an interval outside of which f is zero
%       - n degree of convolution
%Output - g vector approximating the n degree
%         convolution of f with itself
%         over interval [na, nb]
N=length(f)-1;   %number of subintervals of [a,b]
e=(b-a)/N;       %step size
g=f;
for k=2:n
    %g holds the degree k-1 convolution, a vector of length (k-1)*N+1
    h=zeros(1,k*N+1);
    for j=1:k*N+1
        for i=max([j-N,1]):min([j,(k-1)*N+1])
            h(j)=h(j)+f(j-i+1)*g(i)*e;
        end
    end
    g=h;
end

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

Exercise 6.10 Let f be the function $f(x) = cx^2$ over the interval [−1, 1], where c is a normalization constant. We discretize it and write in Matlab:

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

x=[-1:0.01:1]; f=x.^2; f=f/(sum(f)*0.01); %normalize so the Riemann sum of f is 1

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

To find the convolution power $f^{*n} = f * \cdots * f$ of degree n, we invoke the function convolution defined above. This is done with the command

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

g=convolution(f,-1,1,n);

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

When plotting g, keep in mind that it may be non-zero over the bigger interval

[na, nb]. So write now x=[-n:0.01:n]; plot(x,g). Draw the graphs of f and

the convolution powers of degree n = 2, 5 and 20.

6.2

Central limit theorem

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

rand('seed',121)     %legacy seeding syntax; newer releases use rng(121)
p=ones(1,6)/6;       %Probabilities of the outcomes of tossing a die
N=1000;              %Number of tosses in each trial
M=500;               %Number of trials
y=[];
for i=1:M
    y=[y sum(samplefromp(p,N))];
end
[n,xout]=hist(y,20); %n is the count in each bin, and xout
                     %is a vector giving the bin locations
hold off
stem(xout,n/M)       %stem plot of the relative frequencies
%Now, we superpose the plot of the normal density
m=N*3.5;             %mean of the sum of N tosses
s=sqrt(N*35/12);     %standard deviation of the sum of N tosses
x=m-3*s:0.1:m+3*s;
dx=xout(2)-xout(1);  %bin width
f=(1/(s*sqrt(2*pi)))*exp(-0.5*((x-m)/s).^2)*dx;
hold on
plot(x,f)
grid

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%
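
The script above depends on the function samplefromp. As a self-contained cross-check, the following sketch draws the die tosses with Matlab's built-in randi instead (a substitution made here, not the method of the script) and compares the sample mean and standard deviation of the sums with the values m and s used for the normal curve.

```matlab
% Self-contained check of the normal approximation for sums of die tosses.
N = 1000;                       % tosses per trial
M = 500;                        % number of trials
y = sum(randi(6,N,M),1);        % 1-by-M vector: one sum of N tosses per trial
m = N*3.5;                      % theoretical mean of the sum
s = sqrt(N*35/12);              % theoretical standard deviation of the sum
fprintf('sample mean %.1f, theoretical %.1f\n', mean(y), m);
fprintf('sample std  %.1f, theoretical %.1f\n', std(y), s);
z = (y-m)/s;                    % standardized sums
fprintf('fraction within one std: %.3f (normal: about 0.683)\n', mean(abs(z)<=1));
```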

Exercise 6.11 (Transformation method) Write a program to simulate a random variable X with probability density function $f(x) = 3x^2$ over the interval [0, 1] using the transformation method. Simulate 1000 realizations of X and plot a stem plot with 20 bins. On the same coordinate system, superpose the graph of f(x) (appropriately normalized so as to give the correct frequencies of each bin).
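
One possible approach to Exercise 6.11 is sketched below. It assumes the transformation method means inverting the cumulative distribution function: here $F(x) = x^3$ on [0, 1], so $X = U^{1/3}$ with U uniform on (0, 1). The density is multiplied by the bin width so that it is comparable with the relative frequencies.

```matlab
% Transformation method for the density f(x) = 3x^2 on [0,1].
M = 1000;                    % number of realizations
X = rand(1,M).^(1/3);        % X = F^{-1}(U), where F(x) = x^3
[n,xout] = hist(X,20);       % counts and bin centers
hold off
stem(xout,n/M)               % relative frequencies
hold on
dx = xout(2)-xout(1);        % bin width
x = 0:0.01:1;
plot(x,3*x.^2*dx)            % density scaled by the bin width
hold off
```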

Exercise 6.12 (Uniform rejection method) Write a program to simulate a

random variable taking values in [−1, 1] with probability density

$$f(x) = \frac{3}{4}(1 - x^2).$$

Use the uniform rejection method. Simulate 1000 realizations of X and plot a stem plot with 20 bins. On the same coordinate system, superpose the graph of f(x) (appropriately normalized so as to give the correct frequencies of each bin).
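
A minimal sketch of one way to approach Exercise 6.12 follows. It assumes the uniform rejection method means proposing points uniformly in the rectangle [−1, 1] × [0, fmax], where fmax = 3/4 is the maximum of f, and keeping the horizontal coordinate of those points that fall under the graph of f.

```matlab
% Uniform rejection method for f(x) = (3/4)(1-x^2) on [-1,1].
M = 1000;                        % number of accepted realizations wanted
fmax = 3/4;                      % maximum of f, attained at x = 0
X = zeros(1,M);
count = 0;
while count < M
    x = 2*rand - 1;              % proposal, uniform on [-1,1]
    u = fmax*rand;               % uniform height in [0,fmax]
    if u <= 0.75*(1 - x^2)       % accept if the point lies under the graph of f
        count = count + 1;
        X(count) = x;
    end
end
[n,xout] = hist(X,20);           % counts and bin centers
hold off
stem(xout,n/M)                   % relative frequencies
hold on
dx = xout(2)-xout(1);            % bin width
t = -1:0.01:1;
plot(t,0.75*(1-t.^2)*dx)         % density scaled by the bin width
hold off
```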

6.3

Normal distributions

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

function y=normalpdf(x,mu,sigma)
%Input  - mu, sigma: parameters of normal distribution
%       - x a real number
%Output - value of the pdf at x
y=exp(-0.5*((x-mu)/sigma).^2)/(sigma*sqrt(2*pi));

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%
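
A quick sanity check for a normal density routine is to verify numerically that it integrates to 1 and has mean mu and variance sigma^2. The sketch below is self-contained: it uses an anonymous function npdf (a name chosen here) and arbitrary test values of mu and sigma, with the integrals approximated by trapz.

```matlab
% Numerical check of the normal density with parameters mu and sigma.
mu = 2; sigma = 3;                       % arbitrary test parameters
npdf = @(x) exp(-0.5*((x-mu)/sigma).^2)/(sigma*sqrt(2*pi));
x = mu-8*sigma:0.01:mu+8*sigma;          % grid covering essentially all the mass
total = trapz(x,npdf(x));                % should be close to 1
meanNum = trapz(x,x.*npdf(x));           % should be close to mu
varNum = trapz(x,(x-mu).^2.*npdf(x));    % should be close to sigma^2
fprintf('integral %.6f, mean %.6f, variance %.6f\n', total, meanNum, varNum);
```

If the exponent is not divided by sigma, the integral is no longer 1 whenever sigma differs from 1, so this check catches that kind of mistake.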

7

Appendix: summary of famous distributions

Binomial

$$P(X = k) = \binom{n}{k} p^k (1 - p)^{n-k}, \quad k = 0, 1, \dots, n.$$

Multinomial

$$P(n_1, n_2, \dots, n_k) = \frac{\left(\sum n_i\right)!}{n_1!\, n_2! \cdots n_k!} \prod_{i=1}^{k} p_i^{n_i}, \qquad \sum_{i=1}^{k} p_i = 1.$$

Geometric

$$P(X = k) = (1 - p)^{k-1} p, \quad k = 1, 2, \dots$$


Poisson

$$P(X = k) = \frac{\lambda^k e^{-\lambda}}{k!}, \quad k = 0, 1, 2, \dots$$

Uniform

$$f(x) = \frac{1}{b-a} I_{[a,b]}(x), \quad x \in \mathbb{R}.$$

Negative binomial

$$P(X = n + k) = \binom{n+k-1}{k} p^n (1 - p)^k, \quad k = 0, 1, 2, \dots$$

Exponential

$$f(x) = \lambda e^{-\lambda x}, \quad x \ge 0.$$

Gamma

$$f(x) = \frac{\lambda^n x^{n-1} e^{-\lambda x}}{\Gamma(n)}, \quad x \ge 0.$$

Chi-square with ν degrees of freedom

$$f(x) = \frac{x^{\nu/2-1} e^{-x/2}}{\Gamma(\nu/2)\, 2^{\nu/2}}, \quad x \ge 0.$$

tν

$$f(x) = \frac{\Gamma((\nu+1)/2)}{\sqrt{\nu\pi}\,\Gamma(\nu/2)} \left(1 + \frac{x^2}{\nu}\right)^{-(\nu+1)/2}, \quad x \in \mathbb{R}.$$

Cauchy

$$f(x) = \frac{1}{\pi}\, \frac{\beta}{\beta^2 + (x - \alpha)^2}, \quad x \in \mathbb{R}.$$

Weibull