z114 System Overview

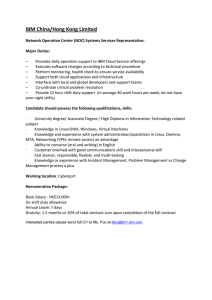
