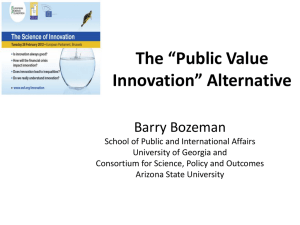

Public Value Mapping of Science Outcomes: Theory and Method

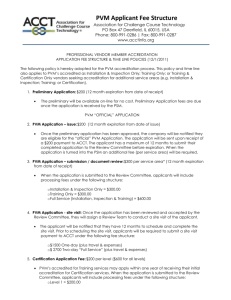
