Solutions

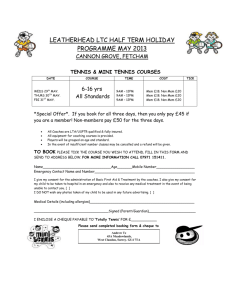
