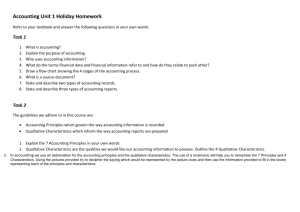

Qualitative Case Study Guidelines
