A Survey of Glove-Based Systems and Their Applications

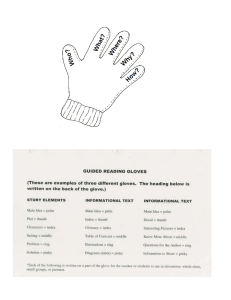
