21-Robust regression.wxb


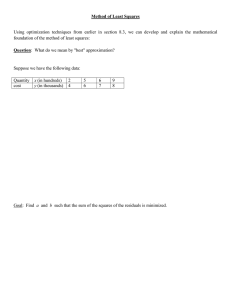

WEIGHTED LEAST SQUARES

Weighted least squares is used to give more emphasis to selected points in the analysis.

What are weighted least squares?

Recall that in OLS we minimize

$$Q = \sum_{i=1}^{n} \varepsilon_i^2 = \sum_{i=1}^{n} (Y_i - \beta_0 - \beta_1 X_i)^2 \quad \text{or} \quad Q = (Y - X\beta)'(Y - X\beta)$$

In weighted least squares, we minimize

$$Q = \sum_{i=1}^{t} \sum_{j=1}^{n_i} w_i e_{ij}^2 = \sum_{i=1}^{t} \sum_{j=1}^{n_i} w_i (Y_{ij} - b_0 - b_1 X_i)^2$$

where $w_i$ is the weight. If the weights are all 1, then all results are the same as OLS.

The normal equations become (summing over all observations)

$$b_0 \sum w_i + b_1 \sum w_i X_i = \sum w_i Y_i$$

$$b_0 \sum w_i X_i + b_1 \sum w_i X_i^2 = \sum w_i X_i Y_i$$

For the intermediate calculations we get

$$\sum_i \sum_j w_i X_i, \quad \sum_i \sum_j w_i Y_{ij}, \quad \sum_i \sum_j w_i X_i Y_{ij}, \quad \sum_i \sum_j w_i X_i^2, \quad \sum_i \sum_j w_i Y_{ij}^2, \quad \sum_i \sum_j w_i$$

Calculation of the corrected sum of squares is

$$\sum_i \sum_j y_{ij}^2 = \sum_i \sum_j w_i (Y_{ij} - \bar{Y}_{..})^2 = \sum_i \sum_j w_i Y_{ij}^2 - \frac{\left(\sum_i \sum_j w_i Y_{ij}\right)^2}{\sum_i \sum_j w_i}$$

The slope is

$$\hat{\beta}_1 = b_1 = \frac{\sum_i \sum_j x_i y_{ij}}{\sum_i \sum_j x_i^2} = \frac{\sum_i \sum_j w_i Y_{ij} X_i - \dfrac{\left(\sum_i \sum_j w_i Y_{ij}\right)\left(\sum_i \sum_j w_i X_i\right)}{\sum_i \sum_j w_i}}{\sum_i \sum_j w_i X_i^2 - \dfrac{\left(\sum_i \sum_j w_i X_i\right)^2}{\sum_i \sum_j w_i}}$$

The intercept is calculated from $\bar{X}$ and $\bar{Y}$ as usual, $b_0 = \bar{Y}_{..} - b_1 \bar{X}_{..}$, but the means are calculated as weighted means:

$$\bar{Y}_{..} = \frac{\sum_i \sum_j w_i Y_{ij}}{\sum_i \sum_j w_i} \quad \text{and} \quad \bar{X}_{..} = \frac{\sum_i \sum_j w_i X_i}{\sum_i \sum_j w_i}$$

The variance is

$$\sigma_i^2 = \sigma_{\varepsilon_i}^2 = \sigma_{Y_i}^2 = \frac{\sigma^2}{w_i}$$

In a multiple regression, we minimize

$$Q = \sum_i \sum_j w_i e_{ij}^2 = \sum_i \sum_j w_i \left(Y_{ij} - b_0 - b_1 X_{1i} - b_2 X_{2i} - \dots - b_{p-1} X_{p-1,i}\right)^2$$

The matrix containing the weights is

$$W_{n \times n} = \begin{bmatrix} w_1 & 0 & \cdots & 0 \\ 0 & w_2 & \cdots & 0 \\ \vdots & \vdots & \ddots & \vdots \\ 0 & 0 & \cdots & w_n \end{bmatrix}$$

The matrix equations for the regression solutions are

$(X'WX)B = X'WY$, the normal equations

$B = (X'WX)^{-1} X'WY$, the regression coefficients

$\sigma_b^2 = \sigma^2 (X'WX)^{-1}$, the variance-covariance matrix

where $\sigma^2$ is estimated by $MSE_w$, based on weighted deviations:

$$MSE_w = \frac{\sum w_i (Y_i - \hat{Y}_i)^2}{n - p} = \frac{\sum w_i e_i^2}{n - p}$$

Estimating weights

For ANOVA this may be relatively easy if enough observations are available in each group, because the variances can be estimated directly. In regression, however, we usually have a "smooth" function of changing residuals with changing $X_i$ values. To estimate this function, we note that $\sigma_i$ can be estimated by the absolute residuals $|e_i|$. We can then estimate the residuals with OLS and regress the residuals (squared or unsquared) on a suitable variable (usually one of the $X_i$ variables or $\hat{Y}_i$).

The procedure can be iterated. That is, if the new regression coefficients differ substantially from the old, the residuals can be estimated again from the weighted regression, the weights calculated again, and the regression fit again with the new weights.

Weighting may be used for various cases

1) One common use is to adjust for non-homogeneous variance. One common approach is to assume that the variance is non-homogeneous because it is a function of $X_i$.
Then we must determine what that function is. For simple linear regression, the function is commonly $\sigma_i^2 = \sigma^2 X_i$, $\sigma_i^2 = \sigma^2 \sqrt{X_i}$, or $\sigma_i^2 = \sigma^2 X_i^2$. To adjust for this, we would weight by the inverse of the function (for example, $w_i = 1/X_i$ when $\sigma_i^2 = \sigma^2 X_i$).

2) Another common case is where the function is not known, but the data can be subset into smaller groups. These may be separate samples, or they may be cells from an analysis of variance (not regression, but weighting also works for ANOVA). Then we determine a value of $\sigma_i^2$ for each cell $i$, and the weighting function is the reciprocal (inverse) of the variance for each subgroup, $1/\sigma_i^2$.

3) When the values analyzed are means, the variance will differ between "observations" if the sample sizes are not equal. We may still assume that the variance of the original observations is homogeneous, so the variance of each point is a simple function of $n_i$ (the variance of a mean is $\sigma^2/n$, or $\sigma^2 (1/n)$). We would weight by the inverse of the coefficient, or simply $n_i$.

If we could not assume that the variances were equal, then each mean would have variance $\sigma_i^2/n_i$, and the weight would be $n_i/\sigma_i^2$.

NOTE: the actual value of a weight is not important, only the proportional value between weights, so all weights could be multiplied or divided by some constant value. Some people recommend weighting by $n_i/\sigma_i^2$ without concern for the homogeneity of variance. Since all $\sigma_i^2$ are equal to $\sigma^2$ when the variance is homogeneous, this is the same as taking all the $n_i$ weights and multiplying by the constant $1/\sigma^2$.

8. ROBUST REGRESSION

There are many regressions developed that call themselves "robust". Some are based on the median deviation, others on trimmed analyses. The one we will discuss uses weighted least squares, and is called ITERATIVELY REWEIGHTED LEAST SQUARES.
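
Because the method rests on a weighted least squares fit, a minimal sketch of that building block may be useful here. It solves the normal equations $(X'WX)B = X'WY$ and computes $MSE_w$ and the variance-covariance matrix as defined earlier. The function name `weighted_least_squares`, the data values, and the choice of weights $1/X_i$ (variance assumed proportional to $X_i$) are illustrative assumptions, not part of the original notes.

```python
import numpy as np

def weighted_least_squares(X, y, w):
    """Solve (X'WX) B = X'WY; return B, MSE_w and the covariance matrix of B.

    X is an n x p design matrix (first column of ones for the intercept),
    y has length n, and w holds one positive weight per observation.
    """
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    w = np.asarray(w, dtype=float)
    n, p = X.shape
    XtW = X.T * w                        # equals X'W for W = diag(w)
    XtWX = XtW @ X
    b = np.linalg.solve(XtWX, XtW @ y)   # regression coefficients
    resid = y - X @ b
    mse_w = np.sum(w * resid**2) / (n - p)   # weighted mean square error
    cov_b = mse_w * np.linalg.inv(XtWX)      # estimated variance-covariance matrix
    return b, mse_w, cov_b

# Made-up illustration data; weights 1/X assume variance proportional to X.
x = np.array([1.0, 2.0, 3.0, 4.0, 5.0, 6.0])
y = np.array([2.1, 3.9, 6.2, 7.8, 10.5, 11.6])
X = np.column_stack([np.ones_like(x), x])

b_wls, mse_w, cov_b = weighted_least_squares(X, y, 1.0 / x)
b_ols, _, _ = weighted_least_squares(X, y, np.ones_like(x))  # all weights 1 gives OLS
print("WLS coefficients:", b_wls)
print("OLS coefficients:", b_ols)
```

Multiplying every weight by the same constant leaves the coefficients unchanged, which matches the note that only the proportional values of the weights matter.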

a) Ordinary least squares is considered "robust", but it is sensitive to points which deviate greatly from the line. This occurs

(1) with data which is not normal or symmetrical, such as data with a large tail

(2) when there are outliers (contamination)

b) ROBUST REGRESSION is basically weighted least squares where the weights are an inverse function of the residuals.

(1) Points within a range "close" to the regression line get a weight of 1. If all weights are 1, then the result is ordinary least squares regression.

(2) Points outside the range get a weight less than 1.

(3) Ordinary least squares minimizes

$$\sum (Y_{ij} - X_i\beta)^2 = \sum (Y_{ij} - \hat{Y}_i)^2 = \sum (\text{residual})^2$$

Robust regression minimizes

$$\sum w_i\left[\frac{Y_{ij} - X_i\beta}{c\sigma}\right]$$

where $w_i[\cdot]$ is some function of the standardized residual, chosen to minimize the influence of large residuals. The $w_i$ chosen is a weight, and if the proper function is chosen, the calculations may be done as a weighted least squares, minimizing

$$\sum w_{ij} (Y_{ij} - X_i\beta)^2$$

(4) The function chosen by Huber is such that if the residual is small ($\le 1.345\hat{\sigma}$) the observation is not down-weighted (its weight is 1).

First we define a robust estimate of scale called the median absolute deviation (MAD), such that

$$MAD = \frac{\text{median}\{\,|e_i - \text{median}(e_i)|\,\}}{0.6745}$$

where the constant 0.6745 gives an unbiased estimate of $\sigma$ for independent observations from a normal distribution. MAD is an alternative estimate of $\sqrt{MSE}$.

Then we define a scaled residual $d_i$, where

$$d_i = \frac{e_i}{MAD}$$

The weights are then

$$w_{ij} = 1 \quad \text{if } |e_{ij}| \le 1.345\hat{\sigma}$$

$$w_{ij} = \frac{1.345}{|d_i|} \quad \text{for } |e_{ij}| > 1.345\hat{\sigma}$$

With MAD used as $\hat{\sigma}$, the condition $|e_{ij}| \le 1.345\hat{\sigma}$ is the same as $|d_i| \le 1.345$. The value 1.345 is called the tuning constant, and is chosen to make the technique robust and 95% efficient. Efficiency refers to the ratio of variances from this model relative to normal regression models.

(5) The solution is iterative

(a) do the regression, obtain the $e_{ij}$ values

(b) compute the weights

(c) run the weighted least squares analysis using the weights

(d) iteratively recalculate the weights and rerun the weighted least squares analysis UNTIL

(1) there is no change in the regression coefficients to some predetermined level of precision

(2) note that the solution may not be the one with the minimum sum of squared deviations

(6) PROBLEMS

(a) This is not ordinary least squares, but the hypotheses are likely to be tested as if it were. The distributional properties of the estimator are not well documented.

(b) Schreuder, Boos and Hafley (Forest Resources Inventories Workshop Proc., Colorado State U., 1979) suggest running both regressions. If the results are "similar", use ordinary least squares. If they are "different", find out why; if the difference is because of an outlier, then robust regression is "probably" better. They further suggest using robust regression as "a tool for analysis, not a cure all for bad data".

The technique is useful for detecting outliers.
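
The iterative procedure in steps (a) through (d) can be sketched as follows, using the Huber weights and the MAD scale estimate described above. This is a minimal illustration under stated assumptions rather than a definitive implementation: the function names `huber_weights` and `irls_huber`, the convergence tolerance, the iteration cap, the handling of a zero MAD, and the example data (a line $Y = 1 + 2X$ with small fixed perturbations and one contaminated point) are all hypothetical choices made for this sketch.

```python
import numpy as np

def huber_weights(resid, c=1.345):
    """Return weight 1 where |d| <= c and c/|d| otherwise, with d = e / MAD."""
    resid = np.asarray(resid, dtype=float)
    mad = np.median(np.abs(resid - np.median(resid))) / 0.6745
    if mad == 0.0:
        # Assumption for this sketch: with a zero scale estimate, leave all weights at 1.
        return np.ones_like(resid)
    d = np.abs(resid) / mad
    return np.where(d <= c, 1.0, c / np.maximum(d, c))

def irls_huber(X, y, tol=1e-8, max_iter=100):
    """Iteratively reweighted least squares with Huber weights."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    b = np.linalg.lstsq(X, y, rcond=None)[0]      # step (a): ordinary least squares
    w = np.ones(len(y))
    for _ in range(max_iter):
        w = huber_weights(y - X @ b)              # step (b): weights from residuals
        XtW = X.T * w                             # X'W with W = diag(w)
        b_new = np.linalg.solve(XtW @ X, XtW @ y) # step (c): weighted least squares
        converged = np.max(np.abs(b_new - b)) < tol
        b = b_new
        if converged:                             # step (d): stop when coefficients settle
            break
    return b, w

# Made-up data: Y = 1 + 2X plus small fixed perturbations, last point contaminated.
x = np.arange(10, dtype=float)
noise = np.array([0.1, -0.1, 0.05, -0.05, 0.1, -0.1, 0.05, -0.05, 0.1, 0.0])
y = 1.0 + 2.0 * x + noise
y[-1] += 30.0                                     # the outlier
X = np.column_stack([np.ones_like(x), x])

b_ols = np.linalg.lstsq(X, y, rcond=None)[0]
b_robust, w_final = irls_huber(X, y)
print("OLS coefficients:   ", b_ols)
print("Robust coefficients:", b_robust)
print("Final weights:      ", w_final)
```

Inspecting the final weights is one way to use the technique for detecting outliers: observations with weights well below 1 are the ones the fit has down-weighted.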