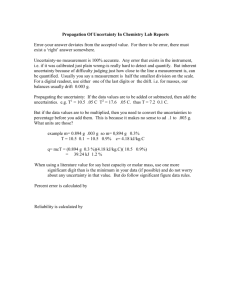

Report - Europa.eu

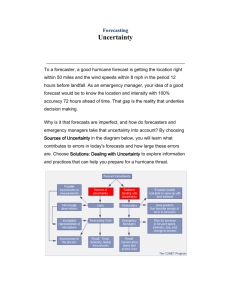
