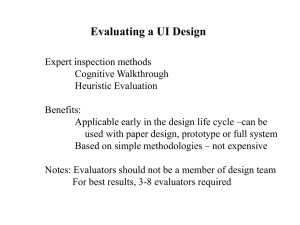

Heuristic evaluation: Comparing ways of finding and reporting
