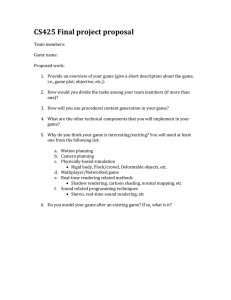

Image-Based Rendering A Brief Overview David Luebke University of Virginia

Image-Based Rendering

A Brief Overview

David Luebke

University of Virginia

Context

Bad: I don’t have assignment #1 graded

– Worse: won’t be graded this week at all

Next major topic: programming the vertex and fragment pipelines

– Big topic, several lectures

– Assignment #3

But first, the next couple of lectures will be “culture” topics

– Image-based rendering

– Parallel graphics

Image-Based Rendering

You’ve been learning how to turn geometric models into images

– Specifically, images of compelling 3D objects and worlds

Image-based rendering: a relatively new field of computer graphics devoted to making images from images

Ex: QuickTime VR

Images with depth

QuickTime VR is really just a 2D panoramic photograph

– Spin around, zoom in and out

But what if we could assign depth to parts of the image?

Ex: Tour Into the Picture

Tour Into the Picture

Software for:

– Selecting parts of an image

– Assigning a vanishing point for depth of background objects

– Assigning depth to foreground objects

– “Painting in” behind objects
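Why does a vanishing point give depth? A simplified illustration follows. It assumes a level pinhole camera and a flat floor only, not the full box of floor, walls, ceiling and back plane that Tour Into the Picture fits with its "spidery mesh". Under those assumptions, a floor point's depth follows from how far below the horizon it appears. A foreground object could then take the depth of the row where it touches the floor. The function name and parameters are hypothetical.

```python
def ground_depth(row: float, horizon_row: float,
                 focal_px: float, camera_height: float) -> float:
    """Depth Z of a floor point seen at image row `row`.

    Assumes a level pinhole camera at height `camera_height` above a flat
    floor, with image rows increasing downward, so the floor's vanishing
    line is the horizontal line through the vanishing point (`horizon_row`).
    Similar triangles give: row - horizon_row = focal_px * camera_height / Z.
    """
    offset = row - horizon_row
    if offset <= 0:
        raise ValueError("a floor point must appear below the horizon")
    return focal_px * camera_height / offset


# Floor pixels farther below the horizon are closer to the camera.
near = ground_depth(row=440, horizon_row=240, focal_px=500, camera_height=1.5)  # 3.75
far = ground_depth(row=340, horizon_row=240, focal_px=500, camera_height=1.5)   # 7.5
```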

Depth per pixel

What if we could assign an exact depth to every pixel?

Ex: MIT Image-Based Editing system
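Once every pixel has a depth, 3D warping can produce a new view. Each pixel is back-projected to a 3D point, moved into the new camera's frame, and projected again. The sketch below assumes the following:

- Both views share one pinhole camera with intrinsics fx, fy, cx, cy.
- Depth is measured along the optical axis.
- (R, t) is a rigid transform from the source camera's frame to the target's.

These names are illustrative, not taken from the MIT system.

```python
from typing import Optional, Sequence, Tuple


def warp_pixel(
    u: float, v: float, depth: float,
    fx: float, fy: float, cx: float, cy: float,
    R: Sequence[Sequence[float]], t: Sequence[float],
) -> Optional[Tuple[float, float, float]]:
    """Reproject source pixel (u, v) with known depth into a target view.

    Returns (u', v', depth') in the target image, or None if the point
    lands behind the target camera.
    """
    # Back-project: pixel + depth -> 3D point in the source camera frame.
    p = ((u - cx) / fx * depth, (v - cy) / fy * depth, depth)
    # Rigid transform into the target camera frame: q = R p + t.
    q = [sum(R[i][j] * p[j] for j in range(3)) + t[i] for i in range(3)]
    if q[2] <= 0:
        return None
    # Perspective projection with the same intrinsics.
    return (fx * q[0] / q[2] + cx, fy * q[1] / q[2] + cy, q[2])


I3 = ((1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0))
# Target camera shifted one unit along +x: the center pixel at depth 10 moves left.
print(warp_pixel(320, 240, 10.0, 500, 500, 320, 240, I3, (-1.0, 0.0, 0.0)))
# -> (270.0, 240.0, 10.0)
```

A full warper applies this to every pixel and resolves collisions with a z-buffer, keeping the smallest returned depth. Surfaces that become visible only in the new view have no source samples, so holes remain.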

Depth per pixel continued

What if we had a “camera” that automatically acquired depth at every pixel?

Ex: DeltaSphere

Ex: Monticello project
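A scanner like the DeltaSphere returns a range image: a distance, rather than a color, for each direction it samples. Turning that into geometry is a spherical-to-Cartesian conversion. The sketch assumes a regular azimuth/elevation grid with angles in radians. That layout and the function name are illustrative assumptions, not the DeltaSphere's actual data format.

```python
import math
from typing import List, Optional, Tuple


def range_image_to_points(ranges: List[List[Optional[float]]],
                          az_start: float, az_step: float,
                          el_start: float, el_step: float
                          ) -> List[Tuple[float, float, float]]:
    """Convert a panoramic range image to 3D points in the scanner's frame.

    Assumed layout: ranges[row][col] is the distance measured at elevation
    el_start + row * el_step and azimuth az_start + col * az_step (radians);
    None or a non-positive value marks a missing return. z points up.
    """
    points = []
    for row, line in enumerate(ranges):
        el = el_start + row * el_step
        for col, r in enumerate(line):
            if r is None or r <= 0:
                continue  # no laser return for this sample
            az = az_start + col * az_step
            # Spherical -> Cartesian, elevation measured from the horizontal.
            points.append((r * math.cos(el) * math.cos(az),
                           r * math.cos(el) * math.sin(az),
                           r * math.sin(el)))
    return points
```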

General image-based rendering

Can we do anything if we don’t have depth at every pixel?

– Intuitively, if we had enough images, we should be able to reconstruct new images from novel viewpoints

– Even without depth information

– This is the problem of pure image-based rendering
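One way to make "enough images" precise is to count the dimensions of the plenoptic function. That is Adelson and Bergen's term; these slides do not define it. The function gives the radiance seen from every viewpoint $(V_x, V_y, V_z)$, in every direction $(\theta, \phi)$, at every wavelength $\lambda$ and time $\tau$. Each assumption below removes dimensions:

$$
\begin{aligned}
&P(\theta, \phi, \lambda, \tau, V_x, V_y, V_z) && \text{7-D: full plenoptic function} \\
&P(\theta, \phi, V_x, V_y, V_z) && \text{5-D: static scene, wavelength fixed per color channel} \\
&\text{4 parameters per ray} && \text{4-D: in free space radiance is constant along a ray}
\end{aligned}
$$

The last step holds only outside the scene's convex hull, where nothing blocks a ray. This is the usual argument for a 4-D light field.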

A 4-D Light Field
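A light field stores radiance per ray. Its "4-D" comes from naming each ray by where it crosses two parallel planes, the "light slab" parameterization. The minimal sketch below assumes the camera plane (u, v) at z = 0 and the focal plane (s, t) at z = 1. The function name and plane placement are illustrative choices.

```python
from typing import Sequence, Tuple


def ray_to_uvst(origin: Sequence[float], direction: Sequence[float]
                ) -> Tuple[float, float, float, float]:
    """Two-plane coordinates of the ray origin + a * direction.

    Assumes the camera (u, v) plane is z = 0 and the focal (s, t) plane is
    z = 1; a ray parallel to the planes has no two-plane coordinates.
    """
    ox, oy, oz = origin
    dx, dy, dz = direction
    if dz == 0:
        raise ValueError("ray is parallel to the parameterization planes")
    # Solve oz + a * dz = plane_z for a, then evaluate x and y there.
    a_uv = (0.0 - oz) / dz
    a_st = (1.0 - oz) / dz
    return (ox + a_uv * dx, oy + a_uv * dy, ox + a_st * dx, oy + a_st * dy)
```

Every pixel of a novel view is one such ray, so rendering reduces to computing (u, v, s, t) per pixel and looking the ray up.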

Creating Light Fields
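A common capture setup places cameras on a regular grid on the camera plane. Every image covers the same window of the focal plane, which calls for a sheared (off-axis) perspective rather than rotating each camera toward the object. The sketch assumes:

- the camera plane is at z = 0 and the focal plane at z = plane_dist;
- cameras look along +z.

`Frustum`, `camera_grid` and `sheared_frustum` are hypothetical helpers. Their left/right/bottom/top values follow the near-plane convention of OpenGL's glFrustum.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Frustum:
    """Off-axis frustum bounds measured on the near plane (glFrustum-style)."""
    left: float
    right: float
    bottom: float
    top: float
    near: float


def camera_grid(n_u: int, n_v: int, u_range: Tuple[float, float],
                v_range: Tuple[float, float]) -> List[Tuple[float, float]]:
    """Evenly spaced camera positions (u, v) on the camera plane z = 0."""
    def samples(n: int, lo: float, hi: float) -> List[float]:
        if n == 1:
            return [lo]
        return [lo + i * (hi - lo) / (n - 1) for i in range(n)]
    return [(u, v) for u in samples(n_u, *u_range) for v in samples(n_v, *v_range)]


def sheared_frustum(u: float, v: float,
                    s_range: Tuple[float, float], t_range: Tuple[float, float],
                    plane_dist: float, near: float) -> Frustum:
    """Frustum for a camera at (u, v, 0) whose image exactly covers the
    focal-plane window s_range x t_range at distance plane_dist.

    By similar triangles, a lateral offset x on the focal plane appears at
    x * near / plane_dist on the near plane.
    """
    scale = near / plane_dist
    return Frustum(left=(s_range[0] - u) * scale, right=(s_range[1] - u) * scale,
                   bottom=(t_range[0] - v) * scale, top=(t_range[1] - v) * scale,
                   near=near)
```

Shearing instead of rotating keeps every image's pixel grid aligned with the same (s, t) grid. A pixel index then maps directly to a focal-plane coordinate.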

Light Field as an Array of Images
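Stored this way, a ray lookup is just array indexing: the camera-plane coordinates pick an image, and the focal-plane coordinates pick a pixel in it. The minimal sketch below assumes both planes' coordinates are normalized to [0, 1] and sampled on regular grids. Nested lists stand in for a real 4-D array, and the helper names are hypothetical.

```python
from typing import List, Tuple

Color = Tuple[float, float, float]
# lf[iu][iv] is the image from camera-plane sample (iu, iv);
# lf[iu][iv][i_s][i_t] is the pixel for focal-plane sample (i_s, i_t).
LightField = List[List[List[List[Color]]]]


def nearest_index(x: float, n: int) -> int:
    """Nearest of n evenly spaced samples on [0, 1], clamped to the grid."""
    return min(max(round(x * (n - 1)), 0), n - 1)


def lookup_nearest(lf: LightField, u: float, v: float, s: float, t: float) -> Color:
    """Radiance along ray (u, v, s, t), all coordinates normalized to [0, 1]."""
    image = lf[nearest_index(u, len(lf))][nearest_index(v, len(lf[0]))]
    return image[nearest_index(s, len(image))][nearest_index(t, len(image[0]))]
```

Nearest-neighbor lookup is the cheapest reconstruction. It shows visible jumps as the viewpoint moves between camera samples; interpolating between neighboring samples smooths them out.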

Fast Rendering of Light Fields

Use Gouraud Shading Hardware!
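Gouraud shading hardware linearly interpolates per-vertex values across a polygon, and light-field reconstruction is mostly that kind of interpolation. A common choice is quadrilinear interpolation: it blends the 16 stored samples around a ray, linearly in each of u, v, s and t. The Lumigraph work, for instance, drew each camera's image as texture-mapped triangles with Gouraud-shaded alpha to get the camera-plane weights, and let texture filtering handle the image plane. The software sketch below computes the same kind of blend directly. It assumes the nested-list layout and [0, 1] coordinates noted in the comments; the helper names are hypothetical.

```python
from itertools import product
from typing import List, Tuple

Color = Tuple[float, float, float]
LightField = List[List[List[List[Color]]]]  # lf[iu][iv][i_s][i_t]


def _bracket(x: float, n: int) -> Tuple[int, int, float]:
    """Neighboring sample indices (i0, i1) around x in [0, 1] and the
    linear weight w of i1, for n evenly spaced samples."""
    if n == 1:
        return 0, 0, 0.0
    f = min(max(x, 0.0), 1.0) * (n - 1)
    i0 = min(int(f), n - 2)
    return i0, i0 + 1, f - i0


def lookup_quadrilinear(lf: LightField, u: float, v: float,
                        s: float, t: float) -> Color:
    """Blend the 16 samples surrounding ray (u, v, s, t), coordinates in [0, 1]."""
    dims = (len(lf), len(lf[0]), len(lf[0][0]), len(lf[0][0][0]))
    brackets = [_bracket(x, n) for x, n in zip((u, v, s, t), dims)]
    result = [0.0, 0.0, 0.0]
    # Each corner of the enclosing 4-D cell gets the product of 1-D weights.
    for corner in product((0, 1), repeat=4):
        weight = 1.0
        idx = []
        for (i0, i1, w), bit in zip(brackets, corner):
            idx.append(i1 if bit else i0)
            weight *= w if bit else 1.0 - w
        if weight == 0.0:
            continue
        color = lf[idx[0]][idx[1]][idx[2]][idx[3]]
        for c in range(3):
            result[c] += weight * color[c]
    return (result[0], result[1], result[2])
```

The weights come from linear interpolation in each coordinate. As a result, stored values that vary linearly are reproduced exactly, while real scenes are only approximated.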

Light Field Rendering

Demo of Stanford viewer and light fields…

View-Dependent Rendering

Spectrum of rendering techniques from pure IBR to pure geometry

Points in this space:

– Pure IBR: light field/lumigraph

– Depth-per-pixel approaches

Another point: view-based rendering

– Slides at: http://graphics.stanford.edu/~kapu/vbr/webslides/index.html
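Techniques in the middle of this spectrum typically render from the few captured views closest to the novel one and blend them. The sketch shows one simple weighting heuristic: inverse angle between the novel and captured viewing directions, over the k closest views. The names, k, and eps are assumptions, and published systems differ in the exact weights they use.

```python
import math
from typing import Dict, Sequence


def _angle(a: Sequence[float], b: Sequence[float]) -> float:
    """Angle in radians between two (not necessarily unit) 3D directions."""
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(x * x for x in b))
    cos = sum(x * y for x, y in zip(a, b)) / (na * nb)
    return math.acos(max(-1.0, min(1.0, cos)))  # clamp rounding error


def blend_weights(novel_dir: Sequence[float],
                  reference_dirs: Sequence[Sequence[float]],
                  k: int = 3, eps: float = 1e-6) -> Dict[int, float]:
    """Normalized blend weights for the k reference views closest in angle.

    Weight is inversely proportional to the angle (eps avoids division by
    zero when a reference view matches the novel view exactly).
    """
    angles = sorted((_angle(novel_dir, d), i) for i, d in enumerate(reference_dirs))
    raw = [(i, 1.0 / (a + eps)) for a, i in angles[:k]]
    total = sum(w for _, w in raw)
    return {i: w / total for i, w in raw}


refs = [(1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (-1.0, 0.0, 0.0)]
weights = blend_weights((1.0, 1.0, 0.0), refs, k=2)  # views 0 and 1, equal weight
```

Each output pixel then blends the colors that the selected reference views see at the corresponding surface point, using these weights.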