15-505 Lecture 5: Information Retrieval Internet Search Technologies Kamal Nigam

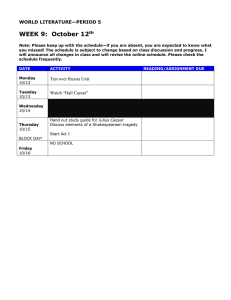

15-505

Internet Search Technologies

Lecture 5: Information Retrieval

Kamal Nigam

Slides adapted from Chris Manning, Prabhakar Raghavan, and Hinrich Schütze

at http://www-csli.stanford.edu/~hinrich/information-retrieval-book.html

1

Information Retrieval in 1650

Which plays of Shakespeare contain the words

Brutus AND Caesar but NOT Calpurnia?

One could grep all of Shakespeare’s plays for

Brutus and Caesar, then strip out lines containing

Calpurnia?

Slow (for large corpora)

NOT Calpurnia is non-trivial

Other operations (e.g., find the word Romans near

countrymen) not feasible

Ranked retrieval (best documents to return)

2

Term-document incidence

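| | Antony and Cleopatra | Julius Caesar | The Tempest | Hamlet | Othello | Macbeth |
|---|---|---|---|---|---|---|
| Antony | 1 | 1 | 0 | 0 | 0 | 1 |
| Brutus | 1 | 1 | 0 | 1 | 0 | 0 |
| Caesar | 1 | 1 | 0 | 1 | 1 | 1 |
| Calpurnia | 0 | 1 | 0 | 0 | 0 | 0 |
| Cleopatra | 1 | 0 | 0 | 0 | 0 | 0 |
| mercy | 1 | 0 | 1 | 1 | 1 | 1 |
| worser | 1 | 0 | 1 | 1 | 1 | 0 |

1 if play contains word, 0 otherwise

Query: Brutus AND Caesar but NOT Calpurnia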

3

Incidence vectors

So we have a 0/1 vector for each term.

To answer the query: take the rows for Brutus, Caesar and Calpurnia (complemented) and bitwise AND them.

110100 AND 110111 AND 101111 = 100100.
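The bitwise AND above can be reproduced in a few lines. This is a minimal sketch, assuming each incidence row is stored as a 6-bit integer whose leftmost bit is Antony and Cleopatra and whose rightmost bit is Macbeth; the names `rows`, `answer_query` and `matching_plays` are illustrative only.

```python
# Column order of the incidence matrix, leftmost bit first.
PLAYS = ["Antony and Cleopatra", "Julius Caesar", "The Tempest",
         "Hamlet", "Othello", "Macbeth"]

# Incidence rows for the three query terms, written as 6-bit integers.
rows = {
    "Brutus":    0b110100,
    "Caesar":    0b110111,
    "Calpurnia": 0b010000,
}

MASK = (1 << len(PLAYS)) - 1  # keeps the complement to 6 bits


def answer_query():
    """Brutus AND Caesar AND NOT Calpurnia as one bitwise expression."""
    not_calpurnia = ~rows["Calpurnia"] & MASK  # 101111
    return rows["Brutus"] & rows["Caesar"] & not_calpurnia


def matching_plays(bits):
    """Translate a result bit vector back into play titles."""
    n = len(PLAYS)
    return [PLAYS[i] for i in range(n) if bits & (1 << (n - 1 - i))]


result = answer_query()
print(format(result, "06b"))
print(matching_plays(result))
```

The two set bits correspond to Antony and Cleopatra and Hamlet.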

4

Answers to query

Antony and Cleopatra, Act III, Scene ii

Agrippa [Aside to DOMITIUS ENOBARBUS]: Why, Enobarbus,

When Antony found Julius Caesar dead,

He cried almost to roaring; and he wept

When at Philippi he found Brutus slain.

Hamlet, Act III, Scene ii

Lord Polonius: I did enact Julius Caesar I was killed i' the

Capitol; Brutus killed me.

5

Search index construction

Documents to be indexed: Friends, Romans, countrymen.

↓ Tokenizer

Token stream: Friends Romans Countrymen

↓ Linguistic modules

Modified tokens: friend roman countryman

↓ Indexer

Inverted index:

friend → 2, 4

roman → 1, 2

countryman → 13, 16

6

Tokenization

Input: “Friends, Romans and Countrymen”

Output: Tokens

Friends

Romans

and

Countrymen

Each token is candidate for an index entry, after

further processing

But what are valid tokens to emit?
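One naive answer is to treat every run of letters, digits and apostrophes as a token. The sketch below assumes exactly that rule (the regular expression is an illustrative choice, not the lecture's tokenizer) and already shows that such a rule forces a decision on hyphenated names.

```python
import re


def tokenize(text):
    """Return runs of ASCII letters, digits and apostrophes as tokens."""
    return re.findall(r"[A-Za-z0-9']+", text)


print(tokenize("Friends, Romans and Countrymen"))
print(tokenize("Hewlett-Packard"))  # the hyphen splits this into two tokens
```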

8

Tokenization: tricky cases

Finland’s: Finland? Finlands? Finland’s?

Hewlett-Packard: Hewlett and Packard as one or two tokens?

the hold-him-back-and-drag-him-away-maneuver ?

Numbers: unique token for every number?

L'ensemble one token or two?

L ? L’ ? Le ?

German noun compounds are not segmented

Lebensversicherungsgesellschaftsangestellter

‘life insurance company employee’

Chinese and Japanese have no spaces between words:

莎拉波娃现在居住在美国东南部的佛罗里达。

9

Normalization

Need to “normalize” terms in indexed text as well

as query terms into the same form

We want to match U.S.A. and USA

We most commonly implicitly define equivalence classes of terms

e.g., by deleting periods in a term

Alternative is to do asymmetric expansion:

Enter: window Search: window, windows

Enter: windows Search: Windows, windows

Enter: Windows Search: Windows

Potentially more powerful, but less efficient

11

Case folding

Reduce all letters to lower case

exception: upper case (in mid-sentence?)

e.g., General Motors

Fed vs. fed

SAIL vs. sail

Often best to lower case everything, since users

will use lowercase regardless of ‘correct’

capitalization…

Makes some queries hard: “The Gap” – clothing

store
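The two equivalence-classing steps described so far (deleting periods, then case folding) can be sketched as a single function. This is a minimal illustration; the name `normalize` is hypothetical, and the same function would have to be applied to both indexed text and query terms.

```python
def normalize(token):
    """Map a token to its equivalence-class representative."""
    return token.replace(".", "").lower()


# U.S.A. and USA now fall into the same class.
print(normalize("U.S.A."), normalize("USA"))

# The cost of folding everything: Fed and fed can no longer be told apart.
print(normalize("Fed") == normalize("fed"))
```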

12

Stop words

With a stop list, you exclude from dictionary

entirely the commonest words. Intuition:

They have little semantic content: the, a, and, to, be

They take a lot of space: ~30% of postings for the top 30 words

But the trend is away from doing this:

Good compression lets the space for stopwords be very small

Good query optimization techniques mean you pay little at

query time for including stop words.

You need them for:

Phrase queries: “King of Denmark”

Various song titles, etc.: “Let it be”, “To be or not to be”

“Relational” queries: “flights to London”

13

Stemming

Reduce terms to their “roots” before indexing

“Stemming” suggests crude affix chopping

language dependent

e.g., automate(s), automatic, automation all reduced to automat.

Before stemming: for example compressed and compression are both accepted as equivalent to compress.

After stemming: for exampl compress and compress ar both accept as equival to compress

14

Porter’s algorithm

Commonest algorithm for stemming English

Conventions + 5 phases of reductions

Results suggest at least as good as other stemming options

phases applied sequentially

each phase consists of a set of commands

sample convention: Of the rules in a compound command, select the one that applies to the longest suffix.

ies → i

ational → ate

tional → tion

15
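The longest-suffix convention can be sketched as follows. This is only an illustration of that one convention applied to the three sample rules: it ignores the conditions Porter's algorithm attaches to its rules and its five-phase structure, and the names `RULES` and `apply_longest_suffix_rule` are hypothetical.

```python
# The three sample rewrite rules, as (suffix, replacement) pairs.
RULES = [("ies", "i"), ("ational", "ate"), ("tional", "tion")]


def apply_longest_suffix_rule(word, rules=RULES):
    """Apply the single rule whose suffix is the longest one matching word."""
    matches = [(suffix, repl) for suffix, repl in rules if word.endswith(suffix)]
    if not matches:
        return word  # no rule applies
    suffix, repl = max(matches, key=lambda rule: len(rule[0]))
    return word[: -len(suffix)] + repl


# "relational" matches both "ational" and "tional"; the longer suffix wins.
print(apply_longest_suffix_rule("relational"))
print(apply_longest_suffix_rule("conditional"))
print(apply_longest_suffix_rule("ponies"))
```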

Normalization: other languages

Accents: résumé vs. resume.

Most important criterion:

How are your users likely to write their queries for these words?

Even in languages with common accents, users

often may not type them

German: Tuebingen vs. Tübingen

Should be equivalent

16

Normalizations

Full morphological analysis – at most modest

benefits for retrieval

Do stemming and other normalizations help?

Often very mixed results: really help recall for

some queries but harm precision on others

17

Indexing


Big(ger) corpora

Consider N = 1M documents, each with about 1K terms.

~6 bytes/term including spaces/punctuation

6GB of data in the documents.

Say there are m = 500K distinct terms among these.

500K x 1M matrix has half-a-trillion 0’s and 1’s.

But it has no more than one billion 1’s.

Why?

matrix is extremely sparse.

What’s a better representation?

We only record the 1 positions.

19
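The numbers on the Big(ger) corpora slide follow from simple arithmetic, worked through below. The constant names are illustrative; the figures are the ones stated on the slide. The bound on the number of 1's comes from counting at most one matrix entry per term occurrence.

```python
N_DOCS = 1_000_000        # N = 1M documents
TERMS_PER_DOC = 1_000     # about 1K terms each
BYTES_PER_TERM = 6        # including spaces/punctuation
DISTINCT_TERMS = 500_000  # m = 500K distinct terms

# Raw text size: 1M docs x 1K terms x 6 bytes = 6 GB.
corpus_bytes = N_DOCS * TERMS_PER_DOC * BYTES_PER_TERM

# The incidence matrix has one cell per (term, document) pair.
matrix_cells = DISTINCT_TERMS * N_DOCS

# Each term occurrence can set at most one cell to 1.
max_ones = N_DOCS * TERMS_PER_DOC

# Upper bound on the fraction of cells that are 1.
density = max_ones / matrix_cells

print(corpus_bytes, matrix_cells, max_ones, density)
```

At most 0.2% of the cells can be 1, which is why recording only the 1 positions pays off.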

Inverted index

For each term T, we must store a list of all

documents that contain T.

Do we use an array or a list for this?

Brutus → 2 4 8 16 32 64 128

Calpurnia → 1 2 3 5 8 13 21 34

Caesar → 13 16

What happens if the word Caesar

is added to document 14?

20

Inverted index

Linked lists generally preferred to arrays

Dynamic space allocation

Insertion of terms into documents easy

Space overhead of pointers

Dictionary → Postings lists (each entry is a posting)

Brutus → 2 4 8 16 32 64 128

Calpurnia → 1 2 3 5 8 13 21 34

Caesar → 13 16

Sorted by docID (more later on why).

21

Indexer steps

Sequence of (Modified token, Document ID) pairs.

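Doc 1: I did enact Julius Caesar I was killed i' the Capitol; Brutus killed me.

Doc 2: So let it be with Caesar. The noble Brutus hath told you Caesar was ambitious

| Term | Doc # |
|---|---|
| I | 1 |
| did | 1 |
| enact | 1 |
| julius | 1 |
| caesar | 1 |
| I | 1 |
| was | 1 |
| killed | 1 |
| i' | 1 |
| the | 1 |
| capitol | 1 |
| brutus | 1 |
| killed | 1 |
| me | 1 |
| so | 2 |
| let | 2 |
| it | 2 |
| be | 2 |
| with | 2 |
| caesar | 2 |
| the | 2 |
| noble | 2 |
| brutus | 2 |
| hath | 2 |
| told | 2 |
| you | 2 |
| caesar | 2 |
| was | 2 |
| ambitious | 2 |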

22

Indexer steps

Sort by terms.

Core indexing step.

(Term, Doc #) pairs after sorting by term, then by Doc #:

| Term | Doc # |
|---|---|
| ambitious | 2 |
| be | 2 |
| brutus | 1 |
| brutus | 2 |
| capitol | 1 |
| caesar | 1 |
| caesar | 2 |
| caesar | 2 |
| did | 1 |
| enact | 1 |
| hath | 2 |
| I | 1 |
| I | 1 |
| i' | 1 |
| it | 2 |
| julius | 1 |
| killed | 1 |
| killed | 1 |
| let | 2 |
| me | 1 |
| noble | 2 |
| so | 2 |
| the | 1 |
| the | 2 |
| told | 2 |
| you | 2 |
| was | 1 |
| was | 2 |
| with | 2 |

23

Indexer steps

Multiple term entries in a

single document are

merged.

Frequency information is

added.

Why frequency?

Will discuss later.

After merging and adding frequency:

| Term | Doc # | Term freq |
|---|---|---|
| ambitious | 2 | 1 |
| be | 2 | 1 |
| brutus | 1 | 1 |
| brutus | 2 | 1 |
| capitol | 1 | 1 |
| caesar | 1 | 1 |
| caesar | 2 | 2 |
| did | 1 | 1 |
| enact | 1 | 1 |
| hath | 2 | 1 |
| I | 1 | 2 |
| i' | 1 | 1 |
| it | 2 | 1 |
| julius | 1 | 1 |
| killed | 1 | 2 |
| let | 2 | 1 |
| me | 1 | 1 |
| noble | 2 | 1 |
| so | 2 | 1 |
| the | 1 | 1 |
| the | 2 | 1 |
| told | 2 | 1 |
| you | 2 | 1 |
| was | 1 | 1 |
| was | 2 | 1 |
| with | 2 | 1 |

24

The result is split into a Dictionary file and a

Postings file.

Dictionary columns: Term, N docs, Coll freq. Postings column: one Doc #: Freq entry per document containing the term.

| Term | N docs | Coll freq | Postings (Doc #: Freq) |
|---|---|---|---|
| ambitious | 1 | 1 | 2: 1 |
| be | 1 | 1 | 2: 1 |
| brutus | 2 | 2 | 1: 1, 2: 1 |
| capitol | 1 | 1 | 1: 1 |
| caesar | 2 | 3 | 1: 1, 2: 2 |
| did | 1 | 1 | 1: 1 |
| enact | 1 | 1 | 1: 1 |
| hath | 1 | 1 | 2: 1 |
| I | 1 | 2 | 1: 2 |
| i' | 1 | 1 | 1: 1 |
| it | 1 | 1 | 2: 1 |
| julius | 1 | 1 | 1: 1 |
| killed | 1 | 2 | 1: 2 |
| let | 1 | 1 | 2: 1 |
| me | 1 | 1 | 1: 1 |
| noble | 1 | 1 | 2: 1 |
| so | 1 | 1 | 2: 1 |
| the | 2 | 2 | 1: 1, 2: 1 |
| told | 1 | 1 | 2: 1 |
| you | 1 | 1 | 2: 1 |
| was | 2 | 2 | 1: 1, 2: 1 |
| with | 1 | 1 | 2: 1 |

25
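The indexer steps walked through on the preceding slides (emit pairs, sort, merge with frequencies, split into dictionary and postings) can be sketched end to end. This is a minimal in-memory illustration, not the lecture's implementation: `tokenize` is a hypothetical stand-in for the tokenizer and linguistic modules, and unlike the slides it lowercases "I" as well.

```python
from collections import Counter, defaultdict

DOCS = {
    1: "I did enact Julius Caesar I was killed i' the Capitol; Brutus killed me.",
    2: "So let it be with Caesar. The noble Brutus hath told you Caesar was ambitious",
}


def tokenize(text):
    """Hypothetical normalizer: split on spaces, strip . , ; and lowercase."""
    return [word.strip(".,;").lower() for word in text.split()]


def build_index(docs):
    # Step 1: sequence of (modified token, document ID) pairs.
    pairs = [(term, doc_id) for doc_id, text in docs.items()
             for term in tokenize(text)]
    # Step 2: sort by term, then by document ID (the core indexing step).
    pairs.sort()
    # Step 3: merge duplicate pairs and record the term frequency.
    counts = Counter(pairs)
    postings = defaultdict(list)
    for (term, doc_id), tf in sorted(counts.items()):
        postings[term].append((doc_id, tf))
    # Step 4: dictionary entry = (number of docs, collection frequency).
    dictionary = {term: (len(plist), sum(tf for _, tf in plist))
                  for term, plist in postings.items()}
    return dictionary, dict(postings)


dictionary, postings = build_index(DOCS)
print(dictionary["caesar"], postings["caesar"])
```

A real indexer would write the dictionary and postings to separate files and could not hold all pairs in memory for a large corpus; the sketch only mirrors the logical steps.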

Where do we pay in storage?

[Figure: the dictionary (Term, N docs, Coll freq) and postings (Doc #, Freq) from the previous slide, annotated with the two storage costs: Terms in the dictionary and Pointers in the postings.]

26

Retrieval

27

Query processing

We’ve just built something cool. How do we use it?

28

Query processing: AND

Consider processing the query:

Brutus AND Caesar

Locate Brutus in the Dictionary;

Retrieve its postings.

Locate Caesar in the Dictionary;

Retrieve its postings.

“Merge” the two postings:

Brutus → 2 4 8 16 32 64 128

Caesar → 1 2 3 5 8 13 21 34

29

The merge

Walk through the two postings simultaneously, in

time linear in the total number of postings entries

Brutus → 2 4 8 16 32 64 128

Caesar → 1 2 3 5 8 13 21 34

Result → 2 8

If the list lengths are x and y, the merge takes O(x+y)

operations.

Crucial: postings sorted by docID.
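The linear-time merge can be sketched with two cursors, one per postings list. This minimal version assumes each postings list is a Python list of docIDs in ascending order; the function name `intersect` is illustrative.

```python
def intersect(p1, p2):
    """Return docIDs present in both sorted lists, in O(x + y) steps."""
    answer = []
    i = j = 0
    while i < len(p1) and j < len(p2):
        if p1[i] == p2[j]:
            answer.append(p1[i])  # docID occurs in both lists
            i += 1
            j += 1
        elif p1[i] < p2[j]:
            i += 1  # advance the cursor that is behind
        else:
            j += 1
    return answer


brutus = [2, 4, 8, 16, 32, 64, 128]
caesar = [1, 2, 3, 5, 8, 13, 21, 34]
print(intersect(brutus, caesar))
```

Each step advances at least one cursor, so the loop runs at most x + y times. Without the sort order, neither cursor could be advanced safely.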

30

Boolean queries: Exact match

Boolean Queries are queries using AND, OR and

NOT to join query terms

Views each document as a set of words

Is precise: document matches condition or not.

Primary commercial retrieval tool for 3 decades.

Professional searchers (e.g., lawyers) still like

Boolean queries:

You know exactly what you’re getting.

31

Boolean queries: More general merges

Exercise: Adapt the merge for the queries:

Brutus AND NOT Caesar

Brutus OR NOT Caesar

Can we still run through the merge in time O(x+y)

or what can we achieve?
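One possible answer to the first exercise is sketched below, under the same assumption of ascending docID lists; the name `and_not` is hypothetical. It still runs in O(x+y). Brutus OR NOT Caesar is different in kind, since its result can include every document that lacks Caesar, so it cannot be produced from the two postings lists alone.

```python
def and_not(p1, p2):
    """Return docIDs in sorted list p1 that do not occur in sorted list p2."""
    answer = []
    i = j = 0
    while i < len(p1):
        if j == len(p2) or p1[i] < p2[j]:
            answer.append(p1[i])  # p1[i] cannot appear later in p2
            i += 1
        elif p1[i] == p2[j]:
            i += 1  # excluded: the docID is in both lists
            j += 1
        else:
            j += 1  # p2 is behind, catch up
    return answer


brutus = [2, 4, 8, 16, 32, 64, 128]
caesar = [1, 2, 3, 5, 8, 13, 21, 34]
print(and_not(brutus, caesar))
```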

32

Merging

What about an arbitrary Boolean formula?

(Brutus OR Caesar) AND NOT

(Antony OR Cleopatra)

Can we always merge in “linear” time?

Linear in what?

33

Query optimization

What is the best order for query processing?

Consider a query that is an AND of t terms.

For each of the t terms, get its postings, then

AND them together.

Brutus → 2 4 8 16 32 64 128

Calpurnia → 1 2 3 5 8 16 21 34

Caesar → 13 16

Query: Brutus AND Calpurnia AND Caesar

34

Query optimization example

Process in order of increasing freq:

start with smallest set, then keep cutting further.

This is why we kept freq in dictionary

Brutus → 2 4 8 16 32 64 128

Calpurnia → 1 2 3 5 8 13 21 34

Caesar → 13 16

Execute the query as (Caesar AND Brutus) AND Calpurnia.
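The ordering heuristic can be sketched by sorting the terms by postings-list length before intersecting. In this minimal illustration the list length stands in for the frequency kept in the dictionary, and `intersect_many` is a hypothetical helper built on the linear merge.

```python
def intersect(p1, p2):
    """Linear-time intersection of two docID lists sorted ascending."""
    out, i, j = [], 0, 0
    while i < len(p1) and j < len(p2):
        if p1[i] == p2[j]:
            out.append(p1[i])
            i += 1
            j += 1
        elif p1[i] < p2[j]:
            i += 1
        else:
            j += 1
    return out


def intersect_many(postings_by_term):
    """AND all terms together, shortest postings list first."""
    ordered = sorted(postings_by_term.items(), key=lambda kv: len(kv[1]))
    order = [term for term, _ in ordered]
    result = ordered[0][1]
    for _, plist in ordered[1:]:
        if not result:
            break  # an empty intermediate result cannot grow again
        result = intersect(result, plist)
    return order, result


POSTINGS = {
    "Brutus": [2, 4, 8, 16, 32, 64, 128],
    "Calpurnia": [1, 2, 3, 5, 8, 13, 21, 34],
    "Caesar": [13, 16],
}
order, result = intersect_many(POSTINGS)
print(order, result)
```

Starting from the two-entry Caesar list keeps every intermediate result no larger than two docIDs.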

35

Phrase queries

36

Phrase queries

Want to answer queries such as “carnegie

mellon” – as a phrase

Thus the sentence “I got money from the Mellon

bank in Carnegie” is not a match.

The concept of phrase queries has proven easily

understood by users; about 10% of web queries

are phrase queries

No longer suffices to store only

<term : docs> entries

37

Positional indexes

Store, for each term, entries of the form:

<number of docs containing term;

doc1: position1, position2 … ;

doc2: position1, position2 … ;

etc.>

38

Positional index example

<be: 993427;

1: 7, 18, 33, 72, 86, 231;

2: 3, 149;

4: 17, 191, 291, 430, 434;

5: 363, 367, …>

Which of docs 1,2,4,5

could contain “to be

or not to be”?

Can compress position values/offsets

Nevertheless, this expands postings storage

substantially

39

Processing a phrase query

Extract inverted index entries for each distinct

term: to, be, or, not.

Merge their doc:position lists to enumerate all

positions with “to be or not to be”.

to: 2:1,17,74,222,551; 4:8,16,190,429,433; 7:13,23,191; ...

be: 1:17,19; 4:17,191,291,430,434; 5:14,19,101; ...

Same general method for proximity searches
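For a two-word phrase such as "to be", the positional merge can be sketched as below. This is a simplified illustration: it uses only the visible prefixes of the two lists above (the elided entries are not filled in), represents each term's entry as a dict from docID to a position list, and uses set lookups rather than a strict linear merge. The name `adjacent` and the `gap` parameter are hypothetical.

```python
# Visible prefixes of the positional postings shown above.
TO = {2: [1, 17, 74, 222, 551], 4: [8, 16, 190, 429, 433], 7: [13, 23, 191]}
BE = {1: [17, 19], 4: [17, 191, 291, 430, 434], 5: [14, 19, 101]}


def adjacent(first, second, gap=1):
    """Find positions where `second` occurs exactly `gap` words after `first`.

    Returns {docID: [start positions of `first`]} for matching documents.
    """
    hits = {}
    for doc_id in sorted(first.keys() & second.keys()):
        following = set(second[doc_id])
        starts = [pos for pos in first[doc_id] if pos + gap in following]
        if starts:
            hits[doc_id] = starts
    return hits


print(adjacent(TO, BE))
```

A proximity search could reuse the same idea by accepting any gap within a window instead of exactly one.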

40

Positional index size

You can compress position values/offsets

Still, a positional index expands postings storage

substantially

Standardly used because of the power and

usefulness of phrase and proximity queries …

whether used explicitly or implicitly in a ranking

retrieval system.

41

Rules of thumb

A positional index is 2–4 times as large as a nonpositional index

Positional index size 35–50% of volume of

original text

Caveat: all of this holds for “English-like”

languages

42

Scoring and Ranking

43

Scoring

Thus far, our queries have all been Boolean

Docs either match or not

Good for expert users with precise understanding

of their needs and the corpus

Applications can consume 1000’s of results

Not good for (the majority of) users with poor

Boolean formulation of their needs

Most users don’t want to wade through 1000’s of

results – cf. use of web search engines

44

Scoring

We wish to return in order the documents most

likely to be useful to the searcher

How can we rank order the docs in the corpus

with respect to a query?

Assign a score – say in [0,1]

for each doc on each query

Assume no spammers

“adversarial IR” makes life complicated

45

Incidence matrices

Recall: Document is binary vector $X$ in $\{0,1\}^v$

Query is a binary vector $Y$

Score: Overlap measure: $|X \cap Y|$

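| | Antony and Cleopatra | Julius Caesar | The Tempest | Hamlet | Othello | Macbeth |
|---|---|---|---|---|---|---|
| Antony | 1 | 1 | 0 | 0 | 0 | 1 |
| Brutus | 1 | 1 | 0 | 1 | 0 | 0 |
| Caesar | 1 | 1 | 0 | 1 | 1 | 1 |
| Calpurnia | 0 | 1 | 0 | 0 | 0 | 0 |
| Cleopatra | 1 | 0 | 0 | 0 | 0 | 0 |
| mercy | 1 | 0 | 1 | 1 | 1 | 1 |
| worser | 1 | 0 | 1 | 1 | 1 | 0 |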

46

Example

On the query ides of march, Shakespeare’s

Julius Caesar has a score of 3

All other Shakespeare plays have a score of 2

(because they contain march) or 1

Thus in a rank order, Julius Caesar would come

out tops
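The overlap measure is just the size of the intersection of two term sets. The sketch below assumes documents and queries are given as collections of terms; the two tiny vocabularies are made-up stand-ins for the plays, chosen only so that the scores match the example.

```python
def overlap_score(query_terms, doc_terms):
    """Number of distinct query terms that also occur in the document."""
    return len(set(query_terms) & set(doc_terms))


query = ["ides", "of", "march"]

# Toy vocabularies (assumptions for illustration, not real play contents).
julius_caesar = {"beware", "the", "ides", "of", "march"}
other_play = {"the", "march", "of", "time"}

print(overlap_score(query, julius_caesar))
print(overlap_score(query, other_play))
```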

47

Overlap matching

What’s wrong with the overlap measure?

It doesn’t consider:

Term frequency in document

Term scarcity in collection (document

mention frequency)

of is more common than ides or march

Length of documents

(And queries: score not normalized)

48

Scoring: density-based

Thus far: overlap of terms/phrases in a doc

Next: if a document talks about a topic more,

then it is a better match

Document relevant if it has a lot of the terms

This leads to the idea of term weighting.

49

Scoring: Term weighting

50

Term-document count matrices

Consider the number of occurrences of a term in

a document:

Bag of words model

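Document is a vector in $\mathbb{N}^v$: a column below

| | Antony and Cleopatra | Julius Caesar | The Tempest | Hamlet | Othello | Macbeth |
|---|---|---|---|---|---|---|
| Antony | 157 | 73 | 0 | 0 | 0 | 0 |
| Brutus | 4 | 157 | 0 | 1 | 0 | 0 |
| Caesar | 232 | 227 | 0 | 2 | 1 | 1 |
| Calpurnia | 0 | 10 | 0 | 0 | 0 | 0 |
| Cleopatra | 57 | 0 | 0 | 0 | 0 | 0 |
| mercy | 2 | 0 | 3 | 5 | 5 | 1 |
| worser | 2 | 0 | 1 | 1 | 1 | 0 |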

51

Bag of words view of a doc

Thus the doc

John is quicker than Mary .

is indistinguishable from the doc

Mary is quicker than John .

52

Term frequency tf

Idea: Score by summing all occurrences of query

words in doc

Long docs are favored because they’re more

likely to contain query terms

Can fix this to some extent by normalizing for

document length

But is raw tf the right measure?

Note: in IR frequency means unnormalized count

53

Counts vs. frequencies

Consider again the ides of march query.

Julius Caesar has 5 occurrences of ides

No other play has ides

march occurs in over a dozen

All the plays contain lots of of

By this scoring measure, the top-scoring play is

likely to be the one with the most ofs

54

Weighting term frequency: tf

What is the relative importance of

0 vs. 1 occurrence of a term in a doc

1 vs. 2 occurrences

2 vs. 3 occurrences …

Unclear: while it seems that more is better, a lot isn’t

proportionally better than a few

Can just use raw tf

Another option commonly used in practice:

$$wf_{t,d} = \begin{cases} 0 & \text{if } tf_{t,d} = 0 \\ 1 + \log tf_{t,d} & \text{otherwise} \end{cases}$$

Note: in IR frequency means unnormalized count
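The log-scaled weight can be sketched directly from its definition. The slide does not fix the base of the logarithm, so base 10 here is an assumption; the function name `wf` follows the slide's notation.

```python
import math


def wf(tf):
    """Log-scaled term frequency: 0 for absent terms, 1 + log10(tf) otherwise."""
    return 0.0 if tf == 0 else 1.0 + math.log10(tf)


# Ten times as many occurrences add only one unit of weight.
for count in (0, 1, 10, 100):
    print(count, wf(count))
```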

55

Score computation

Score for a query q = sum over terms t in q:

$$\sum_{t \in q} tf_{t,d}$$

[Note: 0 if no query terms in document]

Can use wf instead of tf in the above

Still doesn’t consider term scarcity in collection

(ides is rarer than of)

56

Weighting should depend on the

term overall

Which of these tells you more about a doc?

10 occurrences of ides?

10 occurrences of of?

Attenuate the weight of a common term

But what is “common”?

Consider collection frequency (cf )

The total number of occurrences of the term in the

entire collection of documents

57

Document frequency

But document frequency (df ) may be better:

df = number of docs in the corpus containing the

term

| Word | cf | df |
|---|---|---|
| ferrari | 10422 | 17 |
| insurance | 10440 | 3997 |

Document/collection frequency weighting is only

possible in known (static) collection.

So how do we make use of df ?

58

tf x idf term weights

tf x idf measure combines:

term frequency (tf )

or wf, some measure of term density in a doc

inverse document frequency (idf )

measure of informativeness of a term: its rarity across

the whole corpus

The most commonly used version is:

$$idf_i = \log\left(\frac{n}{df_i}\right)$$

59

Summary: tf x idf (or tf.idf)

Assign a tf.idf weight to each term i in each

document d

$$w_{i,d} = tf_{i,d} \times \log(n / df_i)$$

$tf_{i,d}$ = frequency of term i in document d

$n$ = total number of documents

$df_i$ = the number of documents that contain term i

What is the weight of a term that occurs in all of the docs?

Increases with the number of occurrences within a doc

Increases with the rarity of the term across the whole corpus
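The tf.idf weight can be sketched in a few lines. The log base (10) and the collection size of one million documents are assumptions made for illustration; the df values for ferrari and insurance are the ones from the Document frequency slide.

```python
import math


def idf(n_docs, df):
    """Inverse document frequency: log10(n / df)."""
    return math.log10(n_docs / df)


def tf_idf(tf, n_docs, df):
    """tf.idf weight of a term occurring tf times in one document."""
    return tf * idf(n_docs, df)


N = 1_000_000  # hypothetical collection size

# Similar collection frequencies, very different document frequencies.
print(idf(N, 17), idf(N, 3997))

# A term that occurs in every document gets log(1) = 0, whatever its tf.
print(tf_idf(5, N, N))
```

The last line answers the question on the slide: a term present in all documents carries zero weight.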

60

Real-valued term-document

matrices

Function (scaling) of count of a word in a

document:

Bag of words model

Each is a vector in $\mathbb{R}^v$

Here log-scaled tf.idf

Note can be >1!

| | Antony and Cleopatra | Julius Caesar | The Tempest | Hamlet | Othello | Macbeth |
|---|---|---|---|---|---|---|
| Antony | 13.1 | 11.4 | 0.0 | 0.0 | 0.0 | 0.0 |
| Brutus | 3.0 | 8.3 | 0.0 | 1.0 | 0.0 | 0.0 |
| Caesar | 2.3 | 2.3 | 0.0 | 0.5 | 0.3 | 0.3 |
| Calpurnia | 0.0 | 11.2 | 0.0 | 0.0 | 0.0 | 0.0 |
| Cleopatra | 17.7 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 |
| mercy | 0.5 | 0.0 | 0.7 | 0.9 | 0.9 | 0.3 |
| worser | 1.2 | 0.0 | 0.6 | 0.6 | 0.6 | 0.0 |

61

Scoring: similarity

62

Documents as vectors

Each doc j can now be viewed as a vector of wf x idf values, one component for each term

So we have a vector space

terms are axes

docs live in this space

even with stemming, this is huge-dimensional

(The corpus of documents gives us a matrix,

which we could also view as a vector space in

which words live – transposable data)

63

Why turn docs into vectors?

You can also turn queries into (short) vectors

With q a vector, find d vectors (docs) “near” it.

64

Intuition

[Figure: query q and documents d2, d3, d4, d5 drawn as vectors in a space with term axes t1, t2, t3]

Postulate: Documents that are “close together” in the vector space talk about similar things.

65

First cut at similarity

Idea: Distance between d1 and d2 is the length of the vector |d1 – d2|.

Euclidean distance

Why is this not a great idea?

We still haven’t dealt with the issue of length normalization

Short documents would be more similar to each other by virtue of length, not topic

However, we can implicitly normalize by looking at angles instead

66

Cosine similarity

Distance between vectors d1 and d2 captured by the cosine of the angle θ between them.

Note – this is similarity, not distance

[Figure: vectors d1 and d2 in the space with term axes t1, t2, t3, separated by the angle θ]

67

Cosine similarity

A vector can be normalized (given a length of 1) by dividing each of its components by its length; here we use the L2 norm

$$\|x\|_2 = \sqrt{\sum_i x_i^2}$$

This maps vectors onto the unit sphere:

Then, $|d_j| = \sqrt{\sum_{i=1}^{n} w_{i,j}^2} = 1$

Longer documents don’t get more weight

68

Cosine similarity

$$sim(d_j, d_k) = \frac{d_j \cdot d_k}{|d_j|\,|d_k|} = \frac{\sum_{i=1}^{n} w_{i,j}\, w_{i,k}}{\sqrt{\sum_{i=1}^{n} w_{i,j}^2}\,\sqrt{\sum_{i=1}^{n} w_{i,k}^2}}$$

Cosine of angle between two vectors

The denominator involves the lengths of the vectors (normalization).

69

Normalized vectors

For normalized vectors, the cosine is simply the

dot product:

$$\cos(d_j, d_k) = d_j \cdot d_k$$

70
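The relationship between the cosine formula and the dot product of normalized vectors can be checked numerically. This is a minimal sketch, assuming each document vector is stored sparsely as a dict from term to weight; the helper names and the toy weights are illustrative.

```python
import math


def length(vec):
    """L2 norm of a sparse vector stored as {term: weight}."""
    return math.sqrt(sum(w * w for w in vec.values()))


def dot(a, b):
    """Dot product; terms missing from b contribute 0."""
    return sum(w * b.get(term, 0.0) for term, w in a.items())


def cosine(a, b):
    """Cosine of the angle between a and b (0 if either vector is empty)."""
    denom = length(a) * length(b)
    return dot(a, b) / denom if denom else 0.0


def normalize(vec):
    """Scale a nonzero vector to unit length."""
    norm = length(vec)
    return {term: w / norm for term, w in vec.items()}


d1 = {"brutus": 3.0, "caesar": 4.0}  # toy weights, length 5
d2 = {"caesar": 2.0}                 # toy weights, length 2

print(cosine(d1, d2))
print(dot(normalize(d1), normalize(d2)))  # same value after normalization
```

Normalizing once at index time means only the dot product has to be computed at query time.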

Queries in the vector space model

Central idea: the query as a vector:

We regard the query as a short document

We return the documents ranked by the closeness of their vectors to the query, also represented as a vector.

$$sim(d_j, d_q) = \frac{d_j \cdot d_q}{|d_j|\,|d_q|} = \frac{\sum_{i=1}^{n} w_{i,j}\, w_{i,q}}{\sqrt{\sum_{i=1}^{n} w_{i,j}^2}\,\sqrt{\sum_{i=1}^{n} w_{i,q}^2}}$$

Note that dq is very sparse!

71
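Ranking by cosine similarity to the query can be sketched with three columns of the log-scaled tf.idf matrix from the Real-valued term-document matrices slide. The query weights (1.0 for each term) are an assumption, and the dot product loops over the query's terms only, exploiting the sparsity of dq.

```python
import math

# Nonzero tf.idf weights for three plays, taken from the matrix slide.
DOCS = {
    "Antony and Cleopatra": {"antony": 13.1, "brutus": 3.0, "caesar": 2.3,
                             "cleopatra": 17.7, "mercy": 0.5, "worser": 1.2},
    "Julius Caesar": {"antony": 11.4, "brutus": 8.3, "caesar": 2.3,
                      "calpurnia": 11.2},
    "Hamlet": {"brutus": 1.0, "caesar": 0.5, "mercy": 0.9, "worser": 0.6},
}


def length(vec):
    """L2 norm of a sparse {term: weight} vector."""
    return math.sqrt(sum(w * w for w in vec.values()))


def cosine(doc, query):
    # Iterate over the query's few nonzero terms only.
    dot = sum(w * doc.get(term, 0.0) for term, w in query.items())
    denom = length(doc) * length(query)
    return dot / denom if denom else 0.0


def rank(query, docs=DOCS):
    """Return document names ordered by decreasing cosine similarity."""
    scored = [(cosine(vec, query), name) for name, vec in docs.items()]
    return [name for _, name in sorted(scored, reverse=True)]


ranking = rank({"brutus": 1.0, "caesar": 1.0})  # hypothetical query weights
print(ranking)
```

Hamlet ranks first here even though Julius Caesar has larger raw weights for both query terms, because Hamlet's vector has very little weight on other terms. This shows how strongly length normalization shapes the ranking.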

What did we learn today?

Length-normalized TFIDF-weighted/positional inverted

index to compute cosine similarities

Say it three times fast

Store, for each term, entries of the form:

<number of docs containing term;

Doc1/tfidf: position1, position2 … ;

Doc2/tfidf: position1, position2 … ;

...>

Compute the Boolean-matched documents together with their dot product scores, and present them ranked to the user

72

Following up on today’s lecture

Free online textbook: http://www-csli.stanford.edu/~hinrich/information-retrieval-book.html

Good in-depth classes at CMU:

15-493 & 11-741

Taught by Jamie Callan and Yiming Yang

73