Spoken Dialogue Systems Bob Carpenter June 20, 1999


Spoken Dialogue Systems

Bob Carpenter and Jennifer Chu-Carroll

June 20, 1999

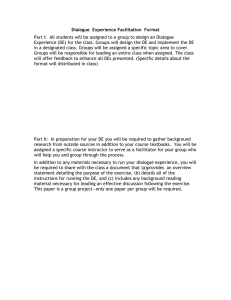

Tutorial Overview: Data Flow

[Figure: data-flow diagram of a spoken dialogue system. Part I: Signal Processing, Speech Recognition, Semantic Interpretation, Speech Synthesis. Part II: Discourse Interpretation, Dialogue Management, Response Generation.]

Speech and Audio Processing

• Signal processing:

– Convert the audio wave into a sequence of feature vectors

• Speech recognition:

– Decode the sequence of feature vectors into a sequence of words

• Semantic interpretation:

– Determine the meaning of the recognized words

• Speech synthesis:

– Generate synthetic speech from a marked-up word string


Tutorial Overview: Outline

Part I

• Signal processing

• Speech recognition

– acoustic modeling

– language modeling

– decoding

• Semantic interpretation

• Speech synthesis

Part II

• Discourse and dialogue

– Discourse interpretation

– Dialogue management

– Response generation

• Dialogue evaluation

• Data collection

Acoustic Waves

• Human speech generates a wave

– like a loudspeaker moving

• A wave for the words “speech lab” looks like:

[Figure: waveform of “speech lab” with segments labeled s, p, ee, ch, l, a, b, and a close-up of the “l” to “a” transition]

Graphs from Simon Arnfield’s web tutorial on speech, Sheffield:

http://lethe.leeds.ac.uk/research/cogn/speech/tutorial/


Acoustic Sampling

• 10 ms frame (ms = millisecond = 1/1000 second)

• ~25 ms window around frame to smooth signal processing

[Figure: overlapping 25 ms windows advancing in 10 ms steps along the signal. Result: a sequence of acoustic feature vectors a1, a2, a3, ...]
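The framing step can be sketched in a few lines. This is only an illustration, assuming a list of raw samples and left-aligned windows (the slide describes a window around each frame); the function name `frame_signal` and its parameters are hypothetical.

```python
def frame_signal(samples, sample_rate, frame_shift_ms=10, window_ms=25):
    """Split samples into overlapping analysis windows, one per frame."""
    shift = int(sample_rate * frame_shift_ms / 1000)  # samples between frame starts
    width = int(sample_rate * window_ms / 1000)       # samples per analysis window
    frames = []
    start = 0
    # Assumption: windows start at the frame position and partial windows are dropped.
    while start + width <= len(samples):
        frames.append(samples[start:start + width])
        start += shift
    return frames


signal = [0.0] * 8000               # one second of silence at 8 kHz (telephone rate)
frames = frame_signal(signal, 8000)
```

At 8 kHz each window holds 200 samples and consecutive windows overlap by 120 samples, so every sample contributes to two or three feature vectors.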

Spectral Analysis

• Frequency gives pitch; amplitude gives volume

– sampling at ~8 kHz phone, ~16 kHz mic (kHz=1000 cycles/sec)

[Figure: waveform and spectrogram of “speech lab” with segments labeled s, p, ee, ch, l, a, b]

• Fourier transform of wave yields a spectrogram

– darkness indicates energy at each frequency

– hundreds to thousands of frequency samples


Acoustic Features: Mel Scale Filterbank

• Derive Mel Scale Filterbank coefficients

• Mel scale:

– models non-linearity of human audio perception

– mel(f) = 2595 log10(1 + f / 700)

– roughly linear to 1000Hz and then logarithmic

• Filterbank

– collapses large number of FFT parameters by filtering with ~20 triangular filters spaced on mel scale

[Figure: triangular filters m1, m2, m3, m4, m5, m6, … spaced along the frequency axis, producing the filterbank coefficients]
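The mel formula on this slide is easy to check numerically. The sketch below implements it together with its inverse and computes center frequencies for filters equally spaced on the mel scale; the helper names and the 0 to 4000 Hz range are assumptions made for illustration.

```python
import math


def hz_to_mel(f):
    """mel(f) = 2595 log10(1 + f / 700), as given on the slide."""
    return 2595.0 * math.log10(1.0 + f / 700.0)


def mel_to_hz(m):
    """Inverse of hz_to_mel."""
    return 700.0 * (10.0 ** (m / 2595.0) - 1.0)


def filter_centers(num_filters, low_hz, high_hz):
    """Center frequencies (Hz) of filters equally spaced on the mel scale."""
    low, high = hz_to_mel(low_hz), hz_to_mel(high_hz)
    step = (high - low) / (num_filters + 1)
    return [mel_to_hz(low + step * (k + 1)) for k in range(num_filters)]


centers = filter_centers(20, 0.0, 4000.0)  # assumed telephone bandwidth
```

The centers are close together at low frequencies and spread out at high frequencies, which is how the filterbank allocates more resolution where hearing is more sensitive.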

Cepstral Coefficients

• Cepstral Transform is a discrete cosine transform of log filterbank amplitudes:

$$c_i = \left(\frac{2}{N}\right)^{1/2} \sum_{j=1}^{N} \log m_j \, \cos\left(\frac{\pi i}{N}\,(j - 0.5)\right)$$

• Result is ~12 Mel Frequency Cepstral Coefficients (MFCC)

• Almost independent (unlike mel filterbank)

• Use Delta (velocity / first derivative) and Delta2 (acceleration / second derivative) of MFCC (+ ~24 features)
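A minimal sketch of the cepstral transform and of delta features follows. It assumes the discrete cosine transform of log filterbank amplitudes described on this slide, and it approximates the first derivative with a simple central difference; production front ends typically use a regression over several frames, so the `deltas` function here is an illustrative simplification.

```python
import math


def cepstra(filterbank, num_ceps=12):
    """DCT of log filterbank amplitudes, returning c_1 .. c_num_ceps."""
    n = len(filterbank)
    logs = [math.log(m) for m in filterbank]
    scale = math.sqrt(2.0 / n)
    return [scale * sum(logs[j - 1] * math.cos(math.pi * i * (j - 0.5) / n)
                        for j in range(1, n + 1))
            for i in range(1, num_ceps + 1)]


def deltas(frames):
    """Central difference over time, (next - previous) / 2, repeating edge frames."""
    out = []
    for t in range(len(frames)):
        prev = frames[max(t - 1, 0)]
        nxt = frames[min(t + 1, len(frames) - 1)]
        out.append([(b - a) / 2.0 for a, b in zip(prev, nxt)])
    return out
```

Applying `deltas` to the MFCC frames gives the Delta features, and applying it again to the result gives Delta2, for roughly 36 features per frame in total.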


Additional Signal Processing

• Pre-emphasis prior to Fourier transform to boost high-frequency energy

• Liftering to re-scale cepstral coefficients

• Channel Adaptation to deal with line and microphone characteristics (example: cepstral mean normalization)

• Echo Cancellation to remove background noise (including speech generated from the synthesizer)

• Adding a Total (log) Energy feature (+/- normalization)

• End-pointing to detect signal start and stop


Properties of Recognizers

• Speaker Independent vs. Speaker Dependent

• Large Vocabulary (2K-200K words) vs. Limited Vocabulary (2-200)

• Continuous vs. Discrete

• Speech Recognition vs. Speech Verification

• Real Time vs. multiples of real time

• Spontaneous Speech vs. Read Speech

• Noisy Environment vs. Quiet Environment

• High Resolution Microphone vs. Telephone vs. Cellphone

• Adapt to speaker vs. non-adaptive

• Low vs. High Latency

• With online incremental results vs. final results


The Speech Recognition Problem

• Bayes’ Law

– P(a,b) = P(a|b) P(b) = P(b|a) P(a)

– Joint probability of a and b = probability of b times the probability of a

given b

• The Recognition Problem

– Find most likely sequence w of “words” given the sequence of acoustic observation vectors a

– Use Bayes’ law to create a generative model

– P(a) does not depend on w, so it can be dropped from the maximization:

$$\operatorname{ArgMax}_w P(w \mid a) = \operatorname{ArgMax}_w \frac{P(a \mid w)\,P(w)}{P(a)} = \operatorname{ArgMax}_w P(a \mid w)\,P(w)$$

• Acoustic Model: P(a|w)

• Language Model: P(w)


Hidden Markov Models (HMMs)

• HMMs provide generative acoustic models P(a|w)

• probabilistic, non-deterministic finite-state automaton

– state n generates feature vectors with density Pn

– transitions from state j to n are probabilistic Pj,n

[Figure: three-state left-to-right HMM with self-loops P1,1, P2,2, P3,3, forward transitions P1,2, P2,3, P3,4, and output densities P1(.), P2(.), P3(.)]

HMMs: Single Gaussian Distribution

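[Figure: three-state left-to-right HMM with self-loops P1,1, P2,2, P3,3, forward transitions P1,2, P2,3, P3,4, and output densities P1(.), P2(.), P3(.)]

• Outgoing likelihoods:

$$\sum_{n} P_{j,n} = 1$$

• Feature vector a generated by normal density (Gaussian) with mean $\eta$ and covariance matrix $\Sigma$

$$P_n(a) = N(a \mid \eta_n, \Sigma_n) = (2\pi)^{-d/2} \, |\Sigma_n|^{-1/2} \exp\left(-\tfrac{1}{2}\,(a - \eta_n)^T \, \Sigma_n^{-1} \, (a - \eta_n)\right)$$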

HMMs: Gaussian Mixtures

• To account for variable pronunciations

• Each state generates acoustic vectors according to a linear combination of m Gaussian models, weighted by $\lambda_m$:

$$P_n(a) = \sum_{m} \lambda_{n,m} \, N(a \mid \eta_{n,m}, \Sigma_{n,m})$$

[Figure: three-component mixture model in two dimensions]
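The state output density can be sketched as follows. To keep the example short it assumes diagonal covariance matrices (one variance per feature dimension) instead of the full covariance matrix on the slides, and it works in the log domain to avoid underflow; the function names are illustrative.

```python
import math


def log_gaussian_diag(a, mean, var):
    """Log density of a diagonal-covariance Gaussian at feature vector a."""
    total = 0.0
    for x, m, v in zip(a, mean, var):
        total += -0.5 * (math.log(2.0 * math.pi * v) + (x - m) ** 2 / v)
    return total


def log_mixture(a, weights, means, variances):
    """Log of sum_m weight_m * N(a | mean_m, var_m), using log-sum-exp."""
    terms = [math.log(w) + log_gaussian_diag(a, m, v)
             for w, m, v in zip(weights, means, variances)]
    top = max(terms)
    return top + math.log(sum(math.exp(t - top) for t in terms))
```

A single Gaussian is the special case of one component with weight 1; the mixture weights of a state must sum to 1 for the result to be a density.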

Acoustic Modeling with HMMs

• Train HMMs to represent subword units

• Units typically segmental; may vary in granularity

– phonological (~40 for English)

– phonetic (~60 for English)

– context-dependent triphones (~14,000 for English): models temporal and spectral transitions between phones

– silence and noise are usually additional symbols

• Standard architecture is three successive states per phone:

[Figure: three-state left-to-right HMM with self-loops P1,1, P2,2, P3,3, forward transitions P1,2, P2,3, P3,4, and output densities P1(.), P2(.), P3(.)]

Pronunciation Modeling

• Needed for speech recognition and synthesis

• Maps orthographic representation of words to sequence(s) of phones

• Dictionary doesn’t cover language due to:

– open classes

– names

– inflectional and derivational morphology

• Pronunciation variation can be modeled with multiple pronunciations and/or acoustic mixtures

• If multiple pronunciations are given, estimate likelihoods

• Use rules (e.g. assimilation, devoicing, flapping), or statistical transducers

Lexical HMMs

• Create compound HMM for each lexical entry by

concatenating the phones making up the pronunciation

– example of HMM for ‘lab’ (following ‘speech’ for crossword triphone)

[Figure: three concatenated three-state HMMs, one per phone of ‘lab’, each with self-loops P1,1, P2,2, P3,3, forward transitions P1,2, P2,3, P3,4, and output densities P1(.), P2(.), P3(.). Phones: l, a, b. Triphones: ch-l+a, l-a+b, a-b+#.]

• Multiple pronunciations can be weighted by likelihood into compound HMM for a word

• (Tri)phone models are independent parts of word models

HMM Training: Baum-Welch Re-estimation

• Determines the probabilities for the acoustic HMM models

• Bootstraps from initial model

– hand aligned data, previous models or flat start

• Allows embedded training of whole utterances:

– transcribe utterance to words w1,…,wk and generate a compound HMM by concatenating compound HMMs for words: m1,…,mk

– calculate acoustic vectors: a1,…,an

• Iteratively converges to a new estimate

• Re-estimates all paths because states are hidden

• Provides a maximum likelihood estimate

– model that assigns training data the highest likelihood


Probabilistic Language Modeling: History

• Assigns probability P(w) to word sequence w = w1 ,w2,…,wk

• Bayes’ Law provides a history-based model:

P(w1,w2,…,wk) = P(w1) P(w2|w1) P(w3|w1,w2) … P(wk|w1,…,wk-1)

• Cluster histories to reduce number of parameters


N -gram Language Modeling

• n-gram assumption clusters based on last n-1 words

– P(wj|w1,…,wj-1) ~ P(wj|wj-n+1,…,wj-2,wj-1)

– unigrams ~ P(wj)

– bigrams ~ P(wj|wj-1)

– trigrams ~ P(wj|wj-2,wj-1)

• Trigrams often interpolated with bigram and unigram:

$$\hat{P}(w_3 \mid w_1, w_2) = \lambda_3 \frac{F(w_3 \mid w_1, w_2)}{\sum_k F(w_k \mid w_1, w_2)} + \lambda_2 \frac{F(w_3 \mid w_2)}{\sum_k F(w_k \mid w_2)} + \lambda_1 \frac{F(w_3)}{\sum_k F(w_k)}$$

– the $\lambda_i$ are typically estimated by maximum likelihood estimation on held-out data (F(.|.) are relative frequencies)

– many other interpolations exist (another standard is a non-linear backoff)
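Interpolating trigram, bigram and unigram estimates can be sketched as below. The interpolation weights are fixed, assumed values chosen for illustration; as the slide notes, they would normally be estimated on held-out data. The toy corpus and all function names are hypothetical.

```python
from collections import Counter


def train_counts(words):
    """Count unigrams, bigrams and trigrams in a list of words."""
    uni = Counter(words)
    bi = Counter(zip(words, words[1:]))
    tri = Counter(zip(words, words[1:], words[2:]))
    return uni, bi, tri


def interpolated(w1, w2, w3, counts, weights=(0.6, 0.3, 0.1)):
    """Weighted sum of trigram, bigram and unigram relative frequencies."""
    uni, bi, tri = counts
    w_tri, w_bi, w_uni = weights   # assumed values, must sum to 1
    f3 = tri[(w1, w2, w3)] / bi[(w1, w2)] if bi[(w1, w2)] else 0.0
    f2 = bi[(w2, w3)] / uni[w2] if uni[w2] else 0.0
    f1 = uni[w3] / sum(uni.values())
    return w_tri * f3 + w_bi * f2 + w_uni * f1


corpus = "flights from boston today flights from austin today".split()
counts = train_counts(corpus)
p_seen = interpolated("flights", "from", "boston", counts)
p_unseen = interpolated("flights", "from", "today", counts)
```

The unseen trigram still receives a non-zero probability through the unigram term, which is the point of interpolation.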

Extended Probabilistic Language Modeling

• Histories can include some indication of semantic topic

– latent-semantic indexing (vector-based information retrieval model)

– topic-spotting and blending of topic-specific models

– dialogue-state specific language models

• Language models can adapt over time

– recent history updates model through re-estimation or blending

– often done by boosting estimates for seen words (triggers)

– new words and/or pronunciations can be added

• Can estimate category tags (syntactic and/or semantic)

– Joint word/category model: P(word1:tag1,…,wordk:tagk)

– example: P(word:tag|History) ~ P(word|tag) P(tag|History)


Finite State Language Modeling

• Write a finite-state task grammar (with non-recursive CFG)

• Simple Java Speech API example (from user’s guide):

public <Command> = [<Polite>] <Action> <Object> (and <Object>)*;

<Action> = open | close | delete;

<Object> = the window | the file;

<Polite> = please;

• Typically assume that all transitions are equi-probable

• Technology used in most current applications

• Can put semantic actions in the grammar


Information Theory: Perplexity

• Perplexity is a standard measure of recognition complexity given a language model

• Perplexity measures the conditional likelihood of a corpus, given a language model P(.):

$$PP(w_1,\dots,w_N) = P(w_1,\dots,w_N)^{-1/N}$$

• Roughly the number of equi-probable choices per word

• Typically computed by taking logs and applying history-based Bayesian decomposition:

$$\log_2 PP = -\frac{1}{N} \sum_{n=1}^{N} \log_2 P(w_n \mid w_1,\dots,w_{n-1})$$

• But lower perplexity doesn’t guarantee better recognition
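The log-domain computation of perplexity can be sketched as follows. The language model is passed in as a function of a word and its history; `uniform_model` is a hypothetical model with eight equi-probable choices, used only to show that perplexity then equals the number of choices.

```python
import math


def perplexity(words, cond_prob):
    """2 ** (-(1/N) * sum over n of log2 P(w_n | w_1 .. w_{n-1}))."""
    log_sum = 0.0
    for n, word in enumerate(words):
        log_sum += math.log2(cond_prob(word, words[:n]))
    return 2.0 ** (-log_sum / len(words))


def uniform_model(word, history):
    """Hypothetical model: eight equi-probable choices, whatever the history."""
    return 1.0 / 8.0


pp = perplexity(["open", "the", "window"], uniform_model)
```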

Zipf’s Law

• Lexical frequency is inversely proportional to rank

– Frequency(n) = Frequency of n-th most frequent word

– Zipf’s Law: Frequency(Rank) = Frequency(1)/Rank

– Thus:

log Frequency(Rank) = log Frequency(1) - log Rank

From G.R. Turner’s web site on Zipf’s law:

http://www.btinternet.com/~g.r.turner/ZipfDoc.htm


Vocabulary Acquisition

• IBM personal E-mail corpus of PDB (by R.L. Mercer)

• static coverage is given by most frequent n words

• dynamic coverage is most recent n words

| Vocabulary | Static Coverage (%) | Dynamic Coverage (%) | Text Size |
|---|---|---|---|
| 5,000 | 92.5 | 95.5 | 56,000 |
| 10,000 | 95.9 | 98.2 | 240,000 |
| 15,000 | 97.0 | 99.0 | 640,000 |
| 20,000 | 97.6 | 99.5 | 1,300,000 |

Language HMMs

• Can take HMMs for each word and combine into a single HMM for the whole language (allows cross-word models)

• Result is usually too large to expand statically in memory

• A two word example is given by:

[Figure: two word HMMs, word1 and word2, entered with probabilities P(word1) and P(word2) and connected by the transitions P(word1|word1), P(word2|word1), P(word1|word2), P(word2|word2)]


HMM Decoding

• Decoding Problem is finding best word sequence:

– ArgMax w1,…,wm P(w1,…,wm | a1,…,an)

• Words w1…wm are fully determined by sequences of states

• Many state sequences produce the same words

• The Viterbi assumption:

– the word sequence derived from the most likely path will be the most

likely word sequence (as would be computed over all paths)

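$$\delta_i(s) = \max P(s_1,\dots,s_i = s \mid a_1,\dots,a_i) = P_s(a_i) \cdot \max_r \left[ \delta_{i-1}(r) \cdot P_{r,s} \right]$$

– $P_s(a_i)$: acoustics for state s for input $a_i$

– $\max_r$: max over previous states r

– $\delta_{i-1}(r)$: likelihood previous state is r

– $P_{r,s}$: transition probability from r to s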
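The Viterbi recursion can be sketched for a toy HMM as below. To stay self-contained the example uses discrete emission tables in place of Gaussian densities, and it scores paths with unnormalized joint probabilities; the two-state model and its numbers are invented for illustration.

```python
def viterbi(observations, states, start, trans, emit):
    """Return (probability, path) of the most likely state sequence."""
    # best[s] = (score of the best path ending in state s, that path)
    best = {s: (start[s] * emit[s][observations[0]], [s]) for s in states}
    for obs in observations[1:]:
        new_best = {}
        for s in states:
            # Max over previous states r of best score at r times transition r -> s.
            score, path = max(
                ((best[r][0] * trans[r][s], best[r][1]) for r in states),
                key=lambda pair: pair[0])
            new_best[s] = (score * emit[s][obs], path + [s])
        best = new_best
    return max(best.values(), key=lambda pair: pair[0])


states = ["A", "B"]
start = {"A": 1.0, "B": 0.0}
trans = {"A": {"A": 0.5, "B": 0.5}, "B": {"A": 0.0, "B": 1.0}}
emit = {"A": {"x": 0.9, "y": 0.1}, "B": {"x": 0.2, "y": 0.8}}
prob, path = viterbi(["x", "y", "y"], states, start, trans, emit)
```

Storing the whole path with each score keeps the sketch short; a practical decoder would store back-pointers and work with log probabilities.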

Visualizing Viterbi Decoding: The Trellis

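$$\delta_i(s) = \max P(s_1,\dots,s_i = s \mid a_1,\dots,a_i) = P_s(a_i) \cdot \max_r \left[ \delta_{i-1}(r) \cdot P_{r,s} \right]$$

[Figure: trellis with states s1, s2, ..., sk on the vertical axis and time steps t(i-1), t(i), t(i+1) with inputs a(i-1), a(i), a(i+1) on the horizontal axis. Each node holds the score of its state at that time, arcs carry transition probabilities such as P1,1, P1,2, P2,1, P1,k and Pk,1, and the best path is highlighted.]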

Viterbi Search: Dynamic Programming

Token Passing

• Algorithm:

– Initialize all states with a token with a null history and the likelihood that it’s a start state

– For each frame ak

• For each token t in state s with probability P(t), history H

– For each state r

» Add new token to r with probability P(t) Ps,r Pr(ak), and history s.H

• Time synchronous from left to right

• Allows incremental results to be evaluated


Pruning the Search Space

• Entire search space for Viterbi search is much too large

• Solution is to prune tokens for paths whose score is too low

• Typical method is to use:

– histogram: only keep at most n total hypotheses

– beam: only keep hypotheses whose score is within a fixed fraction of the best score

• Need to balance a small n and a tight beam, which limit the search, against search error (good hypotheses falling off the beam)

• HMM densities are usually scaled differently than the discrete likelihoods from the language model

– typical solution: boost language model’s dynamic range, using P(w)^n P(a|w), usually with n ~ 15

• Often include penalty for each word to favor hypotheses with fewer words
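Beam and histogram pruning, together with language-model scaling, can be sketched in the log domain, where "a fraction of the best score" becomes a fixed difference from the best log score. The token representation, the function names and the example numbers are assumptions for illustration.

```python
def prune(tokens, beam_width, max_tokens):
    """tokens: list of (log_score, history). Apply beam, then histogram pruning."""
    if not tokens:
        return []
    best = max(score for score, _ in tokens)
    # Beam: drop tokens scoring more than beam_width below the best token.
    in_beam = [t for t in tokens if t[0] >= best - beam_width]
    # Histogram: keep at most max_tokens of the survivors.
    in_beam.sort(key=lambda t: t[0], reverse=True)
    return in_beam[:max_tokens]


def combined_score(log_acoustic, log_lm, num_words, lm_scale=15.0, word_penalty=0.0):
    """Log of P(a|w) * P(w)^n, minus an optional penalty per word."""
    return log_acoustic + lm_scale * log_lm - word_penalty * num_words


tokens = [(-1.0, "a"), (-2.0, "b"), (-10.0, "c"), (-1.5, "d")]
kept = prune(tokens, beam_width=5.0, max_tokens=2)
```

Here token "c" falls outside the beam, and the histogram limit then removes "b", leaving the two best hypotheses.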

N-best Hypotheses and Word Graphs

• Keep multiple tokens and return n-best paths/scores:

– p1 flights from Boston today

– p2 flights from Austin today

– p3 flights for Boston to pay

– p4 lights for Boston to pay

• Can produce a packed word graph (a.k.a. lattice)

– likelihoods of paths in lattice should equal likelihood for n-best

[Figure: word lattice packing the four hypotheses, with arcs labeled flights, lights, from, for, Austin, Boston, today, to, pay]

Search-based Decoding

• A* search:

– Compute all initial hypotheses and place in priority queue

– For best hypothesis in queue

• extend by one observation, compute next state score(s) and

place into the queue

• Scoring now compares derivations of different lengths

– would like to, but can’t compute cost to complete until all data is seen

– instead, estimate with simple normalization for length

– usually prune with beam and/or histogram constraints

• Easy to include unbounded amounts of history because no collapsing of histories as in dynamic programming n-gram

• Also known as stack decoder (priority queue is “stack”)

Multiple Pass Decoding

• Perform multiple passes, applying successively more fine-grained language models

• Can much more easily go beyond finite state or n-gram

• Can use for Viterbi or stack decoding

• Can use word graph as an efficient interface

• Can compute likelihood to complete hypotheses after each

pass and use in next round to tighten beam search

• First pass can even be a free phone decoder without a

word-based language model


Measuring Recognition Accuracy

• Word Error Rate = (Insertions + Deletions + Substitutions) / Words

• Example scoring:

– actual utterance: four six seven nine three three seven

– recognizer: four oh six seven five three seven

– errors: insert (oh), subst (nine → five), delete (one three)

– WER: (1 + 1 + 1)/7 = 43%

• Would like to study concept accuracy

– typically count only errors on content words [application dependent]

– ignore case marking (singular, plural, etc.)

• For word/concept spotting applications:

– recall: percentage of target words (concept) found

– precision: percentage of hypothesized words (concepts) in target
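Word error rate is usually computed from a minimum edit distance between the reference and the recognizer output. The sketch below uses a standard Levenshtein alignment over words and reproduces the example on this slide; the function names are illustrative.

```python
def word_errors(reference, hypothesis):
    """Minimum number of insertions, deletions and substitutions over words."""
    prev = list(range(len(hypothesis) + 1))
    for i, ref_word in enumerate(reference, start=1):
        cur = [i]
        for j, hyp_word in enumerate(hypothesis, start=1):
            cur.append(min(prev[j] + 1,                            # deletion
                           cur[j - 1] + 1,                         # insertion
                           prev[j - 1] + (ref_word != hyp_word)))  # match or substitution
        prev = cur
    return prev[-1]


def wer(reference, hypothesis):
    """Errors divided by the number of words in the reference."""
    return word_errors(reference, hypothesis) / len(reference)


ref = "four six seven nine three three seven".split()
hyp = "four oh six seven five three seven".split()
rate = wer(ref, hyp)
```

Because insertions count as errors, the rate can exceed 100% when the recognizer outputs many extra words.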


Empirical Recognition Accuracies

• Cambridge HTK, 1997; multipass HMM w. lattice rescoring

• Top Performer in ARPA’s HUB-4: Broadcast News Task

• 65,000 word vocabulary; Out of Vocabulary: 0.5%

• Perplexities:

– word bigram: 240 (6.9 million bigrams)

– backoff trigram of 1000 categories: 238 (803K bi, 7.1G tri)

– word trigram: 159 (8.4 million trigrams)

– word 4-gram: 147 (8.6 million 4-grams)

– word 4-gram + category trigram: 137

• Word Error Rates:

– clean, read speech: 9.4%

– clean, spontaneous speech: 15.2%

– low fidelity speech: 19.5%

Empirical Recognition Accuracies (cont’d)

• Lucent 1998, single pass HMM

• Typical of real-time telephony performance (low fidelity)

• 3,000 word vocabulary; Out of Vocabulary: 1.5%

• Blended models from customer/operator & customer/system

• Perplexities:

| | customer/op | customer/system |
|---|---|---|
| bigram | 105.8 (27,200) | 32.1 (12,808) |
| trigram | 99.5 (68,500) | 24.4 (25,700) |

• Word Error Rate: 23%

• Content Term (single, pair, triple of words) Precision/Recall

– one-word terms: 93.7 / 88.4

– two-word terms: 96.9 / 85.4

– three-word terms: 98.5 / 84.3

Confidence Scoring and Rejection

• Alternative to standard acoustic density scoring

– compute HMM acoustic score for word(s) in usual way

– compute baseline score for an anti-model

– compute hypothesis ratio (Word Score / Baseline Score)

– test hypothesis ratio vs. threshold

• Can be applied to:

– free word spotting (given pronunciations)

– (word-by-word) acoustic confidence scoring for later processing

– verbal information verification

• existing info: name, address, social security number

• password


Semantic Interpretation: Word Strings

• Content is just words

– System: What is your address?

– User: fourteen eleven main street

• Can also do concept extraction / keyword(s) spotting

– User: My address is fourteen eleven main street

• Applications

– template filling

– directory services

– information retrieval


Semantic Interpretation: Pattern-Based

• Simple (typically regular) patterns specify content

• ATIS (Air Travel Information System) Task:

– System: What are your travel plans?

– User: [On Monday], I’m going [from Boston] [to San Francisco].

– Content: [DATE=Monday, ORIGIN=Boston, DESTINATION=SFO]

• Can combine content-extraction and language modeling

– but can be too restrictive as a language model

• Java Speech API: (curly brackets show semantic ‘actions’)

public <command> = <action> [<object>] [<polite>];

<action> = open {OP} | close {CL} | move {MV};

<object> = [<this_that_etc>] window | door;

<this_that_etc> = a | the | this | that | the current;

<polite> = please | kindly;

• Can be generated and updated on the fly (e.g. Web Apps)
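Pattern-based content extraction for the travel example can be sketched with regular expressions. The patterns below are hypothetical and cover only this one sentence shape; they return the city name as spoken and do not map it to an airport code such as SFO.

```python
import re

# Hypothetical slot patterns; a real system would use a task grammar.
SLOT_PATTERNS = {
    "DATE": re.compile(
        r"\bon (monday|tuesday|wednesday|thursday|friday|saturday|sunday)\b"),
    "ORIGIN": re.compile(r"\bfrom ([a-z]+(?: [a-z]+)?)(?= to\b|[.,]|$)"),
    "DESTINATION": re.compile(r"\bto ([a-z]+(?: [a-z]+)?)(?=[.,]|$)"),
}


def extract_slots(utterance):
    """Return a dict of slot name to matched text for every pattern that fires."""
    text = utterance.lower()
    slots = {}
    for name, pattern in SLOT_PATTERNS.items():
        match = pattern.search(text)
        if match:
            slots[name] = match.group(1)
    return slots


slots = extract_slots("On Monday, I'm going from Boston to San Francisco.")
```

Slots that do not match are simply absent from the result, so a dialogue manager can ask a follow-up question for each missing one.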


Semantic Interpretation: Parsing

• In general case, have to uncover who did what to whom:

– System: What would you like me to do next?

– User: Put the block in the box on Platform 1. [ambiguous]

– System: How can I help you?

– User: Where is A Bug’s Life playing in Summit?

• Requires some kind of parsing to produce relations:

– Who did what to whom:

?(where(present(in(Summit,play(BugsLife)))))

– This kind of representation often used for machine translation

• Often transferred to flatter frame-based representation:

– Utterance type: where-question

– Movie: A Bug’s Life

– Town: Summit


Robustness and Partiality

• Controlled Speech

– limited task vocabulary; limited task grammar

• Spontaneous Speech

– Can have high out-of-vocabulary (OOV) rate

– Includes restarts, word fragments, omissions, phrase fragments, disagreements, and other disfluencies

– Contains much grammatical variation

– Causes high word error-rate in recognizer

• Parsing is often partial, allowing:

– omission

– parsing fragments


Recorded Prompts

• The simplest (and most common) solution is to record prompts spoken by a (trained) human

• Produces human quality voice

• Limited by number of prompts that can be recorded

• Can be extended by limited cut-and-paste or template filling


Speech Synthesis

• Rule-based Synthesis

– Uses linguistic rules (+/- training) to generate features

– Example: DECTalk

• Concatenative Synthesis

– Record basic inventory of sounds

– Retrieve appropriate sequence of units at run time

– Concatenate and adjust durations and pitch

– Waveform synthesis

Diphone and Polyphone Synthesis

• Phone sequences capture co-articulation

• Cut speech in positions that minimize context contamination

• Need single phones, diphones and sometimes triphones

• Reduce number collected by

– phonotactic constraints

– collapsing in cases of no co-articulation

• Data Collection Methods

– Collect data from a single (professional) speaker

– Select text with maximal coverage (typically with greedy algorithm), or

– Record minimal pairs in desired contexts (real words or nonsense)

Duration Modeling

Must generate segments with the appropriate duration

• Segmental Identity

– /ai/ in like twice as long as /I/ in lick

• Surrounding Segments

– vowels longer following voiced fricatives than voiceless stops

• Syllable Stress

– onsets and nuclei of stressed syllables longer than in unstressed

• Word “importance”

– word accent with major pitch movement lengthens

• Location of Syllable in Word

– word ending longer than word starting longer than word internal

• Location of the Syllable in the Phrase

– phrase final syllables longer than same syllable in other positions


Intonation: Tone Sequence Models

• Functional Information can be encoded via tones:

– given/new information (information status)

– contrastive stress

– phrasal boundaries (clause structure)

– dialogue act (statement/question/command)

• Tone Sequence Models

– F0 contours generated from phonologically distinctive tones/pitch accents which are locally independent

– generate a sequence of tonal targets and fit with signal processing

Intonation for Function

• The ToBI (Tones and Break Indices) system is one example:

– Pitch Accent * (H*, L*, H*+L, H+L*, L*+H, L+H*)

– Phrase Accent - (H-, L-)

– Boundary Tone % (H%, L%)

– Intonational Phrase: <Pitch Accent>+ <Phrase Accent> <Boundary Tone>

[Figure: example F0 contours, statement vs. question]

source: Multilingual Text-to-Speech Synthesis, R. Sproat, ed., Kluwer, 1998


Text Markup for Synthesis

• Bell Labs TTS Markup

– r(0.9) L*+H(0.8) Humpty L*+H(0.8) Dumpty r(0.85) L*(0.5) sat on a H*(1.2) wall.

– Tones: Tone(Prominence)

– Speaking Rate: r(Rate) and pauses

– Top Line (highest pitch); Reference Line (reference pitch); Base Line (lowest pitch)

• SABLE is an emerging standard extending SGML

http://www.cstr.ed.ac.uk/projects/sable.html

– marks: emphasis(#), break(#), pitch(base/mid/range,#), rate(#), volume(#), semanticMode(date/time/email/URL/...), speaker(age,sex)

– Implemented in Festival Synthesizer (free for research, etc.):

http://www.cstr.ed.ac.uk/projects/festival.html

Intonation in Bell Labs TTS

• Generate a sequence of F0 targets for synthesis

• Example:

– We were away a year ago.

– phones: w E w R & w A & y E r & g O

– Default Declarative intonation: (H%) H* L- L% [question: L* H- H%]

source: Multilingual Text-to-Speech Synthesis, R. Sproat, ed., Kluwer, 1998


Signal Processing for Speech Synthesis

• Diphones recorded in one context must be generated in other contexts

• Features are extracted from recorded units

• Signal processing manipulates features to smooth boundaries where units are concatenated

• Signal processing modifies signal via ‘interpolation’

– intonation

– duration

The Source-Filter Model of Synthesis

• Model of features to be extracted and fitted

• Excitation or Voicing Source(s) to model sound source

– standard wave of glottal pulses for voiced sounds

– randomly varying noise for unvoiced sounds

– modification of airflow due to lips, etc.

– high frequency (F0 rate), quasi-periodic, choppy

– modeled with vector of glottal waveform patterns in voiced regions

• Acoustic Filter(s)

– shapes the frequency character of vocal tract and radiation character at the lips

– relatively slow (samples around 5ms suffice) and stationary

– modeled with LPC (linear predictive coding)

Barge-in

• Technique to allow speaker to interrupt the system’s speech

• Combined processing of input signal and output signal

• Signal detector runs looking for speech start and endpoints

– tests a generic speech model against noise model

– typically cancels echoes created by outgoing speech

• If speech is detected:

– Any synthesized or recorded speech is cancelled

– Recognition begins and continues until end point is detected


Speech Application Programming Interfaces

• Abstract from recognition/synthesis engines

• Recognizer and synthesizer loading

• Acoustic and grammar model loading (dynamic updates)

• Recognition

– online

– n-best or lattice

• Synthesis

– markup

– barge in

• Acoustic control

– telephony interface

– microphone/speaker interface


Speech API Examples

• SAPI: Microsoft Speech API (rec&synth)

– communicates through COM objects

– instances: most systems implement all or some of this (Dragon, IBM, Lucent, L&H, etc.)

• JSAPI: Java Speech API (rec & synth)

– communicates through Java events (like GUI)

– concurrency through threads

– instances: IBM ViaVoice (rec), L&H (synth)

• (J)HAPI: (Java) HTK API (recognition)

– communicates through C or Java port of C interface

– e.g.: Entropic Cambridge Research Lab’s HMM Tool Kit (HTK)

• Galaxy (rec & synth)

– communicates through a production system scripting language

– MIT System, ported by MITRE for DARPA Communicator


Discourse & Dialogue Processing

• Discourse interpretation:

– Understand what the user really intends by interpreting utterances in context

• Dialogue management:

– Determine system goals in response to user utterances based on user intention

• Response generation:

– Generate natural language utterances to achieve the selected goals

Discourse Interpretation

• Goal: understand what the user really intends

• Example: Can you move it?

– What does “it” refer to?

– Is the utterance intended as a simple yes-no query or a request to perform an action?

• Issues addressed:

– Reference resolution

– Intention recognition

• Interpret user utterances in context


Reference Resolution

U: Where is A Bug’s Life playing in Summit?

S: A Bug’s Life is playing at the Summit theater.

U: When is it playing there?

S: It’s playing at 2pm, 5pm, and 8pm.

U: I’d like 1 adult and 2 children for the first show. How much would that cost?

• Knowledge sources:

– Domain knowledge

– Discourse knowledge

– World knowledge


Reference Resolution: In Theory

• Focus stacks:

– Maintain recent objects in stack

– Select objects that satisfy semantic/pragmatic constraints starting from top of stack

– May take into account discourse structure

• Centering:

– Backward-looking center (Cb): object connecting the current sentence with the previous sentence

– Forward-looking centers (Cf): potential Cb of the next sentence

– Rule-based filtering & ranking of objects for pronoun resolution

Reference Resolution: In Practice

• Non-existent: does not allow the use of anaphoric references

• Allows only simple references:

– utilizes the focus stack reference resolution mechanism

– does not take into account discourse structure information

• Example:

U: Where is A Bug’s Life playing in Summit?

[Focus stack: Summit, A Bug’s Life]

S: A Bug’s Life is playing at the Summit theater.

[Focus stack: Summit theater, A Bug’s Life]

U: When is it playing there?
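A focus stack for simple reference resolution can be sketched as below. The class, the semantic kinds ("movie", "location") and the mapping of "it" and "there" to those kinds are assumptions made for illustration; the sketch ignores discourse structure, as the slide says practical systems often do.

```python
class FocusStack:
    """Keeps recently mentioned objects, most recent on top."""

    def __init__(self):
        self._items = []  # (name, kind) pairs, most recent last

    def mention(self, name, kind):
        """Move or push an object to the top of the stack."""
        self._items = [(n, k) for n, k in self._items if n != name]
        self._items.append((name, kind))

    def resolve(self, kind):
        """Return the most recent object satisfying the semantic constraint."""
        for name, item_kind in reversed(self._items):
            if item_kind == kind:
                return name
        return None


stack = FocusStack()
# U: Where is A Bug's Life playing in Summit?
stack.mention("A Bug's Life", "movie")
stack.mention("Summit", "location")
# S: A Bug's Life is playing at the Summit theater.
stack.mention("A Bug's Life", "movie")
stack.mention("Summit theater", "location")
# U: When is it playing there?
it = stack.resolve("movie")
there = stack.resolve("location")
```

With this history "it" resolves to the movie and "there" to the most recently mentioned location, the Summit theater.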

Intention Recognition

A: Would you like to go to the hairdresser?

B: I have to wash my hair.

• B’s utterance should be interpreted as an acceptance of A’s proposal.

A: What’s that smell around here?

B: I have to wash my hair.

• B’s utterance should be interpreted as an answer to A’s question.

A: Would you be interested in going out to dinner tonight?

B: I have to wash my hair.

• B’s utterance should be interpreted as a rejection of A’s proposal.

Intention Recognition (Cont’d)

• Goal: to recognize the intent of each user utterance as one (or more) of a set of dialogue acts based on context

• Sample dialogue acts:

– Verbmobil

• Greet/Thank/Bye

• Suggest

• Accept/Reject

• Confirm

• Clarify-Query/Answer

• Give-Reason

• Deliberate

– Switchboard DAMSL

• Conventional-closing

• Statement-(non-)opinion

• Agree/Accept

• Acknowledgment

• Yes-No-Question/Yes-Answer

• Non-verbal

• Abandoned

• On-going standardization efforts (Discourse Resource Initiative)

Intention Recognition: In Theory

• Knowledge sources:

– Overall dialogue goals

– Orthographic features, e.g.:

• punctuation

• cue words/phrases: “but”, “furthermore”, “so”

• transcribed words: “would you please”, “I want to”

– Dialogue history, i.e., previous dialogue act types

– Dialogue structure, e.g.:

• subdialogue boundaries

• dialogue topic changes

– Prosodic features of utterance: duration, pause, F0, speaking rate


Intention Recognition: In Theory (Cont’d)

• Finite-state dialogue grammar:

– e.g. [Figure: finite-state automaton with transitions labeled S / Greet, U / Question, S / Answer, U / Question, U / Bye]

• Plan-based discourse understanding:

– Recipes: templates for performing actions

– Inference rules: to construct plausible plans

• Empirical methods:

– Probabilistic dialogue act classifiers: HMMs

– Rule-based dialogue act recognition: CART, Transformation-based learning

Intention Recognition: In Practice

• Makes assumptions about (high-level) task-specific

intentions: e.g.,

– Call routing: giving destination information

– ATIS: requesting flight information

– Movie information system: movie showtime or theater playlist

information

• Does not allow user-initiated complex dialogue acts, e.g.

confirmation, clarification, or indirect responses

S1: What’s your account number?

U1: Is that the number on my ATM card?

S2: Would you like to transfer $1,500 from savings to checking?

U2: If I have enough in savings.


Intention Recognition: In Practice (Cont’d)

• User utterances can play one of two roles:

– Identify one of a set of possible task intentions

– Provide necessary information for performing a task

• Based on either keywords in an utterance or its

syntactic/semantic representation

• Maps keywords or representations to intentions using:

– Template matching

– Probabilistic model

– Vector-based similarity measures
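Template matching, the first of the mapping options above, might look like the following sketch. The patterns and the intention names (`ask-showtime`, `ask-playlist`) are hypothetical and chosen only to fit the movie information domain used in this tutorial.

```python
import re

# Hypothetical templates: patterns and intention names are illustrative only.
TEMPLATES = [
    (re.compile(r"\b(what time|when)\b.*\bplaying\b", re.I), "ask-showtime"),
    (re.compile(r"\bwhat(?:'s| is)\b.*\bplaying at\b", re.I), "ask-playlist"),
]


def match_intention(utterance):
    """Return the intention of the first matching template, or None."""
    for pattern, intention in TEMPLATES:
        if pattern.search(utterance):
            return intention
    return None
```

Templates are tried in order, so more specific patterns should come first; an utterance that matches none of them returns `None` and would need a fallback such as a reprompt.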


Intention Recognition: Example

U: What time is A Bug’s Life playing at the Summit

theater?

• Using keyword extraction and vector-based similarity

measures:

– Intention: Ask-Reference: _time

– Movie: A Bug’s Life

– Theater: the Summit quadplex
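The example above can be approximated with a bag-of-words cosine similarity plus lexicon lookup for the slots. This is a sketch under stated assumptions: the keyword vectors, stopword list, and movie/theater lexicons are invented, and a deployed system would derive its vectors from training data rather than hand-written keyword strings.

```python
import math
import re
from collections import Counter

# Hypothetical keyword vectors per intention; the vocabulary is invented.
INTENTION_KEYWORDS = {
    "Ask-Reference: _time": "what time when showtime playing start",
    "Ask-Reference: _playlist": "what which movies films playing showing list",
}
MOVIES = ["A Bug's Life", "Antz", "Saving Private Ryan"]   # assumed lexicon
THEATERS = ["Summit", "Paramount", "Fairmont"]             # assumed lexicon
STOPWORDS = {"is", "at", "the", "a", "theater"}


def tokens(text):
    """Lowercase, split into words, and drop stopwords."""
    return [w for w in re.findall(r"[a-z']+", text.lower()) if w not in STOPWORDS]


def cosine(u, v):
    """Cosine similarity between two Counter word vectors."""
    dot = sum(u[w] * v[w] for w in u)
    norm = math.sqrt(sum(c * c for c in u.values())) * math.sqrt(sum(c * c for c in v.values()))
    return dot / norm if norm else 0.0


def find_mention(names, utterance):
    """Return the first lexicon entry mentioned in the utterance, if any."""
    lowered = utterance.lower()
    return next((n for n in names if n.lower() in lowered), None)


def interpret(utterance):
    query = Counter(tokens(utterance))
    scores = {name: cosine(query, Counter(tokens(words)))
              for name, words in INTENTION_KEYWORDS.items()}
    return {
        "intention": max(scores, key=scores.get),
        "movie": find_mention(MOVIES, utterance),
        "theater": find_mention(THEATERS, utterance),
    }
```

Mapping the matched theater keyword to a canonical name such as "the Summit quadplex" would need an extra lookup table, which is omitted here.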


Dialogue Management: Motivating Examples

• Dialogue 1:

S: Would you like movie showtime or theater playlist

information?

U: Movie showtime.

S: What movie do you want showtime information about?

U: Saving Private Ryan.

S: At what theater do you want to see Saving Private

Ryan?

U: Paramount theater.

S: Saving Private Ryan is not playing at the Paramount

theater.


DM: Motivating Examples (Cont’d)

• Dialogue 2:

S: How may I help you?

U: When is Saving Private Ryan playing?

S: For what theater?

U: The Paramount theater.

S: Saving Private Ryan is not playing at the

Paramount theater, but it’s playing at the Madison

theater at 3:00, 5:30, 8:00, and 10:30.


DM: Motivating Examples (Cont’d)

• Dialogue 3:

S: How may I help you?

U: When is Saving Private Ryan playing?

S: For what theater?

U: The Paramount theater.

S: Saving Private Ryan is playing at the Fairmont

theater at 6:00 and 8:30.

U: I wanted to know about the Paramount theater, not

the Fairmont theater.

S: Saving Private Ryan is not playing at the

Paramount theater, but it’s playing at the Madison

theater at 3:00, 5:30, 8:00, and 10:30.


Comparison of Sample Dialogues

• Dialogue 1:

– System-initiative

– Implicit confirmation

– Merely informs user of failed query

– Mechanical

– Least efficient

• Dialogue 2:

– Mixed-initiative

– No confirmation

– Suggests alternative when query fails

– More natural

– Most efficient

• Dialogue 3:

– Mixed-initiative

– No confirmation

– Suggests alternative when query fails

– More natural

– Moderately efficient

Dialogue Management

• Goal: determine what to accomplish in response to

user utterances, e.g.:

– Answer user question

– Solicit further information

– Confirm/Clarify user utterance

– Notify invalid query

– Notify invalid query and suggest alternative

• Interface between user/language processing

components and system knowledge base
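The choice among these response goals can be sketched as a small decision procedure. Everything specific here is an assumption for illustration: the `Interpretation` structure, the required slots, the confidence threshold, and the dictionary standing in for the system knowledge base.

```python
from dataclasses import dataclass, field


@dataclass
class Interpretation:
    """Hypothetical output of the interpretation components."""
    slots: dict                                       # e.g. {"movie": "Antz"}
    confidence: dict = field(default_factory=dict)    # per-slot recognition scores


REQUIRED = ("movie", "theater")   # assumed slots for a showtime query
CONFIRM_BELOW = 0.5               # assumed confidence threshold


def select_goal(interp, showtimes):
    """Pick one of the response goals listed above.

    `showtimes` maps (movie, theater) to a list of times and stands in
    for the system knowledge base.
    """
    for slot in REQUIRED:
        if slot not in interp.slots:
            return ("solicit", slot)
        if interp.confidence.get(slot, 1.0) < CONFIRM_BELOW:
            return ("confirm", slot)
    movie, theater = interp.slots["movie"], interp.slots["theater"]
    if showtimes.get((movie, theater)):
        return ("answer", showtimes[(movie, theater)])
    # Query failed: look for other theaters showing the same movie.
    alternatives = sorted(t for (m, t), times in showtimes.items()
                          if m == movie and times)
    if alternatives:
        return ("notify_and_suggest", alternatives)
    return ("notify_invalid", None)
```

The function only touches the interpretation result and the knowledge base, which mirrors the dialogue manager's role as the interface between the two.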


Dialogue Management (Cont’d)

• Main design issues:

– Functionality: how much should the system do?

– Process: how should the system do it?

• Affected by:

– Task complexity: how hard the task is

– Dialogue complexity: what dialogue phenomena are allowed

• Affects:

– robustness

– naturalness

– perceived intelligence


Task Complexity

• Application dependent

• Examples, from simple to complex:

– Call routing

– Weather information

– Automatic banking

– ATIS

– Travel planning

– University course advisement

• Directly affects:

– Types and quantity of system knowledge

– Complexity of system’s reasoning abilities

Dialogue Complexity

• Determines what can be talked about:

– The task only

– Subdialogues: e.g., clarification, confirmation

– The dialogue itself: meta-dialogues

• Could you hold on for a minute?

• What was that click? Did you hear it?

• Determines who can talk about them:

– System only

– User only

– Both participants


Dialogue Management: Functionality

• Determines the set of possible goals that the system may

select at each turn

• At the task level, dictated by task complexity

• At the dialogue level, determined by system designer in

terms of dialogue complexity:

– Are subdialogues allowed?

– Are meta-dialogues allowed?

– Only by the system, by the user, or by both agents?


DM Functionality: In Theory

• Task complexity: moderate to complex

– Travel planning

– University course advisement

• Dialogue complexity:

– System/user-initiated complex subdialogues

• Embedded negotiation subdialogues

• Expressions of doubt

– Meta-dialogues

– Multiple dialogue threads


DM Functionality: In Practice

• Task complexity: simple to moderate

– Call routing

– Weather information query

– Train schedule inquiry

• Dialogue complexity:

– About task only

– Limited system-initiated subdialogues


Dialogue Management: Process

• Determines how the system will go about selecting among

the possible goals

• At the dialogue level, determined by system designer in

terms of initiative strategies:

– System-initiative: system always has control, user only responds to

system questions

– User-initiative: user always has control, system passively answers

user questions

– Mixed-initiative: control switches between system and user using

fixed rules

– Variable-initiative: control switches between system and user

dynamically based on participant roles, dialogue history, etc.


DM Process: In Theory

• Initiative strategies:

– Mixed-initiative

– Variable-initiative

• Mechanisms for modeling initiative:

– Planning and reasoning

– Theorem proving

– Belief modeling

• Knowledge sources for modeling initiative:

– System beliefs, user beliefs, and mutual beliefs

– System domain knowledge

– Dialogue history

– User preferences

DM Process: In Practice

• Initiative strategies:

– User-initiative

– System-initiative

– Mixed-initiative

– Variable-initiative

• Mechanisms for modeling initiative:

– System and mixed-initiative: finite-state machines

– Variable-initiative: evidential model for computing initiative

• Knowledge sources:

– Dialogue history: e.g. user fails to make progress in task

– Participant roles: advisor/advisee vs. collaborators

– Features of current utterance, e.g., ambiguous or underspecified utterances
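The slides do not spell out the evidential model. One possible reading, assumed here, is Dempster-Shafer combination of evidence about which participant should hold the initiative. The cues and mass values below are invented for illustration and are not taken from any deployed system.

```python
from itertools import product

SYSTEM, USER = frozenset({"system"}), frozenset({"user"})
EITHER = SYSTEM | USER          # mass on EITHER means "no commitment"


def combine(m1, m2):
    """Dempster's rule of combination for two mass functions."""
    combined, conflict = {}, 0.0
    for (a, x), (b, y) in product(m1.items(), m2.items()):
        both = a & b
        if both:
            combined[both] = combined.get(both, 0.0) + x * y
        else:
            conflict += x * y   # evidence pointing at different holders
    if conflict >= 1.0:
        raise ValueError("totally conflicting evidence")
    return {k: v / (1.0 - conflict) for k, v in combined.items()}


def initiative_holder(masses):
    """Return 'system' if it has more committed mass than the user."""
    return "system" if masses.get(SYSTEM, 0.0) > masses.get(USER, 0.0) else "user"


# Hypothetical cues and mass values, invented for illustration.
prior = {USER: 0.3, EITHER: 0.7}                   # user held initiative last turn
ambiguous_utterance = {SYSTEM: 0.6, EITHER: 0.4}   # cue favoring a system takeover
belief = combine(prior, ambiguous_utterance)
```

Each further cue, such as the user failing to make progress on the task, would be folded in with another call to `combine`.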


Dialogue Management: Summary

• In theory:

– Focuses on developing algorithms for addressing difficult dialogue

phenomena in complex tasks

• In practice:

– Focuses on developing robust, real-time dialogue strategies for

handling common dialogue phenomena in simpler tasks


Dialogue Management: Example

• System for providing movie showtime and theater playlist

information

• Functionality:

– Task: provide simple movie information

– Dialogue: allow system-initiated subdialogues

• Process:

– Variable-initiative


Dialogue Management Example (Cont’d)

<U has task & dialogue initiative>

U: When is Antz playing at the Fairmont theater?

<S has dialogue initiative (low score for theater)>

<goal: confirm Paramount theater>

S: Did you say the Paramount theater?

U: No, the Fairmont theater.

<S has no initiative>

<goal: answer user question>

S: Antz is playing at the Fairmont theater at 7pm.

U: When is it playing at the Summit theater?

<S has task & dialogue initiative>

<goal: notify failure & suggest alternative>

S: Antz is not playing at the Summit theater, but it’s

playing at the AMC theater in Summit at 8pm.


Response Generation

U: Is Saving Private Ryan playing at the Chatham cinema?

S: No, it’s not.

• S provides elliptical response

S: No, Saving Private Ryan is not playing at the Chatham cinema.

• S provides full response (which provides grounding information)

S: No, Saving Private Ryan is not playing at the Chatham cinema; the theater’s under renovation.

• S provides full response and supporting evidence

Response Generation (Cont’d)

• Goal: generate natural language utterances to achieve

goal(s) selected by the dialogue manager

• Issues:

– Content selection: determining what to say

– Surface realization: determining how to say it

• Generation gap: discrepancy between the actual output of

the content selection process and the expected input of the

surface realization process


Content Selection

• Goal: determine the propositional content of utterances to

achieve goal(s)

• Examples:

– Antz is not playing at the Maplewood theater; [Nucleus] the theater’s under renovation. (evidence) [Satellite]

– Would you like the suite? [Nucleus] It’s the same price as the regular room. (motivation) [Satellite]

– Can you get the groceries from the car? [Nucleus] The key is on the dryer. (enablement) [Satellite]

Content Selection: In Theory

• Knowledge sources:

– Domain knowledge base

– User beliefs

– User model: user characteristics, preferences, etc.

– Dialogue history

• Content selection mechanisms:

– Schemas: patterns of predicates

– Rule-based generation

– Plan-based generation:

• Recipes: templates for performing actions

• Planner: to construct plans for given goal

– Case-based reasoning


Content Selection: In Practice

• Knowledge sources:

– Domain knowledge base

– Dialogue history

• Pre-determined content selection strategies:

– Nucleus only, no satellite information

– Nucleus + fixed satellite
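The two pre-determined strategies can be sketched as a lookup that returns either the nucleus alone or the nucleus plus one fixed satellite relation. The knowledge base layout and the strategy names are assumptions; the example propositions echo the Maplewood example from the Content Selection slide.

```python
# Hypothetical knowledge base layout; the propositions echo the earlier example.
KNOWLEDGE_BASE = {
    ("Antz", "Maplewood"): {
        "nucleus": "Antz is not playing at the Maplewood theater",
        "satellites": {"evidence": "the theater's under renovation"},
    },
}


def select_content(query, strategy="nucleus_only", relation="evidence"):
    """Return the propositions to convey for a query.

    strategy is "nucleus_only" or "nucleus_plus_satellite"; the latter adds
    one fixed satellite relation whenever the knowledge base provides it.
    """
    entry = KNOWLEDGE_BASE[query]
    content = [("nucleus", entry["nucleus"])]
    if strategy == "nucleus_plus_satellite" and relation in entry["satellites"]:
        content.append((relation, entry["satellites"][relation]))
    return content
```

Because the satellite relation is fixed in advance, no user model or planner is consulted, which is the simplification that separates this from the mechanisms listed under "In Theory".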


Surface Realization

• Goal: determine how the selected content will be conveyed

by natural language utterances

• Examples:

– Antz is showing (shown) at the Maplewood theater.

– The Maplewood theater is showing Antz.

– It is at the Maplewood theater that Antz is shown.

– Antz, that’s what’s being shown at the Maplewood theater.

• Issues:

– Clausal structure construction

– Lexical selection


Surface Realization: In Theory

• Typical surface generator requires as input:

– Semantic representation to be realized

– Clausal structure for generated utterance

• Surface realization component utilizes a grammar to

generate utterance that conveys the given semantic

representation


Surface Realization: In Practice

• Canned utterances:

– Pre-determined utterances for goals; e.g.:

• Greetings: Hello, this is the ABC bank’s operator.

• Repeat: Could you please repeat your request?

– Facilitates pre-recorded prompts for speech output

• Template-based generation:

– Templates for goals; e.g.:

• Notification: Your call is being transferred to X.

• Inform: A,B,C,D, and E are playing at the F theater.

• Clarify: Did you say X or Y?

– Needs cut-and-paste of pre-recorded segments or full TTS system
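Template-based generation as described above can be sketched with ordinary string templates. The template wording follows the slide; the slot names (`destination`, `movies`, `theater`, `first`, `second`) and the singular/plural handling are assumptions added for this sketch.

```python
def join_items(items):
    """Join names as 'A', 'A and B', or 'A, B, and C'."""
    items = list(items)
    if len(items) <= 2:
        return " and ".join(items)
    return ", ".join(items[:-1]) + ", and " + items[-1]


# Templates keyed by goal; the slot names are assumptions for this sketch.
TEMPLATES = {
    "notification": "Your call is being transferred to {destination}.",
    "inform": "{movies} {verb} playing at the {theater} theater.",
    "clarify": "Did you say {first} or {second}?",
}


def realize(goal, **slots):
    """Fill the template for a goal with the given slot values."""
    if goal == "inform":
        movies = slots.pop("movies")
        slots["verb"] = "is" if len(movies) == 1 else "are"
        slots["movies"] = join_items(movies)
    return TEMPLATES[goal].format(**slots)
```

For instance, `realize("clarify", first="Paramount", second="Fairmont")` yields "Did you say Paramount or Fairmont?". The variable slots are the parts that would need spliced recordings or TTS for speech output.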


Dialogue Evaluation

• Goal: determine how “well” a dialogue system performs

• Main difficulties:

– No strict right or wrong answers

– Difficult to determine what features make a dialogue system better

than another

– Difficult to select metrics that contribute to the overall “goodness” of

the system

– Difficult to determine how the metrics compensate for one another

– Expensive to collect new data for evaluating incremental

improvement of systems


Dialogue Evaluation (Cont’d)

• System-initiative, explicit confirmation

– better task success rate

– lower WER

– longer dialogues

– fewer recovery subdialogues

– less natural

• Mixed-initiative, no confirmation

– lower task success rate

– higher WER

– shorter dialogues

– more recovery subdialogues

– more natural

Dialogue Evaluation Paradigms

• Evaluating the end result only:

– Reference answers

• Evaluating both the end result and the process toward it:

– Evaluation metrics

– Performance functions


Evaluation Paradigms: Reference Answers

• Evaluates the task success rate only

• Suitable for query-answering systems for which a correct

answer can be defined for each query

• For each query:

– Obtain answer from dialogue system

– Compare with reference answer

– Score system performance

• Advantage: simple

• Disadvantage: ignores many other important factors that

contribute to quality of dialogue systems
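The per-query procedure above amounts to computing a task success rate. In this sketch, `system` is any callable that maps a query to an answer, and exact match after whitespace normalization is an assumed scoring rule; real evaluations often need a more forgiving comparison.

```python
def task_success_rate(system, queries, reference_answers, normalize=str.strip):
    """Fraction of queries whose system answer matches the reference answer.

    `system` is any callable mapping a query to an answer string; exact
    match after normalization is an assumed scoring rule.
    """
    if not queries:
        raise ValueError("no queries to score")
    correct = sum(
        normalize(system(q)) == normalize(reference_answers[q]) for q in queries
    )
    return correct / len(queries)
```

The single number it returns illustrates the stated disadvantage: nothing about dialogue length, recovery behavior, or naturalness is captured.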


Evaluation Paradigms: Evaluation Metrics

• Different metrics for evaluating different components of a

dialogue system:

– Speech recognizer: word error rate / word accuracy

– Understanding component: attribute value matrix

– Dialogue manager:

• appropriateness of system responses

• error recovery abilities

– Overall system:

• task success

• average number of turns

• elapsed time

• turn correction ratio
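Of the metrics above, word error rate is the easiest to make concrete. The sketch below uses the standard definition: the word-level edit distance (substitutions, deletions, and insertions) between the recognizer output and a reference transcript, divided by the reference length. Whitespace tokenization is an assumption.

```python
def word_error_rate(reference, hypothesis):
    """WER = (substitutions + deletions + insertions) / reference length."""
    ref, hyp = reference.split(), hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # previous[j] = edit distance between the ref prefix so far and hyp[:j]
    previous = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        current = [i]
        for j, h in enumerate(hyp, start=1):
            current.append(min(
                previous[j] + 1,             # deletion of a reference word
                current[j - 1] + 1,          # insertion of a hypothesis word
                previous[j - 1] + (r != h),  # substitution or match
            ))
        previous = current
    return previous[-1] / len(ref)
```

Word accuracy is then `1 - word_error_rate(...)`. WER can exceed 1.0 when the hypothesis contains many insertions.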


Paradigms: Evaluation Metrics (Cont’d)

• Advantage:

– Takes into account the process toward completing the task

• Limitations:

– Difficult to determine how different metrics compensate for one

another

– Metrics may not be independent of one another

– Does not generalize across domains and tasks


Paradigms: Performance Functions

• PARADISE [Walker et al.]: derives performance functions

using both task-based and dialogue-based metrics

• User satisfaction:

– Maximize task success

– Minimize costs:

• Efficiency measures: e.g., number of utterances, elapsed time

• Qualitative measures: e.g., repair ratio, inappropriate utt. ratio

• Performance function derivation:

– Obtain user satisfaction ratings (questionnaire)

– Obtain values for various metrics (automatic or manual)

– Apply multiple linear regression to derive a function relating user

satisfaction and various cost factors, e.g.,

Perf = .21 * TSR + .47 * MR - .15 * ET
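The regression step can be sketched in plain Python with the normal equations. This is a toy illustration, not the PARADISE implementation. The metric values are made up, and the satisfaction ratings are generated from assumed weights (.21, .47, and -.15, reading elapsed time as a cost) rather than collected from users. No intercept is fitted, which assumes the metrics were normalized beforehand.

```python
def solve(a, b):
    """Solve the square system a x = b by Gauss-Jordan elimination with pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [x - factor * y for x, y in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]


def fit_performance_function(metrics, satisfaction):
    """Least-squares weights w minimizing sum((satisfaction - metrics . w)^2)."""
    k = len(metrics[0])
    xtx = [[sum(row[i] * row[j] for row in metrics) for j in range(k)]
           for i in range(k)]
    xty = [sum(row[i] * y for row, y in zip(metrics, satisfaction))
           for i in range(k)]
    return solve(xtx, xty)


# Made-up per-dialogue metrics: (task success, mean recognition, elapsed time).
toy_metrics = [
    [1, 0.9, 2.0],
    [0, 0.5, 3.0],
    [1, 0.7, 1.0],
    [0, 0.8, 2.5],
    [1, 0.6, 4.0],
]
ASSUMED_WEIGHTS = [0.21, 0.47, -0.15]   # assumption, used only to generate ratings
toy_satisfaction = [sum(w * x for w, x in zip(ASSUMED_WEIGHTS, row))
                    for row in toy_metrics]
weights = fit_performance_function(toy_metrics, toy_satisfaction)
```

With noise-free toy ratings the fit recovers the assumed weights. With real questionnaire data the fitted weights would indicate how much each factor contributes to user satisfaction.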


Paradigms: Performance Functions (Cont’d)

• Advantages:

– Allows for comparison of dialogue systems performing different tasks

– Specifies relative contributions of cost factors to overall performance

– Can be used to make predictions about future versions of the

dialogue system

• Disadvantages:

– Data collection cost for deriving performance function is high

– Cost for deriving performance function for multiple systems to draw

general conclusions is high


Data Collection: Wizard of Oz Paradigm

• Setup for initial data collection:

– User communicates with “system” through telephone (speech) or

keyboard (text)

– “System” is actually a human, typically given instructions on how to

behave like a system

– Users are typically given tasks to perform in the target domain

– The users are the subjects; the “system” can be played by one person

– Dialogues between “system” and user are recorded and transcribed

• Setup for intermediate system evaluation:

– Use actual running system, with wizard supervision

– Wizard can override undesirable system behavior, e.g., correct ASR

errors


Data Collection: Wizard of Oz (Cont’d)

• Features of collected data:

– Typically much less complex than actual human-human dialogues

performing the same tasks

– Captures how humans behave when they talk to computers

– Captures variations among different subjects in both language and

approach when performing the same tasks

• Use of collected data:

– Particularly useful for designing the interpretation component of the

dialogue system

– Useful for training purposes for ASR systems

– May also be helpful for designing the dialogue management and

response generation components


Summary

Signal Processing → Speech Recognition → Semantic Interpretation → Discourse Interpretation → Dialogue Management → Response Generation → Speech Synthesis

Publicly Available Telephone Demos

• Nuance

http://www.nuance.com/demo/index.html

– Banking: 1-650-847-7438

– Travel Planning: 1-650-847-7427

– Stock Quotes: 1-650-847-7423

• SpeechWorks http://www.speechworks.com/demos/demos.htm

– Banking: 1-888-729-3366

– Stock Trading: 1-800-786-2571

• MIT Spoken Language Systems Laboratory

http://www.sls.lcs.mit.edu/sls/whatwedo/applications.html

– Travel Plans (Pegasus): 1-877-648-8255

– Weather (Jupiter): 1-888-573-8255

• IBM http://www.software.ibm.com/speech/overview/business/demo.html

– Mutual Funds, Name Dialing: 1-877-VIA-VOICE
