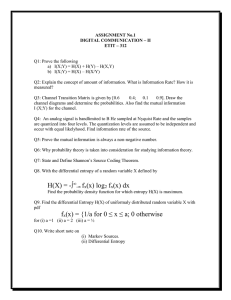

Data Mining – Algorithms: Decision Trees - ID3 Chapter 4, Section 4.3


Common Recursive Decision Tree Induction Pseudo Code
• If all instances have the same classification
  – Stop; create a leaf with that classification
• Else
  – Choose an attribute for the root
  – Make one branch for each possible value (or range, if the attribute is numeric)
  – For each branch
    • Recursively call the same method

Choosing an Attribute
• Key to how this works
• Main difference between many decision tree learning algorithms
• First, let’s look at the intuition
  – We want a small tree
  – Therefore, we prefer attributes that divide instances well
  – A perfect attribute is one for which each value is associated with only one class (e.g., if all rainy days were play=no, and all sunny days and all overcast days were play=yes)
  – We don’t often get a perfect attribute very high in the tree
  – So, we need a way to measure relative purity

Relative Purity – My Weather

| Attribute value | Play = yes | Play = no |
|---|---|---|
| Outlook = sunny | 4 | 1 |
| Outlook = overcast | 2 | 2 |
| Outlook = rainy | 0 | 5 |
| Temperature = hot | 1 | 3 |
| Temperature = mild | 3 | 3 |
| Temperature = cool | 2 | 2 |

• Intuitively, it looks like outlook creates more purity, but computers can’t use intuition
• ID3 (a famous early algorithm) measures purity based on “information theory” (Shannon 1948)

Information Theory
• A piece of information is more valuable if it adds to what is already known
  – If all instances in a group are known to be in the same class, then the information value of being told the class of a particular instance is zero
  – If instances are evenly split between classes, then the information value of being told the class of a particular instance is maximized
  – Hence more purity = less information
  – Information theory measures the value of information using “entropy”, which is measured in “bits”

Entropy
• Derivation of the formula is beyond our scope
• Calculation:

$$\text{entropy} = \frac{-\text{cnt}_1\log_2\text{cnt}_1 - \text{cnt}_2\log_2\text{cnt}_2 - \text{cnt}_3\log_2\text{cnt}_3 - \cdots + \text{totalcnts}\,\log_2\text{totalcnts}}{\text{totalcnts}}$$

  where cnt1, cnt2, … are the numbers of instances in each class and totalcnts is the total of those counts
• This equals $-\sum_i p_i \log_2 p_i$ with $p_i = \text{cnt}_i / \text{totalcnts}$; a class with a count of 0 contributes 0
• See entropy.xls: entropy goes up as purity goes down

Entropy calculated in Excel (log2 of a zero count gives #NUM!, so the sheet’s “careful” column counts those terms as 0)

| | Example 1 | Example 2 |
|---|---|---|
| Class counts (cat1 to cat5) | 1, 7, 0, 0, 0 | 2, 3, 0, 4, 0 |
| Sum of −cnt × log2 cnt | −19.652 | −14.755 |
| totalcnts × log2 totalcnts | 8 × 3 = 24 | 9 × 3.170 = 28.529 |
| Entropy | 4.349 / 8 = 0.544 | 13.774 / 9 = 1.530 |

Information Gain
• The amount entropy is reduced as a result of dividing: the entropy before the split minus the weighted average entropy of the nodes created by the split
• This is the deciding measure for ID3

Example: My Weather (Nominal)

| Outlook | Temp | Humid | Windy | Play? |
|---|---|---|---|---|
| sunny | hot | high | FALSE | no |
| sunny | hot | high | TRUE | yes |
| overcast | hot | high | FALSE | no |
| rainy | mild | high | FALSE | no |
| rainy | cool | normal | FALSE | no |
| rainy | cool | normal | TRUE | no |
| overcast | cool | normal | TRUE | yes |
| sunny | mild | high | FALSE | yes |
| sunny | cool | normal | FALSE | yes |
| rainy | mild | normal | FALSE | no |
| sunny | mild | normal | TRUE | yes |
| overcast | mild | high | TRUE | yes |
| overcast | hot | normal | FALSE | no |
| rainy | mild | high | TRUE | no |

Let’s make this a little more realistic than the book does
• This will be cross-validated
• Normally 10-fold is used, but with 14 instances that is a little awkward
• For each fold, we divide the data into training and test data
• This time through, let’s save the last record as a test

Using Entropy to Divide
• Entropy for all training instances (6 yes, 7 no) = .996
• Entropy for Outlook division = weighted average of nodes created by division (counts are [yes, no]) = 5/13 * .72 (entropy [4,1]) + 4/13 * 1 (entropy [2,2]) + 4/13 * 0 (entropy [0,4]) = .585
• Info Gain = .996 - .585 ≈ .41

Using Entropy to Divide
• Entropy for Temperature division = weighted average of nodes created by division = 4/13 * .81 (entropy [1,3]) + 5/13 * .97 (entropy [3,2]) + 4/13 * 1.0 (entropy [2,2]) = .931
• Info Gain = .996 - .931 = .065

Using Entropy to Divide
• Entropy for Humidity division = weighted average of nodes created by division = 6/13 * 1 (entropy [3,3]) + 7/13 * .985 (entropy [3,4]) = .992
• Info Gain = .996 - .992 = .004

Using Entropy to Divide
• Entropy for Windy division = weighted average of nodes created by division = 8/13 * .81 (entropy [2,6]) + 5/13 * .72 (entropy [4,1]) = .777
• Info Gain = .996 - .777 = .219
• Biggest gain is via Outlook

Recursive Tree Building
• On sunny instances, will consider other attributes

Example: My Weather (Nominal), sunny instances

| Outlook | Temp | Humid | Windy | Play? |
|---|---|---|---|---|
| sunny | hot | high | FALSE | no |
| sunny | hot | high | TRUE | yes |
| sunny | mild | high | FALSE | yes |
| sunny | cool | normal | FALSE | yes |
| sunny | mild | normal | TRUE | yes |

Using Entropy to Divide
• Entropy for all sunny training instances (4 yes, 1 no) = .72
• Outlook does not have to be considered because it has already been used

Using Entropy to Divide
• Entropy for Temperature division = weighted average of nodes created by division = 2/5 * 1.0 (entropy [1,1]) + 2/5 * 0.0 (entropy [2,0]) + 1/5 * 0.0 (entropy [1,0]) = .400
• Info Gain = .72 - .40 = .32

Using Entropy to Divide
• Entropy for Humidity division = weighted average of nodes created by division = 3/5 * .918 (entropy [2,1]) + 2/5 * 0 (entropy [2,0]) = .551
• Info Gain = .72 - .55 = .17

Using Entropy to Divide
• Entropy for Windy division = weighted average of nodes created by division = 3/5 * .918 (entropy [2,1]) + 2/5 * 0 (entropy [2,0]) = .551
• Info Gain = .72 - .55 = .17
• Biggest gain is via Temperature

Tree So Far
outlook = sunny
|  temp = hot: 1 yes, 1 no
|  temp = mild: 2 yes
|  temp = cool: 1 yes
outlook = overcast: 2 yes, 2 no
outlook = rainy: 4 no

Recursive Tree Building
• On sunny, hot instances, will consider other attributes (both have humidity = high, so only windy separates them)

| Outlook | Temp | Humid | Windy | Play? |
|---|---|---|---|---|
| sunny | hot | high | FALSE | no |
| sunny | hot | high | TRUE | yes |

Tree So Far
outlook = sunny
|  temp = hot
|  |  windy = TRUE: 1 yes
|  |  windy = FALSE: 1 no
|  temp = mild: 2 yes
|  temp = cool: 1 yes
outlook = overcast: 2 yes, 2 no
outlook = rainy: 4 no

Recursive Tree Building
• On overcast instances, will consider other attributes

Example: My Weather (Nominal), overcast instances

| Outlook | Temp | Humid | Windy | Play? |
|---|---|---|---|---|
| overcast | hot | high | FALSE | no |
| overcast | cool | normal | TRUE | yes |
| overcast | mild | high | TRUE | yes |
| overcast | hot | normal | FALSE | no |

Using Entropy to Divide
• Entropy for all overcast training instances (2 yes, 2 no) = 1.0
• Outlook does not have to be considered because it has already been used

Using Entropy to Divide
• Entropy for Temperature division = weighted average of nodes created by division = 2/4 * 0.0 (entropy [0,2]) + 1/4 * 0.0 (entropy [1,0]) + 1/4 * 0.0 (entropy [1,0]) = .000
• Info Gain = 1.0 - 0.0 = 1.0

Using Entropy to Divide
• Entropy for Humidity division = weighted average of nodes created by division = 2/4 * 1.0 (entropy [1,1]) + 2/4 * 1.0 (entropy [1,1]) = 1.0
• Info Gain = 1.0 - 1.0 = 0.0

Using Entropy to Divide
• Entropy for Windy division = weighted average of nodes created by division = 2/4 * 0.0 (entropy [0,2]) + 2/4 * 0.0 (entropy [2,0]) = 0.0
• Info Gain = 1.0 - 0.0 = 1.0
• Biggest gain is a tie between Temperature and Windy (we choose Windy)

Tree So Far
outlook = sunny
|  temp = hot
|  |  windy = TRUE: 1 yes
|  |  windy = FALSE: 1 no
|  temp = mild: 2 yes
|  temp = cool: 1 yes
outlook = overcast
|  windy = TRUE: 2 yes
|  windy = FALSE: 2 no
outlook = rainy: 4 no

Test Instance
• Top of the tree checks outlook
• Test instance (rainy, mild, high, TRUE) has outlook = rainy
• Take the rainy branch
• Reach a leaf
• Predict “No” (which is correct)
• In a 14-fold cross-validation, this would continue 13 more times
• Let’s run WEKA on this …

WEKA results – first look near the bottom
=== Stratified cross-validation ===
=== Summary ===
Correctly Classified Instances          9    64.2857 %
Incorrectly Classified Instances        2    14.2857 %
Unclassified Instances                  3
• On the cross-validation, it got 9 out of 14 tests correct (the unclassified instances are tough to understand without seeing all of the trees that were built; they surprise me.)
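The entropy and information-gain arithmetic in the walkthrough above can be checked with a short script. This is a minimal sketch, not WEKA’s or the book’s code: the rows are typed in from the My Weather table, `entropy` uses the count form from the Entropy slide, and the helper names (`class_counts`, `split_entropy`, `info_gain`) are illustrative. Note that the 13 training rows (last record held out) contain 6 yes and 7 no, so the starting entropy comes out at .996.

```python
from collections import Counter
from math import log2

# "My Weather" table from the slides: (outlook, temp, humid, windy, play)
DATA = [
    ("sunny", "hot", "high", "FALSE", "no"),
    ("sunny", "hot", "high", "TRUE", "yes"),
    ("overcast", "hot", "high", "FALSE", "no"),
    ("rainy", "mild", "high", "FALSE", "no"),
    ("rainy", "cool", "normal", "FALSE", "no"),
    ("rainy", "cool", "normal", "TRUE", "no"),
    ("overcast", "cool", "normal", "TRUE", "yes"),
    ("sunny", "mild", "high", "FALSE", "yes"),
    ("sunny", "cool", "normal", "FALSE", "yes"),
    ("rainy", "mild", "normal", "FALSE", "no"),
    ("sunny", "mild", "normal", "TRUE", "yes"),
    ("overcast", "mild", "high", "TRUE", "yes"),
    ("overcast", "hot", "normal", "FALSE", "no"),
    ("rainy", "mild", "high", "TRUE", "no"),
]
ATTRS = ["outlook", "temp", "humid", "windy"]


def entropy(counts):
    """Count form from the Entropy slide:
    (-sum(cnt * log2 cnt) + total * log2 total) / total.
    Zero counts are skipped, like the spreadsheet's "careful" column."""
    counts = [c for c in counts if c > 0]
    total = sum(counts)
    return (-sum(c * log2(c) for c in counts) + total * log2(total)) / total


def class_counts(rows):
    """Number of rows in each class (the last column is play)."""
    return list(Counter(row[-1] for row in rows).values())


def split_entropy(rows, i):
    """Weighted average entropy of the nodes created by splitting on column i."""
    groups = {}
    for row in rows:
        groups.setdefault(row[i], []).append(row)
    return sum(len(g) / len(rows) * entropy(class_counts(g)) for g in groups.values())


def info_gain(rows, i):
    return entropy(class_counts(rows)) - split_entropy(rows, i)


train = DATA[:-1]  # hold out the last record as the test instance
print(f"all training instances: {entropy(class_counts(train)):.3f}")
for i, name in enumerate(ATTRS):
    print(f"{name:8s} split {split_entropy(train, i):.3f}  gain {info_gain(train, i):.3f}")

sunny = [row for row in train if row[0] == "sunny"]
for i, name in enumerate(ATTRS[1:], start=1):
    print(f"sunny/{name:6s} gain {info_gain(sunny, i):.3f}")
```

Run as-is, it should report gains of about .410 (outlook), .065 (temp), .004 (humid) and .219 (windy) at the root, and .322, .171 and .171 within the sunny branch. The same `entropy` function gives .544 for counts [1, 7] and 1.530 for [2, 3, 4], matching the spreadsheet examples.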
• WEKA may do some work to avoid overfitting

More Detailed Results
=== Confusion Matrix ===
 a b   <-- classified as
 2 1 | a = yes
 1 7 | b = no
• Here we see
  – The program predicted play=yes 3 times; 2 of those were correct
  – The program predicted play=no 8 times; 7 of those were correct
  – Of the classified instances, 3 were actually play=yes; the program correctly predicted 2 of them
  – Of the classified instances, 8 were actually play=no; the program correctly predicted 7 of them
  – All of the unclassified instances were actually play=yes (the data has 6 play=yes instances, but only 3 appear in the matrix)

Part of our purpose is to have a take-home message for humans
• Not 14 take-home messages!
• So instead of reporting each of the things learned on each of the 14 training sets …
• … the program runs again on all of the data and builds a pattern for that: a take-home message

WEKA - Take-Home
outlook = sunny
|  temperature = hot
|  |  windy = TRUE: yes
|  |  windy = FALSE: no
|  temperature = mild: yes
|  temperature = cool: yes
outlook = overcast
|  temperature = hot: no
|  temperature = mild: yes
|  temperature = cool: yes
outlook = rainy: no
• This is a decision tree! Can you see it?
• This is almost the same as the tree we generated; we broke the tie at overcast differently (we chose windy, WEKA chose temperature)
• This is a fairly simple classifier (not as simple as OneR’s), but it could be the take-home message from running this algorithm on this data, if you are satisfied with the results!
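The recursive pseudo code from the start of this section can be sketched as a small ID3 in Python. This is an illustrative sketch, not WEKA’s implementation, and it rests on a few assumptions: the value order in `DOMAINS` is copied from the WEKA printout, ties go to the attribute listed first (Python’s `max` keeps the first maximum, which happens to match WEKA’s choice of temperature at overcast here), and a branch that no training instance reaches becomes `None`, printed as `null`. A test instance that lands on such a leaf gets no prediction, which is one plausible source of unclassified instances.

```python
from collections import Counter
from math import log2

ATTRS = ["outlook", "temperature", "humidity", "windy"]
# Value order follows the WEKA printout so the output lines up with it.
DOMAINS = {
    "outlook": ["sunny", "overcast", "rainy"],
    "temperature": ["hot", "mild", "cool"],
    "humidity": ["high", "normal"],
    "windy": ["TRUE", "FALSE"],
}
ROWS = """\
sunny hot high FALSE no
sunny hot high TRUE yes
overcast hot high FALSE no
rainy mild high FALSE no
rainy cool normal FALSE no
rainy cool normal TRUE no
overcast cool normal TRUE yes
sunny mild high FALSE yes
sunny cool normal FALSE yes
rainy mild normal FALSE no
sunny mild normal TRUE yes
overcast mild high TRUE yes
overcast hot normal FALSE no
rainy mild high TRUE no"""
DATA = [dict(zip(ATTRS + ["play"], line.split())) for line in ROWS.splitlines()]


def entropy(rows):
    n = len(rows)
    return -sum(c / n * log2(c / n) for c in Counter(r["play"] for r in rows).values())


def info_gain(rows, attr):
    remainder = 0.0
    for value in DOMAINS[attr]:
        subset = [r for r in rows if r[attr] == value]
        if subset:
            remainder += len(subset) / len(rows) * entropy(subset)
    return entropy(rows) - remainder


def id3(rows, attrs):
    """Leaf = class label (None for an empty branch); node = (attr, {value: subtree})."""
    if not rows:
        return None  # no training instance reached this branch
    classes = Counter(r["play"] for r in rows)
    if len(classes) == 1 or not attrs:
        return classes.most_common(1)[0][0]  # pure node, or nothing left to split on
    best = max(attrs, key=lambda a: info_gain(rows, a))  # first attribute wins ties
    rest = [a for a in attrs if a != best]
    return best, {v: id3([r for r in rows if r[best] == v], rest) for v in DOMAINS[best]}


def show(tree, depth=0):
    """Formats a tree in the indented style of the WEKA output."""
    if not isinstance(tree, tuple):
        return ["null" if tree is None else tree]
    attr, branches = tree
    lines = []
    for value, sub in branches.items():
        head = "|  " * depth + f"{attr} = {value}"
        if isinstance(sub, tuple):
            lines.append(head)
            lines.extend(show(sub, depth + 1))
        else:
            lines.append(f"{head}: {'null' if sub is None else sub}")
    return lines


def predict(tree, instance):
    """Follows branches to a leaf; None means the instance is unclassified."""
    while isinstance(tree, tuple):
        attr, branches = tree
        tree = branches[instance[attr]]
    return tree


print("\n".join(show(id3(DATA, ATTRS))))
# Train on the first 13 rows and test the held-out last row, as on the slides.
print(predict(id3(DATA[:-1], ATTRS), DATA[-1]))
```

Trained on all 14 instances, this should print the same 11-line tree as the WEKA take-home output, and the held-out rainy instance should come back as `no`.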
Let’s Try WEKA ID3 on njcrimenominal
• Try 10-fold cross-validation
unemploy = hi: bad
unemploy = med
|  education = hi: ok
|  education = med
|  |  twoparent = hi: null
|  |  twoparent = med: bad
|  |  twoparent = low: ok
|  education = low
|  |  pop = hi: null
|  |  pop = med: ok
|  |  pop = low: bad
unemploy = low: ok
=== Confusion Matrix ===
 a  b   <-- classified as
 5  2 | a = bad
 3 22 | b = ok
• This seems to improve noticeably on our very simple methods (27 of the 32 classified instances correct) on this slightly more interesting dataset

Another Thing or Two
• Using this method, if an attribute is essentially a primary key (identifying the instances), dividing on it gives the maximum information gain, because no further information is needed to determine the class (the entropy of every branch will be 0)
• However, branching on a key is neither interesting nor useful for predicting future instances that were not in the training data
• The more general idea is that the entropy measure favors splitting on attributes with more possible values
• A common adjustment is to use a “gain ratio” …

Gain Ratio
• Take the info gain and divide it by the “intrinsic info” (split info) of the split: the entropy of the branch sizes, ignoring the classes
• E.g., the top split above (13 training instances: 6 yes, 7 no; entropy .996):

| Attribute | Wt. avg. entropy | Info gain | Split info | Gain ratio |
|---|---|---|---|---|
| Outlook | .585 | .41 | Info([5,4,4]) = 1.577 | .26 |
| Temperature | .931 | .065 | Info([4,5,4]) = 1.577 | .041 |
| Humidity | .992 | .004 | Info([6,7]) = .996 | .004 |
| Windy | .777 | .219 | Info([8,5]) = .961 | .228 |

Still not a slam dunk
• Even this gets adjusted in some approaches to avoid yet other problems (see p. 97)

ID3 in context
• Quinlan (1986) published ID3, the first major successful decision tree learner in Machine Learning
• He continued to improve the algorithm
  – His C4.5 was published in a book, and is available as J48 in WEKA
    • Improvements included dealing with numeric attributes, missing values, and noisy data, and generating rules from trees (see Section 6.1)
  – His further efforts were commercial and proprietary instead of being published in the research literature
• Probably almost every graduate student in Machine Learning starts out by writing a version of ID3 so that they FULLY understand it

Class Exercise
• ID3 cannot run on the japanbank data since it includes some numeric attributes
• Let’s run WEKA J48 on japanbank

End Section 4.3
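The gain-ratio arithmetic for the top split can be sketched directly from the [yes, no] branch counts on the “Using Entropy to Divide” slides (13 training instances, 6 yes and 7 no). This is a minimal sketch under that assumption; `id_code` is a hypothetical attribute with a different value for every instance, added only to illustrate the primary-key problem, and is not part of the data. On these counts it still edges out outlook under plain gain ratio (about .269 vs .260), which fits the “still not a slam dunk” point.

```python
from math import log2


def entropy(counts):
    """Entropy in bits of a list of counts; zero counts contribute nothing."""
    n = sum(counts)
    return -sum(c / n * log2(c / n) for c in counts if c > 0)


# [yes, no] counts per branch for the 13 training instances (from the slides).
SPLITS = {
    "outlook": [[4, 1], [2, 2], [0, 4]],
    "temperature": [[1, 3], [3, 2], [2, 2]],
    "humidity": [[3, 3], [3, 4]],
    "windy": [[2, 6], [4, 1]],
    # Hypothetical ID-code attribute: one branch per instance, each one pure.
    "id_code": [[1, 0]] * 6 + [[0, 1]] * 7,
}


def gain_ratio(branches):
    """Returns (info gain, split info, gain ratio) for one candidate split."""
    sizes = [sum(b) for b in branches]
    n = sum(sizes)
    parent = [sum(col) for col in zip(*branches)]  # class counts before the split
    weighted = sum(s / n * entropy(b) for s, b in zip(sizes, branches))
    gain = entropy(parent) - weighted
    split_info = entropy(sizes)  # "intrinsic info": entropy of the branch sizes alone
    return gain, split_info, gain / split_info


for name, branches in SPLITS.items():
    gain, split_info, ratio = gain_ratio(branches)
    print(f"{name:12s} gain {gain:.3f}  split info {split_info:.3f}  ratio {ratio:.3f}")
```

Dividing by the split info shrinks the ID code’s perfect gain of .996 by log2(13) ≈ 3.700, yet not quite enough to drop it below outlook here.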