Supercomputing on Windows Clusters: Experience and Future Directions

Andrew A. Chien

CTO, Entropia, Inc.

SAIC Chair Professor

Computer Science and Engineering, UCSD

National Computational Science Alliance

Invited Talk, USENIX Windows, August 4, 2000

Overview

Critical Enabling Technologies

The Alliance’s Windows Supercluster

– Design and Performance

Other Windows Cluster Efforts

Future

– Terascale Clusters

– Entropia

Entropia, Inc -- University of California, San Diego (UCSD/CSE) -- NCSA

External Technology Factors

Microprocessor Performance

[Figure: performance of vector supercomputers (Cray 1S, Cray X-MP, Cray Y-MP) vs. microprocessors (MIPS R2000, MIPS R3000, HP 7000, R4000, R4400, x86/Alpha) by year introduced, 1975 to 1995]

Micros: 10MF -> 100MF -> 1GF -> 3GF -> 6GF (2001?)

=> Memory system performance catching up (2.6 GB/s 21264 memory BW)

Killer Networks

LAN: 10Mb/s -> 100Mb/s -> ?

SAN: 12MB/s -> 110MB/s (Gbps) -> 1100MB/s -> ?

– Myricom, Compaq, Giganet, Intel,...

Network bandwidths limited by system internal memory bandwidths

Cheap and very fast communication hardware

[Figure: link bandwidths: Ethernet 1 MB/s, FastE 12 MB/s, UW SCSI 40 MB/s, GigSAN/GigE 110 MB/s]


Rich Desktop Operating Systems Environments

[Figure: desktop OS functionality accumulated from 1981 to 1999: basic device access, HD storage, networks, graphical interfaces, audio/graphics, multiprocess protection, SMP support, clustering, performance, mass store, HP networking, management, availability, etc.]

Desktop (PC) operating systems now provide

– richest OS functionality

– best program development tools

– broadest peripheral/driver support

– broadest application software/ISV support

Entropia, Inc -- University of California, San Diego (UCSD/CSE) -- NCSA

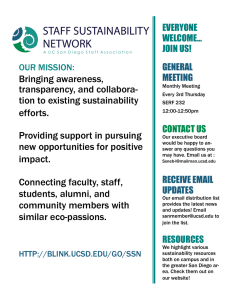

Critical Enabling Technologies

Critical Enabling Technologies

Cluster management and resource integration (“use like” one system)

Delivered communication performance

– IP protocols inappropriate

Balanced systems

– Memory bandwidth

– I/O capability


The HPVM System

Goals

– Enable tightly coupled and distributed clusters with high efficiency and low effort (integrated solution)

– Provide usable access through convenient standard parallel interfaces

– Deliver highest possible performance and simple programming model


Delivered Communication Performance

Early 1990s, Gigabit testbeds

– 500Mbits (~60MB/s) @ 1 MegaByte packets

– IP protocols not suited to Gigabit SANs

Cluster Objective: High performance communication for small and large messages

Performance Balance Shift: Networks faster than I/O, memory, processor


Fast Messages Design Elements

User-level network access

Lightweight protocols

– flow control, reliable delivery

– tightly-coupled link, buffer, and I/O bus management

Poll-based notification

Streaming API for efficient composition

Many generations 1994-1999

– [IEEE Concurrency, 6/97]

– [Supercomputing ’95, 12/95]

Related efforts: UCB AM, Cornell U-Net, RWCP PM, Princeton VMMC/Shrimp, Lyon BIP => VIA standard
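Of the design elements above, lightweight flow control and poll-based notification are the easiest to see in miniature. The Python sketch below uses credit-based flow control, one common approach for user-level messaging. It is not necessarily the scheme Fast Messages uses, and the class and method names are hypothetical. The sender holds one credit per receiver buffer and stops when credits run out. The receiver polls instead of taking an interrupt, and each message it extracts returns a credit.

```python
from collections import deque
from typing import Any, Optional


class CreditChannel:
    """Hypothetical credit-based flow control between one sender and one receiver."""

    def __init__(self, receiver_buffers: int) -> None:
        self.credits = receiver_buffers  # messages the sender may still have outstanding
        self.wire: deque = deque()       # messages in flight or queued at the receiver
        self.returned = 0                # credits freed by the receiver, not yet seen by the sender

    def send(self, msg: Any) -> bool:
        # Fold returned credits back in before deciding whether we may send.
        self.credits += self.returned
        self.returned = 0
        if self.credits == 0:
            return False                 # would overrun receiver buffers; caller retries later
        self.credits -= 1
        self.wire.append(msg)
        return True

    def poll(self) -> Optional[Any]:
        """Receiver checks for a message (no interrupt) and frees its buffer."""
        if not self.wire:
            return None
        msg = self.wire.popleft()
        self.returned += 1               # a real system would piggyback this on reverse traffic
        return msg
```

Because the sender can never have more messages outstanding than the receiver has buffers, no message is dropped for lack of space. That is one way to keep reliable delivery cheap on a link that does not itself lose data.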


Improved Bandwidth

[Figure: delivered bandwidth (MB/s), 1995 to 1999]

20MB/s -> 200+ MB/s (10x)

– Much of the advance is software structure: APIs and implementation

– Deliver *all* of the underlying hardware performance


Improved Latency

[Figure: latency (microseconds), 1995 to 1999]

100 µs to 2 µs overhead (50x)

– Careful design to minimize overhead while maintaining throughput

– Efficient event handling, fine-grained resource management and interlayer coordination

– Deliver *all* of the underlying hardware performance


HPVM = Cluster Supercomputers

[Figure: HPVM components: MPI, Put/Get, Global Arrays, and BSP interfaces over Fast Messages, running on Myrinet, ServerNet, Giganet VIA, SMP, and WAN; plus Scheduling & Mgmt (LSF) and Performance Tools]

HPVM 1.0 (8/1997)

HPVM 1.2 (2/1999): multi, dynamic, install

HPVM 1.9 (8/1999): Giganet, SMP

Turnkey Cluster Computing; Standard APIs

Network hardware and APIs increase leverage for users and achieve critical mass for the system

Each involved new research challenges and provided deeper insights into the research issues

– Drove continually better solutions (e.g. multi-transport integration, robust flow control and queue management)


HPVM Communication Performance

[Figure: bandwidth (MB/s) vs. message size (bytes) for FM on Myrinet and MPI on FM-Myrinet]

• N1/2 (message size at which half of peak bandwidth is reached) ~ 400 bytes

Delivers underlying performance for small messages; endpoints are the limits

100MB/s at 1K messages vs. 60MB/s at 1000K (early 1990s testbeds)

– >1500x improvement
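The N1/2 figure can be read through a simple linear cost model, transfer time = t0 + n / r_inf, under which N1/2 = t0 x r_inf. This is a standard textbook model, not necessarily how the slide's numbers were derived. The 4 µs overhead below is back-computed from the slide's ~100 MB/s and ~400-byte N1/2; it is an assumption, not a measured value. The last line shows one reading of the >1500x claim: comparable bandwidth (100 MB/s vs. 60 MB/s) with messages 1000x smaller (1 KB vs. 1000 KB).

```python
def transfer_time(n_bytes: float, t0_s: float, r_inf: float) -> float:
    """Linear model: fixed per-message overhead plus size over peak bandwidth (bytes/s)."""
    return t0_s + n_bytes / r_inf


def delivered_bandwidth(n_bytes: float, t0_s: float, r_inf: float) -> float:
    """Bandwidth actually seen by a message of n_bytes under the linear model."""
    return n_bytes / transfer_time(n_bytes, t0_s, r_inf)


def n_half(t0_s: float, r_inf: float) -> float:
    """Message size at which delivered bandwidth is half of r_inf."""
    return t0_s * r_inf


R_INF = 100e6  # ~100 MB/s peak, from the slide
T0 = 4e-6      # assumed: back-computed so that n_half comes out near 400 bytes

half = n_half(T0, R_INF)                           # about 400 bytes
bw_at_half = delivered_bandwidth(half, T0, R_INF)  # about 50 MB/s

# One reading of ">1500x": MB/s per KB of message size, new (100 at 1 KB) vs. old (60 at 1000 KB).
improvement = (100 / 1) / (60 / 1000)              # about 1667
```

Under this model, halving t0 halves N1/2 at the same peak bandwidth, which is why the latency and overhead work shown earlier matters as much as raw link speed for small messages.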


HPVM/FM on VIA

[Figure: bandwidth (MB/s) vs. message size (bytes) for FM on Giganet VIA and MPI-FM on Giganet VIA]

• N1/2 ~ 400 bytes

FM protocol/techniques portable to Giganet VIA

Slightly lower performance, comparable N1/2

Commercial version: WSDI (stay tuned)


Unified Transfer and Notification (all transports)

[Figure: procs and networks deposit messages into a single queue of fixed-size frames; each message is variable-size data followed by a fixed-size trailer carrying length/flag, laid out at increasing addresses]

Solution: Uniform notify and poll (single queue representation)

Scalability: n into k (hash); arbitrary SMP size or number of NIC cards

Key: integrate variable-sized messages; achieve single DMA transfer

– no pointer-based memory management, no special synchronization primitives, no complex computation

Memory format provides atomic notification in single contiguous memory transfer (bcopy or DMA)
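The memory format above can be illustrated with a small Python sketch. The frame size, the trailer layout (a 32-bit length and a 32-bit flag), and right-aligning the data so the trailer lands at a fixed offset are all assumptions made for illustration; the slide does not specify them. The sketch shows that a sender can write data and trailer in one contiguous copy with the flag at the highest address. A receiver that polls one fixed location per frame then sees a complete message once the flag is set. On real hardware this relies on the bcopy or DMA completing in increasing address order.

```python
import struct
from typing import Optional

FRAME_SIZE = 64                 # hypothetical fixed frame size in bytes
TRAILER = struct.Struct("<II")  # hypothetical trailer: (length, flag), 8 bytes
PAYLOAD_MAX = FRAME_SIZE - TRAILER.size
VALID = 1


class FrameQueue:
    """One receive queue shared by every transport (illustrative sketch, not HPVM code)."""

    def __init__(self, nframes: int) -> None:
        self.buf = bytearray(FRAME_SIZE * nframes)
        self.nframes = nframes
        self.head = 0  # next frame the receiver polls
        self.tail = 0  # next frame a sender fills

    def _trailer_offset(self, index: int) -> int:
        # The trailer always occupies the last TRAILER.size bytes of its frame.
        return (index % self.nframes + 1) * FRAME_SIZE - TRAILER.size

    def deposit(self, data: bytes) -> bool:
        """Sender: one contiguous copy of data + trailer, trailer at the highest address."""
        if len(data) > PAYLOAD_MAX:
            raise ValueError("message does not fit in one frame")
        if self.tail - self.head >= self.nframes:
            return False  # queue full; sender must back off
        t = self._trailer_offset(self.tail)
        # Right-align the data so the receiver never has to search for the trailer.
        self.buf[t - len(data):t + TRAILER.size] = data + TRAILER.pack(len(data), VALID)
        self.tail += 1
        return True

    def poll(self) -> Optional[bytes]:
        """Receiver: check one fixed location per frame; no pointers to follow."""
        t = self._trailer_offset(self.head)
        length, flag = TRAILER.unpack_from(self.buf, t)
        if flag != VALID:
            return None
        data = bytes(self.buf[t - length:t])
        TRAILER.pack_into(self.buf, t, 0, 0)  # clear the flag so the frame can be reused
        self.head += 1
        return data
```

Because every transport deposits in the same format, the receiver's poll loop does not change when a transport is added, which matches the "single Q representation" idea; a sketch this simple leaves out multi-frame messages and the n-into-k hashing.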


Integrated Notification Results

Single transport vs. integrated:

– Myrinet (latency): 8.3 µs vs. 8.4 µs

– Myrinet (BW): 101 MB/s vs. 101 MB/s

– Shared Memory (latency): 3.4 µs vs. 3.5 µs

– Shared Memory (BW): 200+ MB/s vs. 200+ MB/s

No polling or discontiguous access performance penalties

Uniform high performance which is stable over changes of configuration or the addition of new transports

– no custom tuning for configuration required

Framework is scalable to large numbers of SMP processors and network interfaces


Supercomputer Performance Characteristics (11/99)

Columns: MF/Proc (MFLOPS per processor), Flops/Byte (flops per byte of network bandwidth), Flops/NetworkRT (flops per network round trip)

– Cray T3E: 1200, ~2, ~2,500

– SGI Origin2000: 500, ~0.5, ~1,000

– HPVM NT Supercluster: 600, ~8, ~12,000

– IBM SP2 (4 or 8-way): 2.6-5.2GF, ~12-25, ~150-300K

– Beowulf (100Mbit): 600, ~50, ~200,000
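The ratios in this table come from simple arithmetic. The sketch uses the Beowulf row as a worked example: 600 MF/proc over a Fast Ethernet link of about 12 MB/s (the FastE figure given earlier) gives ~50 flops per byte. Converting ~200,000 flops per round trip back into time gives an implied round-trip latency. That latency is derived here and is not stated in the source. The function names are hypothetical.

```python
def flops_per_byte(mflops_per_proc: float, net_mbytes_per_s: float) -> float:
    """Flops one processor executes in the time one byte crosses the network."""
    return mflops_per_proc / net_mbytes_per_s


def flops_per_round_trip(mflops_per_proc: float, round_trip_us: float) -> float:
    """Flops one processor executes during one network round trip (MFLOP/s x us = flops)."""
    return mflops_per_proc * round_trip_us


def implied_round_trip_us(flops_per_rt: float, mflops_per_proc: float) -> float:
    """Invert the table's Flops/NetworkRT column back into a round-trip time."""
    return flops_per_rt / mflops_per_proc


beowulf_fpb = flops_per_byte(600, 12)               # ~50, matching the table
beowulf_rtt = implied_round_trip_us(200_000, 600)   # ~333 us implied
hpvm_rtt = implied_round_trip_us(12_000, 600)       # ~20 us implied
```

The higher these ratios, the more computation an application must do per byte or per message to keep the processors busy. This is why the HPVM cluster (~8, ~12,000) sits much closer to the MPPs than the 100Mbit Beowulf (~50, ~200,000).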


Windows

The NT Supercluster

Windows Clusters

Early prototypes in CSAG

– 1/1997, 30P, 6GF

– 12/1997, 64P, 20GF

Alliance’s Supercluster

– 4/1998, 256P, 77GF

– 6/1999, 256P*, 109GF


NCSA’s Windows Supercluster

128 HP Kayak XU

Dual PIII 550 MHz/1GB RAM

Using NT, Myrinet Interconnect, and HPVM

#207 in Top 500 Supercomputing Sites

[Figure: AS-PCG MPI performance, 2D Navier-Stokes kernel (engineering fluid flow problem), vs. processors (0 to 256): SGI O2000, 250 MHz R10000; NT cluster, Intel 550 MHz PIII Xeon HP Kayak; NT cluster, Intel 300 MHz PII HP Kayak; cluster of 128 550 MHz + 128 300 MHz processors. Source: D. Tafti, NCSA]

Rob Pennington (NCSA), Andrew Chien (UCSD)

Windows Cluster System

– Front-End Systems: apps development, job submission

– Fast Ethernet, Internet

– File servers: 128 GB home, 200 GB scratch; daily backups; FTP to mass storage

– LSF master: LSF batch job scheduler

– 128 compute nodes, 256 CPUs: 128 dual 550 MHz systems; Windows NT, Myrinet and HPVM

– Infrastructure and development testbeds (Windows 2K and NT): 8 4p 550 + 32 2p 300 + 8 2p 333

(courtesy Rob Pennington, NCSA)

Example Application Results

MILC – QCD

Navier-Stokes Kernel

Zeus-MP – Astrophysics CFD

Large-scale Science and Engineering codes

Comparisons to SGI O2K and Linux clusters


MILC Performance

[Figure: MILC performance vs. processors (0 to 100): IA-32/Win NT 300 MHz PII; 250 MHz SGI O2K; T3E 900; IA-32/Win NT 550 MHz Xeon]

Src: D. Toussaint and K. Orginos, Arizona

Zeus-MP (Astrophysics CFD)

[Figure: Zeus-MP performance vs. number of processors (1 to 256): SGI O2K; Janus (ASCI Red); NT Supercluster, 550 MHz]


2D Navier Stokes Kernel

[Figure: AS-PCG MPI performance, 2D Navier-Stokes kernel, vs. processors (0 to 256): SGI O2000, 250 MHz R10000; NT cluster, Intel 550 MHz PIII Xeon HP Kayak; NT cluster, Intel 300 MHz PII HP Kayak; cluster of 128 550 MHz + 128 300 MHz processors]

Source: Danesh Tafti, NCSA


Applications with High Performance on Windows Supercluster

Zeus-MP (256P, Mike Norman)

ISIS++ (192P, Robert Clay)

ASPCG (256P, Danesh Tafti)

Cactus (256P, Paul Walker/John Shalf/Ed Seidel)

MILC QCD (256P, Lubos Mitas)

QMC Nanomaterials (128P, Lubos Mitas)

Boeing CFD Test Codes, CFD Overflow (128P, David Levine)

freeHEP (256P, Doug Toussaint)

ARPI3D (256P, weather code, Dan Weber)

GMIN (L. Munro in K. Jordan)

DSMC-MEMS (Ravaioli)

FUN3D with PETSc (Kaushik)

SPRNG (Srinivasan)

MOPAC (McKelvey)

Astrophysical N body codes (Bode)

=> Little code retuning and quickly running ...

Parallel Sorting (Rivera, CSAG): 18.3 GB MinuteSort world record


MinuteSort

Sort as much data as possible, disk to disk, in 1 minute

“Indy sort”

– fixed size keys, special sorter, and file format

HPVM/Windows Cluster winner for 1999 (10.3GB) and 2000 (18.3GB)

– Adaptation of Berkeley NOWSort code (Arpaci and Dusseau)

Commodity configuration ($$ not a metric)

– PCs, IDE disks, Windows

– HPVM and 1Gbps Myrinet


MinuteSort Architecture

– 32 HP Kayaks: 3Ware controllers, 4 x 20GB IDE disks

– 32 HP Netservers: 2 x 16GB SCSI disks

– Interconnect: HPVM & 1Gbps Myrinet

Sort Scaling

Concurrent read/bucket-sort/communicate is the bottleneck; faster I/O infrastructure is required (buses and memory, not disks)
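The read/bucket-sort/communicate phase can be sketched as a key-range partition followed by a local sort. The Python below assumes the Sort Benchmark record format (100-byte records with 10-byte keys) and uniformly distributed keys. It routes each record by the top 16 bits of its key. It illustrates the general NOWSort-style approach and is not the actual HPVM/Windows MinuteSort code; the function names are hypothetical.

```python
import os

RECORD_SIZE = 100  # assumed Sort Benchmark record size in bytes
KEY_SIZE = 10      # assumed key length at the front of each record


def destination(record: bytes, nodes: int) -> int:
    """Map a record to a node by its leading key bits (assumes uniform keys)."""
    prefix = int.from_bytes(record[:2], "big")  # top 16 bits of the key
    return prefix * nodes >> 16                 # nondecreasing in the key, in [0, nodes)


def partition(records, nodes: int):
    """Bucket-sort step: each node would send bucket i to node i over the network."""
    buckets = [[] for _ in range(nodes)]
    for rec in records:
        buckets[destination(rec, nodes)].append(rec)
    return buckets


def local_sort(bucket):
    """Each node sorts only the records it received, by key."""
    return sorted(bucket, key=lambda r: r[:KEY_SIZE])


records = [os.urandom(RECORD_SIZE) for _ in range(1000)]
buckets = partition(records, 4)
output = [r for b in buckets for r in local_sort(b)]  # node 0's output, then node 1's, ...
```

Because the destination is a nondecreasing function of the key, concatenating the locally sorted buckets in node order already gives a globally sorted output, so no cross-node merge is needed. That is also why the read, bucket, and communicate steps run concurrently and contend for buses and memory.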


MinuteSort Execution Time


Reliability

Gossip: “Windows platforms are not reliable”

– Larger systems => intolerably low MTBF

Our Experience: “Nodes don’t crash”

– Application runs of 1000s of hours

– A node failure means an application failure, but in practice this has not been a problem

Hardware

– Short term: Infant mortality (1 month burn-in)

– Long term

• ~1 hardware problem/100 machines/month

• Disks, network interfaces, memory

• No processor or motherboard problems.
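The long-term rate of ~1 hardware problem per 100 machines per month can be turned into rough cluster-level numbers. The sketch assumes independent failures at a constant rate (an exponential model), a 30-day month, and that every hardware problem ends a running job. None of these are claims from the source, and the last is pessimistic given the "nodes don't crash" experience. The example job is 32 dual-processor nodes (64 processors) for 9 days, matching the largest runs reported for the cluster. The function names are hypothetical.

```python
import math

HOURS_PER_MONTH = 30 * 24  # assumed 30-day month


def failures_per_month(machines: int, per_100_per_month: float = 1.0) -> float:
    """Expected hardware problems per month across the whole cluster."""
    return machines * per_100_per_month / 100.0


def job_survival_probability(machines: int, hours: float,
                             per_100_per_month: float = 1.0) -> float:
    """P(no hardware problem on any of the job's machines), exponential model."""
    rate_per_machine_hour = per_100_per_month / 100.0 / HOURS_PER_MONTH
    return math.exp(-rate_per_machine_hour * machines * hours)


cluster_rate = failures_per_month(128)          # 128-node supercluster
p_big_job = job_survival_probability(32, 9 * 24)  # 64 processors for 9 days
```

Under these assumptions the 128-node cluster sees about 1.3 hardware problems a month, and the 9-day, 64-processor job finishes without a hardware event roughly 91% of the time.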


Windows Cluster Usage

[Figure: NT cluster usage by number of processors, May 1999 to Jul 2000; jobs binned as 1-31, 32-63, and 64-256 processors]

Lots of large jobs

Runs up to ~14,000 processor-hours (64 processors x 9 days)


Other Large Windows Clusters

Sandia’s Kudzu Cluster (144 procs, 550 disks, 10/98)

Cornell’s AC3 Velocity Cluster (256 procs, 8/99)

Others (sampled from vendors)

– GE Research Labs (16, Scientific)

– Boeing (32, Scientific)

– PNNL (96, Scientific)

– Sandia (32, Scientific)

– NCSA (32, Scientific)

– Rice University (16, Scientific)

– U. of Houston (16, Scientific)

– U. of Minnesota (16, Scientific)

– Oil & Gas (8, Scientific)

– Merrill Lynch (16, Ecommerce)

– UIT (16, ASP/Ecommerce)


The AC3 Velocity

64 Dell PowerEdge 6350 Servers

• Quad Pentium III 500 MHz/2 MB Cache Processors (SMP)

• 4 GB RAM/Node

• 50 GB Disk (RAID 0)/Node

GigaNet Full Interconnect

• 100 MB/Sec Bandwidth between any 2 Nodes

• Very Low Latency

2 Terabytes Dell PowerVault 200S Storage

• 2 Dell PowerEdge 6350 Dual Processor File Servers

• 4 PowerVault 200S Units/File Server

• 8 x 36 GB Disk Drives/PowerVault 200S

• Quad Channel SCSI RAID Adapter

• 180 MB/sec Sustained Throughput/Server

2 Terabyte PowerVault 130T Tape Library

• 4 DLT 7000 Tape Drives

• 28 Tape Capacity

#381 in Top 500 Supercomputing Sites

(courtesy David A. Lifka, Cornell TC)

Recent AC3 Additions

8 Dell PowerEdge 2450 Servers (Serial Nodes)

• Pentium III 600 MHz/512 KB Cache

• 1 GB RAM/Node

• 50 GB Disk (RAID 0)/Node

7 Dell PowerEdge 2450 Servers (First All NT Based AFS Cell)

• Dual Processor Pentium III 600 MHz/512 KB Cache

• 1 GB RAM/Node Fileservers, 512 MB RAM/Node Database servers

• 1 TB SCSI based RAID 5 Storage

• Cross platform filesystem support

64 Dell PowerEdge 2450 Servers (Protein Folding, Fracture Analysis)

• Dual Processor Pentium III 733 MHz/256 KB Cache

• 2 GB RAM/Node

• 27 GB Disk (RAID 0)/Node

• Full Giganet Interconnect

3 Intel ES6000 & 1 ES1000 Gigabit switches

• Upgrading our Server Backbone network to Gigabit Ethernet

(courtesy David A. Lifka, Cornell TC)


AC3 Goals

Only commercially supported technology

– Rapid spinup and spinout

– Package technologies for vendors to sell as integrated systems

=> All commercial packages were moved from the SP2 to Windows; all users are back, and more!

Users: “I don’t do windows” =>

“I’m agnostic about operating systems, and just focus on getting my work done.”


Protein Folding

The cooperative motion of ion and water through the gramicidin ion channel. The effective quasi-particle that permeates through the channel includes eight water molecules and the ion. Work of Ron Elber with Bob Eisenberg, Danuta Rojewska and Duan Pin.

Reaction path study of ligand diffusion in leghemoglobin. The ligand is CO (white) and it is moving from the binding site, the heme pocket, to the protein exterior. A study by Weislaw Nowak and Ron Elber.

http://www.tc.cornell.edu/reports/NIH/resource/CompBiologyTools/

(courtesy David A. Lifka, Cornell TC)

Protein Folding Per-Processor Performance

Results on different computers for α protein structures (machine: system, CPU, CPU speed, compiler; energy evaluations per second):

– Blue Horizon (SP, San Diego): AIX 4, Power3, 222 MHz, xlf; 44.3

– Linux cluster: Linux 2.2, PentiumIII, 650 MHz, PGF 3.1; 59.1

– Velocity (CTC): Win 2000, PentiumIII Xeon, 500 MHz, df v6.1; 46.0

– Velocity+ (CTC): Win 2000, PentiumIII, 733 MHz, df v6.1; 59.2

Results on different computers for α/β or β proteins (same machines; energy evaluations per second):

– Blue Horizon (SP, San Diego): 15.0

– Linux cluster: 21.0

– Velocity (CTC): 16.9

– Velocity+ (CTC): 22.4

(courtesy David A. Lifka, Cornell TC)

AC3 Corporate Members

-Air Products and Chemicals

-Candle Corporation

-Compaq Computer Corporation

-Conceptual Reality Presentations

-Dell Computer Corporation

-Etnus, Inc.

-Fluent, Inc.

-Giganet, Inc.

-IBM Corporation

-ILOG, Inc.

-Intel Corporation

-KLA-Tencor Corporation

-Kuck & Associates, Inc.

-Lexis-Nexis

-MathWorks, Inc.

-Microsoft Corporation

-MPI Software Technologies, Inc.

-Numerical Algorithms Group

-Portland Group, Inc.

-Reed Elsevier, Inc.

-Reliable Network Solutions, Inc.

-SAS Institute, Inc.

-Seattle Lab, Inc.

-Visual Numerics, Inc.

-Wolfram Research, Inc.

(courtesy David A. Lifka, Cornell TC)


Windows Cluster Summary

Good performance

Lots of Applications

Good reliability

Reasonable Management complexity (TCO)

Future is bright; uses are proliferating!


Windows Cluster Resources

NT Supercluster, NCSA

– http://www.ncsa.uiuc.edu/General/CC/ntcluster/

– http://www-csag.ucsd.edu/projects/hpvm.html

AC3 Cluster, TC

– http://www.tc.cornell.edu/UserDoc/Cluster/

University of Southampton

– http://www.windowsclusters.org/

=> application and hardware/software evaluation

=> many of these folks will work with you on deployment

Tools and Technologies for Building Windows Clusters

Communication Hardware

– Myrinet, http://www.myri.com/

– Giganet, http://www.giganet.com/

– Servernet II, http://www.compaq.com/

Cluster Management and Communication Software

– LSF, http://www.platform.com/

– Codeine, http://www.gridware.net/

– Cluster CoNTroller, MPI, http://www.mpi-softtech.com/

– Maui Scheduler, http://www.cs.byu.edu/

– MPICH, http://www-unix.mcs.anl.gov/mpi/mpich/

– PVM, http://www.epm.ornl.gov/pvm/

Microsoft Cluster Info

– Win2000, http://www.microsoft.com/windows2000/

– MSCS, http://www.microsoft.com/ntserver/ntserverenterprise/exec/overview/clustering.asp


Future Directions

Terascale Clusters

Entropia

A Terascale Cluster

10+ Teraflops in 2000?

NSF currently running a $36M Terascale competition

Budget could buy

– an Itanium cluster (3000+ processors)

– ~3TB of main memory

– >1.5Gbps high speed network interconnect

? #1 in Top 500 Supercomputing Sites ?
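The terascale targets imply per-processor requirements that are easy to check. This sketch divides the slide's totals (10+ TFLOPS, 3000+ processors, ~3 TB, $36M) by the processor count. Treating 3000 as exact and spreading the whole budget over processors are simplifying assumptions.

```python
def per_processor(total: float, processors: int) -> float:
    """Divide a system-wide total evenly across processors."""
    return total / processors


PROCESSORS = 3000  # slide says 3000+; treated as exact here

gflops_each = per_processor(10_000.0, PROCESSORS)       # 10 TFLOPS expressed in GFLOPS
memory_gb_each = per_processor(3_000.0, PROCESSORS)     # ~3 TB expressed in GB
dollars_each = per_processor(36_000_000.0, PROCESSORS)  # entire $36M budget

print(f"{gflops_each:.2f} GFLOPS, {memory_gb_each:.1f} GB, ${dollars_each:,.0f} per processor")
# prints: 3.33 GFLOPS, 1.0 GB, $12,000 per processor
```

So each processor would need a peak of roughly 3.3 GFLOPS and about 1 GB of memory, for about $12,000 of the total budget per processor, which must also cover the interconnect and everything else.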


Entropia: Beyond Clusters

• COTS, SHV enable larger, cheaper, faster systems

• Supercomputers (MPPs) to…

• Commodity Clusters (NT Supercluster) to…

• Entropia

Internet Computing

Idea: Assemble large numbers of idle PCs in people’s homes and offices into a massive computational resource

– Enabled by broadband connections, fast microprocessors, huge PC volumes


Unprecedented Power

Entropia network: ~30,000 machines (and growing fast!)

– 100,000 machines at 1 GHz => 100 TeraOp system

– 1,000,000 machines at 1 GHz => 1,000 TeraOp system (1 PetaOp)

IBM ASCI White (12 TeraOp, 8K processors, $110 Million system)
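The TeraOp figures above come from multiplying machine count by clock rate. The sketch assumes one operation per cycle per machine (the slide does not state this) and assumes every machine is available at once. The helper names are hypothetical.

```python
import math


def aggregate_teraops(machines: int, clock_ghz: float, ops_per_cycle: float = 1.0) -> float:
    """Peak aggregate rate: machines x clock x ops/cycle (1 op/cycle is an assumption)."""
    gigaops = machines * clock_ghz * ops_per_cycle
    return gigaops / 1000.0


def machines_to_match(teraops: float, clock_ghz: float, ops_per_cycle: float = 1.0) -> int:
    """How many such machines reach a given peak rate."""
    return math.ceil(teraops * 1000.0 / (clock_ghz * ops_per_cycle))


hundred_k = aggregate_teraops(100_000, 1.0)   # 100 TeraOps
one_million = aggregate_teraops(1_000_000, 1.0)  # 1,000 TeraOps = 1 PetaOp
asci_white_equiv = machines_to_match(12.0, 1.0)  # machines needed for ASCI White's 12 TeraOps
```

At one op per cycle, matching ASCI White's 12 TeraOps would take about 12,000 such machines, fewer than the current ~30,000. This compares peak rates only and ignores availability and communication costs.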


Why Participate: Cause Computing!

People Will Contribute

Millions have demonstrated willingness to donate their idle cycles

“Great Cause” Computing

– Current: Find ET, Large Primes, Crack DES…

– Next: find cures for cancer, muscular dystrophy, air and water pollution, …

• understand human genome, ecology, fundamental properties of matter, economy

Participate in science, medical research, promoting causes that you care about!


Technical Challenges

Heterogeneity (machine, configuration, network)

Scalability (thousands to millions)

Reliability (turn off, disconnect, fail)

Security (integrity, confidentiality)

Performance

Programming

. . .

Entropia: harnessing the computational power of the Internet
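A common way to handle the reliability challenge (machines that turn off, disconnect, or fail) is to lease work units and reissue any unit whose lease expires. The Python sketch below illustrates that general technique. It is not Entropia's design, and the class, fields, and timeout are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class LeaseScheduler:
    """Hands out work units; reissues any unit whose lease expires (hypothetical sketch)."""
    lease_seconds: float
    pending: list = field(default_factory=list)  # units waiting to be handed out
    leased: dict = field(default_factory=dict)   # unit -> lease deadline
    done: set = field(default_factory=set)

    def next_unit(self, now: float) -> Optional[str]:
        # Reclaim units held by machines that went silent (off, disconnected, failed).
        for unit, deadline in list(self.leased.items()):
            if deadline <= now:
                del self.leased[unit]
                self.pending.append(unit)
        if not self.pending:
            return None
        unit = self.pending.pop(0)
        self.leased[unit] = now + self.lease_seconds
        return unit

    def complete(self, unit: str) -> None:
        # Accept late results too, so work from a reconnecting machine is not wasted.
        self.leased.pop(unit, None)
        if unit in self.pending:
            self.pending.remove(unit)
        self.done.add(unit)
```

The lease length trades wasted duplicate work (short leases) against slow recovery from vanished machines (long leases). Because late results are accepted, some units may be computed twice but none are lost.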


Entropia is . . .

Power : a network with unprecedented power and scale

Empower : ordinary people to participate in solving the great social challenges and mysteries of our time

Solve : team solving fascinating technical problems


Summary

Windows clusters are powerful, successful high performance platforms

– Cost effective and excellent performance

– Poised for rapid proliferation

Beyond clusters are Internet computing systems

– Radical technical challenges, vast and profound opportunities

For more information see

– HPVM http://www-csag.ucsd.edu/

– Entropia http://www.entropia.com/


Credits

NT Cluster Team Members

– CSAG (UIUC and UCSD Computer Science) – my research group

– NCSA Leading Edge Site -- Robert Pennington’s team

Talk materials

• NCSA (Rob Pennington, numerous application groups)

• Cornell TC (David Lifka)

• Boeing (David Levine)

• MPISoft (Tony Skjellum)

• Giganet (David Wells)

• Microsoft (Jim Gray)
