Slides for PhD Thesis Seminar

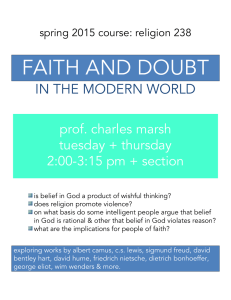

Belief Augmented Frames

14 June 2004

Colin Tan

ctank@comp.nus.edu.sg

http://www.comp.nus.edu.sg/~ctank

Motivation

• Primary Objective:

– To study how uncertain and defeasible knowledge may be integrated into a knowledge base.

• Main Deliverable:

– A system of theories and techniques that allow us to integrate new knowledge we have gained, and to use this knowledge to make better inferences.

Proposed Solution

• A frame-based reasoning system augmented with belief measures.

– Frame-based system to structure knowledge and relations between entities.

– Belief measures support reasoning under uncertainty about the existence of entities and the relationships between them.

Why Belief Measures?

• Statistical Measures

– Standard tool for modeling uncertainty.

– Essentially, if the probability that a proposition E is true is p, then the probability that E is false is 1-p.

• P(E) = p

• P(not E) = 1-p

Why Belief Measures?

• This relationship between P(E) and P(not E) introduces a problem:

– This relationship essentially leaves no room for ignorance. Either the proposition is true with a probability of p, or it is false with a probability of 1-p.

– This can be counter-intuitive at times.

Why Belief Measures?

• [Shortliffe75] cites a study in which,

given a set of symptoms, doctors were

willing to declare with certainty x that a

patient was suffering from a disease D,

yet were unwilling to declare with

certainty 1-x that the patient was not

suffering from D.

Why Belief Measures?

• To allow for ignorance our research

focuses on belief measures.

• The ability to model ignorance is

inherent in belief systems.

– E.g. in Dempster-Shafer Theory [Dempster67], if our beliefs in E1 and E2 are 0.1 and 0.3 respectively, then the ignorance is (1 - (0.1 + 0.3)) = 0.6.

Why Frames?

• Frames are a powerful form of representation.

– Intuitively represent relationships between objects using slot-filler pairs.

• Simple to perform reasoning based on relationships.

– Hierarchical

• Can perform generalizations to create general models derived from a set of frames.

Why Frames?

• Frames are a powerful form of representation:

– Daemons

• Small programs that are invoked when a frame is instantiated or when a slot is filled.

Combining Frames with

Uncertainty Measures

• Augmenting slot-value pairs with uncertainty values.

– Enhance expressiveness of relationships.

– Can now do reasoning using the uncertainty values.

• A Belief Augmented Frame (BAF) is a frame structure augmented with belief measures.

Example BAF

[Figure: example BAF. Frames Donkey, Dog, Alice, Grey, Blue and Bay are linked by the slots color, owns, walks and location. Every frame and slot carries a pair of belief masses, such as 0.6, 0.3 or 0.7, 0.2.]

Belief Representation in

Belief Augmented Frames

• Beliefs are represented by two masses:

– φT: Belief mass supporting a proposition.

– φF: Belief mass refuting a proposition.

– In general φT + φF ≠ 1

• Room to model ignorance of the facts.

• Separate belief masses allow us to:

– Draw φT and φF from different sources.

– Have different chains of reasoning for φT and φF.

Belief Representation in

Belief Augmented Frames

• This ability to derive the refuting masses from different sources and chains of reasoning is unique to BAF.

– In Probabilistic Argumentation Systems (the closest competitor to BAF) for example, p(not E) = 1 - p(E).

– It is possible, though, to achieve this in Dempster-Shafer Theory through the underlying mechanisms generating m(E) and m(not E).

Belief Representation in

Belief Augmented Frames

• BAFs however give a formal framework for deriving φT and φF

– BAF-Logic, a complete reasoning system for BAFs.

• BAFs provide a formal framework for Frame operations.

– E.g. how to generalize from a given set of frames.

• BAF and DST can in fact be complementary:

– BAF as a basis of generating masses in DST

Degree of Inclination

• The Degree of Inclination is defined as:

– DI = T - F

• DI is in the range of [-1, 1].

• One possible interpretation of DI:

| DI | Interpretation |
|---|---|
| -1 | False |
| -0.75 | Most Probably False |
| -0.5 | Probably False |
| -0.25 | Likely False |
| 0 | Ignorant |
| 0.25 | Likely True |
| 0.5 | Probably True |
| 0.75 | Most Probably True |
| 1 | True |

Utility Value

• The Degree of Inclination DI can be remapped to the range [0, 1] through the Utility function:

– U = (DI + 1) / 2

– By normalizing U across all relevant propositions it becomes possible to use U as a statistical measure.
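As a minimal sketch, the DI and U definitions can be written directly in Python. The function names are illustrative, and the sum-to-one normalization is an assumption: the slides do not say how U is normalized.

```python
def degree_of_inclination(t, f):
    """DI = T - F; lies in [-1, 1] when T and F are in [0, 1]."""
    return t - f


def utility(di):
    """U = (DI + 1) / 2; remaps DI from [-1, 1] to [0, 1]."""
    return (di + 1.0) / 2.0


def normalize(utilities):
    """Assumed normalization: divide each U by the sum over all propositions."""
    total = sum(utilities)
    if total == 0:
        raise ValueError("all utilities are zero; nothing to normalize")
    return [u / total for u in utilities]


# Hypothetical (T, F) masses for three competing propositions.
masses = [(0.7, 0.2), (0.2, 0.7), (0.0, 0.0)]
us = [utility(degree_of_inclination(t, f)) for t, f in masses]
print(normalize(us))
```

After normalization the values sum to 1, which is what lets them be compared like probabilities across the competing propositions.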

Plausibility, Ignorance,

Evidential Interval

• Plausibility pl is defined as:

pl = 1 - F

• Ignorance ig is defined as:

ig = pl – T

= 1 – (T + F)

• The Evidential Interval EI is defined to

be the range

EI =[T, pl]

Interpreting the Evidential

Interval

| Evidential Interval | Interpretation |
|---|---|
| [0, 1] | Complete ignorance. |
| [0, 0] | The evidence provided completely refutes the fact. |
| [1, 1] | The evidence provided completely supports the fact. |
| [T, Pl], 0 < T, Pl < 1, Pl ≥ T | The evidence both supports and refutes the fact. |
| [T, Pl], 0 < T, Pl < 1, Pl < T | The evidence supporting the fact exceeds the plausibility of the fact. I.e. the evidence is contradictory. |
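The interpretation of the evidential interval can be sketched as a small lookup. The function names and the returned labels are illustrative. The non-contradictory row is read here as Pl ≥ T, and intervals that match no row are reported as such rather than guessed.

```python
def evidential_interval(t, f):
    """Return (T, pl) with pl = 1 - F."""
    return (t, 1.0 - f)


def ignorance(t, f):
    """ig = pl - T = 1 - (T + F)."""
    return 1.0 - (t + f)


def interpret(t, f):
    """Map a pair of masses to the interpretation of its evidential interval."""
    if t == 0.0 and f == 0.0:
        return "complete ignorance"        # EI = [0, 1]
    if t == 0.0 and f == 1.0:
        return "completely refuted"        # EI = [0, 0]
    if t == 1.0 and f == 0.0:
        return "completely supported"      # EI = [1, 1]
    low, high = evidential_interval(t, f)
    if 0.0 < low < 1.0 and 0.0 < high < 1.0:
        if high < low:
            return "contradictory"         # support exceeds plausibility
        return "supported and refuted"
    return "not covered by the table"


print(interpret(0.42, 0.12))
print(interpret(0.8, 0.5))
```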

Reasoning with BAFs

• Belief Augmented Frame Logic, or

BAF-Logic, is used for reasoning with

BAFs.

• Throughout the remainder of this

presentation, we will consider two

propositions A and B, with supporting

and refuting masses TA, FA, TB, and

FB.

Reasoning with BAFs

AND, OR, NOT

• A ∧ B:

– T_{A∧B} = min(TA, TB)

– F_{A∧B} = max(FA, FB)

• A ∨ B:

– T_{A∨B} = max(TA, TB)

– F_{A∨B} = min(FA, FB)

• ¬A:

– T_{¬A} = FA

– F_{¬A} = TA
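A minimal sketch of the three BAF-Logic connectives, using min and max exactly as on the slide. The `Belief` type and the function names are assumptions made for illustration.

```python
from typing import NamedTuple


class Belief(NamedTuple):
    t: float  # supporting mass
    f: float  # refuting mass


def baf_and(a, b):
    """Conjunction: T is the smaller support, F the larger refutation."""
    return Belief(min(a.t, b.t), max(a.f, b.f))


def baf_or(a, b):
    """Disjunction: T is the larger support, F the smaller refutation."""
    return Belief(max(a.t, b.t), min(a.f, b.f))


def baf_not(a):
    """Negation swaps the supporting and refuting masses."""
    return Belief(a.f, a.t)


A = Belief(0.7, 0.2)
B = Belief(0.9, 0.1)
print(baf_and(A, B), baf_or(A, B), baf_not(A))
```

Because negation only swaps the two masses, De Morgan's Theorem holds exactly: `baf_not(baf_and(A, B))` equals `baf_or(baf_not(A), baf_not(B))`.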

Default Reasoning in BAF

• When the truth of a proposition is unknown, then we set the supporting and refuting masses to TDEF and FDEF respectively.

– Conventionally, TDEF = FDEF = 0

• Two special default values:

– TONE = 1, FONE = 0

– TZERO = 0, FZERO = 1

• Used for defining contradiction and tautology.

Default Reasoning in BAF

• Other default reasoning models are

possible too.

– E.g. categorical defaults:

• : (A, TA , FA) (B, TB , FB) / (B, TB , FB)

• Semantics:

– Given a knowledge base KB.

– If KB :- A and KB :-/- B, infer B with supporting

and refuting masses TB and FB

– Detailed study of this topic still to be made.

BAF and Propositional Logic

• BAF-Logic properties that are identical

to Propositional Logic:

–

Associativity, Commutativity, Distributivity,

Idempotency, Absorption, De-Morgan’s

Theorem, - elimination.

BAF and Propositional Logic

• Other properties of Propositional Logic

work slightly differently in BAF-Logic.

– In particular, some of the properties hold true only if the constituent propositions are at least “probably true” or “probably false”

• I.e. |DIP| ≥ 0.5

BAF and Propositional Logic

• For example, P and P → Q must both be at least probably true for Q to not be false.

– If DIP and DIP→Q are less than 0.5, DIQ might end up < 0.

• For ∨-elimination, P ∨ Q must be probably true, and P must be probably false, before we can infer that Q is not false.

BAF and Propositional Logic

• This can lead to unexpected reasoning results.

– E.g. P, P → Q are not false, yet DIQ < 0.

• A possible solution is to set {TQ = TDEF, FQ = FDEF} when DIP and DIP→Q are less than 0.5

• In actual fact, the magnitudes of DIP and DIP→Q don’t both have to be at least 0.5. Only their average magnitude must be at least 0.5.

Belief Revision

• Beliefs are not static. We need a mechanism

to update beliefs [Pollock00].

• To track the revision of belief masses, we add a subscript t to time-stamp the masses.

– E.g. TP,0 is the value of TP at time 0, TP,1 at time 1 etc.

• At time t, given a proposition P with masses TP,t and FP,t, suppose we derive masses TP,* and FP,*, then the new belief masses at time t+1 are:

– TP,t+1 = α TP,t + (1 - α) TP,*

– FP,t+1 = α FP,t + (1 - α) FP,*

Belief Revision

• Intuitively, this means that we give a credibility factor α to the existing masses, and (1 - α) to the derived masses.

• α therefore controls the rate at which beliefs are revised, given new evidence.
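The revision rule is a weighted average of the existing and the derived mass, which can be sketched as below. The name `alpha` for the credibility factor and the restriction to [0, 1] are assumptions; the slides give only the weights.

```python
def revise(current, derived, alpha):
    """Blend an existing mass with a newly derived one.

    alpha weights the existing mass, (1 - alpha) the derived mass.
    Restricting alpha to [0, 1] is an assumption of this sketch.
    """
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha is assumed to lie in [0, 1]")
    return alpha * current + (1.0 - alpha) * derived


# alpha = 1 ignores new evidence; alpha = 0 discards the old belief.
print(revise(0.3, 0.9, 1.0))
print(revise(0.3, 0.9, 0.0))
print(revise(0.3, 0.9, 0.5))
```

The same function is applied separately to the supporting and the refuting mass, so the two can move at the same rate while still coming from different chains of reasoning.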

An Example

• Given the following propositions in your knowledge base:

– KB = {(A, 0.7, 0.2), (B, 0.9, 0.1), (C, 0.2, 0.7), (A ∧ B → R, TONE, FONE), ((A → B) → R, TONE, FONE)}

– We want to derive TR,1, FR,1.

An Example

• Combining our clauses regarding R, we obtain:

– R = (A ∧ B) ∧ (A → B)

• = A ∧ B ∧ (¬A ∨ B)

• With De Morgan’s Theorem we can derive ¬R:

– ¬R = ¬A ∨ ¬B ∨ (A ∧ ¬B)

An Example

• TR,* = min(TA , TB , max(FA , TB ))

= min(0.7, 0.9, max(0.2, 0.9))

= min(0.7, 0.9, 0.9)

= 0.7

• FR,* = max(FA , FB , min(TA , FB ))

= max(0.2, 0.1, min(0.7, 0.1))

= max(0.2, 0.1, 0.1)

= 0.2

An Example

• We begin with default values for R:

– TR,0 = TDEF = 0.0

– FR,0 = FDEF = 0.0

• This gives us the following attributes:

An Example

| Measure | Value |
|---|---|
| DIR,0 | 0.0 |
| PlR,0 | 1.0 |
| IgR,0 | 1.0 |
| EIR,0 | [0.0, 1.0] |

An Example

• Deriving the new belief values with α = 0.4

– TR,1 = 0.4 * 0.0 + (1.0 - 0.4) * 0.7 = 0.42

– FR,1 = 0.4 * 0.0 + (1.0 - 0.4) * 0.2 = 0.12

• This gives us:

An Example

| Measure | Value |
|---|---|
| DIR,1 | 0.42 – 0.12 = 0.30 |
| PlR,1 | 1.0 – 0.12 = 0.88 |
| IgR,1 | 0.88 – 0.42 = 0.46 |
| EIR,1 | [0.42, 0.88] |

An Example

• We see that with our new information

about R, our ignorance falls from 1.0

(total ignorance) to 0.46. With more

knowledge available about whether R

is true, we also see the plausibility

falling from 1.0 to 0.88.

• Further, suppose it is now known that:

– B ∧ C → R

An Example

• Combining our clauses regarding R, we obtain:

– R = (A ∧ B) ∧ (B ∧ C) ∧ (A → B)

• = A ∧ B ∧ C ∧ (¬A ∨ B)

• With De Morgan’s Theorem we can derive ¬R:

– ¬R = ¬A ∨ ¬B ∨ ¬C ∨ (A ∧ ¬B)

An Example

• TR,* = min(TA , TB , TC , max(FA , TB ))

= min(0.7, 0.9, 0.2, max(0.2, 0.9))

= min(0.7, 0.9, 0.2, 0.9)

= 0.2

• FR,* = max(FA , FB , FC , min(TA , FB ))

= max(0.2, 0.1, 0.7, min(0.7, 0.1))

= max(0.2, 0.1, 0.7, 0.1)

= 0.7

An Example

• Updating the beliefs:

– TR,2 = 0.4 * 0.42 + (1.0 - 0.4) * 0.2 = 0.288

– FR,2 = 0.4 * 0.12 + (1.0 - 0.4) * 0.7 = 0.468

• This gives us:

An Example

| Measure | Value |
|---|---|
| DIR,2 | 0.288 – 0.468 = -0.18 |
| PlR,2 | 1.0 – 0.468 = 0.532 |
| IgR,2 | 0.532 – 0.288 = 0.244 |
| EIR,2 | [0.288, 0.532] |

An Example

• Here the new evidence that B ∧ C → R fails to support R, because C is not true (DIC = -0.5)

• Hence the plausibility of R falls from

0.88 to 0.532, while the truth value

DIR,2 enters into the negative range.
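The whole worked example can be replayed in a few lines. This is a sketch, not the author's reasoning engine: the helper names are illustrative, and the formula for R is read off the min/max expressions on the slides, i.e. min(TA, TB, max(FA, TB)) is treated as A AND B AND (NOT A OR B).

```python
A, B, C = (0.7, 0.2), (0.9, 0.1), (0.2, 0.7)   # (T, F) pairs from the KB
ALPHA = 0.4                                     # credibility factor


def NOT(p):
    return (p[1], p[0])


def AND(*ps):
    return (min(p[0] for p in ps), max(p[1] for p in ps))


def OR(*ps):
    return (max(p[0] for p in ps), min(p[1] for p in ps))


def revise(old, new, alpha):
    """Weighted average applied to both masses."""
    return tuple(alpha * o + (1.0 - alpha) * n for o, n in zip(old, new))


def measures(p):
    t, f = p
    pl = 1.0 - f
    return {"DI": t - f, "Pl": pl, "Ig": pl - t, "EI": (t, pl)}


r0 = (0.0, 0.0)                                   # default masses
r1 = revise(r0, AND(A, B, OR(NOT(A), B)), ALPHA)  # first set of clauses
r2 = revise(r1, AND(A, B, C, OR(NOT(A), B)), ALPHA)  # after adding B, C => R

for step, r in enumerate((r0, r1, r2)):
    print(step, r, measures(r))
```

Up to floating-point rounding, the printed masses match the slide values (0.42, 0.12) and (0.288, 0.468), and DI changes sign at step 2.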

Integrating Belief Measures

with Frames

• Belief measures to quantify:

– The existence of the object/concept represented by the frame.

– The existence of relations between frames

Frames with Belief Measures

[Figure: a network of frames (Camp, Baby, Ike, Kyle, Kenny, Stan, South Park) linked by the relations IsA, At, Brother, Friend, Likes, Kicked, Helped, Insults and StudiesAt. Each relation is annotated with a (supporting, refuting) pair of belief masses, such as (1.0, 0.0), (0.9, 0.1), (0.8, 0.2) or (0.75, 0.25).]

Integrating Belief Measures

with Frames

• Deriving Belief Values

– BAF-Logic statements can be used to derive belief measures.

• For example, suppose we propose that:

– Sam is Bob’s son if Sam is male and Bob has a child.

– Within our knowledge base, we have {(Sam is male, 0.6, 0.2), (Bob has child, 0.8, 0.1), (Sam is male ∧ Bob has child → Sam is Bob’s Son, 0.7, 0.1)}

Integrating Belief Measures

with Frames

• Assuming that α = 0, we can derive:

– Tsam,son,bob = min(0.6, 0.8, 0.7) = 0.6

– Fsam,son,bob = max(0.2, 0.1, 0.1) = 0.2

– DIsam,son,bob = 0.4

– Plsam,son,bob = 0.8

– Igsam,son,bob = 0.2

Integrating Belief Measures

with Frames

• Daemons

– Can be activated based on belief masses, DI, EI, Ig and Pl values.

– Can act on DI, EI, Ig, Pl values for further processing.

• E.g. if it is likely that Sam is Bob’s son, and if the ignorance is less than 0.2, create a new frame School, and set the (Sam, Student, School) relationship.
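A daemon of this kind might be sketched as a callback that runs whenever a relation is set. Everything here is hypothetical: the `KnowledgeBase` class, its method names, the reading of "likely" as DI ≥ 0.25 (taken from the DI scale), and the choice to give the new relation the default masses.

```python
T_DEF, F_DEF = 0.0, 0.0   # conventional default masses
LIKELY = 0.25             # "likely true" on the DI scale


class KnowledgeBase:
    """Toy frame store; class and method names are hypothetical."""

    def __init__(self):
        self.frames = set()
        self.relations = {}   # (source, relation, target) -> (T, F)
        self.daemons = []     # callables run whenever a relation is set

    def add_frame(self, name):
        self.frames.add(name)

    def add_rel(self, source, relation, target, t=T_DEF, f=F_DEF):
        self.relations[(source, relation, target)] = (t, f)
        for daemon in self.daemons:
            daemon(self, source, relation, target, t, f)


def enrol_son(kb, source, relation, target, t, f):
    """Daemon: if a 'son' relation is likely and ignorance is low, add School."""
    if relation != "son":
        return
    di = t - f
    ig = 1.0 - (t + f)
    if di >= LIKELY and ig < 0.2:
        kb.add_frame("School")
        kb.add_rel(source, "student", "School")   # default masses


kb = KnowledgeBase()
kb.daemons.append(enrol_son)
kb.add_frame("Sam")
kb.add_frame("Bob")
kb.add_rel("Sam", "son", "Bob", 0.75, 0.15)   # hypothetical masses
print(sorted(kb.frames))
```

The masses 0.75 and 0.15 are chosen so that both conditions clearly hold; values that sit exactly on a threshold are sensitive to floating-point rounding.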

Frame Operations

• add_frame, del_frame, add_rel, etc.

• More interesting operations include abstract:

– Given a set of frames

– Create a super-frame that is the parent of the set of frames.

– Copy relations that occur in at least a given percentage of the set of frames to the super-frame.

– Set the belief masses to be a composition of all the belief masses in the set for that relation.
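The abstract operation could be sketched as follows. The data layout and the function name are hypothetical, and the composition rule (minimum of the supporting masses, maximum of the refuting masses) is an assumption: the slide only says the masses are composed.

```python
def abstract(frames, threshold):
    """Build the slots of a super-frame from a set of frames.

    frames:    {frame_name: {(relation, target): (T, F)}}
    threshold: fraction in [0, 1]; a relation is copied if it occurs in
               at least this fraction of the frames.
    Composition rule (assumed): T = min of the Ts, F = max of the Fs.
    """
    seen = {}
    for slots in frames.values():
        for key, masses in slots.items():
            seen.setdefault(key, []).append(masses)
    needed = threshold * len(frames)
    return {
        key: (min(t for t, _ in ms), max(f for _, f in ms))
        for key, ms in seen.items()
        if len(ms) >= needed
    }


# Hypothetical frames with two relations.
frames = {
    "doc1": {("term", "cat"): (0.9, 0.1), ("term", "dog"): (0.5, 0.2)},
    "doc2": {("term", "cat"): (0.7, 0.2)},
}
print(abstract(frames, 1.0))   # only relations present in every frame
print(abstract(frames, 0.5))   # relations present in at least half
```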

Application Examples

Discourse Understanding

• Discourse can be translated to a

machine understandable form before

being cast as BAFs.

• Discourse Representation Structures (DRS) are particularly useful.

– Algorithm to convert from DRS to BAF is trivial [Tan03].

Application Examples

Discourse Understanding

• Setting Belief Masses

– Initial belief masses may be set using fuzzy-sets.

• E.g. to model a person being helpful

– Shelpful = {1.0/"invaluable", 0.75/"very helpful", 0.5/"helpful", 0.25/"unhelpful", 0.0/"uncooperative"}

• If we say that Kenny is very helpful, we can set:

– Tkenny_helpful = 0.75

– Fkenny_helpful = 1.0 - 0.75 = 0.25
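A minimal sketch of setting initial masses from a fuzzy set, using the membership grades of Shelpful. The dictionary layout and the function name are illustrative.

```python
# Membership grades taken from the Shelpful fuzzy set.
S_HELPFUL = {
    "invaluable": 1.0,
    "very helpful": 0.75,
    "helpful": 0.5,
    "unhelpful": 0.25,
    "uncooperative": 0.0,
}


def masses_from_fuzzy(label, fuzzy_set):
    """T is the membership grade of the label, F is its complement."""
    t = fuzzy_set[label]
    return (t, 1.0 - t)


print(masses_from_fuzzy("very helpful", S_HELPFUL))
```

Masses set this way always sum to 1, so they start with no ignorance; later rules and revisions can move the two masses independently.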

Application Examples

Discourse Understanding

• Further propositions and rules may be

inserted into the knowledge base to

perform reasoning on the initial belief

masses.

• Propositions and rules are modeled as Prolog clauses.

Application Examples

Text Classification

• Can model text classification as a BAF problem:

– In BAF-Logic the jth document Dij in the document class ci is taken to be a conjunction of terms tk:

• Dij = tij0 ∧ tij1 ∧ … ∧ tij(n-1)

– Each term and document is related by a set of relations:

• Rijk = {(Dij, term, tk, Tijk, Fijk) | tk is a term in Dij}

Application Examples

Text Classification

• Given a set of documents D in class ci, we apply the abstract operator to produce the set of relations characterizing ci.

– v = (Si0, Si1, Si2, … Si(m-1))

• Each Sik is the relation:

– Sik = {(ci, term, tk, Tik, Fik) | tk occurs in at least a given percentage of documents Dj in class ci}

– Tik = min_j Tijk

– Fik = max_l max_j Tljk, l ≠ i

Application Examples

Text Classification

– Tik is our belief that the term tk implies that the document belongs to class ci.

– Fik is our belief that the term tk implies that the document belongs to some other class cl.

• Given an unseen document Du, we derive the keyword terms tunk,k. We can derive the following masses that support and refute the proposition that Du belongs to class ci.

Application Examples

Text Classification

– Ti,unk = min(Ti0, Ti1, … max(Fi0, Fi1, …))

– Fi,unk = max(Fi0, Fi1, … min(Ti0, Ti1, …))

• From this we derive the degree of inclination using the standard definition:

– DIi,unk = Ti,unk - Fi,unk

• We choose the class with the largest DI as the winner.

– win = argmax DIi,unk
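The final selection step can be sketched as an argmax over the per-class degree of inclination. The per-class masses are taken as given here, since the sketch does not try to reproduce how Ti,unk and Fi,unk are derived; the class names and mass values are hypothetical.

```python
def classify(class_masses):
    """Return the class whose DI = T - F is largest.

    class_masses: {class_name: (T, F)} for one unseen document.
    """
    return max(class_masses, key=lambda c: class_masses[c][0] - class_masses[c][1])


# Hypothetical masses for one unseen document.
masses = {
    "sci.space": (0.6, 0.3),
    "rec.autos": (0.4, 0.5),
    "talk.politics.misc": (0.5, 0.1),
}
winner = classify(masses)
print(winner)
```

Note that the class with the largest supporting mass does not necessarily win: a low refuting mass can outweigh it.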

Text Classification

Experiment I

• Corpus used: 20 Newsgroups

– 20,000 USENET articles culled from 20 newsgroups.

– 19,600 articles to train classifiers, 400 to test.

– Relatively poor performance from classifiers due to nature of USENET postings.

• Jeffreys-Perks Law used to smooth statistics.

Classification Results

Inside Testing

[Figure: Text Classification - Inside Test. Classification accuracy (%) of NBAYES, BAF and PAS against degree of abstraction (0%, 10%, 20%).]

Classification Results

Outside Testing

[Figure: Text Classification - Outside Test. Classification accuracy (%) of NBAYES, BAF and PAS against degree of abstraction (0%, 10%, 20%).]

Classification Results

Overall

[Figure: Classification Results - Overall. Classification accuracy (%) of NBAYES, BAF and PAS against degree of abstraction (0%, 10%, 20%).]

Text Classification

Analysis

• Both BAF and Probabilistic

Argumentation Systems (PAS) perform

better than Naïve Bayes (NBAYES).

• BAF performs significantly better than

PAS for unseen documents.

• However performance for seen

documents is mixed. PAS and BAF

appear to have similar performance.

Text Classification

Experiment II

• Corpus Used: Reuters Newswire articles

– 2,000 articles in 25 categories for training.

– 500 articles for testing.

• Results:

– Similar to Experiment I

• Compared with PAS, mixed performance for seen data.

• Superior performance for unseen data.

• PAS and BAF both have superior performance to Naïve Bayes.

Text Classification

Conclusions

• Both BAF and PAS perform better than

Naïve Bayes.

• BAF and PAS have similar

performance for seen data.

• BAF has better performance over PAS

for unseen data.

Publications

C. K. Y. Tan, K. T. Lua, “Discourse Understanding

with Discourse Representation Theory and Belief

Augmented Frames”, 2nd International

Conference on Computational Intelligence,

Robotics and Autonomous Systems, Singapore,

2003.

C. K. Y. Tan, K. T. Lua, “Belief Augmented Frames

for Knowledge Representation in Spoken

Dialogue Systems”, 1st International Indian

Conference on Artificial Intelligence, Hyderabad,

India, 2003.

Publications

C. K. Y. Tan, “Text Classification using Belief

Augmented Frames”, 8th Pacific Rim International

Conference on Artificial Intelligence, Auckland,

2004.

C. K. Y. Tan, “Belief Augmented Frames”, Doctoral

Thesis, Department of Computer Science,

School of Computing, National University of

Singapore, 2003.

Current and Future Work

• Currently:

– Developing a BAF Reasoning Engine

• Future:

– Dialog Management using BAFs

– Automatic Text Classification

– AI Engine for Game Playing

Conclusion

• Use of belief measures to quantify uncertainty.

– Room for ignorance

• Use of Frames to organize knowledge.

– Frames represent objects or ideas in the world.

– Slot-filler pairs represent relations between frames.

– Relations are weighted by belief measures.

References

• [Shortliffe75] E. H. Shortliffe, B. G.

Buchanan, “A Model of Inexact Reasoning in

Medicine”, Mathematical Biosciences Vol 23,

pp 351-379, 1975.

• [Dempster67] A. P. Dempster, “Upper and

Lower Probabilities Induced by a Multivalued

Mapping”, The Annals of Mathematical

Statistics Vol 38 No 2, pp 325-339, 1967

References

• [Pollock00] J. L. Pollock, A. S. Gilles, “Belief

Revision and Epistemology”, Synthese 122,

pp 69-92, 2000.

• [Tan03] C. K. Y. Tan, K. T. Lua, “Discourse

Understanding with Discourse

Representation Theory and Belief

Augmented Frames”, 2nd International

Conference on Computational Intelligence,

Robotics and Autonomous Systems,

Singapore, 2003.