Text Classification with Belief Augmented Frames Colin Tan Department of Computer Science,

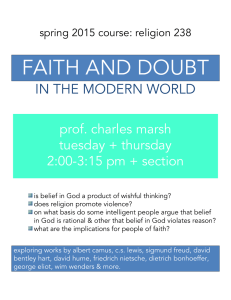

advertisement

Text Classification with

Belief Augmented Frames

Colin Tan

Department of Computer Science,

School of Computing,

National University of Singapore.

Outline

•

•

•

•

•

•

•

•

What are Belief Augmented Frames?

Motivation behind Belief Augmented Frames

Representing Beliefs in BAFs

Some Definitions

Belief Augmented Frame Logic (BAF-Logic)

Applying BAF-Logic to Text Classification

Experiment Protocol and Results

Conclusions

What are Belief Augmented

Frames?

• Belief Augmented Frames (BAF) combine

classical AI frames with belief measures.

– Frame-based system to structure knowledge

and relations between entities.

– Belief measures provide uncertain reasoning on

existence of entities and the relationships

between them.

Motivation behind Belief

Augmented Frames

• Why Belief Measures?

– Statistical Measures

• Standard tool for modeling uncertainty.

• Essentially, if the probability that a proposition E is true is p,

then the probability of that E is false is 1-p.

– P(E) = p

– P(not E) = 1-p

• This relationship essentially leaves no room for ignorance.

Either the proposition is true with a probability of p, or it is

false with a probability of 1-p.

• This can be counter-intuitive at times.

Motivation behind Belief

Augmented Frames

• Why Belief Measures?

– [Shortliffe75] cites a study in which, given a set

of symptoms, doctors were willing to declare

with certainty x that a patient was suffering

from a disease D, yet were unwilling to declare

with certainty 1-x that the patient was not

suffering from D.

Motivation behind Belief

Augmented Frames

• Why Belief Measures?

– To allow for ignorance our research focuses on

belief measures.

– The ability to model ignorance is inherent in

belief systems.

• E.g. in Dempster-Shafer Theory [Dempster67], if

our belief in E1 and E2 are 0.1 and 0.3 respectively,

then the ignorance is (1 – (0.1 + 0.3)) = 0.6.

Motivation behind Belief

Augmented Frames

• Why Frames?

– Frames are a powerful form of representation.

• Intuitively represents relationships between objects

using slot-filler pairs.

– Simple to perform reasoning based on relationships.

• Hierarchical

– Can perform generalizations to create general models

derived from a set of frames.

Example BAF

Donkey

0.6, 0.3

color

1.0, 0.0

Grey,

1.0, 0.0

owns

0.7, 0.2

Alice,

1.0, 0.0

walks

0.9, 0.1

Blue,

1.0, 0.0

color

1.0, 0.0

Dog

0.9, 0.0

location

1.0, 0.0

Bay,

1.0, 0.0

Belief Representation in Belief

Augmented Frames

• Beliefs are represented by two masses:

– φT: Belief mass supporting a proposition.

– φF: Belief mass refuting a proposition.

– In general φT + φF 1

• Room to model ignorance of the facts.

• Separate belief masses allow us to:

– Draw φT and φF from different sources.

– Have different chains of reasoning for φT and φF.

Belief Representation in Belief

Augmented Frames

• This ability to derive the refuting masses

from different sources and chains of

reasoning is unique to BAF.

– In Probabilistic Argumentation Systems (the

closest competitor to BAF) for example, p(not

E) = 1 – p(E).

Some Definitions

• Degree of Inclination

– The Degree of Inclination is defined as:

• DI = T - F

– DI is in the range of [-1, 1].

– One possible interpretation of DI:

-1

False

-0.75

Most

Probably

False

-0.5

Probably

False

-0.25

Likely

False

0

Ignorant

0.25

Likely

True

0.5

Probably

True

0.75

Most

Probably

True

1

True

Some Definitions

• Utility Value

– The Degree of Inclination DI can be re-mapped

to the range [0, 1] through the Utility function:

• U = (DI + 1) / 2

• By normalizing U across all relevant propositions it

becomes possible to use U as a statistical measure.

Belief Augmented Frame Logic

(BAF-Logic)

• Belief Augmented Frame Logic, or BAFLogic, is used for reasoning with BAFs.

• Throughout the remainder of this

presentation, we will consider two

propositions A and B, with supporting and

refuting masses TA, FA, TB, and FB.

Belief Augmented Frame Logic

(BAF-Logic)

• A B:

– TA B = min(TA, TB)

– FA B = max(FA, FB)

• A B:

– TA B = max(TA, TB)

– FA B = min(FA, FB)

• A:

– T A = F A

– F A = T A

Belief Augmented Frame Logic

(BAF-Logic)

• BAF-Logic properties that are identical to

Propositional Logic:

– Associativity, Commutativity, Distributivity,

Idempotency, Absorption, De-Morgan’s Theorem, elimination.

• Other properties of Propositional Logic work

slightly differently in BAF-Logic.

– In particular, some of the properties hold true only if the

constituent propositions are at least “probably true” or

“probably false”

• I.e. |DIP | 0.5

Belief Augmented Frame Logic

(BAF-Logic)

• An Example:

– Given the following propositions in your

knowledge base:

• KB = {(A, 0.7, 0.2), (B, 0.9, 0.1), (C, 0.2, 0.7), (A

B R, TONE , FONE,), (A B R, TONE ,

FONE)}

• We want to derive TR, FR.

Belief Augmented Frame Logic

(BAF-Logic)

• Combining our clauses regarding R, we

obtain:

– R = (A B) (A B)

• = A B ( A B)

• With De-Morgan’s Theorem we can derive

R:

– R= A B (A B)

Belief Augmented Frame Logic

(BAF-Logic)

• TR = min(TA , TB , max(FA , TB ))

= min(0.7, 0.9, max(0.2, 0.9))

= min(0.7, 0.9, 0.9)

= 0.7

• FR = max(FA , FB , min(TA , FB ))

= max(0.2, 0.1, min(0.7, 0.1))

= max(0.2, 0.1, 0.1)

= 0.2

Belief Augmented Frame Logic

(BAF-Logic)

• DIR

= TR - FR

= 0.7 – 0.2

= 0.5

• UR

= (1 + 0.5) / 2.0

= 0.75

• Suppose now it is known that B C R

Belief Augmented Frame Logic

(BAF-Logic)

• Combining our clauses regarding R, we

obtain:

– R = (A B) (B C) (A B)

= A B C ( A B)

• With De-Morgan’s Theorem we can derive

R:

– R= A B C (A B)

Belief Augmented Frame Logic

(BAF-Logic)

• TR = min(TA , TB , TC , max(FA , TB ))

= min(0.7, 0.9, 0.2, max(0.2, 0.9))

= min(0.7, 0.9, 0.2, 0.9)

= 0.2

• FR = max(FA , FB , FC , min(TA , FB ))

= max(0.2, 0.1, 0.7, min(0.7, 0.1))

= max(0.2, 0.1, 0.7, 0.1)

= 0.7

Belief Augmented Frame Logic

(BAF-Logic)

• DIR

= TR - FR

= 0.2 – 0.7

= -0.5

• UR

= (1 - 0.5) / 2.0

= 0.25

• Here the new evidence that B C R fails

to support R, because C is not true (DIC = 0.5)

Text Classification

First Approach

• First Formulation:

– Using Individual Word Scores

– Assuming that a document di belongs to a class

ck, then for every term tij the following relation

holds:

di ck (ti0 ck ti1 ck ti2 ck …

ti,n-1 ck)

Text Classification

First Approach

• Likewise, for a document di not belonging

to a class ck, we can derive:

di ck m, mk (ti0 cm ti1 cm ti2

cm … ti,n-1 cm)

• These can be formulated in BAF-Logic:

Tdi ck = min(p(ck | ti0), p(ck | ti1), …, p(ck | ti, n-1))

Fdi ck = max(min(p(cm | ti0), p(cm | ti1), …,

p(cm | ti, n-1)), min(p(cn|ti0),

p(cn|ti1),…,p(cn|ti,n-1)), …)), m, n etc k

Text Classification

First Approach

• The final score of a document di belong to

class cj is given by:

U d i ck

1.0 DI d i ck

2

• Where:

DI d i ck dTi ck dFi ck

Text Classification

First Approach

• Individual term probabilities are derived

using Bayesian probabilities:

p(ck | tij )

p(tij | ck ) p(ck )

p(tij )

Text Classification

Second Approach

• We classify the entire document using

Naïve Bayes assumption:

p( ck | d i ) p( ck | tij )

j

• Trivial to derive the supporting score that di

ck.

– It is simply p(ck | di)

Text Classification

Second Approach

• Formulating the Refuting Score is

straightforward too:

di ck di cm di cn di cp…, m, n, p, etc k

• We can formulate both supporting and

refuting scores in BAF-Logic:

T

d i ck p(ck | di )

dF c max( p(cm | di ), p(cn | di ), p(c p | di ),...)

i

k

Text Classification

Second Approach

• We retain the definitions of DI and U from

the first approach.

Experiment Protocol

• Using Andrew McCallum’s “Bag of Words”

or BOW library.

– Extended “rainbow”, the text-classification

front-end, with two BAF classification

methods.

• Methods are called BAF1 and BAF2

– Also extended with two PAS methods (see

paper for more details)

• Methods are called PAS1 and PAS2

Experiment Protocol

• Corpus:

– 20 Newsgroups

– 80% (16,000) documents used to generate

statistics.

– 20% (4,000) documents used for testing

– Choice of documents for training/testing

handled by BOW

– Headers removed from all documents

Experiment Protocol

• Trials

– 10 trials were performed using each

classification method.

• Naïve Bayes, tf.idf, kNN, EMM, Max entropy,

Probabilistic Indexing, BAF1, BAF2, PAS1, PAS2

– The average was taken from the 10 trials for

each method.

Experiment Results

Text Classification Accuray

100

90

82.09 79.88

82.01 81.15

80

68.98

70

65.87 67.46

60

50

35.17

40

30

20

10

Method

PA

S2

PA

S1

BA

F2

BA

F1

AX

EN

T

PR

IN

D

M

EM

kN

N

id

f

tf.

Ba

ye

s

0

Na

ïv

e

Accuracy (%)

82.36

77.73

Analysis

• BAF1 performs poorly.

– Using individual word scores appears to be a poor idea.

• BAF2 performs very well.

– Better than the other methods attempted.

• BAF2 Performance slightly better than Naïve

Bayers

– Appears that considering a document to belong to

another class has a positive effect on classification

scores.

Conclusion

• Experiment results show that the use of BAFLogic to classify documents might be a good idea.

• In addition there are features of BAFs (e.g.

daemons attached to slots) that might enhance

classification performance further.

• More work should be done on this.

– Understanding better why BAF-Logic works for text

classification.

– Improving classification performance.