L17_WRAPUP.ppt

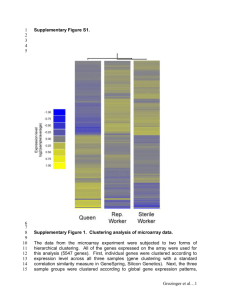

Lecture 15: Wrap-up of class

What we intended to do and what we have done

Topics:
• What is the biological problem at hand?
• Types of data: microarray, proteomic, RNA-seq, GWAS
• Why and when does one use them?

Sources of variation led us to our next topic

Statistical issues concerning:
• a. Normalization of data
• b. Stochastic error versus systematic error

Normalization
• It is VERY important that we realize WHY we normalize data, as opposed to HOW to normalize data. I am including background correction along with normalization here.
• The pros and cons of normalizing versus not normalizing.
• What normalizing is theoretically supposed to do and WHAT it actually does.

Statistical topics:
• LOESS
• Quantile normalization
• Tukey biweight
• Wilcoxon signed-rank test

Now come the QUESTIONS OF INTEREST:
• What are the genes that are different for the healthy versus diseased cells?
  – Gene discovery, differential expression
• Is a specified group of genes all up-regulated in a specified condition?
  – Gene set differential expression
• We did not get much time for this, but it can be included in clustering after DE.

Tests we talked about

For 2 conditions:
• Pooled t test
• Welch's t test
• Wilcoxon rank-sum test
• Permutation test
• Bootstrap t test
• Empirical Bayes (EB) test

Announcement
• I am totally voiceless today
• So we will present as follows:
  – Andrew
  – Cameron
  – Lili
  – Huinan
  – Ben
  – Amit
  – Xin

Contd…
• David
• Chongjin
• Jie
• Jeff
• Miaoru
• Jillian
• Jeff

Tests contd

For multiple conditions:
• ANOVA F test
• Kruskal-Wallis test
• Empirical Bayes (EB) test

Multiplicity:
• The question of multiplicity adjustment: which error rate do we control, FWE (family-wise), PCE (per-comparison), or FDR (false discovery rate)?
• Bonferroni corrections
• False discovery rates (FDR)
• Sequential Bonferroni (the Holm adjustment)
• Bootstrapping and permutation adjustments

Class discovery, clustering
• To do clustering we need a distance metric and a linkage method.
• We can have hierarchical or non-hierarchical clustering.
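To make the roles of the distance metric and the linkage method concrete, here is a minimal sketch of naive agglomerative (hierarchical) clustering using only the Python standard library. The function names (`agglomerate`, `euclidean`, `manhattan`), the `LINKAGES` table, and the toy `profiles` data are all made up for illustration; they are not from the course material, and a real analysis would use an established package.

```python
import math
from itertools import combinations

def euclidean(a, b):
    """Straight-line distance between two expression profiles."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def manhattan(a, b):
    """Sum of absolute coordinate differences."""
    return sum(abs(x - y) for x, y in zip(a, b))

# A linkage rule summarises all pairwise distances between two clusters.
LINKAGES = {
    "single": min,                            # closest pair
    "complete": max,                          # farthest pair
    "average": lambda ds: sum(ds) / len(ds),  # mean over all pairs
}

def agglomerate(points, n_clusters, distance=euclidean, linkage="average"):
    """Merge the two closest clusters until n_clusters remain.

    Returns a list of clusters, each a sorted list of point indices.
    """
    link = LINKAGES[linkage]
    n_clusters = max(1, n_clusters)           # guard against asking for 0 clusters
    clusters = [[i] for i in range(len(points))]
    while len(clusters) > n_clusters:
        best = None
        for i, j in combinations(range(len(clusters)), 2):
            ds = [distance(points[p], points[q])
                  for p in clusters[i] for q in clusters[j]]
            d = link(ds)
            if best is None or d < best[0]:
                best = (d, i, j)
        _, i, j = best                        # i < j, so deleting j is safe
        clusters[i] = clusters[i] + clusters[j]
        del clusters[j]
    return [sorted(c) for c in clusters]

# Hypothetical toy data: five samples measured on two genes.
profiles = [(1.0, 1.1), (1.2, 0.9), (5.0, 5.2), (5.1, 4.9), (9.0, 1.0)]
for name in ("single", "complete"):
    print(name, agglomerate(profiles, 2, linkage=name))
```

On this toy data, single and complete linkage agree at three clusters but split the samples differently at two clusters. That is the practical reason the choice of linkage (and of distance) has to be made deliberately.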
• Non-hierarchical clustering: partitioning methods (need to know the number of clusters)
• Hierarchical clustering: produces a tree diagram (dendrogram)

Distance and Linkages

Distance:
• Euclidean
• Manhattan
• Mahalanobis
• Correlation

Linkages:
• Complete
• Single
• Centroid
• Average

Class prediction, classification
• Are there tumour sub-types not previously identified?
• Do my genes group into previously undiscovered pathways?

LDA
• Feature selection: gene filtering
  – Differential expression
  – PCA
  – Penalized least squares
• Choosing the rules
  – Parametric ones:
    • Likelihood rule
    • Linear discriminant rule
    • Mahalanobis rule
    • Posterior probability rule
    • The general classification rule (using cost of misclassification and priors)

Misclassifications
  – Non-parametric ones:
    • K-NN
• Estimating misclassification rates
  – Resubstitution
  – Hold-out samples
  – Cross-validation / jackknife

This is just the beginning of this journey
• Remember you still have loads to learn
• You have to keep reading and be willing to incorporate new ideas
• Thanks a bunch for sharing this journey with me!
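As a recap of the misclassification-rate estimates listed above, the sketch below contrasts resubstitution with leave-one-out cross-validation for a K-NN rule. It uses only the Python standard library. The function names and the toy data (including one "diseased" sample deliberately placed inside the "healthy" group) are assumptions made for illustration, not course material.

```python
import math
from collections import Counter

def knn_predict(train_x, train_y, query, k=3):
    """Majority vote among the k nearest training samples (Euclidean distance)."""
    order = sorted(range(len(train_x)),
                   key=lambda i: math.dist(train_x[i], query))
    votes = Counter(train_y[i] for i in order[:k])
    return votes.most_common(1)[0][0]

def resubstitution_error(x, y, k=3):
    """Error rate when every sample is classified by a rule built on all samples."""
    wrong = sum(knn_predict(x, y, xi, k) != yi for xi, yi in zip(x, y))
    return wrong / len(x)

def loocv_error(x, y, k=3):
    """Leave-one-out cross-validation: each sample is held out once."""
    wrong = 0
    for i in range(len(x)):
        rest_x = x[:i] + x[i + 1:]
        rest_y = y[:i] + y[i + 1:]
        wrong += knn_predict(rest_x, rest_y, x[i], k) != y[i]
    return wrong / len(x)

# Hypothetical toy data: seven samples measured on two genes.
# The last "diseased" sample sits inside the "healthy" group on purpose.
x = [(1.0, 1.0), (1.2, 0.8), (0.9, 1.3),
     (3.0, 3.1), (3.2, 2.9), (2.9, 3.3),
     (1.1, 1.1)]
y = ["healthy", "healthy", "healthy",
     "diseased", "diseased", "diseased",
     "diseased"]

print("resubstitution:", resubstitution_error(x, y, k=1))
print("leave-one-out: ", loocv_error(x, y, k=1))
```

With k = 1 and no duplicated points, each sample is its own nearest neighbour, so the resubstitution error is zero by construction. The leave-one-out estimate is higher here because the held-out sample cannot vote for itself, which is why resubstitution is regarded as optimistic.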