Week 14 - Reinforcement Learning

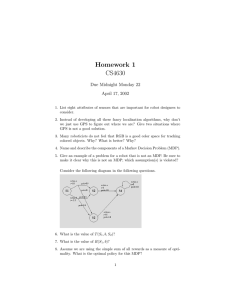

CS188 Discussion Week 14: Reinforcement Learning
By Nuttapong Chentanez

Markov Decision Process (MDP)
- Consists of a set of states $s \in S$
- Transition model $T(s, a, s') = P(s' \mid s, a)$: the probability that executing action $a$ in state $s$ leads to $s'$
- Reward function $R(s, a, s')$
  - Can also write $R_{enter}(s')$ for the reward for entering $s'$
  - Or $R_{in}(s)$ for the reward for being in $s$
- A start state (or a distribution over start states)
- Possibly terminal states

Bellman's Equation
- Idea: optimal rewards = maximize over the first action, then follow the optimal policy
- $V^*(s) = \max_a \sum_{s'} T(s, a, s') \, [R(s, a, s') + \gamma V^*(s')]$, where $\gamma$ is the discount factor

Value Iteration
- Theorem: converges to a unique set of optimal values
- The policy may converge long before the values do

Policy Iteration
- Policy evaluation: calculate utilities for a fixed policy
- Policy improvement: update the policy based on the resulting converged utilities
- Repeat until the policy does not change
- In practice, there is no need to compute the "exact" utility of a policy; a rough estimate is enough

Want to compute the values. How?
- Simple Monte-Carlo (sampling)
- Combine dynamic programming with Monte-Carlo
  - One step of sampling, then use the current state value
  - Also known as Temporal Difference (TD) learning

Reinforcement Learning
- Still have an MDP:
  - A set of states $s \in S$
  - A set of actions (per state) $A$
  - A model $T(s, a, s')$
  - A reward function $R(s, a, s')$
- Still looking for a policy $\pi(s)$
- However, the agent doesn't know $T$ or $R$
- Must actually try actions in states to learn

Reinforcement Learning in Animals
- Studied experimentally in psychology for more than 60 years
- Rewards: food, pain, hunger, etc.
- Example: bees learn a near-optimal foraging plan in a field of artificial flowers
- Dolphin training

Model-Based Learning
- Can try to learn $T$ and $R$ first, then solve the MDP using the learned model
- Simplest case:
  - Count outcomes for each $(s, a)$
  - Normalize to give an estimate of $T(s, a, s')$
  - Discover $R(s, a, s')$ the first time we experience $(s, a, s')$

Passive Learning
- Given a policy $\pi(s)$, try to learn $V^\pi(s)$ without knowing $T$ or $R$

TD Features
- On-line, incremental, bootstrapping (uses the information learned so far)
- Model-free
- Converges as long as the learning rate $\alpha$ decreases over time, e.g. $\alpha = 1/k$, and $\gamma < 1$

Problems with TD
- TD learns the value of states under a given policy
- If we want to learn the optimal policy, it won't work
- Idea: learn values of state-action pairs (Q-values) instead

Q-Functions
- $Q^\pi(s, a)$: utility of starting at state $s$, taking action $a$, then following $\pi$ thereafter
- $Q^*(s, a)$: Q-value of the optimal policy, i.e. the utility of starting at state $s$, taking action $a$, then following the optimal policy afterward

Q-Learning Algorithm
- Applies the TD idea to learn $Q^*$
- Learn $Q^*(s, a)$ values:
  - Receive a sample $(s, a, s', r)$
  - Consider your old estimate: $Q(s, a)$
  - Consider your new sample estimate: $\text{sample} = r + \gamma \max_{a'} Q(s', a')$
  - Modify the old estimate towards the new sample: $Q(s, a) \leftarrow Q(s, a) + \alpha \, [\text{sample} - Q(s, a)]$
  - Equivalently, average samples over time: $Q(s, a) \leftarrow (1 - \alpha) \, Q(s, a) + \alpha \cdot \text{sample}$
- Converges as long as $\alpha$ decreases over time, e.g. $\alpha = 1/k$, and $\gamma < 1$

Exploration vs. Exploitation
- "If you always take the best current action, you will never explore other actions and never know whether there is a better action"
- Explore initially, then exploit later
- Many schemes exist for balancing exploration vs. exploitation
- Simplest: random actions ($\epsilon$-greedy)
  - Every time step, flip a coin
  - With probability $\epsilon$, act randomly
  - With probability $1 - \epsilon$, act according to the current policy (for instance, the best Q-value)
  - Will explore the space, but keeps taking random actions even after the learning is "done"
- A solution: lower $\epsilon$ over time
- Another solution: exploration function
  - Idea: instead of exploring a fixed amount, explore areas whose values are not yet established
  - E.g. $f(u, n) = u + k/n$, where $u$ is the value estimate, $n$ is the visit count, and $k$ is a constant

Practical Q-Learning
- In realistic situations there are too many states to visit; there may even be infinitely many
- Need to learn from a small amount of training data
- Need to generalize to new, similar states
- This is a fundamental problem in machine learning

Function Approximation
- Inefficient or infeasible to learn a Q-value for each state-action pair
- Suppose we approximate $Q(s_t, a_t)$ with a function $f$ with parameters $\theta$
- Can do a gradient-descent update: $\theta_i \leftarrow \theta_i + \alpha \, [v_t - Q(s_t, a_t)] \, \frac{\partial Q(s_t, a_t)}{\partial \theta_i}$
  - $v_t$ is the reward received
  - Idea: the gradient indicates the direction of change in $\theta$ that most increases $Q$
  - We want $Q$ to look more like $v_t$, so modify each parameter depending on whether increasing or decreasing it will make $Q$ more like $v_t$
- In this simple form it does not always work, but the idea is on the right track
- Feature selection is difficult in general

Project time
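
The Q-learning update and epsilon-greedy exploration described in these notes can be sketched in a few lines of Python. This is only a minimal illustration under stated assumptions: the 4-state chain environment, the names `step`, `q_learning`, `ACTIONS`, and `TERMINAL`, the discount of 0.9, and the schedules epsilon = 1/episode and alpha = 1/(visit count) are all hypothetical choices made for the example, not part of the original handout.

```python
import random
from collections import defaultdict

# Hypothetical toy problem: a chain of states 0..3, where state 3 is terminal.
ACTIONS = ["left", "right"]
TERMINAL = 3

def step(s, a):
    """Environment the agent can only sample from; returns (s', r)."""
    s2 = min(s + 1, TERMINAL) if a == "right" else max(s - 1, 0)
    r = 1.0 if s2 == TERMINAL else 0.0  # reward for entering the terminal state
    return s2, r

def q_learning(episodes=200, gamma=0.9, seed=0):
    rng = random.Random(seed)
    Q = defaultdict(float)     # Q[(s, a)], all estimates start at 0
    visits = defaultdict(int)  # visit count k per (s, a), used for alpha = 1/k
    for ep in range(1, episodes + 1):
        epsilon = 1.0 / ep     # lower epsilon over time
        s = 0
        while s != TERMINAL:
            # Epsilon-greedy: act randomly with probability epsilon,
            # otherwise take the action with the best current Q-value.
            if rng.random() < epsilon:
                a = rng.choice(ACTIONS)
            else:
                a = max(ACTIONS, key=lambda b: Q[(s, b)])
            s2, r = step(s, a)  # receive a sample (s, a, s', r)
            # New sample estimate: r + gamma * max_a' Q(s', a')
            sample = r + gamma * max(Q[(s2, b)] for b in ACTIONS)
            # Move the old estimate towards the sample with a decaying rate.
            visits[(s, a)] += 1
            alpha = 1.0 / visits[(s, a)]
            Q[(s, a)] += alpha * (sample - Q[(s, a)])
            s = s2
    return Q

if __name__ == "__main__":
    Q = q_learning()
    for s in range(TERMINAL):
        best = max(ACTIONS, key=lambda b: Q[(s, b)])
        print(s, best, round(Q[(s, best)], 3))
```

Note that the agent never reads the transition or reward rules directly; it only calls `step` and learns from the samples it gets back. Because alpha = 1/k averages in the early, uninformed samples, the estimates for states far from the goal can approach their true values slowly, so the printed greedy policy is best read as a snapshot rather than a guaranteed optimum.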