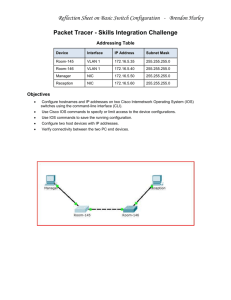

Cisco Service Delivery Center Infrastructure 2.1 Design Guide
