Chapter 1: Introduction to Statistical Methods

“The true logic of this world is in the calculus of probabilities.”

James Clerk Maxwell

Introductory Remarks

• This course: The Physics of systems containing HUGE numbers ($\sim 10^{23}$) of particles: solids, liquids, gases, E&M radiation (photons), ….

• Challenge: Describe a system’s Macroscopic characteristics from a Microscopic theory.

Classical: Newton’s Laws. We would need to solve $\sim 10^{23}$ coupled differential equations of motion (ABSURD!!)

Quantum: Schrödinger’s Equation. We would need to solve it for $\sim 10^{23}$ particles (ABSURD!!)

Instead, use a Statistical description of such a system: talk about Probabilities & Average Properties. We are NOT concerned with the detailed behavior of individual particles.

• Definitions:

Microscopic: ~ Atomic dimensions (≲ a few Å)

Macroscopic: Large enough to be “visible” in the “ordinary” sense

Definitions

• An Isolated System is in Equilibrium when its Macroscopic parameters are time-independent. This is the usual case in this course!

• But, note! Even if its Macroscopic parameters are time-independent, a system’s Microscopic parameters can still vary with time!

The Random Walk & The Binomial Distribution

Section 1.1: Elementary Statistical Concepts; Examples

• Some math preliminaries (& methods) for the next few lectures.

• To treat statistical physics problems, we must first know something about the mathematics of Probability & Statistics. The following should be a review! (?)

• Keep in mind: Whenever we want to describe a situation using probability & statistics, we must consider an assembly of a large number $\mathcal{N}$ (in principle, $\mathcal{N} \to \infty$) of “similarly prepared systems”. This assembly is called an ENSEMBLE (“Ensemble” = the French word for assembly).

• The Probability of an occurrence of a particular event is DEFINED with respect to this particular ensemble & is given by the fraction of systems in the ensemble characterized by the occurrence of this event.

• Example: In throwing a pair of dice, we can give a statistical description by considering that a very large number $\mathcal{N}$ of similar pairs of dice are thrown under similar circumstances. Alternatively, we could imagine the same pair of dice thrown $\mathcal{N}$ times under similar circumstances. The probability of obtaining two 1’s is then given by the fraction of these experiments in which two 1’s is the outcome.

• Note that this probability depends strongly on the nature of the ensemble to which we are referring.

• Reif’s flower seed example (p. 5).

• To quantitatively introduce probability concepts, we use a specific, simple example, which is actually much more general than you first might think.

• The example is called The Random Walk Problem.

One-Dimensional Random Walk

In its simplest, idealized form, the random walk problem can be viewed as in the figure above: A drunk starts out from a lamp post on a street. Each step he takes is of equal length ℓ. The man is SO DRUNK that the direction of each step (right or left) is completely independent of the preceding step. The probability of stepping to the right is p & of stepping to the left is q = 1 − p. In general, q ≠ p. The x axis is along the sidewalk, with the lamp post at x = 0. Since each step is of length ℓ, his location on the x axis must be x = mℓ, where m is a positive or negative integer (or zero).

• Question: After N steps, what is the probability that the man is at a specific location x = mℓ (m specified)?

To answer, we first consider an ensemble of a large number $\mathcal{N}$ of drunk men starting from similar lamp posts. Or, equivalently, we repeat the walk with the same drunk man on the sidewalk $\mathcal{N}$ times.

• This can be easily generalized to 2 dimensions. See figure to the right: The 2 dimensional random walk corresponds to the PHYSICS problem of adding N 2-dimensional vectors of equal length (see figure) & random directions & asking: What is the probability that the resultant has a certain magnitude & direction?

Physical Examples to which the Random Walk Problem applies

1. Magnetism: N atoms, each with magnetic moment μ. Each has spin ½. By Quantum Mechanics, each magnetic moment can point either “up” or “down”. If these are equally likely, what is the Net magnetic moment of the N atoms?

2. Diffusion of a Molecule of Gas: A molecule travels in 3 dimensions with a mean distance ℓ between collisions. How far is it likely to have traveled after N collisions? Answer using Classical Mechanics.

The Random Walk Problem

• The Random Walk Problem illustrates some fundamental results of Probability Theory.

• The techniques used are Powerful & General. They are used repeatedly throughout Statistical Mechanics. So, it’s very important to spend some time on this problem & understand it!

Section 1.2: 1 Dimensional Random Walk

• Forget the drunk, let’s get back to Physics! Think of a particle moving in 1 dimension in steps of length ℓ, with probability p of stepping to the right & q = 1 − p of stepping to the left. After N steps, the particle is at position x = mℓ (−N ≤ m ≤ N).

Let $n_1 \equiv$ # of steps to the right (of N) and $n_2 \equiv$ # of steps to the left. Clearly,

$N = n_1 + n_2$   (1)

Clearly also, $x \equiv m\ell = (n_1 - n_2)\ell$, or

$m = n_1 - n_2$   (2)

Combining (1) & (2) gives

$m = 2n_1 - N$   (3)

Thus, if N is odd, so is m, and if N is even, so is m.

A Fundamental Assumption is that successive steps are statistically independent.

• Let p ≡ the probability of stepping to the right and q = 1 – p ≡ the probability of stepping to the left.

• Since each step is statistically independent, the probability of a given sequence of $n_1$ steps to the right followed by $n_2$ steps to the left is given by multiplying the respective probabilities for each step:

$\underbrace{p \cdot p \cdots p}_{n_1 \text{ factors}} \cdot \underbrace{q \cdot q \cdots q}_{n_2 \text{ factors}} = p^{n_1} q^{n_2}$

• But, clearly, there are also MANY different possible ways of taking N steps so that $n_1$ are to the right & $n_2$ are to the left!

• The # of distinct possibilities is the SAME as counting the # of distinct ways we can place N objects, $n_1$ of one type & $n_2$ of another, in $N = n_1 + n_2$ places:

1st place: Can be occupied any one of N ways

2nd place: Can be occupied any one of N − 1 ways

3rd place: Can be occupied any one of N − 2 ways

⋮

(N − 1)th place: Can be occupied only 2 ways

Nth place: Can be occupied only 1 way

All available places can be occupied in:

$N(N-1)(N-2)(N-3)\cdots(3)(2)(1) \equiv N!$ ways

N! ≡ “N-Factorial”

• Note However! This analysis doesn’t take into account the fact that there are only 2 distinguishable kinds of objects: $n_1$ of the 1st type & $n_2$ of the 2nd type.

All $n_1!$ possible permutations of the 1st type of object among themselves lead to exactly the same arrangement, so each distinct arrangement appears $n_1!$ times among the N! orderings counted above. Similarly, all $n_2!$ possible permutations of the 2nd type of object also lead to exactly the same arrangement.

So, we need to divide the result N! by $n_1!\,n_2!$.

So, the # of distinct ways in which N objects can be arranged, with $n_1$ of the 1st type & $n_2$ of the 2nd type, is

$\frac{N!}{n_1!\,n_2!}$

This is the same as the # of distinct ways of taking N steps, with $n_1$ to the right & $n_2$ to the left.
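As a quick check of the counting argument, the sketch below (standard-library Python) brute-forces the number of distinct orderings of $n_1$ “R” objects and $n_2$ “L” objects and compares it with $N!/(n_1!\,n_2!)$. The function names are illustrative, not from the text, and brute force is only practical for small N because it enumerates all N! orderings.

```python
from itertools import permutations
from math import comb, factorial

def distinct_arrangements(n1: int, n2: int) -> int:
    """Brute-force count of distinct orderings of n1 'R' and n2 'L' objects."""
    objects = "R" * n1 + "L" * n2
    # permutations() treats every object as distinguishable (N! tuples);
    # the set merges orderings that differ only by swapping identical objects.
    return len(set(permutations(objects)))

def counting_formula(n1: int, n2: int) -> int:
    """N! / (n1! n2!) with N = n1 + n2."""
    return factorial(n1 + n2) // (factorial(n1) * factorial(n2))

for n1, n2 in [(2, 1), (3, 2), (4, 4)]:
    print(n1, n2, distinct_arrangements(n1, n2), counting_formula(n1, n2), comb(n1 + n2, n1))
```

Each row shows the brute-force count, the formula, and Python’s built-in `math.comb`, which computes the same binomial coefficient.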

In Summary:

The probability $W_N(n_1)$ of taking N steps, with $n_1$ to the right & $n_2 = N - n_1$ to the left, is

$W_N(n_1) = \frac{N!}{n_1!\,n_2!}\, p^{n_1} q^{n_2}$, or

$W_N(n_1) = \frac{N!}{n_1!\,(N-n_1)!}\, p^{n_1} (1-p)^{N-n_1}$

Remember that q = 1 − p.

• Often, this is written as

$W_N(n_1) = \binom{N}{n_1} p^{n_1} q^{n_2}$

• This probability distribution is called the Binomial Distribution.

• This is because the Binomial Expansion has the form

$(p+q)^N = \sum_{n_1=0}^{N} \frac{N!}{n_1!\,(N-n_1)!}\, p^{n_1} q^{N-n_1}$
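A minimal numerical sketch of the binomial distribution $W_N(n_1)$, assuming Python’s `math.comb` for the binomial coefficient; the function name `W` and the values N = 10, p = 0.3 are arbitrary choices for illustration. Summing over all $n_1$ reproduces $(p+q)^N = 1$ up to floating-point rounding.

```python
from math import comb

def W(N: int, n1: int, p: float) -> float:
    """Binomial probability W_N(n1) of n1 right steps (and N - n1 left steps)."""
    q = 1.0 - p
    return comb(N, n1) * p**n1 * q**(N - n1)

N, p = 10, 0.3
total = sum(W(N, n1, p) for n1 in range(N + 1))
print(total)   # equals (p + q)**N = 1 up to floating-point rounding
```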

• We really want the probability $P_N(m)$ that x = mℓ after N steps. This is really the same as $W_N(n_1)$ if we change notation:

$P_N(m) = W_N(n_1)$

But $m = 2n_1 - N$, so $n_1 = \tfrac{1}{2}(N + m)$ & $n_2 = N - n_1 = \tfrac{1}{2}(N - m)$.

So the probability $P_N(m)$ that x = mℓ after N steps is:

$P_N(m) = \frac{N!}{[\frac{1}{2}(N+m)]!\,[\frac{1}{2}(N-m)]!}\, p^{(N+m)/2} (1-p)^{(N-m)/2}$

For the common case of p = q = ½, this is:

$P_N(m) = \frac{N!}{[\frac{1}{2}(N+m)]!\,[\frac{1}{2}(N-m)]!} \left(\tfrac{1}{2}\right)^N$

This is the usual form of the Binomial Distribution, which is probably the most elementary (discrete) probability distribution.
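The change of variables $n_1 = \tfrac{1}{2}(N+m)$, $n_2 = \tfrac{1}{2}(N-m)$ can be sketched as a small Python function. One detail the formula leaves implicit: $P_N(m) = 0$ whenever m has the opposite parity to N (or |m| > N), since then $n_1$ is not a non-negative integer. The name `P` and the default p = ½ are assumptions for illustration.

```python
from math import comb

def P(N: int, m: int, p: float = 0.5) -> float:
    """Probability P_N(m) that the walker is at x = m*ell after N steps."""
    # Allowed m: -N <= m <= N with the same parity as N; otherwise
    # n1 = (N + m)/2 is not a non-negative integer and P_N(m) = 0.
    if abs(m) > N or (N + m) % 2 != 0:
        return 0.0
    n1 = (N + m) // 2          # steps to the right
    n2 = (N - m) // 2          # steps to the left
    return comb(N, n1) * p**n1 * (1 - p)**n2

print([P(4, m) for m in range(-4, 5)])
# [0.0625, 0.0, 0.25, 0.0, 0.375, 0.0, 0.25, 0.0, 0.0625]
```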

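• As a trivial example, suppose that p = q = ½, N = 3 steps:

$P_3(m) = \frac{3!}{[\frac{1}{2}(3+m)]!\,[\frac{1}{2}(3-m)]!} \left(\tfrac{1}{2}\right)^3$

So

$P_3(3) = P_3(-3) = \frac{3!}{3!\,0!}\left(\tfrac{1}{8}\right) = \tfrac{1}{8}$

| Possible Step Sequences (R = right, L = left) | $n_1$ | $n_2$ | $m = n_1 - n_2$ |
|---|---|---|---|
| RRR | 3 | 0 | 3 |
| RRL, RLR, LRR | 2 | 1 | 1 |
| RLL, LRL, LLR | 1 | 2 | −1 |
| LLL | 0 | 3 | −3 |

$P_3(1) = P_3(-1) = \frac{3!}{2!\,1!}\left(\tfrac{1}{8}\right) = \tfrac{3}{8}$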
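The N = 3 table can also be generated by brute force: enumerate all $2^3 = 8$ equally likely step sequences (each step ±1 in units of ℓ), tally the final m, and divide by 8. This Python sketch uses exact fractions so the results match ⅛ and ⅜ exactly.

```python
from collections import Counter
from fractions import Fraction
from itertools import product

N = 3
counts = Counter()
for steps in product((+1, -1), repeat=N):   # all 2**N equally likely sequences (p = q = 1/2)
    counts[sum(steps)] += 1                  # m = n1 - n2 = sum of the +/-1 steps

P3 = {m: Fraction(c, 2**N) for m, c in sorted(counts.items())}
print(P3)   # m = 0, +/-2 never occur for odd N
```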

• As another example, suppose that p = q = ½, N = 20:

$P_{20}(m) = \frac{20!}{[\frac{1}{2}(20+m)]!\,[\frac{1}{2}(20-m)]!} \left(\tfrac{1}{2}\right)^{20}$

• Evaluating this for each allowed m gives the histogram.

Note: The envelope of the histogram in the figure is a bell-shaped curve. The significance of this is that, after N random steps, the probability of the particle being N steps away from the start is very small, while the probability of it being at or near the origin is relatively large:

$P_{20}(20) = \frac{20!}{20!\,0!}\left(\tfrac{1}{2}\right)^{20} \approx 9.5 \times 10^{-7}$

$P_{20}(0) = \frac{20!}{(10!)^2}\left(\tfrac{1}{2}\right)^{20} \approx 1.8 \times 10^{-1}$
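A short Python sketch that evaluates $P_{20}(m)$ for all allowed m (even m from −20 to 20), reproduces the two values quoted above, and prints a crude text version of the histogram; the bar scale factor of 200 is an arbitrary choice.

```python
from math import comb

N = 20
# Only even m (same parity as N) are reachable; n1 = (N + m) / 2.
P20 = {m: comb(N, (N + m) // 2) / 2**N for m in range(-N, N + 1, 2)}

print(f"P20(20) = {P20[20]:.2e}")   # 9.54e-07  (= 1 / 2**20)
print(f"P20(0)  = {P20[0]:.3f}")    # 0.176     (= 184756 / 2**20)

for m, prob in P20.items():         # text histogram: bar length proportional to P20(m)
    print(f"{m:+3d} {'#' * round(200 * prob)}")
```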

Sect. 1.3: General Discussion of Mean Values

• The Binomial Distribution is only one example of a probability distribution. Now, we’ll begin a discussion of a General Distribution.

• Most of the following results are valid for ANY probability distribution.

• Let u be a variable which can take on any of M discrete values $u_1, u_2, u_3, \ldots, u_{M-1}, u_M$ with probabilities $P(u_1), P(u_2), P(u_3), \ldots, P(u_{M-1}), P(u_M)$.

• The Mean (average) value of u is defined as $\bar{u} \equiv \langle u \rangle \equiv S_2/S_1$, where

$S_1 \equiv P(u_1) + P(u_2) + P(u_3) + \cdots + P(u_{M-1}) + P(u_M) \equiv \sum_i P(u_i)$

$S_2 \equiv u_1 P(u_1) + u_2 P(u_2) + u_3 P(u_3) + \cdots + u_{M-1} P(u_{M-1}) + u_M P(u_M) \equiv \sum_i u_i P(u_i)$

For a properly normalized distribution, $S_1 = \sum_i P(u_i) = 1$. We assume this from now on.

• Sometimes, ū is called the 1 st moment of P(u).

• If O(u) is any function of u, the mean value of O(u) is:

$\bar{O} \equiv \langle O \rangle \equiv \sum_i O(u_i) P(u_i)$
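The definitions $\bar{u} = S_2/S_1$ and $\langle O \rangle = \sum_i O(u_i)P(u_i)$ translate directly into code. In this sketch (the function name `mean` and the fair-die example are illustrative assumptions), the division by $S_1$ is kept so that unnormalized weights also give the correct mean.

```python
from typing import Callable, Sequence

def mean(values: Sequence[float], weights: Sequence[float],
         O: Callable[[float], float] = lambda u: u) -> float:
    """Return <O> = S2 / S1 with S1 = sum_i P(u_i) and S2 = sum_i O(u_i) P(u_i)."""
    S1 = sum(weights)                                    # = 1 for a normalized P(u)
    S2 = sum(O(u) * w for u, w in zip(values, weights))
    return S2 / S1

die = [1, 2, 3, 4, 5, 6]
print(mean(die, [1/6] * 6))                    # <u> for a fair die: 3.5
print(mean(die, [1] * 6, O=lambda u: u**2))    # <u^2> = 91/6, from unnormalized weights
```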

• Some simple mean values that are useful for describing the probability distribution P(u):

1. The mean value, ū. This is a measure of the central value of u about which the various values of $u_i$ are distributed.

• Consider the quantity $\Delta u \equiv u - \bar{u}$ (deviation from the mean). Its mean is:

$\langle \Delta u \rangle = \langle u - \bar{u} \rangle = \bar{u} - \bar{u} = 0$

The mean value of the deviation from the mean is always zero!

• Now, let’s look at $(\Delta u)^2 = (u - \langle u \rangle)^2$ (square of the deviation from the mean). Its mean value is:

$\langle (\Delta u)^2 \rangle = \langle (u - \langle u \rangle)^2 \rangle = \langle u^2 - 2u\bar{u} + \bar{u}^2 \rangle = \langle u^2 \rangle - 2\langle u \rangle\langle u \rangle + \langle u \rangle^2 = \langle u^2 \rangle - \langle u \rangle^2$

• This is called the “Mean Square Deviation” (from the mean). It is also called by several other (equivalent!) names: the Dispersion, the Variance, or the 2nd Moment of P(u) about the mean. $\langle (\Delta u)^2 \rangle$ is a measure of the spread of the u values about the mean ū.

NOTE that $\langle (\Delta u)^2 \rangle = 0$ if & only if $u_i = \bar{u}$ for all i with $P(u_i) \neq 0$.

• It can easily be shown that $\langle (\Delta u)^2 \rangle \geq 0$, or $\langle u^2 \rangle \geq \langle u \rangle^2$, since $\langle (\Delta u)^2 \rangle = \sum_i (u_i - \bar{u})^2 P(u_i)$ is a sum of non-negative terms.
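A small numerical check, using a fair die as an assumed example distribution: the mean deviation ⟨Δu⟩ vanishes, and the direct definition ⟨(u − ū)²⟩ agrees with the shortcut ⟨u²⟩ − ⟨u⟩² (both equal 35/12 ≈ 2.917 for a fair die). The function name is illustrative.

```python
def deviation_stats(values, probs):
    """Return <Δu>, <(u - <u>)^2> and <u^2> - <u>^2 for a normalized P(u)."""
    mu = sum(u * P for u, P in zip(values, probs))
    mean_dev = sum((u - mu) * P for u, P in zip(values, probs))       # should be 0
    direct = sum((u - mu) ** 2 * P for u, P in zip(values, probs))    # definition
    shortcut = sum(u * u * P for u, P in zip(values, probs)) - mu**2  # <u^2> - <u>^2
    return mean_dev, direct, shortcut

die = [1, 2, 3, 4, 5, 6]
print(deviation_stats(die, [1/6] * 6))   # approximately (0, 35/12, 35/12), up to rounding
```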

• We could also define the nth moment of P(u) about the mean:

$\langle (\Delta u)^n \rangle \equiv \langle (u - \langle u \rangle)^n \rangle$

• This is rarely used beyond n = 2, and almost never beyond n = 3 or 4.

• NOTE: A knowledge of the probability distribution function P(u) gives complete information about the distribution of the values of u. But a knowledge of only a few moments, like knowing just ū & $\langle (\Delta u)^2 \rangle$, implies only partial, though useful, knowledge of the distribution. A knowledge of only some moments is not enough to uniquely determine P(u).

Math Theorem

• In order to uniquely determine a distribution P(u), we need to know ALL of its moments, that is, the moments for n = 0, 1, 2, 3, ….

Section 1.4: Calculation of Mean Values for the Random Walk Problem

• Also we’ll discuss a few math “tricks” for doing discrete sums!

• We’ve found: The probability in N steps of making $n_1$ to the right & $n_2 = N - n_1$ to the left is the Binomial Distribution:

$W_N(n_1) = \frac{N!}{n_1!\,n_2!}\, p^{n_1} q^{n_2}$

p = the probability of a step to the right, q = 1 − p = the probability of a step to the left.

• First, let’s verify normalization: is $\sum_{n_1=0}^{N} W_N(n_1) = 1$?

• Recall the binomial expansion:

$(p+q)^N = \sum_{n_1=0}^{N} \frac{N!}{n_1!\,n_2!}\, p^{n_1} q^{n_2} = \sum_{n_1=0}^{N} W_N(n_1)$

• But (p + q) = 1, so $(p+q)^N = 1$ &

$\sum_{n_1=0}^{N} W_N(n_1) = 1$

• Question 1: What is the mean number of steps to the right?

$\langle n_1 \rangle \equiv \sum_{n_1=0}^{N} n_1 W_N(n_1) = \sum_{n_1=0}^{N} n_1 \frac{N!}{n_1!\,(N-n_1)!}\, p^{n_1} q^{N-n_1}$   (1)

• We can do this sum by looking it up in a table OR we can use a “trick” as follows. The following is a general procedure which usually works, even if it doesn’t always have mathematical “rigor”.

• Temporarily, let’s treat p & q as “arbitrary”, continuous variables, ignoring the fact that p + q = 1.

• NOTE that, if p is a continuous variable, then we clearly have:

$n_1 p^{n_1} \equiv p \frac{\partial}{\partial p}\left(p^{n_1}\right)$

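• Now, use this in (1) (interchanging the sum & the derivative):

$\langle n_1 \rangle = \sum_{n_1=0}^{N} \frac{N!}{n_1!\,(N-n_1)!}\, n_1 p^{n_1} q^{n_2} = \sum_{n_1=0}^{N} \frac{N!}{n_1!\,(N-n_1)!} \left[p \frac{\partial}{\partial p}\left(p^{n_1}\right)\right] q^{n_2}$

$= p \frac{\partial}{\partial p} \sum_{n_1=0}^{N} \frac{N!}{n_1!\,(N-n_1)!}\, p^{n_1} q^{N-n_1} = p \frac{\partial}{\partial p} (p+q)^N = pN(p+q)^{N-1}$

But, for our special case, (p + q) = 1, so $(p+q)^{N-1} = 1$, and

$\langle n_1 \rangle = Np$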

• Summary: The mean number of steps to the right is:

$\langle n_1 \rangle = Np$

• We might have guessed this! Similarly, we can also easily show that the mean number of steps to the left is:

$\langle n_2 \rangle = Nq$

• Of course, $\langle n_1 \rangle + \langle n_2 \rangle = N(p+q) = N$, as it should!

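• Question 2: What is the mean displacement, $\langle x \rangle = \langle m \rangle \ell$?

Clearly, $m = n_1 - n_2$, so $\langle m \rangle = \langle n_1 \rangle - \langle n_2 \rangle = N(p - q)$, and $\langle x \rangle = N(p - q)\ell$.

So, if p = q = ½, $\langle m \rangle = 0$, and

$\langle x \rangle = \langle m \rangle \ell = 0$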
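The results ⟨n₁⟩ = Np, ⟨n₂⟩ = Nq and ⟨m⟩ = N(p − q) can be checked by summing directly over the binomial distribution instead of using the derivative trick. A Python sketch, with N = 50 and p = 0.3 chosen arbitrarily (so Np = 15, Nq = 35, N(p − q) = −20); the function name is illustrative.

```python
from math import comb

def binomial_means(N: int, p: float):
    """Exact <n1>, <n2>, <m> by summing over the binomial distribution W_N(n1)."""
    q = 1.0 - p
    W = [comb(N, n1) * p**n1 * q**(N - n1) for n1 in range(N + 1)]
    mean_n1 = sum(n1 * w for n1, w in enumerate(W))
    mean_n2 = sum((N - n1) * w for n1, w in enumerate(W))      # n2 = N - n1
    mean_m = sum((2 * n1 - N) * w for n1, w in enumerate(W))   # m = n1 - n2 = 2 n1 - N
    return mean_n1, mean_n2, mean_m

print(binomial_means(50, 0.3))   # compare with Np = 15, Nq = 35, N(p - q) = -20
```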

• Question 3: What is the dispersion (or variance) $\langle (\Delta n_1)^2 \rangle = \langle (n_1 - \langle n_1 \rangle)^2 \rangle$ in the number of steps to the right? That is, what is the spread in $n_1$ values about $\langle n_1 \rangle$?

• Our general discussion has shown that:

$\langle (\Delta n_1)^2 \rangle = \langle n_1^2 \rangle - \langle n_1 \rangle^2$

Also, we’ve just seen that $\langle n_1 \rangle = Np$. So, we first need to calculate the quantity $\langle n_1^2 \rangle$:

$\langle n_1^2 \rangle = \sum_{n_1=0}^{N} n_1^2\, W_N(n_1) = \sum_{n_1=0}^{N} n_1^2 \frac{N!}{n_1!\,(N-n_1)!}\, p^{n_1} q^{N-n_1}$   (2)

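• Use a similar “trick” as we did before & note that:

$n_1^2\, p^{n_1} \equiv \left[p \frac{\partial}{\partial p}\right]^2 p^{n_1}$

• After algebra (in the book) & using p + q = 1, we find:

$\left[p \frac{\partial}{\partial p}\right]^2 (p+q)^N = Np(p+q)^{N-1} + N(N-1)p^2(p+q)^{N-2} = Np + N(N-1)p^2$, so

$\langle n_1^2 \rangle = (Np)^2 + Np(1-p) = (Np)^2 + Npq = \langle n_1 \rangle^2 + Npq$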

• So, finally, using $\langle (\Delta n_1)^2 \rangle = \langle n_1^2 \rangle - \langle n_1 \rangle^2$:

$\langle (\Delta n_1)^2 \rangle = Npq$

• This is the dispersion or variance of the binomial distribution.
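Similarly, a direct sum confirms ⟨n₁²⟩ = (Np)² + Npq and ⟨(Δn₁)²⟩ = Npq. In this sketch, N = 50 and p = 0.3 are arbitrary choices, giving ⟨n₁²⟩ = 225 + 10.5 = 235.5 and Npq = 10.5.

```python
from math import comb

def binomial_second_moments(N: int, p: float):
    """Exact <n1^2> and <(Δn1)^2> = <n1^2> - <n1>^2 from W_N(n1)."""
    q = 1.0 - p
    W = [comb(N, n1) * p**n1 * q**(N - n1) for n1 in range(N + 1)]
    mean = sum(n1 * w for n1, w in enumerate(W))
    mean_sq = sum(n1 * n1 * w for n1, w in enumerate(W))
    return mean_sq, mean_sq - mean**2

N, p = 50, 0.3
mean_sq, var = binomial_second_moments(N, p)
print(mean_sq, (N * p)**2 + N * p * (1 - p))   # both approximately 235.5
print(var, N * p * (1 - p))                   # both approximately 10.5
```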

• The root mean square (rms) deviation from the mean is defined (in general) as:

$\Delta^* n_1 \equiv \left[\langle (\Delta n_1)^2 \rangle\right]^{1/2}$

For the binomial distribution this is

$\Delta^* n_1 = (Npq)^{1/2}$

This is the distribution width.

• Again note that $\langle n_1 \rangle = Np$. So, the relative width of the distribution is:

$\frac{\Delta^* n_1}{\langle n_1 \rangle} = \frac{(Npq)^{1/2}}{Np} = \frac{q^{1/2}}{(pN)^{1/2}}$

If p = q, this is: $\frac{\Delta^* n_1}{\langle n_1 \rangle} = \frac{1}{N^{1/2}} = N^{-1/2}$

As N increases, the mean value increases ∝ N, but the relative width decreases ∝ $N^{-1/2}$.
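To see the $N^{-1/2}$ scaling numerically, this sketch evaluates the relative width $\sqrt{Npq}/(Np)$ for p = ½ and a few values of N, up to the macroscopic $N \sim 10^{23}$ mentioned in the introductory remarks. The function name is illustrative.

```python
from math import sqrt

def relative_width(N: float, p: float) -> float:
    """Relative width Δ*n1 / <n1> = sqrt(N p q) / (N p) of the binomial distribution."""
    q = 1.0 - p
    return sqrt(N * p * q) / (N * p)

for N in (10, 100, 10_000, 10**6, 10**23):
    print(f"N = {N:.0e}: relative width = {relative_width(N, 0.5):.1e}")
```

For $N \sim 10^{23}$ the relative width is of order $10^{-12}$, which is why macroscopic averages look sharp.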

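• Question 4: What is the dispersion $\langle (\Delta m)^2 \rangle = \langle (m - \langle m \rangle)^2 \rangle$ in the net displacement x = mℓ? (What is the spread in m values about ⟨m⟩?)

• We had $m = n_1 - n_2 = 2n_1 - N$. So, $\langle m \rangle = 2\langle n_1 \rangle - N$, and

$\Delta m = m - \langle m \rangle = (2n_1 - N) - (2\langle n_1 \rangle - N) = 2(n_1 - \langle n_1 \rangle) = 2\,\Delta n_1$

$(\Delta m)^2 = 4(\Delta n_1)^2$. So, $\langle (\Delta m)^2 \rangle = 4\langle (\Delta n_1)^2 \rangle$

• Using $\langle (\Delta n_1)^2 \rangle = Npq$, this becomes:

$\langle (\Delta m)^2 \rangle = 4Npq$

• If p = q = ½, $\langle (\Delta m)^2 \rangle = N$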
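These ensemble averages can also be estimated by simulation, in the spirit of the ensemble picture from Section 1.1: generate many independent N-step walks and compute the sample mean and variance of m. A Monte Carlo sketch in Python, assuming N = 100, p = ½ and 20,000 walkers (arbitrary choices) and a fixed seed; the sample values should scatter around ⟨m⟩ = 0 and ⟨(Δm)²⟩ = 4Npq = 100.

```python
import random

def simulate_walks(N: int, p: float, walkers: int, seed: int = 1):
    """Sample <m> and <(Δm)^2> over an ensemble of independent N-step walks."""
    rng = random.Random(seed)
    ms = []
    for _ in range(walkers):
        n1 = sum(rng.random() < p for _ in range(N))   # number of steps to the right
        ms.append(2 * n1 - N)                          # m = n1 - n2 = 2 n1 - N
    mean_m = sum(ms) / walkers
    var_m = sum((m - mean_m) ** 2 for m in ms) / walkers
    return mean_m, var_m

N, p = 100, 0.5
mean_m, var_m = simulate_walks(N, p, walkers=20_000)
print(f"<m> ~ {mean_m:.2f} (exact {N * (2 * p - 1):.0f}),"
      f" <(Δm)^2> ~ {var_m:.1f} (exact {4 * N * p * (1 - p):.0f})")
```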

Summary: 1 Dimensional Random Walk Problem

• Probability Distribution is Binomial: $W_N(n_1) = \frac{N!}{n_1!\,n_2!}\, p^{n_1} q^{n_2}$

• Mean number of steps to the right: $\langle n_1 \rangle = Np$

• Dispersion in $n_1$: $\langle (\Delta n_1)^2 \rangle = Npq$

• Relative width: $\frac{\Delta^* n_1}{\langle n_1 \rangle} = \frac{q^{1/2}}{(pN)^{1/2}}$. For N increasing, the mean value increases ∝ N, while the relative width decreases ∝ $N^{-1/2}$.

Some General Comments about the Binomial Distribution

The Binomial Distribution applies to cases where there are only two possible outcomes: head or tail, success or failure, defective item or good item, etc.

Requirements justifying the use of the Binomial Distribution

1. The experiment must consist of n identical trials.

2. Each trial must result in only one of two possible outcomes.

3. The outcomes of the trials must be statistically independent.

4. All trials must have the same probability for a particular outcome.

Common Notation for the Binomial Distribution

r items of one type and (n − r) of a second type can be arranged in

${}_nC_r = \frac{n!}{r!\,(n-r)!}$

ways. Here, ${}_nC_r$ is called the binomial coefficient.

In this notation, the probability distribution can be written:

$W_n(r) = {}_nC_r\, p^r (1-p)^{n-r}$

≡ probability of finding r items of one type & n − r items of the other type, where p = probability of a given item being of the first type.

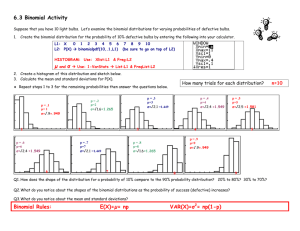

Binomial Distribution Example

Problem: A sample of n = 11 electric bulbs is drawn every day from those manufactured at a plant. Whether a given bulb is defective is random and independent of previous results. The probability that a bulb is defective is p = 0.04.

1. What is the probability of finding exactly three defective bulbs in a sample? (Probability that r = 3?)

2. What is the probability of finding three or more defective bulbs in a sample? (Probability that r ≥ 3?)

Binomial Distribution, n = 11

| No. of defective bulbs, r | Probability ${}_{11}C_r\, p^r (1-p)^{n-r}$ |
|---|---|
| 0 | ${}_{11}C_0 (0.04)^0 (0.96)^{11} = 0.6382$ |
| 1 | ${}_{11}C_1 (0.04)^1 (0.96)^{10} = 0.2925$ |
| 2 | ${}_{11}C_2 (0.04)^2 (0.96)^{9} = 0.0609$ |
| 3 | ${}_{11}C_3 (0.04)^3 (0.96)^{8} = 0.0076$ |

Question 1: Probability of finding exactly three defective bulbs in a sample?

P(r = 3 defective bulbs) = $W_{11}(r = 3) = 0.0076$

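Question 2: Probability of finding three or more defective bulbs in a sample?

P(r ≥ 3 defective bulbs) = $1 - W_{11}(r = 0) - W_{11}(r = 1) - W_{11}(r = 2) = 1 - 0.638239 - 0.292526 - 0.060943 \approx 0.0083$

(Subtracting the rounded four-digit values, 1 − 0.6382 − 0.2925 − 0.0609, gives 0.0084; the difference is a rounding artifact.)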
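Both answers can be reproduced with a few lines of Python using the standard-library `math.comb`; the variable names are illustrative. Working at full precision gives P(r ≥ 3) ≈ 0.0083, which also equals the sum of the table entries for r = 3, 4, 5, ….

```python
from math import comb

n, p = 11, 0.04                     # sample size and defect probability from the problem
W = [comb(n, r) * p**r * (1 - p)**(n - r) for r in range(n + 1)]

p_exactly_3 = W[3]
p_at_least_3 = 1 - (W[0] + W[1] + W[2])   # complement of r <= 2

print(f"P(r = 3)  = {p_exactly_3:.4f}")    # 0.0076
print(f"P(r >= 3) = {p_at_least_3:.4f}")   # 0.0083
```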

Binomial Distribution, Same Problem, Larger r

| No. of defective bulbs, r | Probability ${}_{11}C_r\, p^r (1-p)^{n-r}$ |
|---|---|
| 0 | ${}_{11}C_0 (0.04)^0 (0.96)^{11} = 0.638239$ |
| 1 | ${}_{11}C_1 (0.04)^1 (0.96)^{10} = 0.292526$ |
| 2 | ${}_{11}C_2 (0.04)^2 (0.96)^{9} = 0.060943$ |
| 3 | ${}_{11}C_3 (0.04)^3 (0.96)^{8} = 0.007618$ |
| 4 | ${}_{11}C_4 (0.04)^4 (0.96)^{7} = 0.000635$ |
| 5 | ${}_{11}C_5 (0.04)^5 (0.96)^{6} = 0.000037$ |

[Figure: Probability Distribution of Defective Items. Binomial Distribution, n = 11, p = 0.04; probability (0.00 to 0.70) vs. number of defective items (0 to 11).]

[Figure: Probability Distribution of Good Items. Binomial Distribution, n = 11, q = 0.96; probability (0.00 to 0.70) vs. number of good items (0 to 11).]

[Figure: Binomial distribution with n = 10, p = 0.5; probability vs. outcome (0 to 10), with maximum value 0.2461 at 5.]

“Wandering Photon” (animation found on the Internet!)

The “Wandering Photon”: the photon

• Walks straight for a random length

• Stops with probability γ

• Turns in a random direction with probability (1 − γ)

One Dimension

After a random length x: with probability γ, stop; with probability (1 − γ)/2, continue in each direction.

P(photon absorbed at x)?

$q(x) = \frac{\lambda}{2} e^{-\lambda |x|}$: pdf of the length of the first step

$1/\lambda$ is the average step length

γ is the absorption probability

$P(\text{photon absorbed at } x) = f(|x|, \gamma, \lambda) = \frac{\sqrt{\gamma}\,\lambda}{2}\, e^{-\sqrt{\gamma}\,\lambda |x|}$
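The 1D result can be checked with a Monte Carlo sketch. Assumptions, read off the description of the model: each step length is exponentially distributed with mean 1/λ, each step goes left or right with equal probability, and after every step the photon is absorbed with probability γ. For the two-sided exponential $f(x) = \tfrac{a}{2}e^{-a|x|}$ with $a = \sqrt{\gamma}\,\lambda$, the mean absorption distance is ⟨|x|⟩ = 1/a, so with the arbitrary choice γ = 0.25, λ = 1 the sample average of |x| should come out close to 2.

```python
import math
import random

def absorption_point(gamma: float, lam: float, rng: random.Random) -> float:
    """Simulate one photon in 1D and return the position where it is absorbed."""
    x = 0.0
    while True:
        step = rng.expovariate(lam)                   # step length, mean 1/lam
        x += step if rng.random() < 0.5 else -step    # left or right with equal probability
        if rng.random() < gamma:                      # absorbed with probability gamma
            return x
        # otherwise (probability 1 - gamma) take another step in a random direction

gamma, lam = 0.25, 1.0                                # illustrative parameter choices
rng = random.Random(42)                               # fixed seed for reproducibility
xs = [absorption_point(gamma, lam, rng) for _ in range(100_000)]

a = math.sqrt(gamma) * lam                            # predicted decay rate sqrt(gamma)*lambda
mean_abs = sum(abs(x) for x in xs) / len(xs)
print(f"simulated <|x|> = {mean_abs:.3f}, predicted 1/a = {1 / a:.3f}")
```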

The sleepy drunk in higher dimensions

After a random length: with probability γ, stop; with probability (1 − γ), pick a random direction.

P(absorbed at r) = f(r, γ, λ)