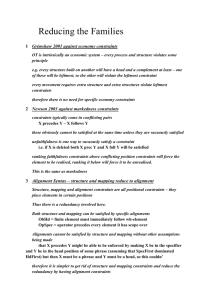

----------------------------------------------------------------------------------
A Statistical MT Tutorial Workbook
