WikiOnto: A System For Semi-automatic Extraction And Modeling Of Ontologies


2009 IEEE International Conference on Semantic Computing

WikiOnto: A System For Semi-automatic Extraction And Modeling Of Ontologies Using Wikipedia XML Corpus

Lakshman Jayaratne

University of Colombo School of Computing

Colombo, Sri Lanka

klj@ucsc.cmb.ac.lk

Lalindra De Silva

University of Colombo School of Computing

Colombo, Sri Lanka

lalindra84@gmail.com

domains as the sources for building ontologies1 .

The research efforts and the applications that resulted

from those research in the area of ontology development,

extraction and ontology learning are many and diverse.

With the light of these attempts, section 2 takes a look at

related prior research to construct topic ontologies using

various sources as input. In section 3, we describe our

source - the Wikipedia XML Corpus - and the structure of

the documents in the corpus, along with recognition as to

why it is an ideal source for such research. The framework

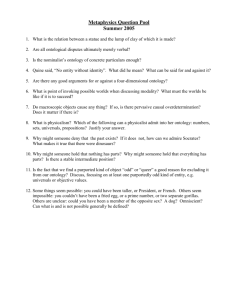

of our system consists of three major layers (Figure 1).

As such, section 4 looks at in detail how this three-tiered

framework is implemented. Finally, we will present the

ontology development environment with both the current and

proposed facilities that are/will be available to the users of

the system.

Abstract—This paper introduces WikiOnto: a system that

assists in the extraction and modeling of topic ontologies in a

semi-automatic manner using a preprocessed document corpus

of one of the largest knowledge bases in the world - the

Wikipedia. Based on the Wikipedia XML Corpus, we present a

three-tiered framework for extracting topic ontologies in quick

time and a modeling environment to refine these ontologies.

Using Natural Language Processing (NLP) and other Machine

Learning (ML) techniques along with a very rich document

corpus, this system proposes a solution to a task that is

generally considered extremely cumbersome. The initial results

of the prototype suggest strong potential of the system to

become highly successful in ontology extraction and modeling

and also inspire further research on extracting ontologies from

other semi-structured document corpora as well.

Keywords-Ontology, Wikipedia XML Corpus, Ontology

Modeling, Ontology Extraction

II. R ELATED W ORKS

I. I NTRODUCTION

The research efforts to extract ontologies have spanned

over multifarious domains in terms of the input sources they

use. A large number of these researches have focused on

using textual data as their input while a lesser number of

research have focused on semi-structured data and relational

databases. [4] presents a method in which the words that

appear in the source texts are ontologically annotated using

the WordNet [5] as the lexical database. The authors have

primarily worked on assigning verbs that appear in source

articles into ontological classes. Several other researchers

have utilized the XML schemas as a starting point for the

creation of ontologies. In [6], the authors have used the

ORA-SS (Object-Relationship-Attribute Model for Semistructured Data) model to initially determine the nature of

the relationship between the elements of the XML schema

and subsequently to create a genetic model for organizing

the ontology. [7] gives a detailed survey of some of the work

that has been carried out in terms of learning ontologies for

the semantic web. [8] presents a framework for ontology

With the growing issue of information overload in the

present world and with the emergence of the semantic

web, the importance of ontologies has come under the

spotlight in recent years. Ontologies, commonly defined as

“an explicit specification of a conceptualization” provides

an agreed upon representation of concepts of a domain for

easier knowledge management [1]. However, the modeling

of ontologies is generally considered to be a painstaking

task, often requiring the knowledge and expertise of an

ontology engineer who is well versed in the concerned

domain.

Previous efforts in building ontology development environments were successful in their comprehensiveness of

the tools they provided to the user, but it still involved a

lot of manual work when building ontologies using these

environments [2]. Additionally, most of the efforts to extract

ontologies were focused on using textual data as their

sources. As such, in this research, we have looked into extracting ontologies and modeling them using the Wikipedia

XML Corpus [3] as the source, with a futuristic view of

extending this research into using other semi-structured data

978-0-7695-3800-6/09 $26.00 © 2009 IEEE

DOI 10.1109/ICSC.2009.93

1 This work is based on the continuing undergraduate thesis of the first

author titled ‘A Machine Learning Approach To Ontology Extraction and

Evaluation Using Semi-structured Data’

571

extraction for document classification. In this work, the

author has experimented on the Reuters collection and the

Wikipedia database dump as her sources and produces a

methodology in which concepts can be extracted from such

large document bases. Other tools for extracting ontologies

from text such as ASIUM [9], OntoLearn [10] and TextToOnto [11] have also been around. However, the biggest

motivation for our research spans from OntoGen [12]. OntoGen is a tool that enables semi-automatic generation of topic

ontologies using textual data as the initial source. We have

utilized the best features of the OntoGen methodology while

extending our research into the semi-structured data domain.

Consequently, we have prposed to enhance our system with

additional concept extraction mechanisms such as lexico

syntactic pattern matching, which the OntoGen project has

not incorporated.

III. WIKIPEDIA XML CORPUS

Wikipedia is one of the largest knowledge bases in the world and the most popular reference work on the current World Wide Web. It has inspired many people and projects in diverse fields, including knowledge management, information retrieval and ontology development. The reliability of Wikipedia articles is often criticized, because anyone in the world can access and modify them. However, for the most part, Wikipedia is considered reliable enough to be used in non-critical applications.

The Wikipedia XML Corpus is a collection of documents derived from Wikipedia. It is currently used in a large number of Information Retrieval and Machine Learning tasks in the research community. The corpus contains articles in eight languages, and the latest collection contains approximately 660,000 English articles that correspond to articles in Wikipedia. Each XML document in the corpus is uniquely named (e.g. 12345.xml). Each document has the following uniform element structure (e.g. 3928.xml, representing the article on "Ball"):

<article>

<name id="3928">Ball</name>

<body>

<p>A <emph3>ball</emph3> is a round object that is used most often in <collectionlink xlink:type="simple" xlink:href="26853.xml">sport</collectionlink>s and <collectionlink xlink:type="simple" xlink:href="11970.xml">game</collectionlink>s.</p>

...

<section>

<title>Popular ball games</title>

There are many popular games...

...

<normallist>

<item>

<collectionlink xlink:type="simple" xlink:href="3850.xml">Baseball</collectionlink>

</item>

<item>

<collectionlink xlink:type="simple" xlink:href="3812.xml">Basketball</collectionlink>

</item>

</normallist>

</section>

...

<languagelink lang="cs">M?</languagelink>

<languagelink lang="de">Ball</languagelink>

...

</section>

</body>

</article>

Each document is contained within the ‘article’ tag. Inside it lies a limited number of predefined element tags that correspond to specific relations between text segments in the document. The ‘name’ element carries the title of the article, while the ‘title’ element within each ‘section’ element carries the title of that subsection. The ‘normallist’ elements contain lists of information, while the ‘collectionlink’ and ‘unknownlink’ elements correspond to other articles referred to from within the document.

In addition to the documents, the corpus provides category information that hierarchically organizes the articles into the relevant categories. Using this category information, we developed the initial segment of our system, where the user chooses a number of articles from the domain of interest as the initial input. Selecting a domain-specific document set enables better-targeted and faster ontology creation than using the entire corpus as the input.

IV. WIKIONTO FRAMEWORK

As previously mentioned, the WikiOnto system is implemented in a three-tiered framework. The following sections explain each layer in detail.

A. Concept Extraction Using Document Structure

In this layer, we make use of the structure of the documents to deduce substantial information about the taxonomic

hierarchy of possible concepts in that document. After

careful review of many documents in the corpus, we have

established the following assumptions in extracting concepts

from the documents.

• Word phrases contained within the ‘name’, ‘title’,

‘item’, ‘collectionlink’ and ‘unknownlink’ elements are

proposed as concepts to the user (e.g. in the previous

example of the XML file, the words ‘Ball’, ‘Sport’,

‘Game’, ‘Popular Ball Games’, ‘Baseball’ and ‘Basketball’ are all suggested to the user as concepts)

• The word phrases contained within ‘title’ elements in each of the first-level sections are suggested as sub-concepts of the concept within the ‘name’ element of the document (e.g. ‘Popular Ball Games’ is a sub-concept of the concept ‘Ball’)

• The word phrase contained within a ‘title’ element nested within several ‘section’ elements is suggested as a sub-concept of the ‘title’ concept of the immediately enclosing section

• Any word phrase wrapped inside ‘collectionlink’ or ‘unknownlink’ elements is suggested as a sub-concept of the concept immediately above it in the structure of the XML document. For example, the concepts ‘Baseball’ and ‘Basketball’ are sub-concepts of the concept ‘Popular Ball Games’, while the concepts ‘Sport’ and ‘Game’ are sub-concepts of the concept ‘Ball’.

Figure 1. Design of the WikiOnto System
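The structural rules above can be sketched with Python's standard-library ElementTree parser. This is a minimal illustration under stated assumptions, not the authors' implementation. The function name `extract_concepts` and the handling of plain text inside ‘item’ elements are our assumptions. The trimmed sample document is also ours; we added an `xmlns:xlink` declaration so that the fragment parses on its own. Case normalization and the user-defined word limit are omitted.

```python
import xml.etree.ElementTree as ET

LINK_TAGS = {"collectionlink", "unknownlink"}

# Trimmed version of 3928.xml; the xmlns:xlink declaration is an addition
# (assumed) so that this fragment parses by itself.
SAMPLE = """<article xmlns:xlink="http://www.w3.org/1999/xlink">
<name id="3928">Ball</name>
<body>
<p>A <emph3>ball</emph3> is a round object that is used most often in
<collectionlink xlink:type="simple" xlink:href="26853.xml">sport</collectionlink>s and
<collectionlink xlink:type="simple" xlink:href="11970.xml">game</collectionlink>s.</p>
<section>
<title>Popular ball games</title>
<normallist>
<item><collectionlink xlink:type="simple" xlink:href="3850.xml">Baseball</collectionlink></item>
<item><collectionlink xlink:type="simple" xlink:href="3812.xml">Basketball</collectionlink></item>
</normallist>
</section>
</body>
</article>"""


def extract_concepts(xml_text):
    """Return (concepts, edges); each edge is a (parent, sub-concept) pair."""
    root = ET.fromstring(xml_text)
    name = root.findtext("name").strip()
    concepts, edges = {name}, []

    def add(parent, phrase):
        phrase = (phrase or "").strip()
        if phrase:
            concepts.add(phrase)
            edges.append((parent, phrase))
        return phrase

    def walk(elem, parent):
        for child in elem:
            if child.tag == "section":
                # A section title hangs under the enclosing section (or name).
                title = add(parent, child.findtext("title"))
                walk(child, title or parent)
            elif child.tag in LINK_TAGS:
                # Link text becomes a sub-concept of the nearest concept above.
                add(parent, child.text)
            else:
                if child.tag == "item":
                    add(parent, child.text)  # plain list-item text, if any
                walk(child, parent)

    walk(root.find("body"), name)
    return concepts, edges
```

On the sample, the sketch links ‘sport’ and ‘game’ to ‘Ball’, ‘Popular ball games’ to ‘Ball’, and ‘Baseball’ and ‘Basketball’ to ‘Popular ball games’. This matches the examples given in the rules.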

When the system is initialized, the user can define the maximum number of words that a potential concept may contain. The user then supplies the documents from which concepts are to be extracted. These can be chosen with the domain-specific document selector explained earlier, chosen manually, or taken from the entire corpus². The system then iterates through all the documents to extract the potential concepts and their relationships according to the assumptions listed earlier. Section V explains how the user can refine these relationships in the ontology modeling environment after examining the concepts, for example by labeling them as hyponymic, hypernymic or meronymic relations.

² Due to computer resource constraints, the system is yet to be tested with the full corpus (approx. 660,000 articles) as input

To validate the extracted concepts, we have incorporated WordNet [5] into our system. Once all the concepts have been extracted according to the above assumptions, each concept is matched morphologically, word by word, against WordNet. Some words cannot be matched, for example foreign words, person names and place names. If even a single word in a word phrase cannot be matched, the whole phrase is withheld from the concept collection. It is presented to the user at the end of the processing stage, along with the other withheld phrases.

The user has full discretion to decide whether these withheld concepts should be added to the concept collection.
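The validation rule can be sketched as follows. To keep the example self-contained, it does not call WordNet. `LEXICON` is a hypothetical stand-in for WordNet's base forms, and `base_forms` is a crude suffix stripper assumed in place of WordNet's own morphological processing. The `max_words` parameter mirrors the user-defined maximum phrase length set at initialization.

```python
# Hypothetical stand-in for WordNet: a tiny set of base forms (assumption).
LEXICON = {"ball", "sport", "game", "popular", "baseball", "basketball"}


def base_forms(word):
    """Candidate base forms of a word. This crude suffix stripping is an
    assumption; WordNet's morphological processing covers far more cases."""
    w = word.lower()
    forms = [w]
    if w.endswith("ies"):
        forms.append(w[:-3] + "y")
    if w.endswith("es"):
        forms.append(w[:-2])
    if w.endswith("s"):
        forms.append(w[:-1])
    return forms


def in_lexicon(word, lexicon=LEXICON):
    return any(form in lexicon for form in base_forms(word))


def validate_concepts(phrases, max_words=3, lexicon=LEXICON):
    """Split candidate phrases into (accepted, withheld).

    A phrase is withheld if any one of its words fails to match; phrases
    longer than the user-defined maximum are not candidates at all."""
    accepted, withheld = [], []
    for phrase in phrases:
        words = phrase.split()
        if not words or len(words) > max_words:
            continue
        if all(in_lexicon(w, lexicon) for w in words):
            accepted.append(phrase)
        else:
            withheld.append(phrase)  # shown to the user for a decision
    return accepted, withheld
```

With this toy lexicon, "Popular ball games" and "Baseball" are accepted. "Sri Lanka" is withheld for the user to decide on, which mirrors the place-name case described in the text.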

At the end of this processing stage, the user can query for a concept in the collection and start building an ontology with the queried concept as the root. The system automatically populates the first level of concepts: initially, the ontology includes only the concepts that have a direct sub-concept relationship with the root. At the user's discretion, the system then populates further levels, treating each first-level concept as a parent and adding its child concepts to it.
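The level-by-level population can be sketched as a bounded expansion over an index of (parent, sub-concept) pairs. The names `build_children` and `populate` and the sample `EDGES` are hypothetical. Each extra level corresponds to the user asking the system to treat the concepts of the previous level as parents.

```python
from collections import defaultdict

# Hypothetical (parent, sub-concept) pairs, e.g. from the structural layer.
EDGES = [("Ball", "sport"), ("Ball", "game"), ("Ball", "Popular ball games"),
         ("Popular ball games", "Baseball"), ("Popular ball games", "Basketball")]


def build_children(edges):
    """Index sub-concept relations by parent, ignoring duplicate pairs."""
    children = defaultdict(list)
    for parent, child in edges:
        if child not in children[parent]:
            children[parent].append(child)
    return children


def populate(root, children, levels=1):
    """Nested dict holding `levels` levels of sub-concepts below `root`.

    levels=1 gives only the first level; each additional level expands
    every concept of the previous level as a parent."""
    if levels == 0:
        return {}
    return {c: populate(c, children, levels - 1) for c in children.get(root, [])}
```

Bounding the depth also keeps the expansion finite if the extracted relations happen to contain a cycle.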

B. Concepts From Keyword Clustering

As the second layer of our framework, we have implemented a keyword clustering method to identify the concepts related to a given concept. There are several well-documented approaches and metrics for extracting keywords and measuring their relevance to documents. We use the well-known TFIDF measure [13], which is generally accepted as a good measure of the relevance of a term in a document. Each document is represented in the vector-space model using these keywords. The TFIDF measure is the product of the Term Frequency (TF) and the Inverse Document Frequency (IDF), defined as follows:

$$\mathrm{TF}_{i,d} = \frac{n_{i,d}}{\sum_{k} n_{k,d}}$$

where $n_{i,d}$ is the number of times term $t_i$ appears in document $d$. The denominator sums these counts over all distinct terms $t_k$ of $d$, so it is the total number of words in $d$.

$$\mathrm{IDF}_{i} = \log \frac{|D|}{|\{d : t_i \in d\}|}$$

where $|D|$ is the number of documents in the corpus and $|\{d : t_i \in d\}|$ is the number of documents in which $t_i$ appears.

By imposing a threshold value on these TFIDF scores, we were able to identify the keywords that correspond to a given document.

With this vector-space representation of each document, the user can then group the documents into a desired number of clusters. This is achieved through the k-means clustering algorithm [14] described below.

k-means Algorithm:

1) Choose k cluster centers randomly from the data points

2) Assign each data point to the closest cluster center based on the similarity measure

3) Re-compute the cluster centers

4) If the assignment of data points has changed (i.e. the process has not converged), repeat from step 2

The similarity measure used in our approach is the cosine similarity [15] between two vectors. If A and B are two vectors in our vector space, it is defined as follows:

$$\text{Cosine Similarity} = \cos(\theta) = \frac{A \cdot B}{\|A\|\,\|B\|}$$

Once these clusters have been formed, the user selects a concept in the raw ontology that is taking shape, and the system provides suggestions for that concept. Concepts already added as its sub-concepts are excluded. To do this, the system looks for the cluster that contains the highest TFIDF value for that word. If a concept consists of more than one word, the system looks for all the clusters in which each word is located. It then suggests keywords using two criteria:

1) Suggest the individual keywords of the document vectors of that cluster

2) Suggest the highest-valued keywords in the centroid vector of that cluster. The centroid vector is the single vector obtained by summing the respective elements of all the vectors in the cluster
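The keyword-clustering steps of this layer (TFIDF weighting, k-means with cosine similarity, and the two suggestion criteria) can be sketched in Python with only the standard library. This is a minimal illustration under stated assumptions, not the authors' C# implementation. It assumes the following:

* Documents are pre-tokenized lists with stop words already removed.
* Vectors are sparse dicts, and IDF uses the natural logarithm.
* `init` is a hypothetical optional argument of `kmeans`, added so that runs are reproducible; it fixes the starting centres instead of drawing them at random.
* `suggest` handles only single-word concepts. It reads "the cluster that contains the highest TFIDF value for that word" as the cluster of the document with the highest weight for the word.

```python
import math
import random
from collections import Counter


def tfidf_vectors(docs):
    """docs: list of token lists (stop words already removed).
    Returns one sparse {term: TFIDF} dict per document."""
    df = Counter()
    for tokens in docs:
        df.update(set(tokens))            # document frequency of each term
    n_docs = len(docs)
    vectors = []
    for tokens in docs:
        counts = Counter(tokens)
        total = sum(counts.values())      # sum over k of n_{k,d}
        vectors.append({t: (c / total) * math.log(n_docs / df[t])
                        for t, c in counts.items()})
    return vectors


def cosine(a, b):
    """cos(theta) = A.B / (||A|| ||B||) for sparse vectors."""
    dot = sum(w * b.get(t, 0.0) for t, w in a.items())
    norm_a = math.sqrt(sum(w * w for w in a.values()))
    norm_b = math.sqrt(sum(w * w for w in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def centroid(vectors):
    """Element-wise sum of the vectors, as the centroid is defined above."""
    total = Counter()
    for v in vectors:
        total.update(v)
    return dict(total)


def kmeans(vectors, k, init=None, seed=0, max_iter=100):
    """k-means with cosine similarity; returns a cluster label per vector."""
    if init is None:                      # step 1: random initial centres
        init = random.Random(seed).sample(range(len(vectors)), k)
    centers = [dict(vectors[i]) for i in init]
    labels = None
    for _ in range(max_iter):
        # step 2: assign each vector to its most similar centre
        new = [max(range(k), key=lambda c: cosine(v, centers[c]))
               for v in vectors]
        if new == labels:                 # step 4: stop once converged
            break
        labels = new
        for c in range(k):                # step 3: recompute the centres
            members = [v for v, lab in zip(vectors, labels) if lab == c]
            if members:
                centers[c] = centroid(members)
    return labels


def suggest(word, vectors, labels, top_n=3, exclude=()):
    """Return (criterion 1, criterion 2) suggestions for a one-word concept."""
    best = max(range(len(vectors)), key=lambda i: vectors[i].get(word, 0.0))
    members = [v for v, lab in zip(vectors, labels) if lab == labels[best]]
    skip = {word, *exclude}
    keywords = sorted({t for v in members for t in v} - skip)
    ranked = sorted(centroid(members).items(), key=lambda kv: -kv[1])
    top = [t for t, _ in ranked if t not in skip][:top_n]
    return keywords, top
```

Cosine similarity ignores vector length, so using the summed centroid rather than the mean changes neither the cluster assignments nor the ranking of centroid keywords. Random initialisation can converge to a poor clustering on small inputs, which is why the sketch exposes `init`.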

C. Concepts From Sentence Pattern Matching

In enhancing the accuracy and the comprehensiveness of

the ontologies constructed through our system, we have

proposed to implement a sentence analyzing module to

work alongside the previous two layers. Several research

attempts at identifying lexical patterns within text files have

been proposed and we intend to utilize the best of these

methods to enable the users of our system to enhance

the ontologies they are constructing. A popular method for

acquiring hyponymic relations was presented by [16] and we

have begun to implement a similar approach in our system.

The motivation for such syntactic processing comes from the

fact that there is a significant number of common sentence

patterns appearing in the Wikipedia XML Corpus and this

TFIDFi,d = TFi,d ×IDFi

In selecting keywords in the document collection, we

have defined and removed all the ‘stopwords’ (words that

appear commonly in sentences and have little meaning with

regard to the ontologies, such as prepositions, definite and

indefinite articles, etc) and extracted all the distinct words in

the document collection to build the vector-space model for

each document. Afterwards, calculating the TFIDF measure

for each and every word in the word list within the individual

574

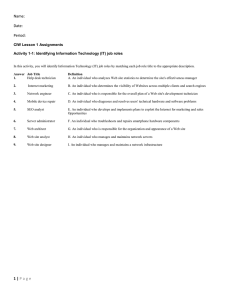

Figure 2.

WikiOnto Ontology Construction Environment

will allow the system to provide better suggestions to the

user in constructing the ontology.

In implementing this layer, we will incorporate a Part-Of-Speech (POS) tagger and use the POS-tagged text to extract relations as follows.

Sentences like "A ball is a round object that is used most often ...", from the example XML file earlier, are evidence of common sentence patterns in the corpus. Here, "A ball is a round object" matches the pattern "{NP} is a {NP}" in the POS-tagged text, where ‘NP’ refers to a noun phrase. Other patterns, such as "{NP} including {NP,}* and {NP}" (e.g. "All third world countries including Sri Lanka, India and Pakistan"), signal fairly obvious relations. They should enable us to make more comprehensive suggestions to the user. Again, these candidate word phrases will be validated against WordNet, and the decision to add them to the ontology will lie entirely at the user's discretion.
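The two patterns can be sketched in Python over text that has already been POS-tagged in the common `word/TAG` notation with Penn Treebank tags. The tagger itself is not shown. The noun-phrase chunker and the function names are our assumptions, not the approach of [16] or of WikiOnto. So is the looser handling of the list pattern, which accepts any comma- or ‘and’-separated run of noun phrases.

```python
def chunk(tagged):
    """Group 'word/TAG' tokens into noun phrases (JJ/CD modifiers followed
    by one or more NN* tokens) and single non-NP tokens. A deliberately
    simple chunker, assumed for illustration."""
    tokens = [tok.rsplit("/", 1) for tok in tagged.split()]
    items, i, n = [], 0, len(tokens)
    while i < n:
        j = i
        while j < n and tokens[j][1] in ("JJ", "CD"):
            j += 1
        k = j
        while k < n and tokens[k][1].startswith("NN"):
            k += 1
        if k > j:                              # found at least one noun
            items.append(("NP", " ".join(w for w, _ in tokens[i:k])))
            i = k
        else:
            word, tag = tokens[i]
            items.append((tag, word.lower()))
            i += 1
    return items


def hearst_relations(tagged):
    """Return (hyponym, hypernym) pairs for '{NP} is a {NP}' and a loose
    version of '{NP} including {NP,}* and {NP}'."""
    items = chunk(tagged)
    pairs = []
    for i, (tag, text) in enumerate(items):
        if tag != "NP":
            continue
        rest = items[i + 1:]
        # Pattern 1: {NP} is a|an {NP}
        if (len(rest) >= 3 and rest[0] == ("VBZ", "is")
                and rest[1] in (("DT", "a"), ("DT", "an"))
                and rest[2][0] == "NP"):
            pairs.append((text, rest[2][1]))
        # Pattern 2: {NP} including {NP} (, {NP})* (and {NP})
        if rest and rest[0] == ("VBG", "including"):
            j = 1
            while j < len(rest) and rest[j][0] == "NP":
                pairs.append((rest[j][1], text))
                if j + 1 < len(rest) and rest[j + 1][1] in (",", "and"):
                    j += 2
                else:
                    break
    return pairs
```

For "A/DT ball/NN is/VBZ a/DT round/JJ object/NN", the sketch yields the single pair ("ball", "round object"), i.e. ‘ball’ as a hyponym of ‘round object’. Each such pair would still go through the WordNet check and the user's decision described above.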

V. THE GRAPHICAL MODELING ENVIRONMENT

We have used C# as our language of choice for this system and Piccolo2D for the visualization of the ontology editor. The user can add and delete concepts in the taxonomic hierarchy and can rename relations and concepts at their discretion (Figure 2).

At the beginning of the ontology construction process, the user can query for any concept, whether or not it exists in the initially extracted concept collection. The system generates the OWL definition of the ontology being built, and the user can export the ontology in this standard format.

VI. EVALUATION AND FUTURE WORK

Because the project is still ongoing, a thorough evaluation of the system is not yet possible. However, the initial prototype was tested among undergraduates and several faculty members of the authors' university. We received encouraging feedback and several requests for additional features. Moreover, since the generated ontology depends largely on the user's choices, a standard evaluation mechanism seems impractical. The best-known evaluation methods seem inappropriate given the system's dependence on the user. These include comparing with a gold standard (an ontology accepted as defining the targeted domain accurately) and testing the ontology in an application. Since our system is a facilitator rather than a fully fledged ontology generator, we plan to evaluate it through user trials towards the end of the project.

As this project has progressed, our expectations have grown for the applications that could use a system that extracts topic ontologies from semi-structured data. In particular, we are focusing on how the proposed project can be extended to other document sources, such as the Reuters Collection [17].

VII. CONCLUSION

In this paper, we have introduced WikiOnto: a system for extracting and modeling topic ontologies using the Wikipedia XML Corpus. We have explained in detail the system's three-tiered approach to concept and relation extraction from the corpus and its development environment for modeling the ontology. The project is still ongoing and is expected to produce successful outcomes in ontology extraction. We also expect it to stimulate further research in ontology extraction and modeling.

We expect to make the system and its source code freely available. Its extensibility to other document sources is still being tested, which is why that work was left out of this paper. We hope to announce the results in time for the final system to be available to this paper's intended audience.

REFERENCES

[1] T. R. Gruber, "A translation approach to portable ontology specifications," Knowl. Acquis., vol. 5, no. 2, pp. 199–220, 1993.

[2] Ontoprise, "OntoStudio," http://www.ontoprise.de/, 2007 (accessed 2009-05-08).

[3] L. Denoyer and P. Gallinari, "The Wikipedia XML Corpus," SIGIR Forum, 2006.

[4] S. Tratz, M. Gregory, P. Whitney, C. Posse, P. Paulson, B. Baddeley, R. Hohimer, and A. White, "Ontological annotation with WordNet."

[5] C. Fellbaum, Ed., WordNet: An Electronic Lexical Database. Cambridge, MA; London: The MIT Press, 1998. [Online]. Available: http://mitpress.mit.edu/catalog/item/default.asp?ttype=2&tid=8106

[6] C. Li and T. W. Ling, "From XML to semantic web," in Proceedings of the 10th International Conference on Database Systems for Advanced Applications, 2005, pp. 582–587.

[7] B. Omelayenko, "Learning of ontologies for the web: the analysis of existent approaches," in Proceedings of the International Workshop on Web Dynamics, 2001.

[8] N. Kozlova, "Automatic ontology extraction for document classification," Master's thesis, Saarland University, Germany, February 2005.

[9] D. Faure, C. Nédellec, and C. Rouveirol, "Acquisition of semantic knowledge using machine learning methods: The system "ASIUM"," Université Paris Sud, Tech. Rep., 1998.

[10] P. Velardi, R. Navigli, A. Cucchiarelli, and F. Neri, "Evaluation of OntoLearn, a methodology for automatic population of domain ontologies," in Ontology Learning from Text: Methods, Applications and Evaluation, P. Buitelaar, P. Cimiano, and B. Magnini, Eds. IOS Press, 2006.

[11] A. Maedche and S. Staab, "Semi-automatic Engineering of Ontologies from Text," in Proceedings of the 12th International Conference on Software Engineering and Knowledge Engineering, 2000.

[12] "OntoGen: Semi-automatic ontology editor," in Human Interface, Part II, HCII 2007, M. Smith and G. Salvendy, Eds., 2007, pp. 309–318.

[13] G. Salton and C. Buckley, "Term-weighting approaches in automatic text retrieval," in Information Processing and Management, 1988, pp. 513–523.

[14] A. K. Jain, M. N. Murty, and P. J. Flynn, "Data clustering: A review," 1999.

[15] G. Salton and C. Buckley, "Term-weighting approaches in automatic text retrieval," in Information Processing and Management, 1988, pp. 513–523.

[16] M. A. Hearst, "Automatic acquisition of hyponyms from large text corpora," in Proceedings of the 14th International Conference on Computational Linguistics, 1992, pp. 539–545.

[17] M. Sanderson, "Reuters Test Collection," in BSC IRSG, 1994. [Online]. Available: citeseer.ist.psu.edu/sanderson94reuters.html