Judy Qiu Shanghai Many-Core Workshop March 27-28 2008


Judy Qiu

xqiu@indiana.edu, http://www.infomall.org/salsa

Research Computing UITS, Indiana University Bloomington IN

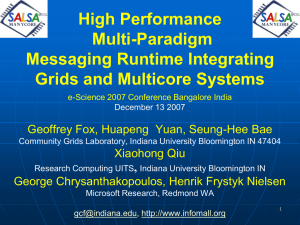

Geoffrey Fox, Huapeng Yuan, Seung-Hee Bae

Community Grids Laboratory, Indiana University Bloomington IN

George Chrysanthakopoulos, Henrik Frystyk Nielsen

Microsoft Research, Redmond WA

What applications can use the 128 cores expected in 2013?

Over the same time period, real-time and archival data will increase as fast as or faster than computing:

Internet data fetched to a local PC or stored in the “cloud”

Surveillance

Environmental monitors; instruments such as the LHC at CERN; high-throughput screening in bio- and chemo-informatics

Results of simulations

Intel RMS analysis suggests gaming and generalized decision support (data mining) as ways of using these cycles

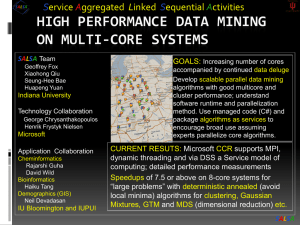

SALSA is currently developing a suite of parallel data-mining capabilities:

Clustering with deterministic annealing (DA)

Mixture Models (Expectation Maximization) with DA

Metric Space Mapping for visualization and analysis

Matrix algebra as needed

Service Aggregated Linked Sequential Activities

Link parallel and distributed (Grid) computing by developing parallel modules as services, not as programs or libraries

e.g. a clustering algorithm is a service running on multiple cores

We can divide the problem into two parts:

“Micro-parallelism”: high-performance, scalable (in number of cores) parallel kernels or libraries

“Macro-parallelism”: composition of kernels into complete applications

Two styles of “micro-parallelism”:

Dynamic search, as in scheduling algorithms, Hidden Markov Models (speech recognition), and computer chess (pruned tree search); irregular synchronization with dynamic threads

“MPI Style”, i.e. several threads running, typically in SPMD (Single Program Multiple Data) mode, with collective synchronization of all threads together

Most data-mining algorithms (in Intel RMS) are “MPI Style” and very close to scientific algorithms

Status: SALSA is currently developing a suite of parallel data-mining capabilities:

Clustering with deterministic annealing (DA)

Mixture Models (Expectation Maximization) with DA

Metric Space Mapping for visualization and analysis

Matrix algebra as needed

Current results:

Microsoft CCR supports MPI-style and dynamic threading and, via DSS, a service model of computing

Detailed performance measurements show speedups of 7.5 or above on 8-core systems for “large problems” using deterministically annealed (to avoid local minima) algorithms for clustering, Gaussian mixtures, GTM (dimensional reduction), etc.

Collaboration:

SALSA Team (Indiana University): Geoffrey Fox, Xiaohong Qiu, Seung-Hee Bae, Huapeng Yuan

Technology Collaboration (Microsoft): George Chrysanthakopoulos, Henrik Frystyk Nielsen

Application Collaboration (IU Bloomington and IUPUI):

Cheminformatics: Rajarshi Guha, David Wild

Bioinformatics: Haixu Tang

Demographics (GIS): Neil Devadasan

We implement micro-parallelism using Microsoft CCR (Concurrency and Coordination Runtime), as it supports both the MPI rendezvous and the dynamic (spawned) threading styles of parallelism: http://msdn.microsoft.com/robotics/

CCR supports the exchange of messages between threads using named ports, and has primitives such as:

FromHandler: spawn threads without reading ports

Receive: each handler reads one item from a single port

MultipleItemReceive: each handler reads a prescribed number of items of a given type from a given port. Note that items in a port can be general structures, but all must have the same type.

MultiplePortReceive: each handler reads one item of a given type from multiple ports.

CCR has fewer primitives than MPI, but can implement MPI collectives efficiently

Use DSS (Decentralized System Services), built on top of CCR, for the service model

DSS has ~35 µs and CCR a few µs of overhead (latency; details later)
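A minimal Python analogue of how a port-based runtime can build an MPI-style reduction: each worker posts its partial sum to one shared port, and a MultipleItemReceive-style step fires once all items have arrived. `Port`, `multiple_item_receive` and `reduce_sum` are hypothetical names, not the CCR API; in CCR the handler would be scheduled by a dispatcher when the items arrive, whereas here the calling thread simply blocks on a `queue.Queue`.

```python
import queue
import threading


class Port:
    """Hypothetical stand-in for a CCR port: a thread-safe FIFO of same-typed items."""

    def __init__(self):
        self._items = queue.Queue()

    def post(self, item):
        self._items.put(item)

    def take(self):
        return self._items.get()  # blocks until an item is available


def multiple_item_receive(port, count, handler):
    """Analogue of MultipleItemReceive: call handler once `count` items have arrived."""
    items = [port.take() for _ in range(count)]
    return handler(items)


def reduce_sum(blocks):
    """MPI_Reduce-style sum: each thread posts the partial sum of its block to one port."""
    port = Port()

    def worker(block):
        port.post(sum(block))

    threads = [threading.Thread(target=worker, args=(b,)) for b in blocks]
    for t in threads:
        t.start()
    total = multiple_item_receive(port, len(threads), sum)
    for t in threads:
        t.join()
    return total


print(reduce_sum([[1, 2], [3], [4, 5, 6]]))  # 21
```

Because all items in a port share one type, the combine step can be any function over a homogeneous list, which is what makes collectives easy to express with this primitive.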

Deterministic Annealing Clustering (DAC)

N data points E(x) in D-dimensional space; minimize F by EM:

$$F = -T \sum_{x=1}^{N} p(x) \ln \Big\{ \sum_{k=1}^{K} \exp\big[-(E(x) - Y(k))^2 / T\big] \Big\}$$

• F is the free energy

• EM is the well-known expectation maximization method

• p(x) with Σ p(x) = 1

• T is the annealing temperature, varied down from ∞ with a final value of 1

• Determine cluster centers Y(k) by the EM method

• K (number of clusters) starts at 1 and is incremented by the algorithm
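A minimal Python sketch of the annealing loop above, for 1-D points with equal weights p(x) = 1/N (which cancel in the center update). Unlike the algorithm described here, which starts at K = 1 and increments K, this sketch fixes K and re-perturbs the centers slightly at each temperature so coincident centers can separate; the function name, cooling schedule and parameter values are illustrative assumptions.

```python
import math


def da_cluster_1d(points, k=2, t_start=100.0, t_final=1.0, cooling=0.9, em_iters=50):
    """Deterministic annealing clustering of 1-D points with a fixed number of centers k."""
    mean = sum(points) / len(points)
    centers = [mean] * k  # at high temperature every center sits at the data mean
    t = t_start
    while True:
        # Nudge the centers apart slightly so coincident centers can separate
        # once T falls below a critical value (stand-in for incrementing K).
        centers = [c + 1e-3 * (j - (k - 1) / 2) for j, c in enumerate(centers)]
        for _ in range(em_iters):
            num, den = [0.0] * k, [0.0] * k
            for x in points:
                # E-step: Gibbs probabilities proportional to exp(-(x - Y(k))^2 / T)
                e = [-(x - y) ** 2 / t for y in centers]
                top = max(e)  # shift exponents for numerical stability
                w = [math.exp(v - top) for v in e]
                s = sum(w)
                for j in range(k):
                    num[j] += w[j] / s * x
                    den[j] += w[j] / s
            # M-step: each center becomes the probability-weighted mean of the points
            centers = [num[j] / den[j] if den[j] > 0 else centers[j] for j in range(k)]
        if t <= t_final:
            return sorted(centers)
        t = max(t * cooling, t_final)  # cool; the last pass runs exactly at t_final


print(da_cluster_1d([0.0, 0.5, 1.0, 9.0, 9.5, 10.0]))
```

For the two well-separated groups in the example, the centers stay merged at high T, separate as T drops, and end close to 0.5 and 9.5 at T = 1.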

Decrease temperature (distance scale) to discover more clusters

[Figure: GIS clustering with 10 and 30 clusters; panels: Total, Asian, Hispanic, Renters]

[Figure: free energy F({Y}, T) versus configuration {Y}]

Solve linear equations for each temperature; the nonlinearity is removed by approximating with the solution at the previous, higher temperature.

The minimum evolves as the temperature decreases.

Movement at fixed temperature goes to local minima if not initialized “correctly”.
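A small numerical check of why annealing helps, assuming 1-D data and the DAC form of F. Linearizing one EM step around two coincident centers at the data mean suggests that a small separation δ is multiplied by 2·var/T (var being the population variance of the data), so the centers stay merged above T_c = 2·var and split below it. The helper names are illustrative, and the code evaluates the growth factor directly rather than trusting the algebra.

```python
import math


def em_update(points, centers, t):
    """One DAC EM update at temperature t, with Gibbs weights exp(-(x - y)^2 / t)."""
    k = len(centers)
    num, den = [0.0] * k, [0.0] * k
    for x in points:
        w = [math.exp(-(x - y) ** 2 / t) for y in centers]
        s = sum(w)
        for j in range(k):
            num[j] += w[j] / s * x
            den[j] += w[j] / s
    return [num[j] / den[j] for j in range(k)]


def critical_temperature(points):
    """Linearized prediction of the first split for 1-D data: T_c = 2 * variance."""
    m = sum(points) / len(points)
    return 2.0 * sum((x - m) ** 2 for x in points) / len(points)


def split_growth(points, t, delta=1e-6):
    """Factor by which one EM step changes a tiny separation of two centers at the mean."""
    m = sum(points) / len(points)
    y1, y2 = em_update(points, [m - delta / 2, m + delta / 2], t)
    return (y2 - y1) / delta


pts = [0.0, 0.5, 1.0, 9.0, 9.5, 10.0]
tc = critical_temperature(pts)
for t in (2 * tc, tc, tc / 2):
    print(round(t, 3), round(split_growth(pts, t), 4))
```

A growth factor below 1 means the perturbation dies out (centers stay merged); above 1 it grows and the cluster splits. This is why the schedule must pass slowly through each critical temperature rather than jump straight to a low T from an arbitrary start.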

N data points E(x) in D-dimensional space; minimize F by EM:

$$F = -T \sum_{x=1}^{N} a(x) \ln \Big\{ \sum_{k=1}^{K} g(k) \exp\big[-0.5\,(E(x) - Y(k))^2 / (T\,s(k))\big] \Big\}$$

Deterministic Annealing Clustering (DAC)

• a(x) = 1/N or, generally, p(x) with Σ p(x) = 1

• g(k) = 1 and s(k) = 0.5

• T is the annealing temperature, varied down from ∞ with a final value of 1

• Vary cluster centers Y(k), but weights P_k and correlation matrices $s(k) = \sigma(k)^2$ (even for matrix $\sigma(k)^2$) can be calculated using IDENTICAL formulae to those for Gaussian mixtures

• K starts at 1 and is incremented by the algorithm

Deterministic Annealing Gaussian Mixture models (DAGM)

• a(x) = 1

• $g(k) = \{P_k / (2\pi\sigma(k)^2)^{D/2}\}^{1/T}$

• $s(k) = \sigma(k)^2$ (taking the case of spherical Gaussians)

• T is the annealing temperature, varied down from ∞ with a final value of 1

• Vary Y(k), P_k and σ(k)

• K starts at 1 and is incremented by the algorithm

Traditional Gaussian Mixture models (GM)

• As DAGM, but set T = 1 and fix K

Generative Topographic Mapping (GTM)

• a(x) = 1 and $g(k) = (1/K)(\beta/2\pi)^{D/2}$

• $s(k) = 1/\beta$ and T = 1

• $Y(k) = \sum_{m=1}^{M} W_m \varphi_m(X(k))$

• Choose fixed $\varphi_m(X) = \exp(-0.5\,(X - \mu_m)^2/\sigma^2)$

• Vary W_m and β, but fix the values of M and K a priori

• Y(k), E(x) and W_m are vectors in the original high-dimensional (D) space

• X(k) and μ_m are vectors in the 2-dimensional mapped space

DAGTM: Deterministic Annealed Generative Topographic Mapping

• GTM has several natural annealing versions based on either DAC or DAGM; these are under investigation
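To make the special cases concrete, here is a sketch that evaluates the general F for D = 1 and shows how the parameter choices listed above recover DAC, DAGM (GM when T = 1) and GTM. The function names are hypothetical, and the GTM case takes the centers Y(k) as given rather than computing them from W_m and φ_m.

```python
import math


def free_energy(points, centers, a, g, s, t):
    """F = -T sum_x a(x) ln sum_k g(k) exp[-0.5 (E(x) - Y(k))^2 / (T s(k))], for D = 1."""
    total = 0.0
    for ax, x in zip(a, points):
        inner = sum(gk * math.exp(-0.5 * (x - y) ** 2 / (t * sk))
                    for gk, sk, y in zip(g, s, centers))
        total += ax * math.log(inner)
    return -t * total


def dac_params(n, k):
    """DAC: a(x) = 1/N, g(k) = 1, s(k) = 0.5."""
    return [1.0 / n] * n, [1.0] * k, [0.5] * k


def dagm_params(n, weights, variances, t, d=1):
    """DAGM: a(x) = 1, g(k) = {P_k / (2 pi sigma_k^2)^(D/2)}^(1/T), s(k) = sigma_k^2."""
    g = [(p / (2 * math.pi * v) ** (d / 2)) ** (1.0 / t) for p, v in zip(weights, variances)]
    return [1.0] * n, g, list(variances)


def gtm_params(n, k, beta, d=1):
    """GTM: a(x) = 1, g(k) = (1/K)(beta / 2 pi)^(D/2), s(k) = 1/beta, used with T = 1."""
    g = [(1.0 / k) * (beta / (2 * math.pi)) ** (d / 2)] * k
    return [1.0] * n, g, [1.0 / beta] * k


pts, ys = [0.0, 1.0, 3.0], [0.5, 2.5]
a, g, s = dagm_params(len(pts), [0.4, 0.6], [0.5, 2.0], t=1.0)
print(free_energy(pts, ys, a, g, s, t=1.0))  # GM: negative log-likelihood
```

With T = 1, the DAGM choice turns F into the usual negative log-likelihood of a Gaussian mixture, and the GTM choice gives the GTM likelihood with a shared precision β; with g = 1 and s = 0.5, the DAC choice reduces to the clustering F given earlier.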

SALSA

[Figure: “Main Thread” with memory M, linked by MPI/CCR/DSS to other nodes; subsidiary threads t = 0, …, 7, each with its own memory m_t]

Use data decomposition as in classic distributed memory, but use shared memory for read variables. Each thread uses a “local” array for written variables to get good cache performance.

Multicore and cluster versions use the same parallel algorithms but different runtime implementations; the algorithms:

Accumulate matrix and vector elements in each process/thread

At the iteration barrier, combine contributions (MPI_Reduce)

Linear algebra (multiplication, equation solving, SVD)
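A Python sketch of this accumulate/barrier/combine structure, using hard (nearest-center) assignments for brevity rather than annealed probabilities. Each thread reads the shared input but writes only its own accumulator, then one thread combines the partial results after a `threading.Barrier`. The names are illustrative, and Python's global interpreter lock means this shows the structure, not the performance, of the C#/CCR version.

```python
import threading


def parallel_accumulate(points, centers, n_threads=4):
    """SPMD sketch of one clustering iteration's accumulation phase."""
    k = len(centers)
    # "Local" written arrays: one private accumulator per thread
    local_sums = [[0.0] * k for _ in range(n_threads)]
    local_counts = [[0] * k for _ in range(n_threads)]
    barrier = threading.Barrier(n_threads)
    combined = {}

    def worker(t):
        # Data decomposition: thread t owns a contiguous block of the shared, read-only input
        lo = t * len(points) // n_threads
        hi = (t + 1) * len(points) // n_threads
        for x in points[lo:hi]:
            nearest = min(range(k), key=lambda j: (x - centers[j]) ** 2)
            local_sums[t][nearest] += x
            local_counts[t][nearest] += 1
        barrier.wait()  # iteration barrier: every partial result is now complete
        if t == 0:  # one thread combines, like MPI_Reduce to rank 0
            combined["sums"] = [sum(ls[j] for ls in local_sums) for j in range(k)]
            combined["counts"] = [sum(lc[j] for lc in local_counts) for j in range(k)]

    threads = [threading.Thread(target=worker, args=(t,)) for t in range(n_threads)]
    for th in threads:
        th.start()
    for th in threads:
        th.join()
    return combined["sums"], combined["counts"]


sums, counts = parallel_accumulate([0, 1, 2, 10, 11, 12], [1.0, 11.0], n_threads=3)
print([s / c for s, c in zip(sums, counts)])  # new centers: [1.0, 11.0]
```

Keeping written data per thread avoids locks during the accumulation; the only synchronization is the single barrier per iteration.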

[Figure: Parallel overhead on 8 threads, Intel8b, for 10 and 20 clusters, plotted against 10000/(grain size n = points per core)]

Speedup = 8/(1 + Overhead)

Overhead = Constant1 + Constant2/n

Constant1 = 0.05 to 0.1 (Client Windows), due to thread runtime fluctuations

Speedup = Number of cores/(1 + f)

f = (Sum of overheads)/(Computation per core)

Computation ∝ Grain size n · # Clusters K

Overheads are:

Synchronization: small with CCR

Load balance: good

Memory bandwidth limit: → 0 as K → ∞

Cache use/interference: important

Runtime fluctuations: dominant for large n, K

All our “real” problems have f ≤ 0.05 and speedups on 8-core systems greater than 7.6
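The overhead model above can be evaluated directly. This sketch assumes the fitted form Overhead = Constant1 + Constant2/n; the value of Constant2 is not given here, so the 500.0 used in the example is purely hypothetical, and `min_grain_size` is an illustrative helper.

```python
import math


def speedup(cores, f):
    """Speedup = number of cores / (1 + f)."""
    return cores / (1.0 + f)


def overhead(n, constant1, constant2):
    """Fitted form Overhead = Constant1 + Constant2 / n, with n = points per core."""
    return constant1 + constant2 / n


def min_grain_size(cores, target_speedup, constant1, constant2):
    """Smallest n reaching target_speedup under the fitted model (inf if unreachable)."""
    f_allowed = cores / target_speedup - 1.0
    if f_allowed <= constant1:
        return math.inf  # the constant (runtime fluctuation) term alone exceeds the budget
    return constant2 / (f_allowed - constant1)


print(round(speedup(8, 0.05), 2))  # 7.62
print(min_grain_size(8, 7.0, 0.05, 500.0))  # hypothetical Constant2
```

The model makes the trade-off explicit: Constant2/n shrinks with larger grain size, but Constant1 sets a ceiling on speedup that no grain size can overcome.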

[Figure: Multicore matrix multiplication (dominant linear algebra in GTM): execution time in seconds for 4096×4096 matrices on 1 core and 8 cores versus block size (1 to 10,000); annotated parallel overhead: 1%]
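The block size in this figure refers to tiling the multiplication so that sub-blocks of the operands stay in cache. A minimal sequential Python sketch of such loop tiling, assuming plain list-of-lists matrices (an illustration, not the benchmarked code); in a multicore version the outer row-block loop would be divided among threads.

```python
def matmul_blocked(a, b, block=64):
    """C = A @ B computed tile by tile; `block` is the tile edge length."""
    n, p, m = len(a), len(b), len(b[0])
    if len(a[0]) != p:
        raise ValueError("inner dimensions do not match")
    c = [[0.0] * m for _ in range(n)]
    for ii in range(0, n, block):          # row tiles of A and C
        for kk in range(0, p, block):      # tiles of the shared dimension
            for jj in range(0, m, block):  # column tiles of B and C
                for i in range(ii, min(ii + block, n)):
                    ci, ai = c[i], a[i]
                    for k in range(kk, min(kk + block, p)):
                        aik, bk = ai[k], b[k]
                        for j in range(jj, min(jj + block, m)):
                            ci[j] += aik * bk[j]
    return c


print(matmul_blocked([[1, 2, 3], [4, 5, 6]], [[7, 8], [9, 10], [11, 12]], block=2))
```

Every block size gives the same product; only the memory access order changes, which is what the timing curve is sensitive to.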

[Figure: Parallel GTM performance: fractional overhead f versus 1/(grain size n) for 4096 interpolating clusters; grain sizes n = 500 and n = 100 marked]


Deterministic annealing for clustering of 335 compounds. The method works on much larger sets, but we chose this one because the answer is known.

GTM (Generative Topographic Mapping) used for mapping 155D to a 2D latent space. Much better than PCA (Principal Component Analysis) or SOM (Self-Organizing Maps).

Parallel Generative Topographic Mapping (GTM)

Reduce dimensionality, preserving topology and perhaps distances; here we project to 2D

GTM projection of PubChem: 10,926,94 compounds in a 166-dimensional binary property space takes 4 days on 8 cores. A 64×64 mesh of GTM clusters interpolates PubChem. Could usefully use 1024 cores! David Wild will use it for a GIS-style 2D browsing interface to chemistry.

[Figure: Linear PCA vs. nonlinear GTM on 6 Gaussians in 3D; PCA is Principal Component Analysis]

[Figure: GTM projection of 2 clusters of 335 compounds in 155 dimensions]

Micro-parallelism uses low-latency CCR threads or MPI processes

Services can be used where loose coupling is natural:

Input data

Algorithms:

PCA

DAC, GTM, GM, DAGM, DAGTM: both for the complete algorithm and for each iteration

Linear algebra used inside or outside the above

Metric embedding: MDS, Bourgain, Quadratic Programming …

HMM, SVM …

User interface: GIS (Web Map Service) or equivalent

[Figure: DSS service measurements: average run time (microseconds) versus round trips (1 to 10,000)]

Timing of an HP Opteron multicore as a function of the number of simultaneous two-way service messages processed (November 2006 DSS release)

Measurements of Axis2 show about 500 microseconds; DSS is 10 times better

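| Machine | OS | Runtime | Grains | Parallelism | MPI Exchange Latency (µs) |
|---|---|---|---|---|---|
| Intel8c:gf12 (8 core, 2.33 GHz, in 2 chips) | Redhat | MPJE (Java) | Process | 8 | 181 |
| | Redhat | MPICH2 (C) | Process | 8 | 40.0 |
| | Redhat | MPICH2: Fast | Process | 8 | 39.3 |
| | Redhat | Nemesis | Process | 8 | 4.21 |
| Intel8c:gf20 (8 core, 2.33 GHz) | Fedora | MPJE | Process | 8 | 157 |
| | Fedora | mpiJava | Process | 8 | 111 |
| | Fedora | MPICH2 | Process | 8 | 64.2 |
| Intel8b (8 core, 2.66 GHz) | Vista | MPJE | Process | 8 | 170 |
| | Fedora | MPJE | Process | 8 | 142 |
| | Fedora | mpiJava | Process | 8 | 100 |
| | Vista | CCR (C#) | Thread | 8 | 20.2 |
| AMD4 (4 core, 2.19 GHz) | XP | MPJE | Process | 4 | 185 |
| | Redhat | MPJE | Process | 4 | 152 |
| | Redhat | mpiJava | Process | 4 | 99.4 |
| | Redhat | MPICH2 | Process | 4 | 39.3 |
| | XP | CCR | Thread | 4 | 16.3 |
| Intel4 (4 core, 2.8 GHz) | XP | CCR | Thread | 4 | 25.8 |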

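Intel8b: 8 Core, time (µs) versus number of parallel computations

| Mode | Pattern | 1 | 2 | 3 | 4 | 7 | 8 |
|---|---|---|---|---|---|---|---|
| Spawned | Pipeline | 1.58 | 2.44 | 3 | 2.94 | 4.5 | 5.06 |
| Spawned | Shift | – | 2.42 | 3.2 | 3.38 | 5.26 | 5.14 |
| Spawned | Two Shifts | – | 4.94 | 5.9 | 6.84 | 14.32 | 19.44 |
| Rendezvous MPI | Pipeline | 2.48 | 3.96 | 4.52 | 5.78 | 6.82 | 7.18 |
| Rendezvous MPI | Shift | – | 4.46 | 6.42 | 5.86 | 10.86 | 11.74 |
| Rendezvous MPI | Exchange As Two Shifts | – | 7.4 | 11.64 | 14.16 | 31.86 | 35.62 |
| Rendezvous MPI | Exchange | – | 6.94 | 11.22 | 13.3 | 18.78 | 20.16 |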

[Figure: time (microseconds) versus stages (millions) for AMD Exch, AMD Exch as 2 Shifts, and AMD Shift]

Overhead (latency) of the AMD4 PC with 4 execution threads on MPI-style rendezvous messaging for Shift and Exchange, implemented either as two shifts or as a custom CCR pattern

[Figure: time (microseconds) versus stages (millions) for Intel Exch, Intel Exch as 2 Shifts, and Intel Shift]

Overhead (latency) of the Intel8b PC with 8 execution threads on MPI-style rendezvous messaging for Shift and Exchange, implemented either as two shifts or as a custom CCR pattern
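These patterns can be sketched with one queue per direction per thread standing in for CCR ports. The pattern semantics are assumptions for illustration: in a shift, each thread sends to its right neighbour in a ring; an exchange posts to both neighbours and then collects both messages; exchange-as-two-shifts completes one shift before starting the other, adding a second synchronization step, which is consistent with the higher “Exchange As Two Shifts” latencies measured on Intel8b. `run_ring` and the pattern names are hypothetical.

```python
import queue
import threading

PATTERNS = ("shift", "exchange", "exchange_as_two_shifts")


def run_ring(n, pattern):
    """Run one stage of a neighbour-messaging pattern on n threads in a ring."""
    if pattern not in PATTERNS:
        raise ValueError(pattern)  # validate before starting threads
    from_left = [queue.Queue() for _ in range(n)]   # messages arriving from thread i-1
    from_right = [queue.Queue() for _ in range(n)]  # messages arriving from thread i+1
    received = [None] * n

    def worker(i):
        right, left = (i + 1) % n, (i - 1) % n
        if pattern == "shift":
            from_left[right].put(i)
            received[i] = from_left[i].get()
        elif pattern == "exchange":
            # Custom pattern: post to both neighbours, then collect both
            from_left[right].put(i)
            from_right[left].put(i)
            received[i] = (from_left[i].get(), from_right[i].get())
        else:
            # Two shifts: the rightward shift must complete before the leftward one starts
            from_left[right].put(i)
            got_left = from_left[i].get()
            from_right[left].put(i)
            received[i] = (got_left, from_right[i].get())

    threads = [threading.Thread(target=worker, args=(i,)) for i in range(n)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return received


print(run_ring(4, "shift"))     # [3, 0, 1, 2]
print(run_ring(4, "exchange"))  # [(3, 1), (0, 2), (1, 3), (2, 0)]
```

Separate queues per direction keep the two messages of an exchange from being confused when one neighbour runs ahead of another.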

[Figure a): Scaled runtime, Intel 8b Vista C# CCR, 1 cluster, versus number of threads (one per core, 1 to 8), for 10,000, 50,000 and 500,000 datapoints per thread]

[Figure b): Scaled runtime, Intel 8b Vista C# CCR, 80 clusters, same axes and datapoint counts]

Scaled runtime: divide runtime by grain size n · # clusters K

8 cores (threads) and 1 cluster show a memory bandwidth effect

80 clusters show a cache/memory bandwidth effect

[Figure: Std dev of runtime, Intel 8a XP C# CCR, 80 clusters, versus number of threads (one per core), for 10,000, 50,000 and 500,000 datapoints per thread]

[Figure: Std dev of runtime, Intel 8c Redhat C Locks, 80 clusters, same axes and datapoint counts]

This is the average of the standard deviation of the run time of the 8 threads between messaging synchronization points.
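One plausible way to compute this statistic, assuming per-thread timings are recorded for each interval between synchronization points. Whether the population or sample standard deviation was used is not stated, so `statistics.pstdev` here is an assumption, and `average_thread_stddev` is a hypothetical helper. Setting `relative=True` gives a Std/Mean form like the one in the separation table.

```python
import statistics


def average_thread_stddev(phase_times, relative=False):
    """Average over synchronization phases of the spread of per-thread run times.

    phase_times[p][t] is the run time of thread t between synchronization points
    p and p+1. With relative=True each phase's std dev is divided by its mean.
    """
    per_phase = []
    for times in phase_times:
        sd = statistics.pstdev(times)  # population std dev (an assumption)
        per_phase.append(sd / statistics.mean(times) if relative else sd)
    return statistics.mean(per_phase)


phases = [[1, 1, 1, 1], [1, 3, 1, 3]]
print(average_thread_stddev(phases))                 # 0.5
print(average_thread_stddev(phases, relative=True))  # 0.25
```

Because the threads wait for the slowest one at each synchronization point, this spread translates directly into idle time, which is why runtime fluctuations appear as an overhead term.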

Early implementations of our clustering algorithm showed large fluctuations due to the cache line interference effect (false sharing).

We have one thread on each core, each calculating a sum of the same complexity and storing the result in a common array A, with different cores using different array locations.

Thread i stores its sum in A(i): separation 1, so no memory access interference but cache line interference

Thread i stores its sum in A(X*i): separation X

Serious degradation if X < 8 (64 bytes) with Windows; note that each element of A is a double (8 bytes)

Less interference effect with Linux, especially Red Hat
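A back-of-the-envelope model of which cache line each thread's slot falls in, assuming 8-byte doubles, 64-byte lines and an array A that starts on a line boundary. It does not measure timing; it only shows why separations below 8 put several threads' writes on the same line. The helper names are hypothetical.

```python
from collections import Counter


def cache_line_of_each_thread(n_threads, separation, elem_bytes=8, line_bytes=64):
    """Cache line index written by thread i when it stores into A(separation * i).

    Assumes A starts on a cache-line boundary.
    """
    return [(separation * i * elem_bytes) // line_bytes for i in range(n_threads)]


def max_threads_sharing_a_line(n_threads, separation, elem_bytes=8, line_bytes=64):
    """Largest number of threads whose slots land in the same cache line."""
    lines = cache_line_of_each_thread(n_threads, separation, elem_bytes, line_bytes)
    return max(Counter(lines).values())


for x in (1, 4, 8, 1024):
    print(x, max_threads_sharing_a_line(8, x))
# 1 8
# 4 2
# 8 1
# 1024 1
```

Separation 7 still puts threads 0 and 1 on the same line in this model, consistent with the observation that X = 7 measures slower than X = 8 on most systems.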

Time (µs) versus thread array separation X (unit is 8 bytes)

| Machine | OS | Runtime | X=1 Mean | X=1 Std/Mean | X=4 Mean | X=4 Std/Mean | X=8 Mean | X=8 Std/Mean | X=1024 Mean | X=1024 Std/Mean |
|---|---|---|---|---|---|---|---|---|---|---|
| Intel8b | Vista | C# CCR | 8.03 | .029 | 3.04 | .059 | 0.884 | .0051 | 0.884 | .0069 |
| Intel8b | Vista | C# Locks | 13.0 | .0095 | 3.08 | .0028 | 0.883 | .0043 | 0.883 | .0036 |
| Intel8b | Vista | C | 13.4 | .0047 | 1.69 | .0026 | 0.66 | .029 | 0.659 | .0057 |
| Intel8b | Fedora | C | 1.50 | .01 | 0.69 | .21 | 0.307 | .0045 | 0.307 | .016 |
| Intel8a | XP | C# CCR | 10.6 | .033 | 4.16 | .041 | 1.27 | .051 | 1.43 | .049 |
| Intel8a | XP | C# Locks | 16.6 | .016 | 4.31 | .0067 | 1.27 | .066 | 1.27 | .054 |
| Intel8a | XP | C | 16.9 | .0016 | 2.27 | .0042 | 0.946 | .056 | 0.946 | .058 |
| Intel8c | Red Hat | C | 0.441 | .0035 | 0.423 | .0031 | 0.423 | .0030 | 0.423 | .032 |
| AMD4 | WinSrvr | C# CCR | 8.58 | .0080 | 2.62 | .081 | 0.839 | .0031 | 0.838 | .0031 |
| AMD4 | WinSrvr | C# Locks | 8.72 | .0036 | 2.42 | 0.01 | 0.836 | .0016 | 0.836 | .0013 |
| AMD4 | WinSrvr | C | 5.65 | .020 | 2.69 | .0060 | 1.05 | .0013 | 1.05 | .0014 |
| AMD4 | XP | C# CCR | 8.05 | 0.010 | 2.84 | 0.077 | 0.84 | 0.040 | 0.840 | 0.022 |
| AMD4 | XP | C# Locks | 8.21 | 0.006 | 2.57 | 0.016 | 0.84 | 0.007 | 0.84 | 0.007 |
| AMD4 | XP | C | 6.10 | 0.026 | 2.95 | 0.017 | 1.05 | 0.019 | 1.05 | 0.017 |

Note that measurements at separations X = 8 and X = 1024 (and values between 8 and 1024, not shown) are essentially identical.

Measurements at X = 7 (not shown) are higher than those at X = 8 (except for Red Hat, which shows essentially no enhancement at X < 8).

As these effects are due to co-location of thread variables in a 64-byte cache line, align the array with cache boundaries.

This class of data mining does/will parallelize well on current/future multicore nodes

Several engineering issues for use in large applications:

How to take CCR in a multicore node to a cluster (MPI or cross-cluster CCR?)

Need high-performance linear algebra for C# (PLASMA from UTenn)

Access linear algebra services in a different language?

Need the equivalent of the Intel C Math Libraries for C# (vector arithmetic, level 1 BLAS)

Service model to integrate modules

Need access to a ~128-node Windows cluster

Future work is more applications; refine current algorithms such as DAGTM

New parallel algorithms:

Clustering with pairwise distances but no vector spaces

Bourgain Random Projection for metric embedding

MDS (Multidimensional Scaling) with EM-like SMACOF and deterministic annealing

Support use of Newton’s Method (Marquardt’s method) as an EM alternative

Later HMM and SVM