High Performance Robust Datamining for Cheminformatics

Division of Chemical Information

Session: Cheminformatics: From Teaching to Research

ACS Spring Meeting New Orleans

April 8 2008

Geoffrey Fox

Community Grids Laboratory, School of Informatics

Indiana University

http://www.chembiogrid.org

http://www.infomall.org/multicore

gcf@indiana.edu, http://www.infomall.org


Too much Computing?

Historically both grids and parallel computing have tried to increase computing capabilities by

• Optimizing performance of codes at cost of re-usability
• Exploiting all possible CPUs such as graphics coprocessors and “idle cycles” (across administrative domains)
• Linking central computers together such as NSF/DoE/DoD supercomputer networks without clear user requirements

The next crisis in the technology area will be the opposite problem: commodity chips will be 32- to 128-way parallel in 5 years' time and we currently have no idea how to use them on commodity systems, especially on clients

• Only 2 releases of standard software (e.g. Office) in this time span, so we need solutions that can be implemented in the next 3-5 years

Intel RMS (Recognition, Mining, Synthesis) analysis: gaming and generalized decision support (data mining) are ways of using these cycles

Intel’s Projection

Too much Data to the Rescue?

Multicore servers have clear “universal parallelism” as many users can access and use machines simultaneously

Maybe we also need application parallelism (e.g. datamining), as needed on client machines

Over the next years, we will of course be submerged in the data deluge

• Scientific observations for e-Science including cheminformatics (high throughput screening)
• Local (video, environmental) sensors
• Data fetched from the Internet defining users' interests

Maybe data-mining of this “too much data” will use up the “too much computing” both for science and commodity PCs

• PC will use this data(-mining) to be an intelligent user assistant?
• Must have highly parallel algorithms and new algorithms for large datasets

CICC Chemical Informatics and Cyberinfrastructure Collaboratory Web Service Infrastructure

Cheminformatics Services
• Core functionality: Fingerprints, Similarity, Descriptors, 2D diagrams, File format conversion
• Applications: Docking, Filtering, Druglikeness, Toxicity predictions, Mutagenicity predictions, Anti-cancer activity predictions, Pharmacokinetic parameters

Statistics Services
• Computation functionality: Regression, Classification, Clustering, Sampling distributions, Dimension Reduction, Embedding
• Applications: Predictive models, Feature selection, 2D plots, Arbitrary R code (PkCell)

Database Services
• 3D structures by CID, SMARTS, 3D Similarity
• Docking scores/poses by CID, SMARTS, Protein, Docking scores
• PubChem related data by CID, SMARTS

OSCAR Document Analysis

InChI Generation/Search

Computational Chemistry (Gamess, Jaguar etc.)

Core Grid Services
• Service Registry
• Job Submission and Management
• Local Clusters, IU Big Red, TeraGrid, Open Science Grid

Varuna.net: Quantum Chemistry

Portal Services: RSS Feeds, User Profiles, Collaboration as in Sakai

Need to make all this parallel; hide parallelism in service

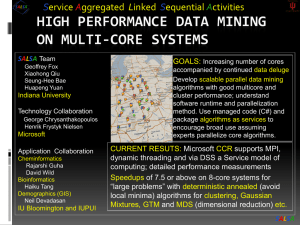

Service Aggregated Linked Sequential Activities

SALSA Team (funded by Microsoft)
• Geoffrey Fox, Xiaohong Qiu, Seung-Hee Bae, Huapeng Yuan (Indiana University)

Technology Collaboration
• George Chrysanthakopoulos, Henrik Frystyk Nielsen (Microsoft)

Application Collaboration
• Cheminformatics (funded by NIH ECCR): Rajarshi Guha, David Wild
• Bioinformatics: Haixu Tang
• Demographics (GIS): Neil Devadasan
• IU Bloomington and IUPUI

GOALS: The increasing number of cores is accompanied by a continued data deluge. Develop scalable parallel data mining algorithms with good multicore and cluster performance; understand software runtime and parallelization method. Use managed code (C#) and package algorithms as services to encourage broad use, assuming experts parallelize core algorithms.

CURRENT RESULTS: Microsoft CCR supports MPI, dynamic threading and, via DSS, a service model of computing; detailed performance measurements. Speedups of 7.5 or above on 8-core systems for “large problems” with deterministic annealed (avoid local minima) algorithms for clustering, Gaussian Mixtures, GTM and MDS (dimensional reduction) etc.

SALSA

General Problem Classes

N data points X(x) in D-dimensional space OR points with dissimilarity $\delta_{ij}$ defined between them

Unsupervised Modeling
• Find clusters without prejudice
• Model distribution as clusters formed from Gaussian distributions with general shape
• Both can use multi-resolution annealing

Dimensional Reduction/Embedding
• Given vectors, map into lower dimension space “preserving topology” for visualization: SOM and GTM
• Given $\delta_{ij}$, associate data points with vectors in a Euclidean space with Euclidean distance approximately $\delta_{ij}$: MDS (can anneal) and Random Projection

Data Parallel over N data points X(x)


Minimize Free Energy $F = E - TS$ where E is the objective function (energy) and S the entropy.

Reduce temperature T logarithmically; $T = \infty$ is dominated by entropy, small T by the objective function

S regularizes E in a natural fashion

In simulated annealing, use Monte Carlo, but in deterministic annealing, use mean field averages

$\langle F \rangle = \int \exp(-E_0/T)\, F$ over the Gibbs distribution $P_0 = \exp(-E_0/T)$, using an energy function $E_0$ similar to E but for which integrals can be calculated

$E_0 = E$ for clustering and related problems

General simple choice is $E_0 = \sum_i (x_i - \mu_i)^2$ where $x_i$ are the parameters to be annealed

E.g. MDS has quartic E and replaces this by quadratic $E_0$

N data points X(x) in D dim. space and Minimize F by EM

$$F = -T \sum_{x=1}^{N} a(x) \ln\Big\{ \sum_{k=1}^{K} g(k) \exp\big[-0.5\,(X(x) - Y(k))^2 / (T s(k))\big] \Big\}$$

Deterministic Annealing Clustering (DAC)

• a(x) = 1/N or generally p(x) with $\sum_x p(x) = 1$
• g(k) = 1 and s(k) = 0.5
• T is annealing temperature varied down from $\infty$ with final value of 1
• Vary cluster center Y(k)
• K starts at 1 and is incremented by algorithm; pick resolution NOT number of clusters
• My 4th most cited article but little used; probably as no good software compared to simple K-means
• Avoid local minima
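The DAC recipe above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the SALSA implementation: it works on 1-D points, keeps the number of centers K fixed (the slides increment K inside the algorithm), uses a(x) = 1/N, g(k) = 1 and s(k) = 0.5, and cools T geometrically. The function name `dac_1d` and all of its parameters are hypothetical.

```python
import math

def dac_1d(points, k=2, t_start=100.0, t_final=1.0, cooling=0.9, em_steps=20):
    """Deterministic-annealing clustering of 1-D points with a fixed number of centers."""
    mean = sum(points) / len(points)
    centers = [mean] * k  # at high temperature every center sits at the global mean
    t = t_start
    while True:
        # Tiny offsets let coincident centers separate once T is low enough.
        centers = [y + 1e-3 * (j - (k - 1) / 2) for j, y in enumerate(centers)]
        for _ in range(em_steps):
            sums = [0.0] * k
            weights = [0.0] * k
            for x in points:
                # E step: p(k|x) proportional to exp(-(x - Y_k)^2 / T), i.e. s(k) = 0.5.
                d2 = [(x - y) ** 2 for y in centers]
                d2_min = min(d2)  # subtract the minimum for numerical stability
                p = [math.exp(-(d - d2_min) / t) for d in d2]
                norm = sum(p)
                for j in range(k):
                    sums[j] += p[j] / norm * x
                    weights[j] += p[j] / norm
            # M step: each center becomes the weighted mean of the points.
            centers = [sums[j] / weights[j] for j in range(k)]
        if t <= t_final:
            return sorted(centers)
        t = max(t * cooling, t_final)

centers = dac_1d([0.0, 0.2, -0.2, 10.0, 10.2, 9.8])
```

At high T both centers collapse onto the mean; they separate only once T drops below roughly twice the data variance, which is the sense in which one picks a resolution rather than a number of clusters.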


Deterministic Annealing Clustering of Indiana Census Data

Decrease temperature (distance scale) to discover more clusters

Distance scale: Temperature$^{0.5}$

Deterministic Annealing

[Figure: free energy F({Y}, T) versus configuration {Y}, with the minimum evolving as temperature decreases]

Solve linear equations for each temperature; nonlinearity is removed by approximating with the solution at the previous higher temperature

Movement at fixed temperature goes to local minima if not initialized “correctly”

N data points X(x) in D dim. space and Minimize F by EM

$$F = -T \sum_{x=1}^{N} a(x) \ln\Big\{ \sum_{k=1}^{K} g(k) \exp\big[-0.5\,(X(x) - Y(k))^2 / (T s(k))\big] \Big\}$$

Deterministic Annealing Clustering (DAC)
• a(x) = 1/N or generally p(x) with $\sum_x p(x) = 1$
• g(k) = 1 and s(k) = 0.5
• T is annealing temperature varied down from $\infty$ with final value of 1
• Vary cluster center Y(k) but can calculate weight $P_k$ and correlation matrix $s(k) = \sigma(k)^2$ (even for matrix $\sigma(k)^2$) using IDENTICAL formulae for Gaussian mixtures
• K starts at 1 and is incremented by algorithm

Deterministic Annealing Gaussian Mixture models (DAGM)
• a(x) = 1
• $g(k) = \{P_k / (2\pi\sigma(k)^2)^{D/2}\}^{1/T}$
• $s(k) = \sigma(k)^2$ (taking case of spherical Gaussian)
• T is annealing temperature varied down from $\infty$ with final value of 1
• Vary Y(k), $P_k$ and $\sigma(k)$
• K starts at 1 and is incremented by algorithm

Traditional Gaussian mixture models GM
• As DAGM but set T = 1 and fix K

Generative Topographic Mapping (GTM)
• a(x) = 1 and $g(k) = (1/K)(\beta/2\pi)^{D/2}$
• $s(k) = 1/\beta$ and T = 1
• $Y(k) = \sum_{m=1}^{M} W_m \phi_m(X(k))$
• Choose fixed $\phi_m(X) = \exp(-0.5\,(X - \mu_m)^2/\sigma^2)$
• Vary $W_m$ and $\beta$ but fix values of M and K a priori
• Y(k), X(x) and $W_m$ are vectors in original high D dimension space
• X(k) and $\mu_m$ are vectors in 2 dimensional mapped space

DAGTM: Deterministic Annealed Generative Topographic Mapping
• GTM has several natural annealing versions based on either DAC or DAGM: under investigation

• DAMDS has a different form as it uses a different Gibbs distribution (different $E_0$)


Multicore Matrix Multiplication (dominant linear algebra in GTM)

[Figure: execution time in seconds (log scale, 10 to 10,000) versus block size (1 to 10000) for 4096X4096 matrices on 1 core and 8 cores; parallel overhead 1%]

Speedup = Number of cores/(1+f)

f = (Sum of Overheads)/(Computation per core)

Computation ∝ Grain Size n × # Clusters K

Overheads are
• Synchronization: small with CCR
• Load Balance: good
• Memory Bandwidth Limit: → 0 as K → ∞
• Cache Use/Interference: Important
• Runtime Fluctuations: Dominant for large n, K

All our “real” problems have f ≤ 0.05 and speedups on 8 core systems greater than 7.6

Parallel GTM Performance

[Figure: fractional overhead f (0 to 0.14) versus 1/(Grain Size n) (0 to 0.02) for 4096 interpolating clusters; marked grain sizes n = 500, 100 and 50]
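As a quick check of the speedup model, the two formulas can be evaluated directly. The helper names below are hypothetical; only the formulas Speedup = cores/(1+f) and f = (sum of overheads)/(computation per core) come from the slide.

```python
def fractional_overhead(total_overhead, computation_per_core):
    """f = (sum of overheads) / (computation per core), both in the same time unit."""
    return total_overhead / computation_per_core

def speedup(cores, f):
    """Speedup = number of cores / (1 + f)."""
    return cores / (1.0 + f)

# With f = 0.05 on 8 cores the model gives 8 / 1.05, about 7.62.
eight_core_speedup = speedup(8, 0.05)
```

This agrees with the quoted figures: any f at or below 0.05 gives an 8-core speedup above 7.6.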

We implement micro-parallelism using Microsoft CCR (Concurrency and Coordination Runtime) as it supports both MPI rendezvous and dynamic (spawned) threading styles of parallelism http://msdn.microsoft.com/robotics/

CCR supports exchange of messages between threads using named ports and has primitives like:
• FromHandler: Spawn threads without reading ports
• Receive: Each handler reads one item from a single port
• MultipleItemReceive: Each handler reads a prescribed number of items of a given type from a given port. Note items in a port can be general structures but all must have the same type.
• MultiplePortReceive: Each handler reads one item of a given type from multiple ports.

CCR has fewer primitives than MPI but can implement MPI collectives efficiently

Use DSS (Decentralized Software Services), built in terms of CCR, for the service model

DSS has ~35 µs and CCR a few µs overhead
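CCR itself is a C#/.NET library, so the following is only a Python analogy of the port-and-handler idea, not the CCR API. It assumes a port can be modeled as a `queue.Queue` and imitates the MultipleItemReceive pattern: a handler fires once a prescribed number of items has arrived on one port. The function name `multiple_item_receive` is hypothetical.

```python
import queue
import threading

def multiple_item_receive(port, count, handler):
    """Start a thread that reads `count` items from `port`, then calls handler(items)."""
    def worker():
        items = [port.get() for _ in range(count)]  # blocks until each item arrives
        handler(items)
    thread = threading.Thread(target=worker)
    thread.start()
    return thread

port = queue.Queue()   # stands in for a named port
results = []
receiver = multiple_item_receive(port, 4, lambda items: results.append(sum(items)))
for value in (1, 2, 3, 4):
    port.put(value)    # senders post messages to the port
receiver.join()
```

The handler runs only after all four messages are available, which is the rendezvous-like behavior that makes such a primitive usable for building MPI-style collectives.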


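Messaging CCR versus MPI: C# v. C v. Java

MPI Exchange Latency in µs (20-30 µs computation between messaging)

| Machine | OS | Runtime | Grains | Parallelism | MPI Latency (µs) |
|---|---|---|---|---|---|
| Intel8c:gf12 (8 core, 2.33 GHz, in 2 chips) | Redhat | MPJE (Java) | Process | 8 | 181 |
| Intel8c:gf12 | Redhat | MPICH2 (C) | Process | 8 | 40.0 |
| Intel8c:gf12 | Redhat | MPICH2:Fast | Process | 8 | 39.3 |
| Intel8c:gf12 | Redhat | Nemesis | Process | 8 | 4.21 |
| Intel8c:gf20 (8 core, 2.33 GHz) | Fedora | MPJE | Process | 8 | 157 |
| Intel8c:gf20 | Fedora | mpiJava | Process | 8 | 111 |
| Intel8c:gf20 | Fedora | MPICH2 | Process | 8 | 64.2 |
| Intel8b (8 core, 2.66 GHz) | Vista | MPJE | Process | 8 | 170 |
| Intel8b | Fedora | MPJE | Process | 8 | 142 |
| Intel8b | Fedora | mpiJava | Process | 8 | 100 |
| Intel8b | Vista | CCR (C#) | Thread | 8 | 20.2 |
| AMD4 (4 core, 2.19 GHz) | XP | MPJE | Process | 4 | 185 |
| AMD4 | Redhat | MPJE | Process | 4 | 152 |
| AMD4 | Redhat | mpiJava | Process | 4 | 99.4 |
| AMD4 | Redhat | MPICH2 | Process | 4 | 39.3 |
| AMD4 | XP | CCR | Thread | 4 | 16.3 |
| Intel (4 core) | XP | CCR | Thread | 4 | 25.8 |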


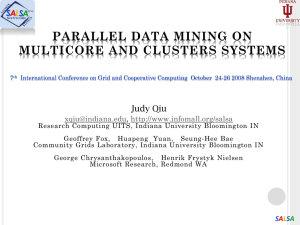

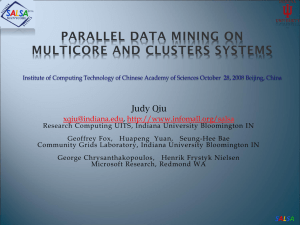

Parallel Generative Topographic Mapping GTM

Reduce dimensionality preserving topology and perhaps distances; here project to 2D

GTM Projection of PubChem: 10,926,94 compounds in 166 dimension binary property space takes 4 days on 8 cores. A 64X64 mesh of GTM clusters interpolates PubChem. Could usefully use 1024 cores! David Wild will use for GIS style 2D browsing interface to chemistry

[Figure panels: PCA, GTM] Linear PCA v. nonlinear GTM on 6 Gaussians in 3D (PCA is Principal Component Analysis)

[Figure] GTM Projection of 2 clusters of 335 compounds in 155 dimensions

Minimize Stress

$$\sigma(X) = \sum_{i<j \le n} \mathrm{weight}(i,j)\,\big(\delta_{ij} - d(X_i, X_j)\big)^2$$

$\delta_{ij}$ are input dissimilarities and $d(X_i, X_j)$ is the Euclidean distance squared in embedding space (2D here)

SMACOF (Scaling by MAjorizing a COmplicated Function) is a clever steepest descent algorithm

Use GTM to initialize SMACOF

[Figure panels: SMACOF, GTM]

Use deterministically annealed version of GTM

Do not use GTM at all but rather find clusters by DAC algorithm and then use MDS iteratively with one point (cluster center) added each iteration

and/or use Newton’s method for MDS as there are only thousands of parameters (# clusters times dimension l)

and/or use deterministically annealed MDS (DAMDS)

$$\sigma(X,T) = \sum_{i<j \le n} \mathrm{weight}(i,j)\,\big(d(X_i, X_j) + 2T(l+2) - \delta_{ij}\big)^2$$

where T is the annealing temperature and l the dimension of the embedding space (2 in example)

$d(X_i, X_j) = (X_i - X_j)^2$ in l dimensional latent space

$\delta_{ij}$ is dissimilarity in original space

$$\sigma(X,T) = \sum_{i<j \le n} \mathrm{weight}(i,j)\,\big(d(X_i, X_j) + 2T(l+2) - \delta_{ij}\big)^2$$

Note that at $T = \infty$, $2T(l+2) - \delta_{ij}$ is positive and all points $X_i$ are at the origin. As T decreases, the terms with large $\delta_{ij}$ become negative and the associated points gradually expand from the origin

“Physical Optimization”: Think of points $X_i$ as “particles” moving under the influence of forces with other points. Forces are in the direction of the vector between particles
• Attractive: $d(X_i, X_j) > \delta_{ij} - 2T(l+2)$
• Repulsive: $d(X_i, X_j) < \delta_{ij} - 2T(l+2)$

Can use an iterative method based on this particle dynamics analogy, and this makes (deterministic) annealing quite natural
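The annealed stress and the force rule can be written down directly. The sketch below is an illustration under assumptions, not the SALSA code: it evaluates the DAMDS stress formula as stated on the slide, with $d$ the squared Euclidean distance and unit weights unless a weight matrix is supplied, and it classifies one pair as attractive or repulsive. The function names are hypothetical.

```python
def squared_distance(a, b):
    """d(Xi, Xj): squared Euclidean distance between two embedded points."""
    return sum((ai - bi) ** 2 for ai, bi in zip(a, b))

def damds_stress(points, delta, temperature, weight=None):
    """Sum over i < j of weight(i,j) * (d(Xi,Xj) + 2T(l+2) - delta_ij)^2."""
    dim = len(points[0])                    # l, the embedding dimension
    shift = 2.0 * temperature * (dim + 2)   # the 2T(l+2) term
    total = 0.0
    for i in range(len(points)):
        for j in range(i + 1, len(points)):
            w = 1.0 if weight is None else weight[i][j]
            term = squared_distance(points[i], points[j]) + shift - delta[i][j]
            total += w * term ** 2
    return total

def force_kind(xi, xj, delta_ij, temperature):
    """Attractive if d > delta_ij - 2T(l+2), repulsive if d is below that threshold."""
    threshold = delta_ij - 2.0 * temperature * (len(xi) + 2)
    d = squared_distance(xi, xj)
    if d > threshold:
        return "attractive"
    if d < threshold:
        return "repulsive"
    return "balanced"

points = [(0.0, 0.0), (3.0, 4.0)]
delta = [[0.0, 25.0], [25.0, 0.0]]
stress_at_zero = damds_stress(points, delta, temperature=0.0)   # 0.0: d matches delta
stress_at_one = damds_stress(points, delta, temperature=1.0)    # (25 + 8 - 25)^2 = 64.0
```

At T = 1 the same pair is classified as attractive, because the threshold drops from 25 to 17: raising the temperature pulls points toward the origin.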

[Figure: “Main Thread” with memory M and subsidiary threads t = 0 to 7, each with memory m_t; MPI/CCR/DSS messages arrive from other nodes]

Use data decomposition as in classic distributed memory but use shared memory for read variables. Each thread uses a “local” array for written variables to get good cache performance

Multicore and cluster use the same parallel algorithms but different runtime implementations; the algorithms are
• Accumulate matrix and vector elements in each process/thread
• At iteration barrier, combine contributions (MPI_Reduce)
• Linear Algebra (multiplication, equation solving, SVD)
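The accumulate-then-combine pattern can be illustrated with Python threads. This is a structural sketch only: it assumes a hard-assignment (K-means style) center update on 1-D points as the work per thread, and Python threads will not show the speedups reported here because of the interpreter lock. The function names are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor

def partial_sums(chunk, centers):
    """Accumulate per-cluster sums and counts for one thread's block of points."""
    sums = [0.0] * len(centers)    # thread-local arrays for written variables
    counts = [0] * len(centers)
    for x in chunk:
        nearest = min(range(len(centers)), key=lambda j: (x - centers[j]) ** 2)
        sums[nearest] += x
        counts[nearest] += 1
    return sums, counts

def parallel_center_update(points, centers, n_threads=4):
    """One iteration: decompose the data, accumulate per thread, then reduce."""
    # Data decomposition; `centers` is shared and only read by the threads.
    chunks = [points[i::n_threads] for i in range(n_threads)]
    with ThreadPoolExecutor(max_workers=n_threads) as pool:
        partials = list(pool.map(lambda c: partial_sums(c, centers), chunks))
    # Combine contributions at the iteration barrier (the role MPI_Reduce plays).
    k = len(centers)
    total_sums = [sum(p[0][j] for p in partials) for j in range(k)]
    total_counts = [sum(p[1][j] for p in partials) for j in range(k)]
    return [s / c if c else y for s, c, y in zip(total_sums, total_counts, centers)]

new_centers = parallel_center_update([0.0, 1.0, 2.0, 10.0, 11.0, 12.0], [0.0, 10.0])
```

Because each thread writes only to its own arrays and the shared centers are read-only, no locking is needed until the single reduction step.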


All parallel algorithms packaged as services and not traditional libraries

MPI-style micro-parallelism uses low latency CCR threads or MPI processes

Latency: CCR microseconds; local services tens of microseconds; distributed services milliseconds

Services can be used where loose coupling is natural
• Input data
• Algorithms
  • PCA
  • DAC, GTM, GM, DAGM, DAGTM, both for complete algorithm and for each iteration
  • Linear Algebra used inside or outside above
  • Metric embedding MDS, Bourgain, Quadratic Programming ….
  • HMM, SVM ….
• User interface: GIS (Web map Service) or equivalent


This class of data mining does/will parallelize well on current/future multicore nodes

Several engineering issues for use in large applications
• How to take CCR in multicore node to cluster (MPI or cross-cluster CCR?)
• Use Google MapReduce on Cloud/Grid
• Need high performance linear algebra for C# (PLASMA from UTenn)
  • Access linear algebra services in a different language?
  • Need equivalent of Intel C Math Libraries for C# (vector arithmetic, level 1 BLAS)
• Service model to integrate modules

Although work used C#, similar results in C, C++, Java, Fortran

Future work is more applications; any suggestions?

Refine current algorithms such as DAGTM, SMACOF, DAMDS

New parallel algorithms
• Clustering with pairwise distances but no vector spaces. Deterministic annealing here is well understood but even less used
• Bourgain Random Projection for metric embedding
• Support use of Newton’s Method (Marquardt’s method) as EM alternative
• Later HMM and SVM
