Improved State Estimation in Multiagent Settings with Continuous or Large

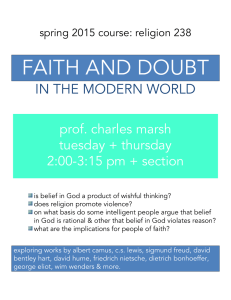

advertisement

Improved State Estimation in Multiagent Settings with Continuous or Large Discrete State Spaces

Prashant Doshi

Department of Computer Science

University of Georgia

Athens, GA 30602

pdoshi@cs.uga.edu

Abstract

State estimation in multiagent settings involves updating an agent's belief over the physical states and the space of other agents' models. Performance of the previous approach to state estimation, the interactive particle filter, degrades with large state spaces because it distributes the particles over both the physical state space and the other agents' models. We present an improved method for estimating the state in a class of multiagent settings that are characterized in part by continuous or large discrete state spaces. We factor out the models of the other agents and update the agent's belief over these models as exactly as possible. Simultaneously, we sample particles from the distribution over the large physical state space and project the particles in time. This approach is equivalent to Rao-Blackwellising the interactive particle filter. We focus our analysis on the special class of problems where the nested beliefs are represented using Gaussians, the problem dynamics using conditional linear Gaussians (CLGs) and the observation functions using softmax or CLGs. These distributions adequately represent many realistic applications.

Introduction

In order to act rationally, an agent must track the state of the environment over time. When an agent is acting alone in the environment, it must track the evolution of the physical state; this is usually accomplished using the Bayes filter (Doucet, Freitas, & Gordon 2001). The filter, in practice, manifests as the Kalman filter when the dynamics are linear Gaussian and the agent's prior belief is Gaussian, or as the particle filter (PF) (Doucet, Freitas, & Gordon 2001) when no assumptions about the dynamics or prior beliefs are made. In the presence of other agents who themselves act, observe, and update their beliefs, the agent must track not only the physical state but also the possible states of others. This is because other agents' actions may affect the evolution of the physical state and the agent's payoffs. One approach is to generalize the Bayes filter to multiagent settings as shown in (Doshi & Gmytrasiewicz 2005), in which an agent tracks the evolution of the interactive state over time. An interactive state consists of the physical state and models of the other agents that include their beliefs, capabilities, and preferences. In practice, the estimation may be carried out using the interactive PF (I-PF) that generalizes the PF to the multiagent setting (Doshi & Gmytrasiewicz 2005).

Previous applications of the I-PF were confined to simple problems with a very small number of discrete physical states. This is because a large number of particles must be used, at the expense of computational efficiency, to achieve good approximation quality. While this limitation also affects the traditional PF, it is especially acute for the I-PF because the interactive state space from which the particles are sampled tends to get large as it includes the nested beliefs of the other agents.

The above limitation of the I-PF becomes more severe in the context of a continuous or large state space, as exhibited by many real-world applications. In this paper, we present an improved method for approximately carrying out state estimation in multiagent settings characterized in part by continuous or large discrete physical state spaces. We factor out some dimensions of the interactive state space and update the belief over these dimensions as exactly as possible, while sampling and propagating the remaining ones. This procedure is equivalent to Rao-Blackwellising (Casella & Robert 1996) the I-PF. Specifically, we factor out the models of other agents and update the agent's belief over these models. We consider the case where the belief densities are represented using Gaussians. As the states may be continuous, we focus on models which represent the transition dynamics using conditional linear Gaussians (CLGs) or as deterministic, and the observation functions using softmax or CLGs. In the update, those distributions that can be handled exactly are handled exactly, while tight approximations are used for the remaining ones. Simultaneously, we sample particles from the distribution over the large physical state space and project the particles in time. When compared with the performance of the I-PF on continuous settings, we show that our approach achieves better approximation quality while consuming fewer computational resources as measured by the number of particles and runtime.

Our choice of the distributions, while somewhat restrictive, is motivated by several reasons: these popular functions are well-behaved statistically and allow closed-form posteriors, there exist efficient methods for fitting the distributions' parameters to data (Jordan 1995), and, as we illustrate using examples, they adequately model several applications such as target tracking and fault diagnosis.

Copyright © 2007, Association for the Advancement of Artificial Intelligence (www.aaai.org). All rights reserved.

Background: Multiagent State Estimation

We consider an agent i that is interacting with one other agent j. The arguments easily generalize to a setting with more than two agents. Consider a space of physical states S. We will call agent j's belief over S a 0th level belief, $b_{j,0}$. Additionally, j can be modeled by specifying its set of actions $A_j$, set of observations $\Omega_j$, transition and observation functions, $T_j$ and $O_j$, a reward function $R_j$, and an optimality criterion $OC_j$. j's 0th level model is then $\theta_{j,0} = \langle b_{j,0}, A_j, \Omega_j, T_j, O_j, R_j, OC_j \rangle$. The 0th level models are therefore POMDPs (the other agent's actions are folded in as noise into T, O, and R). Agent i's 1st level beliefs are defined over the physical states of the world and the 0th level models of agent j. This enriched state space has been called a set of interactive states. Thus, let $IS_{i,1}$ denote a set of interactive states defined as $IS_{i,1} = S \times \Theta_{j,0}$, and $IS_{i,0} = S$, where $\Theta_{j,0}$ is the set of 0th level models of agent j. Let us rewrite $\theta_{j,0}$ as $\theta_{j,0} = \langle b_{j,0}, \hat{\theta}_j \rangle$, where $\hat{\theta}_j \in \hat{\Theta}_j$ is agent j's frame. To keep matters simple, we limit our focus to i's singly nested beliefs, though the definition of the interactive state space generalizes to any level.

State estimation in multiagent settings is complex because of two reasons: First, the prediction of how the physical state changes must be made based on the predicted actions of the other agent. The probabilities of the other's actions are based on its models. Second, changes in the other's models have to be included in the update. Specifically, the update of the other agent's beliefs due to its new observation must be included. The updated belief of i, $b_{i,1}^t(is^t) = Pr(is^t | a_i^{t-1}, o_i^t, b_{i,1}^{t-1})$, is:

$$
\begin{aligned}
b_{i,1}^t(is^t) = \alpha \int_{is^{t-1}:\, \hat{\theta}_j^{t-1} = \hat{\theta}_j^t} & b_{i,1}^{t-1}(is^{t-1}) \sum_{a_j^{t-1}} Pr(a_j^{t-1} | \theta_{j,0}^{t-1}) \, O_i(s^t, a_i^{t-1}, a_j^{t-1}, o_i^t) \, T_i(s^{t-1}, a_i^{t-1}, a_j^{t-1}, s^t) \\
& \times \sum_{o_j^t} \delta_D\big(SE_{\hat{\theta}_j^t}(b_{j,0}^{t-1}, a_j^{t-1}, o_j^t) - b_{j,0}^t\big) \, O_j(s^t, a_j^{t-1}, a_i^{t-1}, o_j^t) \; d\,is^{t-1}
\end{aligned}
\qquad (1)
$$

where $\alpha$ is the normalization constant, $\delta_D$ is the Dirac-delta function, $Pr(a_j^{t-1} | \theta_{j,0}^{t-1})$ is the probability that $a_j^{t-1}$ is Bayes rational for the agent described by $\theta_{j,0}^{t-1}$, and $SE_{\hat{\theta}_j^t}$ stands for the update of the complete belief using the transition and observation functions in the frame $\hat{\theta}_j^t$. In the multiagent state estimation above, i's belief update will invoke j's belief update (via the term $SE_{\hat{\theta}_j^t}(b_{j,0}^{t-1}, a_j^{t-1}, o_j^t)$). This recursion in belief nesting will bottom out at the 0th level, where the belief update of the agent reduces to a Bayes filter.

Factoring State Estimation

We decompose the state estimation (Eq. 1) into two factors, one of which represents the update of the belief over the physical states, and the other is the update of the belief over the other agent's models conditioned on a physical state:

$$
\begin{aligned}
b_{i,1}^t(is^t) &= Pr(is^t | a_i^{t-1}, o_i^t, b_{i,1}^{t-1}) \\
&= \int_{is^{t-1}:\, \hat{\theta}_j^{t-1} = \hat{\theta}_j^t} Pr(is^t | a_i^{t-1}, o_i^t, is^{t-1}) \, b_{i,1}^{t-1}(is^{t-1}) \; d\,is^{t-1} \\
&= \int_{is^{t-1}} \sum_{a_j^{t-1}} Pr(is^t | a_i^{t-1}, a_j^{t-1}, o_i^t, is^{t-1}) \, Pr(a_j^{t-1} | \theta_{j,0}^{t-1}) \, b_{i,1}^{t-1}(is^{t-1}) \; d\,is^{t-1}
\end{aligned}
$$

Because changes in the physical state and agent i's observations depend also on the actions of j, we sum over all of j's actions in the second step. As $is^t = \langle s^t, \theta_{j,0}^t \rangle$, we write:

$$
\begin{aligned}
b_{i,1}^t(is^t) = \int_{is^{t-1}} \sum_{a_j^{t-1}} & Pr(s^t | a_i^{t-1}, a_j^{t-1}, o_i^t, s^{t-1}) \, Pr(\theta_{j,0}^t | s^t, a_i^{t-1}, a_j^{t-1}, o_i^t, \theta_{j,0}^{t-1}) \\
& \times Pr(a_j^{t-1} | \theta_{j,0}^{t-1}) \, b_{i,1}^{t-1}(is^{t-1}) \; d\,is^{t-1}
\end{aligned}
\qquad (2)
$$

We may expand the first term of the above equation as,

$$
Pr(s^t | a_i^{t-1}, a_j^{t-1}, o_i^t, s^{t-1}) = \alpha \, O_i(s^t, a_i^{t-1}, a_j^{t-1}, o_i^t) \times T_i(s^{t-1}, a_i^{t-1}, a_j^{t-1}, s^t)
$$

where $\alpha$ is the normalization constant. The other term in Eq. 2, $Pr(\theta_{j,0}^t | s^t, a_i^{t-1}, a_j^{t-1}, o_i^t, \theta_{j,0}^{t-1})$, may be rewritten:

$$
Pr(\theta_{j,0}^t | s^t, a_i^{t-1}, a_j^{t-1}, o_i^t, \theta_{j,0}^{t-1}) = \sum_{o_j^t} O_j(s^t, a_j^{t-1}, a_i^{t-1}, o_j^t) \times \delta_D\big(SE_{\hat{\theta}_j^t}(b_{j,0}^{t-1}, a_j^{t-1}, o_j^t) - b_{j,0}^t\big)
$$

Because agent j's model is private and cannot be observed by i directly, i's observation $o_i^t$ plays no role above.

Representing Prior Beliefs

Let $X = \{X_1, X_2, \ldots, X_k\}$ be the set of k continuous-valued variables, where $k \in \mathbb{N}$, and Y be the set of discrete-valued elements of the (hybrid) physical state space, S. Let x be an instantiation of X and analogously for y. Together, X and Y completely describe the physical state space.

For illustration, we use the continuous multiagent tiger problem, a modified version of the persistent multiagent tiger problem discussed in (Rathnas, Doshi, & Gmytrasiewicz 2006). In our continuous version, the tiger is located on a continuous axis, $-1 \le x \le 1$. The gold is always located symmetrically (about x = 0) from the tiger's location. Hence, knowing the tiger's location allows one to exactly infer the location of the gold, analogous to the classical version. We assume a discrete action space that involves each agent calling out the location of the gold. Here, to keep matters simple, this could be left (OL) or right (OR). Left, for example, could signify that the gold is located at some point, $x \le 0$. Each agent may also listen (L), hearing a growl from the left (GL) or right (GR) that informs the agent, noisily, of the tiger's location. The agent also overhears, noisily, the location, if called out by the other. Once a location has been called out, the tiger (and the gold) persist at their original location in the next time step with a high probability.

We represent agent j's level 0 belief, $b_{j,0}^{t-1} \in \Delta(S)$, using a factorization of the physical state space: $b_{j,0}^{t-1}(xy) = p_{j,0}(y) \, p_{j,0}(x|y)$. While $p_{j,0}(y)$ is a discrete probability distribution over Y, $p_{j,0}(x|y)$ is a collection of multivariate Gaussian densities, each of which is defined over the variables in X. Each Gaussian in the collection may have a different set of parameters specific to the instantiation of Y:

$$
p_{j,0}(x|y) \overset{def}{=} N(\mu_{j,0}^y; \Sigma_{j,0}^y)(x) \qquad (3)
$$

where $\mu_{j,0}^y$ is the k-element vector of means, $\Sigma_{j,0}^y$ is the $k \times k$ covariance matrix for the Gaussian. Let $\mu_{j,0}$ and $\Sigma_{j,0}$ be the set of means and covariance matrices, respectively, for every instantiation of y. We show an example level 0

belief of j in the tiger problem in Fig. 1(a). Note that the physical state in the problem consists of a single continuous variable, x, denoting the tiger's location.

[Figure 1 plots. Panel (a): $Pr_j(x)$ against $x$. Panel (b): $Pr_{i,1}(b_{j,0} | x = 0)$ over $\mu_{j,0}^{t-1}$ and $\Sigma_{j,0}^{t-1}$.]

Figure 1: (a) A level 0 belief of j according to which j believes that the tiger is likely to be at x=0.25, (b) Given that the tiger is at location x=0, i believes that j believes that the tiger is likely at x=0 (j's beliefs are likely to have a mean of 0 and small variance).

Agent i's level 1 belief, $b_{i,1}^{t-1} \in \Delta(S \times \Theta_{j,0}^{t-1})$, is a distribution over the level 0 beliefs of agent j for each physical state and frame of the other agent. In order to represent this belief, we factor it as $b_{i,1}^{t-1}(s^{t-1}, \theta_{j,0}^{t-1}) = b_{i,1}^{t-1}(s^{t-1}) \, b_{i,1}^{t-1}(\theta_{j,0}^{t-1} | s^{t-1})$. The term $b_{i,1}^{t-1}(s^{t-1})$ is a distribution over the physical state space analogous to j's level 0 belief, and may be represented similarly. The second factor, $b_{i,1}^{t-1}(\theta_{j,0}^{t-1} | s^{t-1})$, is a distribution over the level 0 beliefs of j conditioned on the physical state (assuming j's frame is known). Because j's belief is represented using a collection of Gaussians, as shown in Eq. 3, that are described by their means and covariance matrices, i's level 1 beliefs are densities over these parameters. We represent i's level 1 belief conditioned on the physical state using a conditional linear Gaussian (CLG) density:

$$
b_{i,1}^{t-1}(\theta_{j,0}^{t-1} | s^{t-1}) \overset{def}{=} N\big([w_{r,0}^y + w_r^y \cdot x]_{r=1}^{k(k+1)|y|}; \Sigma^y\big)(\mu_{j,0}, \Sigma_{j,0}) \qquad (4)
$$

The CLG is a density over the means and covariances that parameterize j's level 0 belief. The CLG's own mean is a linear function of the continuous variables in X and possibly distinct for each value of y. Recall that $\mu_{j,0}$ and $\Sigma_{j,0}$ are sets of as many k-element means and $k \times k$ covariances, respectively, as the number of instantiations, |y|. An example CLG for the tiger problem is shown in Fig. 1(b).

Approximate State Estimation

The I-PF propagates a sampled representation of agent i's nested beliefs over time. Because the particles are sampled from the entire interactive space, many of them are needed to generate good approximations of the belief. However, this makes the I-PF computationally intensive and the problem is exacerbated when we consider large physical state spaces that characterize realistic application settings.

Rao-Blackwellised I-PF (RB-IPF)

We offer a way to alleviate this difficulty. We may simulate the belief update over the physical state space using the traditional PF while updating agent i's belief over j's beliefs conditioned on the physical state, as exactly as possible. We sample N particles from agent i's belief over the physical state space, $b_{i,1}^{t-1}(s^{t-1})$, resulting in a set of particles $\{s^{(1)}, s^{(2)}, \ldots, s^{(N)}\}$ that together approximate the belief over the large state space. The belief over the complete interactive state space is then given by the following set of particles, $\{(s^{(n)}, b_{i,1}^{t-1}(\theta_{j,0}^{t-1} | s^{(n)}))\}_{n=1}^N$:

$$
b_{i,1}^{t-1}(s^{t-1}, \theta_{j,0}^{t-1}) \approx \frac{1}{N} \sum_{n=1}^N \delta_D(s^{t-1} - s^{(n)}) \, b_{i,1}^{t-1}(\theta_{j,0}^{t-1} | s^{(n)}) \qquad (5)
$$

We substitute Eq. 5 into Eq. 2 and expand $is^{t-1} = \langle s^{t-1}, \theta_{j,0}^{t-1} \rangle$:

$$
\begin{aligned}
b_{i,1}^t(is^t) &\approx \int_{s^{t-1}} \int_{\theta_{j,0}^{t-1}} \sum_{a_j^{t-1}} Pr(s^t | a_i^{t-1}, a_j^{t-1}, o_i^t, s^{t-1}) \, Pr(\theta_{j,0}^t | s^t, a_i^{t-1}, a_j^{t-1}, o_i^t, \theta_{j,0}^{t-1}) \, Pr(a_j^{t-1} | \theta_{j,0}^{t-1}) \\
&\qquad \times \frac{1}{N} \sum_{n=1}^N \delta_D(s^{t-1} - s^{(n)}) \, b_{i,1}^{t-1}(\theta_{j,0}^{t-1} | s^{(n)}) \; d\theta_{j,0}^{t-1} \, ds^{t-1} \\
&= \frac{1}{N} \sum_{a_j^{t-1}} \sum_{n=1}^N \int_{s^{t-1}} \delta_D(s^{t-1} - s^{(n)}) \, Pr(s^t | a_i^{t-1}, a_j^{t-1}, o_i^t, s^{t-1}) \; ds^{t-1} \\
&\qquad \times \int_{\theta_{j,0}^{t-1}} Pr(\theta_{j,0}^t | s^t, a_i^{t-1}, a_j^{t-1}, o_i^t, \theta_{j,0}^{t-1}) \, Pr(a_j^{t-1} | \theta_{j,0}^{t-1}) \, b_{i,1}^{t-1}(\theta_{j,0}^{t-1} | s^{(n)}) \; d\theta_{j,0}^{t-1}
\end{aligned}
$$

Because of the delta function, the above becomes:

$$
b_{i,1}^t(is^t) \approx \frac{1}{N} \sum_{a_j^{t-1}} \sum_{n=1}^N Pr(s^t | a_i^{t-1}, a_j^{t-1}, o_i^t, s^{(n)}) \int_{\theta_{j,0}^{t-1}} Pr(\theta_{j,0}^t | s^t, a_i^{t-1}, a_j^{t-1}, o_i^t, \theta_{j,0}^{t-1}) \, Pr(a_j^{t-1} | \theta_{j,0}^{t-1}) \, b_{i,1}^{t-1}(\theta_{j,0}^{t-1} | s^{(n)}) \; d\theta_{j,0}^{t-1}
$$

where:

$$
Pr(s^t | a_i^{t-1}, a_j^{t-1}, o_i^t, s^{(n)}) = \alpha \, O_i(s^t, a_i^{t-1}, a_j^{t-1}, o_i^t) \times T_i(s^{(n)}, a_i^{t-1}, a_j^{t-1}, s^t)
$$

$$
Pr(\theta_{j,0}^t | s^t, a_i^{t-1}, a_j^{t-1}, o_i^t, \theta_{j,0}^{t-1}) = \sum_{o_j^t} O_j(s^t, a_j^{t-1}, a_i^{t-1}, o_j^t) \times \delta_D\big(SE_{\hat{\theta}_j^t}(b_{j,0}^{t-1}, a_j^{t-1}, o_j^t) - b_{j,0}^t\big)
$$

Thus, i's state estimation takes the approximate form:

$$
b_{i,1}^t(is^t) \approx \frac{\alpha}{N} \sum_{a_j^{t-1}} \sum_{n=1}^N \rho_{a_j^{t-1}}^{(n)}(s^t) \, \kappa_{a_j^{t-1}}^{(n)}(\theta_{j,0}^t | s^t)
$$

where:

$$
\rho_{a_j^{t-1}}^{(n)}(s^t) \overset{def}{=} O_i(s^t, a_i^{t-1}, a_j^{t-1}, o_i^t) \, T_i(s^{(n)}, a_i^{t-1}, a_j^{t-1}, s^t) \qquad (6)
$$

$$
\kappa_{a_j^{t-1}}^{(n)}(\theta_{j,0}^t | s^t) \overset{def}{=} \int_{\theta_{j,0}^{t-1}} \sum_{o_j^t} O_j(s^t, a_j^{t-1}, a_i^{t-1}, o_j^t) \, \delta_D\big(SE_{\hat{\theta}_j^t}(b_{j,0}^{t-1}, a_j^{t-1}, o_j^t) - b_{j,0}^t\big) \, Pr(a_j^{t-1} | \theta_{j,0}^{t-1}) \, b_{i,1}^{t-1}(\theta_{j,0}^{t-1} | s^{(n)}) \; d\theta_{j,0}^{t-1} \qquad (7)
$$

We estimate the physical state, denoted by $\rho_{a_j^{t-1}}^{(n)}$, by propagating the particles as in the PF; the estimation of the other agent's models given by $\kappa_{a_j^{t-1}}^{(n)}$ is performed as shown next.
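The Rao-Blackwellised particle set of Eq. 5 can be pictured as a list of sampled physical states, each carrying its own conditional Gaussian over the parameters of j's level 0 belief (Eq. 4). The following is a minimal sketch for the single-variable tiger problem, not the authors' implementation. The function name `sample_rb_particles` and the slope vector `w` are hypothetical. The prior over x (mean -0.25, variance 0.25), the CLG mean of [0, 0] at x = 0, and the covariance are the values quoted elsewhere in the paper for the tiger problem.

```python
import numpy as np

def sample_rb_particles(num_particles, mean_x, var_x, w0, w, cov_params, rng):
    """Sample physical states and attach to each a Gaussian over (mu_j, Sigma_j).

    Each particle stores the sampled state x and the parameters of the
    conditional Gaussian b_i(theta_j | x); the mean is linear in x (Eq. 4).
    """
    xs = rng.normal(mean_x, np.sqrt(var_x), size=num_particles)
    particles = []
    for x in xs:
        clg_mean = w0 + w * x  # CLG mean, linear in the sampled physical state
        particles.append({"x": float(x), "mean": clg_mean, "cov": cov_params})
    return particles

rng = np.random.default_rng(0)
w0 = np.array([0.0, 0.0])           # CLG mean at x = 0, as in the tiger example
w = np.array([1.0, 0.0])            # assumed slopes: j's mean tracks x (hypothetical)
cov_params = np.array([[0.1, 0.01],
                       [0.01, 0.1]])
particles = sample_rb_particles(100, -0.25, 0.25, w0, w, cov_params, rng)
```

Only the physical state is sampled here; the belief over j's model stays in parametric form, which is what distinguishes this representation from sampling the full interactive state.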

Belief Update over Models

For settings where the physical state space is continuous, we represent both agents' observation functions using Gaussian or softmax (also called logistic) densities (Jordan 1995). The observation function of j represented as a softmax is:

$$
O_j(s^t, a_j^{t-1}, a_i^{t-1}, o_j^t = o_q) \overset{def}{=} \frac{\exp(w_{q,0}^{y,a} + w_q^{y,a} \cdot x)}{\sum_{r=1}^{|\Omega_j|} \exp(w_{r,0}^{y,a} + w_r^{y,a} \cdot x)}
$$

where the $|\Omega_j|$ sets of $w_0^{y,a}$, $w^{y,a}$ are parameters of the softmax function instantiated for the discrete state variables, y, and the discrete actions of both the agents, a.

[Figure 2(a) plot: $O_j(x^t, L, *, \cdot)$ against $x^t$ for the observations GL and GR.]

In the continuous multiagent tiger problem, the observation function of agent j when it listens is shown in Fig. 2(a). Agent i's observation function when it listens is obtained by multiplying the softmax densities in Fig. 2(a) with 0.8 if it overhears correctly, and 0.1 otherwise. However, if i calls out any location, it does not hear the growl or the other agent, and the observation function is $O_i(x^t, OL, *, *) = 0.05$.
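The softmax observation function above can be sketched in a few lines. The weights in `TIGER_WEIGHTS` are an assumption, chosen so that the two-observation softmax reduces to the sigmoid $1/(1+e^{-x^t})$ that the paper uses for GR; the helper names `softmax_obs`, `obs_i_listen`, and `obs_i_call` are hypothetical.

```python
import math

def softmax_obs(x, weights):
    """Softmax observation probabilities for a scalar continuous state x.

    weights maps each observation to (intercept, slope).
    """
    scores = {obs: math.exp(w0 + w1 * x) for obs, (w0, w1) in weights.items()}
    total = sum(scores.values())
    return {obs: score / total for obs, score in scores.items()}

# Assumed weights: Pr(GR | x) = 1 / (1 + exp(-x)), Pr(GL | x) = 1 / (1 + exp(x)).
TIGER_WEIGHTS = {"GL": (0.0, 0.0), "GR": (0.0, 1.0)}

def obs_i_listen(x, growl, overheard_correctly):
    """Agent i listening: growl softmax scaled by 0.8 or 0.1 for overhearing."""
    factor = 0.8 if overheard_correctly else 0.1
    return factor * softmax_obs(x, TIGER_WEIGHTS)[growl]

def obs_i_call():
    """Agent i calling out a location: a constant observation likelihood."""
    return 0.05
```

For example, `softmax_obs(0.0, TIGER_WEIGHTS)` assigns equal probability to both growls, since a tiger at the center of the axis gives no directional cue under these weights.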

Figure 2: (a) Observation function of j when it listens is a collection of sigmoids. (b) i's transition density, $T_j(x^{t-1} = 0.25, OL, *, x^t)$ plotted against $x^t$, when either or both call out a location and the tiger was previously at $x^{t-1} = 0.25$.

We represent the transition functions as CLGs, which are adequate for many realistic applications and additionally allow closed-form posteriors:

$$
T_j(s^{t-1}, a_j^{t-1}, a_i^{t-1}, s^t) \overset{def}{=} N\big(w_0^{y,a,y'} + w^{y,a,y'} \cdot x; \Sigma^{y,a,y'}\big)(x') \times Pr(y' | x, y, a)
$$

where $x', y'$ constitute $s^t$, $x, y$ constitute $s^{t-1}$, $Pr(y' | x, y, a)$ is a discrete probability distribution (softmax if $y'$ depends on x), and the parameters, $w_0$, $w^{y,a,y'}$ may be distinct for each instantiation of Y, A, and Y'.

In the tiger problem, the transition function for the action OL, shown in Fig. 2(b), is $T_j(x^{t-1}, OL, *, x^t) = N(x^{t-1}; 0.25)(x^t)$. Because the mean of the Gaussian is at $x^{t-1}$, the tiger persists at its previous location with a high probability. The transition function is similar for the other action, OR. If both the agents listen, $T_j(x^{t-1}, L, L, x^t) = \delta_D(x^t - x^{t-1})$. Agent i's transition function is analogous.

For clarity, we subdivide the process of updating i's conditional beliefs over j's models (Eq. 7) into three steps. In the first and second steps, we show how j's level 0 belief is updated and i's nested belief over j's updated beliefs is obtained. The third step explains the effect of j's observation likelihood on the level 1 beliefs.

Step 1: Given that agent j's prior level 0 beliefs are Gaussian, and we may closely approximate the product of a Gaussian and softmax function by a Gaussian (Murphy 1999), j's level 0 belief update, $SE_{\hat{\theta}_j^t}(b_{j,0}^{t-1}, a_j^{t-1}, o_j^t)$, is carried out analytically. Specifically, we derive a Gaussian that forms a lower bound to the softmax density using variational methods (see (Murphy 1999) for the derivation; (Jordan et al. 1999) for an introduction to variational methods). We note that while the variational Gaussian may not be a tight approximation to the softmax, the product Gaussian is a tight approximation to the product of the softmax and the Gaussian (for example, see (Murphy 1999)). Therefore, analogous to the Kalman filter, j's posterior belief is also a Gaussian whose mean and covariance are functions of the mean and covariance of the prior belief, $\mu_{j,0}^t = f_{a_j,o_j}^{\mu}(\mu_{j,0}^{t-1}, \Sigma_{j,0}^{t-1})$ and $\Sigma_{j,0}^t = f_{a_j,o_j}^{\Sigma}(\Sigma_{j,0}^{t-1})$. We may rewrite the above as $\mu_{j,0}^{t-1} = g_{a_j,o_j}^{\mu}(\mu_{j,0}^t, \Sigma_{j,0}^t)$ and $\Sigma_{j,0}^{t-1} = g_{a_j,o_j}^{\Sigma}(\Sigma_{j,0}^t)$, where $g_{a_j,o_j}^{\mu}$ and $g_{a_j,o_j}^{\Sigma}$ may be seen as inverses under the assumption that $f_{a_j,o_j}^{\mu}$ and $f_{a_j,o_j}^{\Sigma}$ are 1:1 maps. This assumption holds when the transition and observation functions are of the form defined previously.

To illustrate, consider j's level 0 update when it listens (L) and hears a growl from the right (GR): $Pr(x^t | a_j = L, b_{j,0}^{t-1}) = \int_{-1}^{1} \delta_D(x^t - x^{t-1}) \, N(\mu_{j,0}^{t-1}, \Sigma_{j,0}^{t-1})(x^{t-1}) \, dx^{t-1} = N(\mu_{j,0}^{t-1}, \Sigma_{j,0}^{t-1})(x^t)$. When j hears GR, $Pr(x^t | o_j = GR, a_j = L, b_{j,0}^{t-1}) = \frac{1}{1 + e^{-x^t}} \times N(\mu_{j,0}^{t-1}, \Sigma_{j,0}^{t-1})(x^t)$. Using the variational method, the product may be closely approximated from below by a Gaussian, given in row 1 of Table 1. Here, $\lambda \in \mathbb{R}$ is the variational parameter. For any value of $\lambda$ the Gaussian remains a lower bound; the approximation is exact when $\lambda = x^t$. Because $x^t$ is hidden, one way to calculate a good $\lambda$ is to use an iterative EM scheme given an initial guess of $\lambda$ (Murphy 1999). The updated means and variances when j calls out a location are exact (row 2, Table 1). Given the functions in Table 1, the inverses, say, $g_{L,GL}^{\mu}(\mu_{j,0}^t, \Sigma_{j,0}^t)$ and $g_{L,GL}^{\Sigma}(\Sigma_{j,0}^t)$, may be obtained.

Row 1 (j listens and hears GR):

$$
\mu_{j,0}^t = f_{L,GR}^{\mu}(\mu_{j,0}^{t-1}, \Sigma_{j,0}^{t-1}) = \frac{2 (0.5 \Sigma_{j,0}^{t-1} + \mu_{j,0}^{t-1}) \, \lambda \, (e^{-2\lambda} - 1)}{e^{-2\lambda} (2\lambda - \Sigma_{j,0}^{t-1}) + 2 e^{-\lambda} \Sigma_{j,0}^{t-1} - 2\lambda - \Sigma_{j,0}^{t-1}}
$$

$$
\Sigma_{j,0}^t = f_{L,GR}^{\Sigma}(\Sigma_{j,0}^{t-1}) = \frac{2 \Sigma_{j,0}^{t-1} \, \lambda \, (1 + e^{-\lambda})}{-\Sigma_{j,0}^{t-1} (e^{-\lambda} - 1) + 2\lambda (e^{-\lambda} + 1)}
$$

Row 2 (j calls out OL or OR, any observation):

$$
\mu_{j,0}^t = f_{OL/OR,*}^{\mu}(\mu_{j,0}^{t-1}, \Sigma_{j,0}^{t-1}) = \mu_{j,0}^{t-1}, \qquad \Sigma_{j,0}^t = f_{OL/OR,*}^{\Sigma}(\Sigma_{j,0}^{t-1}) = \Sigma_{j,0}^{t-1} + 0.25
$$

Table 1: The means and variances of j's updated level 0 belief for the multiagent tiger problem. $\lambda$ is the variational parameter.

Step 2: Turning our attention to the integral in Eq. 7, the term $Pr(a_j^{t-1} | \theta_{j,0}^{t-1})$ is the probability that action $a_j^{t-1}$ is Bayes rational for the model $\theta_{j,0}^{t-1}$, i.e. $a_j^{t-1} \in OPT(\theta_{j,0}^{t-1})$, where OPT is the set of actions that are Bayes rational given the model. Let $R_{a_j^{t-1}} \subseteq \Theta_{j,0}^{t-1}$ be the contiguous region of j's models for which the action $a_j^{t-1}$ is Bayes rational (Rathnas, Doshi, & Gmytrasiewicz 2006), i.e. define $R_{a_j^{t-1}}$ such that $\forall \, \theta_{j,0}^{t-1} \in R_{a_j^{t-1}}$, $a_j^{t-1} \in OPT(\theta_{j,0}^{t-1})$. Because $Pr(a_j^{t-1} | \theta_{j,0}^{t-1})$ may be rewritten as $\frac{1}{|OPT(\theta_{j,0}^{t-1})|} \times \delta_D(OPT(\theta_{j,0}^{t-1}) - a_j^{t-1})$, the integral becomes

$$
\int_{R_{a_j^{t-1}}} \frac{1}{|OPT(\theta_{j,0}^{t-1})|} \sum_{o_j^t} O_j(s^t, a_j^{t-1}, a_i^{t-1}, o_j^t) \, \delta_D\big(SE_{\hat{\theta}_j^t}(b_{j,0}^{t-1}, a_j^{t-1}, o_j^t) - b_{j,0}^t\big) \, b_{i,1}^{t-1}(\theta_{j,0}^{t-1} | s^{(n)}) \; d\theta_{j,0}^{t-1}.
$$

Substituting i's level 1 belief (Eq. 4) into the above we get:

$$
\begin{aligned}
& \int_{R_{a_j^{t-1}}} \frac{1}{|OPT(\theta_{j,0}^{t-1})|} \sum_{o_j^t} O_j(s^t, a_j^{t-1}, a_i^{t-1}, o_j^t) \, \delta_D\big(SE_{\hat{\theta}_j^t}(b_{j,0}^{t-1}, a_j^{t-1}, o_j^t) - b_{j,0}^t\big) \\
& \qquad \times N\big([w_{r,0}^{y^{(n)}} + w_r^{y^{(n)}} \cdot x^{(n)}]; \Sigma^{y^{(n)}}\big)(\mu_{j,0}^{t-1}, \Sigma_{j,0}^{t-1}) \; d\theta_{j,0}^{t-1} \\
& = \sum_{o_j^t} O_j(s^t, a_j^{t-1}, a_i^{t-1}, o_j^t) \, N\big([w_{r,0}^{y^{(n)}} + w_r^{y^{(n)}} \cdot x^{(n)}]; \Sigma^{y^{(n)}}\big)\big(g_{a_j,o_j}^{\mu}(\mu_{j,0}^t, \Sigma_{j,0}^t), \, g_{a_j,o_j}^{\Sigma}(\Sigma_{j,0}^t)\big)
\end{aligned}
$$

if $\langle g_{a_j,o_j}^{\mu}(\mu_{j,0}^t, \Sigma_{j,0}^t), \, g_{a_j,o_j}^{\Sigma}(\Sigma_{j,0}^t) \rangle$ belongs in $R_{a_j^{t-1}}$, 0 otherwise. Note that $\mu_{j,0}^t, \Sigma_{j,0}^t$ parameterize $b_{j,0}^t$ and $\frac{1}{|OPT(\cdot)|}$ is absorbed into the density. We focus on the second term of the previous expression next. Intuitively, this density is i's updated belief that results when the transformations $f_{a_j,o_j}^{\mu}$ and $f_{a_j,o_j}^{\Sigma}$ are applied to the variate $\mu_{j,0}^{t-1}, \Sigma_{j,0}^{t-1}$ at which $a_j^{t-1}$ is Bayes rational. If the transformations are not linear, the resulting density over $\mu_{j,0}^t, \Sigma_{j,0}^t$ may not be Gaussian. In this case, we numerically estimate the Gaussian, $N(\mu_{a_j,o_j}^{(n)}; \Sigma_{a_j,o_j}^{(n)})(\mu_{j,0}^t, \Sigma_{j,0}^t)$, that best fits the density using, say, the maximum likelihood (ML) approach.
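Steps 1 and 2 can be sketched together for the tiger problem. The code below is one plausible reading of the procedure, not the authors' implementation. It draws sample points from i's prior over $(\mu_{j,0}^{t-1}, \Sigma_{j,0}^{t-1})$, keeps those in the region where listening is Bayes rational, pushes them through the row 1 functions of Table 1, and fits a Gaussian by maximum likelihood. The region test `listen_region`, the value of `lam`, and all function names are assumptions; the paper obtains the true region from j's policy and tunes $\lambda$ with an EM scheme.

```python
import numpy as np

def f_mu_listen_gr(mu, sigma, lam):
    """Row 1 of Table 1: updated mean after j listens and hears GR."""
    num = 2.0 * (0.5 * sigma + mu) * lam * (np.exp(-2.0 * lam) - 1.0)
    den = (np.exp(-2.0 * lam) * (2.0 * lam - sigma)
           + 2.0 * np.exp(-lam) * sigma - 2.0 * lam - sigma)
    return num / den

def f_sigma_listen_gr(sigma, lam):
    """Row 1 of Table 1: updated variance after j listens and hears GR."""
    num = 2.0 * sigma * lam * (1.0 + np.exp(-lam))
    den = -sigma * (np.exp(-lam) - 1.0) + 2.0 * lam * (np.exp(-lam) + 1.0)
    return num / den

def fit_predicted_belief(prior_mean, prior_cov, in_region, lam, num_points, rng):
    """Transform prior samples lying in the rational region and ML-fit a Gaussian."""
    samples = rng.multivariate_normal(prior_mean, prior_cov, size=num_points)
    mu_prev, sigma_prev = samples[:, 0], samples[:, 1]
    keep = in_region(mu_prev, sigma_prev)
    mu_new = f_mu_listen_gr(mu_prev[keep], sigma_prev[keep], lam)
    sigma_new = f_sigma_listen_gr(sigma_prev[keep], lam)
    transformed = np.column_stack([mu_new, sigma_new])
    # ML estimates of a Gaussian: sample mean and biased sample covariance.
    return transformed.mean(axis=0), np.cov(transformed, rowvar=False, bias=True)

def listen_region(mu, sigma):
    """Hypothetical stand-in for R_L: j listens when its variance is not tiny."""
    return sigma > 0.05

rng = np.random.default_rng(0)
prior_mean = np.array([0.0, 0.0])
prior_cov = np.array([[0.1, 0.01], [0.01, 0.1]])
fit_mean, fit_cov = fit_predicted_belief(prior_mean, prior_cov, listen_region,
                                         lam=1.0, num_points=200, rng=rng)
```

As a sanity check, the row 1 expressions agree algebraically with multiplying the prior by a Gaussian factor of precision $\tanh(\lambda/2)/(2\lambda)$, so the updated variance is always smaller than the prior variance.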

[Figure 3 plots. Panel (a): regions L, OL, and OR over $\mu_{j,0}^{t-1}$ and $\Sigma_{j,0}^{t-1}$. Panels (b) and (c): $Pr_{i,1}(b_{j,0}^t)$ over $\mu_{j,0}^t$ and $\Sigma_{j,0}^t$. Panel (d): $\kappa_L(b_{j,0}^t | x^t = 0)$ over $\mu_{j,0}^t$ and $\Sigma_{j,0}^t$.]

Figure 3: (a) Agent j's horizon 1 policy. As the variance increases, j is less certain about the tiger's location, hence chooses to listen. The superimposed polygon (with the black border) represents the updated mean and variance of j's belief after L, GR. (b) Agent i's belief over j's given that j listens and hears a GR. (c) A maximum likelihood Gaussian fit to the density in (b). (d) $\kappa_L^{(n)}(\theta_{j,0}^t | x^t = 0)$ as a mixture of two Gaussian densities.

Let agent i's level 1 belief, $b_{i,1}^{t-1}(\theta_{j,0}^{t-1} | x^{(n)})$, when $x^{(n)} = 0$ be the one shown in Fig. 1(b). For j's action, $a_j^{t-1} = L$, $R_L$ is a polygon (in red) shown in Fig. 3(a). We note that the update of j's beliefs on listening and hearing a GR shifts the (red) polygon right along the mean (because j heard a GR) and reduces the variance. Given j's action of L and an observation of GR, i's predicted belief over j's belief is

$$
N\left([0, 0]; \begin{bmatrix} 0.1 & 0.01 \\ 0.01 & 0.1 \end{bmatrix}\right)\big(g_{L,GR}^{\mu}(\mu_{j,0}^t, \Sigma_{j,0}^t), \, g_{L,GR}^{\Sigma}(\Sigma_{j,0}^t)\big)
$$

if the argument of the Gaussian is in $R_L$, otherwise it is 0. Here, $g_{L,GR}^{\mu}(\mu_{j,0}^t, \Sigma_{j,0}^t)$ and $g_{L,GR}^{\Sigma}(\Sigma_{j,0}^t)$ are the inverses of the functions in row 1 of Table 1. Notice that the functions in row 1 are non-linear. We show the predicted belief in Fig. 3(b), and the ML fit to the predicted belief in Fig. 3(c). If j calls out the location, then i's predicted belief over j's belief is

$$
N\left([0, 0.25]; \begin{bmatrix} 0.1 & 0.01 \\ 0.01 & 0.1 \end{bmatrix}\right)(\mu_{j,0}^t, \Sigma_{j,0}^t)
$$

if $\langle \mu_{j,0}^t, \Sigma_{j,0}^t - 0.25 \rangle$ is in $R_{OL}$ (or $R_{OR}$), otherwise 0.

Step 3: The final step in calculating $\kappa_{a_j^{t-1}}^{(n)}(\theta_{j,0}^t | s^t)$ involves the product,

$$
\sum_{o_j^t} O_j(s^t, a_j^{t-1}, a_i^{t-1}, o_j^t) \, N(\mu_{a_j,o_j}^{(n)}, \Sigma_{a_j,o_j}^{(n)})(\mu_{j,0}^t, \Sigma_{j,0}^t)
$$

where $N(\mu_{a_j,o_j}^{(n)}, \Sigma_{a_j,o_j}^{(n)})$ is the fitted Gaussian density. Notice that $\kappa_{a_j^{t-1}}^{(n)}$ is a mixture of Gaussians where $O_j(s^t, a_j^{t-1}, a_i^{t-1}, o_j^t)$ is the weight assigned to each participating Gaussian in the mixture. This weight is the probability with which j received its observation, $o_j^t$, on performing action, $a_j^{t-1}$. This action-observation combination forms the subscripts to the mean and variance of the Gaussian.

In the tiger problem, if i listens,

$$
\kappa_L^{(n)}(\theta_{j,0}^t | x^t) = O_j(x^t, L, L, GL) \times N\left([0.04, 0.15]; \begin{bmatrix} 0.013 & 0.003 \\ 0.003 & 0.013 \end{bmatrix}\right)(\mu_{j,0}^t, \Sigma_{j,0}^t) + O_j(x^t, L, L, GR) \times N\left([-0.11, 0.15]; \begin{bmatrix} 0.052 & -0.007 \\ -0.007 & 0.013 \end{bmatrix}\right)(\mu_{j,0}^t, \Sigma_{j,0}^t).
$$

As j's observation densities are sigmoids, the above becomes

$$
\kappa_L^{(n)}(\theta_{j,0}^t | x^t) = \frac{1}{1 + e^{x^t}} \times N\left([0.04, 0.15]; \begin{bmatrix} 0.013 & 0.003 \\ 0.003 & 0.013 \end{bmatrix}\right)(\mu_{j,0}^t, \Sigma_{j,0}^t) + \frac{1}{1 + e^{-x^t}} \times N\left([-0.11, 0.15]; \begin{bmatrix} 0.052 & -0.007 \\ -0.007 & 0.013 \end{bmatrix}\right)(\mu_{j,0}^t, \Sigma_{j,0}^t),
$$

which is a mixture of two Gaussians ($\kappa_L^{(n)}(\theta_{j,0}^t | x^t = 0)$ in Fig. 3(d)).

While we started with a single prior CLG, the posterior $\kappa_{a_j^{t-1}}^{(n)}(\theta_{j,0}^t | s^t)$ is a mixture of multiple conditional Gaussians. In general, there will be at most $|\Omega_j|$ distinct Gaussians in the mixture and $|A_j||\Omega_j|$ many densities that make up agent i's level 1 belief, after one step of the belief update. After t steps, there will be a maximum of $(|A_j||\Omega_j|)^t$ distinct densities in the mixture. As the number of mixture components grows exponentially with the updates, more compact representations of the belief are needed.

Experiments

We implemented RB-IPF and evaluated its performance on the multiagent tiger problem and a sequential version of the public good problem with punishment (PG) (Fudenberg & Tirole 1991). Our sequential version of PG is from the perspective of agent i. Let $x_u \in X_u$ be the quantity of resources in the public pot. We assume in our formulation that $X_u$ is hidden. However, each agent receives an observation of plenty (PY) or meager (MR) symbolizing the state of the public pot. The resources in i's private pot, $x_{r,i} \in X_{r,i}$, are perfectly observable to i. We briefly list our formulation:

• The state space is $X_i = X_u \times X_{r,i}$, which represents the amount of resources in the public pot and the private pot of agent i.
• The actions are $A_i$ = {Contribute (C), Defect (D)}. An agent contributes a fixed amount, $x_c$, during the contribute action. Let $A = A_i \times A_j$, where $A_j = A_i$.
• The observations of i are $\Omega_i$ = {PY, MR}.
• The transition function, $T_i: X_i \times A \times X_i \to [0, 1]$, is deterministic as the amounts of the contributions are fixed and known. Note that both agents' actions affect $X_u$ while $X_{r,i}$ is affected only by i's action.
• The observation function is $O_i: X_u \times \Omega_i \to [0, 1]$.
• The reward function is $R_i: X_i \times A \to \mathbb{R}$. The reward is determined as follows: $R_i(x_i, a_i, a_j) = x_{r,i} + c_i x_u - 1_D(a_i) 1_C(a_j) P - 1_C(a_i) 1_D(a_j) c_p$, where $c_i$ ($= c_j$) is the marginal private return. P is the punishment meted out to the defecting agent and $c_p$ is the non-zero cost of punishing for the contributing agent. $1_D(\cdot)$ is an indicator function which is 1 if its argument is D, 0 otherwise.

[Figure 4 plots. Panels (a, b): Multiagent Tiger Problem. Panels (c, d): Public Good Problem. Vertical axis: L1 error. Horizontal axis: number of particles (N) in (a, c), number of particles over S in (b, d). Curves: I-PF and RB-IPF.]

The transition function when both agents contribute is $T_i(x_i^{t-1}, C, C, x_i^t) = \delta_D((x_u^{t-1} + 2x_c) - x_u^t) \, \delta_D((x_{r,i}^{t-1} - x_c) - x_{r,i}^t)$, where $x_i^{t-1} = \langle x_u^{t-1}, x_{r,i}^{t-1} \rangle$ and $x_i^t = \langle x_u^t, x_{r,i}^t \rangle$. If i defects while j contributes, $T_i(x_i^{t-1}, D, C, x_i^t) = \delta_D((x_u^{t-1} + x_c) - x_u^t) \, \delta_D(x_{r,i}^{t-1} - x_{r,i}^t)$, and, conversely, $T_i(x_i^{t-1}, C, D, x_i^t) = \delta_D((x_u^{t-1} + x_c) - x_u^t) \, \delta_D((x_{r,i}^{t-1} - x_c) - x_{r,i}^t)$. If both defect, the pots remain unchanged. Probability densities for the two observations of PY and MR for both agents are sigmoids.
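The public good transitions and reward above are deterministic, so they can be written directly as functions. This is a minimal sketch with hypothetical names (`pg_transition`, `pg_reward`); the numeric values in the usage lines are illustrative assumptions, not parameters reported in the paper.

```python
def pg_transition(x_u, x_r_i, a_i, a_j, x_c):
    """Deterministic next state (public pot, i's private pot) for actions C or D."""
    contributors = (a_i == "C") + (a_j == "C")
    next_x_u = x_u + contributors * x_c                  # each contributor adds x_c
    next_x_r_i = x_r_i - x_c if a_i == "C" else x_r_i    # only i's action affects i's pot
    return next_x_u, next_x_r_i

def pg_reward(x_u, x_r_i, a_i, a_j, c_i, punishment, c_p):
    """R_i = x_{r,i} + c_i x_u - 1_D(a_i) 1_C(a_j) P - 1_C(a_i) 1_D(a_j) c_p."""
    reward = x_r_i + c_i * x_u
    if a_i == "D" and a_j == "C":
        reward -= punishment   # i defected against a contributor and is punished
    if a_i == "C" and a_j == "D":
        reward -= c_p          # i contributed and pays the cost of punishing j
    return reward

# Illustrative values only (assumed): pots of 10 and 5, contribution of 1.
both_contribute = pg_transition(10, 5, "C", "C", 1)
i_defects = pg_transition(10, 5, "D", "C", 1)
```

Because the transitions are delta functions, propagating a particle in this problem requires no sampling noise; each particle maps to exactly one successor for a given joint action.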

We demonstrate that the RB-IPF is statistically more efficient than the I-PF. In other words, the RB-IPF estimates the hidden interactive state more accurately, while consuming fewer particles and hence fewer computational resources. In Figs. 4(a) and (c), we show the line plots of the L1 error as a function of the number of particles (N) allocated to each filter. L1 error measures the distance between the approximate and exact posteriors. As it is difficult to carry out the belief update exactly, we used the I-PF with half a million particles to compute the 'exact' beliefs. Each data point is the average of 10 runs of the respective filter. For the multiagent tiger problem, the estimation was performed for the case where agent i listens (L) and hears a growl from the left but does not overhear anything (GL, S). In the PG problem, the beliefs resulting from agent i contributing (C) and perceiving plenty of resources (PY) in the public pot were used for comparison. We obtained similar results for other actions and observations. We used the belief in Fig. 1(b) as the prior of agent i ($b_{i,1}(x)$ was a Gaussian with mean -0.25 and variance of 0.25) in the tiger problem, and an analogous belief in the PG problem.

Observe that for both the problems, the posterior belief generated by RB-IPF is much closer to the truth than that generated by the I-PF for the same number of particles. One reason for this is that the RB-IPF uses all the particles to estimate the continuous physical state, while in the I-PF the particles are distributed over the estimation of the physical state and the other's model. The second reason is that RB-IPF updates the belief over the other agent's models more accurately; we demonstrate this using the profiles in Fig. 4(b, d). On average, exponentially more particles are needed for the I-PF to reach an identical L1 error as the RB-IPF. This is exemplified by the difference in the run times of the two methods (Fig. 5(a)) for an identical estimation accuracy.
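The paper does not spell out how the L1 error between an approximate and a reference posterior is computed. One simple possibility, sketched here under that caveat, is to bin both sets of samples over common bins and sum the absolute differences of the normalized histograms. The function name and the choice of binning are assumptions.

```python
import numpy as np

def l1_error_from_samples(approx_samples, reference_samples, bin_edges):
    """L1 distance between two histogram density estimates on shared bins."""
    p, _ = np.histogram(approx_samples, bins=bin_edges, density=True)
    q, _ = np.histogram(reference_samples, bins=bin_edges, density=True)
    widths = np.diff(bin_edges)
    return float(np.sum(np.abs(p - q) * widths))

# Identical sample sets give zero error; sets in disjoint bins give the maximum of 2.
edges = np.linspace(-1.0, 2.0, 4)   # bins [-1, 0), [0, 1), [1, 2]
same = l1_error_from_samples(np.zeros(10), np.zeros(10), edges)
disjoint = l1_error_from_samples(np.zeros(10), np.ones(10), edges)
```

With this estimator, the reference samples would come from a run with a very large number of particles, mirroring the paper's use of a half-million-particle I-PF as the 'exact' belief.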

Figure 4: Performance profiles for the tiger and PG problems. (a, c) Estimation accuracy of RB-IPF is significantly better than the I-PF's given the same number of particles. (b, d) Comparison of the accuracy of model estimations. Both filters use an identical number of particles over the physical states; I-PF also uses that number for models.

A source of error in RB-IPF when updating the belief over the other agent's models is the step of fitting a Gaussian to the piecewise density resulting from j's update of its beliefs. We analyze the sensitivity of the performance of the RB-IPF to this error by varying the number of sample points that are used in calculating the ML Gaussian fit. Fig. 5(b) reveals that for both problems, as few as 200 sample points produce an estimation accuracy that is not significantly lower than the average obtained by fitting a Gaussian more closely.

(a) Run times in secs

Prob.    RB-IPF          I-PF
Tiger    50.93 ± 0.96    151.05 ± 0.4
PG       32.06 ± 0.3     101.07 ± 0.45

[Figure 5(b) plot: L1 error against the number of points used for the Gaussian fit, for the Tiger and PG problems.]

Figure 5: For an identical L1 error, RB-IPF takes less run time than the I-PF (Linux, Xeon 3.4GHz, 4GB RAM). The plot shows that accuracy of RB-IPF is not significantly sensitive to the closeness of the Gaussian fit beyond a reasonable number of points.

Performance of the approach may suffer for deeply nested beliefs. This is because its effectiveness rests on being able to almost exactly update an agent's belief over the other's models, which requires that we update the other's beliefs exactly. However, at levels > 1, while we may recursively call the RB-IPF to update the belief, this is no longer exact.

References

Casella, G., and Robert, C. 1996. Rao-Blackwellisation of sampling schemes. Biometrika 83:81–94.

Doshi, P., and Gmytrasiewicz, P. J. 2005. Approximating state estimation in multiagent settings using particle filters. In AAMAS.

Doucet, A.; Freitas, N. D.; and Gordon, N. 2001. Sequential Monte Carlo Methods in Practice. Springer Verlag.

Fudenberg, D., and Tirole, J. 1991. Game Theory. MIT Press.

Jordan, M. I.; Ghahramani, Z.; Jaakkola, T.; and Saul, L. K. 1999. An introduction to variational methods for graphical models. Machine Learning 37(2):183–233.

Jordan, M. I. 1995. Why the logistic function? Technical Report 9503, Computational Cognitive Science, MIT.

Murphy, K. 1999. A variational approximation for BNs with discrete and continuous variables. In UAI.

Rathnas, B.; Doshi, P.; and Gmytrasiewicz, P. 2006. Exact solutions to I-POMDPs using behavioral equivalence. In AAMAS.