Principal Component Analysis & Independent Component Analysis

Overview

Principal Component Analysis

•Purpose

•Derivation

•Examples

Independent Component Analysis

•Generative Model

•Purpose

•Restrictions

•Computation

•Example

Literature

Fall 2004

Pattern Recognition for Vision

Notation & Basics

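$\mathbf{u}$: vector

$\mathbf{U}$: matrix

$\mathbf{u}^T \mathbf{v}$, with $\mathbf{u}, \mathbf{v} \in \mathbb{R}^N$: dot product written as a matrix product

$\mathbf{u}a$: product of a vector with a scalar written as a matrix product, and not $a\mathbf{u}$

$\|\mathbf{u}\|^2 = \|\mathbf{u}\|_2^2 = \mathbf{u}^T \mathbf{u}$: squared norm

Rules for matrix multiplication:

$\mathbf{U}\mathbf{V}\mathbf{W} = (\mathbf{U}\mathbf{V})\mathbf{W} = \mathbf{U}(\mathbf{V}\mathbf{W})$

$(\mathbf{U} + \mathbf{V})\mathbf{W} = \mathbf{U}\mathbf{W} + \mathbf{V}\mathbf{W}$

$(\mathbf{U}\mathbf{V})^T = \mathbf{V}^T \mathbf{U}^T$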

Principal Component Analysis (PCA)

Purpose

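For a set of samples of a random vector $\mathbf{x} \in \mathbb{R}^N$, discover or reduce the dimensionality and identify meaningful variables.

[Figure: sample data with the directions $\mathbf{u}_1$ and $\mathbf{u}_2$.]

$\mathbf{y} = \mathbf{U}\mathbf{x}, \quad \mathbf{U}: p \times N, \quad p < N$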

PCA by Variance Maximization

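Find the vector $\mathbf{u}_1$ such that the variance of the data along this direction is maximized:

$\sigma_{u_1}^2 = E\{(\mathbf{u}_1^T \mathbf{x})^2\}, \quad E\{\mathbf{x}\} = 0, \quad \|\mathbf{u}_1\| = 1$

$\sigma_{u_1}^2 = E\{(\mathbf{u}_1^T \mathbf{x})(\mathbf{x}^T \mathbf{u}_1)\} = \mathbf{u}_1^T E\{\mathbf{x}\mathbf{x}^T\} \mathbf{u}_1$

$\sigma_{u_1}^2 = \mathbf{u}_1^T \mathbf{C} \mathbf{u}_1, \quad \mathbf{C} = E\{\mathbf{x}\mathbf{x}^T\}$

[Figure: two candidate directions $\mathbf{u}_A$ and $\mathbf{u}_B$ with $\sigma_{u_A}^2 > \sigma_{u_B}^2$.]

The solution is the eigenvector $\mathbf{e}_1$ of $\mathbf{C}$ with the largest eigenvalue $\lambda_1$:

$\mathbf{C}\mathbf{e}_1 = \mathbf{e}_1 \lambda_1, \quad \lambda_1 = \mathbf{e}_1^T \mathbf{C} \mathbf{e}_1 \Leftrightarrow \sigma_{u_1}^2 = \lambda_1$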
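The claim that the variance along $\mathbf{e}_1$ equals $\lambda_1$ and that no other unit direction does better can be checked numerically. The following is a minimal sketch using NumPy; the 2-D data set and the names `variance_along` and `random_dirs` are assumptions made for illustration and are not part of the slides.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical zero-mean 2-D data with correlated coordinates (illustration only).
M = 5000
x = rng.normal(size=(M, 2)) @ np.array([[2.0, 0.0], [1.2, 0.5]])
x -= x.mean(axis=0)                      # enforce E{x} = 0

C = x.T @ x / M                          # sample estimate of C = E{x x^T}

# eigh returns the eigenvalues of a symmetric matrix in ascending order.
eigvals, eigvecs = np.linalg.eigh(C)
lambda_1 = eigvals[-1]
e_1 = eigvecs[:, -1]

def variance_along(u):
    """Sample variance of the projections u^T x for a unit vector u."""
    return np.mean((x @ u) ** 2)

# Compare against many random unit directions.
angles = rng.uniform(0.0, np.pi, size=200)
random_dirs = np.stack([np.cos(angles), np.sin(angles)], axis=1)
best_random = max(variance_along(u) for u in random_dirs)

print("lambda_1            :", lambda_1)
print("variance along e_1  :", variance_along(e_1))
print("best random variance:", best_random)
```

The variance along `e_1` matches `lambda_1` up to rounding, and none of the random directions exceeds it.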

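For a given $p < N$, find $p$ orthonormal basis vectors $\mathbf{u}_i$ such that the variance of the data along these vectors is maximally large, under the constraint of decorrelation:

$E\{(\mathbf{u}_i^T \mathbf{x})(\mathbf{u}_n^T \mathbf{x})\} = 0, \quad \mathbf{u}_i^T \mathbf{u}_n = 0, \quad n \neq i$

The solutions are the eigenvectors of $\mathbf{C}$ ordered according to decreasing eigenvalues $\lambda$:

$\mathbf{u}_1 = \mathbf{e}_1, \ \mathbf{u}_2 = \mathbf{e}_2, \ \ldots, \ \mathbf{u}_p = \mathbf{e}_p, \quad \lambda_1 > \lambda_2 > \ldots > \lambda_p$

Proof of decorrelation for eigenvectors ($i \neq n$):

$E\{(\mathbf{e}_i^T \mathbf{x})(\mathbf{e}_n^T \mathbf{x})\} = \mathbf{e}_i^T E\{\mathbf{x}\mathbf{x}^T\} \mathbf{e}_n = \mathbf{e}_i^T \mathbf{C} \mathbf{e}_n = \mathbf{e}_i^T \mathbf{e}_n \lambda_n = 0$

The last step holds because the eigenvectors are orthogonal, $\mathbf{e}_i^T \mathbf{e}_n = 0$.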
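The decorrelation property can be illustrated by projecting data onto the leading eigenvectors and inspecting the covariance of the projections. This is a sketch under assumed synthetic data; the variable names (`U`, `C_y`, `lam`) are chosen here for illustration.

```python
import numpy as np

rng = np.random.default_rng(1)

# Hypothetical correlated, zero-mean data: M samples of dimension N.
N, M, p = 5, 4000, 3
x = rng.normal(size=(M, N)) @ rng.normal(size=(N, N))
x -= x.mean(axis=0)

C = x.T @ x / M
eigvals, eigvecs = np.linalg.eigh(C)

# Sort by decreasing eigenvalue and keep the first p eigenvectors.
order = np.argsort(eigvals)[::-1]
lam = eigvals[order][:p]
U = eigvecs[:, order][:, :p].T           # p x N, rows are e_i^T

y = x @ U.T                              # projections y = U x for every sample
C_y = y.T @ y / M                        # covariance of the projections

print(np.round(C_y, 6))                  # off-diagonal entries vanish
```

The printed matrix is diagonal with the eigenvalues $\lambda_1, \ldots, \lambda_p$ on the diagonal, which is the statement $E\{(\mathbf{e}_i^T \mathbf{x})(\mathbf{e}_n^T \mathbf{x})\} = 0$ for $i \neq n$.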

PCA by Mean Square Error Compression

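For a given $p < N$, find $p$ orthonormal basis vectors such that the mean square error (mse) between $\mathbf{x}$ and its projection $\hat{\mathbf{x}}$ into the subspace spanned by the $p$ orthonormal basis vectors is minimum:

$\mathrm{mse} = E\{\|\mathbf{x} - \hat{\mathbf{x}}\|^2\}, \quad \hat{\mathbf{x}} = \sum_{k=1}^{p} \mathbf{u}_k (\mathbf{x}^T \mathbf{u}_k), \quad \mathbf{u}_k^T \mathbf{u}_m = \delta_{k,m}$

[Figure: a sample $\mathbf{x}$ and its projection $\hat{\mathbf{x}}$ onto a basis vector $\mathbf{u}_k$.]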

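$\mathrm{mse} = E\{\|\mathbf{x} - \hat{\mathbf{x}}\|^2\} = E\left\{\left\|\mathbf{x} - \sum_{k=1}^{p} \mathbf{u}_k (\mathbf{x}^T \mathbf{u}_k)\right\|^2\right\}$, where $(\mathbf{x}^T \mathbf{u}_k)$ is a scalar

$= E\{\|\mathbf{x}\|^2\} - 2E\left\{\sum_{k=1}^{p} \mathbf{x}^T \mathbf{u}_k (\mathbf{x}^T \mathbf{u}_k)\right\} + E\left\{\sum_{n=1}^{p} (\mathbf{x}^T \mathbf{u}_n) \mathbf{u}_n^T \sum_{k=1}^{p} \mathbf{u}_k (\mathbf{x}^T \mathbf{u}_k)\right\}$

$= E\{\|\mathbf{x}\|^2\} - 2E\left\{\sum_{k=1}^{p} (\mathbf{x}^T \mathbf{u}_k)^2\right\} + E\left\{\sum_{k=1}^{p} (\mathbf{x}^T \mathbf{u}_k)^2\right\}$

$= E\{\|\mathbf{x}\|^2\} - E\left\{\sum_{k=1}^{p} (\mathbf{x}^T \mathbf{u}_k)^2\right\}$

$= \mathrm{trace}(\mathbf{C}) - \sum_{k=1}^{p} \mathbf{u}_k^T \mathbf{C} \mathbf{u}_k, \quad \mathbf{C} = E\{\mathbf{x}\mathbf{x}^T\}$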

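$\mathrm{mse} = \mathrm{trace}(\mathbf{C}) - \sum_{k=1}^{p} \mathbf{u}_k^T \mathbf{C} \mathbf{u}_k, \quad \mathbf{C} = E\{\mathbf{x}\mathbf{x}^T\}$

Minimizing the mse means maximizing the sum $\sum_{k=1}^{p} \mathbf{u}_k^T \mathbf{C} \mathbf{u}_k$.

The solution to minimizing the mse is any (orthonormal) basis of the subspace spanned by the first $p$ eigenvectors $\mathbf{e}_1, \ldots, \mathbf{e}_p$ of $\mathbf{C}$.

$\mathrm{mse} = \mathrm{trace}(\mathbf{C}) - \sum_{k=1}^{p} \lambda_k = \sum_{k=p+1}^{N} \lambda_k$

The mse is the sum of the eigenvalues corresponding to the discarded eigenvectors $\mathbf{e}_{p+1}, \ldots, \mathbf{e}_N$ of $\mathbf{C}$.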
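The three expressions for the mse (direct reconstruction error, trace formula, sum of discarded eigenvalues) can be compared on sample data. The sketch below assumes synthetic data and uses the sample covariance in place of $E\{\mathbf{x}\mathbf{x}^T\}$; all names are illustrative.

```python
import numpy as np

rng = np.random.default_rng(2)

# Hypothetical zero-mean data: M samples of dimension N, keep p components.
N, M, p = 6, 3000, 2
x = rng.normal(size=(M, N)) @ rng.normal(size=(N, N))
x -= x.mean(axis=0)

C = x.T @ x / M
eigvals, eigvecs = np.linalg.eigh(C)
order = np.argsort(eigvals)[::-1]
eigvals, eigvecs = eigvals[order], eigvecs[:, order]

U = eigvecs[:, :p]                       # N x p, columns u_1 ... u_p
x_hat = (x @ U) @ U.T                    # x_hat = sum_k u_k (x^T u_k)

# 1) direct mean square reconstruction error
mse = np.mean(np.sum((x - x_hat) ** 2, axis=1))
# 2) trace(C) - sum_k u_k^T C u_k
mse_from_trace = np.trace(C) - sum(U[:, k] @ C @ U[:, k] for k in range(p))
# 3) sum of the discarded eigenvalues
mse_from_eigs = eigvals[p:].sum()

print(mse, mse_from_trace, mse_from_eigs)
```

All three numbers agree up to floating-point rounding.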

How to determine the number of principal components p?

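Linear signal model with unknown number $p < N$ of signals:

$\mathbf{x} = \mathbf{A}\mathbf{s} + \mathbf{n}, \quad \mathbf{A} = \begin{pmatrix} a_{1,1} & \cdots & a_{1,p} \\ \vdots & \ddots & \vdots \\ a_{N,1} & \cdots & a_{N,p} \end{pmatrix} \quad (N \times p)$

The signals $s_i$ have zero mean and are uncorrelated, and $\mathbf{n}$ is white noise:

$E\{\mathbf{s}\mathbf{s}^T\} = \mathbf{I}, \quad E\{\mathbf{n}\mathbf{n}^T\} = \sigma_n^2 \mathbf{I}$

$\mathbf{C} = E\{\mathbf{x}\mathbf{x}^T\} = E\{\mathbf{A}\mathbf{s}(\mathbf{A}\mathbf{s})^T\} + E\{\mathbf{n}\mathbf{n}^T\} + \underbrace{E\{\mathbf{A}\mathbf{s}\mathbf{n}^T\} + E\{\mathbf{n}(\mathbf{A}\mathbf{s})^T\}}_{=0}$

$= \mathbf{A} E\{\mathbf{s}\mathbf{s}^T\} \mathbf{A}^T + E\{\mathbf{n}\mathbf{n}^T\} = \mathbf{A}\mathbf{A}^T + \sigma_n^2 \mathbf{I}$

The eigenvalues $d_i$ of $\mathbf{C}$ then satisfy:

$d_1 > d_2 > \ldots > d_p > d_{p+1} = d_{p+2} = \ldots = d_N = \sigma_n^2$

→ cut off when the eigenvalues become constant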
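The eigenvalue plateau at $\sigma_n^2$ can be reproduced by simulating the signal model. This is a sketch with assumed values ($N = 8$, $p = 3$, $\sigma_n = 0.5$, gaussian signals and noise); with a finite number of samples the trailing eigenvalues are only approximately constant.

```python
import numpy as np

rng = np.random.default_rng(3)

# Assumed dimensions and noise level for the simulation.
N, p, M = 8, 3, 200_000
sigma_n = 0.5

A = rng.normal(size=(N, p))              # hypothetical N x p mixing matrix
s = rng.normal(size=(p, M))              # zero mean, E{s s^T} = I
n = sigma_n * rng.normal(size=(N, M))    # white noise, E{n n^T} = sigma_n^2 I

x = A @ s + n
C = x @ x.T / M                          # sample estimate of E{x x^T}

d = np.sort(np.linalg.eigvalsh(C))[::-1] # eigenvalues in decreasing order
print(np.round(d, 3))
print("sigma_n^2 =", sigma_n ** 2)
```

The first `p` eigenvalues stand out, and the remaining `N - p` eigenvalues cluster around `sigma_n ** 2`, which suggests where to cut off.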

Computing the PCA

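Given a set of samples $\{\mathbf{x}_1, \ldots, \mathbf{x}_M\}$ of a random vector $\mathbf{x}$, calculate mean and covariance:

$\boldsymbol{\mu} = \frac{1}{M} \sum_{i=1}^{M} \mathbf{x}_i, \quad \mathbf{x} \rightarrow \mathbf{x} - \boldsymbol{\mu}$

$\mathbf{C} = \frac{1}{M} \sum_{i=1}^{M} (\mathbf{x}_i - \boldsymbol{\mu})(\mathbf{x}_i - \boldsymbol{\mu})^T$

Compute the eigenvectors of $\mathbf{C}$, e.g. with the QR algorithm.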
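The steps above (mean, covariance, eigenvectors) can be sketched as a small function. The function name `pca`, its signature, and the test data are assumptions for illustration; the sketch relies on NumPy's symmetric eigensolver `np.linalg.eigh` rather than a hand-written QR algorithm.

```python
import numpy as np

def pca(samples, p):
    """Sketch of PCA on an (M, N) array of samples.

    Returns the mean, the p largest eigenvalues of the sample covariance,
    and the matching eigenvectors as the columns of an (N, p) array.
    """
    mu = samples.mean(axis=0)
    centered = samples - mu                          # x -> x - mu
    C = centered.T @ centered / samples.shape[0]     # (1/M) sum (x_i - mu)(x_i - mu)^T
    eigvals, eigvecs = np.linalg.eigh(C)             # ascending eigenvalues
    order = np.argsort(eigvals)[::-1][:p]            # indices of the p largest
    return mu, eigvals[order], eigvecs[:, order]

# Hypothetical data: 500 samples in 4 dimensions with unequal spread.
rng = np.random.default_rng(4)
samples = rng.normal(size=(500, 4)) * np.array([3.0, 2.0, 1.0, 0.1]) + 10.0

mu, lam, U = pca(samples, p=2)
y = (samples - mu) @ U                               # reduced representation, shape (500, 2)
print(lam)
print(y.shape)
```

Dividing by `M` follows the formula on the slide; many libraries divide by `M - 1` instead, which changes the eigenvalues by a constant factor but not the eigenvectors.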

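If the number of samples $M$ is smaller than the dimensionality $N$ of $\mathbf{x}$:

$\mathbf{B} = \begin{pmatrix} x_{1,1} & \cdots & x_{1,M} \\ \vdots & \ddots & \vdots \\ x_{N,1} & \cdots & x_{N,M} \end{pmatrix}, \quad \mathbf{C} = \mathbf{B}\mathbf{B}^T, \quad \mathbf{B}: N \times M, \quad \mathbf{B}^T: M \times N$

The eigenvalue problem for $\mathbf{C}$ is

$\mathbf{B}\mathbf{B}^T \mathbf{e} = \mathbf{e}\lambda$

Solve the smaller $M \times M$ problem instead:

$\mathbf{B}^T \mathbf{B} \mathbf{e}' = \mathbf{e}' \lambda'$

Multiplying from the left by $\mathbf{B}$ gives

$\mathbf{B}\mathbf{B}^T (\mathbf{B}\mathbf{e}') = (\mathbf{B}\mathbf{e}') \lambda'$

so that

$\mathbf{e} = \mathbf{B}\mathbf{e}', \quad \lambda' = \lambda$

→ Reducing complexity from $O(N^2)$ to $O(M^2)$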
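The trick of solving the $M \times M$ problem and mapping back with $\mathbf{e} = \mathbf{B}\mathbf{e}'$ can be sketched as follows. The sizes and data are assumptions; the sketch also normalizes $\mathbf{B}\mathbf{e}'$ to unit length, a step the slide does not show, and omits the $1/M$ factor exactly as the slide does.

```python
import numpy as np

rng = np.random.default_rng(5)

# Hypothetical case with far fewer samples than dimensions.
N, M = 400, 20
B = rng.normal(size=(N, M))              # columns are the samples
B -= B.mean(axis=1, keepdims=True)       # subtract the mean sample

# Small M x M eigenproblem: B^T B e' = e' lambda'
G = B.T @ B
lam_small, e_small = np.linalg.eigh(G)
order = np.argsort(lam_small)[::-1]
lam_small, e_small = lam_small[order], e_small[:, order]

k = 5                                    # number of leading components to keep
E = B @ e_small[:, :k]                   # e = B e'
E /= np.linalg.norm(E, axis=0)           # normalize each eigenvector (added step)

# Reference: leading eigenvalues of the large N x N matrix B B^T.
lam_big = np.sort(np.linalg.eigvalsh(B @ B.T))[::-1][:k]

print(np.round(lam_small[:k], 4))
print(np.round(lam_big, 4))
```

Both printed rows agree, and the columns of `E` satisfy $\mathbf{B}\mathbf{B}^T \mathbf{e} = \mathbf{e}\lambda$ without ever decomposing the $N \times N$ matrix.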

Examples

Eigenfaces for face recognition (Turk & Pentland):

Training:

-Calculate the eigenspace for all faces in the training database

-Project each face into the eigenspace → feature reduction

Classification:

-Project new face into eigenspace

-Nearest neighbor in the eigenspace
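The training and classification steps listed above can be sketched on synthetic data. Everything concrete here is an assumption for illustration: the "faces" are random 256-pixel prototypes plus noise, the names `classify` and `eigenfaces` are hypothetical, and the eigenvectors are obtained from an SVD of the centered data instead of an explicit eigendecomposition of the covariance.

```python
import numpy as np

rng = np.random.default_rng(6)

# Synthetic stand-in for a face database (assumption): 3 identities,
# each a random 256-pixel prototype image plus small noise.
n_ids, per_id, n_pixels, p = 3, 10, 256, 5
prototypes = rng.normal(size=(n_ids, n_pixels))
labels = np.repeat(np.arange(n_ids), per_id)
train = prototypes[labels] + 0.3 * rng.normal(size=(n_ids * per_id, n_pixels))

# Training: compute the eigenspace and project every training face into it.
mean_face = train.mean(axis=0)
centered = train - mean_face
# The rows of Vt are the eigenvectors of the sample covariance, sorted by
# decreasing singular value.
_, _, Vt = np.linalg.svd(centered, full_matrices=False)
eigenfaces = Vt[:p]                              # p x n_pixels
train_features = centered @ eigenfaces.T         # reduced features

def classify(face):
    """Project a face into the eigenspace and return the nearest neighbor's label."""
    feat = (face - mean_face) @ eigenfaces.T
    dists = np.linalg.norm(train_features - feat, axis=1)
    return labels[np.argmin(dists)]

# Classification of a new, unseen image of identity 1.
new_face = prototypes[1] + 0.3 * rng.normal(size=n_pixels)
predicted = classify(new_face)
print("predicted identity:", predicted)
```

Each 256-pixel image is reduced to `p = 5` features before the nearest-neighbor search, which is the feature reduction step named in the list.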

Examples cont.

Feature reduction/extraction

[Figure: original image and its reconstruction with 20 principal components. Source: http://www.nist.gov/]

Independent Component Analysis (ICA)

Generative model

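Noise free, linear signal model:

$\mathbf{x} = \mathbf{A}\mathbf{s} = \sum_{i=1}^{N} \mathbf{a}_i s_i$

$\mathbf{x} = (x_1, \ldots, x_N)^T$: observed variables

$\mathbf{s} = (s_1, \ldots, s_N)^T$: latent signals, independent components

$\mathbf{A} = \begin{pmatrix} a_{1,1} & \cdots & a_{1,N} \\ \vdots & \ddots & \vdots \\ a_{N,1} & \cdots & a_{N,N} \end{pmatrix}$: unknown mixing matrix, with columns $\mathbf{a}_i = (a_{1,i}, \ldots, a_{N,i})^T$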

Task

For the linear, noise free signal model, compute A and s

given the measurements x.

[Figure: three source signals S1, S2, S3 and their mixtures X1, X2, X3. Figure by MIT OCW.]

Blind source separation: separate the three original signals $s_1$, $s_2$, and $s_3$ from their mixtures $x_1$, $x_2$, and $x_3$.

Restrictions

1.) Statistical independence

The signals $s_i$ must be statistically independent:

$p(s_1, s_2, \ldots, s_N) = p_1(s_1)\, p_2(s_2) \cdots p_N(s_N)$

Independent variables satisfy:

$E\{g_1(s_1)\, g_2(s_2) \cdots g_N(s_N)\} = E\{g_1(s_1)\}\, E\{g_2(s_2)\} \cdots E\{g_N(s_N)\}$ for any $g_i(s) \in L^1$

For two variables:

$E\{g_1(s_1)\, g_2(s_2)\} = \int_{-\infty}^{\infty} \int_{-\infty}^{\infty} g_1(s_1)\, g_2(s_2)\, p(s_1, s_2)\, ds_1\, ds_2$

$= \int_{-\infty}^{\infty} g_1(s_1)\, p_1(s_1)\, ds_1 \int_{-\infty}^{\infty} g_2(s_2)\, p_2(s_2)\, ds_2 = E\{g_1(s_1)\}\, E\{g_2(s_2)\}$

Independence includes uncorrelatedness. For $i \neq n$:

$E\{(s_i - \mu_i)(s_n - \mu_n)\} = E\{(s_i - \mu_i)\}\, E\{(s_n - \mu_n)\} = 0$
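The factorization of expectations, and the fact that uncorrelatedness alone is weaker than independence, can be illustrated with sampled data. The distributions, the test functions `g1` and `g2`, and the dependent pair $(s_1, s_1^2)$ are assumptions chosen for this sketch.

```python
import numpy as np

rng = np.random.default_rng(7)
M = 200_000

# Two independent, nongaussian signals (assumed distributions).
s1 = rng.uniform(-1.0, 1.0, size=M)
s2 = rng.laplace(size=M)

def g1(s):
    return s ** 2

def g2(s):
    return np.abs(s)

# Independence: E{g1(s1) g2(s2)} = E{g1(s1)} E{g2(s2)}
lhs = np.mean(g1(s1) * g2(s2))
rhs = np.mean(g1(s1)) * np.mean(g2(s2))
print("independent pair :", lhs, rhs)

# A dependent but uncorrelated pair: t is a deterministic function of s1.
t = s1 ** 2
covariance = np.mean((s1 - s1.mean()) * (t - t.mean()))
lhs_dep = np.mean(g1(s1) * t)            # E{s1^2 * s1^2}
rhs_dep = np.mean(g1(s1)) * np.mean(t)   # E{s1^2} E{s1^2}
print("covariance(s1, t):", covariance)
print("dependent pair   :", lhs_dep, rhs_dep)
```

For the independent pair both sides agree up to sampling error. For the pair $(s_1, s_1^2)$ the covariance is close to zero, yet the expectations do not factorize, so the two variables are uncorrelated but not independent.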

2.) Nongaussian components

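The components $s_i$ must have a nongaussian distribution, otherwise there is no unique solution.

Example: given $\mathbf{A}$ and two gaussian signals:

$p(s_1, s_2) = \frac{1}{2\pi \sigma_1 \sigma_2} \exp\left(-\frac{s_1^2}{2\sigma_1^2} - \frac{s_2^2}{2\sigma_2^2}\right)$

generate new signals with the scaling matrix $\mathbf{S}$:

$\mathbf{s}' = \begin{pmatrix} 1/\sigma_1 & 0 \\ 0 & 1/\sigma_2 \end{pmatrix} \mathbf{s} = \mathbf{S}\mathbf{s} \ \Rightarrow \ p(s_1', s_2') = \frac{1}{2\pi} \exp\left(-\frac{s_1'^2}{2}\right) \exp\left(-\frac{s_2'^2}{2}\right)$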

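Under rotation with the rotation matrix $\mathbf{R}$ the components remain independent:

$\mathbf{s}'' = \begin{pmatrix} \cos\varphi & \sin\varphi \\ -\sin\varphi & \cos\varphi \end{pmatrix} \mathbf{s}' = \mathbf{R}\mathbf{s}', \quad p(s_1'', s_2'') = \frac{1}{2\pi} \exp\left(-\frac{s_1''^2}{2}\right) \exp\left(-\frac{s_2''^2}{2}\right)$

Combine whitening and rotation, $\mathbf{B} = \mathbf{R}\mathbf{S}$:

$\mathbf{x} = \mathbf{A}\mathbf{s} = \mathbf{A}\mathbf{B}^{-1}\mathbf{s}''$

$\mathbf{A}\mathbf{B}^{-1}$ is also a solution to the ICA problem.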
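The gaussian ambiguity can be made visible with a fourth-order moment. For independent unit-variance components, $E\{s_1''^2 s_2''^2\} = 1$. The sketch below, with assumed values $\sigma = (2, 0.5)$ and $\varphi = 45°$, applies $\mathbf{B} = \mathbf{R}\mathbf{S}$ to gaussian and to uniform sources of the same variance; the helper `cross_moment` is hypothetical.

```python
import numpy as np

rng = np.random.default_rng(8)
M = 400_000

sigma = np.array([2.0, 0.5])             # assumed standard deviations
phi = np.pi / 4                          # assumed rotation angle

S = np.diag(1.0 / sigma)                 # scaling (whitening) matrix
R = np.array([[np.cos(phi), np.sin(phi)],
              [-np.sin(phi), np.cos(phi)]])
B = R @ S

def cross_moment(s):
    """Estimate E{s1''^2 s2''^2} for s'' = B s, with s of shape (2, M)."""
    s_rot = B @ s
    return np.mean(s_rot[0] ** 2 * s_rot[1] ** 2)

gauss = sigma[:, None] * rng.normal(size=(2, M))
unif = sigma[:, None] * rng.uniform(-np.sqrt(3), np.sqrt(3), size=(2, M))

m_gauss = cross_moment(gauss)            # stays near 1: still independent
m_unif = cross_moment(unif)              # moves to about 0.4: now dependent
print(m_gauss, m_unif)

# A B^{-1} reproduces the same observations from the rotated signals.
A = rng.normal(size=(2, 2))              # hypothetical mixing matrix
x = A @ gauss
x_alt = A @ np.linalg.inv(B) @ (B @ gauss)
print(np.allclose(x, x_alt))
```

The rotated gaussian components are indistinguishable from independent ones, so $\mathbf{A}\mathbf{B}^{-1}$ explains the data equally well. For the uniform sources the same rotation destroys independence, which is what makes the nongaussian case identifiable.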

3.) Mixing matrix must be invertible

The number of independent components is equal to the number of observed variables, which means that there are no redundant mixtures.

If the mixing matrix is not invertible, apply PCA to the measurements first to remove redundancy.

Ambiguities

1.) Scale

$\mathbf{x} = \sum_{i=1}^{N} \mathbf{a}_i s_i = \sum_{i=1}^{N} \left(\frac{1}{\alpha_i} \mathbf{a}_i\right)(\alpha_i s_i)$

Reduce ambiguity by enforcing $E\{s_i^2\} = 1$.

2.) Order

We cannot determine an order of the independent components.
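Both ambiguities can be verified directly: rescaling or reordering the sources, with the matching change to the columns of $\mathbf{A}$, leaves the observations unchanged. The matrix, scale factors, and permutation below are arbitrary values assumed for this sketch.

```python
import numpy as np

rng = np.random.default_rng(9)
N, M = 3, 1000

A = rng.normal(size=(N, N))              # hypothetical mixing matrix
s = rng.laplace(size=(N, M))             # hypothetical sources
x = A @ s

# 1) Scale: divide column i of A by alpha_i and multiply s_i by alpha_i.
alpha = np.array([2.0, -0.5, 10.0])
A_scaled = A / alpha                     # broadcasting scales the columns
s_scaled = alpha[:, None] * s
x_scaled = A_scaled @ s_scaled

# Fixing the scale by enforcing E{s_i^2} = 1 (the sign remains undetermined).
scale = np.sqrt(np.mean(s ** 2, axis=1))
s_unit = s / scale[:, None]
A_unit = A * scale

# 2) Order: permute the sources and the columns of A consistently.
perm = np.array([2, 0, 1])
x_perm = A[:, perm] @ s[perm]

print(np.allclose(x, x_scaled), np.allclose(x, A_unit @ s_unit), np.allclose(x, x_perm))
```

All three checks print `True`, so the observations alone cannot reveal the scale, sign, or order of the components.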

Computing ICA

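a) Minimizing mutual information:

$\hat{\mathbf{s}} = \hat{\mathbf{A}}^{-1} \mathbf{x}$

Mutual information: $I(\hat{\mathbf{s}}) = \sum_{i=1}^{N} H(\hat{s}_i) - H(\hat{\mathbf{s}})$

$H$ is the differential entropy:

$H(\hat{\mathbf{s}}) = -\int p(\hat{\mathbf{s}}) \log_2 p(\hat{\mathbf{s}})\, d\hat{\mathbf{s}}$

$I$ is always nonnegative and 0 only if the $\hat{s}_i$ are independent.

Iteratively modify $\hat{\mathbf{A}}^{-1}$ such that $I(\hat{\mathbf{s}})$ is minimized.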
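To see the mutual information criterion at work, one can estimate $I$ for two signals before mixing, after mixing, and after unmixing with the true $\mathbf{A}^{-1}$. The sketch below is not an ICA algorithm; it only evaluates the objective. It uses a plug-in histogram estimate (an assumption of this example, in which the bin-width terms of the differential entropies cancel in the difference), and the function name `mutual_information`, the bin count, and the mixing matrix are illustrative.

```python
import numpy as np

def mutual_information(y, bins=20):
    """Histogram estimate (in bits) of I = H(y1) + H(y2) - H(y1, y2) for y of shape (2, M)."""
    joint, _, _ = np.histogram2d(y[0], y[1], bins=bins)
    p_joint = joint / joint.sum()
    p1 = p_joint.sum(axis=1)             # marginal of y1
    p2 = p_joint.sum(axis=0)             # marginal of y2

    def entropy(p):
        p = p[p > 0]                     # empty bins contribute nothing
        return -np.sum(p * np.log2(p))

    return entropy(p1) + entropy(p2) - entropy(p_joint.ravel())

rng = np.random.default_rng(10)
M = 100_000
s = rng.uniform(-1.0, 1.0, size=(2, M))  # independent sources (assumed)
A = np.array([[1.0, 0.6],
              [0.4, 1.0]])               # hypothetical mixing matrix
x = A @ s

mi_sources = mutual_information(s)
mi_mixed = mutual_information(x)
mi_unmixed = mutual_information(np.linalg.inv(A) @ x)
print(mi_sources, mi_mixed, mi_unmixed)
```

The estimate is close to zero for the sources and for the correctly unmixed signals, and clearly positive for the mixtures. An actual algorithm would search over candidate matrices $\hat{\mathbf{A}}^{-1}$ to drive this quantity down.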

b) Maximizing Nongaussianity

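$\mathbf{s} = \mathbf{A}^{-1}\mathbf{x}$

Introduce $y$ and $\mathbf{b}$: $y = \mathbf{b}^T \mathbf{x} = \mathbf{b}^T \mathbf{A}\mathbf{s} = \mathbf{q}^T \mathbf{s}$

From the central limit theorem: $y = \mathbf{q}^T \mathbf{s}$ is more gaussian than any of the $s_i$ and becomes least gaussian if $y = s_i$.

Iteratively modify $\mathbf{b}^T$ such that the "gaussianity" of $y$ is minimized. When a local minimum is reached, $\mathbf{b}^T$ is a row vector of $\mathbf{A}^{-1}$.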
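A minimal sketch of this idea for one component is given below. The slides do not fix a measure of gaussianity; this example assumes the absolute excess kurtosis as the measure, whitens the data first, and uses the kurtosis-based fixed-point update known from the FastICA family of algorithms. The sources, the mixing matrix, and all variable names are assumptions for illustration.

```python
import numpy as np

rng = np.random.default_rng(11)
N, M = 2, 20_000

# Hypothetical nongaussian sources (uniform, unit variance) and mixing matrix.
s = rng.uniform(-np.sqrt(3), np.sqrt(3), size=(N, M))
A = np.array([[1.0, 0.5],
              [0.3, 1.0]])
x = A @ s
x -= x.mean(axis=1, keepdims=True)

# Whitening z = V x so that the sample covariance of z is the identity.
d, E = np.linalg.eigh(x @ x.T / M)
V = E @ np.diag(d ** -0.5) @ E.T
z = V @ x

def kurtosis(y):
    """Excess kurtosis of a zero-mean, unit-variance signal; 0 for a gaussian."""
    return np.mean(y ** 4) - 3.0

# Fixed-point iteration that drives |kurtosis(w^T z)| to a local maximum.
w = rng.normal(size=N)
w /= np.linalg.norm(w)
for _ in range(100):
    y = w @ z
    w_new = (z * y ** 3).mean(axis=1) - 3.0 * w
    w_new /= np.linalg.norm(w_new)
    converged = abs(abs(w_new @ w) - 1.0) < 1e-10
    w = w_new
    if converged:
        break

b = V.T @ w                              # y = w^T z = b^T x
y = b @ x
corr = [abs(np.corrcoef(y, s_i)[0, 1]) for s_i in s]
print("kurtosis of y:", kurtosis(y))
print("|correlation| with each source:", corr)
```

The recovered `y` correlates almost perfectly with one of the sources, so `b` matches a row of $\mathbf{A}^{-1}$ up to the scale and sign ambiguity. Which source is found depends on the random start, and further components would need an additional orthogonalization step that is not shown here.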

PCA Applied to Faces

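[Figure: $M$ face images $\mathbf{x}_1, \ldots, \mathbf{x}_M$ with pixel values $x_{1,1}, \ldots, x_{N,M}$.]

Each pixel is a feature, each face image a point in the feature space. The dimension of the feature vector is given by the size of the image.

[Figure: the face images $\mathbf{x}_1, \ldots, \mathbf{x}_M$ as points in the feature space, with the directions $\mathbf{u}_1$ and $\mathbf{u}_2$.]

The $\mathbf{u}_i$ are the eigenvectors, which can be represented as pixel images in the original coordinate system $x_1, \ldots, x_N$.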

ICA Applied to Faces

[Figure: $N$ images $x_1, \ldots, x_N$ with entries $x_{1,1}, \ldots, x_{N,M}$, and source signals labeled S1.]

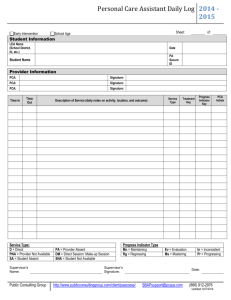

Now each image corresponds to a particular observed variable

measured over time (M samples). N is the number of images.

$\mathbf{x} = \mathbf{A}\mathbf{s} = \sum_{i=1}^{N} \mathbf{a}_i s_i$

$\mathbf{x} = (x_1, \ldots, x_N)^T$: observed variables

$\mathbf{s} = (s_1, \ldots, s_N)^T$: latent signals, independent components

PCA and ICA for Faces

Features for face recognition

Image removed due to copyright considerations. See Figure 1 in: Baek, Kyungim et al.

"PCA vs. ICA: A comparison on the FERET data set." International Conference of Computer Vision,

Pattern Recognition, and Image Processing, in conjunction with the 6th JCIS. Durham, NC, March 8-14 2002, June 2001.
