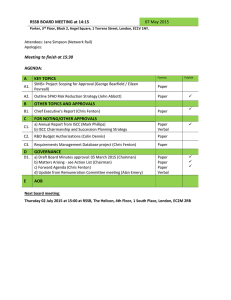

Document 13237904
