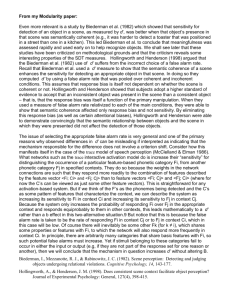

Eye movements serialize memory for objects in scenes
