
THE INFORMATION GEOMETRY UNDERLYING NONCOOPERATIVE GAMES

David H. Wolpert, Santa Fe Institute http://davidwolpert.weebly.com

Nils Bertschinger, Max Planck Institute

Eckehard Olbrich, Max Planck Institute

Juergen Jost, Max Planck Institute, Santa Fe Institute

POSITIVE VALUE OF INFORMATION

Row moves first, and Col receives a noise-swamped observation of Row’s move before Col moves

| | h | m |
|---|---|---|
| H | 0, 0 | 3, -1 |
| M | -2, 1 | 2, 3 |

(Unique) Nash Eq. is (H, h)

Col gets 0

POSITIVE VALUE OF INFORMATION

Row moves first, and Col receives a noise-free observation of Row’s move before Col moves (same payoffs)

(Unique) Nash Eq. is (M, m)

Col gets 3

Getting more information gains 3 for Col
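The two outcomes above can be checked mechanically. The following is a minimal sketch, not the authors' code: it treats the noise-swamped case as a simultaneous-move game (Col's observation carries no information) and the noise-free case as backward induction. The function names and the dictionary layout are illustrative assumptions; only the payoffs come from the slide.

```python
from itertools import product

# Payoffs copied from the slide: PAYOFF[row_move][col_move] = (row_utility, col_utility)
PAYOFF = {
    "H": {"h": (0, 0), "m": (3, -1)},
    "M": {"h": (-2, 1), "m": (2, 3)},
}


def pure_nash(payoff):
    """Pure-strategy Nash equilibria when Col learns nothing about Row's move."""
    rows = list(payoff)
    cols = list(payoff[rows[0]])
    equilibria = []
    for r, c in product(rows, cols):
        # Neither player can gain by deviating unilaterally.
        row_ok = all(payoff[r][c][0] >= payoff[r2][c][0] for r2 in rows)
        col_ok = all(payoff[r][c][1] >= payoff[r][c2][1] for c2 in cols)
        if row_ok and col_ok:
            equilibria.append((r, c))
    return equilibria


def perfect_observation_outcome(payoff):
    """Backward induction when Col sees Row's move exactly."""
    rows = list(payoff)
    cols = list(payoff[rows[0]])
    # Col's best reply to each observed move of Row.
    reply = {r: max(cols, key=lambda c: payoff[r][c][1]) for r in rows}
    # Row anticipates those replies.
    r_star = max(rows, key=lambda r: payoff[r][reply[r]][0])
    return r_star, reply[r_star]


blind = pure_nash(PAYOFF)[0]
informed = perfect_observation_outcome(PAYOFF)
col_gain = PAYOFF[informed[0]][informed[1]][1] - PAYOFF[blind[0]][blind[1]][1]
print(blind, informed, col_gain)
```

The sketch only enumerates pure strategies, which suffices here because H strictly dominates M for Row in the simultaneous-move game.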

NEGATIVE VALUE OF INFORMATION

Cournot duopoly game (moves are production levels)

Row moves first, and Col receives a noise-swamped observation of Row’s move before Col moves

| | h | m | l |
|---|---|---|---|
| H | 0, 0 | 12, 8 | 18, 9 |
| M | 8, 12 | 16, 16 | 20, 15 |
| L | 9, 18 | 15, 20 | 18, 18 |

(Unique) Nash Eq. is (M, m)

Col gets 16

NEGATIVE VALUE OF INFORMATION

Cournot duopoly game (moves are production levels)

Row moves first, and Col receives a noise-free observation of Row’s move before Col moves (same payoffs)

(Unique) Nash Eq. is now (H, l)

Col gets only 9

Getting more information costs Col 7
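The Cournot numbers can be reproduced with a few array operations. This is a hedged sketch assuming NumPy is available; the array names are illustrative, and the noise-swamped case is again modeled as a simultaneous-move game restricted to pure strategies.

```python
import numpy as np

# Payoffs from the slide; rows ordered (H, M, L), columns ordered (h, m, l).
ROW_U = np.array([[0, 12, 18],
                  [8, 16, 20],
                  [9, 15, 18]])
COL_U = np.array([[0, 8, 9],
                  [12, 16, 15],
                  [18, 20, 18]])

# Noise-swamped observation: look for cells that are mutual best responses.
row_best = ROW_U == ROW_U.max(axis=0, keepdims=True)  # best row for each column
col_best = COL_U == COL_U.max(axis=1, keepdims=True)  # best column for each row
nash_cells = np.argwhere(row_best & col_best)
col_payoff_blind = COL_U[tuple(nash_cells[0])]

# Noise-free observation: Col best-responds to the move it sees,
# and Row picks the move whose anticipated reply is best for Row.
reply = COL_U.argmax(axis=1)
row_choice = ROW_U[np.arange(3), reply].argmax()
col_payoff_informed = COL_U[row_choice, reply[row_choice]]

value_of_information_to_col = int(col_payoff_informed - col_payoff_blind)
print(nash_cells.tolist(), (int(row_choice), int(reply[row_choice])), value_of_information_to_col)
```

Index pair (1, 1) corresponds to (M, m) and (0, 2) to (H, l), so the sign of the final number is what the slide calls a negative value of information for Col.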

NEGATIVE VALUE OF UTILITY

Change a game by imposing a tax on Row for every move she might make. And she benefits:

Original game:

| | L | R |
|---|---|---|
| T | 10, 10 | -2, 8 |
| B | 12, -2 | 0, 0 |

Nash eq. is (B, R)

Row gets 0

Game after the tax on Row:

| | L | R |
|---|---|---|
| T | 5, 10 | -3, 8 |
| B | 4, -2 | -4, 0 |

Nash eq. is (T, L)

Row gets 5
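Both equilibria above follow from a dominance argument: Row has a strictly dominant move in each game, and Col best-responds to it. A small sketch of that reasoning is given below; the helper name `solve_by_row_dominance` and the dictionary layout are assumptions made for illustration, and the payoffs are the ones on the slide.

```python
# game[(row_move, col_move)] = (row_utility, col_utility), copied from the slide.
BEFORE_TAX = {("T", "L"): (10, 10), ("T", "R"): (-2, 8),
              ("B", "L"): (12, -2), ("B", "R"): (0, 0)}
AFTER_TAX = {("T", "L"): (5, 10), ("T", "R"): (-3, 8),
             ("B", "L"): (4, -2), ("B", "R"): (-4, 0)}


def solve_by_row_dominance(game):
    """Return (row_move, col_reply) if Row has a strictly dominant move, else None."""
    rows = sorted({r for r, _ in game})
    cols = sorted({c for _, c in game})
    for r in rows:
        others = [r2 for r2 in rows if r2 != r]
        if all(game[(r, c)][0] > game[(r2, c)][0] for r2 in others for c in cols):
            # Col best-responds to Row's dominant move.
            reply = max(cols, key=lambda c: game[(r, c)][1])
            return r, reply
    return None


# The tax is the amount removed from Row's payoff in each cell.
tax = {cell: BEFORE_TAX[cell][0] - AFTER_TAX[cell][0] for cell in BEFORE_TAX}
before = solve_by_row_dominance(BEFORE_TAX)
after = solve_by_row_dominance(AFTER_TAX)
print(tax, before, BEFORE_TAX[before][0], after, AFTER_TAX[after][0])
```

Every entry of `tax` is positive, yet Row's equilibrium payoff rises, because the tax flips which of her moves is dominant and thereby changes Col's reply.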

VALUE OF PARAMETERS OF A GAME

1) How do we analyze the value of changes to the parameters of a game?

2) What is the relation between a game’s parameters and the values of those parameters to the players in the game?

3) When is there negative val. of (info., utility) to a player?

4) When is there negative val. of (info., utility) to all players?

5) What are marginal rates of substitution among (values of) information, utility, etc.?

DIFFERENTIAL VALUE OF INFORMATION

1) “Value of a good” to a consumer is marginal utility of increasing

amount of just that good (per unit good).

2) Value of a linear combination of goods?

- Marginal utility of increasing amount of just that linear combination.

3) Value of arbitrary function f(θ) of vector of goods θ?

- Marginal utility of increasing amount of f(θ)

- I.e., directional deriv. of utility along ∇f(θ), divided by |∇f(θ)|²

4) Value of arbitrary function f of the game parameter θ?

Directional deriv. of utility along ∇f(θ), divided by |∇f(θ)|²

[Diagram relating θ, σ_θ, E(u_i), and f]

• θ is any parameter specifying game structure

• σ_θ is joint strategy of players

• E(u_i) is expected utility of player i

• f is a mutual information (or information capacity, or … anything)
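As a rough illustration of the definition, the ratio ⟨∇V, ∇f⟩ / |∇f|² can be evaluated numerically. The sketch below assumes a plain Euclidean metric on θ and central finite differences, and it uses a made-up linear utility V; none of these choices come from the slides, and the function names are hypothetical.

```python
import numpy as np


def numerical_gradient(func, theta, h=1e-6):
    """Central-difference gradient of a scalar function of a parameter vector."""
    theta = np.asarray(theta, dtype=float)
    grad = np.zeros_like(theta)
    for k in range(theta.size):
        step = np.zeros_like(theta)
        step[k] = h
        grad[k] = (func(theta + step) - func(theta - step)) / (2 * h)
    return grad


def differential_value(V, f, theta):
    """Directional derivative of V along grad f, divided by |grad f|^2 (Euclidean metric)."""
    grad_V = numerical_gradient(V, theta)
    grad_f = numerical_gradient(f, theta)
    return float(grad_V @ grad_f) / float(grad_f @ grad_f)


# Hypothetical utility over two "goods": 3 units of utility per unit of good 0, 2 per unit of good 1.
def V(theta):
    return 3.0 * theta[0] + 2.0 * theta[1]


theta0 = np.array([1.0, 1.0])
value_of_good_0 = differential_value(V, lambda t: t[0], theta0)
value_of_bundle = differential_value(V, lambda t: t[0] + t[1], theta0)
print(value_of_good_0, value_of_bundle)
```

With f equal to a single coordinate, the ratio reduces to the ordinary marginal utility of that good, which is the sense in which the definition generalizes item 1 of the list.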

DIFFERENTIAL VALUE OF INFORMATION

Directional deriv. of utility along ∇f(θ), divided by |∇f(θ)|²

1) θ can be noise distribution in an information channel

2) θ can be rationality parameter in a solution concept

- e.g., exponent in a QRE

3) θ can be parameter specifying a utility function

4) θ can be regulator’s choices, e.g., tax rates, regulations, etc.

5) f(θ) can be mutual information between arbitrary nodes of a MAID specified by θ, information capacity between them, rationality of a player (or any other component of θ), etc.

RESULTS

Theorem: ∇f(θ) · ∇V(θ) < |∇f(θ)| |∇V(θ)|

⇒

∃ changes to θ that reduce f (e.g., a channel’s info capacity) but increase V (e.g., expected utility)

Intuition :

If player values anything in addition to information, a change may reduce their information but increase their expected utility
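One way to see why the strict inequality suffices is to write down an explicit direction. The sketch below assumes a Euclidean inner product and uses the candidate d = ∇V/|∇V| − ∇f/|∇f|; this particular construction is an illustration chosen here, not something stated on the slides. By the strict Cauchy-Schwarz inequality, d · ∇f < 0 and d · ∇V > 0 whenever the two gradients do not point in the same direction.

```python
import numpy as np


def info_reducing_direction(grad_f, grad_V, rel_tol=1e-12):
    """Direction that lowers f and raises V to first order, or None if none is found.

    Returns None when a gradient vanishes or when the gradients are
    (numerically) positively collinear, i.e. the theorem's premise fails.
    """
    gf = np.asarray(grad_f, dtype=float)
    gV = np.asarray(grad_V, dtype=float)
    nf, nV = np.linalg.norm(gf), np.linalg.norm(gV)
    if nf == 0.0 or nV == 0.0:
        return None
    if gf @ gV >= nf * nV * (1.0 - rel_tol):
        return None
    return gV / nV - gf / nf


# Hypothetical gradients at some parameter value theta.
grad_f = np.array([1.0, 0.0])
grad_V = np.array([1.0, 1.0])
d = info_reducing_direction(grad_f, grad_V)
print(d, d @ grad_f, d @ grad_V)
```

A small step along `d` therefore decreases f while increasing V, which matches the intuition that a player who values anything besides information can be better off with less of it.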

RESULTS

Theorem: ∇f ∉ Con({∇V_i}), Con({∇V_i}) is pointed

⇒

∃ changes to θ that decrease f (e.g., a channel’s info. capacity) but increase V (e.g., expected utility) for all players

Intuition : Unless a special condition relates the set of all gradients, there exists a change to game parameter that decreases information but is Pareto-preferred
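For a two-dimensional parameter θ, the existence claim can be explored by brute force: scan unit directions and keep those that lower f while raising every player's V_i to first order. This is only a sketch under assumptions (Euclidean inner product, two parameters, hypothetical gradient values); a linear program would be the natural tool in higher dimensions.

```python
import numpy as np


def pareto_info_reducing_directions(grad_f, grads_V, n_angles=3600):
    """Unit directions d in the plane with d.grad_f < 0 and d.grad_V_i > 0 for all i."""
    angles = np.linspace(0.0, 2.0 * np.pi, n_angles, endpoint=False)
    dirs = np.stack([np.cos(angles), np.sin(angles)], axis=1)
    lowers_f = dirs @ np.asarray(grad_f, dtype=float) < 0
    raises_all = np.all(dirs @ np.asarray(grads_V, dtype=float).T > 0, axis=1)
    return dirs[lowers_f & raises_all]


# Hypothetical utility gradients of two players; their conic hull spans angles 0 to 45 degrees.
grads_V = [[1.0, 0.0], [1.0, 1.0]]

outside_cone = pareto_info_reducing_directions([0.0, 1.0], grads_V)   # grad f outside the cone
inside_cone = pareto_info_reducing_directions([2.0, 1.0], grads_V)    # grad f = sum of the two
not_pointed = pareto_info_reducing_directions([0.0, 1.0], [[1.0, 0.0], [-1.0, 0.0]])
print(len(outside_cone), len(inside_cone), len(not_pointed))
```

Directions are found only in the first case, where ∇f lies outside a pointed cone; in the other two cases the scan comes back empty, in line with the two conditions of the theorem.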

1) What is the relation between a game’s parameters and the values of information to the players in the game?

2) When is there negative val. of info. to a player?

3) When is there negative val. of info. to all players?

4) What are marginal rates of substitution among (value of) information, rationality, utility functions, etc.?

Using differential value of information, can answer all these questions - and more

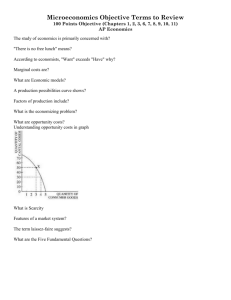

RESULTS

[Figure: follower utility (axis range 2 to 4) against eps (0 to 0.5) and beta (0.01 to 100)]

Follower expected utility plotted against θ = (channel noise, QRE exponent (rationality value))

Noisy leader-follower binary-move game

- Similar for leader

DIFFERENTIAL VALUE OF INFORMATION

Directional deriv. of utility along ∇f(θ), divided by |∇f(θ)|²

Unlike previous approaches, diff. val. of info. is

1) Defined for all edges in an ID

2) Defined for any changes to an edge in a (MA)ID – not just removal

3) Defined separately for each equilibrium in a MAID

4) By evaluating it for multiple f’s, allows us to compare:

• value of information

• value of rationality, etc.

“This much extra information is equivalent to this much extra rationality”

DIFFERENTIAL VALUE OF INFORMATION

Directional deriv. of utility along ∇f(θ), divided by |∇f(θ)|²

1) Requires choosing a metric tensor over space of game param. θ

2) N.b., θ fixes joint distribution over all variables in the MAID

3) So natural choice for metric is Fisher information metric:

Information geometry of noncooperative games
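To make the role of the metric concrete: with a metric tensor g, the gradient vector is g⁻¹∂f, so the ratio becomes (∂V)ᵀ g⁻¹ (∂f) / (∂f)ᵀ g⁻¹ (∂f). The sketch below estimates the Fisher information matrix of a finite distribution by finite differences and plugs it in. The toy family (two independent biased coins) and the partial-derivative vectors `dV` and `df` are hypothetical stand-ins, not quantities taken from the slides.

```python
import numpy as np


def jacobian(p, theta, h=1e-6):
    """Central-difference Jacobian dp(x)/dtheta_k, shape (n_outcomes, n_params)."""
    theta = np.asarray(theta, dtype=float)
    cols = []
    for k in range(theta.size):
        step = np.zeros_like(theta)
        step[k] = h
        cols.append((p(theta + step) - p(theta - step)) / (2 * h))
    return np.stack(cols, axis=1)


def fisher_metric(p, theta):
    """g_kl = sum_x (dp/dtheta_k)(dp/dtheta_l) / p(x); assumes p(x) > 0 for every x."""
    J = jacobian(p, theta)
    return J.T @ (J / p(np.asarray(theta, dtype=float))[:, None])


def differential_value(dV, df, g):
    """<grad V, grad f>_g / |grad f|_g^2, with grad = g^{-1} times the partial derivatives."""
    g_inv_df = np.linalg.solve(g, np.asarray(df, dtype=float))
    return float(np.asarray(dV, dtype=float) @ g_inv_df) / float(np.asarray(df, dtype=float) @ g_inv_df)


# Toy family: joint distribution of two independent coins with biases theta[0], theta[1].
def two_coins(theta):
    a, b = theta
    return np.array([a * b, a * (1 - b), (1 - a) * b, (1 - a) * (1 - b)])


theta0 = np.array([0.25, 0.5])
g = fisher_metric(two_coins, theta0)
dV, df = [3.0, 2.0], [1.0, 1.0]            # hypothetical partial derivatives at theta0
value_fisher = differential_value(dV, df, g)
value_euclid = differential_value(dV, df, np.eye(2))
print(g, value_fisher, value_euclid)
```

The two values differ, which is the point of the slide: the number obtained depends on the metric, so a principled choice such as the Fisher metric is needed before values can be compared.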

QUANTIFYING AMOUNT OF INFORMATION

1) Sample P(x) to get an x.

2) Then sample P(y | x) to get a y.

3) How much “information” is in that y, concerning that x?

Gain in accuracy of predicting x using P(x | y) rather than P(x)

4) Formalize this as a difference: log-likelihood of that x, evaluated under P(x | y), minus log-likelihood of that x, mistakenly evaluated under P(x)

5) Average this difference over x and y:

Average amount of information in y concerning x: Shannon’s mutual information between y and x
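The five steps translate directly into code: form the joint distribution, take log P(x | y) − log P(x) for each pair, and average under the joint. The sketch below assumes NumPy and measures information in bits; the binary channel used as an example is an illustrative choice.

```python
import numpy as np


def mutual_information(p_x, p_y_given_x):
    """Average of log2 P(x|y) - log2 P(x) over the joint distribution, in bits.

    p_x has shape (n_x,); p_y_given_x has shape (n_x, n_y) with rows summing to 1.
    """
    p_x = np.asarray(p_x, dtype=float)
    p_y_given_x = np.asarray(p_y_given_x, dtype=float)
    joint = p_x[:, None] * p_y_given_x            # P(x, y)
    p_y = joint.sum(axis=0)                       # P(y)
    support = joint > 0                           # skip zero-probability pairs
    # P(x|y) / P(x) equals P(x, y) / (P(x) P(y)).
    ratio = joint[support] / (p_x[:, None] * p_y[None, :])[support]
    return float(np.sum(joint[support] * np.log2(ratio)))


def binary_channel(eps):
    """Channel that flips a binary input with probability eps."""
    return np.array([[1 - eps, eps], [eps, 1 - eps]])


uniform = [0.5, 0.5]
noise_free = mutual_information(uniform, binary_channel(0.0))
noise_swamped = mutual_information(uniform, binary_channel(0.5))
partly_noisy = mutual_information(uniform, binary_channel(0.1))
print(noise_free, noise_swamped, partly_noisy)
```

In this vocabulary, a noise-free observation of a uniform binary move carries one bit and a noise-swamped observation carries none.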

RESULTS

[Equation not recoverable from the extracted text. Its terms are the Hessians ∂²f/∂θ_i∂θ_j and ∂²V/∂θ_i∂θ_j, the ratio |∇f(θ)| / |∇V(θ)|, and the quantities |∇V(θ)|² and ∇V(θ)_i ∇V(θ)_j, joined by an equality.]

1) One of our ECS slides giving a player's mixed strategy as a function of beta and epsilon

2) The associated slide giving expected utility as a function of beta and epsilon

3) 2-player game Edgeworth box plotting functions for only one of the players

(to keep the figure clear). Use this to illustrate our definition of value of info.

4) The associated mutual information and information capacity heat maps.

5) (3) again, to illustrate Prop. 1 visually.

- Formal statement of Prop. 1

6) (3) again, to illustrate Prop. 3 visually.

- Formal statement of Prop. 3

7) Edgeworth box showing functions for both players, illustrating Prop. 4 visually.

Formal statement of Prop. 4

INFLUENCE DIAGRAMS

Game against Nature

- represented as player-fixed Bayes net:

Chance Nodes C (pre-fixed CPs) model Nature, player observations.

Decision Nodes D (player fixes CPs at D, D’, D’’) give player’s actions

The player sets CP(s) of her decision nodes based on expected utility of resultant full Bayes net

[Diagram: influence diagram with chance nodes C_1, C_2, C_3 and decision nodes D, D’, D’’]

PREVIOUS APPROACH TO VALUE OF INFO. IN GAMES AGAINST NATURE

1) Calc. maximal expected utility with red edge – U_1

2) Calc. maximal expected utility without red edge – U_2

3) “Value” of red edge = U_1 - U_2

4) Cannot measure value of edges into chance nodes

5) Cannot measure value of changes to CPs of edges

6) Cannot measure value of nodes

[Diagram: influence diagram with chance nodes C_1, C_2, C_3 and decision nodes D, D’, D’’; one edge is drawn in red]
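Steps 1 to 3 can be sketched for the simplest possible game against Nature: one hidden state, one observation, one decision. The model below is a hypothetical example (the state space, channel, and utility table are assumptions, not the diagram on the slide); the "red edge" is the edge from the observation to the decision node.

```python
import numpy as np


def max_expected_utility(p_state, p_obs_given_state, utility, observe):
    """Maximal expected utility of a single decision maker.

    utility[s, a] is the payoff of action a in state s. With observe=True the
    action may depend on the observation; otherwise one action is chosen blind.
    """
    p_state = np.asarray(p_state, dtype=float)
    utility = np.asarray(utility, dtype=float)
    if not observe:
        return float((p_state @ utility).max())
    joint = p_state[:, None] * np.asarray(p_obs_given_state, dtype=float)  # P(state, obs)
    # For each observation, pick the action with the best joint-weighted payoff.
    return float((joint.T @ utility).max(axis=1).sum())


p_state = [0.5, 0.5]
guess_the_state = np.eye(2)                     # payoff 1 for matching the state, else 0
channel = np.array([[0.9, 0.1], [0.1, 0.9]])    # observation flips the state with prob. 0.1

U1 = max_expected_utility(p_state, channel, guess_the_state, observe=True)   # with the edge
U2 = max_expected_utility(p_state, channel, guess_the_state, observe=False)  # without the edge
value_of_edge = U1 - U2
print(U1, U2, value_of_edge)
```

Note that this procedure only compares the presence and absence of an edge into a decision node, which is the limitation listed in items 4 to 6.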

MULTI-AGENT INFLUENCE DIAGRAMS

Multi-Player Game

- represented as player-fixed Bayes net:

Chance Nodes C give states, observations, etc. (fixed CPs)

Decision Nodes D_i give i’s actions (player i sets CP at D_i)

Players set the CP(s) of their decision nodes based on expected utility of resultant full Bayes net.

[Diagram: multi-agent influence diagram with chance nodes C_1, C_2, C_3, two decision nodes labeled D_1, and one decision node labeled D_2]

PREVIOUS APPROACH TO VALUE OF INFO. IN MULTI-PLAYER GAMES

1) Extensive form rep. used (not MAIDs)

2) Provides ordinal “value” of different information partitions

3) Cannot use for numeric analysis

4) Difficulties comparing arbitrary information partitions

5) Cannot apply to games with multiple equilibria

[Diagram: multi-agent influence diagram with chance nodes C_1, C_2, C_3, two decision nodes labeled D_1, and one decision node labeled D_2]

SHANNON INFORMATION THEORY

1) Very deep and powerful formalization of information

2) Arises in earlier work on games, e.g., as

• Tool to formalize modeler’s uncertainty

• Model of bounded rational players

- Quantal Response Equilibrium – QRE

• Analogy with statistical physics

• Total payoff in multi-stage games with multiplicative payoffs

3) Never used before to quantify information within the game

(e.g., as mutual information, information capacity, etc.)

SHANNON INFORMATION THEORY

How can we use Shannon information theory to analyze value of information in games?

Can we answer our motivating questions if we figure out how to answer this one?

Yes

MUST BE MORE SPECIFIC ….

• Value of information

• {Marginal} value of information

• Marginal value of information {capacity between v_1 and v_2} or

• Marginal value of {mutual} information {between v_1 and v_2} or

• Marginal value of {multi} information {among v_1, v_2, v_3, …}

…

• Marginal value of mutual information between v_1 and v_2 {to player i}

ROADMAP

1) Graphical models of games

2) Previous work involving Shannon Info. Theory and games

3) From marginal utility to value of information; information geometry of games

4) Theorems and plots

NEGATIVE VALUE OF INFO. TO ALL PLAYERS

Braess’ paradox:

t = commute time for each player on indicated road,

T = total number of players on indicated road,

4000 players total,

cost = 4 for a player to try to go from A to B,

A priori probability that road from A to B is open = .1

All players get noise-swamped signal of whether A-to-B is open

Nash Eq: 2000 players go from Start to A to End, 2000 players go from Start to B to End.

All players have 65 minute commute

NEGATIVE VALUE OF INFO. TO ALL PLAYERS

Braess’ paradox (same setup):

All players get noise-free signal of whether A-to-B is open

Nash Eq: Same as before when A-to-B is closed (.9 probability)

When A-to-B is open, all players go from Start to A to B to End, in which case all players have 84 minute commute

When all players get extra information (is A to B open?), either no change in commute time (.9 probability), or all players have 19 minute longer commute (.1 probability)
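As a quick worked check using only the numbers on the slide, the expected commute under the noise-free signal is the probability-weighted average of the two cases, which can be compared with the 65 minute commute under the noise-swamped signal:

```latex
\mathbb{E}[t_{\text{noise-free}}]
  = 0.9 \times 65 + 0.1 \times 84
  = 58.5 + 8.4
  = 66.9 \text{ minutes},
\qquad
66.9 - 65 = 1.9 \text{ minutes}.
```

So in expectation every player's commute is 1.9 minutes longer once all players receive the extra information.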

VALUE OF INFORMATION

1) What is the relation between a game’s parameters and the values of information to the players in the game?

2) When is there negative val. of info. to a player?

3) When is there negative val. of info. to all players?

VALUE OF … ANYTHING?

Can we analyze value of tax, regulation, etc., with the same formalism?

DIFFERENTIAL VALUE OF INFORMATION

Row moves first, and Col receives a noisy

observation of Row’s move before Col moves

| | h | m |
|---|---|---|
| H | 0, 0 | 3, -1 |
| M | -2, 1 | 2, 3 |

RESULTS

[Figure: leader strategy (axis range 0 to 1) against eps (0 to 0.5) and beta (0.01 to 100)]

Leader mixed strategy plotted against θ = (channel noise, QRE exponent (rationality value))

Noisy leader-follower binary-move game

- Similar for follower
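A surface of this kind could be generated along the following lines. The sketch is not the authors' model: it assumes a binary symmetric channel with flip probability eps, logit (softmax) responses with a shared exponent beta for both players, the 2×2 payoffs from the earlier leader-follower slide, and a damped fixed-point iteration whose convergence is not guaranteed. All function and variable names are hypothetical.

```python
import numpy as np

# Payoffs from the 2x2 leader-follower slide; rows (H, M), columns (h, m).
U_LEADER = np.array([[0.0, 3.0], [-2.0, 2.0]])
U_FOLLOWER = np.array([[0.0, -1.0], [1.0, 3.0]])


def softmax(z):
    """Numerically stable softmax along the last axis."""
    z = z - z.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)


def logit_qre(eps, beta, n_iter=2000, damping=0.5):
    """Damped fixed-point iteration for a logit QRE of the noisy leader-follower game.

    Returns (p, q, follower_eu): p[x] is the leader's mixed strategy, q[s, y] the
    follower's strategy given signal s, and follower_eu the follower's expected utility.
    """
    channel = np.array([[1 - eps, eps], [eps, 1 - eps]])   # P(signal s | move x)
    p = np.full(2, 0.5)
    q = np.full((2, 2), 0.5)
    for _ in range(n_iter):
        joint = p[:, None] * channel                        # P(x, s)
        posterior = joint / joint.sum(axis=0, keepdims=True)  # P(x | s), one column per s
        # Follower: logit response to expected utility under the posterior.
        q_new = softmax(beta * (posterior.T @ U_FOLLOWER))
        # Leader: logit response to expected utility given the follower's strategy.
        leader_payoff = np.einsum("xs,sy,xy->x", channel, q_new, U_LEADER)
        p_new = softmax(beta * leader_payoff)
        p = damping * p + (1 - damping) * p_new
        q = damping * q + (1 - damping) * q_new
    follower_eu = float(np.einsum("x,xs,sy,xy->", p, channel, q, U_FOLLOWER))
    return p, q, follower_eu


p_random, q_random, eu_random = logit_qre(eps=0.1, beta=0.0)
p_blind, q_blind, eu_blind = logit_qre(eps=0.5, beta=2.0)
print(p_random, eu_random, q_blind)
```

Two sanity checks hold by construction: at beta = 0 both players randomize uniformly, and at eps = 0.5 the follower's strategy cannot depend on the signal. Evaluating `logit_qre` on a grid of (eps, beta) values would give surfaces comparable in spirit to the plotted ones, subject to the modeling assumptions above.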

RESULTS

[Figure: level curves of CHANNEL-CAPACITY, UTIL-LEADER, and UTIL-FOLLOWER (legend title: Branch 4); both axes run from 0 to 0.5, one labeled eps1; color scale from 2 to 5]

Level surfaces of expected utilities and channel capacity plotted against channel parameters in noisy leader-follower binary-move game.

RESULTS

[Figure: level curves of CHANNEL-CAPACITY, UTIL-LEADER, and UTIL-FOLLOWER against the channel parameters (axis label: eps1)]

Theorem: [Condition not recoverable from the extracted text. Its terms are the Hessians ∂²f/∂θ_i∂θ_j and ∂²V/∂θ_i∂θ_j, the ratio |∇f(θ)| / |∇V(θ)|, and the quantities |∇V(θ)|² and ∇V(θ)_i ∇V(θ)_j.]

⇒

The set {θ : no changes to θ reduce f while increasing V} has measure 0

RESULTS

[Figure: level curves of CHANNEL-CAPACITY, UTIL-LEADER, and UTIL-FOLLOWER against the channel parameters (axis label: eps1)]

Intuition: Unless a special condition between Hessians and gradients holds for all values of game parameter θ, there exists a game parameter θ and changes to it that decrease information but increase expected utility

RESULTS

[Figure: level curves of CHANNEL-CAPACITY, UTIL-LEADER, and UTIL-FOLLOWER against the channel parameters (axis label: eps1)]

Theorem: ∇f ∉ Con({∇V_i}), Con({∇V_i}) is pointed

⇒

∃ changes to θ that increase f (e.g., a channel’s info. capacity) and increase V (e.g., expected utility) for all players

RESULTS

[Figure: level curves of CHANNEL-CAPACITY, UTIL-LEADER, and UTIL-FOLLOWER against the channel parameters (axis label: eps1)]

Intuition: Unless a special condition relates the set of all gradients, there exists a change to game parameter that increases information and is Pareto-preferred

RESULTS

[Figure: level curves of CHANNEL-CAPACITY, UTIL-LEADER, and UTIL-FOLLOWER against the channel parameters (axis label: eps1)]

• Issue is Pareto-improving change to θ, not Pareto-improving change to mixed strategy profile

• Not uncommon that the “special condition” does hold. Example: constant-sum games.

• Generically, if the number of players < dim(θ), ∃ both changes to θ that:

i) Increase f and are Pareto-improving

ii) Decrease f and are Pareto-improving.