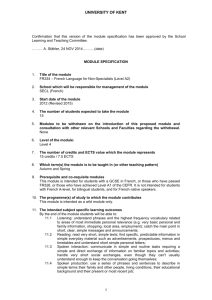

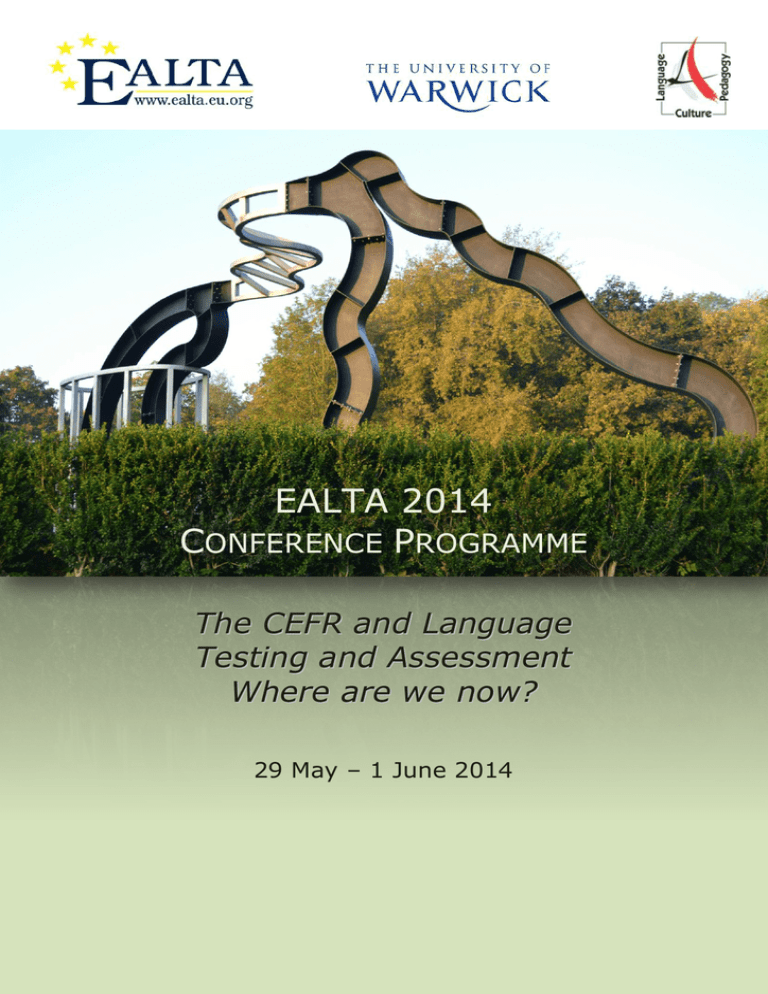

EALTA 2014 C P
