Assessment Thursday Notes – 11/15/12


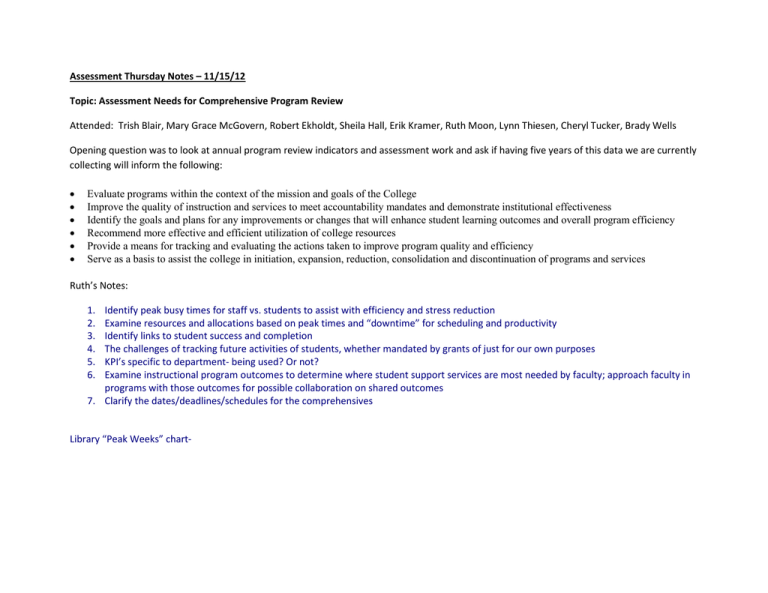

Topic: Assessment Needs for Comprehensive Program Review

Attended: Trish Blair, Mary Grace McGovern, Robert Ekholdt, Sheila Hall, Erik Kramer, Ruth Moon, Lynn Thiesen, Cheryl Tucker, Brady Wells

The opening question was to look at annual program review indicators and assessment work and ask whether having five years of the data we are currently collecting will inform the following:

• Evaluate programs within the context of the mission and goals of the College
• Improve the quality of instruction and services to meet accountability mandates and demonstrate institutional effectiveness
• Identify the goals and plans for any improvements or changes that will enhance student learning outcomes and overall program efficiency
• Recommend more effective and efficient utilization of college resources
• Provide a means for tracking and evaluating the actions taken to improve program quality and efficiency
• Serve as a basis to assist the college in initiation, expansion, reduction, consolidation and discontinuation of programs and services

Ruth’s Notes:

1. Identify peak busy times for staff vs. students to assist with efficiency and stress reduction
2. Examine resources and allocations based on peak times and “downtime” for scheduling and productivity
3. Identify links to student success and completion
4. The challenges of tracking future activities of students, whether mandated by grants or just for our own purposes
5. KPIs specific to each department: being used or not?
6. Examine instructional program outcomes to determine where student support services are most needed by faculty; approach faculty in programs with those outcomes for possible collaboration on shared outcomes
7. Clarify the dates/deadlines/schedules for the comprehensives

Library “Peak Weeks” chart: The numbers across the bottom are the week numbers; the vertical axis is the number of sessions in that 11-year time frame.
I had it going back to 2000 but decided to use only the 16-week semesters. That’s over 700 workshops total; average 25-30 per semester. So now you know: if you need a project worked on, Ruth is free after the 12th week!

Cheryl’s notes:

For example, if we want to address the issue of staffing for peak busy times for service areas, are we gathering data about service usage? Library and DSPS gather this data. The Library has 12 years of survey information as well as other ways it has monitored use.

Are we getting the data that will show we are supporting the college mission? In what years did we have good completions and achievements? Compare staffing, resources, and types of service delivery for those years and identify what supported success. What is the impact if you lose or gain staff?

Identify cost-effective solutions that work. Example: UB reduced the hours of summer employees from 40 to 30 hours a week by creating a more flexible schedule and prioritizing tasks differently. The scheduling was complicated, but it saved about $5,000 per year for the program.

We should track conduct violations to ensure there is more support for peak times. Is there a correlation between stress for students vs. staff? If we identify behavior trends, then we can also prepare staff. Another topic to add to the next survey could be what causes stress, or we could gather this information when we are conducting workshops.

First two weeks: what type of data have we collected, or can we collect, to know that the Week One Welcome is helpful? How do we assess the value of activities like this without surveys?

Linking library measures to student completions has been difficult. Others are struggling with this as well because it isn’t a defined population: it’s really all students, and sometimes community members who have been students or are thinking about being students.
How can we connect our work supporting learning to what is happening in the classrooms? We need more information from faculty on what supports are working, and other data such as how many do a research assignment.

Discussion took off surrounding the identification of course learning outcomes that could be connected to student development support services. We have some programs that have already made connections to instruction, such as the educational planning work in general studies courses that is supported by Counseling/Advising and assessed for both the courses and the student development area. Could there be others?

It was suggested that we identify a small sample, perhaps starting with four instructors who have an outcome related to, for example, how to use the library database. Another course-level assessment that could connect to student development is “displays characteristics of an active learner.” We need to look at all course SLOs for connections to student development. (Ruth has already looked up course-level outcomes connected to the library and will send those to Erik.)

The Student Development Assessment Group (SDAG) will work with the Assessment Committee to develop this plan further to include flex or other supportive activities.