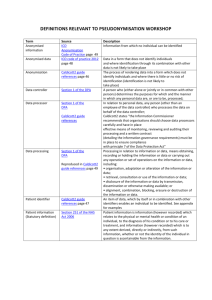

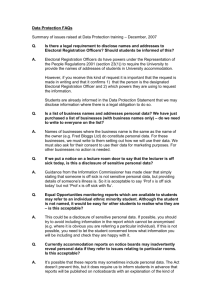

Anonymisation: code of practice managing data
