Document 11676847

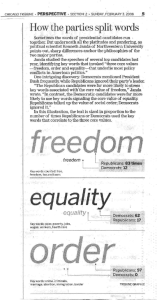
