Exploiting More ILP

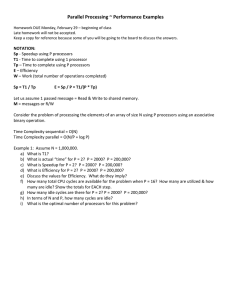

Slide Set #20: Advanced Pipelining, Multiprocessors, and El Grande Finale
Chapter 7

Exploiting More ILP
• ILP = Instruction-Level Parallelism (parallelism within a single program)
• How can we exploit more ILP?
  1. Deeper pipelining (split execution into many stages)
  2. Multiple issue (start executing more than one instruction each cycle)

Multiple Issue Processors
• Key metric: CPI → IPC (instructions per cycle, the inverse of CPI)
• Key questions:
  1. What set of instructions can be issued together?
  2. Who decides which instructions to issue together?
     – Static multiple issue
     – Dynamic multiple issue

Multi-processing in SOME form… (chapter 7)
1. Multi-processors – multiple CPUs in a system
2. Multi-core – multiple CPUs on a single chip
3. Clusters – machines on a network working together
[Figure: three processors, each with its own cache, connected by a single bus to memory and I/O]
• Idea: create powerful computers by connecting many smaller ones
  – Good news: works for timesharing (better than supercomputer)
  – Bad news: it's really hard to write good concurrent programs; many commercial failures

Who? When? Why?
• “For over a decade prophets have voiced the contention that the organization of a single computer has reached its limits and that truly significant advances can be made only by interconnection of a multiplicity of computers in such a manner as to permit cooperative solution…. Demonstration is made of the continued validity of the single processor approach…”
• “…it appears that the long-term direction will be to use increased silicon to build multiple processors on a single chip.”

Multiprocessor/core: How do processors SHARE data?
1. Shared variables in memory
   [Figure: processors with caches on a single bus to one memory (“Symmetric Multiprocessor”, “Uniform Memory Access” multiprocessor) OR processors with caches and separate memories joined by a network (“Non-Uniform Memory Access” multiprocessor)]
2. Send explicit messages between processors
   [Figure: processors, each with its own cache and memory, connected by a network]

Flynn’s Taxonomy of multiprocessors (1966)
1. Single instruction stream, single data stream
2. Single instruction stream, multiple data streams
3. Multiple instruction streams, single data stream
4. Multiple instruction streams, multiple data streams

Multiprocessor/core: How do processors COORDINATE?
• synchronization
• built-in send / receive primitives
• operating system protocols

Example Multi-Core Systems (part 1)
• 2 × quad-core Intel Xeon e5345 (Clovertown)
• 2 × quad-core AMD Opteron X4 2356 (Barcelona)

Example Multi-Core Systems (part 2)
• 2 × oct-core Sun UltraSPARC T2 5140 (Niagara 2)
• 2 × oct-core IBM Cell QS20

Clusters
• Constructed from whole computers
• Independent, scalable networks
• Strengths:
  – Many applications amenable to loosely coupled machines
  – Exploit local area networks
  – Cost effective / Easy to expand
• Weaknesses:
  – Administration costs not necessarily lower
  – Connected using I/O bus
• Highly available due to separation of memories
• Approach taken by Google etc.

A Whirlwind tour of Chip Multiprocessors and Multithreading
Slides from Joel Emer’s talk at Microprocessor Forum

Instruction Issue
[Figure: function unit utilization over time]
Reduced function unit utilization due to….

Superscalar Issue
[Figure: function unit utilization over time]
Superscalar leads to more performance, but lower utilization

Chip Multiprocessor
[Figure: function unit utilization over time]
Limited utilization when only running one thread

Fine Grained Multithreading
[Figure: function unit utilization over time]
Intra-thread dependencies still limit performance

Simultaneous Multithreading
[Figure: function unit utilization over time]
Maximum utilization of function units by independent operations

Concluding Remarks
• Goal: higher performance by using multiple processors / cores
• Difficulties
  – Developing parallel software
  – Devising appropriate architectures
• Many reasons for optimism
  – Changing software and application environment
  – Chip-level multiprocessors with lower latency, higher bandwidth interconnect
• An ongoing challenge!
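
Example: sharing data two ways
The two options from the "How do processors SHARE data?" slide can be sketched in software. This is only an illustration, not part of the original slides: Python threads stand in for processors, and the function names `shared_memory_sum` and `message_passing_sum` are made up for this example. The first version updates a shared variable under a lock (synchronization); the second shares nothing and sends each partial result as an explicit message (send / receive).

```python
import queue
import threading


def shared_memory_sum(values, n_workers=4):
    """Option 1: workers update one shared variable, guarded by a lock."""
    total = 0
    lock = threading.Lock()

    def worker(chunk):
        nonlocal total
        partial = sum(chunk)   # private work, no coordination needed
        with lock:             # synchronization: one writer at a time
            total += partial

    chunks = [values[i::n_workers] for i in range(n_workers)]
    threads = [threading.Thread(target=worker, args=(c,)) for c in chunks]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return total


def message_passing_sum(values, n_workers=4):
    """Option 2: workers share no variables and send explicit messages."""
    mailbox = queue.Queue()

    def worker(chunk):
        mailbox.put(sum(chunk))   # "send" the partial result

    chunks = [values[i::n_workers] for i in range(n_workers)]
    threads = [threading.Thread(target=worker, args=(c,)) for c in chunks]
    for t in threads:
        t.start()
    # "receive" exactly one message per worker
    result = sum(mailbox.get() for _ in range(n_workers))
    for t in threads:
        t.join()
    return result


if __name__ == "__main__":
    data = list(range(1000))
    print(shared_memory_sum(data), message_passing_sum(data))
```

Both functions return the same sum; the difference is where the coordination happens. In the shared-variable version the lock is what keeps two workers from corrupting `total`, while in the message version the queue is the only point of contact between workers. The sketch models coordination only and is not meant to demonstrate a speedup.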