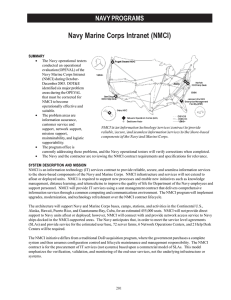

GAO INFORMATION TECHNOLOGY DOD Needs to Ensure
