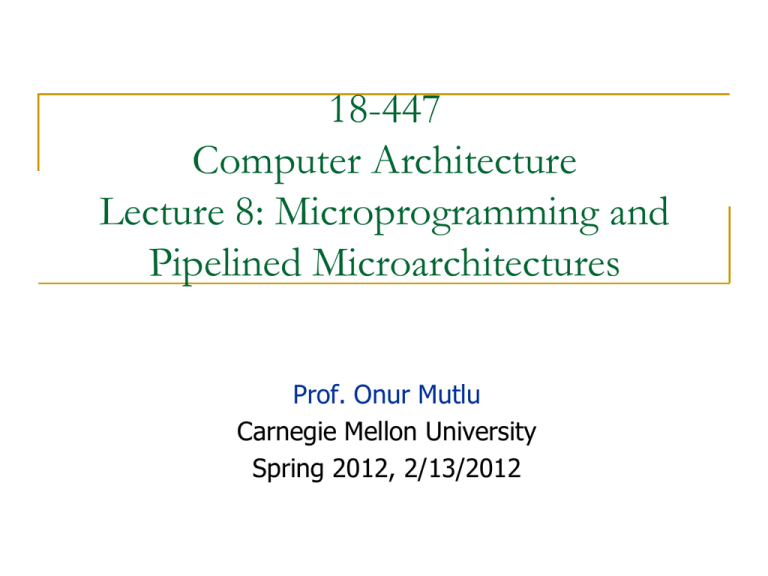

18-447

Computer Architecture

Lecture 8: Microprogramming and Pipelined Microarchitectures

Prof. Onur Mutlu

Carnegie Mellon University

Spring 2012, 2/13/2012

Reminder: Homeworks

Homework 2

Due today

ISA concepts, ISA vs. microarchitecture, microcoded machines

Homework 3

Will be out tomorrow


Homework 1 Grades

[Figure: histogram of Homework 1 grades. x-axis: Grade (50 to 110); y-axis: Number of Students (0 to 35).]

Average: 100

Median: 103

Max: 110

Min: 55

Max Possible Points: 110

Total number of students: 56

Reminder: Lab Assignments

Getting your Lab 1 fully correct

We will allow resubmission once, just for the purposes of testing the correctness of your revised code (no regrading)

Lab Assignment 2

Due Friday, Feb 17, at the end of the lab

Individual assignment

No collaboration; please respect the honor code

Lab Assignment 3

Will be out Wednesday


Reminder: Extra Credit for Lab Assignment 2

Complete your normal (single-cycle) implementation first, and get it checked off in lab.

Then, implement the MIPS core using a microcoded approach similar to what we are discussing in class.

We are not specifying any particular details of the microcode format or the microarchitecture; you should be creative.

For the extra credit, the microcoded implementation should execute the same programs that your ordinary implementation does, and you should demo it by the normal lab deadline.

Readings for Today

Pipelining

P&H Chapter 4.5-4.8


Readings for Next Lecture

Required

Pipelined LC-3b Microarchitecture Handout

Optional

Hamacher et al. book, Chapter 6, “Pipelining”


Announcement: Discussion Sessions

Lab sessions are really discussion sessions

TAs will lead recitations

Go over past lectures

Answer and ask questions

Solve problems and homeworks

Please attend any session you wish

Tue 10:30am-1:20pm (Chris)

Thu 1:30-4:20pm (Lavanya)

Fri 6:30-9:20pm (Abeer)


An Exercise in Microcoding


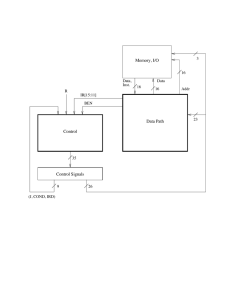

A Simple LC-3b Control and Datapath


[Figure: state machine of the LC-3b. Every instruction passes through the fetch and decode states; the LDW path is listed after them.]

State 18, 19: MAR ← PC; PC ← PC + 2

State 33: MDR ← M (repeats until R is asserted)

State 35: IR ← MDR

State 32: BEN ← IR[11] & N + IR[10] & Z + IR[9] & P; then dispatch on [IR[15:12]]

State 6 (LDW): MAR ← B+LSHF(off6,1)

State 25: MDR ← M[MAR] (repeats until R is asserted)

State 27: DR ← MDR; set CC; To 18

NOTES

B+off6 : Base + SEXT[offset6]

PC+off9 : PC + SEXT[offset9]

*OP2 may be SR2 or SEXT[imm5]

** [15:8] or [7:0] depending on MAR[0]

State Machine for LDW

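Microsequencer

[Figure, from Appendix C, "The Microarchitecture of the LC-3b, Basic Machine": inputs are COND1, COND0, BEN, R, IR[11], J[5:0], IRD, and IR[15:12]. Depending on COND, Branch (BEN) is ORed into J[2], Ready (R) into J[1], and Addr. Mode (IR[11]) into J[0]. IRD selects between the resulting 6-bit value and 0,0,IR[15:12] to form the 6-bit Address of Next State.]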

Figure C.5: The microsequencer of the LC-3b base machine

State 18 (010010)

State 33 (100001)

State 35 (100011)

State 32 (100000)

State 6 (000110)

State 25 (011001)

State 27 (011011)

unused opcodes, the microarchitecture would execute a sequence of microinstructions, starting at state 10 or state 11, depending on which illegal opcode was being decoded. In both cases, the sequence of microinstructions would respond to the fact that an instruction with an illegal opcode had been fetched.

Several signals necessary to control the data path and the microsequencer are not among those listed in Tables C.1 and C.2. They are DR, SR1, BEN, and R. Figure C.6 shows the additional logic needed to generate DR, SR1, and BEN.

The remaining signal, R, is a signal generated by the memory in order to allow the

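[Figure, from Section C.4, "The Control Structure": (a) DRMUX selects DR from IR[11:9] or the constant 111; (b) SR1MUX selects SR1 from IR[11:9] or IR[8:6]; (c) a logic block computes BEN from IR[11:9] and the condition codes N, Z, P.]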

Figure C.6: Additional logic required to provide control signals
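As a rough illustration of part (c) of Figure C.6, the BEN logic can be modeled in a few lines of Python. The expression is the one shown in state 32 of the state machine (BEN ← IR[11] & N + IR[10] & Z + IR[9] & P); the function name `branch_enable` and the representation of IR as an integer are assumptions made only for this sketch.

```python
def branch_enable(ir, n, z, p):
    """Model of BEN <- IR[11] & N + IR[10] & Z + IR[9] & P.

    ir is the 16-bit instruction word as an int; n, z, p are the
    condition codes as 0/1. Hypothetical helper, not part of any
    LC-3b tool.
    """
    ir11 = (ir >> 11) & 1
    ir10 = (ir >> 10) & 1
    ir9 = (ir >> 9) & 1
    return (ir11 & n) | (ir10 & z) | (ir9 & p)


# A branch with only IR[10] set is taken when Z = 1, not when only N = 1.
print(branch_enable(0x0400, n=0, z=1, p=0))  # 1
print(branch_enable(0x0400, n=1, z=0, p=0))  # 0
```

Because BEN depends on the condition codes produced in the datapath, it is one of the signals that has to come from logic rather than from the control store.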

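[Figure: the control structure. The microsequencer takes R, IR[15:11], BEN, and the 9 sequencing bits (J, COND, IRD) of the current microinstruction, and produces a 6-bit address into the control store. The control store is 2^6 x 35 bits. Of each 35-bit microinstruction, 9 bits go back to the microsequencer and 26 bits control the datapath.]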


[Figure: the control store contents. One row per state, addressed by the 6-bit state number, from 000000 (State 0) through 111111 (State 63). Columns: IRD, Cond, J, LD.MAR, LD.MDR, LD.IR, LD.BEN, LD.REG, LD.CC, LD.PC, GatePC, GateMDR, GateALU, GateMARMUX, GateSHF, PCMUX, DRMUX, SR1MUX, ADDR1MUX, ADDR2MUX, MARMUX, ALUK, MIO.EN, R.W, DATA.SIZE, LSHF1.]


The Microsequencer: Some Questions

When is the IRD signal asserted?

What happens if an illegal instruction is decoded?

What are condition (COND) bits for?

How is variable latency memory handled?

How do you do the state encoding?

Minimize number of state variables

Start with the 16-way branch

Then determine constraint tables and states dependent on COND
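Several of the questions above can be explored with a small model of the next-state logic in Figure C.5. The following is only a sketch: the function name `next_state` is hypothetical, and the COND encoding (1 = memory ready, 2 = branch, 3 = addressing mode) is assumed to follow Appendix C.

```python
def next_state(j, cond, ird, ir, ben, r):
    """Sketch of the next-state address logic of Figure C.5.

    j      : 6-bit J field of the current microinstruction
    cond   : 2-bit COND field (assumed: 0 unconditional, 1 memory
             ready, 2 branch, 3 addressing mode)
    ird    : 1 in the decode state, else 0
    ir     : 16-bit instruction register
    ben, r : branch-enable and memory-ready bits (0/1)
    """
    if ird:
        # 16-way branch on the opcode: next state is 0,0,IR[15:12]
        return (ir >> 12) & 0xF
    branch = int(cond == 2 and ben == 1)                  # ORed into J[2]
    ready = int(cond == 1 and r == 1)                     # ORed into J[1]
    addr_mode = int(cond == 3 and ((ir >> 11) & 1) == 1)  # ORed into J[0]
    return j | (branch << 2) | (ready << 1) | addr_mode


print(next_state(33, 1, 0, 0, 0, 0))       # 33: memory not ready, stay
print(next_state(33, 1, 0, 0, 0, 1))       # 35: R asserted
print(next_state(0, 0, 1, 0x6000, 0, 0))   # 6: decode of opcode 0110
```

The sketch also shows why the state encoding matters: a state that tests a condition needs a J value with a 0 in the bit that the condition can set, so that the two successor states differ in exactly that bit (33 versus 35, for instance).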


The Control Store: Some Questions

What control signals can be stored in the control store?

vs.

What control signals have to be generated in hardwired

logic?

i.e., what signal cannot be available without processing in the

datapath?


Variable-Latency Memory

The ready signal (R) enables memory read/write to execute

correctly

Example: transition from state 33 to state 35 is controlled by

the R bit asserted by memory when memory data is available

Could we have done this in a single-cycle

microarchitecture?
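To make the role of R concrete, here is a minimal sketch of the fetch sequence in which the machine stays in state 33 until memory signals ready. The function name `fetch_trace` and the assumption that R is asserted during the `mem_latency`-th cycle spent in state 33 are illustrative only.

```python
def fetch_trace(mem_latency):
    """Return the states visited while fetching one instruction.

    Assumption for this sketch: memory asserts R during the
    mem_latency-th cycle spent in state 33 (mem_latency >= 1).
    """
    trace = [18]                   # MAR <- PC, PC <- PC + 2
    waited = 0
    while True:
        trace.append(33)           # MDR <- M, waiting on R
        waited += 1
        if waited >= mem_latency:  # R asserted: leave state 33
            break
    trace.append(35)               # IR <- MDR
    trace.append(32)               # compute BEN, then decode
    return trace


print(fetch_trace(1))  # [18, 33, 35, 32]
print(fetch_trace(3))  # [18, 33, 33, 33, 35, 32]
```

The number of cycles an instruction takes varies with memory latency, while the microcode itself stays the same.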


The Microsequencer: Advanced Questions

What happens if the machine is interrupted?

What if an instruction generates an exception?

How can you implement a complex instruction using this

control structure?

Think REP MOVS


The Power of Abstraction

The concept of a control store of microinstructions gives the hardware designer a new abstraction: microprogramming

The designer can translate any desired operation to a sequence of microinstructions

All the designer needs to provide is

The sequence of microinstructions needed to implement the

desired operation

The ability for the control logic to correctly sequence through

the microinstructions

Any additional datapath control signals needed (no need if the

operation can be “translated” into existing control signals)


Let’s Do Some Microprogramming

Implement REP MOVS in the LC-3b microarchitecture

What changes, if any, do you make to the

state machine?

datapath?

control store?

microsequencer?

Show all changes and microinstructions

Coming up in Homework 3


Aside: Alignment Correction in Memory

Remember unaligned accesses

LC-3b has byte load and byte store instructions that move

data not aligned at the word-address boundary

Convenience to the programmer/compiler

How does the hardware ensure this works correctly?

Take a look at state 29 for LDB

State 17 for STB

Additional logic to handle unaligned accesses
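The byte-load path can be sketched as follows. State 29 reads the aligned word M[MAR[15:1]'0], and the additional logic then selects one byte based on MAR[0] and sign-extends it. The function name `load_byte`, the dictionary-based memory, and the choice that MAR[0] = 1 selects bits [15:8] are assumptions made for this sketch.

```python
def load_byte(memory, mar):
    """Sketch of LDB: read the aligned word, then pick and extend a byte.

    memory maps even byte addresses to 16-bit words (an assumed layout).
    """
    word = memory[mar & 0xFFFE]      # M[MAR[15:1]'0]: force bit 0 to 0
    if mar & 1:
        byte = (word >> 8) & 0xFF    # assumed: MAR[0] = 1 selects [15:8]
    else:
        byte = word & 0xFF           # assumed: MAR[0] = 0 selects [7:0]
    if byte & 0x80:                  # SEXT[BYTE.DATA] to 16 bits
        return byte | 0xFF00
    return byte


memory = {0x3000: 0x12F0}
print(hex(load_byte(memory, 0x3000)))  # 0xfff0
print(hex(load_byte(memory, 0x3001)))  # 0x12
```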


Aside: Memory Mapped I/O

Address control logic determines whether the address specified by an LDx or STx refers to memory or to an I/O device

Correspondingly enables memory or I/O devices and sets

up muxes

Another instance where the final control signals (e.g.,

MEM.EN or INMUX/2) cannot be stored in the control store

Dependent on address


Advantages of Microprogrammed Control

Allows a very simple datapath to do powerful computation by

controlling the datapath (using a sequencer)

Enables easy extensibility of the ISA

High-level ISA translated into microcode (sequence of microinstructions)

Microcode enables a minimal datapath to emulate an ISA

Microinstructions can be thought of as a user-invisible ISA

Can support a new instruction by changing the ucode

Can support complex instructions as a sequence of simple microinstructions

If I can sequence an arbitrary instruction then I can sequence

an arbitrary “program” as a microprogram sequence

will need some new state (e.g. loop counters) in the microcode for sequencing

more elaborate programs


Update of Machine Behavior

The ability to update/patch microcode in the field (after a

processor is shipped) enables

Ability to add new instructions without changing the processor!

Ability to “fix” buggy hardware implementations

Examples

IBM 370 Model 145: microcode stored in main memory, can be

updated after a reboot

B1700 microcode can be updated while the processor is running

User-microprogrammable machine!


Microcoded Multi-Cycle MIPS Design

P&H, Appendix D

Any ISA can be implemented this way

We will not cover this in class

However, you can do an extra credit assignment for Lab 2


Microcoded Multi-Cycle MIPS Design

[Based on original figure from P&H CO&D, COPYRIGHT

2004 Elsevier. ALL RIGHTS RESERVED.]


Control Logic for MIPS FSM

[Based on original figure from P&H CO&D, COPYRIGHT

2004 Elsevier. ALL RIGHTS RESERVED.]


Microprogrammed Control for MIPS FSM

[Based on original figure from P&H CO&D, COPYRIGHT

2004 Elsevier. ALL RIGHTS RESERVED.]


Horizontal Microcode

[Figure: an n-bit microprogram counter (μPC) addresses the microcode storage. Its k-bit "control" output drives the datapath control outputs directly (ALUSrcA, IorD, IRWrite, PCWrite, PCWriteCond, ...). Sequencing control drives the address select logic, which picks the next μPC from an adder (μPC + 1) or from inputs from the instruction register opcode field.]

[Based on original figure from P&H CO&D, COPYRIGHT 2004 Elsevier. ALL RIGHTS RESERVED.]

Control Store: 2^n × k bit (not including sequencing)

Vertical Microcode

[Figure: an n-bit μPC addresses the microcode storage, whose output is an m-bit word. A 1-bit signal means do this RT (or combination of RTs), for example:]

“PC ← PC+4”

“PC ← ALUOut”

“PC ← PC[ 31:28 ],IR[ 25:0 ],2’b00”

“IR ← MEM[ PC ]”

“A ← RF[ IR[ 25:21 ] ]”

“B ← RF[ IR[ 20:16 ] ]”

[The m-bit word is the input to a ROM whose k-bit output drives the datapath control outputs (ALUSrcA, IorD, IRWrite, PCWrite, PCWriteCond, ...). Sequencing control, the adder, the address select logic, and the inputs from the instruction register opcode field are as in horizontal microcode.]

[Based on original figure from P&H CO&D, COPYRIGHT 2004 Elsevier. ALL RIGHTS RESERVED.]

If done right (i.e., m<<n, and m<<k), two ROMs together (2^n × m + 2^m × k bit) should be smaller than horizontal microcode ROM (2^n × k bit)
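A quick calculation makes the size comparison concrete. The sketch below simply evaluates the two expressions from the slide; the values of n, m, and k are hypothetical and chosen only so that m is much smaller than k.

```python
def horizontal_rom_bits(n, k):
    """Horizontal microcode: 2^n microinstructions of k control bits each."""
    return (2 ** n) * k


def vertical_rom_bits(n, m, k):
    """Vertical microcode: a 2^n x m microcode store plus a 2^m x k ROM."""
    return (2 ** n) * m + (2 ** m) * k


# Hypothetical sizes, not taken from any real design.
n, m, k = 12, 4, 100
print(horizontal_rom_bits(n, k))   # 409600
print(vertical_rom_bits(n, m, k))  # 17984
```

The saving comes from storing each distinct k-bit control word once in the second ROM instead of repeating it in every microinstruction that uses it; the price is an extra ROM lookup on the control path.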


Nanocode and Millicode

Nanocode: a level below μcode

μprogrammed control for sub-systems (e.g., a complicated floating-point module) that acts as a slave in a μcontrolled datapath

Millicode: a level above μcode

ISA-level subroutines hardcoded into a ROM that can be called by the μcontroller to handle complicated operations

In both cases, we avoid complicating the main μcontroller

Nanocode Concept Illustrated

[Figure: a “μcoded” processor implementation (a ROM and μPC controlling the processor datapath) that contains a “μcoded” FPU implementation (its own ROM and μPC controlling the arithmetic datapath).]

We refer to this as “nanocode” when a μcoded subsystem is embedded in a μcoded system

Multi-Cycle vs. Single-Cycle uArch

Advantages

Disadvantages

You should be very familiar with this right now


Microprogrammed vs. Hardwired Control

Advantages

Disadvantages

You should be very familiar with this right now


Can We Do Better?

What limitations do you see with the multi-cycle design?

Limited concurrency

Some hardware resources are idle during different phases of the instruction processing cycle

“Fetch” logic is idle when an instruction is being “decoded” or

“executed”

Most of the datapath is idle when a memory access is

happening


Can We Use the Idle Hardware to Improve Concurrency?

Goal: Concurrency → throughput (more “work” completed in one cycle)

Idea: When an instruction is using some resources in its

processing phase, process other instructions on idle

resources not needed by that instruction

E.g., when an instruction is being decoded, fetch the next

instruction

E.g., when an instruction is being executed, decode another

instruction

E.g., when an instruction is accessing data memory (ld/st),

execute the next instruction

E.g., when an instruction is writing its result into the register

file, access data memory for the next instruction


Pipelining: Basic Idea

More systematically:

Pipeline the execution of multiple instructions

Analogy: “Assembly line processing” of instructions

Idea:

Divide the instruction processing cycle into distinct “stages” of

processing

Ensure there are enough hardware resources to process one

instruction in each stage

Process a different instruction in each stage

Instructions consecutive in program order are processed in

consecutive stages

Benefit: Increases instruction processing throughput (1/CPI)

Downside: Start thinking about this…


Example: Execution of Four Independent ADDs

Multi-cycle: 4 cycles per instruction

F D E W
        F D E W
                F D E W
                        F D E W
Time →

Pipelined: 4 cycles per 4 instructions (steady state)

F D E W
  F D E W
    F D E W
      F D E W
Time →
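The cycle counts behind this example can be checked with a short sketch. It assumes an ideal 4-stage pipeline (F, D, E, W) with independent instructions and no stalls; the function names are hypothetical.

```python
def multicycle_cycles(num_instr, stages=4):
    """Each instruction finishes all stages before the next one starts."""
    return num_instr * stages


def pipelined_cycles(num_instr, stages=4):
    """Ideal pipeline: fill once, then one instruction completes per cycle."""
    if num_instr == 0:
        return 0
    return stages + (num_instr - 1)


print(multicycle_cycles(4))  # 16
print(pipelined_cycles(4))   # 7
# Steady state: 4 more instructions cost only 4 more cycles.
print(pipelined_cycles(1004) - pipelined_cycles(1000))  # 4
```

The four ADDs take 7 cycles rather than 4 because the pipeline starts empty; the "4 cycles per 4 instructions" figure applies once the pipeline is full.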


The Laundry Analogy

[Figure: four loads of laundry (task order A, B, C, D) done one after another along a time axis from 6 PM to 2 AM.]

“place one dirty load of clothes in the washer”

“when the washer is finished, place the wet load in the dryer”

“when the dryer is finished, take out the load and fold”

“when folding is finished, ask your roommate (??) to put the clothes away”

- steps to do a load are sequentially dependent

- no dependence between different loads

- different steps do not share resources

Based on original figure from [P&H CO&D, COPYRIGHT 2004 Elsevier. ALL RIGHTS RESERVED.]

Pipelining Multiple Loads of Laundry

[Figure: loads A, B, C, D overlapped in time, each load starting one step behind the previous one; time axis from 6 PM to 2 AM.]

Based on original figure from [P&H CO&D, COPYRIGHT 2004 Elsevier. ALL RIGHTS RESERVED.]

- 4 loads of laundry in parallel

- no additional resources

- throughput increased by 4

- latency per load is the same

Pipelining Multiple Loads of Laundry: In Practice

[Figure: loads A, B, C, D pipelined along a time axis from 6 PM to 2 AM, with steps of unequal length.]

the slowest step decides throughput

Based on original figure from [P&H CO&D, COPYRIGHT 2004 Elsevier. ALL RIGHTS RESERVED.]

Pipelining Multiple Loads of Laundry: In Practice

[Figure: loads A, B, C, D pipelined along a time axis from 6 PM to 2 AM, with alternating loads using a second dryer.]

Throughput restored (2 loads per hour) using 2 dryers

Based on original figure from [P&H CO&D, COPYRIGHT 2004 Elsevier. ALL RIGHTS RESERVED.]
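The effect of the slowest step, and of duplicating it, can be estimated with a small sketch. The stage times below are assumptions chosen to match the "2 loads per hour" figure (a 60-minute dryer among 30-minute steps); they are not given on the slides, and `loads_per_hour` is a hypothetical helper.

```python
from fractions import Fraction


def loads_per_hour(stage_minutes, units=None):
    """Steady-state throughput: limited by the slowest stage.

    stage_minutes maps a stage name to its time in minutes; units maps
    a stage name to the number of identical units (default 1).
    """
    units = units or {}
    rates = [
        Fraction(60 * units.get(stage, 1), minutes)
        for stage, minutes in stage_minutes.items()
    ]
    return min(rates)


# Assumed stage times, for illustration only.
stages = {"wash": 30, "dry": 60, "fold": 30, "put away": 30}
print(loads_per_hour(stages))              # 1
print(loads_per_hour(stages, {"dry": 2}))  # 2
```

Adding the second dryer doubles throughput without changing the latency of any single load.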


We did not cover the following slides in lecture.

These are for your preparation for the next lecture.

An Ideal Pipeline

Goal: Increase throughput with little increase in cost

(hardware cost, in case of instruction processing)

Repetition of identical operations

The same operation is repeated on a large number of different inputs

Repetition of independent operations

No dependencies between repeated operations

Uniformly partitionable suboperations

Processing can be evenly divided into uniform-latency suboperations (that do not share resources)

Good examples: automobile assembly line, doing laundry

What about an instruction processing pipeline?


Ideal Pipelining

Unpipelined: combinational logic (F,D,E,M,W), T psec: BW=~(1/T)

2 stages: T/2 ps (F,D,E), T/2 ps (M,W): BW=~(2/T)

3 stages: T/3 ps (F,D), T/3 ps (E,M), T/3 ps (M,W): BW=~(3/T)

More Realistic Pipeline: Throughput

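Nonpipelined version with delay T

BW = 1/(T+S) where S = latch delay

[Figure: one block of combinational logic with delay T ps, followed by a latch]

k-stage pipelined version

BW_{k-stage} = 1 / (T/k + S)

BW_{max} = 1 / (1 gate delay + S)

[Figure: k stages with delay T/k ps each, each followed by a latch]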
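Plugging numbers into the k-stage throughput expression shows how the latch delay S limits the speedup. The values of T and S below are hypothetical, and `throughput` is just the slide's formula written as a function.

```python
def throughput(T, S, k=1):
    """BW of a k-stage pipeline: 1 / (T/k + S); k = 1 is the nonpipelined case."""
    return 1.0 / (T / k + S)


# Hypothetical delays in picoseconds.
T, S = 1000.0, 50.0
base = throughput(T, S)
for k in (1, 2, 5, 10):
    # Speedup over the nonpipelined design; always below k because of S.
    print(k, round(throughput(T, S, k) / base, 2))
```

With these numbers a 10-stage pipeline is about 7 times faster than the nonpipelined design, not 10 times, since every stage still pays the full latch delay.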


More Realistic Pipeline: Cost

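Nonpipelined version with combinational cost G

Cost = G + L where L = latch cost

[Figure: one block of G gates followed by a latch]

k-stage pipelined version

Cost_{k-stage} = G + Lk

[Figure: k stages of G/k gates each, each followed by a latch]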
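Combining the cost expression with the throughput expression 1 / (T/k + S) gives a feel for the trade-off. The sketch below uses throughput per unit cost as one possible figure of merit; that metric and all parameter values are assumptions for illustration, not something the slides prescribe.

```python
def cost(G, L, k=1):
    """Hardware cost of a k-stage pipeline: G + L*k."""
    return G + L * k


def throughput_per_cost(T, S, G, L, k):
    """Throughput 1/(T/k + S) divided by cost G + L*k (assumed metric)."""
    return 1.0 / ((T / k + S) * cost(G, L, k))


# Hypothetical delays (ps) and costs (gates).
T, S, G, L = 1000.0, 50.0, 10000.0, 500.0
best_k = max(range(1, 65), key=lambda k: throughput_per_cost(T, S, G, L, k))
print(best_k, cost(G, L, best_k))  # 20 20000.0
```

Under these assumptions, pipelining deeper than about 20 stages buys less throughput than it costs in latches.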
