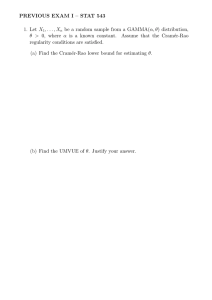

Stat 643 Final Exam December 11, 2000 Prof. Vardeman

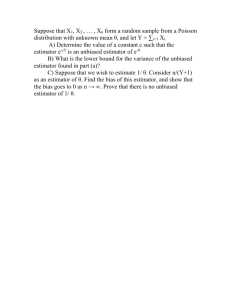

1. Suppose the random vector $(X, Y, Z)$ has joint probability mass function $f(x, y, z)$ on $\{0, 1, 2, 3, \ldots\}^3$ with

$$f(x, y, z) \propto \exp(-xyz)\, I[x \text{ is odd}]\, I[y \text{ is even}]\, I[z \neq x \text{ and } z \neq y]$$

and I wish to approximate $P[X + Y + Z \geq 10]$. Completely describe an MCMC method that I could use to approximate this probability. (You may assume that standard software libraries are available to allow me to sample from any "standard" distribution.)

2. Consider squared error loss estimation of $\theta$ on the basis of $X$ where

$$\frac{X}{\theta} \sim \chi^2_\nu .$$

(Remember that the $\chi^2_\nu$ distribution has mean $\nu$ and variance $2\nu$.)

(a) A possible estimator of $\theta$ is $\hat{\theta} = X/\nu$. Argue carefully that $\hat{\theta}$ is inadmissible by considering estimators of the form $c\hat{\theta}$.

(b) Find a formal generalized Bayes estimator of $\theta$, using an improper prior distribution for $\theta$ with RN derivative w.r.t. 1-dimensional Lebesgue measure on $(0, \infty)$, $g(\theta) = \frac{1}{\theta}$. (If $h(\cdot)$ is the $\chi^2_\nu$ density, then $P_\theta$ has density $f_\theta(x) = \frac{1}{\theta} h\!\left(\frac{x}{\theta}\right)$. Note that the formal posterior is "Inverse Gamma." You can find this distribution in Schervish's dictionary of distributions.) Is this estimator admissible? Explain.

3. Suppose that $X_1$ and $X_2$ are independent Poisson$(\lambda)$ random variables (for $\lambda \geq 0$). You may take as given the fact that conditional on $S = X_1 + X_2 = s$, $X_1 \sim \text{Binomial}\left(s, \frac{1}{2}\right)$.

(a) Consider the nonrandomized estimator of $\lambda$, $\delta(X) = X_1$, and squared error loss. Describe a (possibly randomized) estimator that is a function of $S = X_1 + X_2$ and has the same risk function as $\delta$.

(b) Apply the Rao-Blackwell Theorem and find a nonrandomized estimator of $\lambda$ that is a function of $S = X_1 + X_2$ and is at least as good as $\delta$ under squared error loss.

4. Consider the estimation of a (location) parameter $\theta$, for the family of distributions with RN derivatives w.r.t. 1-dimensional Lebesgue measure given by

$$f_\theta(x) = I[x > \theta] \exp(\theta - x) .$$

Find the Chapman-Robbins lower bound on the variance of an unbiased estimator of $\theta$.
(You may find it useful to know that $x = 1.593$ is the only positive root of the equation $(2x - x^2)\exp(x) - 2x = 0$.)

DO EXACTLY ONE OF PROBLEMS 5 and 6.

5. Consider a model $\mathcal{P} = \{P_\theta\}$ where $P_\theta$ is Normal$(\theta, \theta^2)$ measure for $\Theta = \mathbb{R} - \{0\}$ and the group of transformations $\mathcal{G} = \{g_c\}$ for $c \neq 0$, where $g_c(x) = cx$.

(a) Argue that $\mathcal{G}$ leaves the model invariant, and for each $c \neq 0$, find the transformation $\bar{g}_c$ mapping $\Theta$ one-to-one onto $\Theta$.

(b) For the action space $\mathcal{A} = \Theta$, argue that the loss function $L(\theta, a) = \left(\frac{a}{\theta} - 1\right)^2$ is invariant under $\mathcal{G}$ and for each $c \neq 0$, find the transformation $\tilde{g}_c$ mapping $\mathcal{A}$ one-to-one onto $\mathcal{A}$.

(c) Characterize equivariant nonrandomized rules in this decision problem and find the best equivariant rule.

6. Consider a simple decision problem with $\Theta = \mathcal{A} = \{1, 2, 3\}$, $P_\theta$ the exponential distribution with mean $\theta$ (i.e. the distribution with R-N derivative w.r.t. 1-dimensional Lebesgue measure $f_\theta(x) = \frac{1}{\theta} \exp\!\left(\frac{-x}{\theta}\right) I[x \geq 0]$) and 0-1 loss

$$L(\theta, a) = I[\theta \neq a] .$$

For $G$ a (prior) distribution on $\Theta$, for $\theta \in \Theta$ we'll use the notation $g_\theta = G(\{\theta\})$. $f_1$, $f_2$ and $f_3$ are plotted together on the attached page in Figure 1.

(a) Find in explicit form the Bayes rule versus $G$ uniform on $\Theta$. That is, suppose that $g_1 = g_2 = g_3 = \frac{1}{3}$ and find the Bayes rule. (For which $x > 0$ does one take actions $a = 1, 2$ and $3$?)

(b) Is the rule from (a) admissible? Argue carefully one way or another.

Now consider the nonrandomized decision rule

$$\delta(x) = \begin{cases} 1 & \text{if } x < .591 \\ 2 & \text{if } .591 < x < 2.421 \\ 3 & \text{if } 2.421 < x \end{cases}$$

$\delta$ is Bayes in this problem versus a prior with $g_1 = .251$, $g_2 = .374$ and $g_3 = .375$.

(c) Prove that $\delta$ is minimax. (You may wish to use the fact that the cdf of $P_\theta$ is $F_\theta(x) = \left(1 - \exp\!\left(\frac{-x}{\theta}\right)\right) I[x > 0]$.)

[Figure 1: $f_1(x)$, $f_2(x)$ and $f_3(x)$ plotted against $x$.]
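
The numerical constants quoted in Problems 4 and 6 can be sanity-checked with a few lines of standard-library Python. The sketch below is only a check of the stated numbers, not a solution to either problem. The helper names (`bisect`, `hint_equation`, `exp_cdf`, `risks`) are illustrative and do not come from the exam, and the exponential cdf is taken to be $1 - \exp(-x/\theta)$ for $x > 0$, as for an exponential distribution with mean $\theta$.

```python
import math


def bisect(f, lo, hi, tol=1e-12):
    """Plain bisection; assumes f(lo) and f(hi) have opposite signs."""
    f_lo = f(lo)
    if f_lo * f(hi) > 0:
        raise ValueError("f(lo) and f(hi) must bracket a root")
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        f_mid = f(mid)
        if f_lo * f_mid <= 0:
            hi = mid
        else:
            lo, f_lo = mid, f_mid
    return 0.5 * (lo + hi)


def hint_equation(x):
    # Left-hand side of the Problem 4 hint: (2x - x^2) exp(x) - 2x
    return (2 * x - x**2) * math.exp(x) - 2 * x


def exp_cdf(x, theta):
    # cdf of the exponential distribution with mean theta (Problem 6)
    return 1.0 - math.exp(-x / theta) if x > 0 else 0.0


def risks(c1=0.591, c2=2.421):
    # Under 0-1 loss the risk at theta is P_theta[delta(X) != theta],
    # where delta picks 1 below c1, 2 between c1 and c2, and 3 above c2.
    r1 = 1.0 - exp_cdf(c1, 1)
    r2 = 1.0 - (exp_cdf(c2, 2) - exp_cdf(c1, 2))
    r3 = exp_cdf(c2, 3)
    return r1, r2, r3


# The bracket [1, 2] excludes the trivial root at x = 0.
root = bisect(hint_equation, 1.0, 2.0)
r1, r2, r3 = risks()
print(f"positive root of hint equation: {root:.4f}")
print(f"risks of delta at theta = 1, 2, 3: {r1:.4f}, {r2:.4f}, {r3:.4f}")
```

The root found this way should agree with the quoted value 1.593 to about three decimal places, and the three risks should come out nearly equal, which is consistent with the cutoffs .591 and 2.421 having been rounded.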