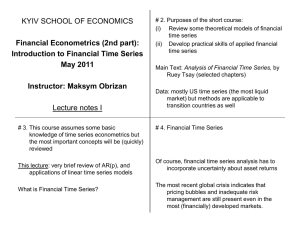

Module 6
Methods for Nonstationary Time Series: Transformations, Differencing, and ARIMA Model Identification

Class notes for Statistics 451: Applied Time Series
Iowa State University
Copyright 2015 W. Q. Meeker.
November 11, 2015 17h 20min

Module 6 Segment 1
Nonconstant Variance and an Introduction to Transformations

Dealing with Nonconstant Variance

• A common reason: variability increases with level (e.g., $\sigma_a^2$ is a percent of the level). This type of variance-nonstationarity can sometimes be handled with a transformation of the response.
• Can also have variability changing independently of level. This type of nonstationarity may be handled by models allowing for nonstationary variance, e.g., Autoregressive Conditional Heteroscedasticity (ARCH) and Generalized Autoregressive Conditional Heteroscedasticity (GARCH) models (not covered here in detail).

Variability Increasing with Level

• Example: If the standard deviation of sales is 10% of level, then for sales $\pm\,\sigma_a$ we have
  * $100 \pm 10$, or $[90, 110]$, when sales are 100
  * $1000 \pm 100$, or $[900, 1100]$, when sales are 1000
• On the log scale,
  * $[\log(90), \log(110)] = [4.50, 4.70]$ when sales are 100
  * $[\log(900), \log(1100)] = [6.80, 7.00]$ when sales are 1000
  so the interval has the same width (about 0.20) at both levels.
• Common problem when the "dynamic range" of a time series [measured by the ratio $\max(Z_t)/\min(Z_t)$] is large (say more than 3 or 4).

[Figure: International Airline Passengers, w = Thousands of Passengers, 1949 to 1960. Plot of the airline data along with a range-mean plot and plots of sample ACF and sample PACF.]
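As a quick numerical check of the 10%-of-level example, the sketch below recomputes the one-standard-deviation intervals on the natural-log scale. The function name `log_interval` and its `rel_sd` argument are illustrative choices, not part of the notes.

```python
import math

def log_interval(level, rel_sd=0.10):
    """Return (low, high) of level +/- one standard deviation on the natural-log scale."""
    sd = rel_sd * level
    return math.log(level - sd), math.log(level + sd)

for level in (100, 1000):
    low, high = log_interval(level)
    # The width depends only on rel_sd, not on the level itself.
    print(f"level={level}: [{low:.2f}, {high:.2f}], width={high - low:.3f}")
```

The width is log(1.1/0.9) at every level, which is why a log transformation can stabilize variability that grows in proportion to the level.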
Command for the plot of the untransformed airline data: [iden(airline.tsd)]

[Figure: Number of international airline passengers from 1949 to 1960; y-axis: Thousands of Passengers, x-axis: Year.]

[Figure: International Airline Passengers, w = log(Thousands of Passengers). Plot of the logarithms of the airline data along with a range-mean plot and plots of sample ACF and sample PACF.] [iden(airline.tsd,gamma=0)]

Module 6 Segment 2
Box-Cox Transformations and the Range-Mean Plot

Box-Cox Family of Transformations

$$
Z_t =
\begin{cases}
\dfrac{(Z_t^* + m)^{\gamma} - 1}{\gamma}, & \gamma \neq 0 \\[1ex]
\log(Z_t^* + m), & \gamma = 0
\end{cases}
$$

where $Z_t^*$ is the original, untransformed time series, $\gamma$ is the primary transformation parameter, and log is natural log (i.e., base e).

• For $\gamma < 0$, the $\gamma$ in the denominator of the transformation assures that $Z_t$ is an increasing function of $Z_t^*$, so that a plot of $Z_t$ has the same direction of trend as $Z_t^*$.
• Because
$$
\lim_{\gamma \to 0} \frac{(Z_t^* + m)^{\gamma} - 1}{\gamma} = \log(Z_t^* + m)
$$
the transformation is a continuous function in $\gamma$.
• The quantity $m$ is typically chosen to be 0. Choose $m > 0$ if some $Z_t^* < 0$ or $Z_t^* \approx 0$. Increasing $m$ has the effect of weakening the transformation.

Examples of Power Transformations

| $\gamma$ | Transformation | Possible Application |
|---|---|---|
| −1 | $Z_t \sim -1/(Z_t^* + m)$ | Very long upper tail |
| −.333 | $Z_t \sim -1/\sqrt[3]{Z_t^* + m}$ | Slightly stronger than log |
| 0 | $Z_t \sim \log(Z_t^* + m)$ | Percentage change |
| .333 | $Z_t \sim \sqrt[3]{Z_t^* + m}$ | Slightly weaker than log |
| .5 | $Z_t \sim \sqrt{Z_t^* + m}$ | Count data |
| 1 | $Z_t \sim Z_t^*$ | No transformation |
| 2 | $Z_t \sim [Z_t^* + m]^2$ | ? |

Range-Mean Plot

1. Divide realization into groups (4 to 12 in each group). If there is a natural seasonal period (e.g., 12 observations per year for monthly data), use it to choose the groups.
2. Transform data with given $\gamma$ (and perhaps $m$).
3. Compute the mean and range in each group.
4. Plot the ranges versus the means.
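The Box-Cox family and the range-mean steps above can be sketched in a few lines. This is a minimal illustration under stated assumptions: the helper names `box_cox` and `range_mean` are hypothetical (they are not the `iden` function used in these notes), the data are made up rather than the airline series, and groups are simply consecutive blocks of equal size.

```python
import math

def box_cox(z_star, gamma, m=0.0):
    """Box-Cox transform of one value: ((z*+m)^gamma - 1)/gamma, or log(z*+m) when gamma == 0."""
    shifted = z_star + m
    if gamma == 0:
        return math.log(shifted)
    return (shifted ** gamma - 1.0) / gamma

def range_mean(series, group_size, gamma=1.0, m=0.0):
    """Return (mean, range) pairs, one per consecutive group of the transformed series."""
    w = [box_cox(z, gamma, m) for z in series]
    pairs = []
    for start in range(0, len(w) - group_size + 1, group_size):
        group = w[start:start + group_size]
        pairs.append((sum(group) / group_size, max(group) - min(group)))
    return pairs

# Hypothetical illustrative data (not the airline series): spread is proportional to level.
series = [90, 100, 110, 105, 900, 1000, 1100, 1050]
for gamma in (1.0, 0.5, 0.0):
    pairs = range_mean(series, group_size=4, gamma=gamma)
    print(gamma, [(round(mu, 2), round(rng, 2)) for mu, rng in pairs])
```

With these made-up numbers the group ranges differ by a factor of ten when gamma = 1 and coincide when gamma = 0, which is the pattern one looks for in the plot of ranges versus means.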
Range-Mean Plot (continued)

5. Repeat for different values of $\gamma$.

[Figure: International Airline Passengers, w = ((Thousands of Passengers)^0.5 − 1)/0.5. Plot of the square roots ($\gamma = .5$) of the airline data along with a range-mean plot and plots of sample ACF and sample PACF.] [iden(airline.tsd,gamma=.5)]

[Figure: International Airline Passengers, w = log(Thousands of Passengers). Plot of the logarithms ($\gamma = 0$) of the airline data along with a range-mean plot and plots of sample ACF and sample PACF.] [iden(airline.tsd,gamma=0)]

[Figure: International Airline Passengers, w = ((Thousands of Passengers)^(−0.3333) − 1)/(−0.3333). Plot of the reciprocal cube-root ($\gamma = -.3333$) of the airline data.] [iden(airline.tsd,gamma=-.3333)]

[Figure: International Airline Passengers, w = ((Thousands of Passengers)^(−1) − 1)/(−1). Iden plot of the reciprocal ($\gamma = -1$) of the airline data.] [iden(airline.tsd,gamma=-1)]

Usual Procedure for Deciding on the Use of a Transformation

1. Try $\gamma = 1$ (no transformation).
2. Try $\gamma = .5, .333, 0$ (moderate to strong transformations).
3. Try $\gamma = -.333, -.5, -1$ (even stronger transformations).
4. Choose one tentative value of $\gamma$.

• Use the range-mean plot as a rough guide. It is not necessary to obtain 0 correlation between means and ranges.
• Choose one value for $\gamma$ and use it for initial modeling. Then experiment with other values with your chosen model (usually the chosen model does not depend strongly on the choice of $\gamma$).
• Box-Cox transformations are useful in other kinds of data analysis (e.g., regression analysis).
• Some computer programs attempt to estimate $\gamma$.

Effects of Doing a Box-Cox Transformation

• Changes the relationship between amount of variability and level (as reflected in a range-mean plot).
• Changes the shape of a trend line (e.g., exponential or percentage trend versus linear trend).
• Changes the shape of the distribution of the residuals (e.g., from lognormal to normal).

Module 6 Segment 3
Nonconstant Level and Exponential Growth

Dealing with Nonconstant Level (Trend or Cycle)

• Fit a trend line (possibly after a transformation).
• Analyze differences (changes) instead of the actual time series [e.g., fit a model to $W_t = Z_t - Z_{t-1}$].
• If transformation and differencing are both used, always do the transformation first.

[Figure: Plots showing the effect of a log transformation on exponential growth. Panels: "100% Growth per Period" (Number versus Time) and "Log of 100% Growth per Period" (Log Number versus Time).]

Module 6 Segment 4
Introduction to Time Series Differencing and Integrated Models

Example of Exponential (Percentage) Growth

| $t$ | Amount $Z_t$ | Difference Amount $Z_t - Z_{t-1}$ | Log Amount $\log(Z_t)$ | Difference Log Amount $\log(Z_t) - \log(Z_{t-1})$ |
|---|---|---|---|---|
| 1 | 2 | NA | 0.69 | NA |
| 2 | 4 | 2 | 1.39 | .70 |
| 3 | 8 | 4 | 2.08 | .69 |
| 4 | 16 | 8 | 2.77 | .69 |
| 5 | 32 | 16 | 3.47 | .70 |
| 6 | 64 | 32 | 4.16 | .69 |
| 7 | 128 | 64 | 4.85 | .69 |
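The doubling example can be reproduced directly. The sketch below assumes the amounts 2, 4, ..., 128 (consistent with the tabulated log amounts) and compares differences on the raw scale with differences on the log scale.

```python
import math

amounts = [2, 4, 8, 16, 32, 64, 128]  # Z_t for t = 1..7 (doubling each period)

# Differences on the original scale and on the natural-log scale.
diffs = [b - a for a, b in zip(amounts, amounts[1:])]
log_diffs = [math.log(b) - math.log(a) for a, b in zip(amounts, amounts[1:])]

print(diffs)                             # raw differences keep doubling
print([round(d, 4) for d in log_diffs])  # log differences are all log(2)
```

The small variation between .69 and .70 in the hand-computed column comes from differencing the rounded log amounts; without rounding every log difference equals log(2).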
Number of Bacteria in a Dish Doubling Every Time Period (100% Growth)

Amounts: $Z_t = 2, 4, 8, 16, 32, 64, 128$ for $t = 1, \dots, 7$.

• Differences of $Z_t$ also grow exponentially!
• Differences of $\log(Z_t)$ are constant (except for roundoff).

Exponential (Percentage) Growth

$$
\begin{aligned}
Z_t^* &= \beta_0^* [\beta_1^*]^t \\
Z_t = \log(Z_t^*) &= \log(\beta_0^*) + \log([\beta_1^*]^t) \\
Z_t &= \log(\beta_0^*) + \log(\beta_1^*)\, t \\
Z_t &= \beta_0 + \beta_1 t
\end{aligned}
$$

where $\beta_0 = \log(\beta_0^*)$ and $\beta_1 = \log(\beta_1^*)$.

Growth rate in percent is

$$
100\left[\frac{Z_{t+1}^* - Z_t^*}{Z_t^*}\right]
= 100\left[\frac{\beta_0^* [\beta_1^*]^{t+1} - \beta_0^* [\beta_1^*]^{t}}{\beta_0^* [\beta_1^*]^{t}}\right]
= 100[\beta_1^* - 1]
= 100[\exp(\beta_1) - 1]
\approx 100\beta_1 \quad \text{for small } \beta_1 .
$$

Gambler's Winnings

| $t$ | "Integrated" (cumulative, $\sum$) $Z_t$ | Incremental $W_t = Z_t - Z_{t-1}$ |
|---|---|---|
| 1 | 100 | NA |
| 2 | 110 | +10 |
| 3 | 108 | −2 |
| 4 | 115 | +7 |
| 5 | 119 | +4 |
| 6 | 118 | −1 |
| 7 | 125 | +7 |
| 8 | 127 | +2 |
| 9 | 125 | −2 |
| 10 | 134 | +9 |
| 11 | 140 | +6 |

$H_0: \mu_W = 0$ versus $H_a: \mu_W \neq 0$

$$
\bar{W} = \frac{\sum W_i}{10} = \frac{40}{10} = 4, \qquad
S_W = \sqrt{\frac{\sum (W_i - 4)^2}{9}} = 4.52
$$

$$
t = \frac{\bar{W} - 0}{S_{\bar{W}}} = \frac{\bar{W} - 0}{S_W / \sqrt{10}} \approx 2.80
$$

[Figure: Plots of the gambler's cumulative winnings and incremental winnings. Panels: Cumulative Winnings versus Time and Incremental Winnings versus Time.]

Forecasting the Gambler's Cumulative Winnings

• Because $W_t = (1 - B)Z_t = Z_t - Z_{t-1}$ appears to be stationary, we can build an ARMA model for $W_t$ and use $Z_t = Z_{t-1} + W_t$.
• If $W_t$ is $W_t = \theta_0 + a_t$, then $Z_t = Z_{t-1} + \theta_0 + a_t$ is a "random walk with drift" with E(increase) $= \theta_0$ per period.
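The gambler's-winnings calculation can be rechecked from the cumulative series alone. The sketch below uses only the tabulated values; the variable names are illustrative.

```python
import math

cumulative = [100, 110, 108, 115, 119, 118, 125, 127, 125, 134, 140]  # Z_t, t = 1..11
increments = [b - a for a, b in zip(cumulative, cumulative[1:])]       # W_t, t = 2..11

n = len(increments)
w_bar = sum(increments) / n
# Sample standard deviation of the increments (divisor n - 1).
s_w = math.sqrt(sum((w - w_bar) ** 2 for w in increments) / (n - 1))
# t statistic for H0: mu_W = 0, using the standard error S_W / sqrt(n).
t_stat = (w_bar - 0.0) / (s_w / math.sqrt(n))

print(increments)
print(f"mean={w_bar:.2f}  S_W={s_w:.2f}  t={t_stat:.2f}")
```

The printed mean and standard deviation agree with the hand calculation, and the t statistic agrees up to rounding in the second decimal.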
Forecasting the Gambler's Cumulative Winnings (continued)

• Estimated from data: $\hat{\theta}_0 = \bar{W} = 4$, $\hat{\sigma}_a = S_W = 4.52$.
• A forecast (or prediction) interval for $W_t$ can be translated into a prediction interval for $Z_t$ (because we know $Z_{t-1}$).
• One-step-ahead forecast for $Z_t$ is $\hat{Z}_t = Z_{t-1} + \hat{\theta}_0$.
• One-step-ahead $100(1 - \alpha)\%$ prediction interval: $\hat{Z}_t \pm t_{(1-\alpha/2,\, n_w - 1)}\, S_{(W - \bar{W})}$
• $S_{(W - \bar{W})} = \sqrt{S_W^2 + S_{\bar{W}}^2} = \sqrt{S_W^2 + S_W^2 / n_w}$
• $S_{(W - \bar{W})} \approx S_W = \hat{\sigma}_a$ for large $n_w$.

[Figure: 1979 Weekly Closing Price of ATT Common Stock, w = Dollars. Function iden Output for 1979 Weekly Closing Prices of AT&T Common Stock.] [iden(att.tsd)]

[Figure: 1979 Weekly Closing Price of ATT Common Stock, w = (1-B)^1 Dollars. Function iden Output for the First Differences of 1979 Weekly Closing Prices of AT&T Common Stock.] [iden(att.tsd,d=1)]

Module 6 Segment 5
ARIMA Models Part 1: ARIMA(0, 1, 1)

Forecasting a Random Walk

• If the model for $W_t = (1 - B)Z_t = Z_t - Z_{t-1}$ is $W_t = a_t$, then
$$
Z_t = Z_{t-1} + a_t = a_t + a_{t-1} + a_{t-2} + a_{t-3} + \cdots
$$
which is a "random walk" process. This is like an AR(1) model with $\phi_1 = 1$ (note that the model is nonstationary).
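The random-walk identity can be illustrated with a handful of made-up shocks. The sketch below assumes a starting level of 0 and hypothetical values for $a_t$; it shows that the recursion and the running sum give the same path, and that differencing recovers the shocks.

```python
from itertools import accumulate

shocks = [0.5, -1.0, 0.25, 2.0, -0.75]   # hypothetical a_t values, for illustration only

# Recursion Z_t = Z_{t-1} + a_t, starting from Z_0 = 0 (an assumed starting level).
z = []
level = 0.0
for a in shocks:
    level = level + a
    z.append(level)

# Equivalent form: Z_t is the running sum of all shocks so far.
z_cumsum = list(accumulate(shocks))

# Differencing recovers the shocks: W_t = Z_t - Z_{t-1} = a_t.
w = [b - a for a, b in zip([0.0] + z, z)]

print(z)
print(z_cumsum)
print(w)
```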
Forecasting a Random Walk (continued)

• The only parameter of this model is $\sigma_a$.
• A forecast (or prediction interval) for $W_t$ can be translated into a forecast (or prediction interval) for $Z_t$ (because we know $Z_{t-1}$).
• One-step-ahead point forecast for $Z_t$ is $\hat{Z}_t = Z_{t-1}$.
• One-step-ahead prediction interval is: $\hat{Z}_t \pm t_{(1-\alpha/2,\, n-1)}\, S_W$

AutoRegressive Integrated Moving Average Model (ARIMA)

• An ARIMA($p, d, q$) model
$$
\phi_p(B)(1 - B)^d Z_t = \theta_q(B) a_t
$$
can be viewed as a generalized ARMA model, with $d$ roots on the unit circle.

[Figure: Simulated Time Series #B, w = Sales. Function iden Output for Simulated Series B.]

[Figure: Simulated Time Series #B, w = (1-B)^1 Sales. Function iden Output for the First Differences of Simulated Series B.]

[Figure: Simulated Time Series #C, w = Sales. Function iden Output for Simulated Series C.]

Special Case: ARIMA(0, 1, 1) Model [a.k.a. IMA(1,1)]

$$
W_t = (1 - B)^1 Z_t = (1 - \theta_1 B) a_t
$$
$$
Z_t = Z_{t-1} - \theta_1 a_{t-1} + a_t
$$

which is a nonstationary "ARMA(1,1)". This model is also known as exponentially weighted moving average (EWMA), and its forecast equations can be shown to be equivalent to exponential smoothing.
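The IMA(1,1) special case can be checked numerically: building $Z_t$ from the unscrambled recursion and then differencing should give back $(1 - \theta_1 B)a_t$. The sketch below is an illustration only; the function name `ima11_path`, the shock values, the value of `theta1`, and the start-up conventions ($a_0 = 0$, starting level 0) are all assumptions.

```python
def ima11_path(shocks, theta1, z0=0.0):
    """Build Z_t = Z_{t-1} - theta1 * a_{t-1} + a_t, assuming a_0 = 0 and starting level z0."""
    z, prev_z, prev_a = [], z0, 0.0
    for a in shocks:
        prev_z = prev_z - theta1 * prev_a + a
        z.append(prev_z)
        prev_a = a
    return z

shocks = [1.0, -2.0, 0.5, 4.0, -1.0]   # hypothetical a_t values
theta1 = 0.5                            # hypothetical MA parameter

z = ima11_path(shocks, theta1)
# Working series W_t = (1 - B) Z_t, using the assumed starting level 0 for the first difference.
w = [b - a for a, b in zip([0.0] + z, z)]
# MA(1) form (1 - theta1 * B) a_t = a_t - theta1 * a_{t-1}, with a_0 = 0.
ma1 = [a - theta1 * a_prev for a_prev, a in zip([0.0] + shocks, shocks)]

print(z)
print(w == ma1)
```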
AutoRegressive Integrated Moving Average Model (ARIMA) (continued)

• Letting $W_t = (1 - B)^d Z_t$ be the "working series" and substituting into the ARIMA($p, d, q$) model gives an ARMA model for $W_t$:
$$
\phi_p(B) W_t = \theta_q(B) a_t
$$
• Can fit an ARMA model to $W_t$.
• Can unscramble the $Z_t$ for forecasting and interpretation.

[Figure: Simulated Time Series #C, w = (1-B)^1 Sales. Function iden Output for the First Differences of Simulated Series C.]

Module 6 Segment 6
ARIMA Models Part 2: ARIMA(1, 1, 0)

Special Case: ARIMA(1, 1, 0) Model

• $(1 - \phi_1 B)(1 - B)^1 Z_t = a_t$, or $(1 - \phi_1 B - B + \phi_1 B^2) Z_t = a_t$, leading to
$$
Z_t = (1 + \phi_1) Z_{t-1} - \phi_1 Z_{t-2} + a_t
$$
$$
Z_t = \phi_1' Z_{t-1} + \phi_2' Z_{t-2} + a_t
$$
where $\phi_1' = (1 + \phi_1)$ and $\phi_2' = -\phi_1$, or $\phi_2' = 1 - \phi_1'$, which is a nonstationary "ARIMA(2,0,0)" or "AR(2)".

[Figure: plot with axes Phi1 and Phi2.]

[Figure: Simulated data, w = Data. Function iden Output for Simulated Series D.]

[Figure: Simulated data, w = (1-B)^1 Data. Function iden Output for the First Differences of Simulated Series D.]

How to Choose d (the amount of differencing)

1. $d = 0$ or $d = 1$ is most common; $d = 2$ is not common; $d \ge 3$ is generally not useful.
2. Plot data versus time, looking for trend and cycle.
3. Consider the physical process (is the process changing?).
4. Examine the ACF for $d = 0$, stepping up to $d = 1$ or $d = 2$, if needed. Look for the smallest $d$ such that the ACF "dies down quickly."
5. Fit an AR(1) model and test $H_0: \phi_1 = 1$ using
$$
t = \frac{\hat{\phi}_1 - 1}{S_{\hat{\phi}_1}}
$$
Need special tables [see Dickey and Fuller 1979 JASA].
6. Minimize $S_W$.
7. Compare forecasts and prediction intervals.

[Figure: Simulated data, w = Data. Function iden Output for Simulated Series E.]

[Figure: Simulated data, w = (1-B)^2 Data. Function iden Output for the Second Differences of Simulated Series E.]

[Figure: Simulated Time Series #A, w = (1-B)^1 Sales. Function iden Output for the First Differences of Simulated Series A.]

An Example of a Nonstationary Model

$$
Z_t = \beta_0 + \beta_1 t + a_t, \qquad a_t \sim \text{NID}(0, \sigma_a^2)
$$

Let $t = 1, 2, \dots$ be coded time.

$$
E(Z_t) = \beta_0 + \beta_1 t
$$
$$
\begin{aligned}
W_t = (1 - B)Z_t &= Z_t - Z_{t-1} \\
&= [\beta_0 + \beta_1 t + a_t] - [\beta_0 + \beta_1 (t - 1) + a_{t-1}] \\
&= \beta_1 - a_{t-1} + a_t
\end{aligned}
$$
$$
E(W_t) = \beta_1, \qquad \sigma_W^2 = \text{Var}(W_t) = 2\sigma_a^2
$$

Therefore, $Z_t$ is nonstationary, but $W_t$ is stationary. What kind of model does $W_t$ have? Is there a better solution for fitting a model to $Z_t$? Beware of differencing when differencing is not warranted.
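The differencing algebra for the trend-plus-noise example can be verified on a small made-up series. The sketch below assumes hypothetical values for $\beta_0$, $\beta_1$, and the shocks; it checks that $W_t = \beta_1 - a_{t-1} + a_t$ term by term and recovers the factor 2 in $\text{Var}(W_t) = 2\sigma_a^2$ as the sum of squared weights on independent shocks.

```python
beta0, beta1 = 10.0, 0.5                   # hypothetical trend coefficients
shocks = [0.25, -1.0, 0.75, 0.5, -0.5]     # hypothetical a_t values for t = 1..5

# Z_t = beta0 + beta1 * t + a_t with coded time t = 1, 2, ...
z = [beta0 + beta1 * t + a for t, a in enumerate(shocks, start=1)]

# First differences W_t = Z_t - Z_{t-1}, and the closed form beta1 - a_{t-1} + a_t.
w = [b - a for a, b in zip(z, z[1:])]
expected = [beta1 - a_prev + a for a_prev, a in zip(shocks, shocks[1:])]

# Var(W_t) / sigma_a^2 is the sum of squared weights on a_t and a_{t-1}: 1^2 + (-1)^2.
variance_ratio = sum(c ** 2 for c in (1.0, -1.0))

print(w)
print(expected)
print(variance_ratio)
```

Differencing removes the trend but doubles the noise variance and leaves a moving-average term with weight −1 on $a_{t-1}$, which is one way to see why differencing can be a poor choice when a deterministic trend fits.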
[Figure: Simulated data, w = (1-B)^1 Data. Function iden Output for the First Differences of Simulated Series E.]

[Figure: Simulated Time Series #A, w = Sales. Function iden Output for Simulated Series A.]

Module 6 Segment 7
Beware of Differencing When You Should Not

Beware of Over Differencing

Trivial model:
$$
Z_t = \theta_0 + a_t, \qquad a_t \sim \text{NID}(0, \sigma_a^2)
$$

First difference of $Z_t$:
$$
\begin{aligned}
W_t = (1 - B)Z_t &= Z_t - Z_{t-1} \\
&= [\theta_0 + a_t] - [\theta_0 + a_{t-1}] \\
&= -a_{t-1} + a_t
\end{aligned}
$$
is a noninvertible MA(1)!

Second difference of $Z_t$:
$$
\begin{aligned}
W_t = (1 - B)^2 Z_t &= Z_t - 2Z_{t-1} + Z_{t-2} \\
&= -2a_{t-1} + a_{t-2} + a_t
\end{aligned}
$$
is a noninvertible MA(2)!!

[Figure: Simulated Series F (from Trivial Model), w = Data. Function iden Output for Simulated Series F.]

[Figure: Simulated Series F (from Trivial Model), w = (1-B)^1 Data. Function iden Output for the First Differences of Simulated Series F.]

[Figure: Simulated Series F (from Trivial Model), w = (1-B)^2 Data. Function iden Output for the Second Differences of Simulated Series F.]

Module 6 Segment 8
Using a Constant Term After Differencing and Exponential Smoothing

When to Include $\theta_0$ in an ARIMA Model

• With no differencing ($d = 0$), include a constant term in the model to allow estimation of the process mean.
• If there is differencing ($d = 1$), then a constant term should be included only if there is need for, or evidence of, a deterministic trend. With stationary $W_t$, $E(W_t) = 0$, and
$$
Z_t = Z_{t-1} + \theta_0 + W_t
$$
the deterministic trend is $\theta_0$ each time period. Check:
  ◮ $H_0: \mu_W = 0$ by looking at $t = (\bar{W} - 0)/S_{\bar{W}}$
  ◮ The physical nature of the data-generating process
• Use of a constant term after differencing is rare.
• Situation is similar, but more complicated, with higher order differencing.

Relationship Between IMA(1,1) and Exponential Smoothing

IMA(1,1) model, unscrambled:
$$
Z_t = Z_{t-1} - \theta_1 a_{t-1} + a_t
$$

IMA(1,1) forecast:
$$
\begin{aligned}
\hat{Z}_t &= Z_{t-1} - \hat{\theta}_1 \hat{a}_{t-1} \\
&= Z_{t-1} - \hat{\theta}_1 (Z_{t-1} - \hat{Z}_{t-1}) \\
&= (1 - \hat{\theta}_1) Z_{t-1} + \hat{\theta}_1 \hat{Z}_{t-1} \\
&= \alpha Z_{t-1} + (1 - \alpha) \hat{Z}_{t-1} \\
&= \alpha Z_{t-1} + \alpha(1 - \alpha) Z_{t-2} + \alpha(1 - \alpha)^2 Z_{t-3} + \cdots
\end{aligned}
$$

where $\alpha = 1 - \theta_1$. Usually $0.01 < \alpha < 0.1$. This shows why the IMA(1,1) forecast equation is sometimes called "exponential smoothing" or "exponentially weighted moving average" (EWMA).

Issues in Applying Exponential Smoothing

• Choice of the smoothing constant $\alpha$.
• Start-up value for the forecasts?
• Single, double, or triple exponential smoothing? (Single exponential smoothing is equivalent to IMA(1,1), double exponential smoothing is equivalent to IMA(2,2), ...)
• Seasonality (Winters' method)
• Prediction intervals or bounds?
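The equivalence between the IMA(1,1) forecast and exponential smoothing can be sketched by running both recursions side by side. This is a minimal illustration under assumptions: the function names are hypothetical, the start-up forecast is set to the first observation (one possible answer to the start-up question above), and `alpha = 0.25` is chosen only so the arithmetic is exact, not as a recommended smoothing constant.

```python
def ewma_forecasts(z, alpha, start):
    """One-step forecasts zhat_t = alpha * z_{t-1} + (1 - alpha) * zhat_{t-1}; `start` is the first forecast."""
    forecasts = [start]
    for obs in z:
        forecasts.append(alpha * obs + (1.0 - alpha) * forecasts[-1])
    return forecasts  # forecasts[i] is the forecast made before seeing z[i]

def ima11_forecasts(z, theta1, start):
    """Same forecasts in IMA(1,1) form: zhat_t = z_{t-1} - theta1 * (z_{t-1} - zhat_{t-1})."""
    forecasts = [start]
    for obs in z:
        residual = obs - forecasts[-1]            # estimate of a_{t-1}
        forecasts.append(obs - theta1 * residual)
    return forecasts

z = [100.0, 110.0, 108.0, 115.0, 119.0]   # first five gambler's cumulative winnings
alpha = 0.25                               # hypothetical smoothing constant
f_ewma = ewma_forecasts(z, alpha, start=z[0])
f_ima = ima11_forecasts(z, 1.0 - alpha, start=z[0])

print(f_ewma)
print(f_ima)
```

Both lists coincide because substituting $\theta_1 = 1 - \alpha$ turns one recursion into the other; only the start-up value and the choice of $\alpha$ remain as modeling decisions.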