Belief space planning assuming maximum likelihood observations

Robert Platt

Russ Tedrake, Leslie Kaelbling, Tomas Lozano-Perez

Computer Science and Artificial Intelligence Laboratory,

Massachusetts Institute of Technology

June 30, 2010

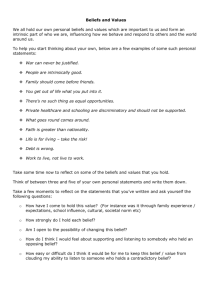

Planning from a manipulation perspective

(image from www.programmingvision.com, Rosen Diankov)

• The “system” being controlled includes both the robot and the objects being manipulated.

• Motion plans are useless if the environment is misperceived.

• Perception can be improved by interacting with the environment: move the head, push objects, feel objects, etc.

The general problem: planning under uncertainty

Planning and control with:

1. Imperfect state information

2. Continuous states, actions, and observations

(as in most robotics problems)

N. Roy, et al.

Strategy: plan in belief space

1. Redefine problem: (underlying state space) → (belief space), the “belief” state space

2. Convert underlying dynamics into belief space dynamics

3. Create plan

(Figure labels: start, goal)

Related work

1. Prentice, Roy, The Belief Roadmap: Efficient Planning in Belief Space by Factoring the Covariance, IJRR 2009

2. Porta, Vlassis, Spaan, Poupart, Point-based value iteration for continuous POMDPs, JMLR 2006

3. Miller, Harris, Chong, Coordinated guidance of autonomous UAVs via nominal belief-state optimization, ACC 2009

4. Van den Berg, Abbeel, Goldberg, LQG-MP: Optimized path planning for robots with motion uncertainty and imperfect state information, RSS 2010

Simple example: Light-dark domain

Underlying system: $x_{t+1} = x_t + u_t$ (underlying state $x_t$, action $u_t$)

Observations: $z_t = x_t + w_t$ (observation $z_t$, observation noise $w_t$)

State dependent noise: $w_t \sim N\left(0, (x - 5)^2\right)$

(Figure labels: “dark”, “light”, start, goal)
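The light-dark model can be tried out with a small simulation. The sketch below assumes a scalar state, additive dynamics $x_{t+1} = x_t + u_t$, and observation noise variance $(x - 5)^2$; the start position, action sequence, and random seed are hypothetical values chosen only for illustration.

```python
import random

LIGHT_X = 5.0  # observation noise vanishes here, per the (x - 5)^2 variance


def step(x, u):
    """Process dynamics x_{t+1} = x_t + u_t (deterministic)."""
    return x + u


def noise_variance(x):
    """State-dependent observation noise variance (x - 5)^2."""
    return (x - LIGHT_X) ** 2


def observe(x, rng):
    """Observation z_t = x_t + w_t with w_t drawn from N(0, (x_t - 5)^2)."""
    sigma = noise_variance(x) ** 0.5
    return x + rng.gauss(0.0, sigma)


rng = random.Random(0)  # fixed seed, chosen only for repeatability
x = 2.0                 # hypothetical start in the "dark"
for u in (1.0, 1.0, 1.0):
    x = step(x, u)
    # Observations become sharper as the state approaches the light at x = 5.
    print(x, noise_variance(x), observe(x, rng))
```

Moving toward $x = 5$ drives the noise variance to zero, which is why an information gathering plan would detour through the light before heading to the goal.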

Nominal information gathering plan

Belief system

Underlying system:

$x_{t+1} = f(x_t, u_t)$ (deterministic process dynamics; state $x_t$, action $u_t$)

$z_t = g(x_t) + w(x_t)$ (stochastic observation dynamics; observation $z_t$)

Belief system:

• Approximate belief state as a Gaussian: $b_t = (m_t, \Sigma_t)$, with $P(x \mid b_t) = N(x; m_t, \Sigma_t)$

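Similarity to an underactuated mechanical system

Acrobot:

• State space: $x = (\theta, \dot{\theta})$

• Planning objective: $x_g = (\theta_g, 0)$

• Underactuated dynamics: $\ddot{\theta} = f(\theta, \dot{\theta}, u)$

Gaussian belief:

• State space: $b = (m, \Sigma)$

• Planning objective: $b_g = (x_g, 0)$

• Underactuated dynamics: ???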

Belief space dynamics

Generalized Kalman filter: $(m_{t+1}, \Sigma_{t+1}) = F(z_t, u_t, m_t, \Sigma_t)$

(Figure labels: start, goal)
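To make the filter map $F$ concrete, here is a minimal sketch of one update for a scalar version of the light-dark domain. It assumes zero process noise, an observation taken after the move, and noise variance $(x - 5)^2$ evaluated at the predicted mean; the function names are illustrative and not from the slides.

```python
def noise_variance(x):
    """Observation noise variance (x - 5)^2 from the light-dark example."""
    return (x - 5.0) ** 2


def belief_update(z, u, m, var):
    """One filter step F(z, u, m, var) -> (m_next, var_next) for a scalar state."""
    # Predict: x' = x + u with no process noise, so the variance is unchanged.
    m_pred = m + u
    var_pred = var
    # Assumption: the noise variance is evaluated at the predicted mean.
    w = noise_variance(m_pred)
    if var_pred + w == 0.0:
        return m_pred, 0.0  # already certain and noise-free; nothing to correct
    gain = var_pred / (var_pred + w)        # Kalman gain
    m_next = m_pred + gain * (z - m_pred)   # correct the mean with the innovation
    var_next = (1.0 - gain) * var_pred      # shrink the variance
    return m_next, var_next


# Hypothetical numbers: start at mean 2 with unit variance, move by 1, observe 4.
print(belief_update(z=4.0, u=1.0, m=2.0, var=1.0))
```

The mean update depends on the observation $z$, while the variance update here depends only on where the mean ends up.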

Belief space dynamics are stochastic

Generalized Kalman filter: $(m_{t+1}, \Sigma_{t+1}) = F(z_t, u_t, m_t, \Sigma_t)$

(Figure labels: start, goal, unexpected observation)

BUT: we don’t know the observations at planning time

Plan for the expected observation

Generalized Kalman filter: $(m_{t+1}, \Sigma_{t+1}) = F(z_t, u_t, m_t, \Sigma_t)$

Plan for the expected observation: $(m_{t+1}, \Sigma_{t+1}) = F(\hat{z}_t, u_t, m_t, \Sigma_t) + n$

• $n$: model observation stochasticity as Gaussian noise

We will use feedback and replanning to handle departures from the expected observation.
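When the observation equals its expected value, the innovation term of the filter is zero and the belief dynamics become deterministic. The sketch below illustrates this for a scalar light-dark model, assuming zero process noise and noise variance $(x - 5)^2$ at the new mean; `ml_belief_step` and `rollout` are hypothetical helper names.

```python
def ml_belief_step(m, var, u):
    """Belief dynamics when the observation equals its expected value."""
    m_next = m + u               # zero innovation: only the action moves the mean
    w = (m_next - 5.0) ** 2      # light-dark noise variance at the new mean
    if var + w == 0.0:
        return m_next, 0.0
    return m_next, var * w / (var + w)  # variance still shrinks deterministically


def rollout(m, var, actions):
    """Predicted beliefs for an action sequence, starting from (m, var)."""
    beliefs = [(m, var)]
    for u in actions:
        m, var = ml_belief_step(m, var, u)
        beliefs.append((m, var))
    return beliefs


# Stepping toward the light (x = 5) reduces variance more than stepping away.
print(ml_belief_step(2.0, 1.0, 1.0))
print(ml_belief_step(2.0, 1.0, -1.0))
```

Because the rollout needs no sampled observations, a planner can evaluate candidate action sequences directly.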

Belief space planning problem

Find finite horizon path, $u_{1:T}$, starting at $b_1$, that minimizes cost function:

Minimize: $J(b_1, u_{1:T}) = \sum_{i=1}^{k} n_i^T \Sigma_T n_i + \sum_{t=1}^{T} u_t^T R u_t$

First term: minimize covariance at final state

• Minimize state uncertainty along directions $n_i$

Second term: action cost

• Find least effort path

Subject to: $m_T = x_{goal}$

• Trajectory must reach this final state
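The cost can be evaluated with a few lines of code. This is a sketch under the assumption that the cost is the sum of the final covariance projected onto each direction $n_i$ plus a quadratic action penalty with weight matrix $R$; matrices are plain nested lists and all numbers are made up for illustration.

```python
def quad_form(v, M):
    """Return v^T M v for a vector v and a square matrix M (nested lists)."""
    n = len(v)
    return sum(v[i] * M[i][j] * v[j] for i in range(n) for j in range(n))


def plan_cost(final_cov, directions, actions, R):
    """Final-covariance term along each direction plus quadratic action cost."""
    uncertainty = sum(quad_form(n_i, final_cov) for n_i in directions)
    effort = sum(quad_form(u_t, R) for u_t in actions)
    return uncertainty + effort


# Hypothetical 2-D example: penalize variance along both axes.
final_cov = [[2.0, 0.0], [0.0, 3.0]]
directions = [[1.0, 0.0], [0.0, 1.0]]
actions = [[1.0, 0.0], [0.0, 2.0]]
R = [[0.5, 0.0], [0.0, 0.5]]
print(plan_cost(final_cov, directions, actions, R))
```

The constraint that the final mean equals the goal is not part of this function; a planner would enforce it separately.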

Existing planning and control methods apply

Now we can apply:

• Motion planning w/ differential constraints (RRT, …)

• Policy optimization

• LQR

• LQR-Trees

Planning method: direct transcription to SQP

1. Parameterize trajectory by via points.

2. Shift via points until a local minimum is reached:

• Enforce dynamic constraints during shifting

3. Accomplished by transcribing the control problem into a Sequential Quadratic Programming (SQP) problem.

• Only guaranteed to find locally optimal solutions
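As a rough illustration, the transcribed problem handed to the SQP solver might be written as follows. This assumes the via points are the beliefs $b_{2:T}$ and that the belief dynamics $F$ are evaluated at the expected observation $\hat{z}_t$; the choice of decision variables and the index range of the dynamics constraint are assumptions, not taken from the slides.

$$
\begin{aligned}
\min_{b_{2:T},\, u_{1:T}} \quad & J(b_1, u_{1:T}) \\
\text{subject to} \quad & b_{t+1} = F(\hat{z}_t, u_t, b_t), \quad t = 1, \dots, T-1, \\
& m_T = x_{goal}.
\end{aligned}
$$

Treating the dynamics as equality constraints lets the via points move independently during the search while still forming a feasible trajectory at convergence.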

Example: light-dark problem

(Figure axes: X, Y)

• In this case, covariance is constrained to remain isotropic

Replanning

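(Figure labels: original trajectory, new trajectory, $m$, $\bar{m}$, $r$, goal)

• Replan when deviation from trajectory exceeds a threshold: $(m - \bar{m})^T (m - \bar{m}) > r^2$, where $m$ is the current belief mean and $\bar{m}$ is the planned mean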
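The replanning trigger amounts to a distance check between the current belief mean and the planned one. A minimal sketch, assuming the test is squared Euclidean distance against $r^2$ and that means are given as equal-length sequences; `should_replan` is a hypothetical name.

```python
def should_replan(m, m_planned, r):
    """True when the belief mean is farther than r from the planned mean."""
    dist_sq = sum((a - b) ** 2 for a, b in zip(m, m_planned))
    return dist_sq > r ** 2


# Hypothetical 2-D means: a small deviation is tolerated, a large one is not.
print(should_replan((1.0, 1.0), (1.0, 1.5), r=1.0))
print(should_replan((0.0, 0.0), (3.0, 4.0), r=4.0))
```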

Replanning: light-dark problem

Planned trajectory

Actual trajectory

Originally planned path

Path actually followed by system

Planning vs. Control in Belief Space

Given our specification, we can also apply control methods:

• Control methods find a policy, so there is no need to replan

• A policy can stabilize a stochastic system

(Figure panels: a plan; a control policy)

Control in belief space: B-LQR

In general, finding an optimal policy for a nonlinear system is hard.

• Linear quadratic regulation (LQR) is one way to find an approximate policy

• LQR is optimal only for linear systems w/ Gaussian noise.
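For reference, the LQR computation itself is a backward Riccati recursion. The sketch below handles only a scalar linear system $x_{t+1} = a x_t + b u_t$ with quadratic cost; it does not include the linearization of belief dynamics that a belief space version would need, and the parameter names are assumptions.

```python
def lqr_gains(a, b, q, r, q_final, horizon):
    """Time-varying gains for x' = a x + b u with cost sum(q x^2 + r u^2)."""
    p = q_final                              # cost-to-go weight at the final step
    gains = []
    for _ in range(horizon):
        k = (b * p * a) / (r + b * p * b)    # feedback gain for u = -k x
        p = q + a * p * a - a * p * b * k    # Riccati recursion, one step backward
        gains.append(k)
    gains.reverse()                          # gains[0] applies at the first step
    return gains


# Hypothetical unit-weight example; early gains approach a steady-state value.
gains = lqr_gains(a=1.0, b=1.0, q=1.0, r=1.0, q_final=1.0, horizon=50)
print(gains[0], gains[-1])
```

For a long horizon the early gains settle to a constant, which is the familiar infinite-horizon LQR gain.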

Belief space LQR (B-LQR) for light-dark domain:

Combination of planning and control

Algorithm:

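1. repeat

2. $(\bar{u}_{1:T}, \bar{b}_{1:T}) = \mathrm{create\_plan}(b_1)$

3. for $t = 1:T$

4. $u_t = \mathrm{lqr\_control}(b_t, \bar{u}_t, \bar{b}_t)$

5. if $b_t$ deviates from $\bar{b}_t$ by more than a threshold, break

6. if belief mean at goal

7. halt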
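The control flow of the algorithm can be sketched as a loop that alternates planning with stabilized execution. Every callable passed in below (`create_plan`, `lqr_control`, `update_belief`, `deviates`, `at_goal`) is a hypothetical stand-in, and the `max_replans` cap is an added safeguard that is not part of the slides.

```python
def plan_and_control(b1, create_plan, lqr_control, update_belief,
                     deviates, at_goal, max_replans=100):
    """Replan-and-stabilize loop; all callable arguments are stand-ins."""
    b = b1
    for _ in range(max_replans):                    # 1. repeat
        u_bar, b_bar = create_plan(b)               # 2. nominal actions and beliefs
        for t in range(len(u_bar)):                 # 3. for t = 1:T
            u = lqr_control(b, u_bar[t], b_bar[t])  # 4. stabilizing action
            b = update_belief(b, u)                 # act, observe, and filter
            if deviates(b, b_bar[t + 1]):           # 5. left the plan: replan
                break
        if at_goal(b):                              # 6-7. halt at the goal
            return b
    return b
```

Here `b_bar` is expected to hold one more entry than `u_bar`, so that each action has a planned successor belief to compare against.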

Analysis of replanning with B-LQR stabilization

Theorem:

• Eventually (after a finite number of replanning steps), the belief state mean reaches the goal with low covariance.

Conditions:

1. Zero process noise.

2. Underlying system is passively critically stable.

3. Non-zero measurement noise.

4. SQP finds a path with length < T to the goal belief region from anywhere in the reachable belief space.

5. Cost function is of the correct form (given earlier).

Laser-grasp domain

Laser-grasp: the plan

Laser-grasp: reality

Initially planned path

Actual path

Conclusions

1. Planning for partially observable problems is one of the keys to robustness.

2. Our work is one of the few methods for partially observable planning in continuous state/action/observation spaces.

3. We view the problem as an underactuated planning problem in belief space.