Architecture of the Earth System Modeling Framework Climate Data


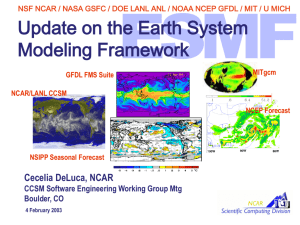

NSF NCAR | NASA GSFC | DOE LANL ANL | NOAA NCEP GFDL | MIT | U MICH

Architecture of the Earth System Modeling Framework
Cecelia DeLuca
GEM, Snowmass, CO
www.esmf.ucar.edu

[Title graphic: climate, weather, and data assimilation applications: NCAR/LANL CCSM, GFDL FMS Suite, NASA GMAO Analysis, MITgcm, GMAO Seasonal Forecast, NCEP Forecast]

Outline
• Background and Motivation
• Applications
• Architecture
• Implementation
• Status
• Future Plans
• Conclusions

Motivation for ESMF

In climate research and NWP... increased emphasis on detailed representation of individual physical processes; requires many teams of specialists to contribute components to an overall modeling system

In computing technology... increase in hardware and software complexity in high-performance computing, shift toward the use of scalable computing architectures

In software … development of frameworks, such as the GFDL Flexible Modeling System (FMS) and Goddard Earth Modeling System (GEMS), that encourage software reuse and interoperability

The ESMF is a focused community effort to tame the complexity of models and the computing environment. It leverages, unifies and extends existing software frameworks, creating new opportunities for scientific contribution and collaboration.

ESMF Project Description

GOALS: To increase software reuse, interoperability, ease of use and performance portability in climate, weather, and data assimilation applications

PRODUCTS:
• Core framework: Software for coupling geophysical components and utilities for building components
• Applications: Deployment of the ESMF in 15 of the nation’s leading climate and weather models, assembly of 8 new science-motivated applications

METRICS:
• Reuse: 15 applications use ESMF component coupling services and 3+ utilities
• Interoperability: 8 new applications composed of never-before-coupled components
• Ease of Adoption: 2 codes adopt ESMF with < 2% lines of code changed, or within 120 FTE-hours
• Performance: No more than 10% overhead in time to solution, no degradation in scaling

RESOURCES and TIMELINE: $9.8M over 3 years, starting February 2002

Modeling Applications (SOURCE: APPLICATION)

GFDL
• FMS B-grid atmosphere at N45L18
• FMS spectral atmosphere at T63L18
• FMS MOM4 ocean model at 2°x2°xL40
• FMS HIM isopycnal C-language ocean model at 1/6°x1/6°L22

MIT
• MITgcm coupled atmosphere/ocean at 2.8°x2.8°, atmosphere L5, ocean L15
• MITgcm regional and global ocean at 15kmL30

GMAO/NSIPP
• NSIPP atmospheric GCM at 2°x2.5°xL34 coupled with NSIPP ocean GCM at 2/3°x1.25°L20

NCAR/LANL
• CCSM2 including CAM with Eulerian spectral dynamics and CLM at T42L26 coupled with POP ocean and data ice model at 1°x1°L40

Data Assimilation Applications (SOURCE: APPLICATION)

GMAO/DAO
• PSAS interface layer with 200K observations/day
• CAM with finite volume dynamics at 2°x2.5°L55, including CLM

NCEP
• Global atmospheric spectral model at T170L42
• SSI analysis system with 250K observations/day, 2 tracers
• WRF regional atmospheric model at 22km resolution CONUS forecast 345x569L50

GMAO/NSIPP
• ODAS with OI analysis system at 1.25°x1.25°L20 resolution with ~10K observations/day

MIT
• MITgcm 2.8° century / millennium adjoint sensitivity

ESMF Interoperability Demonstrations (COUPLED CONFIGURATION: NEW SCIENCE ENABLED)
• GFDL B-grid atm / MITgcm ocn: Introduction of global biogeochemistry into seasonal forecasts.
• GFDL MOM4 / NCEP forecast: New seasonal forecasting system.
• NSIPP ocean / LANL CICE: Extension of seasonal prediction system to centennial timescales.
• NSIPP atm / DAO analysis: Assimilated initial state for seasonal prediction system.
• DAO analysis / NCEP model: Intercomparison of systems for NASA/NOAA joint center for satellite data assimilation.
• NCAR fvCAM / NCEP analysis: Intercomparison of systems for NASA/NOAA joint center for satellite data assimilation.
• NCAR CAM Eul / MITgcm ocn: Component exchange for improved climate prediction capability.
• NCEP WRF / GFDL MOM4: Development of hurricane prediction capability.

Interoperability Experiments Completed
• GFDL B-grid atmosphere coupled to MITgcm ocean. Atmosphere, ocean, and coupler are set up as ESMF components. Uses ESMF regridding tools.
• NCAR Community Atmospheric Model (CAM) coupled to NCEP Spectral Statistical Interpolation (SSI) System, both set up as ESMF components. Experiment utilizes same observational stream used operationally at NCEP.
• NCAR Community Atmospheric Model (CAM) coupled to MITgcm ocean. Atmosphere, ocean, and coupler are set up as ESMF components. Uses ESMF regridding tools.

[Figure panels: Temperature SSI import, Temperature SSI export, Temperature difference]

Characteristics of Weather and Climate Simulation
• Mix of global transforms and local communications
• Load balancing for diurnal cycle, event (e.g. storm) tracking
• Applications typically require 10s of GFLOPS, 100s of PEs – but can go to 10s of TFLOPS, 1000s of PEs
• Required Unix/Linux platforms span laptop to Earth Simulator
• Multi-component applications: component hierarchies, ensembles, and exchanges
• Data and grid transformations between components
• Applications may be MPMD/SPMD, concurrent/sequential, combinations
• Parallelization via MPI, OpenMP, shmem, combinations
• Large applications (typically 100,000+ lines of source code)

[Figure labels: Platforms; Seasonal Forecast component hierarchy: coupler, ocean, sea ice, assim_atm, assim, atmland, atm, land, physics, dycore]

ESMF Architecture
1. ESMF provides an environment for assembling geophysical components into applications, with support for ensembles and hierarchies.
2. ESMF provides a toolkit that components use to
   i. increase interoperability
   ii. improve performance portability
   iii. abstract common services

[Diagram: layered architecture, top to bottom]
• ESMF Superstructure: AppDriver; Component Classes: GridComp, CplComp, State
• User Code
• ESMF Infrastructure: Data Classes: Bundle, Field, Grid, Array; Utility Classes: Clock, LogErr, DELayout, Machine

Hierarchies and Ensembles
• ESMF encourages applications to be assembled hierarchically and intuitively
• Coupling interfaces are standard at each layer
• Components can be used in different contexts
• ESMF supports ensembles with multiple instances of components running sequentially (and soon, concurrently)

[Figure: Ensemble Forecast made of several assim_atm instances; Seasonal Forecast hierarchy: coupler, ocean, sea ice, assim_atm, assim, atmland, atm, land, physics, dycore]

ESMF Class Structure

Superstructure
• GridComp: Land, ocean, atm, … model
• CplComp: Xfers between GridComps
• State: Data imported or exported

Infrastructure
• Bundle: Collection of fields
• Field: Physical field, e.g. pressure
• Grid: LogRect, Unstruct, etc.
• Regrid: Computes interp weights
• Array: Hybrid F90/C++ arrays
• PhysGrid: Math description
• DistGrid: Grid decomposition
• DELayout: Communications
• Route: Stores comm paths
• Utilities: Machine, TimeMgr, LogErr, I/O, Config, Base etc.

[Diagram legend: F90, C++, Data, Communications]

ESMF Data Classes

Model data is contained in a hierarchy of multi-use classes. The user can reference a Fortran array to an Array or Field, or retrieve a Fortran array out of an Array or Field.
• Array – holds a cross-language Fortran / C++ array
• Field – holds an Array, an associated Grid, and metadata
• Bundle – collection of Fields on the same Grid
• State – contains States, Bundles, Fields, and/or Arrays
• Component – associated with an Import and Export State

ESMF DataMap Classes

These classes give the user a systematic way of expressing interleaving and memory layout, also hierarchically (partially implemented)
• ArrayDataMap – relation of array to decomposition and grid, row / column major order, complex type interleave
• FieldDataMap – interleave of vector components
• BundleDataMap – interleave of Fields in a Bundle

ESMF Standard Methods

ESMF uses consistent names and behavior throughout the framework, for example
• Create / Destroy – create a new object, e.g. FieldCreate
• Set / Get – set or get a value, e.g. ArrayGetDataPtr
• Add / Get / Remove – add to, retrieve from, or remove from a list, e.g. StateAddField
• Print – to print debugging info, e.g. BundlePrint
• And so on

Superstructure
• ESMF is a standard component architecture, similar to CCA but designed for the Earth modeling domain and for ease of use with Fortran codes
• Components and States are superstructure classes
• All couplers are the same derived type (ESMF_CplComp) and have a standard set of methods with prescribed interfaces
• All component models (atm, ocean, etc.) are the same derived type (ESMF_GridComp) and have a standard set of methods with prescribed interfaces
• Data is transferred between components using States.
• ESMF components can interoperate with CCA components – demonstrated at SC03

Becoming an ESMF GridComp
• ESMF GridComps have 2 parts: one part user code, one part ESMF code
• The ESMF part is a GridComp derived type with standard methods including Initialize, Run, Finalize
• User code must also be divided into Initialize, Run, and Finalize methods – these can be multi-phase (e.g. Run phase 1, Run phase 2)
• User code interfaces must follow a standard form – that means copying or referencing data to ESMF State structures
• Users write a public SetServices method that contains ESMF SetEntryPoint calls – these associate a user method (“POPinit”) with a framework method (the Initialize call for a GridComp named “POP”)
• Now you’re an ESMF GridComp

Infrastructure
• Data classes are Bundles, Fields, and Arrays
• Tools for expressing interleaved data structures
• Tools for resource allocation, decomposition, load balancing
• Toolkits for communications, time management, logging, IO

Virtual Machine (VM)
• VM handles resource allocation
• Elements are Persistent Execution Threads or PETs
• PETs reflect the physical computer, and are one-to-one with Posix threads or MPI processes
• Parent Components assign PETs to child Components
• PETs will soon have option for computational and latency / bandwidth weights
• The VM communications layer does simple MPI-like communications between PETs (alternative communication mechanisms are layered underneath)

DELayout
• Handles decomposition
• Elements are Decomposition Elements, or DEs (decomposition that’s 2 pieces in x by 4 pieces in y is a 2 by 4 DELayout)
• DELayout maps DEs to PETs, can have more than one DE per PET (for cache blocking, user-managed OpenMP threading)
• A DELayout can have a simple connectivity or more complex connectivity, with weights between DEs – users specify dimensions where greater connection speed is needed
• DEs will also have computation weights
• Array, Field, and Bundle methods perform inter-DE communications

ESMF Communications
• Communication methods include Regrid, Redist, Halo, Gather, Scatter, etc.
• Communication methods are implemented at multiple levels, e.g. FieldHalo, ArrayHalo
• Communication methods hide the underlying ability to switch between shared and distributed memory parallelism

Load Balancing

Three levels of graphs:
• Virtual Machine – machine-level PETs will have computational and connectivity weights
• DELayout – DE chunks have connectivity weights, will have computational weights
• Grid – grid cells will have computational and connectivity weights

Intended to support standard load balancing packages (e.g. ParMETIS) and user-developed load balancing schemes

Open Development
• Open source
• Currently ~800 unit tests and ~15 system tests are bundled with the ESMF distribution; they can be run in non-exhaustive or exhaustive modes
• Results of nightly tests on many platforms are accessible on a Test and Validation webpage
• Test coverage, lines of code, requirements status are available on a Metrics webpage
• Exhaustive Reference Manual, including design and implementation notes, is available on a Downloads and Documentation webpage
• Development is designed to allow users clear visibility into the workings and status of the system, to allow users to perform their own diagnostics, and to encourage community ownership

Port Status
• SGI
• IBM
• Compaq
• Linux (Intel, PGI, NAG, Absoft, Lahey)

ESMF Key Accomplishments
• Public delivery of prototype ESMF v1.0 in May 2003
• Monthly ESMF internal releases with steadily increasing functionality
• Completion of first 3 coupling demonstrations using ESMF in March 2004
  – NCAR CAM with NCEP SSI
  – NCAR CAM with MITgcm ocean
  – GFDL B-grid atmosphere with MITgcm ocean
  – All codes above running as ESMF components and coupled using the framework, codes available from Applications link on website
  – Other codes running as ESMF components: MOM4, GEOS-5
  – Less than 2% lines of source code change
• Delivered ESMF v2.0 in June 2004
• 3rd Community Meeting to be held on 15 July 2004 at NCAR

ESMF Priorities

Status
• Components, States, Bundles, Fields mature
• On-line parallel regridding (bilinear, 1st order conservative) completed
• Other parallel methods, e.g. halo, redist, low-level comms implemented
• Comm methods overloaded for r4 and r8
• Communications layer with uniform interface to shared / distributed memory, hooks for load balancing

Near-term priorities
• Concurrent components – currently ESMF only runs in sequential mode
• More optimized grids (tripolar, spectral, cubed sphere) and more regridding methods (bicubic, 2nd order conservative) from SCRIP
• Comms optimization and load balancing capability
• IO (based on WRF IO)
• Development schedule on-line, see Development link, SourceForge site tasks

Next Steps
– Integration with data archives and metadata standardization efforts, anticipate collaboration with Earth System Grid (ESG) and European infrastructure project PRISM
– Integration with scientific model intercomparison projects (MIPs), anticipate collaboration with the Program for Climate Model Diagnosis and Intercomparison (PCMDI), other community efforts
– Integration with visualization and diagnostic tools for end-to-end modeling support, anticipate collaboration with the Earth Science Portal (ESP)
– ESMF “vision” for the future articulated in multi-agency white paper on the Publications and Talks webpage

ESMF Multi-Agency Follow-on
• 3 ESMF FTEs at NCAR slated to have ongoing funding through core NCAR funds
• NASA commitment to follow-on support, level TBD
• DoD and NSF proposals outstanding
• Working with other agencies to secure additional funds

ESMF Overall
• Clear, simple hierarchy of data classes
• Multi-use objects mean that the same object can carry information about decomposition, communications, IO, coupling
• Tools for multithreading, cache blocking, and load balancing are being integrated into the architecture
• Objects have consistent naming and behavior across the framework

The Benefits
• Standard interfaces to modeling components promote increased interoperability between centers, faster movement of modeling components from research to operations
• The ability to construct models hierarchically enables developers to add new modeling components more systematically and easily, facilitates development of complex coupled systems
• Multi-use objects mean that the same data structure can carry information about decomposition, communications, IO, coupling – this makes code smaller and simpler, and therefore less bug-prone and easier to maintain
• Shared utilities encourage efficient code development, higher quality tools, more robust codes

More Information

ESMF website: http://www.esmf.ucar.edu

Acknowledgements

The ESMF is sponsored by the NASA Goddard Earth Science Technology Office.
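
Illustrative sketch: the component pattern

The component pattern described on the Superstructure and "Becoming an ESMF GridComp" slides (user code split into Initialize, Run, and Finalize; a SetServices routine that registers those methods; data exchanged through import and export States) can be sketched in a few lines. The sketch below is a plain Python analogue, not the ESMF API. Every name in it (`State`, `GridComp`, `set_entry_point`, `couple`, `run_coupled`, the `sst` and `heat_flux` fields, and the toy physics) is a hypothetical stand-in chosen for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class State:
    """Named data a component imports or exports (hypothetical stand-in)."""
    fields: Dict[str, float] = field(default_factory=dict)


Method = Callable[[State, State], None]
PHASES = ("initialize", "run", "finalize")


class GridComp:
    """Framework half of a component: holds States and registered methods."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.import_state = State()
        self.export_state = State()
        self._entry_points: Dict[str, Method] = {}

    def set_entry_point(self, phase: str, method: Method) -> None:
        """Associate a user method with a standard framework phase."""
        if phase not in PHASES:
            raise ValueError(f"unknown phase: {phase}")
        self._entry_points[phase] = method

    def call(self, phase: str) -> None:
        """Invoke the user method registered for the given phase."""
        self._entry_points[phase](self.import_state, self.export_state)


# User half of the components: toy physics, purely illustrative.
def ocean_init(imp: State, exp: State) -> None:
    exp.fields["sst"] = 290.0


def ocean_run(imp: State, exp: State) -> None:
    exp.fields["sst"] += 0.01 * imp.fields["heat_flux"]


def atm_init(imp: State, exp: State) -> None:
    exp.fields["heat_flux"] = 100.0


def atm_run(imp: State, exp: State) -> None:
    exp.fields["heat_flux"] = 100.0 - 0.1 * (imp.fields["sst"] - 290.0)


def no_op(imp: State, exp: State) -> None:
    pass


def ocean_set_services(comp: GridComp) -> None:
    """Public registration routine, in the spirit of SetServices."""
    comp.set_entry_point("initialize", ocean_init)
    comp.set_entry_point("run", ocean_run)
    comp.set_entry_point("finalize", no_op)


def atm_set_services(comp: GridComp) -> None:
    comp.set_entry_point("initialize", atm_init)
    comp.set_entry_point("run", atm_run)
    comp.set_entry_point("finalize", no_op)


def couple(src: GridComp, dst: GridComp, names: List[str]) -> None:
    """Coupler stand-in: copy exported fields into the importer's State."""
    for name in names:
        dst.import_state.fields[name] = src.export_state.fields[name]


def run_coupled(steps: int) -> float:
    """Drive both components sequentially and return the final ocean sst."""
    atm, ocean = GridComp("atm"), GridComp("ocean")
    atm_set_services(atm)
    ocean_set_services(ocean)
    for comp in (atm, ocean):
        comp.call("initialize")
    for _ in range(steps):
        couple(ocean, atm, ["sst"])
        atm.call("run")
        couple(atm, ocean, ["heat_flux"])
        ocean.call("run")
    for comp in (atm, ocean):
        comp.call("finalize")
    return ocean.export_state.fields["sst"]
```

Calling `run_coupled(2)` steps both toy components twice in sequential mode, which matches the slides' statement that components currently run sequentially. Because the driver only touches the registered phases and the States, either component could be swapped for another with the same interface, which is the interoperability argument the slides make.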