Mgt 540 Research Methods: Data Analysis

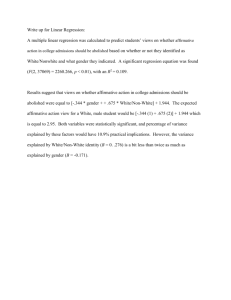

Additional “sources”
- Compilation of sources: http://lrs.ed.uiuc.edu/tseportal/datacollectionmethodologies/jin-tselink/tselink.htm
- http://web.utk.edu/~dap/Random/Order/Start.htm
- Data Analysis Brief Book (glossary): http://rkb.home.cern.ch/rkb/titleA.html
- Exploratory Data Analysis: http://www.itl.nist.gov/div898/handbook/eda/eda.htm
- Statistical Data Analysis: http://obelia.jde.aca.mmu.ac.uk/resdesgn/arsham/opre330.htm

[Figure 12.1, Sekaran/RESEARCH 4E. Copyright © 2003 John Wiley & Sons, Inc.]

Data Analysis: Get the “feel” for the data
- Get the mean, variance, and standard deviation of each variable.
- See whether, for all items, responses range over the whole scale and are not restricted to one end of it.
- Obtain the Pearson correlation among the variables under study.
- Get the frequency distribution for all the variables.
- Tabulate your data.
- Describe your sample's key characteristics (demographic details of sex composition, education, age, length of service, etc.).
- See histograms, frequency polygons, etc.

Quantitative Data
Each type of data requires different analysis method(s):
- Nominal
  - Labeling
  - No inherent “value” basis
  - Categorization purposes only
- Ordinal
  - Ranking, sequence
- Interval
  - Relationship basis (e.g. age)

Descriptive Statistics: Describing key features of data
- Central tendency: mean, median, mode
- Spread: variance, standard deviation, range
- Distribution (shape): skewness, kurtosis
- By scale type:
  - Nominal: identification / categorization only
  - Ordinal (example on pg. 139): non-parametric statistics
    - Do not assume equal intervals
    - Frequency counts
    - Averages (median and mode)
  - Interval: parametric
    - Mean, standard deviation, variance

Testing “Goodness of Fit”
- Reliability
  - Split half
  - Internal consistency
- Validity
  - Convergent
  - Discriminant
  - Factorial
- Involves correlations and factor analysis

Testing Hypotheses
Use appropriate statistical analysis:
- t-test (one- or two-tailed): test the significance of the difference between the means of two groups.
- ANOVA: test the significance of differences among the means of more than two different groups, using the F test.
- Regression (simple or multiple): establish the variance explained in the DV by the variance in the IVs.

Statistical Power
- Claiming a significant difference
- Errors in methodology
  - Type 1 error: reject the null hypothesis when you should not. Called an “alpha” error.
  - Type 2 error: fail to reject the null hypothesis when you should. Called a “beta” error.
- Statistical power refers to the ability to detect true differences, avoiding type 2 errors.
- See discussion at http://my.execpc.com/4A/B7/helberg/pitfalls/
- Depends on 4 issues:
  - Sample size
  - The effect size you want to detect
  - The alpha (type 1 error rate) you specify
  - The variability of the sample
- Too little power: overlook effect
- Too much power: any difference is significant

Parametric vs. nonparametric
- Parametric (characteristics referring to specific population parameters)
- Parametric assumptions
  - Independent samples
  - Homogeneity of variance
  - Data normally distributed
  - Interval or better scale
- Nonparametric assumptions
  - Sometimes independence of samples

t-tests (Look at t tables; p. 435)
- Used to compare two means, or one observed mean against a hypothesized mean
- For large samples t and z can be considered equivalent
- Calculate $t = \dfrac{\bar{x} - \mu}{S_{\bar{x}}}$, where $S_{\bar{x}}$ is the standard error of the mean, $S/\sqrt{n}$, and $df = n - 1$
- Statistical programs will give you a choice between a matched-pair and an independent t-test.
- Your sample and research design determine which of the two you will use.

z-test for Proportions (Look at t tables; p. 435)
- When data are nominal:
  - Describe by counting occurrences of each value
  - From counts, calculate proportions
  - Compare proportion of occurrence in sample to proportion of occurrence in population
- Hypothesis testing allows only one of two outcomes: success or failure
- Comparing sample proportion to the population proportion:
  - $H_0: \pi = k$, where $k$ is a value between 0 and 1
  - $H_1: \pi \neq k$
  - $z = \dfrac{p - \pi}{\sigma_p} = \dfrac{p - \pi}{\sqrt{\pi(1 - \pi)/n}}$
  - Equivalent to $\chi^2$ for $df = 1$

Chi-Square Test (sampling distribution)
- One sample
  - Measures sample variance
  - Squared deviations from the mean, based on the normal distribution
- Nonparametric
  - Compare expected with observed proportion
  - $H_0$: observed proportion = expected proportion
  - $df$ = number of data points (categories, cells) $k$ minus 1
  - $\chi^2 = \sum \dfrac{(O - E)^2}{E}$

Univariate z Test
- Test a guess about a proportion against an observed sample; e.g., MBAs constitute 35% of the managerial population
  - $H_0: \pi = .35$
  - $H_1: \pi \neq .35$ (two-tailed test suggested)

Univariate Tests
- Some univariate tests are different in that they are among statistical procedures where you, the researcher, set the null hypothesis.
- In many other statistical tests the null hypothesis is implied by the test itself.

Contingency Tables
- Relationship between nominal variables: http://www.psychstat.smsu.edu/introbook/sbk28m.htm
- Relationship between subjects' scores on two qualitative or categorical variables (e.g., early childhood intervention)
- If the columns are not contingent on the rows, then the row and column frequencies are independent.
- The test of whether the columns are contingent on the rows is called the chi-square test of independence.
- The null hypothesis is that there is no relationship between row and column frequencies.
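
The chi-square test of independence described above can be sketched in a few lines of Python. This is a minimal illustration using only the standard library, not part of the original slides: the counts in `observed` are hypothetical, the function name `chi_square_independence` is an illustrative choice, and the p-value shortcut shown applies only when df = 1 (where chi-square equals z squared).

```python
import math


def chi_square_independence(table):
    """Return (chi2, df) for a table of observed counts given as a list of rows."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)

    chi2 = 0.0
    for i, row in enumerate(table):
        for j, observed_count in enumerate(row):
            # Expected count under H0 (rows and columns independent)
            expected = row_totals[i] * col_totals[j] / n
            chi2 += (observed_count - expected) ** 2 / expected

    df = (len(table) - 1) * (len(table[0]) - 1)
    return chi2, df


# Hypothetical 2x2 table: rows = group, columns = outcome
observed = [[30, 20],
            [20, 30]]

chi2, df = chi_square_independence(observed)

# For df = 1 only: chi2 = z**2, so the two-tailed p-value is erfc(sqrt(chi2 / 2))
p_value = math.erfc(math.sqrt(chi2 / 2))

print(f"chi2 = {chi2:.3f}, df = {df}, p = {p_value:.4f}")
```

With these made-up counts every expected cell is 25, so the statistic is 4.0 on 1 degree of freedom. That exceeds the usual 3.841 critical value at alpha = .05, so the null hypothesis of no relationship would be rejected. For larger tables, the statistic would be compared against a chi-square table for the corresponding df.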
Correlations
- A statistical summary of the degree and direction of association between two variables
- Correlation itself does not distinguish between independent and dependent variables
- Most common: Pearson’s r
- Used when you believe that a linear relationship exists between two variables
- The range is from –1 to +1
- $R^2$, the coefficient of determination, is the % of variance explained in each variable by the other
- $r = S_{xy} / (S_x S_y)$, the covariance between x and y divided by the product of their standard deviations
- Calculations needed:
  - The means, $\bar{x}$ and $\bar{y}$
  - Deviations from the means, $(x - \bar{x})$ and $(y - \bar{y})$, for each case
  - The squares of the deviations from the means for each case, $(x - \bar{x})^2$ and $(y - \bar{y})^2$, to ensure positive distance measures when added
  - The cross product for each case, $(x - \bar{x})(y - \bar{y})$
- The null hypothesis for correlations is $H_0: \rho = 0$ and the alternative is usually $H_1: \rho \neq 0$
- However, if you can justify it prior to analyzing the data, you might also use $H_1: \rho > 0$ or $H_1: \rho < 0$, a one-tailed test

Correlations: Alternative measures
- Spearman rank correlation, $r_{ranks}$
  - $r_{ranks}$ and $r$ are nearly always equivalent measures for the same data (even when not, the differences are trivial)
- Phi coefficient, $r_\Phi$, when both variables are dichotomous; again, it is equivalent to Pearson’s r
- Point-biserial, $r_{pb}$, when correlating a dichotomous with a continuous variable
- If a scatterplot shows a curvilinear relationship there are two options:
  - A data transformation, or
  - Use the correlation ratio, $\eta^2$ (eta-squared): $\eta^2 = 1 - \dfrac{SS_{within}}{SS_{total}}$

ANOVA
- For two groups only, the t-test and ANOVA yield the same results
- You must do paired comparisons when working with three or more groups to know where the differences among the means lie

Multivariate Techniques
- Dependent variable
  - Regression in its various forms
  - Discriminant analysis
  - MANOVA
- Classificatory or data reduction
  - Cluster analysis
  - Factor analysis
  - Multidimensional scaling

Linear Regression
- We would like to be able to predict y from x
- Simple linear regression with raw scores:
  - y = dependent variable
  - x = independent variable
  - b = regression coefficient = $r_{xy}\,\dfrac{s_y}{s_x}$
  - c = a constant term
- The general model is $y = bx + c\ (+\,e)$
- The statistic for assessing the overall fit of a regression model is $R^2$, the overall % of variance explained by the model
- $R^2 = 1 - \dfrac{\text{unpredictable variance}}{\text{total variance}} = \dfrac{\text{predictable variance}}{\text{total variance}} = 1 - \dfrac{s_e^2}{s_y^2}$, where $s_e^2$ is the variance of the error or residual
- Multiple regression: more than one predictor, $y = b_1x_1 + b_2x_2 + c$
  - Each regression coefficient b is assessed independently for its statistical significance; $H_0: b = 0$
  - So, in a statistical program’s output, a statistically significant b rejects the notion that the variable associated with b contributes nothing to predicting y
  - $R^2$ still tells us the amount of variation in y explained by all of the predictors (x) together
  - The F-statistic tells us whether the model as a whole is statistically significant
- Several other types of regression models are available for data that do not meet the assumptions needed for least-squares models (such as logistic regression for dichotomous dependent variables)

Regression by SPSS & other Programs
Methods for developing the model:
- Stepwise: lets the computer try to fit all chosen variables, leaving out those not significant and reexamining variables in the model at each step
- Enter: researcher specifies that all variables will be used in the model
- Forward, backward: begins with all (backward) or none (forward) of the variables and automatically adds or removes variables without reconsideration of variables already in the model

Multicollinearity
- Best regression model has uncorrelated IVs
- Model stability is low with excessively correlated IVs
- Collinearity diagnostics identify problems, suggesting variables to be dropped
- High tolerance and a low variance inflation factor are desirable

Discriminant Analysis
- Regression requires the DV to be interval or ratio
- If the DV is categorical (nominal), can use discriminant analysis
- IVs should be interval or ratio scaled
- Key result is the number of cases classified correctly

MANOVA
- Compare means on two or more DVs (ANOVA is limited to one DV)
- Pure MANOVA via SPSS only from command syntax
- Can use the general linear model though

Factor Analysis
- A data reduction technique: a large set of variables can be reduced to a smaller set while retaining the information from the original data set
- Data must be on an interval or ratio scale
- E.g., a variable called socioeconomic status might be constructed from variables such as household income, educational attainment of the head of household, and average per capita income of the census block in which the person resides

Cluster Analysis
- Cluster analysis seeks to group cases rather than variables; it too is a data reduction technique
- Data must be on an interval or ratio scale
- E.g., a marketing group might want to classify people into psychographic profiles regarding their tendencies to try or adopt new products: pioneers or early adopters, early majority, late majority, laggards

Factor vs. Cluster Analysis
- Factor analysis focuses on creating linear composites of variables
  - Number of variables with which we must work is then reduced
  - Technique begins with a correlation matrix to seed the process
- Cluster analysis focuses on cases

Potential Biases
- Asking the inappropriate or wrong research questions.
- Insufficient literature survey and hence inadequate theoretical model.
- Measurement problems.
- Samples not being representative.
- Problems with data collection:
  - researcher biases
  - respondent biases
  - instrument biases
- Data analysis biases:
  - coding errors
  - data punching & input errors
  - inappropriate statistical analysis
- Biases (subjectivity) in interpretation of results.

Questions to ask (adapted from Robert Niles)
- Where did the data come from?
- How (and by whom) were the data reviewed, verified, or substantiated?
- How were the data collected?
- How are the data presented?
  - What is the context?
  - Cherry-picking?
- Be skeptical when dealing with comparisons
  - Spurious correlations

[Figure 11.2, Sekaran/RESEARCH 4E. Copyright © 2003 John Wiley & Sons, Inc.]
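
When a reported correlation looks suspicious, one practical check is to recompute Pearson's r from the raw data, following the calculation steps listed under Correlations (means, deviations, squared deviations, cross products). The sketch below is illustrative only and is not from the original slides: the data values are made up and `pearson_r` is a hypothetical helper name. It relies on the fact that the n − 1 divisors in the covariance and the standard deviations cancel, so sums of squares and cross products are enough.

```python
import math


def pearson_r(x, y):
    """Pearson's r from deviations about the means (the n - 1 divisors cancel)."""
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n

    # Deviations from the means for each case
    dx = [xi - x_bar for xi in x]
    dy = [yi - y_bar for yi in y]

    # Sums of squared deviations and of cross products
    ss_x = sum(d * d for d in dx)
    ss_y = sum(d * d for d in dy)
    cross = sum(a * b for a, b in zip(dx, dy))

    return cross / math.sqrt(ss_x * ss_y)


# Hypothetical paired scores for five cases
x = [1, 2, 3, 4, 5]
y = [2, 4, 5, 4, 5]

r = pearson_r(x, y)
r_squared = r ** 2  # coefficient of determination

print(f"r = {r:.4f}, r^2 = {r_squared:.2f}")
```

For these made-up scores r is about 0.77 and r² is 0.60, meaning roughly 60% of the variance in each variable is shared with the other. A correlation of this size says nothing by itself about causation, which is the point of the "spurious correlations" caution above.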