View File

advertisement

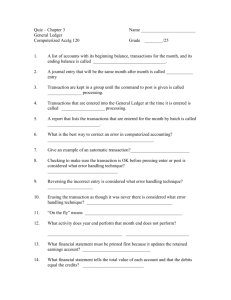

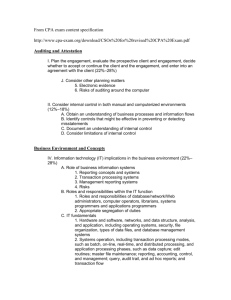

Data & Database Administration Instructor: Engr. Sana Ziafat Database Recovery and Backup Databases are often damaged or lost because of system problems that may be caused by: Human error, Hardware failure, Incorrect or invalid data, Program errors, Viruses, Network failures, Conflicting transactions or, Natural disasters Mechanisms for restoring a database quickly and accurately after loss or damage are known as Database recovery. Back-up Facilities Automatic dump facility that produces backup copy of the entire database Periodic backup (e.g. nightly, weekly) Stored in secure, off-site location Incremental back-up Cold backup database is shut down during backup Hot backup selected portion is shut down and backed up at a given time Back-up strategies based on demands Backup Facilities (Contd.) Backups of static/less frequently changing data may be taken less often. Incremental backups which record changes made since last full backup, may be taken on interim basis. Incremental backups take lesser time. Journalizing Facilities A DBMS must provide journalizing facilities to keep an audit trail of transactions and database changes. Transaction: a discrete unit of work that must be completely processed or not processed at all within a computer system. e.g., entering a customer record In the event of failure, a consistent database state can be re-established by using the information in the journals together with the most recent complete backup. There are two types of journals or logs: Transaction log Database change log Journalizing Facilities Audit trail of transactions and database updates Kept on disk or tape; Must be backed-up Transaction log record of essential data for each transaction processed against the database Example of data in the transaction log? Database change log–images of updated data Before-image–copy before modification After-image–copy after modification Produces an audit trail Journalizing Facilities (Contd.) Transaction log Data typically recorded for a transaction include transaction code or ID Action or type of transaction (e.g. insert) Time of transaction Terminal number or user ID Input data values Tables and records accessed Records modified And possibly the old and new field values Figure - Database audit trail Transaction Recovery action DBMS Effect of transaction or recovery action Database (current) Database (backup) Copy of transaction Transaction Log Copy of database affected by transaction Database Change log Checkpoint Facilities A facility by which DBMS periodically refuses to accept any new transactions. All transactions in progress are completed and the journal files are updates. At this point, system is in a quiet state, and the database & transaction logs are synchronized. The DBMS writes a special record (checkpoint record) to log file which is like a snapshot of the state of database. The checkpoint record contains info necessary to restart the system. Checkpoint Facilities (Contd.) Any dirty blocks (memory pages containing changes not yet written to the disks) are written from memory to the disk storage, ensuring that all changes made prior to taking checkpoint have been written to long-term storage. DBMS may perform checkpoints automatically or in response to user application programs. Checkpoints should be taken frequently (several times an hour). In case of failures, it is often possible to resume processing from the most recent checkpoint. Thus only few minutes processing work must be repeated, compared with several hours for a complete restart of the day’s processing. Recovery Manager It is a module of the DBMS which restores the database to a correct condition when a failure occurs and which resumes processing user requests. The type of restart used depends on the nature of the failure. Recovery manager uses the logs as well as the backup copy to restore the database Recovery and Restart Procedures 1. 2. Disk Mirroring–switch between identical copies of databases: fastest recovery; high availability of data required Restore/Rerun–reprocess transactions against the backup copy; simple, but two disadvantages/last resort: Sequence of transaction Time to reprocess transaction Transaction Integrity–commit or abort all transaction changes (business transactions are well-defined sequence of steps) Define transaction boundaries: BEGIN TRANSACTION & COMMIT Transactions should follow 4 properties. Transaction ACID Properties Atomic Transaction cannot be subdivided Consistent Constraints don’t change from before transaction to after transaction. Example: inventory level Isolated Database changes not revealed to users until after transaction has completed; know on-hand inventory after inventory transaction is completed. Durable Database changes are permanent Recovery and Restart Procedures Backward Recovery (Rollback) apply before- images Reverse the changes made Forward Recovery (Roll Forward)–apply the most recent after-images (much faster and more accurate than restore/rerun) Figure Basic recovery techniques a) Rollback Figure Basic recovery techniques (cont.) b) Rollforward Database Failure Responses (1) Aborted transactions Transaction terminated abnormally; human error, hardware failure of loss of transmission etc. Preferred recovery: rollback Automatically done by DBMS Incorrect data Difficult to detect; Need human intervention, e.g. wrong grades or payment Preferred recovery: rollback Alternative 1: rerun transactions not including inaccurate data updates Alternative 2: compensating transactions, human intervention to correct the error Database Failure Responses (2) System failure (database intact) System crash due to power loss, system software failure Preferred recovery: switch to duplicate database Alternative 1: rollback Alternative 2: restart from checkpoint Database destruction (database is lost, destroyed) Preferred recovery: switch to duplicate database Alternative 1: rollforward to the state before loss Alternative 2: reprocess transactions What is Concurrency Control? The process of managing simultaneous operations against a database so that data integrity is maintained and the operations do not interfere with each other in a multi-user environment. Background Most DBMS run in a multi-user environment, where several users are able to share data contained in a database. No data integrity problems occur when data is being read. But problems can arise when several users are updating data. When more than one transaction is being processed against a DB at the same time, the transactions are considered to be concurrent. The actions that must be made to ensure data integrity are called concurrency control actions. Background (Contd.) Remember that CPU can process only one instruction at a time. As new transactions are submitted while other processing is occurring against the database, the transactions are usually interleaved. The CPU switches among the transactions so that some portion of each transaction is performed as the CPU addresses each transaction in turn. Problems without Concurrency Control Lost Updates problem can occur when multiple users attempt to update database. Example Two users with joint account trying to withdraw cash at the same time using ATM at different locations. User A 1.Read account balance($1000) User B 1.Read account balance ($1000) 2.Withdraw $200 time 2.Withdraw $300 3.Write account balance($800) 3.Write account balance($700) ERROR Problems without Concurrency Control Inconsistent read problems can occur when one user reads data that has been partially updated by another user. This read will be incorrect, also called dirty read or unrepeatable read. Serializabilty Concurrent transactions need to be processed in isolation so that they do not interfere with each other. If one transaction were entirely processes at a time before another one, no interference would occur. Procedures which emulate this are called serializable. Serializable schedules process transactions that will give results as if they had been processed one after the other. Schedules are designed such that transactions that will not interfere with each other can be run in parallel, e.g., transactions that access data from different tables in a database will not conflict with each other. Serializability is achieved by different ways, but locking mechanisms are the most common. Locking Locking implies that any data retrieved by a user for updating must be locked, or denied to other users, until the update is completed or aborted. Locking mechanism enforces a sequential updating process that prevents erroneous updates. It is the pessimistic approach of concurrency control Concurrency Control through Locking User A 1.Read account balance 2.Lock account balance User B 1.Read account balance (denied) 2.Read account balance($1000) 3.Write account balance($800) time 2.Withdraw $200 4. Unlock account balance 2.Lock account balance 3.Read account balance($800) 4.Withdraw $300 5.Write account balance($500) 6. Unlock account balance Locking Levels There are different locking levels (granularity) Database Entire DB is locked & becomes unavailable. This level can be used during backups Table Entire table containing a requested record is locked. This level can be used for bulk updates that will update entire table (e.g., salary raise) Block or page The physical storage block (or page) containing the requested record is locked. This level is commonly implemented. Record level Only the requested record (row) is locked. All other records within table are available. Field level Only the particular field (column) in the requested record is Types of locks Shared (S locks or read locks) Allows other transactions to read but not update a record or resource. Placing a shared lock prevents another user from placing an exclusive lock, but not a shared lock, on that record. Exclusive (X locks or write locks) Prevent other transaction from reading and therefore updating a record until unlocked. Should be placed when updating records. Placing an exclusive lock on a record prevents another user from placing any type of lock on that record Deadlocks Locking may prevent erroneous updates but can lead to deadlocks problem. Deadlock: When two or more transactions lock a common resource, each one of them waits for the other one to unlock that resource. Deadlock prevention: users must lock all required records at the beginning of the transaction. Deadlock resolution: the DBMS detects and breaks the deadlock by backing out of the the deadlocked transactions. Any changes made by that transaction up to the time of deadlock are removed, The transaction is restarted when the resources become available. Versioning Optimistic approach of concurrency control Each transaction is restricted to a view of a database as of the time that transaction started and when a transaction modifies a record, the DBMS creates a new record version instead of overwriting the old record.