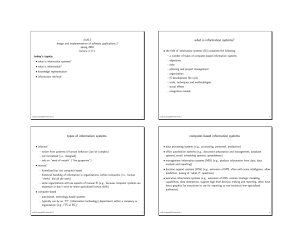

Lemur Toolkit Introduction
http://net.pku.edu.cn/~wbia
彭波 (Peng Bo), pb@net.pku.edu.cn
School of Information Science and Technology, Peking University
3/21/2011

Recap: Information Retrieval Models
- Vector Space Model
- Probabilistic models
- Language model

Some formulas for Sim (VSM)
Here a_i and b_i are the weights of term i in the document D and the query Q.
- Dot product: $\mathrm{Sim}(D,Q) = \sum_i a_i b_i$
- Cosine: $\mathrm{Sim}(D,Q) = \dfrac{\sum_i a_i b_i}{\sqrt{\sum_i a_i^2 \cdot \sum_i b_i^2}}$
- Dice: $\mathrm{Sim}(D,Q) = \dfrac{2 \sum_i a_i b_i}{\sum_i a_i^2 + \sum_i b_i^2}$
- Jaccard: $\mathrm{Sim}(D,Q) = \dfrac{\sum_i a_i b_i}{\sum_i a_i^2 + \sum_i b_i^2 - \sum_i a_i b_i}$
[Figure: document vector D and query vector Q separated by angle θ in term space (axes t1, t3).]

BM25 (Okapi system) – Robertson et al.
Considers tf, qtf, and document length:

$$\mathrm{Score}(D,Q) = \sum_{t_i \in Q} c_i \, \frac{(k_1 + 1)\, tf_i}{K + tf_i} \cdot \frac{(k_3 + 1)\, qtf_i}{k_3 + qtf_i} \;+\; k_2 \, |Q| \, \frac{avdl - dl}{avdl + dl}$$

$$K = k_1 \left( (1 - b) + b \, \frac{dl}{avdl} \right)$$

- The sum over query terms holds the TF factors; the final term is the document length normalization.
- k1, k2, k3, b: parameters
- qtf: query term frequency
- dl: document length
- avdl: average document length

Standard Probabilistic IR
[Figure: an information need is expressed as a query, which is matched against each document d1, d2, …, dn of the document collection through P(R | Q, d).]

IR based on Language Model (LM)
[Figure: an information need generates a query; each document d1, d2, …, dn of the collection has its own model M_d1, M_d2, …, M_dn, and documents are scored by P(Q | M_d).]
A query generation process:
- For an information need, imagine an ideal document.
- Imagine what words could appear in that document.
- Formulate a query using those words.

Language Modeling for IR
- Estimate a multinomial probability distribution from the text.
- Smooth the distribution with one estimated from the entire collection:
  $P(w \mid D) = (1 - \lambda)\, P(w \mid D) + \lambda\, P(w \mid C)$
  (on the right-hand side, P(w|D) is the unsmoothed document estimate).
- Query likelihood: estimate the probability that the document generated the query terms.
  $P(Q \mid D) = \prod_{q \in Q} P(q \mid D)$
- Kullback-Leibler divergence: estimate models for the document and the query, then compare them.
  $KL(Q \,\|\, D) = \sum_w P(w \mid Q) \log \dfrac{P(w \mid Q)}{P(w \mid D)}$

Question
- Among the three classic information retrieval models, which one is your best choice when designing your retrieval system?
- How can you tune the model parameters to achieve optimized performance?
- When you have a new idea on a retrieval problem, how can you prove it?

A Brief History of IR
Slides from Prof.
Ray Larson, University of California, Berkeley, School of Information
http://courses.sims.berkeley.edu/i240/s11/

Experimental IR systems
- Probabilistic indexing: Maron and Kuhns, 1960
- SMART: Gerard Salton at Cornell; vector space model, 1970s
- SIRE at Syracuse
- I3R: Croft
- Cheshire I (1990)
- TREC: 1992
- Inquery
- Cheshire II (1994)
- MG (1995?)
- Lemur (2000?)

IS 240 – Spring 2011

Historical Milestones in IR Research
- 1958 Statistical Language Properties (Luhn)
- 1960 Probabilistic Indexing (Maron & Kuhns)
- 1961 Term association and clustering (Doyle)
- 1965 Vector Space Model (Salton)
- 1968 Query expansion (Rocchio, Salton)
- 1972 Statistical Weighting (Sparck Jones)
- 1975 2-Poisson Model (Harter, Bookstein, Swanson)
- 1976 Relevance Weighting (Robertson, Sparck Jones)
- 1980 Fuzzy sets (Bookstein)
- 1981 Probability without training (Croft)

Historical Milestones in IR Research (cont.)
- 1983 Linear Regression (Fox)
- 1983 Probabilistic Dependence (Salton, Yu)
- 1985 Generalized Vector Space Model (Wong, Raghavan)
- 1987 Fuzzy logic and RUBRIC/TOPIC (Tong, et al.)
- 1990 Latent Semantic Indexing (Dumais, Deerwester)
- 1991 Polynomial & Logistic Regression (Cooper, Gey, Fuhr)
- 1992 TREC (Harman)
- 1992 Inference networks (Turtle, Croft)
- 1994 Neural networks (Kwok)
- 1998 Language Models (Ponte, Croft)

Information Retrieval Research – Historical View
- Boolean model, statistics of language (1950s)
- Vector space model, probabilistic indexing, relevance feedback (1960s)
- Probabilistic querying (1970s)
- Fuzzy set/logic, evidential reasoning (1980s)
- Regression, neural nets, inference networks, latent semantic indexing, TREC (1990s)
Industry
- DIALOG, LexisNexis, STAIRS (Boolean based)
- Information industry (O($B))
- Verity TOPIC (fuzzy logic)
- Internet search engines (O($100B?)) (vector space, probabilistic)

Research Systems Software
- INQUERY (Croft)
- OKAPI (Robertson)
- PRISE (Harman): http://potomac.ncsl.nist.gov/prise
- SMART (Buckley)
- MG (Witten, Moffat)
- CHESHIRE (Larson): http://cheshire.berkeley.edu
- LEMUR toolkit
- Lucene
- Others

Lemur Project
Some slides from Don Metzler, Paul Ogilvie & Trevor Strohman

Zoology 101
- Lemurs are primates found only in Madagascar.
- 50 species (17 are endangered)
- Ring-tailed lemurs (Lemur catta)

Zoology 101
- The indri is the largest type of lemur.
- When first spotted, the natives yelled "Indri! Indri!"
- Malagasy for "Look! Over there!"

About The Lemur Project
The Lemur Project was started in 2000 by the Center for Intelligent Information Retrieval (CIIR) at the University of Massachusetts, Amherst, and the Language Technologies Institute (LTI) at Carnegie Mellon University. Over the years, a large number of UMass and CMU students and staff have contributed to the project. The project's first product was the Lemur Toolkit, a collection of software tools and search engines designed to support research on using statistical language models for information retrieval tasks.
Later the project added the Indri search engine for large-scale search, the Lemur Query Log Toolbar for capture of user interaction data, and the ClueWeb09 dataset for research on web search.

Installation
http://www.lemurproject.org
Linux, OS X:
    Extract software/lemur-4.12.tar.gz
    ./configure --prefix=/install/path
    make
    make install
Windows:
    Run software/lemur-4.12-install.exe
    Documentation in windoc/index.html

Installation
- Use Lemur-4.12.
- A Java runtime (JDK 6) is needed for the evaluation tool.
- Environment variable: PATH
  - Linux: modify ~/.bash_profile
  - Windows: MyComputer/Properties…

Indexing
- Document Preparation
- Indexing Parameters
- Time and Space Requirements

Two Index Formats
KeyFile
- Term Positions
- Metadata
- Offline Incremental
- InQuery Query Language
Indri
- Term Positions
- Metadata
- Fields / Annotations
- Online Incremental
- InQuery and Indri Query Languages

Indexing – Document Preparation
Document formats: the Lemur Toolkit can natively handle several document formats without any modification:
- TREC Text
- TREC Web
- Plain Text
- Microsoft Word(*)
- Microsoft PowerPoint(*)
- HTML
- XML
- PDF
- Mbox
(*) Note: Microsoft Word and Microsoft PowerPoint can only be indexed on a Windows-based machine, and Office must be installed.

Indexing – Document Preparation
If your documents are not in a format that the Lemur Toolkit can natively process:
1. If necessary, extract the text from the document.
2. Wrap the plaintext in TREC-style wrappers:
    <DOC>
    <DOCNO>document_id</DOCNO>
    <TEXT>
    Index this document text.
    </TEXT>
    </DOC>
– or –
For more advanced users, write your own parser to extend the Lemur Toolkit.

Indexing - Parameters
Basic usage to build an index:
    IndriBuildIndex <parameter_file>
The parameter file includes options for:
- Where to find your data files
- Where to place the index
- How much memory to use
- Stopwords, stemming, fields
- Many other parameters
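The document preparation step described above (wrapping extracted plain text in TREC-style wrappers) can be sketched in a few lines of Python. This is a minimal illustration, not part of the Lemur Toolkit: the function names `wrap_trec` and `build_trec_file` and the sample document ids are assumptions made for the example.

```python
from typing import Dict


def wrap_trec(doc_id: str, text: str) -> str:
    """Wrap one plain-text document in a TREC-style <DOC> record."""
    return (
        "<DOC>\n"
        f"<DOCNO>{doc_id}</DOCNO>\n"
        "<TEXT>\n"
        f"{text.strip()}\n"
        "</TEXT>\n"
        "</DOC>\n"
    )


def build_trec_file(docs: Dict[str, str]) -> str:
    """Concatenate several documents into one TREC-text file body."""
    return "".join(wrap_trec(doc_id, text) for doc_id, text in docs.items())


if __name__ == "__main__":
    # Hypothetical sample documents, used only for illustration.
    sample = {
        "doc-001": "Index this document text.",
        "doc-002": "Lemurs are primates found only in Madagascar.",
    }
    print(build_trec_file(sample))
```

The resulting text can be saved to a file and indexed with the `trectext` class shown in the corpus parameter.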
Indexing – Parameters
The standard parameter file is an XML document:
    <parameters>
      <option></option>
      <option></option>
      …
      <option></option>
    </parameters>

Indexing – Parameters
Where to find your source files and what type to expect:
- BuildIndex: <dataFiles> gives the name of a file containing the list of data files to index.
- IndriBuildIndex:
    <parameters>
      <corpus>
        <path>/path/to/source/files</path>
        <class>trectext</class>
      </corpus>
    </parameters>

Indexing - Parameters
The <index> parameter tells IndriBuildIndex where to create the index or incrementally add to it.
- If the index does not exist, a new one is created.
- If the index already exists, new documents are appended to it.
    <parameters>
      <index>/path/to/the/index</index>
    </parameters>

Indexing - Parameters
<memory> defines a "soft limit" on the amount of memory the indexer should use before flushing its buffers to disk. Use K for kilobytes, M for megabytes, and G for gigabytes.
    <parameters>
      <memory>256M</memory>
    </parameters>

Indexing - Parameters
Stopwords:
- BuildIndex: defined within <stopwords>filename</stopwords>
- IndriBuildIndex:
    <parameters>
      <stopper>
        <word>first_word</word>
        <word>next_word</word>
        …
        <word>final_word</word>
      </stopper>
    </parameters>

Indexing – Parameters
Term stemming can also be applied while indexing via the <stemmer> tag. Specify the stemmer type via the <name> tag within it. Stemmers included with the Lemur Toolkit include the Krovetz stemmer and the Porter stemmer.
    <parameters>
      <stemmer>
        <name>krovetz</name>
      </stemmer>
    </parameters>

Retrieval
- Parameters
- Query Formatting
- Interpreting Results

Retrieval - Parameters
Basic usage for retrieval:
    IndriRunQuery/RetEval <parameter_file>
The parameter file includes options for:
- Where to find the index
- The query or queries
- How much memory to use
- Formatting options
- Many other parameters

Retrieval - Parameters
The <index> parameter tells IndriRunQuery/RetEval where to find the repository.
    <parameters>
      <index>/path/to/the/index</index>
    </parameters>

Retrieval - Parameters
The <query> parameter specifies a query, either as plain text or in the Indri query language.
    <parameters>
      <query>
        <number>1</number>
        <text>this is the first query</text>
      </query>
      <query>
        <number>2</number>
        <text>another query to run</text>
      </query>
    </parameters>

Query file format
    <DOC>
    <DOCNO> 1 </DOCNO>
    What articles exist which deal with TSS (Time Sharing System), an operating system for IBM computers?
    </DOC>
    <DOC>
    <DOCNO> 2 </DOCNO>
    I am interested in articles written either by Prieve or Udo Pooch
    Prieve, B.
    Pooch, U.
    </DOC>

Retrieval – Query Formatting
TREC-style topics cannot be processed directly by IndriRunQuery/RetEval. Format the queries accordingly:
- Format by hand
- Write a script to extract the fields (lovely Python~)

Retrieval – Parameters
To specify a maximum number of results to return, use the <count> tag:
    <parameters>
      <count>50</count>
    </parameters>

Retrieval - Parameters
Result formatting options: IndriRunQuery/RetEval has built-in formatting specifications for TREC and INEX retrieval tasks.

Retrieval – Parameters
TREC formatting directives:
- <runID>: a string specifying the id for a query run, used in TREC scorable output.
- <trecFormat>: true to produce TREC scorable output, otherwise false (default).
    <parameters>
      <runID>runName</runID>
      <trecFormat>true</trecFormat>
    </parameters>

Outputting INEX Result Format
Must be wrapped in <inex> tags.
- <participant-id>: specifies the participant-id attribute used in submissions.
- <task>: specifies the task attribute (default CO.Thorough).
- <query>: specifies the query attribute (default automatic).
- <topic-part>: specifies the topic-part attribute (default T).
- <description>: specifies the contents of the description tag.
    <parameters>
      <inex>
        <participant-id>LEMUR001</participant-id>
      </inex>
    </parameters>

Retrieval - Evaluation
To use trec_eval, format the IndriRunQuery results with the appropriate trec_eval formatting directives in the parameter file:
    <runID>runName</runID>
    <trecFormat>true</trecFormat>
The resulting output will be in standard TREC format, ready for evaluation:
    <queryID> Q0 <DocID> <rank> <score> <runID>
    150 Q0 AP890101-0001 1 -4.83646 runName
    150 Q0 AP890101-0015 2 -7.06236 runName

Use RetEval for TF.IDF
First run ParseToFile to convert doc-formatted queries into queries:
    <parameters>
      <docFormat>web</docFormat>
      <outputFile>filename</outputFile>
      <stemmer>stemmername</stemmer>
      <stopwords>stopwordfile</stopwords>
    </parameters>
    ParseToFile paramfile queryfile
http://www.lemurproject.org/lemur/parsing.html#parsetofile

Use RetEval for TF.IDF
Then run RetEval:
    <parameters>
      <index>index</index>
      <!-- 0 for TF-IDF, 1 for Okapi, 2 for KL-divergence, 5 for cosine similarity -->
      <retModel>0</retModel>
      <textQuery>query filename</textQuery>
      <resultCount>1000</resultCount>
      <resultFile>tfidf.res</resultFile>
    </parameters>
    RetEval paramfile
http://www.lemurproject.org/lemur/retrieval.html#RetEval

Evaluate Results
- TREC qrels: the ground truth, judged by human assessors.
- ireval tool:
    java -jar "D:\Program Files\Lemur\Lemur 4.12\bin\ireval.jar" result qrels > pr.result

Use Lemur API & Make Extension to Lemur

Task
When you have a new idea on a retrieval problem, how can you prove it?
Introducing the API
Lemur "Classic" API
- Many objects, highly customizable
- May want to use this when you want to change how the system works
- Support for clustering, distributed IR, summarization
Indri API
- Two main objects
- Best for integrating search into larger applications
- Supports Indri query language, XML retrieval, "live" incremental indexing, and parallel retrieval

Lemur "Classic" API
- Primarily useful for retrieval operations
- Most indexing work in the toolkit has moved to the Indri API
- Indri indexes can be used with Lemur "Classic" retrieval applications
- Extensive documentation and tutorials on the website (more are coming)

Lemur Index Browsing
The Lemur API gives access to the index data (e.g. inverted lists, collection statistics).
- IndexManager::openIndex
  - Returns a pointer to an index object
  - Detects what kind of index you wish to open, and returns the appropriate kind of index class
- Index:: docInfoList (inverted list), termInfoList (document vector), termCount, documentCount

Lemur: DocInfoList
Index::docInfoList( int termID )
- Returns an iterator to the inverted list for termID.
- The list contains all documents that contain termID, including the positions where termID occurs.

Lemur: TermInfoList
Index::termInfoList( int docID )
- Returns an iterator to the direct list for docID.
- The list contains term numbers for every term contained in document docID, and the number of times each word occurs.
- (Use termInfoListSeq to get word positions.)

Lemur Index Browsing
Index::termCount
- termCount() : Total number of terms indexed
- termCount( int id ) : Total number of occurrences of term number id
Index::documentCount
- docCount() : Number of documents indexed
- docCount( int id ) : Number of documents that contain term number id
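To make the difference between the inverted list and the direct list concrete, here is a toy in-memory index in Python. It is only a sketch of the concepts named above: the class `ToyIndex` and its method names are hypothetical stand-ins chosen to mirror docInfoList, termInfoList, termCount, and docCount, and it works on term strings rather than term numbers. It is not the Lemur C++ API.

```python
from collections import Counter, defaultdict


class ToyIndex:
    """Toy index over tokenized documents; document ids start at 1."""

    def __init__(self, docs):
        self.doc_terms = {}                # direct list: doc id -> Counter(term -> tf)
        self.postings = defaultdict(dict)  # inverted list: term -> {doc id: [positions]}
        for doc_id, tokens in enumerate(docs, start=1):
            self.doc_terms[doc_id] = Counter(tokens)
            for pos, term in enumerate(tokens):
                self.postings[term].setdefault(doc_id, []).append(pos)

    def doc_info_list(self, term):
        """Inverted list: (doc id, positions) for every document containing term."""
        return sorted(self.postings.get(term, {}).items())

    def term_info_list(self, doc_id):
        """Direct list: (term, count) for every term in the document."""
        return sorted(self.doc_terms[doc_id].items())

    def term_count(self, term=None):
        """Total tokens indexed, or total occurrences of one term."""
        if term is None:
            return sum(sum(tf.values()) for tf in self.doc_terms.values())
        return sum(len(p) for p in self.postings.get(term, {}).values())

    def doc_count(self, term=None):
        """Number of documents indexed, or number containing one term."""
        if term is None:
            return len(self.doc_terms)
        return len(self.postings.get(term, {}))


if __name__ == "__main__":
    index = ToyIndex([["lemur", "toolkit", "lemur"], ["toolkit", "index"]])
    print(index.doc_info_list("lemur"))
    print(index.term_info_list(2))
    print(index.term_count(), index.doc_count("toolkit"))
```

The inverted list answers "which documents contain this term", while the direct list answers "which terms does this document contain"; retrieval methods typically walk the former and feedback methods the latter.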
Lemur Index Browsing
Index::term
- term( char* s ) : convert term string to a number
- term( int id ) : convert term number to a string
Index::document
- document( char* s ) : convert doc string to a number
- document( int id ) : convert doc number to a string

Lemur Index Browsing
- Index::docLength( int docID ) : the length, in number of terms, of document number docID
- Index::docLengthAvg : average indexed document length
- Index::termCountUnique : size of the index vocabulary

Browsing Posting List

Lemur Retrieval

| Class Name | Description |
| TFIDFRetMethod | BM25 |
| SimpleKLRetMethod | KL-Divergence |
| InQueryRetMethod | Simplified InQuery |
| CosSimRetMethod | Cosine |
| CORIRetMethod | CORI |
| OkapiRetMethod | Okapi |
| IndriRetMethod | Indri (wraps QueryEnvironment) |

Lemur Retrieval
RetMethodManager::runQuery
- query: text of the query
- index: pointer to a Lemur index
- modeltype: "cos", "kl", "indri", etc.
- stopfile: filename of your stopword list
- stemtype: stemmer
- datadir: not currently used
- func: only used for Arabic stemmer
RetMethodManager::createModel
model->scoreCollection()

Lemur Retrieval Method
lemur::api::RetrievalMethod Class Reference

TextQueryMethod
A text query retrieval method is determined by specifying the following elements:
- A method to compute the query representation
- A method to compute the doc representation
- The scoring function
- A method to update the query representation based on a set of (relevant) documents

TextQueryMethod Scoring Function
Given a query q = (q1, q2, ..., qN) and a document d = (d1, d2, ..., dN), where q1, ..., qN and d1, ..., dN are terms:
    s(q,d) = g(w(q1,d1,q,d) + ... + w(qN,dN,q,d), q, d)
- Function w gives the weight of each matched term.
- Function g makes it possible to apply any further transformation to the sum of the weights of all matched terms, based on "summary" information about the query or the document (e.g., document length).
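The decomposition s(q,d) = g(sum of w, q, d) above can be illustrated with a small Python sketch. The particular choices here are assumptions for illustration only: w is a plain tf × idf product and g divides by the square root of the document length. They are not the weights used by any specific Lemur retrieval method, and the function names are hypothetical.

```python
import math
from collections import Counter


def matched_term_weight(q_tf, d_tf, doc_freq, num_docs):
    """w: weight of one matched term (illustrative tf * idf product)."""
    idf = math.log(num_docs / doc_freq)
    return q_tf * d_tf * idf


def adjusted_score(raw_sum, doc_len):
    """g: transform the summed weights using document summary information.

    Here an assumed length normalization by sqrt(document length).
    """
    return raw_sum / math.sqrt(doc_len) if doc_len else 0.0


def score(query_tokens, doc_tokens, doc_freq, num_docs):
    """s(q, d) = g(sum of w over matched terms, q, d)."""
    q_tf = Counter(query_tokens)
    d_tf = Counter(doc_tokens)
    raw = sum(
        matched_term_weight(q_tf[t], d_tf[t], doc_freq[t], num_docs)
        for t in q_tf
        if t in d_tf  # only terms present in both query and document match
    )
    return adjusted_score(raw, len(doc_tokens))


if __name__ == "__main__":
    # Hypothetical collection statistics: 4 documents in total.
    doc_freq = {"lemur": 1, "toolkit": 2}
    doc = ["lemur", "toolkit", "lemur", "index"]
    print(score(["lemur", "toolkit"], doc, doc_freq, num_docs=4))
```

Separating w from g means a new term-weighting idea only touches `matched_term_weight`, while a new normalization only touches `adjusted_score`.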
TextQueryMethod Scoring Function
- ScoreFunction::matchedTermWeight : compute the score contribution of a matched term
- ScoreFunction::adjustedScore : score adjustment (e.g., appropriate length normalization)

Lemur: Other tasks
- Clustering: ClusterDB
- Distributed IR: DistMergeMethod
- Language models: UnigramLM, DirichletUnigramLM, etc.

More Stories about Indri

Why Can't We All Get Along? Structured Data and Information Retrieval
Prof. Bruce Croft
Center for Intelligent Information Retrieval (CIIR)
University of Massachusetts Amherst

Similarities and Differences
- Common interest in providing efficient access to information on a very large scale
  - indexing and optimization are key topics
- Until recently, concern about effectiveness (accuracy) of access was the domain of IR
- Focus on structured vs. unstructured data is historically true but less relevant today
- Statistical inference and ranking are central to IR and are becoming more important in DB

Similarities and Differences
- IR systems have focused on providing access to information rather than answers
  - e.g. Web search
  - evaluation is typically based on topical relevance and user relevance rather than correctness (except QA)
- IR works with multiple databases but not multiple relations
- IR query languages are more like calculus than algebra
- Integrity, security, and concurrency are central for DB, less so in IR

What is the Goal?
An IR system with extended capability for structured data
- i.e. extend the IR model to include combination of evidence from structured and unstructured components of complex objects (documents)
- backend database system used to store objects (cf. "one hand clapping")
- many applications look like this (e.g. desktop search, web shopping)
- users seem to prefer this approach (simple queries or forms and ranking)

Combination of Evidence
Example: Where was George Washington born?
Returns a ranked list of sentences containing the phrase George Washington, the term born, and a snippet of text tagged as a PLACE named entity.
"Structured Query" vs.
TextQuery

Indri – A Candidate IR System
Indri is a separate, downloadable component of the Lemur Toolkit.
Influences:
- INQUERY [Callan, et al. '92]
  - Inference network framework
  - Structured query language
- Lemur [http://www.lemurproject.org]
  - Language modeling (LM) toolkit
- Lucene [http://jakarta.apache.org/lucene/docs/index.html]
  - Popular off-the-shelf Java-based IR system
  - Based on heuristic retrieval models
Designed for new retrieval environments, e.g. GALE, CALO, AQUAINT, Web retrieval, and XML retrieval.

Design Goals
- Off the shelf (Windows, *NIX, Mac platforms)
  - Simple to set up and use
  - Fully functional API with language wrappers for Java, etc.
- Robust retrieval model
  - Inference net + language modeling [Metzler and Croft '04]
- Powerful query language
  - Designed to be simple to use, yet support complex information needs
  - Provides "adaptable, customizable scoring"
- Scalable
  - Highly efficient code
  - Distributed retrieval
  - Incremental update

Model
- Based on the original inference network retrieval framework [Turtle and Croft '91]
- Casts retrieval as inference in a simple graphical model
- Extensions made to the original model:
  - Incorporation of probabilities based on language modeling rather than tf.idf
  - Multiple language models allowed in the network (one per indexed context)

Model
[Figure: inference network. Model hyperparameters (observed): α,β for the title, body, and h1 contexts. Document node D (observed). Context language models: θtitle, θbody, θh1. Representation nodes r1 … rN (terms, phrases, etc.). Belief nodes q1, q2 (#combine, #not, #max). Information need node I (belief node).]

Model
P( r | θ )
- Probability of observing a term, phrase, or feature given a context language model
- ri nodes are binary
- Assume r ~ Bernoulli( θ )
  - "Model B" [Metzler, Lavrenko, Croft '04]
- Nearly any model may be used here:
  - tf.idf-based estimates
    (INQUERY)
  - Mixture models

Model
P( θ | α, β, D )
- Prior over the context language model, determined by α, β
- Assume P( θ | α, β ) ~ Beta( α, β )
  - Bernoulli's conjugate prior
  - μ is a free parameter

$$\alpha_r = \mu P(r \mid C) + 1, \qquad \beta_r = \mu P(\neg r \mid C) + 1$$

$$P(r_i \mid \alpha, \beta, D) = \int_{\theta} P(r_i \mid \theta)\, P(\theta \mid \alpha, \beta, D)\, d\theta = \frac{tf_{r,D} + \mu P(r \mid C)}{|D| + \mu}$$

Model
P( q | r ) and P( I | r )
- Belief nodes are created dynamically based on the query
- Belief node estimates are derived from standard link matrices
  - Combine evidence from parents in various ways
  - Allows fast inference by making marginalization computationally tractable
- The information need node is simply a belief node that combines all network evidence into a single value
- Documents are ranked according to P( I | α, β, D )

Belief Inference
[Figure: parent nodes with beliefs p1, p2, …, pn feeding a belief node q.]

Example: #AND
[Figure: nodes A and B are the parents of Q.]

| A | B | P(Q=true | a,b) |
| false | false | 0 |
| false | true | 0 |
| true | false | 0 |
| true | true | 1 |

$$\begin{aligned}
P_{\#and}(Q = true) &= \sum_{a,b} P(Q = true \mid A = a, B = b)\, P(A = a)\, P(B = b) \\
&= P(t \mid f,f)(1 - p_A)(1 - p_B) + P(t \mid f,t)(1 - p_A)\,p_B + P(t \mid t,f)\,p_A(1 - p_B) + P(t \mid t,t)\,p_A p_B \\
&= 0 \cdot (1 - p_A)(1 - p_B) + 0 \cdot (1 - p_A)\,p_B + 0 \cdot p_A(1 - p_B) + 1 \cdot p_A p_B \\
&= p_A p_B
\end{aligned}$$

Other Belief Operators

Document Representation
    <html>
    <head>
    <title>Department Descriptions</title>
    </head>
    <body>
    The following list describes …
    <h1>Agriculture</h1> …
    <h1>Chemistry</h1> …
    <h1>Computer Science</h1> …
    <h1>Electrical Engineering</h1> …
    …
    <h1>Zoology</h1>
    </body>
    </html>
<title> context:
    <title>department descriptions</title>
<title> extents:
    1. department descriptions
<body> context:
    <body>the following list describes … <h1>agriculture</h1> … </body>
<body> extents:
    1. the following list describes <h1>agriculture</h1> …
<h1> context:
    <h1>agriculture</h1> <h1>chemistry</h1> … <h1>zoology</h1>
<h1> extents:
    1. agriculture
    2. chemistry
    …
    36.
zoology

Terms

| Type | Example | Matches |
| Stemmed term | dog | All occurrences of dog (and its stems) |
| Surface term | "dogs" | Exact occurrences of dogs (without stemming) |
| Term group (synonym group) | <"dogs" canine> | All occurrences of dogs (without stemming) or canine (and its stems) |
| POS qualified term | <"dogs" canine>.NNS | Same as previous, except matches must also be tagged with the NNS POS tag |

Proximity

| Type | Example | Matches |
| #odN(e1 … em) or #N(e1 … em) | #od5(dog cat) or #5(dog cat) | All occurrences of dog and cat appearing ordered within a window of 5 words |
| #uwN(e1 … em) | #uw5(dog cat) | All occurrences of dog and cat that appear in any order within a window of 5 words |
| #phrase(e1 … em) | #phrase(#1(willy wonka) #uw3(chocolate factory)) | System dependent implementation (defaults to #odm) |
| #syntax:xx(e1 … em) | #syntax:np(fresh powder) | System dependent implementation |

Context Restriction

| Example | Matches |
| dog.title | All occurrences of dog appearing in the title context |
| dog.title,paragraph | All occurrences of dog appearing in both a title and a paragraph context (may not be possible) |
| <dog.title dog.paragraph> | All occurrences of dog appearing in either a title context or a paragraph context |
| #5(dog cat).head | All matching windows contained within a head context |

Context Evaluation

| Example | Evaluated |
| dog.(title) | The term dog evaluated using the title context as the document |
| dog.(title, paragraph) | The term dog evaluated using the concatenation of the title and paragraph contexts as the document |
| dog.figure(paragraph) | The term dog restricted to figure tags within the paragraph context |
Belief Operators

| INQUERY | INDRI |
| #sum / #and | #combine |
| #wsum* | #weight |
| #or | #or |
| #not | #not |
| #max | #max |

* #wsum is still available in INDRI, but should be used with discretion.

Extent Retrieval

| Example | Evaluated |
| #combine[section](dog canine) | Evaluates #combine(dog canine) for each extent associated with the section context |
| #combine[title, section](dog canine) | Same as previous, except is evaluated for each extent associated with either the title context or the section context |
| #sum(#sum[section](dog)) | Returns a single score that is the #sum of the scores returned from #sum(dog) evaluated for each section extent |
| #max(#sum[section](dog)) | Same as previous, except returns the maximum score |

Ad Hoc Retrieval
- Flat documents
  - Query likelihood retrieval: q1 … qN ≡ #combine( q1 … qN )
- SGML/XML documents
  - Can either retrieve documents or extents
  - Context restrictions and context evaluations allow exploitation of document structure

Web Search
- Homepage / known-item finding
- Use a mixture model of several document representations [Ogilvie and Callan '03]

$$P(w \mid \theta, \lambda) = \lambda_{body} P(w \mid \theta_{body}) + \lambda_{inlink} P(w \mid \theta_{inlink}) + \lambda_{title} P(w \mid \theta_{title})$$

- Example query: Yahoo!
    #combine( #wsum( 0.2 yahoo.(body)
                     0.5 yahoo.(inlink)
                     0.3 yahoo.(title) ) )

Example Indri Web Query
    #weight(
      0.1 #weight( 1.0 #prior(pagerank)
                   0.75 #prior(inlinks) )
      1.0 #weight(
        0.9 #combine(
          #wsum( 1 stellwagen.(inlink)
                 1 stellwagen.(title)
                 3 stellwagen.(mainbody)
                 1 stellwagen.(heading) )
          #wsum( 1 bank.(inlink)
                 1 bank.(title)
                 3 bank.(mainbody)
                 1 bank.(heading) ) )
        0.1 #combine(
          #wsum( 1 #uw8( stellwagen bank ).(inlink)
                 1 #uw8( stellwagen bank ).(title)
                 3 #uw8( stellwagen bank ).(mainbody)
                 1 #uw8( stellwagen bank ).(heading) ) ) ) )

Question Answering
- More expressive passage- and sentence-level retrieval
- Example: Where was George Washington born?
    #combine[sentence]( #1( george washington ) born #any:place )
- Returns a ranked list of sentences containing the phrase George Washington, the term born, and a snippet of text tagged as a PLACE named entity

Indri Examples
Paragraphs from news feed articles published between 1991 and 2000 that mention a person, a monetary amount, and the company InfoCom:
    #filreq(
      #band( NewsFeed.doctype #date:between(1991 2000) )
      #combine[paragraph]( #any:person #any:money InfoCom ) )

Getting Help
- http://www.lemurproject.org : central website, tutorials, documentation, news
- http://www.lemurproject.org/phorum : discussion board; developers read and respond to questions
- README file in the code distribution

Paul Ogilvie
Trevor Strohman
Don Metzler

Thank You! Q&A