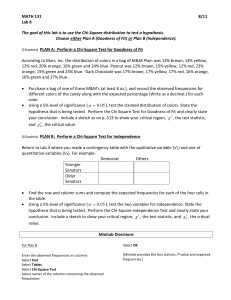

HYPOTHESIS TESTING


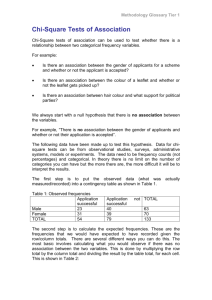

A Review of Widely-Used Statistical Methods

REVIEW OF FUNDAMENTALS

When testing hypotheses, statistical tests of significance always test the null hypothesis.
• Null hypothesis? No difference/no relationship.
• If we do not reject the null, conclusion? We found no difference/no relationship.
• If we do reject the null, conclusion? A significant relationship/difference is found and reported.
 – The observed relationship/difference is too large to be attributed to chance/sampling error.

How do we decide whether to reject or not reject the null?

Statistical tests of significance always test the null and always report α (the significance level, shown as "Sig." in SPSS output):
• α is the probability of erroneously rejecting a true null based on sample data: the probability of obtaining a relationship/difference at least as large as the one observed through chance/sampling error alone, if the null were in fact true.
• α therefore represents the odds of being wrong if we decide to reject the null and report a significant relationship/difference.

Rule of thumb for deciding to reject/not reject the null? A widely used convention is to reject the null when α is .05 or smaller, and not to reject it otherwise.

STATISTICAL DATA ANALYSIS

COMMON TYPES OF ANALYSIS?
– Examine the strength and direction of relationships
 • Bivariate (e.g., Pearson correlation, r): between one variable and another: $Y = a + b_1 X_1$
 • Multivariate (e.g., multiple regression analysis): between one dependent variable and an independent variable, while holding all other independent variables constant: $Y = a + b_1 X_1 + b_2 X_2 + b_3 X_3 + \dots + b_k X_k$
– Compare groups
 • Between proportions (e.g., Chi-Square test, χ²): $H_0: P_1 = P_2 = P_3 = \dots = P_k$
 • Between means (e.g., analysis of variance): $H_0: \mu_1 = \mu_2 = \mu_3 = \dots = \mu_k$

Let's first review some fundamentals.

Remember: the level of measurement determines the choice of statistical method.

Statistical Techniques and Levels of Measurement:

| DEPENDENT variable | INDEPENDENT: Nominal/Categorical | INDEPENDENT: Metric (ordered metric or higher) |
|---|---|---|
| Nominal/Categorical | Chi-Square; Fisher's Exact Probability Test | Discriminant Analysis; Logit Regression |
| Metric (ordered metric or higher) | T-Test; Analysis of Variance | Correlation (and Covariance) Analysis; Regression Analysis |

Correlation and Covariance: Measures of Association Between Two Variables

Often we are interested in the strength and nature of the relationship between two variables. Two indices that measure the linear relationship between two continuous/metric variables are:
a. Covariance
b. Correlation coefficient (Pearson correlation)

Covariance

Covariance is a measure of the linear association between two metric variables (i.e., ordered metric, interval, or ratio variables). For a sample, covariance is computed as follows:

$$s_{xy} = \frac{\sum (x_i - \bar{x})(y_i - \bar{y})}{n - 1}$$

• Positive values indicate a positive relationship.
• Negative values indicate a negative (inverse) relationship.

Covariance ($s_{xy}$) of Two Variables. Example: Golfing Study

A golf enthusiast is interested in investigating the relationship, if any, between golfers' driving distance (x) and their 18-hole score (y). He uses the following sample data (i.e., data from n = 6 golfers) to examine the issue:

| x = Average Driving Distance (yards) | y = Average 18-Hole Score | $x_i - \bar{x}$ | $y_i - \bar{y}$ | $(x_i - \bar{x})(y_i - \bar{y})$ |
|---|---|---|---|---|
| 277.6 | 69 | 10.65 | −1.0 | −10.65 |
| 259.5 | 71 | −7.45 | 1.0 | −7.45 |
| 269.1 | 70 | 2.15 | 0 | 0 |
| 267.0 | 70 | 0.05 | 0 | 0 |
| 255.6 | 71 | −11.35 | 1.0 | −11.35 |
| 272.9 | 69 | 5.95 | −1.0 | −5.95 |
| Average: 266.95 (≈ 267.0) | Average: 70.0 | | | Total: −35.40 |
| Std. Dev.: 8.2192 | Std. Dev.: 0.8944 | | | |

Covariance Example: Golfing Study

$$s_{xy} = \frac{\sum (x_i - \bar{x})(y_i - \bar{y})}{n - 1} = \frac{-35.40}{6 - 1} = -7.08$$

• What can we say about the relationship between the two variables? The relationship is negative/inverse. That is, the longer a golfer's driving distance, the lower (better) his/her score is likely to be.
• How strong is the relationship between x and y? Hard to tell; there is no standard metric to judge it by!
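The covariance calculation in the table above can be reproduced with a short Python sketch. The function name `sample_covariance` is illustrative (it is not part of the course materials or of SPSS); it divides by n − 1, matching the sample formula.

```python
def sample_covariance(x, y):
    """Return the sample covariance of two equal-length sequences (n - 1 denominator)."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two sequences of equal length, at least 2 values each")
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    # Sum of the cross-products of deviations, divided by n - 1 for sample data.
    return sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y)) / (n - 1)


# Golfing study: average driving distance (yards) and average 18-hole score.
distance = [277.6, 259.5, 269.1, 267.0, 255.6, 272.9]
score = [69, 71, 70, 70, 71, 69]

s_xy = sample_covariance(distance, score)
print(round(s_xy, 2))  # -7.08
```

Python 3.10 and later also provide `statistics.covariance`, which uses the same n − 1 denominator and should give the same value.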
Values of covariance depend on the units of measurement for x and y. WHAT DOES THIS MEAN?

It means: if driving distance (x) were measured in feet rather than yards, the covariance $s_{xy}$ would be three times larger in magnitude (−21.24 instead of −7.08), even though it describes the same relationship using the same data. WHY? Because every x-value, and therefore every deviation $(x_i - \bar{x})$, would be three times larger, which in turn makes every cross-product $(x_i - \bar{x})(y_i - \bar{y})$, and their sum, three times larger.

SOLUTION: The correlation coefficient comes to the rescue!
• The correlation coefficient (r) is a standard metric for judging the strength of a linear relationship that, unlike covariance, is not affected by the units of measurement for x and y. This is why the correlation coefficient is much more widely used than covariance.

Correlation Coefficient

The correlation coefficient $r_{xy}$ (Pearson/simple correlation) is a measure of linear association between two variables. A correlation may or may not reflect causation; by itself, it does not establish a causal relationship. For sample data, it is computed as follows:

$$r_{xy} = \frac{s_{xy}}{s_x s_y}$$

where $s_{xy}$ = covariance of x and y, $s_x$ = standard deviation of x, and $s_y$ = standard deviation of y.

Correlation Coefficient = r

Francis Galton (English researcher, pioneer of fingerprint identification, and half-cousin of Charles Darwin):
• In 1888, plotted lengths of forearms and head sizes to see to what degree one could be predicted by the other.
• Stumbled upon the mathematical properties of correlation plots (e.g., y-intercept, size of slope, etc.).
• RESULT: An objective measure of how two variables are "co-related": the CORRELATION COEFFICIENT (Pearson correlation), r. It assesses the strength of a relationship based strictly on empirical data, independent of human judgment or opinion.

Correlation Coefficient (Pearson Correlation) = r

Karl Pearson, Galton's protégé, is often called the founder of modern statistics.

What do you use it for? To examine:
a. whether a relationship exists between two metric variables (e.g., income and education, or workload and job satisfaction), and
b. what the nature and strength of that relationship may be.

Range of values for r? $-1 \le r \le +1$
• r-values closer to −1 or +1 indicate stronger linear relationships.
• r-values closer to zero indicate a weaker linear relationship.

NOTE: Once $r_{xy}$ is calculated from sample data, we need to see whether it is statistically significant.
• Null hypothesis when using r? $H_0: \rho = 0$, where ρ is the population correlation: there is no relationship between the two variables.

Correlation Coefficient (Pearson Correlation) $r_{xy}$. Example: Golfing Study

Using the same golfing-study sample (n = 6 golfers), we had calculated the sample covariance as:

$$s_{xy} = \frac{\sum (x_i - \bar{x})(y_i - \bar{y})}{n - 1} = \frac{-35.40}{6 - 1} = -7.08$$

With $s_x = 8.2192$ and $s_y = 0.8944$:

$$r_{xy} = \frac{s_{xy}}{s_x s_y} = \frac{-7.08}{(8.2192)(0.8944)} = -0.9631$$

Conclusion? Not only is the relationship negative, but it is also extremely strong!

Equivalently, working directly from the deviations:

$$r = \frac{\sum (x - \bar{x})(y - \bar{y})}{\sqrt{\sum (x - \bar{x})^2 \sum (y - \bar{y})^2}} = \frac{s_{xy}}{s_x s_y}$$

To understand the practical meaning of r, we can square it.
• What would r² mean/represent?
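Before answering that question, the golf-study correlation can be reproduced in a few lines. This minimal Python sketch (the function name `pearson_r` is our own) computes r as the covariance divided by the product of the two standard deviations, all with n − 1, then repeats the calculation with driving distance converted to feet to show that, unlike covariance, r does not change with the units of measurement.

```python
import math


def pearson_r(x, y):
    """Pearson correlation r = s_xy / (s_x * s_y), using n - 1 throughout."""
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    s_xy = sum((a - x_bar) * (b - y_bar) for a, b in zip(x, y)) / (n - 1)
    s_x = math.sqrt(sum((a - x_bar) ** 2 for a in x) / (n - 1))
    s_y = math.sqrt(sum((b - y_bar) ** 2 for b in y) / (n - 1))
    return s_xy / (s_x * s_y)


distance_yards = [277.6, 259.5, 269.1, 267.0, 255.6, 272.9]
score = [69, 71, 70, 70, 71, 69]
distance_feet = [3 * d for d in distance_yards]  # same data, different units

r_yards = pearson_r(distance_yards, score)
r_feet = pearson_r(distance_feet, score)
print(round(r_yards, 4), round(r_feet, 4))  # -0.9631 -0.9631
print(round(r_yards ** 2, 4))               # 0.9275
```

The same n − 1 factor appears in the numerator and denominator, so it cancels; that is why the deviation-only form of the formula gives the identical result.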
• For example, r = 0.96 gives r² ≈ 0.92, i.e., 92%.
• r² represents the proportion (%) of the total/combined variation in both x and y that is accounted for by the joint variation (covariation) of x and y together (x with y and y with x).
• How is it calculated? r² = (covariation of X and Y together) / (total variation of X and Y combined):

$$r^2 = \frac{\left[\sum (x - \bar{x})(y - \bar{y}) / (n - 1)\right]^2}{\left[\sum (x - \bar{x})^2 / (n - 1)\right]\left[\sum (y - \bar{y})^2 / (n - 1)\right]} = \frac{\left[\sum (x - \bar{x})(y - \bar{y})\right]^2}{\sum (x - \bar{x})^2 \sum (y - \bar{y})^2}$$

• r² always represents a %.
• Why do we show more interest in r, rather than r²?

Correlation Coefficient: Computation

r² = (covariation of X and Y together) / (all of the variation of X and Y combined), where

$$r = \frac{\sum (X - \bar{X})(Y - \bar{Y})}{\sqrt{\sum (X - \bar{X})^2 \sum (Y - \bar{Y})^2}} = \frac{s_{xy}}{s_x s_y}$$

| Age (X) | Blood Pressure (Y) | $X - \bar{X}$ | $Y - \bar{Y}$ | $(X - \bar{X})(Y - \bar{Y})$ | $(X - \bar{X})^2$ | $(Y - \bar{Y})^2$ |
|---|---|---|---|---|---|---|
| 4 | 12 | −3 | −4 | 12 | 9 | 16 |
| 6 | 19 | −1 | 3 | −3 | 1 | 9 |
| 9 | 14 | 2 | −2 | −4 | 4 | 4 |
| … | … | … | … | … | … | … |
| $\bar{X} = 7$ | $\bar{Y} = 16$ | | | $\sum (X - \bar{X})(Y - \bar{Y})$ | $\sum (X - \bar{X})^2$ | $\sum (Y - \bar{Y})^2$ |

NOTE: Once r is calculated from sample data, we need to see whether it is statistically significant. That is, we need to test $H_0: \rho = 0$.

Correlation Coefficient?

Suppose the correlation between X (say, students' GMAT scores) and Y (their first-year GPA in an MBA program) is r = +0.48 and is statistically significant. How would we interpret this?
a) GMAT score and first-year GPA are positively related: as values of one variable increase, values of the other also tend to increase, and
b) r² = (0.48)² ≈ 23% of the variation/differences in students' GPAs is explained by (or accounted for by) variation/differences in their GMAT scores.

Let's now practice in SPSS.
Menu Bar: Analyze, Correlate, Bivariate, Pearson
EXAMPLE: Using the data in SPSS file Salary.sav, we wish to see whether beginning salary is related to seniority, age, work experience, and education.

STATISTICAL DATA ANALYSIS

Chi-Square Test of Independence?

Developed by Karl Pearson in 1900, it is used to compare two or more groups with respect to a categorical characteristic, that is, to compare proportions/percentages:
– It examines whether the proportions of different groups of subjects (e.g., managers vs. professionals vs. operatives) are equal or different across two or more categories (e.g., males vs. females).
– Equivalently, it examines whether or not a relationship exists between two categorical/nominal variables (e.g., employee status and gender): a categorical DV and a categorical IV.
– EXAMPLE? Is smoking a function of gender? That is, is there a difference between the percentages of males and females who smoke?

Chi-Square Test of Independence

Research sample (n = 100):

| ID | Gender | Smoking Status |
|---|---|---|
| 1 | 0 = Male | 1 = Smoker |
| 2 | 1 = Female | 0 = Non-Smoker |
| 3 | 1 | 1 |
| 4 | 1 | 0 |
| 5 | 0 | 0 |
| … | … | … |
| 100 | 1 | 0 |

The dependent variable (smoking status) and the independent variable (gender) are both categorical.

Null hypothesis? H0: There is no difference in the percentages of males and females who smoke/don't smoke (i.e., smoking is not a function of gender).
Alternative hypothesis? H1: The two groups are different with respect to the proportions who smoke.

QUESTION: Logically, what would be the first thing you would do?
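The first step, cross-tabulating the coded records, can be sketched in Python. Only records 1 to 5 of the research sample are listed (the rest are elided), so this sketch tabulates just those five; the helper name `crosstab` and the nested-dictionary layout are our own choices, not an SPSS feature. Run on all 100 records, the same function should produce the observed frequencies of the smoking contingency table.

```python
from collections import Counter

# Coding scheme from the research sample:
# gender: 0 = Male, 1 = Female; smoking status: 1 = Smoker, 0 = Non-Smoker.
GENDER = {0: "Male", 1: "Female"}
SMOKING = {1: "Smoker", 0: "Nonsmoker"}


def crosstab(records):
    """Count (gender, smoking) records into table[smoking label][gender label]."""
    counts = Counter((SMOKING[s], GENDER[g]) for g, s in records)
    return {row: {col: counts[(row, col)] for col in GENDER.values()}
            for row in SMOKING.values()}


# Records 1-5 from the slide, as (gender, smoking status) pairs.
first_five = [(0, 1), (1, 0), (1, 1), (1, 0), (0, 0)]
table = crosstab(first_five)
print(table)
# {'Smoker': {'Male': 1, 'Female': 1}, 'Nonsmoker': {'Male': 1, 'Female': 2}}
```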
TESTING PROCEDURE AND THE INTUITIVE LOGIC:

Construct a contingency table: cross-tabulate the observations and compute the observed (actual) frequencies ($O_{ij}$):

| | Male | Female | TOTAL |
|---|---|---|---|
| Smoker | $O_{11} = 15$ | $O_{12} = 25$ | 40 |
| Nonsmoker | $O_{21} = 5$ | $O_{22} = 55$ | 60 |
| TOTAL | 20 | 80 | n = 100 |

Next, ask yourself: What numbers would you expect to find in the table if you were certain that there was absolutely no difference between the percentages of males and females who smoked (i.e., if you expected the null to be true)? That is, compute the expected frequencies ($E_{ij}$). Hint: What % of all the subjects are smokers/non-smokers?

If there were absolutely no difference between the two groups with regard to smoking, you would expect 40% of the individuals in each group to be smokers (and 60% non-smokers). Compute and place the expected frequencies in the appropriate cells. In general, $E_{ij} = (\text{row } i \text{ total})(\text{column } j \text{ total}) / n$; for example, $E_{11} = (40)(20)/100 = 8$.

| | Male | Female | TOTAL |
|---|---|---|---|
| Smoker | $O_{11} = 15$, $E_{11} = 8$ | $O_{12} = 25$, $E_{12} = 32$ | 40 |
| Nonsmoker | $O_{21} = 5$, $E_{21} = 12$ | $O_{22} = 55$, $E_{22} = 48$ | 60 |
| TOTAL | 20 | 80 | n = 100 |

NOW WHAT? What is the next logical step?

Compare the observed and expected frequencies, i.e., examine the $(O_{ij} - E_{ij})$ discrepancies.

QUESTION: What can we infer if the observed/actual frequencies happen to be reasonably close to (or identical to) the expected frequencies?

So, the key to answering our original question lies in the size of the discrepancies between the observed and expected frequencies.
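The expected frequencies and the (O − E) discrepancies for the smoking table can be computed directly from the observed counts with $E_{ij} = (\text{row } i \text{ total})(\text{column } j \text{ total})/n$, which amounts to applying the overall 40%/60% smoker split to each gender group. A minimal Python sketch (the function name is illustrative):

```python
def expected_frequencies(observed):
    """E_ij = (row i total) * (column j total) / n for a table given as a list of rows."""
    row_totals = [sum(row) for row in observed]
    col_totals = [sum(col) for col in zip(*observed)]
    n = sum(row_totals)
    return [[r * c / n for c in col_totals] for r in row_totals]


# Rows: Smoker, Nonsmoker; columns: Male, Female (observed counts from the slide).
observed = [[15, 25],
            [5, 55]]
expected = expected_frequencies(observed)
residuals = [[o - e for o, e in zip(orow, erow)]
             for orow, erow in zip(observed, expected)]

print(expected)   # [[8.0, 32.0], [12.0, 48.0]]
print(residuals)  # [[7.0, -7.0], [-7.0, 7.0]]
```

The residuals sum to zero, which is why simply adding them up cannot serve as a discrepancy index.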
If the observed frequencies were reasonably close to the expected frequencies:
– We could be reasonably certain that no difference exists between the percentages of males and females who smoke;
– There is a good chance that H0 is true.
 • That is, we would be running a large risk of being wrong if we decided to reject it.

On the other hand, the farther apart the observed frequencies are from their corresponding expected frequencies:
– The greater the chance that the percentages of males and females who smoke are different;
– There is a good chance that H0 is false and should be rejected.
 • That is, we would run a relatively small risk of being wrong if we decided to reject it.

What, then, is the next logical step?

Compute an overall discrepancy index: one way to quantify the total discrepancy between observed ($O_{ij}$) and expected ($E_{ij}$) frequencies is to add up all the cell discrepancies, i.e., compute $\sum (O_{ij} - E_{ij})$.
• Problem? Positive and negative $(O_{ij} - E_{ij})$ values (RESIDUALS) for different cells cancel out; in fact, they always sum to zero.
• Solution? Square each $(O_{ij} - E_{ij})$ and then sum them: compute $\sum (O_{ij} - E_{ij})^2$.
• Any other problems? The value of $\sum (O_{ij} - E_{ij})^2$ is heavily affected by sample size (n), and it ignores how large each cell is.
 – For example, if you double the number of subjects in each cell, even though the cell discrepancies remain proportionally the same, this index becomes four times larger and may lead to a different conclusion.
• Solution? Divide each $(O_{ij} - E_{ij})^2$ by its corresponding $E_{ij}$ before summing across all cells. This expresses each squared discrepancy relative to the frequency expected in that cell: a discrepancy of 7 matters more in a cell where we expected 8 than in one where we expected 48. (The resulting index still grows in proportion to n when the proportions stay the same, which is why effect-size measures such as Phi divide by N.)

You have just developed the formula for the χ² statistic:

$$\chi^2 = \sum \frac{(O_{ij} - E_{ij})^2}{E_{ij}}$$

χ² can be intuitively viewed as an index of how closely the observed frequencies agree with (or how far they are from) the frequencies expected when the null is assumed to be true.
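The χ² formula translates into a few lines of Python. This sketch (function name illustrative) takes the observed and expected tables as nested lists, with rows Smoker/Nonsmoker and columns Male/Female; a second call shows that doubling every cell, with the proportions unchanged, doubles χ².

```python
def chi_square_statistic(observed, expected):
    """Sum over all cells of (O_ij - E_ij)**2 / E_ij."""
    return sum((o - e) ** 2 / e
               for orow, erow in zip(observed, expected)
               for o, e in zip(orow, erow))


observed = [[15, 25], [5, 55]]
expected = [[8, 32], [12, 48]]  # expected frequencies under H0
chi2 = chi_square_statistic(observed, expected)
print(round(chi2, 2))  # 12.76

# Same proportions, twice the sample size: chi-square doubles.
doubled_obs = [[2 * o for o in row] for row in observed]
doubled_exp = [[2 * e for e in row] for row in expected]
print(round(chi_square_statistic(doubled_obs, doubled_exp), 2))  # 25.52
```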
So, let's compute the χ² statistic for our example:

$$\chi^2 = \frac{(15 - 8)^2}{8} + \frac{(25 - 32)^2}{32} + \frac{(5 - 12)^2}{12} + \frac{(55 - 48)^2}{48} = 6.125 + 1.531 + 4.083 + 1.021 = 12.76$$

Let's review: obtaining a small χ² value means?
– The observed frequencies are in close agreement with what we would expect them to be if there were no differences between our comparison groups.
– That is, it is quite plausible that no difference exists between the percentages of males and females who smoke.
– Hence, we would be running a substantial risk of being wrong if we were to reject the null hypothesis. That is, α is expected to be relatively large.
 • Therefore, we should NOT reject the null.
 › NOTE: Smaller χ² values result in larger α levels (for the same degrees of freedom).

A large χ² value means?
– The observed frequencies are far from what they ought to be if the null hypothesis were true.
– That is, a difference in the percentages of male and female smokers is likely to exist.
– Hence, we would be running a small risk of being wrong if we were to reject the null hypothesis. That is, α is likely to be small.
 • Thus, we should reject the null.
 › NOTE: Larger χ² values result in smaller α levels (for the same degrees of freedom).

But how large is large? For example, does χ² = 12.76 represent a large enough departure of the observed frequencies from the expected frequencies to warrant rejecting the null? Check out the associated α level!

α reflects whether χ² is large enough to warrant rejecting the null.

Answer:
– Consult the table of the probability distribution of the χ² statistic to find the actual value of α, i.e., the probability of obtaining a χ² value this large or larger if the null were true.
– That is, look up the α level associated with your χ² value (under the appropriate degrees of freedom).
 • Degrees of freedom: df = (r − 1)(c − 1), where r and c are the numbers of rows and columns of the contingency table. Here, df = (2 − 1)(2 − 1) = 1.

[Table: probability distribution of the χ² statistic (critical values by α level and degrees of freedom)]

From the table, the α level for χ² = 10.83 (with df = 1) is 0.001. Our χ² = 12.76 > 10.83.

QUESTION: For our χ² = 12.76, will α be smaller or greater than 0.001?
• Smaller than 0.001.
• Therefore, if we reject the null, the odds of being wrong will be even smaller than 1 in 1,000. Can we afford to reject the null? Is it safe to do so?

CONCLUSION?
– The percentages of males and females who smoke are not equal.
– That is, smoking is a function of gender.
– Can we be more specific?
 » The percentage of males who smoke is significantly larger than that of females (75% vs. 31%, respectively).

CAUTION: Select the appropriate percentages to report (row % vs. column %). Here gender defines the columns, so the percentages to compare are column percentages:

| | Male | Female | TOTAL |
|---|---|---|---|
| Smoker | $O_{11} = 15$ | $O_{12} = 25$ | 40 |
| Nonsmoker | $O_{21} = 5$ | $O_{22} = 55$ | 60 |
| TOTAL | 20 | 80 | n = 100 |
| % smokers | 15 / 20 = 75% | 25 / 80 ≈ 31% | |

Phi (a correlation-like measure of association for categorical data in a 2 × 2 table):

$$\Phi = \sqrt{\chi^2 / N} = \sqrt{12.76 / 100} \approx 0.357$$

(Note: computed this way, Φ is never negative; a sign would not be meaningful for nominal variables.)

VIOLATION OF ASSUMPTIONS: The χ² test requires the expected frequencies ($E_{ij}$) to be reasonably large (a common rule of thumb is that no $E_{ij}$ should be smaller than 5). If this requirement is violated, the test may not be applicable.

SOLUTION:
– For 2 × 2 contingency tables (df = 1), use the Fisher's Exact Probability Test results (automatically reported by SPSS). That is, look up the α of Fisher's exact test to arrive at your conclusion.
– For larger tables (df > 1), eliminate small cells by combining their corresponding categories in a meaningful way. That is, recode the variable that is causing the small cells into a new variable with fewer categories, and then use this new variable to redo the Chi-Square test.

Let's now use SPSS to do the same analysis!
Menu Bar: Analyze, Descriptive Statistics, Crosstabs
Statistics: Chi-Square, Contingency Coefficient
Cells: Observed, Row/Column percentages (for the independent variable)
SPSS files: smoker; GSS93 Subset

Suppose we wish to examine the validity of the "gender gap hypothesis" for the 1992 presidential election between Bill Clinton, George Bush, and Ross Perot.
SPSS file: Voter
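For readers who want to cross-check the Crosstabs results outside SPSS, the remaining numbers in the smoking example (the α level for χ² = 12.76 with df = 1, Phi, and Fisher's exact test) can be computed with the Python standard library alone. This is a sketch, not SPSS's own algorithm: the function names are our own, the closed-form α applies only to df = 1 (where χ² is the square of a standard normal variable), and the two-sided Fisher rule used here (sum the probabilities of all tables with the same margins that are no more likely than the observed one) is one common convention, so results should be close to, but are not guaranteed identical to, the Sig. values SPSS reports.

```python
import math


def chi2_p_value_df1(chi2):
    """Upper-tail probability P(X >= chi2) for a chi-square variable with df = 1.

    With df = 1, X = Z**2 for a standard normal Z, so
    P(X >= c) = P(|Z| >= sqrt(c)) = erfc(sqrt(c / 2)).
    """
    return math.erfc(math.sqrt(chi2 / 2))


def phi_coefficient(chi2, n):
    """Phi = sqrt(chi-square / N); never negative when computed this way."""
    return math.sqrt(chi2 / n)


def fisher_exact_two_sided(table):
    """Two-sided Fisher exact p-value for a 2 x 2 table [[a, b], [c, d]]."""
    (a, b), (c, d) = table
    row1 = a + b          # first row total (smokers)
    col1 = a + c          # first column total (males)
    n = row1 + c + d

    def prob(x):
        # Hypergeometric probability that the top-left cell equals x, margins fixed.
        return math.comb(col1, x) * math.comb(n - col1, row1 - x) / math.comb(n, row1)

    p_obs = prob(a)
    lo, hi = max(0, row1 - (n - col1)), min(row1, col1)
    # Small relative tolerance so tables exactly as likely as the observed one count.
    return sum(p for p in (prob(x) for x in range(lo, hi + 1))
               if p <= p_obs * (1 + 1e-7))


chi2 = 12.76
print(f"alpha (df = 1): {chi2_p_value_df1(chi2):.5f}")   # well below 0.001
print(f"alpha at 10.83: {chi2_p_value_df1(10.83):.4f}")  # 0.0010
print(f"Phi: {phi_coefficient(chi2, 100):.3f}")          # 0.357
print(f"Fisher exact (two-sided): {fisher_exact_two_sided([[15, 25], [5, 55]]):.5f}")
```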
Assignment #3

1. As a population demographer, you have long suspected that women's fertility rate in different countries (fertility: average number of children born to a woman) would be related to male and female literacy rates (lit_male and lit_fema); access to health care, as characterized by the number of hospital beds per 10,000 people (hospbed) and the number of doctors per 10,000 people (docs); the infant mortality rate (babymort: number of deaths per 1,000 live births); and male and female life expectancies (lifeexpm and lifeexpf). The data file "World95.sav" (on the U drive) contains 1995 population and socioeconomic statistics for 109 countries, including all of the above-mentioned variables. Please use the data to test your suspicion (i.e., determine which of the above variables fertility rate is correlated with, and in what way).

2. Suppose you are a medical researcher and you wish to examine whether there is a relationship between incidence of coronary heart disease (CHD) and family history of CHD, specifically, whether CHD has in part a genetic component. In other words, you wish to know whether the incidence of CHD is proportionally higher among men with a family history of CHD than among men without such a family history. Researchers in the Western Electric Study collected data on such issues using a sample of 240 men, half with and half without prior incidence of CHD (variable chd). All these men are by now deceased.
Included in the data set is information on whether or not each subject had a history of CHD in his immediate family (variable famhxcvr), as well as the day of the week on which the subject died (variable dayofwk). The data are available in the electric.sav SPSS data file. (Note: Due to SPSS site license restrictions, the link to this file will not work if you are off campus.)

a) Please conduct the appropriate statistical test to address the above research objective.

b) Suppose you have noticed that, for most people, the earlier working days of the week (especially Mondays, Tuesdays, and Wednesdays) appear to be more stressful than Fridays, Saturdays, and Sundays. Also, suppose you have come across prior research indicating that stress and CHD tend to create a deadly combination. As such, you have recently begun to suspect (hypothesize) that a larger percentage of men with CHD, compared with those without CHD, tend to die on Mondays, Tuesdays, and Wednesdays (as opposed to Fridays, Saturdays, and Sundays). That is, you suspect that having CHD increases the likelihood of dying during the more stressful days of the week. Please perform the necessary analysis to verify the validity of your suspicion (the plausibility of your hypothesis).

NOTE: If you examine the value labels for the variable dayofwk, you will see that it is coded as 1 = Sunday, 2 = Monday, 3 = Tuesday, 4 = Wednesday, 5 = Thursday, 6 = Friday, and 7 = Saturday. Therefore, for part (b), you will need to create a new variable: recode dayofwk into a new dichotomous variable (say, deathday) that represents death on Mondays, Tuesdays, and Wednesdays vs. Fridays, Saturdays, and Sundays. Notice that subjects who died on Thursdays should not be included in the analysis (i.e., they should not be represented in either of the two categories of the new variable). Also, make sure you properly define the attributes (e.g., label, value labels, etc.) of this new variable (deathday).

REMINDERS:
• For each analysis, include the Notes in the printout. Also, edit the first page of your first analysis output to include your name.
• Make sure that on your printout you explain your findings and conclusions. Be specific as to what parts of the output you have used, and how you have used them, to reach your conclusions.
• Make sure that you tell the whole story and that your explanations of the findings are complete. For example, it is not enough to say that there is a significant relationship between characteristic A and characteristic B. You have to go on to indicate how the two characteristics are related and what that relationship really means.

QUESTIONS OR COMMENTS?