Center-based clustering

Unsupervised learning

Supervised and Unsupervised learning

General considerations

Clustering

Dimension reduction

The lecture is partly based on:

Hastie, Tibshirani & Friedman. The Elements of Statistical Learning. 2009. Chapter 2.

Duda, R. O., Hart, P. E., & Stork, D. G. Pattern Classification (2nd ed.). John Wiley & Sons, 2000. Chapter 2.

Dudoit, S., Fridlyand, J., & Speed, T. (2000). Comparison of discrimination methods for the classification of tumors using gene expression data. JASA 2000.

General considerations

This is the common structure of microarray gene expression data

from a simple cross-sectional case-control design.

Data from other high-throughput technologies are often similar.

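| | Control 1 | Control 2 | …… | Control 25 | Disease 1 | Disease 2 | …… | Disease 40 |
|---|---|---|---|---|---|---|---|---|
| Gene 1 | 9.25 | 9.77 | …… | 9.4 | 8.58 | 5.62 | …… | 6.88 |
| Gene 2 | 6.99 | 5.85 | …… | 5 | 5.14 | 5.43 | …… | 5.01 |
| Gene 3 | 4.55 | 5.3 | …… | 4.73 | 3.66 | 4.27 | …… | 4.11 |
| Gene 4 | 7.04 | 7.16 | …… | 6.47 | 6.79 | 6.87 | …… | 6.45 |
| Gene 5 | 2.84 | 3.21 | …… | 3.2 | 3.06 | 3.26 | …… | 3.15 |
| Gene 6 | 6.08 | 6.26 | …… | 7.19 | 6.12 | 5.93 | …… | 6.44 |
| Gene 7 | 4 | 4.41 | …… | 4.22 | 4.42 | 4.09 | …… | 4.26 |
| Gene 8 | 4.01 | 4.15 | …… | 3.45 | 3.77 | 3.55 | …… | 3.82 |
| Gene 9 | 6.37 | 7.2 | …… | 8.14 | 5.13 | 7.06 | …… | 7.27 |
| Gene 10 | 2.91 | 3.04 | …… | 3.03 | 2.83 | 3.86 | …… | 2.89 |
| Gene 11 | 3.71 | 3.79 | …… | 3.39 | 5.15 | 6.23 | …… | 4.44 |
| …… | …… | …… | …… | …… | …… | …… | …… | …… |
| Gene 50000 | 3.65 | 3.73 | …… | 3.8 | 3.87 | 3.76 | …… | 3.62 |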

General considerations

Supervised learning

In supervised learning, the problem is well-defined:

Given a set of observations {xi, yi},

estimate the density Pr(Y|X)

Usually the goal is to find the parameters that minimize the expected classification error, or some loss derived from it.

Objective criteria exist to measure the success of a supervised learning mechanism:

Error rate from testing (or cross-validation) data

Examples: disease classification, survival prediction, cost prediction … …

General considerations

Unsupervised learning

There is no output variable; all we observe is a set {xi}.

The goal is to infer Pr(X) and/or some of its properties.

When the dimension is low, nonparametric density estimation is possible. When the dimension is high, we may need to find simple properties without density estimation, or apply strong assumptions to estimate the density.

There are no objective criteria from the data itself; to justify a result, we rely on:

> Heuristic arguments,

> External information,

> Reasonable explanation of the outcome

Examples: find co-regulated sets, infer hidden regulation signals, infer regulatory networks, …….

General considerations

Correlation structure

There are always correlations between features (genes, proteins, metabolites …) in biological data. They are caused by intrinsic biological interactions and regulation.

The problem is:

(1) We don’t know what the correlation structure is (in some cases we have some idea, e.g. DNA)

(2) We cannot reliably estimate it because the dimension is too high and there is not enough data

General considerations

Curse of Dimensionality

Bellman, R. E., 1961.

In p dimensions, to get a hypercube with volume r (as a fraction of the unit hypercube), the edge length needed is $r^{1/p}$.

In 10 dimensions, to capture 1% of the data to get a local average, we need 63% of the range of each input variable, since $0.01^{1/10} \approx 0.63$.
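The edge-length formula can be checked numerically. The sketch below assumes data spread uniformly over a unit hypercube; the function name `edge_length` is illustrative only.

```python
def edge_length(fraction: float, p: int) -> float:
    """Edge length of a sub-cube holding `fraction` of a unit hypercube's volume in p dimensions."""
    return fraction ** (1.0 / p)

# Fraction of each variable's range needed to capture 1% of the data.
for p in (1, 2, 10):
    print(p, round(edge_length(0.01, p), 2))
```

As p grows, the "local" neighborhood has to span most of each variable's range, so it is no longer local.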

General considerations

Curse of Dimensionality

In other words,

To get a “dense” sample, if we need N = 100 samples in 1 dimension, then we need N = $100^{10}$ samples in 10 dimensions.

In high dimensions, the data are always sparse and do not support density estimation.

More data points are closer to the boundary than to any other data point, so prediction is much harder near the edge of the training sample.

General considerations

Curse of Dimensionality

We have talked about the curse of dimensionality in the

sense of density estimation.

In a classification problem, we do not necessarily need

density estimation.

Generative model --- care about class density function.

Discriminative model --- care about boundary.

Example: classifying belt fish and carp. Looking at the length/width ratio is enough. Why should we care about other variables such as the shape of fins or the number of teeth?

General considerations

N<<p problem

We talk about the “curse of dimensionality” when N is not >> p.

In bioinformatics, usually N<100, and p>1000.

How to deal with this N<<p issue?

Dramatically reduce p before model-building.

Filter genes based on: variation, normal/disease test statistic,

projection……

Use methods that are resistant to large numbers of nuisance

variables: Support vector machines, random forests, boosting

……

Borrow other information: functional annotation, meta-analysis

……
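As one illustration of reducing p before model-building, the sketch below keeps the features with the largest variance across samples. The gene names and values are made up for the example, and variance is only one of the filters listed above.

```python
from statistics import pvariance

def top_variance_features(expr: dict, keep: int) -> list:
    """Return the names of the `keep` features with the largest variance across samples."""
    ranked = sorted(expr, key=lambda name: pvariance(expr[name]), reverse=True)
    return ranked[:keep]

# Hypothetical expression values: one list of sample measurements per gene.
toy_expr = {
    "gene_a": [5.0, 5.1, 4.9, 5.0],   # nearly constant, likely uninformative
    "gene_b": [2.0, 8.0, 3.0, 9.0],   # highly variable
    "gene_c": [6.0, 6.5, 5.5, 6.0],   # mildly variable
}
selected = top_variance_features(toy_expr, keep=2)
print(selected)
```

A variance filter does not use the class labels, so it can be applied before any supervised step without leaking label information.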

A typical workflow

(1) Obtain high-throughput data

(2) Unsupervised learning (dimension reduction/clustering) to show that samples from different treatments are indeed separated, and to identify any interesting patterns.

(3) Feature selection based on testing: find features that are differentially expressed between treatments. FDR is used here.

(4) Experimental validation of the selected features, using more reliable biological techniques (e.g. real-time PCR is used to validate microarray expression data).

(5) Classification model building.

(6) From an independent group of samples, measure the feature levels using a reliable technique.

(7) Find the sensitivity/specificity of the model using the independent data.
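For step (7), sensitivity and specificity can be computed directly from the true and predicted labels of the independent samples. This is a minimal sketch with made-up labels, coding disease as 1 and control as 0.

```python
def sensitivity_specificity(y_true, y_pred):
    """Sensitivity = TP / (TP + FN); specificity = TN / (TN + FP). Labels: 1 = disease, 0 = control."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    return tp / (tp + fn), tn / (tn + fp)

# Hypothetical independent test set: 4 disease samples, 6 controls.
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 0, 0, 0, 0, 1, 1]
sens, spec = sensitivity_specificity(y_true, y_pred)
print(sens, spec)
```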

Clustering

Finding features/samples that are similar.

Can tolerate n<p.

Irrelevant features contribute random noise

that shouldn’t change strong clusters.

Some false clusters may be due to noise.

But their size should be limited.

Hierarchical clustering

Agglomerative: build tree by joining nodes;

Divisive: build tree by dividing groups of objects.

Hierarchical clustering

Single linkage: $d_{SL}(G, H) = \min_{i \in G,\, i' \in H} d_{ii'}$;

Complete linkage: $d_{CL}(G, H) = \max_{i \in G,\, i' \in H} d_{ii'}$;

Average linkage: $d_{GA}(G, H) = \frac{1}{N_G N_H} \sum_{i \in G} \sum_{i' \in H} d_{ii'}$.
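The three linkage definitions differ only in how the pairwise distances between two groups are summarized. A minimal sketch, assuming Euclidean distance for $d_{ii'}$ and two made-up groups of points:

```python
from itertools import product
from math import dist

def pairwise(G, H):
    """All distances d_ii' with i in G and i' in H (Euclidean distance assumed)."""
    return [dist(g, h) for g, h in product(G, H)]

def single_linkage(G, H):
    return min(pairwise(G, H))        # nearest pair

def complete_linkage(G, H):
    return max(pairwise(G, H))        # farthest pair

def average_linkage(G, H):
    d = pairwise(G, H)
    return sum(d) / len(d)            # len(d) equals N_G * N_H

# Hypothetical groups on a line: pairwise distances are 4, 6, 3 and 5.
G = [(0.0, 0.0), (1.0, 0.0)]
H = [(4.0, 0.0), (6.0, 0.0)]
print(single_linkage(G, H), complete_linkage(G, H), average_linkage(G, H))
```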

Hierarchical clustering

Example data:

Hierarchical clustering

Single linkage: find the distance between any two nodes

by nearest neighbor distance.

Hierarchical clustering

Single linkage:

Hierarchical clustering

Complete linkage: find the distance between any

two nodes by farthest neighbor distance.

Average linkage: find the distance between

any two nodes by average distance.

Hierarchical clustering

Comments:

Hierarchical clustering generates a tree; to

find clusters, the tree needs to be cut at a

certain height;

Complete linkage method favors compact,

ball-shaped clusters; single linkage method

favors chain-shaped clusters; average

linkage is somewhere in between.
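The chaining behaviour of single linkage can be seen on a tiny example. The sketch below is a naive agglomerative procedure that stops when a requested number of clusters remains, which corresponds to cutting the tree at the level with that many branches. The function `agglomerate` and the data are illustrative assumptions, not an efficient implementation.

```python
from itertools import combinations
from math import dist

def agglomerate(points, n_clusters, linkage=min):
    """Merge the two closest clusters until n_clusters remain.
    linkage=min gives single linkage, linkage=max gives complete linkage."""
    clusters = [[i] for i in range(len(points))]
    while len(clusters) > n_clusters:
        def d(a, b):
            return linkage(dist(points[i], points[j])
                           for i in clusters[a] for j in clusters[b])
        a, b = min(combinations(range(len(clusters)), 2), key=lambda ab: d(*ab))
        clusters[a] = clusters[a] + clusters[b]   # a < b, so deleting b keeps a valid
        del clusters[b]
    return [sorted(c) for c in clusters]

# Hypothetical 1-D points with slowly growing gaps (1.0, 1.2, 1.4, 1.6).
pts = [(0.0,), (1.0,), (2.2,), (3.6,), (5.2,)]
single = agglomerate(pts, 2, linkage=min)
complete = agglomerate(pts, 2, linkage=max)
print(single, complete)
```

On these points single linkage keeps extending one chain and leaves the last point alone, while complete linkage splits the data into two more compact groups.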

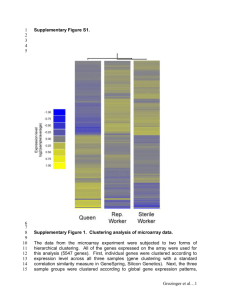

Hierarchical clustering

Average linkage on

microarray data.

Row: genes

Column: samples

Hierarchical clustering

Figure 14.12: Dendrogram from agglomerative hierarchical clustering with average linkage to

the human tumor microarray data.

Center-based clustering

Have objective functions which define how good a

solution is;

The goal is to minimize the objective function;

Efficient for large/high dimensional datasets;

The clusters are assumed to be convex shaped;

The cluster center is representative of the cluster;

Some model-based clustering, e.g. Gaussian

mixtures, are center-based clustering.

Center-based clustering

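K-means clustering. Let $C_1, \dots, C_k$ be k disjoint clusters.

Error is defined as the sum of the distances from each point to its cluster center:

$E = \sum_{i=1}^{k} \sum_{x \in C_i} d(x, \mu(C_i))$, where $\mu(C_i)$ is the center of cluster $C_i$.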

Center-based clustering

The k-means algorithm:
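A minimal sketch of the standard k-means iteration (alternating an assignment step and a center-update step), which may differ in detail from the version on the original slide. The function name, the starting centers and the toy data are assumptions made for illustration.

```python
from math import dist

def kmeans(points, centers, max_iter=100):
    """Alternate nearest-center assignment and mean update until the centers stop changing."""
    centers = [tuple(c) for c in centers]
    labels = []
    for _ in range(max_iter):
        # Assignment step: each point goes to its nearest center.
        labels = [min(range(len(centers)), key=lambda l: dist(p, centers[l]))
                  for p in points]
        # Update step: each center becomes the mean of its assigned points.
        new_centers = []
        for l in range(len(centers)):
            members = [p for p, lab in zip(points, labels) if lab == l]
            if members:
                new_centers.append(tuple(sum(x) / len(members) for x in zip(*members)))
            else:
                new_centers.append(centers[l])   # leave an empty cluster's center in place
        if new_centers == centers:
            break
        centers = new_centers
    return labels, centers

# Hypothetical data: two well-separated groups, with one starting center in each.
pts = [(1, 1), (1, 2), (2, 1), (8, 8), (8, 9), (9, 8)]
labels, centers = kmeans(pts, centers=[(1, 1), (9, 8)])
print(labels, centers)
```

Different starting centers can lead to different final clusters, so in practice the procedure is usually run from several starts.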

Center-based clustering

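Understanding k-means as an optimization procedure:

The objective function is:

$P(W, Q) = \sum_{l=1}^{k} \sum_{i=1}^{n} w_{il}\, d(x_i, q_l)$, where $W = [w_{il}]$ is the n×k assignment matrix, $Q = \{q_1, \dots, q_k\}$ is the set of cluster centers, and $d$ is the squared Euclidean distance.

Minimize P(W,Q) subject to:

$\sum_{l=1}^{k} w_{il} = 1$ and $w_{il} \in \{0, 1\}$, for $i = 1, \dots, n$ and $l = 1, \dots, k$.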

Center-based clustering

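The solution is found by iteratively solving two sub-problems:

When Q is fixed, P(W,Q) is minimized if and only if each point is assigned to its nearest center: $w_{il} = 1$ if $d(x_i, q_l) = \min_{1 \le t \le k} d(x_i, q_t)$, and $w_{il} = 0$ otherwise.

When W is fixed, P(W,Q) is minimized if and only if each center is the mean of the points assigned to it: $q_l = \sum_{i} w_{il} x_i \big/ \sum_{i} w_{il}$.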

Center-based clustering

In terms of optimization, the k-means procedure is

greedy.

Every iteration decreases the value of the

objective function; The algorithm converges to a

local minimum after a finite number of iterations.

Results depend on the initial values.

The computational complexity is proportional to the size of the dataset, so it is efficient on large data.

The clusters identified are mostly ball-shaped.

Works only on numerical data.

Center-based clustering

K-means

How to decide the number of clusters?

If there are truly k* groups, then for k<k* some groups are merged and the within-cluster dissimilarity should be big; it should drop substantially when increasing k. When k>k*, some true groups are partitioned, and increasing k should not bring much improvement in within-cluster dissimilarity.

Figure 14.8: Total within cluster sum of squares for K-means clustering applied to the

human tumor microarray data.
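The same kind of curve can be produced on toy data. The sketch below uses a simplified 1-D k-means with a deterministic start (an assumption made for reproducibility) on made-up data with three groups, and records the total within-cluster sum of squares for k = 1 to 4.

```python
def within_ss(points, labels, k):
    """Total within-cluster sum of squared distances to each cluster mean (1-D data)."""
    total = 0.0
    for l in range(k):
        members = [p for p, lab in zip(points, labels) if lab == l]
        if members:
            m = sum(members) / len(members)
            total += sum((p - m) ** 2 for p in members)
    return total

def kmeans_1d(points, k, n_iter=50):
    """Simplified 1-D k-means; starting centers are spread over the sorted data."""
    s = sorted(points)
    centers = [s[(2 * l + 1) * len(s) // (2 * k)] for l in range(k)]
    for _ in range(n_iter):
        labels = [min(range(k), key=lambda l: (p - centers[l]) ** 2) for p in points]
        for l in range(k):
            members = [p for p, lab in zip(points, labels) if lab == l]
            if members:
                centers[l] = sum(members) / len(members)
    return [min(range(k), key=lambda l: (p - centers[l]) ** 2) for p in points]

# Hypothetical data with three true groups, near 1, 5 and 9.
data = [1.0, 1.2, 0.8, 5.0, 5.3, 4.7, 9.0, 9.1, 8.9]
curve = {k: within_ss(data, kmeans_1d(data, k), k) for k in range(1, 5)}
for k, ss in curve.items():
    print(k, round(ss, 3))
```

The sum of squares falls sharply up to k = 3 and only marginally afterwards, which is the "elbow" used to pick k.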

Center-based clustering

Automated selection of k?

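The x-means algorithm based on AIC/BIC.

A family of models $M_j$ at different k is compared using:

$BIC(M_j) = \hat{l}_j(D) - \frac{p_j}{2} \log n$

$\hat{l}_j(D)$ is the log-likelihood of the data given the jth model, evaluated at the maximum likelihood estimate. $p_j$ is the number of parameters, and n is the sample size.

We have to assume a model to get the likelihood. The convenient one is Gaussian.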

Center-based clustering

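Under the identical spherical Gaussian assumption (n is sample size; k is number of centroids), the variance is estimated by:

$\hat{\sigma}^2 = \frac{1}{n - k} \sum_{i=1}^{n} \lVert x_i - \mu_{(i)} \rVert^2$

$\mu_{(i)}$ is the centroid associated with $x_i$.

The log-likelihood is:

$\hat{l}(D) = \sum_{i=1}^{n} \left[ \log \frac{n_{(i)}}{n} - \frac{d}{2} \log(2\pi\hat{\sigma}^2) - \frac{\lVert x_i - \mu_{(i)} \rVert^2}{2\hat{\sigma}^2} \right]$, where $n_{(i)}$ is the number of points in the cluster containing $x_i$.

The number of parameters is (d is dimension):

$p = (k - 1) + dk + 1$

(class probabilities + parameters for mean & variance)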
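A minimal sketch of a BIC score under the identical spherical Gaussian assumption, for a given hard assignment of points to clusters. It uses the form log-likelihood minus (p/2)·log n, so a larger value is better; the function name and the toy data are assumptions, and every cluster is assumed to be non-empty.

```python
from math import log, pi

def spherical_bic(points, labels, k):
    """BIC = log-likelihood - (p / 2) * log(n) for k spherical Gaussians sharing one variance."""
    n, d = len(points), len(points[0])
    sizes = [labels.count(l) for l in range(k)]
    cents = [tuple(sum(p[j] for p, lab in zip(points, labels) if lab == l) / sizes[l]
                   for j in range(d)) for l in range(k)]
    # Squared distance from each point to its own centroid.
    sq = [sum((a - b) ** 2 for a, b in zip(p, cents[lab])) for p, lab in zip(points, labels)]
    sigma2 = sum(sq) / (n - k)                   # shared variance estimate
    loglik = sum(log(sizes[lab] / n) - 0.5 * d * log(2 * pi * sigma2) - s / (2 * sigma2)
                 for s, lab in zip(sq, labels))
    n_params = (k - 1) + d * k + 1               # class probabilities + means + variance
    return loglik - 0.5 * n_params * log(n)

# Hypothetical 1-D data with two clear groups.
pts = [(1.0,), (1.2,), (0.8,), (5.0,), (5.3,), (4.7,)]
bic_k1 = spherical_bic(pts, [0, 0, 0, 0, 0, 0], k=1)
bic_k2 = spherical_bic(pts, [0, 0, 0, 1, 1, 1], k=2)
print(bic_k1, bic_k2)
```

On this toy data the two-cluster model scores higher despite its larger parameter penalty.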

Dimension Reduction

The purpose of dimension reduction:

Data simplification

Data visualization

Reduce noise (if we can assume only the

dominating dimensions are signals)

Variable selection for prediction (in supervised

learning)

PCA

Explain the variance-covariance structure

among a set of random variables by a few

linear combinations of the variables;

Does not require normality!

PCA

First PC: the linear combination $a'X$ that maximizes $\mathrm{Var}(a'X)$, subject to $a'a = 1$.

ith PC: the linear combination $a_i'X$ that maximizes $\mathrm{Var}(a_i'X)$, subject to $a_i'a_i = 1$ and $\mathrm{Cov}(a_i'X, a_k'X) = 0$ for all $k < i$.

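PCA

The eigenvalues are the variance components: if $(\lambda_1, e_1), \dots, (\lambda_p, e_p)$ are the eigenvalue-eigenvector pairs of the covariance matrix $\Sigma$, with $\lambda_1 \ge \dots \ge \lambda_p \ge 0$, then the ith PC is $Y_i = e_i'X$ and

$\mathrm{Var}(Y_i) = e_i' \Sigma e_i = \lambda_i$

Proportion of total variance explained by the kth PC:

$\frac{\lambda_k}{\lambda_1 + \lambda_2 + \dots + \lambda_p}$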

PCA

The geometrical interpretation of PCA:

PCA

PCA using the correlation matrix, instead of the

covariance matrix?

This is equivalent to first standardizing all X

vectors.

PCA

Using the correlation matrix avoids the domination

from one X variable due to scaling (unit changes),

for example using inch instead of foot. Example:

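$\Sigma = \begin{bmatrix} 1 & 4 \\ 4 & 100 \end{bmatrix}, \quad \boldsymbol{\rho} = \begin{bmatrix} 1 & 0.4 \\ 0.4 & 1 \end{bmatrix}$

PCA from $\Sigma$:

$\lambda_1 = 100.16, \quad e_1' = [0.040 \;\; 0.999]$

$\lambda_2 = 0.84, \quad e_2' = [0.999 \;\; -0.040]$

PCA from $\boldsymbol{\rho}$ (with correlation $\rho = 0.4$):

$\lambda_1 = 1 + \rho = 1.4, \quad e_1' = [0.707 \;\; 0.707]$

$\lambda_2 = 1 - \rho = 0.6, \quad e_2' = [0.707 \;\; -0.707]$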
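The numbers in this example can be reproduced with the closed-form eigen-decomposition of a symmetric 2×2 matrix. This is a small sketch for checking the arithmetic; the helper `eig_sym_2x2` is illustrative and assumes a nonzero off-diagonal entry.

```python
from math import sqrt, hypot

def eig_sym_2x2(a, b, c):
    """Eigenvalues (largest first) and unit eigenvectors of [[a, b], [b, c]], with b != 0."""
    mean, half_gap = (a + c) / 2, sqrt(((a - c) / 2) ** 2 + b ** 2)
    out = []
    for lam in (mean + half_gap, mean - half_gap):
        v = (b, lam - a)                 # solves (a - lam) * v1 + b * v2 = 0
        norm = hypot(*v)
        out.append((lam, (v[0] / norm, v[1] / norm)))
    return out

cov_pcs = eig_sym_2x2(1, 4, 100)    # covariance matrix from the example
cor_pcs = eig_sym_2x2(1, 0.4, 1)    # correlation matrix from the example
for lam, e in cov_pcs + cor_pcs:
    print(round(lam, 2), [round(x, 3) for x in e])

# Share of total variance carried by the first PC of the covariance matrix.
share_first = cov_pcs[0][0] / (cov_pcs[0][0] + cov_pcs[1][0])
print(round(share_first, 3))
```

With the covariance matrix the first PC is almost entirely the second variable, simply because its variance is 100 times larger. With the correlation matrix both variables contribute equally.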

PCA

Selecting the number of

components?

Based on eigenvalues (% variation explained). Assumption: the small amount of variation explained by the low-ranked (trailing) PCs is noise.

PCA

Figure 14.21: The best rank-two linear approximation to the half-sphere data. The right panel shows the projected points with coordinates given by $U_2 D_2$, the first two principal components of the data.

Factor Analysis

If we take the first several PCs that explain most of the variation in the data, we have one form of factor model:

$X - \mu = LF + \varepsilon$

L: loading matrix

F: unobserved random vector (latent variables).

ε: unobserved random vector (noise)

Factor Analysis

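Rotations in the m-dimensional subspace defined by the factors make the solution non-unique: for any m×m orthogonal matrix T, $LF = (LT)(T'F)$, so the loadings $LT$ with factors $T'F$ fit the data equally well.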

PCA is one unique solution, as the vectors are

sequentially selected. Maximum likelihood estimator

is another solution:
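The non-uniqueness under rotation can be checked numerically: rotating a loading matrix by an orthogonal matrix changes the loadings but leaves the product LL' unchanged (the common-factor part of the covariance, assuming uncorrelated unit-variance factors). The loading values and rotation angle below are made up for illustration.

```python
from math import cos, sin, pi

def matmul(A, B):
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]

def transpose(A):
    return [list(r) for r in zip(*A)]

# Hypothetical loading matrix: 3 variables x 2 factors.
L = [[0.9, 0.1], [0.8, 0.3], [0.2, 0.7]]
theta = pi / 6
T = [[cos(theta), -sin(theta)], [sin(theta), cos(theta)]]   # orthogonal rotation matrix

L_rot = matmul(L, T)
common = matmul(L, transpose(L))                  # L L'
common_rot = matmul(L_rot, transpose(L_rot))      # (LT)(LT)' = L T T' L' = L L'
max_diff = max(abs(x - y) for r1, r2 in zip(common, common_rot) for x, y in zip(r1, r2))
print(max_diff)
```

Because every rotation reproduces the same LL', extra criteria are needed to choose among them.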

Factor Analysis

As we said, rotations within the m-dimensional subspace do not change the overall amount of variation explained. Rotate to make the results more interpretable:

Factor Analysis

Orthogonal simple factor

rotation:

Rotate the orthogonal

factors around the origin

until the system is

maximally aligned with the

separate clusters of

variables.

Oblique Simple Structure

Rotation:

Allow the factors to

become correlated. Each

factor is rotated individually

to fit a cluster.
