statLecture9b

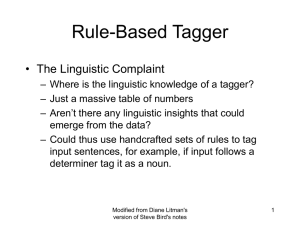

advertisement

Corpora and Statistical Methods Lecture 9 Albert Gatt Part 2 POS Tagging overview; HMM taggers, TBL tagging The task Assign each word in continuous text a tag indicating its part of speech. Essentially a classification problem. Current state of the art: taggers typically have 96-97% accuracy figure evaluated on a per-word basis in a corpus with sentences of average length 20 words, 96% accuracy can mean one tagging error per sentence Sources of difficulty in POS tagging Mostly due to ambiguity when words have more than one possible tag. need context to make a good guess about POS context alone won’t suffice A simple approach which assigns only the most common tag to each word performs with 90% accuracy! The information sources Syntagmatic information: the tags of other words in the context of w 1. Not sufficient on its own. E.g. Greene/Rubin 1977 describe a context-only tagger with only 77% accuracy Lexical information (“dictionary”): most common tag(s) for a given word 2. e.g. in English, many nouns can be used as verbs (flour the pan, wax the car…) however, their most likely tag remains NN distribution of a word’s usages across different POSs is uneven: usually, one highly likely, other much less Tagging in other languages (than English) In English, high reliance on context is a good idea, because of fixed word order Free word order languages make this assumption harder Compensation: these languages typically have rich morphology Good source of clues for a tagger Evaluation and error analysis Training a statistical POS tagger requires splitting corpus into training and test data. Often, we need a development set as well, to tune parameters. Using (n-fold) cross-validation is a good idea to save data. randomly divide data into train + test train and evaluate on test repeat n times and take an average NB: cross-validation requires the whole corpus to be blind. To examine the training data, best to have fixed training & test sets, perform cross-validation on training data, and final evaluation on test set. Evaluation Typically carried out against a gold standard based on accuracy (% correct). Ideal to compare accuracy of our tagger with: baseline (lower-bound): standard is to choose the unigram most likely tag ceiling (upper bound): e.g. see how well humans do at the same task humans apparently agree on 96-7% tags means it is highly suspect for a tagger to get 100% accuracy HMM taggers Using Markov models Basic idea: sequences of tags are a Markov Chain: Limited horizon assumption: sufficient to look at previous tag for information about current tag Time invariance:The probability of a sequence remains the same over time Implications/limitations Limited horizon ignores long-distance dependences e.g. can’t deal with WH-constructions Chomsky (1957): this was one of the reasons cited against probabilistic approaches Time invariance: e.g. P(finite verb|pronoun) is constant but we may be more likely to find a finite verb following a pronoun at the start of a sentence than in the middle! Notation We let ti range over tags Let wi range over words Subscripts denote position in a sequence Use superscripts to denote word types: wj = an instance of word type j in the lexicon tj = tag t assigned to word wj Limited horizon property becomes: P(ti 1 | t1,.., i ) P(ti 1 | ti ) Basic strategy Training set of manually tagged text extract probabilities of tag sequences: k j C ( t , t ) k j P(t | t ) C (t j ) e.g. using Brown Corpus, P(NN|JJ) = 0.45, but P(VBP|JJ) = 0.0005 Next step: estimate the word/tag probabilities: These are basically l j C ( w , t ) l j P( w | t ) C (t j ) symbol emission probabilities Training the tagger: basic algorithm 1. Estimate probability of all possible sequences of 2 tags in the tagset from training data 2. For each tag tj and for each word wl estimate P(wl| tj). 3. Apply smoothing. Finding the best tag sequence Given: a sentence of n words Find: t1,n = the best n tags arg max P(t1,n | w1,n ) arg max t i ,n P( w1,n | t1,n ) P(t1,n ) P( w1,n ) arg max P( w1,n | t1,n ) P(t1,n ) t1,n t1,n Application of Bayes’ rule denominator can be eliminated as it’s the same for all tag sequences. Finding the best tag sequence The expression needs to be reduced to parameters that can be estimated from the training corpus need to make some simplifying assumptions 1. words are independent of eachother 2. a word’s identity depends only on its tag The independence assumption P( w1,n n | t1,n ) P(t1,n ) P( wi | t1, n ) P(t n | t n 1 ) ... P(t 2 | t1 ) i 1 • Probability of a sequence of words given a sequence of tags is computed as a function of each word independently The identity assumption P( w1,n n | t1,n ) P(t1,n ) P( wi | t1,n ) P(t n | t n 1 ) ... P(t 2 | t1 ) i 1 n P( wi | ti ) P(t n | t n 1 ) ... P(t 2 | t1 ) i 1 Probability of a word given a tag sequence = probability a word given its own tag Applying these assumptions arg max P(t1,n | w1,n ) arg max t i ,n P( w1,n | t1,n ) P(t1,n ) P( w1,n ) arg max P( w1,n | t1,n ) P(t1,n ) t1,n t1,n n arg max t1,n P(w | t )P(t | t i i 1 i i i 1 ) Tagging with the Markov Model Can use the Viterbi Algorithm to find the best sequence of tags given a sequence of words (sentence) Reminder: probability of being in state (tag) j at word i on the best path i ( j) i 1 most probable state (tag) at word i given that we’re in state j at word i+1 The algorithm: initialisation i ( PERIOD ) 1.0 i (t ) 0 for all other t Assume that P(PERIOD) = 1 at end of sentence Set all other tag probs to 0 Algorithm: induction step for i = 1 to n step 1: for all tags tj do: i 1 (t ) max [ i (t ) P(t | t ) P(wi 1 | t )] j k j k j 1 k T Probability of tag tj at i+1 on best path through i i 1 (t j ) arg max [ i (t k ) P(t j | t k ) P( wi 1 | t j )] i k T Most probable tag leading to tj at i+1 Algorithm: backtrace X n 1 arg max n 1 (t j ) State at n+1 1 j T for j = n to 1 do: X j j 1 ( X j 1 ) retrieve the most probable tags for every point in sequence P( X 1... X n ) max n1 (t j ) 1 j T Calculate probability for the sequence of tags selected Some observations The model is a Hidden Markov Model we only observe words when we tag In actuality, during training we have a visible Markov Model because the training corpus provides words + tags “True” HMM taggers Applied to cases where we do not have a large training corpus We maintain the usual MM assumptions Initialisation: use dictionary: set emission probability for a word/tag to 0 if it’s not in dictionary Training: apply to data, use forward-backward algorithm Tagging: exactly as before