
Graph-Based Segmentation

Readings: Szeliski, chapter 5.4

http://www.cis.upenn.edu/~jshi/GraphTutorial/

Image segmentation

• How do you pick the right segmentation?

• Bottom-up segmentation:

- Tokens belong together because they are locally coherent.

• Top-down segmentation:

- Tokens grouped because they lie on the same object.

“Correct” segmentation

• There may not be a single correct answer.

• Partitioning is inherently hierarchical.

• Presentation:

– “Use the low-level coherence of brightness, color, texture or motion attributes to come up with partitions”

Affinity (Similarity)

• Pixels in group A and B: high affinities

• Connections between A, B: weak affinity

• Cut: $\sum_{i \in A,\, j \in B} w_{ij}$

Normalized Cut

• Cut: $\mathrm{cut}(A,B) = \sum_{i \in A,\, j \in B} w_{ij}$

Using a minimum cut usually involves isolating a single pixel

Normalized Cut

$\mathrm{Ncut}(A,B) = \frac{\mathrm{cut}(A,B)}{\mathrm{assoc}(A,V)} + \frac{\mathrm{cut}(A,B)}{\mathrm{assoc}(B,V)}$

Association within a cluster

$\mathrm{assoc}(A,A) = \sum_{i,j \in A} w_{ij}$

$\mathrm{assoc}(A,V) = \mathrm{assoc}(A,A) + \mathrm{cut}(A,B)$

Graph-based Image Segmentation

G = {V, E}

V: graph nodes (pixels)

E: edges connecting nodes (pixel similarity)

Slides from Jianbo Shi

Graph terminology

• Similarity matrix: $W = [w_{i,j}]$

$w_{i,j} = e^{-\|X(i) - X(j)\|_2^2 / \sigma_X^2}$


Affinity matrix

[Figure: for an image of N × M pixels, the similarity of all image pixels to a selected pixel; brighter means more similar. Reshaping the image into a vector of N*M pixels gives an affinity matrix W of size (N*M) × (N*M).]

Warning: the size of W is quadratic in the number of pixels!
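To make the size warning concrete, here is a minimal sketch that builds a dense W for a tiny grayscale image. It assumes an affinity that multiplies an intensity kernel and a distance kernel; the function name `affinity_matrix` and the values of `sigma_i` and `sigma_x` are illustrative choices, not taken from the slides.

```python
import numpy as np

def affinity_matrix(image, sigma_i=0.1, sigma_x=2.0):
    """Dense affinity matrix for a small grayscale image (values in [0, 1])."""
    n_rows, n_cols = image.shape
    # Pixel coordinates, one row per pixel, in the same order as image.ravel().
    rows, cols = np.indices((n_rows, n_cols))
    coords = np.stack([rows.ravel(), cols.ravel()], axis=1).astype(float)
    intensity = image.ravel().astype(float)

    # Squared differences between every pair of pixels (via broadcasting).
    d_int = (intensity[:, None] - intensity[None, :]) ** 2
    d_pos = ((coords[:, None, :] - coords[None, :, :]) ** 2).sum(axis=2)

    # Assumed combination: product of an intensity and a distance kernel.
    return np.exp(-d_int / sigma_i**2) * np.exp(-d_pos / sigma_x**2)

# Toy 4 x 6 image: dark left half, bright right half.
image = np.zeros((4, 6))
image[:, 3:] = 1.0
W = affinity_matrix(image)
print(W.shape)  # (24, 24): 24 pixels give 24 * 24 = 576 entries

# One row of W, reshaped back to the image layout, is the
# "similarity of image pixels to selected pixel" picture.
similarity_to_pixel = W[0].reshape(image.shape)
```

Even a modest 100 × 100 image would give a 10,000 × 10,000 matrix in this dense form, which is why the deck later recommends sparse matrices.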

Graph terminology

• Degree of node: $d_i = \sum_j w_{i,j}$


Graph terminology

• Volume of set (association of A): $\mathrm{vol}(A) = \sum_{i \in A} d_i, \quad A \subseteq V$


Graph terminology

• Cuts in a graph: $\mathrm{cut}(A, \bar{A}) = \sum_{i \in A,\, j \in \bar{A}} w_{i,j}$


Representation

Partition matrix X (pixels × segments): $X = [X_1, \ldots, X_K]$

Pair-wise similarity matrix W: $W(i,j) = \mathrm{aff}(i,j)$

Degree matrix D: $D(i,i) = \sum_j w_{i,j}$, i.e. $D = \mathrm{Diag}(d)$

Laplacian matrix L: $L = D - W$
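The representation above can be sketched in a few lines of NumPy. The 4-node matrix W and the partition matrix X below are made-up illustrative values; only the definitions of d, D, L, volume and cut come from the slides.

```python
import numpy as np

# Hypothetical 4-node affinity matrix (symmetric, non-negative).
W = np.array([
    [0.0, 0.9, 0.1, 0.0],
    [0.9, 0.0, 0.0, 0.1],
    [0.1, 0.0, 0.0, 0.8],
    [0.0, 0.1, 0.8, 0.0],
])

d = W.sum(axis=1)   # degree of each node: d_i = sum_j w_ij
D = np.diag(d)      # degree matrix D = Diag(d)
L = D - W           # Laplacian matrix

# Partition matrix X: one row per node (pixel), one column per segment.
X = np.array([
    [1, 0],
    [1, 0],
    [0, 1],
    [0, 1],
])

vol = X.T @ d                    # volume of each segment: sum of member degrees
cut_AB = X[:, 0] @ W @ X[:, 1]   # total weight crossing between the two segments
```

Every row of L sums to zero by construction, which is the property $(D - W)\mathbf{1} = 0$ that the Ncut derivation relies on.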

Pixel similarity functions

Intensity: $W(i,j) = e^{-\|I(i) - I(j)\|_2^2 / \sigma_I^2}$

Distance: $W(i,j) = e^{-\|X(i) - X(j)\|_2^2 / \sigma_X^2}$

Texture: $W(i,j) = e^{-\|c(i) - c(j)\|_2^2 / \sigma_c^2}$

Here c(x) is a vector of filter outputs. A natural thing to do is to square the outputs of a range of different filters at different scales and orientations, smooth the result, and stack these into a vector.

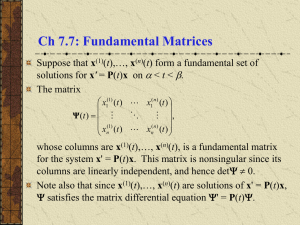

Definitions

• Methods that use the spectrum of the affinity matrix to cluster are known as spectral clustering.

• Normalized cuts, average cuts, and average association all make use of the eigenvectors of the affinity matrix.

• Why do these methods work?

Spectral Clustering

Data

Similarities

* Slides from Dan Klein, Sep Kamvar, Chris Manning, Natural Language Group Stanford University

Eigenvectors and blocks

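• Block matrices have block eigenvectors:

$$
\begin{bmatrix} 1 & 1 & 0 & 0 \\ 1 & 1 & 0 & 0 \\ 0 & 0 & 1 & 1 \\ 0 & 0 & 1 & 1 \end{bmatrix}
\xrightarrow{\text{eigensolver}}
e_1 = \begin{bmatrix} .71 \\ .71 \\ 0 \\ 0 \end{bmatrix},\quad
e_2 = \begin{bmatrix} 0 \\ 0 \\ .71 \\ .71 \end{bmatrix}
$$

$\lambda_1 = 2,\quad \lambda_2 = 2,\quad \lambda_3 = 0,\quad \lambda_4 = 0$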

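• Near-block matrices have near-block eigenvectors:

$$
\begin{bmatrix} 1 & 1 & .2 & 0 \\ 1 & 1 & 0 & -.2 \\ .2 & 0 & 1 & 1 \\ 0 & -.2 & 1 & 1 \end{bmatrix}
\xrightarrow{\text{eigensolver}}
e_1 = \begin{bmatrix} .71 \\ .69 \\ .14 \\ 0 \end{bmatrix},\quad
e_2 = \begin{bmatrix} 0 \\ -.14 \\ .69 \\ .71 \end{bmatrix}
$$

$\lambda_1 = 2.02,\quad \lambda_2 = 2.02,\quad \lambda_3 = -0.02,\quad \lambda_4 = -0.02$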
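The two examples can be checked numerically. The sketch below feeds both matrices to `np.linalg.eigh` (NumPy's solver for symmetric matrices); the variable names are illustrative.

```python
import numpy as np

block = np.array([
    [1, 1, 0, 0],
    [1, 1, 0, 0],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
], dtype=float)

near_block = np.array([
    [1.0, 1.0, 0.2, 0.0],
    [1.0, 1.0, 0.0, -0.2],
    [0.2, 0.0, 1.0, 1.0],
    [0.0, -0.2, 1.0, 1.0],
])

# eigh is meant for symmetric matrices; eigenvalues are returned in
# ascending order, so the two leading eigenvectors are the last two columns.
vals_block, vecs_block = np.linalg.eigh(block)
vals_near, vecs_near = np.linalg.eigh(near_block)

print(np.round(vals_block, 2))
print(np.round(vals_near, 2))
```

Because the leading eigenvalue is repeated in both cases, the solver may return any orthonormal basis of that two-dimensional eigenspace, so the printed eigenvectors can be rotated or sign-flipped versions of the e1 and e2 shown on the slide while still spanning the same subspace.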


Spectral Space

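Can put items into blocks by eigenvectors:

$$
\begin{bmatrix} 1 & 1 & .2 & 0 \\ 1 & 1 & 0 & -.2 \\ .2 & 0 & 1 & 1 \\ 0 & -.2 & 1 & 1 \end{bmatrix}
\qquad
e_1 = \begin{bmatrix} .71 \\ .69 \\ .14 \\ 0 \end{bmatrix},\quad
e_2 = \begin{bmatrix} 0 \\ -.14 \\ .69 \\ .71 \end{bmatrix}
$$

[Figure: items plotted in the (e1, e2) plane]

Clusters clear regardless of row ordering:

$$
\begin{bmatrix} 1 & .2 & 1 & 0 \\ .2 & 1 & 0 & 1 \\ 1 & 0 & 1 & -.2 \\ 0 & 1 & -.2 & 1 \end{bmatrix}
\qquad
e_1 = \begin{bmatrix} .71 \\ .14 \\ .69 \\ 0 \end{bmatrix},\quad
e_2 = \begin{bmatrix} 0 \\ .69 \\ -.14 \\ .71 \end{bmatrix}
$$

[Figure: items plotted in the (e1, e2) plane]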

Outline

1. Graph terminology and representation.

2. “Min cuts” and “Normalized cuts”.

How do we extract a good cluster?

• Simplest idea: we want a vector x giving the association between each element and a cluster

• We want elements within this cluster to, on the whole, have strong affinity with one another

• We could maximize $x^T W x$

• But need the constraint $x^T x = 1$

• This is an eigenvalue problem: choose the eigenvector of W with largest eigenvalue.
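As a rough illustration of this eigenvalue problem, the sketch below compares the leading eigenvector of a made-up 4-node affinity matrix against random unit vectors. The matrix values and the random comparison are illustrative assumptions, not part of the slides.

```python
import numpy as np

# Hypothetical affinities: nodes 0-2 are tightly linked, node 3 is an outlier.
W = np.array([
    [1.0, 0.9, 0.8, 0.1],
    [0.9, 1.0, 0.7, 0.0],
    [0.8, 0.7, 1.0, 0.1],
    [0.1, 0.0, 0.1, 1.0],
])

vals, vecs = np.linalg.eigh(W)   # ascending eigenvalues
x = vecs[:, -1]                  # eigenvector of the largest eigenvalue
x = x if x.sum() >= 0 else -x    # the sign of an eigenvector is arbitrary

score = x @ W @ x                # equals the largest eigenvalue since x^T x = 1

# No random unit vector should achieve a higher value of x^T W x.
rng = np.random.default_rng(0)
trials = rng.normal(size=(1000, 4))
trials /= np.linalg.norm(trials, axis=1, keepdims=True)
random_scores = np.einsum("ni,ij,nj->n", trials, W, trials)
```

The entries of `x` act as soft cluster memberships: the three tightly linked nodes receive large weights and the outlier a small one.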

Minimum cut

Criterion for partition:

$\min \mathrm{cut}(A,B) = \min_{A,B} \sum_{u \in A,\, v \in B} w(u,v)$

Problem! Weight of cut is directly proportional to the number of edges in the cut.

[Figure: cuts with lesser weight than the ideal cut, which separates A from B]

First proposed by Wu and Leahy

Normalized Cut

Normalized cut or balanced cut:

$\mathrm{Ncut}(A,B) = \mathrm{cut}(A,B) \left( \frac{1}{\mathrm{vol}(A)} + \frac{1}{\mathrm{vol}(B)} \right)$

Finds better cut

Normalized Cut

• Volume of set (or association): $\mathrm{vol}(A) = \mathrm{assoc}(A,V) = \sum_{u \in A,\, t \in V} w(u,t)$


• Define normalized cut: “a fraction of the total edge connections to all the nodes in the graph”:

$\mathrm{Ncut}(A,B) = \frac{\mathrm{cut}(A,B)}{\mathrm{assoc}(A,V)} + \frac{\mathrm{cut}(A,B)}{\mathrm{assoc}(B,V)}$

• Define normalized association: “how tightly on average nodes within the cluster are connected to each other”:

$\mathrm{Nassoc}(A,B) = \frac{\mathrm{assoc}(A,A)}{\mathrm{assoc}(A,V)} + \frac{\mathrm{assoc}(B,B)}{\mathrm{assoc}(B,V)}$

Observations(I)

• Maximizing Nassoc is the same as minimizing Ncut, since they are related:

$\mathrm{Ncut}(A,B) = 2 - \mathrm{Nassoc}(A,B)$
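The relation follows from $\mathrm{assoc}(A,V) = \mathrm{assoc}(A,A) + \mathrm{cut}(A,B)$: each term $\mathrm{cut}(A,B)/\mathrm{assoc}(A,V)$ equals $1 - \mathrm{assoc}(A,A)/\mathrm{assoc}(A,V)$, and likewise for B. A small numeric check, using a made-up 5-node graph and a hypothetical helper `assoc`, might look like this:

```python
import numpy as np

def assoc(W, rows, cols):
    """Total weight w(u, t) over u in rows and t in cols."""
    return W[np.ix_(rows, cols)].sum()

# Hypothetical symmetric affinities: nodes 0-2 form one group, 3-4 another.
W = np.array([
    [0.0, 0.9, 0.8, 0.1, 0.0],
    [0.9, 0.0, 0.7, 0.0, 0.1],
    [0.8, 0.7, 0.0, 0.2, 0.0],
    [0.1, 0.0, 0.2, 0.0, 0.9],
    [0.0, 0.1, 0.0, 0.9, 0.0],
])
A, B = [0, 1, 2], [3, 4]
V = A + B

cut_AB = assoc(W, A, B)
ncut = cut_AB / assoc(W, A, V) + cut_AB / assoc(W, B, V)
nassoc = assoc(W, A, A) / assoc(W, A, V) + assoc(W, B, B) / assoc(W, B, V)

print(ncut + nassoc)  # 2.0 up to floating-point rounding
```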


Algorithm

• How to minimize Ncut?

– Transform the Ncut equation to matrix form.

– After simplifying:

$\min_x \mathrm{Ncut}(x) = \min_y \frac{y^T (D - W) y}{y^T D y}$ (Rayleigh quotient), where $D(i,i) = \sum_j W(i,j)$

Subject to: $y^T D \mathbf{1} = 0$

– NP-hard, because y's values are quantized.

Algorithm

• Instead, relax into the continuous domain by solving the generalized eigenvalue system:

$\min_y \; y^T (D - W) y$ subject to $y^T D y = 1$

• Which gives: $(D - W) y = \lambda D y$

• Note that $(D - W)\mathbf{1} = 0$, so the first eigenvector is $y_0 = \mathbf{1}$ with eigenvalue 0.

• The eigenvector with the second smallest eigenvalue is the real-valued solution to this problem!

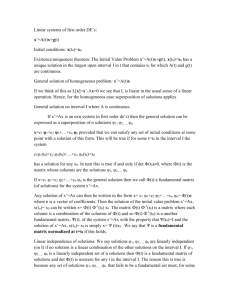

Algorithm

1. Define a similarity function between 2 nodes, e.g.:

$w_{i,j} = \exp\left( -\frac{\|F(i) - F(j)\|_2^2}{\sigma_I^2} - \frac{\|X(i) - X(j)\|_2^2}{\sigma_X^2} \right)$

2. Compute affinity matrix (W) and degree matrix (D).

3. Solve $(D - W) y = \lambda D y$

4. Use the eigenvector with the second smallest eigenvalue to bipartition the graph.

5. Decide whether to re-partition the current partitions.

Note: since precision requirements are low, W is very sparse, and only a few eigenvectors are required, the eigenvectors can be extracted very fast using the Lanczos algorithm.
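Steps 1 to 4 can be sketched end to end on toy data. This is a minimal dense-matrix sketch, not the authors' implementation: the function name `ncut_bipartition`, the 1-D points, and `sigma_x` are assumptions, the generalized problem is solved through its symmetric form with $z = D^{1/2} y$, and the split uses the simple threshold 0.

```python
import numpy as np

def ncut_bipartition(W):
    """Split a graph in two using the second smallest generalized eigenvector."""
    d = W.sum(axis=1)
    d_inv_sqrt = 1.0 / np.sqrt(d)
    # Symmetric form of (D - W) y = lambda D y, obtained with z = D^{1/2} y.
    L_sym = np.eye(len(d)) - d_inv_sqrt[:, None] * W * d_inv_sqrt[None, :]
    vals, vecs = np.linalg.eigh(L_sym)   # ascending eigenvalues
    y = d_inv_sqrt * vecs[:, 1]          # back-substitute y = D^{-1/2} z
    return y > 0, y, vals[1]

# Step 1: distance-based similarity on 1-D toy points with a clear gap.
points = np.array([0.0, 0.1, 0.2, 0.3, 1.0, 1.1, 1.2])
sigma_x = 0.3

# Step 2: affinity matrix (the degree matrix is formed inside the function).
W = np.exp(-(points[:, None] - points[None, :]) ** 2 / sigma_x**2)

# Steps 3 and 4: solve the eigenproblem and bipartition by sign.
labels, y, lam = ncut_bipartition(W)
print(labels)
```

For real images W is large and sparse, so a dense `eigh` call would not scale; a sparse Lanczos-type solver such as `scipy.sparse.linalg.eigsh` would be the natural substitute.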

Algorithm

Solve

$(D - W) y = \lambda D y \quad\Longleftrightarrow\quad (I - N) z = \lambda z$

where $N = D^{-1/2} W D^{-1/2}$ and $z = D^{1/2} y$

N: normalized affinity matrix (Weiss 1999)
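The equivalence of the two eigensystems can be verified numerically. The sketch below uses a random symmetric positive matrix as a stand-in for W (an assumption made purely for the check).

```python
import numpy as np

rng = np.random.default_rng(1)
A = rng.random((6, 6))
W = (A + A.T) / 2            # random symmetric, positive affinities
d = W.sum(axis=1)
D = np.diag(d)

# Normalized affinity matrix N = D^{-1/2} W D^{-1/2}.
N = W / np.sqrt(np.outer(d, d))

# Eigenpairs of I - N (symmetric, so eigh applies).
lam, Z = np.linalg.eigh(np.eye(6) - N)

# Map back with y = D^{-1/2} z and test (D - W) y = lambda D y column by column.
Y = Z / np.sqrt(d)[:, None]
residual = (D - W) @ Y - (D @ Y) * lam

print(np.abs(residual).max())  # close to machine precision
```

The first column of `Y` is constant, matching the observation that $y_0 = \mathbf{1}$ has eigenvalue 0.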

Discretization

• Sometimes there is not a clear threshold to binarize since eigenvectors take on continuous values.

• How to choose the splitting point?

a) Pick a constant value (0, or 0.5).

b) Pick the median value as splitting point.

c) Look for the splitting point that has the minimum Ncut value:

1. Choose n possible splitting points.

2. Compute Ncut value.

3. Pick minimum.
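Option (c) can be sketched as a small search over candidate thresholds. The helper names `ncut_value` and `best_split`, the evenly spaced candidates, and the toy values of `y` and `W` are illustrative assumptions.

```python
import numpy as np

def ncut_value(W, mask):
    """Ncut of the partition (mask, ~mask); inf if either side is empty."""
    if mask.all() or not mask.any():
        return np.inf
    cut = W[np.ix_(mask, ~mask)].sum()
    # W[mask].sum() is assoc(A, V): all weight leaving the selected rows.
    return cut / W[mask].sum() + cut / W[~mask].sum()

def best_split(W, y, n_candidates=20):
    """Try evenly spaced thresholds on y and keep the one with minimum Ncut."""
    # 1. Choose n possible splitting points strictly inside the range of y.
    candidates = np.linspace(y.min(), y.max(), n_candidates + 2)[1:-1]
    # 2. Compute the Ncut value for each.
    scores = [ncut_value(W, y > t) for t in candidates]
    # 3. Pick the minimum.
    best = int(np.argmin(scores))
    return candidates[best], scores[best]

# Hypothetical continuous eigenvector values and a matching affinity matrix.
y = np.array([-0.9, -0.8, -0.7, 0.1, 0.2, 0.3])
W = np.exp(-(y[:, None] - y[None, :]) ** 2 / 0.1)

threshold, score = best_split(W, y)
labels = y > threshold
```

Options (a) and (b) correspond to replacing `best_split` with `y > 0` or `y > np.median(y)`.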

Use k-eigenvectors

• Recursive 2-way Ncut is slow.

• We can use more eigenvectors to re-partition the graph, however:

– Not all eigenvectors are useful for partition (degree of smoothness).

• Procedure: compute k-means with a high k. Then follow one of these procedures:

a) Merge segments that minimize k-way Ncut criterion.

b) Use the k segments and find the partitions there using exhaustive search.

• Compute Q (next slides).

[Figure: the near-block affinity matrix with its eigenvectors e1 and e2, and the items plotted in the (e1, e2) plane]

Toy examples

Images from Matthew Brand (TR-2002-42)

Example (I)

[Figure: eigenvectors and the resulting segments]

Example (II)

[Figure: original image and its segments]

* Slide from Khurram Hassan-Shafique CAP5415 Computer Vision 2003

Example (III)

Comparative segmentation results (Alpert, Galun, Basri et al. 2007), probabilistic bottom-up merging

Segmentation Methods Using Eigenvectors

1. Graph terminology and representation.

2. “Min cuts” and “Normalized cuts”.

3. Other segmentation methods using eigenvectors.

Other Methods

• Average association

– Use the eigenvector of W associated with the largest eigenvalue for partitioning.

– Tries to maximize:

$\frac{\mathrm{assoc}(A,A)}{|A|} + \frac{\mathrm{assoc}(B,B)}{|B|}$

– Has a bias to find tight clusters. Useful for Gaussian distributions.

Other Methods

• Average cut

– Tries to minimize:

$\frac{\mathrm{cut}(A,B)}{|A|} + \frac{\mathrm{cut}(A,B)}{|B|}$

– Very similar to normalized cuts.

– We cannot ensure that partitions will have a tight within-group similarity since this equation does not have the nice properties of the equation of normalized cuts.

Other Methods

[Figure: partitions found by normalized cut, average cut, and average association on 1-D data: 20 points randomly distributed from 0.0 to 0.5 and 12 points randomly distributed from 0.65 to 1.0]

Other Methods

• Scott and Longuet-Higgins (1990).

– V contains the first k eigenvectors of W.

– Normalize V by rows.

– Compute $Q = V^T V$.

– Values close to 1 belong to the same cluster.

[Figure: panels showing the data, W, the first eigenvector, the second eigenvector, and Q]
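A minimal sketch of this procedure on made-up 1-D data follows. One storage convention is assumed here: V holds one row per point and one column per eigenvector, so the n × n matrix Q is formed as `V @ V.T`; the slide's $V^T V$ gives the same matrix when V is stored transposed. The cutoff 0.5 is an arbitrary illustrative choice.

```python
import numpy as np

# Two well-separated groups of 1-D points (hypothetical data).
points = np.array([0.0, 0.1, 0.2, 2.0, 2.1, 2.2])
W = np.exp(-(points[:, None] - points[None, :]) ** 2 / 0.5)

k = 2
vals, vecs = np.linalg.eigh(W)   # ascending eigenvalues
V = vecs[:, -k:]                 # k leading eigenvectors, one row per point

# Normalize each row of V to unit length.
V = V / np.linalg.norm(V, axis=1, keepdims=True)

# Q[i, j] is the cosine between the spectral coordinates of points i and j.
Q = V @ V.T
same_cluster = Q > 0.5

print(np.round(Q, 2))
```

Q does not depend on which orthonormal basis the solver returns for the leading eigenspace, which matters here because the two leading eigenvalues are nearly equal.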

Other Applications

• Costeira and Kanade (1995).

– Used to segment points in motion.

– Compute M = (X Y).

– The affinity matrix W is computed as $W = M^T M$. This trick computes the affinity of every pair of points as an inner product.

– Compute $Q = V^T V$.

– Values close to 1 belong to the same cluster.

[Figure: panels showing the data, M, and Q]

Other Applications

• Face clustering in meetings.

– Grab faces from video in real time (use a face detector + face tracker).

– Compare all faces using a distance metric (e.g. projection error into a representative basis).

– Use normalized cuts to find the best clustering.

Conclusions

• Good news:

– Simple and powerful methods to segment images.

– Flexible and easy to apply to other clustering problems.

• Bad news:

– High memory requirements (use sparse matrices).

– Slow (use multi-grid or hierarchical methods).

– Very dependent on the scale factor $\sigma_X$ for a specific problem:

$W(i,j) = e^{-\|X(i) - X(j)\|_2^2 / \sigma_X^2}$

5.5 Graph Cuts and Energy-based Methods

http://research.microsoft.com/enus/um/cambridge/projects/visionimagevideoediting/segmentation/grabcut.htm